Iris数据集的LDA和PCA二维投影的比较

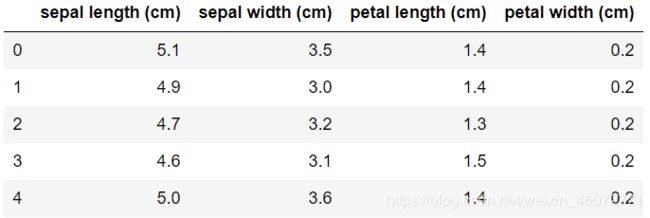

鸢尾花数据集代表3种鸢尾花(Setosa,Versicolour和Virginica),具有4个属性:萼片长度,萼片宽度,花瓣长度和花瓣宽度。

应用于此数据的主成分分析(PCA)可以识别出造成数据差异最大的属性(主要成分或特征空间中的方向)组合。在这里,我们在2个第一主成分上绘制了不同的样本。

线性判别分析(LDA)试图识别出类别之间差异最大的属性。尤其是,与PCA相比,LDA是使用已知类别标签的受监督方法。

import matplotlib.pyplot as plt

from sklearn import datasets

from sklearn.decomposition import PCA

from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

import pandas as pd

iris = datasets.load_iris()

X = iris.data

y = iris.target

target_names = iris.target_names

pd.DataFrame(X, columns=iris.feature_names).head()

target_names

>>>array(['setosa', 'versicolor', 'virginica'], dtype=')

pca = PCA(n_components=2)

X_r = pca.fit(X).transform(X)

lda = LinearDiscriminantAnalysis(n_components=2)

X_r2 = lda.fit(X, y).transform(X)

# Percentage of variance explained for each components

# 各组成部分解释的差异百分比

print('explained variance ratio (first two components): %s'

% str(pca.explained_variance_ratio_))

>>>explained variance ratio (first two components): [0.92461872 0.05306648]

plt.figure()

colors = ['navy', 'turquoise', 'darkorange']

lw = 2

for color, i, target_name in zip(colors, [0, 1, 2], target_names):

plt.scatter(X_r[y == i, 0], X_r[y == i, 1], color=color, alpha=.8, lw=lw,

label=target_name)

plt.legend(loc='best', shadow=False, scatterpoints=1)

plt.title('PCA of IRIS dataset')

plt.figure()

for color, i, target_name in zip(colors, [0, 1, 2], target_names):

plt.scatter(X_r2[y == i, 0], X_r2[y == i, 1], alpha=.8, color=color,

label=target_name)

plt.legend(loc='best', shadow=False, scatterpoints=1)

plt.title('LDA of IRIS dataset')

plt.show()