【caffe】Using a trained Caffe model in C++: turning the classification project into a dynamic link library 【Caffe study notes, part 6】

Besides loading a trained model with OpenCV's dnn module, you can also check the prediction for a single image directly with classification.exe.

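However, when I used OpenCV dnn, its output differed from that of classification.exe and I could not find the cause right away, so I decided to turn classification.cpp into a library that other programs can call instead.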

1. Configure the environment. Create a new project and switch it to the Release x64 configuration.

① Project Properties > Configuration Properties > C/C++ > General > Additional Include Directories:

D:\caffe\scripts\build\include;

D:\caffe\scripts\build;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\include\boost-1_61;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\include;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\include;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\include\opencv;

D:\caffe\include;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\Include;%(AdditionalIncludeDirectories)

② Project Properties > Configuration Properties > C/C++ > Preprocessor > Preprocessor Definitions:

WIN32;

_WINDOWS;

NDEBUG;

CAFFE_VERSION=1.0.0;

BOOST_ALL_NO_LIB;

USE_LMDB;

USE_LEVELDB;

USE_CUDNN;

USE_OPENCV;

CMAKE_WINDOWS_BUILD;

GLOG_NO_ABBREVIATED_SEVERITIES;

GOOGLE_GLOG_DLL_DECL=__declspec(dllimport);

GOOGLE_GLOG_DLL_DECL_FOR_UNITTESTS=__declspec(dllimport);

H5_BUILT_AS_DYNAMIC_LIB=1;

CMAKE_INTDIR="Release";

%(PreprocessorDefinitions)

③ Project Properties > Configuration Properties > Linker > Input > Additional Dependencies:

D:\caffe\scripts\build\lib\Release\caffe.lib;

D:\caffe\scripts\build\lib\Release\caffeproto.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_system-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_thread-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_filesystem-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_chrono-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_date_time-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\boost_atomic-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\glog.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\Lib\gflags.lib;

shlwapi.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\libprotobuf.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\caffehdf5_hl.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\caffehdf5.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\cmake\..\lib\caffezlib.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\lmdb.lib;

ntdll.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\leveldb.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\cmake\..\lib\boost_date_time-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\cmake\..\lib\boost_filesystem-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\cmake\..\lib\boost_system-vc140-mt-1_61.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\snappy_static.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\caffezlib.lib;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cudart.lib;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\curand.lib;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cublas.lib;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cublas_device.lib;

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cudnn.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\x64\vc14\lib\opencv_highgui310.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\x64\vc14\lib\opencv_imgcodecs310.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\x64\vc14\lib\opencv_imgproc310.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\x64\vc14\lib\opencv_core310.lib;

C:\Users\machenike\.caffe\dependencies\libraries_v140_x64_py27_1.1.0\libraries\lib\libopenblas.dll.a;

kernel32.lib;

user32.lib;

gdi32.lib;

winspool.lib;

shell32.lib;

ole32.lib;

oleaut32.lib;

uuid.lib;

comdlg32.lib;

advapi32.lib

④ Project Properties > Configuration Properties > Linker > Input > Ignore Specific Default Libraries:

%(IgnoreSpecificDefaultLibraries)

⑤ Copy all the DLLs in D:\caffe\scripts\build\tools\Release into the project's Release output folder.

All of the settings in ①②③④ above can be found in the projects of D:\caffe\scripts\build\Caffe.sln.

⑥ Copy the 5 files that classification needs into the project directory: deploy.prototxt, network.caffemodel, mean.binaryproto, labels.txt and img.jpg (your file names may differ).
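Caffe stops with a CHECK failure when one of these files cannot be read, so it can help to verify them before loading the network. A minimal sketch in standard C++ only; `MissingFiles` is a hypothetical helper, not part of Caffe:

```cpp
#include <fstream>
#include <string>
#include <vector>

// Hypothetical helper (not part of Caffe): returns every path in `paths`
// that cannot be opened for reading, so missing model files can be reported
// before Caffe's CHECK macros abort the program.
std::vector<std::string> MissingFiles(const std::vector<std::string>& paths) {
  std::vector<std::string> missing;
  for (const std::string& p : paths) {
    std::ifstream in(p.c_str(), std::ios::binary);
    if (!in) missing.push_back(p);  // could not open: report as missing
  }
  return missing;
}
```

For example, `MissingFiles({"deploy.prototxt", "network.caffemodel", "mean.binaryproto", "labels.txt", "img.jpg"})` returns the names that could not be opened; an empty result means all five files are in place.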

2. Copy the source code.

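The source of classification.cpp can be found in Caffe.sln. Copy all of it and change how the inputs are supplied (the original takes the file paths as command-line arguments; here they are hard-coded in main). The modified code is: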

```cpp
#include <caffe/caffe.hpp>
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <algorithm>
#include <fstream>
#include <iomanip>
#include <iostream>
#include <memory>
#include <string>
#include <utility>
#include <vector>

using namespace caffe;  // NOLINT(build/namespaces)
using std::string;

/* Pair (label, confidence) representing a prediction. */
typedef std::pair<string, float> Prediction;

class Classifier {
 public:
  Classifier(const string& model_file,
             const string& trained_file,
             const string& mean_file,
             const string& label_file);

  std::vector<Prediction> Classify(const cv::Mat& img, int N = 5);

 private:
  void SetMean(const string& mean_file);

  std::vector<float> Predict(const cv::Mat& img);

  void WrapInputLayer(std::vector<cv::Mat>* input_channels);

  void Preprocess(const cv::Mat& img,
                  std::vector<cv::Mat>* input_channels);

 private:
  shared_ptr<Net<float> > net_;
  cv::Size input_geometry_;
  int num_channels_;
  cv::Mat mean_;
  std::vector<string> labels_;
};

Classifier::Classifier(const string& model_file,
                       const string& trained_file,
                       const string& mean_file,
                       const string& label_file) {
#ifdef CPU_ONLY
  Caffe::set_mode(Caffe::CPU);
#else
  Caffe::set_mode(Caffe::GPU);
#endif

  /* Load the network. */
  net_.reset(new Net<float>(model_file, TEST));
  net_->CopyTrainedLayersFrom(trained_file);

  CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";
  CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

  Blob<float>* input_layer = net_->input_blobs()[0];
  num_channels_ = input_layer->channels();
  CHECK(num_channels_ == 3 || num_channels_ == 1)
    << "Input layer should have 1 or 3 channels.";
  input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

  /* Load the binaryproto mean file. */
  SetMean(mean_file);

  /* Load labels. */
  std::ifstream labels(label_file.c_str());
  CHECK(labels) << "Unable to open labels file " << label_file;
  string line;
  while (std::getline(labels, line))
    labels_.push_back(string(line));

  Blob<float>* output_layer = net_->output_blobs()[0];
  CHECK_EQ(labels_.size(), output_layer->channels())
    << "Number of labels is different from the output layer dimension.";
}

static bool PairCompare(const std::pair<float, int>& lhs,
                        const std::pair<float, int>& rhs) {
  return lhs.first > rhs.first;
}

/* Return the indices of the top N values of vector v. */
static std::vector<int> Argmax(const std::vector<float>& v, int N) {
  std::vector<std::pair<float, int> > pairs;
  for (size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

  std::vector<int> result;
  for (int i = 0; i < N; ++i)
    result.push_back(pairs[i].second);
  return result;
}

/* Return the top N predictions. */
std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {
  std::vector<float> output = Predict(img);

  // labels_.size() is a size_t and N an int, so std::min needs <int>.
  N = std::min<int>(labels_.size(), N);
  std::vector<int> maxN = Argmax(output, N);
  std::vector<Prediction> predictions;
  for (int i = 0; i < N; ++i) {
    int idx = maxN[i];
    predictions.push_back(std::make_pair(labels_[idx], output[idx]));
  }

  return predictions;
}

/* Load the mean file in binaryproto format. */
void Classifier::SetMean(const string& mean_file) {
  BlobProto blob_proto;
  ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

  /* Convert from BlobProto to Blob<float> */
  Blob<float> mean_blob;
  mean_blob.FromProto(blob_proto);
  CHECK_EQ(mean_blob.channels(), num_channels_)
    << "Number of channels of mean file doesn't match input layer.";

  /* The format of the mean file is planar 32-bit float BGR or grayscale. */
  std::vector<cv::Mat> channels;
  float* data = mean_blob.mutable_cpu_data();
  for (int i = 0; i < num_channels_; ++i) {
    /* Extract an individual channel. */
    cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);
    channels.push_back(channel);
    data += mean_blob.height() * mean_blob.width();
  }

  /* Merge the separate channels into a single image. */
  cv::Mat mean;
  cv::merge(channels, mean);

  /* Compute the global mean pixel value and create a mean image
   * filled with this value. */
  cv::Scalar channel_mean = cv::mean(mean);
  mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);
}

std::vector<float> Classifier::Predict(const cv::Mat& img) {
  Blob<float>* input_layer = net_->input_blobs()[0];
  input_layer->Reshape(1, num_channels_,
                       input_geometry_.height, input_geometry_.width);
  /* Forward dimension change to all layers. */
  net_->Reshape();

  std::vector<cv::Mat> input_channels;
  WrapInputLayer(&input_channels);

  Preprocess(img, &input_channels);

  net_->Forward();

  /* Copy the output layer to a std::vector */
  Blob<float>* output_layer = net_->output_blobs()[0];
  const float* begin = output_layer->cpu_data();
  const float* end = begin + output_layer->channels();
  return std::vector<float>(begin, end);
}

/* Wrap the input layer of the network in separate cv::Mat objects
 * (one per channel). This way we save one memcpy operation and we
 * don't need to rely on cudaMemcpy2D. The last preprocessing
 * operation will write the separate channels directly to the input
 * layer. */
void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {
  Blob<float>* input_layer = net_->input_blobs()[0];

  int width = input_layer->width();
  int height = input_layer->height();
  float* input_data = input_layer->mutable_cpu_data();
  for (int i = 0; i < input_layer->channels(); ++i) {
    cv::Mat channel(height, width, CV_32FC1, input_data);
    input_channels->push_back(channel);
    input_data += width * height;
  }
}

void Classifier::Preprocess(const cv::Mat& img,
                            std::vector<cv::Mat>* input_channels) {
  /* Convert the input image to the input image format of the network. */
  cv::Mat sample;
  if (img.channels() == 3 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);
  else if (img.channels() == 4 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);
  else if (img.channels() == 4 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);
  else if (img.channels() == 1 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);
  else
    sample = img;

  cv::Mat sample_resized;
  if (sample.size() != input_geometry_)
    cv::resize(sample, sample_resized, input_geometry_);
  else
    sample_resized = sample;

  cv::Mat sample_float;
  if (num_channels_ == 3)
    sample_resized.convertTo(sample_float, CV_32FC3);
  else
    sample_resized.convertTo(sample_float, CV_32FC1);

  cv::Mat sample_normalized;
  cv::subtract(sample_float, mean_, sample_normalized);

  /* This operation will write the separate BGR planes directly to the
   * input layer of the network because it is wrapped by the cv::Mat
   * objects in input_channels. */
  cv::split(sample_normalized, *input_channels);

  CHECK(reinterpret_cast<float*>(input_channels->at(0).data)
        == net_->input_blobs()[0]->cpu_data())
    << "Input channels are not wrapping the input layer of the network.";
}
```
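What `SetMean`, `WrapInputLayer` and `Preprocess` do to the pixels can be reproduced without Caffe or OpenCV, which is useful when comparing against another pipeline such as OpenCV dnn. The sketch below assumes an 8-bit interleaved image (HWC, e.g. BGRBGR...) that is already resized to the network input; `ChannelMeans` and `ToPlanarMinusMean` are hypothetical names. Note that the mean file is reduced to one value per channel (the `cv::mean` call), so a scalar per channel is subtracted, not a per-pixel mean image.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Per-channel average of a planar (CHW) float buffer: what SetMean()
// effectively extracts from the .binaryproto mean image via cv::mean.
std::vector<float> ChannelMeans(const std::vector<float>& planar,
                                int channels, int height, int width) {
  std::vector<float> means(channels, 0.0f);
  const std::size_t plane = static_cast<std::size_t>(height) * width;
  for (int c = 0; c < channels; ++c) {
    double sum = 0.0;
    for (std::size_t i = 0; i < plane; ++i) sum += planar[c * plane + i];
    means[c] = static_cast<float>(sum / plane);
  }
  return means;
}

// Converts an interleaved 8-bit image (HWC) into the planar float layout
// (CHW) of a Caffe input blob, subtracting one mean value per channel.
// Element (c, y, x) lands at index (c * height + y) * width + x, which is
// where cv::split() writes it through the Mats built by WrapInputLayer().
// Assumes hwc.size() == channels * height * width.
std::vector<float> ToPlanarMinusMean(const std::vector<std::uint8_t>& hwc,
                                     int channels, int height, int width,
                                     const std::vector<float>& means) {
  std::vector<float> chw(hwc.size());
  for (int y = 0; y < height; ++y)
    for (int x = 0; x < width; ++x)
      for (int c = 0; c < channels; ++c) {
        float v = hwc[(static_cast<std::size_t>(y) * width + x) * channels + c];
        chw[(static_cast<std::size_t>(c) * height + y) * width + x] = v - means[c];
      }
  return chw;
}
```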

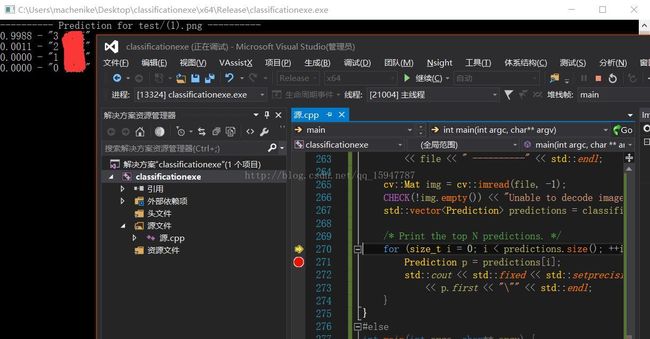

```cpp
int main() {
  ::google::InitGoogleLogging("init");

  string model_file = "bvlc_googlenet_iter_5000.prototxt";
  string trained_file = "bvlc_googlenet_iter_5000.caffemodel";
  string mean_file = "imagenet_mean.binaryproto";
  string label_file = "synset_words.txt";
  Classifier classifier(model_file, trained_file, mean_file, label_file);

  string file = "test/(1).png";

  std::cout << "---------- Prediction for "
            << file << " ----------" << std::endl;

  cv::Mat img = cv::imread(file, -1);  // -1: load the image unchanged
  CHECK(!img.empty()) << "Unable to decode image " << file;
  std::vector<Prediction> predictions = classifier.Classify(img);

  /* Print the top N predictions. */
  for (size_t i = 0; i < predictions.size(); ++i) {
    Prediction p = predictions[i];
    std::cout << std::fixed << std::setprecision(4) << p.second << " - \""
              << p.first << "\"" << std::endl;
  }
  return 0;
}
```
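The top-N selection in `Classify`/`Argmax` can be checked on its own. Below is a standalone sketch of the same idea using a lambda comparator; `TopN` is a hypothetical name. The clamp matters because `labels_.size()` is a `size_t` while `N` is an `int`, so `std::min` needs an explicit `<int>` for the call to compile.

```cpp
#include <algorithm>
#include <cstddef>
#include <utility>
#include <vector>

// Returns the indices of the N largest scores, best first. N is clamped to
// the number of scores, mirroring std::min<int>(labels_.size(), N) in
// Classifier::Classify.
std::vector<int> TopN(const std::vector<float>& scores, int N) {
  N = std::min<int>(static_cast<int>(scores.size()), N);
  if (N <= 0) return {};
  std::vector<std::pair<float, int> > pairs;
  for (std::size_t i = 0; i < scores.size(); ++i)
    pairs.push_back(std::make_pair(scores[i], static_cast<int>(i)));
  // Only the first N positions need to be sorted (descending by score).
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(),
                    [](const std::pair<float, int>& a,
                       const std::pair<float, int>& b) { return a.first > b.first; });
  std::vector<int> result;
  for (int i = 0; i < N; ++i) result.push_back(pairs[i].second);
  return result;
}
```

With 4 classes and the default `N = 5`, this returns all 4 indices, which is why `Classify` reports every class in the 4-class case below.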

4. Build it as a dynamic link library (DLL) for other programs to call.

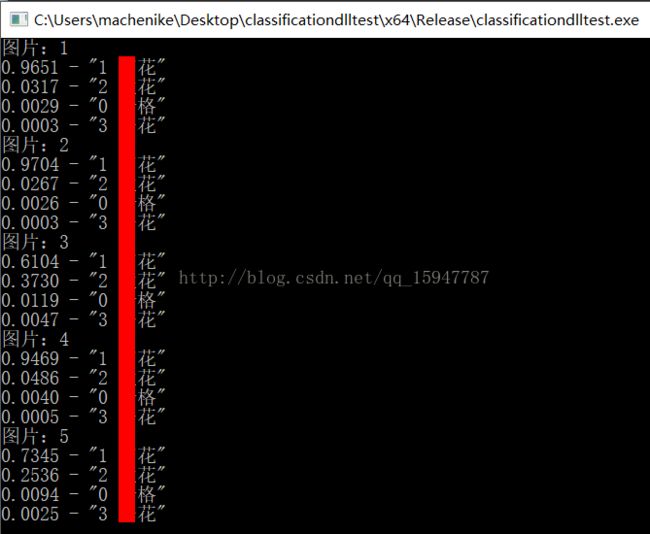

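I have 4 classes. Normally you would just return the class with the highest similarity, but I also wanted to see the scores of the other classes, so the similarity of every class is returned.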
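Because `Classify` clamps N to the number of labels, with 4 classes it already returns every class; what remains is to store each score at its class index. A sketch of that mapping, assuming (as `RegPic` does) that each line of the labels file starts with the class digit; `FillClassScores` is a hypothetical helper that also zero-fills the array and skips labels that do not map to a valid index:

```cpp
#include <string>
#include <utility>
#include <vector>

// (label, confidence), same as Prediction in classification.cpp
typedef std::pair<std::string, float> Prediction;

// Writes each prediction's score into scores[k], where k is the digit at the
// start of its label ("0 ...", "1 ...", ...). Labels whose first character
// is not a digit in [0, num_classes) are skipped instead of writing out of
// bounds.
void FillClassScores(const std::vector<Prediction>& predictions,
                     float* scores, int num_classes) {
  for (int k = 0; k < num_classes; ++k) scores[k] = 0.0f;
  for (const Prediction& p : predictions) {
    if (p.first.empty()) continue;
    int k = p.first[0] - '0';
    if (k >= 0 && k < num_classes) scores[k] = p.second;
  }
}
```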

So I modified the classification.cpp source from step 2 as follows:

```cpp
#include <caffe/caffe.hpp>
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <algorithm>
#include <fstream>
#include <iomanip>
#include <iostream>
#include <memory>
#include <string>
#include <utility>
#include <vector>

using namespace caffe;  // NOLINT(build/namespaces)
using std::string;

/* Pair (label, confidence) representing a prediction. */
typedef std::pair<string, float> Prediction;

class Classifier {
 public:
  Classifier();

  Classifier(const string& model_file,
             const string& trained_file,
             const string& mean_file,
             const string& label_file);

  void ClassifierInit(const string& model_file,
                      const string& trained_file,
                      const string& mean_file,
                      const string& label_file);

  std::vector<Prediction> Classify(const cv::Mat& img, int N = 5);

 private:
  void SetMean(const string& mean_file);

  std::vector<float> Predict(const cv::Mat& img);

  void WrapInputLayer(std::vector<cv::Mat>* input_channels);

  void Preprocess(const cv::Mat& img,
                  std::vector<cv::Mat>* input_channels);

 private:
  shared_ptr<Net<float> > net_;
  cv::Size input_geometry_;
  int num_channels_;
  cv::Mat mean_;
  std::vector<string> labels_;
};

// Empty classifier; ClassifierInit() must be called before Classify().
Classifier::Classifier() : num_channels_(0) {}

// Same behavior as before: the loading code now lives in ClassifierInit().
Classifier::Classifier(const string& model_file,
                       const string& trained_file,
                       const string& mean_file,
                       const string& label_file) {
  ClassifierInit(model_file, trained_file, mean_file, label_file);
}

void Classifier::ClassifierInit(const string& model_file,
                                const string& trained_file,
                                const string& mean_file,
                                const string& label_file) {
#ifdef CPU_ONLY
  Caffe::set_mode(Caffe::CPU);
#else
  Caffe::set_mode(Caffe::GPU);
#endif

  /* Load the network. */
  net_.reset(new Net<float>(model_file, TEST));
  net_->CopyTrainedLayersFrom(trained_file);

  CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";
  CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

  Blob<float>* input_layer = net_->input_blobs()[0];
  num_channels_ = input_layer->channels();
  CHECK(num_channels_ == 3 || num_channels_ == 1)
    << "Input layer should have 1 or 3 channels.";
  input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

  /* Load the binaryproto mean file. */
  SetMean(mean_file);

  /* Load labels. */
  std::ifstream labels(label_file.c_str());
  CHECK(labels) << "Unable to open labels file " << label_file;
  string line;
  while (std::getline(labels, line))
    labels_.push_back(string(line));

  Blob<float>* output_layer = net_->output_blobs()[0];
  CHECK_EQ(labels_.size(), output_layer->channels())
    << "Number of labels is different from the output layer dimension.";
}

static bool PairCompare(const std::pair<float, int>& lhs,
                        const std::pair<float, int>& rhs) {
  return lhs.first > rhs.first;
}

/* Return the indices of the top N values of vector v. */
static std::vector<int> Argmax(const std::vector<float>& v, int N) {
  std::vector<std::pair<float, int> > pairs;
  for (size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

  std::vector<int> result;
  for (int i = 0; i < N; ++i)
    result.push_back(pairs[i].second);
  return result;
}

/* Return the top N predictions. */
std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {
  std::vector<float> output = Predict(img);

  N = std::min<int>(labels_.size(), N);
  std::vector<int> maxN = Argmax(output, N);
  std::vector<Prediction> predictions;
  for (int i = 0; i < N; ++i) {
    int idx = maxN[i];
    predictions.push_back(std::make_pair(labels_[idx], output[idx]));
  }

  return predictions;
}

/* Load the mean file in binaryproto format. */
void Classifier::SetMean(const string& mean_file) {
  BlobProto blob_proto;
  ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

  /* Convert from BlobProto to Blob<float> */
  Blob<float> mean_blob;
  mean_blob.FromProto(blob_proto);
  CHECK_EQ(mean_blob.channels(), num_channels_)
    << "Number of channels of mean file doesn't match input layer.";

  /* The format of the mean file is planar 32-bit float BGR or grayscale. */
  std::vector<cv::Mat> channels;
  float* data = mean_blob.mutable_cpu_data();
  for (int i = 0; i < num_channels_; ++i) {
    /* Extract an individual channel. */
    cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);
    channels.push_back(channel);
    data += mean_blob.height() * mean_blob.width();
  }

  /* Merge the separate channels into a single image. */
  cv::Mat mean;
  cv::merge(channels, mean);

  /* Compute the global mean pixel value and create a mean image
   * filled with this value. */
  cv::Scalar channel_mean = cv::mean(mean);
  mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);
}

std::vector<float> Classifier::Predict(const cv::Mat& img) {
  Blob<float>* input_layer = net_->input_blobs()[0];
  input_layer->Reshape(1, num_channels_,
                       input_geometry_.height, input_geometry_.width);
  /* Forward dimension change to all layers. */
  net_->Reshape();

  std::vector<cv::Mat> input_channels;
  WrapInputLayer(&input_channels);

  Preprocess(img, &input_channels);

  net_->Forward();

  /* Copy the output layer to a std::vector */
  Blob<float>* output_layer = net_->output_blobs()[0];
  const float* begin = output_layer->cpu_data();
  const float* end = begin + output_layer->channels();
  return std::vector<float>(begin, end);
}

/* Wrap the input layer of the network in separate cv::Mat objects
 * (one per channel). This way we save one memcpy operation and we
 * don't need to rely on cudaMemcpy2D. The last preprocessing
 * operation will write the separate channels directly to the input
 * layer. */
void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {
  Blob<float>* input_layer = net_->input_blobs()[0];

  int width = input_layer->width();
  int height = input_layer->height();
  float* input_data = input_layer->mutable_cpu_data();
  for (int i = 0; i < input_layer->channels(); ++i) {
    cv::Mat channel(height, width, CV_32FC1, input_data);
    input_channels->push_back(channel);
    input_data += width * height;
  }
}

void Classifier::Preprocess(const cv::Mat& img,
                            std::vector<cv::Mat>* input_channels) {
  /* Convert the input image to the input image format of the network. */
  cv::Mat sample;
  if (img.channels() == 3 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);
  else if (img.channels() == 4 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);
  else if (img.channels() == 4 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);
  else if (img.channels() == 1 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);
  else
    sample = img;

  cv::Mat sample_resized;
  if (sample.size() != input_geometry_)
    cv::resize(sample, sample_resized, input_geometry_);
  else
    sample_resized = sample;

  cv::Mat sample_float;
  if (num_channels_ == 3)
    sample_resized.convertTo(sample_float, CV_32FC3);
  else
    sample_resized.convertTo(sample_float, CV_32FC1);

  cv::Mat sample_normalized;
  cv::subtract(sample_float, mean_, sample_normalized);

  /* This operation will write the separate BGR planes directly to the
   * input layer of the network because it is wrapped by the cv::Mat
   * objects in input_channels. */
  cv::split(sample_normalized, *input_channels);

  CHECK(reinterpret_cast<float*>(input_channels->at(0).data)
        == net_->input_blobs()[0]->cpu_data())
    << "Input channels are not wrapping the input layer of the network.";
}

Classifier classifier;  // global instance shared by the exported functions

__declspec(dllexport) void initNet(string model_file, string trained_file,
                                   string mean_file, string label_file) {
  ::google::InitGoogleLogging("init");  // may only run once per process
  classifier.ClassifierInit(model_file, trained_file, mean_file, label_file);
}

//************************************
// Method:    RegPic
// FullName:  RegPic
// Access:    public
// Returns:   void
// Qualifier:
// Parameter: int rows
// Parameter: int cols
// Parameter: unsigned __int8 * data   8-bit single-channel (grayscale) pixels
// Parameter: float classPro[4]        similarity of each of the 4 classes; it could
//                                     return only the index of the most similar
//                                     class instead, but the other scores would be lost
//************************************
__declspec(dllexport) void RegPic(int rows, int cols, unsigned __int8* data,
                                  float classPro[4]) {
  cv::Mat image(rows, cols, CV_8UC1, data);  // single-channel grayscale, no copy
  if (image.empty()) {
    std::cout << "image read error" << std::endl;
    return;
  }
  cv::Mat img;
  cv::cvtColor(image, img, cv::COLOR_GRAY2BGR);
  std::vector<Prediction> predictions = classifier.Classify(img);

  /* Print the top N predictions. */
  for (size_t i = 0; i < predictions.size(); ++i) {
    Prediction p = predictions[i];
    std::cout << std::fixed << std::setprecision(4) << p.second << " - \""
              << p.first << "\"" << std::endl;
    int classnum = p.first[0] - '0';  // class index = leading digit of the label
    if (classnum >= 0 && classnum < 4)
      classPro[classnum] = p.second;
  }
}
```
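`initNet` must be called only once: glog's `InitGoogleLogging` is meant to run once per process and CHECK-fails on a second call. If the calling program cannot guarantee that, one option is to guard the initialization with `std::call_once`. A sketch with a hypothetical `InitOnce` wrapper; in the DLL, the callable would contain the `InitGoogleLogging` and `ClassifierInit` calls:

```cpp
#include <mutex>

// Hypothetical guard: runs a one-time initialization at most once, even if
// the exported init function is called repeatedly or from several threads.
// Other callers block until the first initialization has finished.
class InitOnce {
 public:
  template <typename F>
  bool Run(F&& f) {
    bool ran = false;
    std::call_once(flag_, [&] { f(); ran = true; });
    return ran;  // true only for the call that actually performed the init
  }

 private:
  std::once_flag flag_;
};
```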

Test program:

```cpp
#include <opencv2/opencv.hpp>
#include <iostream>
#include <sstream>
#include <string>

using namespace std;
using namespace cv;

// Functions exported by the DLL (link against its import library).
__declspec(dllimport) void RegPic(int rows, int cols, unsigned __int8* data, float classPro[4]);
__declspec(dllimport) void initNet(string model_file, string trained_file, string mean_file, string label_file);

int main() {
  string model_file = "bvlc_googlenet_iter_5000.prototxt";
  string trained_file = "bvlc_googlenet_iter_5000.caffemodel";
  string mean_file = "imagenet_mean.binaryproto";
  string label_file = "synset_words.txt";
  initNet(model_file, trained_file, mean_file, label_file);  // initialize the network; only needs to run once

  for (int i = 0; i < 100; i++) {
    stringstream ss;
    ss << "test/(" << i << ").png";
    Mat img = imread(ss.str(), 0);  // 0: load as 8-bit grayscale
    if (img.empty()) {
      continue;
    }
    float classPro[4] = {0};
    // 0 = good part, 1 = X blemish, 2 = XX blemish, 3 = XXX blemish
    cout << "Image: " << i << endl;
    // Classify: fills classPro with the 4 class similarities, from 0 (least) to 1 (most similar)
    RegPic(img.rows, img.cols, img.data, classPro);
  }
  return 0;
}
```
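The test loop only prints what `RegPic` reports. To act on the result you would typically take the class with the highest score in `classPro`. A small sketch with hypothetical helpers: `TestImagePath` builds the same `test/(i).png` names as the loop, and `BestClass` returns the index of the largest score:

```cpp
#include <algorithm>
#include <sstream>
#include <string>

// Builds the file name used by the test loop: "test/(<i>).png".
std::string TestImagePath(int i) {
  std::ostringstream ss;
  ss << "test/(" << i << ").png";
  return ss.str();
}

// Index of the largest of scores[0..n), i.e. the most likely class
// (0 = good part, 1..3 = the blemish types). Returns 0 when n == 0.
int BestClass(const float* scores, int n) {
  return static_cast<int>(std::max_element(scores, scores + n) - scores);
}
```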