Gstreamer demo commands, very intuitive

Gstreamer cheat sheet

This page contains various shortcuts for achieving specific functionality using Gstreamer. These functionalities are mostly related to my Digital Video Transmission experiments. There is no easy-to-read "user manual" for gstreamer, but the online plugin documentation[1] often contains command line examples in addition to the API docs. Other sources of documentation:

- The manual page for gst-launch

- The gst-inspect tool

- Online tutorials

The Gstreamer documentation is also available in Devhelp.

Contents

- 1 Video Test Source

- 2 Webcam Capture

- 3 Resizing and Cropping

- 4 Filtering

- 5 Encoding and Muxing

- 5.1 Single Stream

- 5.1.1 Test Pattern

- 5.1.2 Webcam

- 5.2 Multiple Streams

- 5.3 Adding Audio

- 6 Decoding and Demuxing

- 7 Network Streaming

- 8 Compositing

- 8.1 Picture in Picture

- 8.1.1 GstVideoMixerPad

- 8.1.2 VideoBox

- 8.1.3 Video Wall

- 8.2 Text Overlay

- 8.3 Time Overlay

- 9 Complete Examples

- 9.1 Time-Lapse Video

- 9.1.1 From recorded video

- 9.1.2 Single frame capture

- 9.2 Video Wall: Live from Pluto

- 10 References

Video Test Source

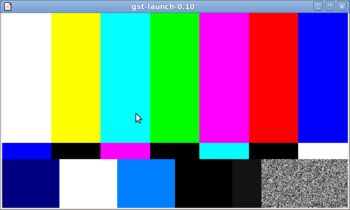

To generate a test video stream use videotestsrc[2]:

gst-launch videotestsrc ! ximagesink

Use the pattern property to select a specific pattern:

gst-launch videotestsrc pattern=snow ! ximagesink

The pattern property accepts either a number in the range [0,16] or a symbolic name. Some patterns can be adjusted using additional parameters.

To generate a test pattern of a given size and at a given rate, a "caps filter" can be used:

gst-launch videotestsrc ! video/x-raw-rgb, framerate=25/1, width=640, height=360 ! ximagesink
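A caps filter is a media type followed by comma-separated field=value pairs, so when pipelines are generated from a script it can help to format them in one place. The helper below is a minimal sketch; caps_filter is a hypothetical name of my own, not part of GStreamer, and it does no checking against what the source element actually supports.

```python
from fractions import Fraction


def caps_filter(media_type, **fields):
    """Format a gst-launch caps filter, e.g.
    'video/x-raw-rgb,framerate=25/1,width=640,height=360'.

    Fraction values (such as framerate) are written as num/den,
    everything else with str(). Field order follows the keyword order.
    """
    parts = [media_type]
    for name, value in fields.items():
        if isinstance(value, Fraction):
            text = f"{value.numerator}/{value.denominator}"
        else:
            text = str(value)
        parts.append(f"{name}={text}")
    return ",".join(parts)
```

For example, caps_filter("video/x-raw-rgb", framerate=Fraction(25, 1), width=640, height=360) yields the caps used in the command above, without the spaces.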

TODO: I'd like to add more about "caps filter" but I cannot find any comprehensive documentation.

Webcam Capture

In its simplest form a v4l2src[3] can be connected directly to a video display sink:

gst-launch v4l2src ! xvimagesink

This will grab the images at the highest possible resolution, which for my Logitech QuickCam Pro 9000 is 1600x1200. By adding a "caps filter" in between, we can select the size and the desired framerate:

gst-launch v4l2src ! video/x-raw-yuv,width=320,height=240,framerate=20/1 ! xvimagesink

If none of the supported framerates fits your needs, use videorate[4] to either insert or drop frames. This can also be used to deliver a fixed framerate when the framerate from the camera varies.
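videorate's real implementation works on buffer timestamps and is more involved, but the basic idea can be illustrated with a toy model (my simplification, not videorate's code): for every slot on the fixed output timeline, show the newest input frame that has arrived by then. Frames get duplicated when the input is slower than the output and dropped when it is faster. The function name resample is hypothetical.

```python
def resample(timestamps, out_fps, duration):
    """Toy model of a frame-rate converter.

    timestamps: ascending input frame times in seconds.
    Returns one input-frame index per output slot: a repeated index is a
    duplicated frame, a skipped index is a dropped frame. Output slots
    before the first input frame are left out.
    """
    shown = []
    i = 0
    for slot in range(int(duration * out_fps)):
        t = slot / out_fps
        # Advance to the newest input frame that has arrived by time t.
        while i + 1 < len(timestamps) and timestamps[i + 1] <= t:
            i += 1
        if timestamps and timestamps[i] <= t:
            shown.append(i)
    return shown
```

For example, feeding 5 fps input into a 10 fps timeline shows every frame twice, while 10 fps input into a 5 fps timeline keeps every second frame.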

The "caps filter" is also used to select a specific pixel format. The Logitech QuickCam Pro 9000 supports MJPG, YUYV, RGB3, BGR3, YU12 and YV12. The pixel format in the "caps filter" can be specified using fourcc[5] labels:

gst-launch v4l2src ! video/x-raw-yuv,format=\(fourcc\)YUY2,width=320,height=240 ! xvimagesink

YUY2 is the standard YUYV 4:2:2 pixel format and corresponds to the YUYV format on the Logitech QuickCam Pro 9000. For the other formats supported by the Logitech cameras see Pixel formats.
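The backslashes in \(fourcc\) are only there to keep the shell from interpreting the parentheses. When a program launches gst-launch directly, without a shell, every element, ! and caps string is a separate argument and no escaping is needed. The sketch below assumes gst-launch (0.10) is on the PATH; webcam_argv is a hypothetical helper, and the subprocess call is left commented out because it needs a camera.

```python
import shlex


def webcam_argv(fourcc="YUY2", width=320, height=240):
    """Argument list for the v4l2src -> xvimagesink pipeline above.

    Each element, '!' and the caps string is its own argument, so the
    parentheses around fourcc need no backslashes (no shell is involved).
    """
    caps = f"video/x-raw-yuv,format=(fourcc){fourcc},width={width},height={height}"
    return ["gst-launch", "v4l2src", "!", caps, "!", "xvimagesink"]


# To actually run it (requires gst-launch on PATH and a V4L2 camera):
#   import subprocess
#   subprocess.run(webcam_argv(), check=True)

# shlex.join shows how the same command has to be quoted for a shell.
print(shlex.join(webcam_argv()))
```

Splitting the backslash-escaped shell command from this section with shlex.split gives exactly the same argument list, which is a quick way to check that the escaping is right.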

The camera settings can be controlled using Guvcview[6] while the image is captured using gstreamer. This requires running guvcview with the -o (--control_only) command line option.

Resizing and Cropping

videocrop

For quick cropping from 4:3 to 16:9, the aspectratiocrop[7] plugin can be used:

gst-launch v4l2src ! video/x-raw-yuv,width=640,height=480,framerate=15/1 ! aspectratiocrop aspect-ratio=16/9 ! \
ffmpegcolorspace ! xvimagesink
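The crop needed for 4:3 to 16:9 is easy to work out: keeping the full 640-pixel width, a 16:9 frame is 640*9/16 = 360 lines high, so 120 lines have to go. The sketch below computes a centered crop under that assumption (I have not checked that aspectratiocrop centers its crop in every case). The resulting numbers could also be passed explicitly to videocrop's top, bottom, left and right properties. crop_to_aspect is a hypothetical helper.

```python
from fractions import Fraction


def crop_to_aspect(width, height, aspect):
    """Return (top, bottom, left, right) pixel counts that center-crop a
    width x height frame to `aspect` (a Fraction, e.g. Fraction(16, 9))."""
    if Fraction(width, height) > aspect:
        # Too wide: keep the full height and trim the sides.
        new_width = height * aspect.numerator // aspect.denominator
        excess = width - new_width
        return 0, 0, excess // 2, excess - excess // 2
    # Too tall (or already right): keep the full width, trim top and bottom.
    new_height = width * aspect.denominator // aspect.numerator
    excess = height - new_height
    return excess // 2, excess - excess // 2, 0, 0
```

For the webcam example this gives (60, 60, 0, 0), i.e. roughly videocrop top=60 bottom=60.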

videobox

videoscale

Filtering

ffmpegcolorspace

gamma

videobalance

smpte - transitions

smptealpha - PiP transparency using SMPTE transition patterns.

Encoding and Muxing

Single Stream

Test Pattern

Encode video to H.264 using x264 and put it into an MPEG-TS transport stream:

gst-launch -e videotestsrc ! video/x-raw-yuv, framerate=25/1, width=640, height=360 ! x264enc ! \
mpegtsmux ! filesink location=test.ts

Note that this requires the Fluendo TS muxer gst-fluendo-mpegmux for muxing and gst-fluendo-mpegdemux for demuxing. The -e option forces an EOS (end-of-stream) event on the sources before shutting the pipeline down. This is useful when we write to files and want to shut down by stopping gst-launch with CTRL+C or the kill command[8]. Alternatively, we can use the num-buffers property to record only a certain number of frames. The following pipeline will record 500 frames and then stop:

gst-launch videotestsrc num-buffers=500 ! video/x-raw-yuv, framerate=25/1, width=640, height=360 ! x264enc \
! mpegtsmux ! filesink location=test.ts

We can use the playbin plugin to play the recorded video:

gst-launch -v playbin uri=file:///path/to/test.ts

The -v option allows us to see which blocks gstreamer decides to use. In this case it will automatically select flutsdemux for demuxing the MPEG-TS and ffdec_h264 for decoding the H.264 video. Note that there appears to be no x264dec and no ffenc_h264.

By default x264enc will use 2048 kbps but this can be set to a different value:

gst-launch -e videotestsrc ! video/x-raw-yuv, framerate=20/1, width=640, height=480 ! x264enc bitrate=512 ! \
mpegtsmux ! filesink location=test.ts

The bitrate is specified in kbps. Note that I've changed the size to 640x480. For H.264 (and most other modern codecs) it is advantageous to use a width and height that are integer multiples of 16, since H.264 codes the picture in 16x16-pixel macroblocks. There are also many other options that can be used to optimize compression, quality and speed.
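Some back-of-the-envelope numbers for the settings above, as a sketch assuming 1 kbps = 1000 bit/s and ignoring MPEG-TS overhead: a num-buffers run lasts num_buffers/framerate seconds, the video payload is roughly bitrate times duration, and a dimension that is not a multiple of 16 is padded up to the next macroblock boundary. All three helper names are hypothetical.

```python
def duration_seconds(num_buffers, fps):
    """Length of a recording limited with num-buffers."""
    return num_buffers / fps


def estimated_size_bytes(bitrate_kbps, seconds):
    """Approximate video payload size at a given average bitrate
    (container overhead, e.g. MPEG-TS packet headers, is ignored)."""
    return bitrate_kbps * 1000 * seconds / 8


def align16(n):
    """Round a width or height up to the next multiple of 16 (one macroblock)."""
    return (n + 15) // 16 * 16
```

For instance, 500 frames at 25 fps is 20 seconds, which at 512 kbps is about 1.28 MB of video, and a height of 360 is 22.5 macroblocks, so the encoder has to pad it to 368 lines internally.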

TODO: Find good settings for (1) high quality (2) fast compression (3) etc...

TODO: There is also ffmux_mpegts, but I cannot make it work; it generates a 564-byte file.

Webcam

If we want to encode the webcam we need to include the ffmpegcolorspace[9] converter block:

gst-launch -e v4l2src ! video/x-raw-yuv, framerate=10/1, width=320, height=240 ! ffmpegcolorspace ! \
x264enc bitrate=256 ! flutsmux ! filesink location=webcam.ts

Multiple Streams

We can mux the test pattern and the webcam into one MPEG-TS stream. For this we first declare the muxer element and name it "muxer". The name is then used as reference when we connect to it:

gst-launch -e mpegtsmux name="muxer" ! filesink location=multi.ts \
v4l2src ! video/x-raw-yuv,format=\(fourcc\)YUY2,framerate=10/1,width=640,height=480 ! videorate ! ffmpegcolorspace ! x264enc ! muxer. \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=480 ! x264enc ! muxer.
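In the gst-launch syntax, a branch ending in muxer. is linked to the element named muxer, and gst-launch finds (or requests) a suitable pad on it for each branch. When the number of streams varies, the description can be assembled in a loop. This is a sketch with a hypothetical helper; the resulting string would be split with shlex.split and passed to gst-launch -e.

```python
def multi_stream_pipeline(muxer, location, branches, name="muxer"):
    """Build a gst-launch description in which a named muxer writes to a
    file and every source branch is terminated with a link to that muxer."""
    parts = [f'{muxer} name="{name}" ! filesink location={location}']
    for branch in branches:
        # 'name.' links the branch to the element called `name`.
        parts.append(f"{branch} ! {name}.")
    return " ".join(parts)
```

Each extra camera or test source is then just one more entry in the branches list.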

We can play the recorded multi.ts file with any MPEG-TS capable player:

- VLC will play both channels at the same time in different windows.

- Mplayer will show one stream and we can swap between the streams using the "_" key.

- gst-launch playbin uri=file:///path/to/multi.ts will play one stream.

TODO: Should be able to get both streams in gstreamer but might require some magic.

Adding Audio

Capturing and encoding audio is really easy:

gst-launch -e pulsesrc ! audioconvert ! lamemp3enc target=1 bitrate=64 cbr=true ! filesink location=audio.mp3

This will record MP3 audio at 64 kbps CBR. Of course, we would prefer OGG or format, but the MPEG-TS stream we want to mux into only supports MPEG audio for now (this is a limitation of the flutsmux plugin, I believe).

To include the audio in the multiplexed stream, we add the audio branch to the pipeline and replace its file sink with the muxer:

gst-launch -e flutsmux name="muxer" ! filesink location=multi.ts \
v4l2src ! video/x-raw-yuv, format=\(fourcc\)YUY2, framerate=10/1, width=640, height=480 ! videorate ! ffmpegcolorspace ! x264enc ! muxer. \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=480 ! x264enc ! muxer. \
pulsesrc ! audioconvert ! lamemp3enc target=1 bitrate=64 cbr=true ! muxer.

The audio input device can be specified using the device property:

gst-launch -e pulsesrc device="alsa_input.usb-046d_0809_52A63768-02.analog-mono" ! audioconvert ! \
lamemp3enc target=1 bitrate=64 cbr=true ! filesink location=audio.mp3

The list of valid audio device names can be found in the output of pactl list, or extracted with this command[10]:

$ pactl list | grep -A2 'Source #' | grep 'Name: ' | cut -d" " -f2

Note that this will also list the monitor source of the audio output:

$ pactl list | grep -A2 'Source #' | grep 'Name: ' | cut -d" " -f2
alsa_output.pci-0000_80_01.0.analog-stereo.monitor
alsa_input.pci-0000_80_01.0.analog-stereo
alsa_input.usb-046d_0809_52A63768-02.analog-mono

To list only the monitor sources, we can use the command[11]:

$ pactl list | grep -A2 'Source #' | grep 'Name: .*\.monitor$' | cut -d" " -f2
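The grep/cut pipeline above can also be done in a script. The sketch below mirrors it: after each Source # header it looks at the next two lines for Name:, and it can optionally keep only (or exclude) the .monitor sources. The sample text is an abridged, hypothetical listing built from the device names shown above; on a real system you would pass the output of pactl list instead. source_names is a hypothetical helper name.

```python
# Abridged, hypothetical sample shaped like `pactl list` output.
EXAMPLE_OUTPUT = """\
Source #0
\tState: SUSPENDED
\tName: alsa_output.pci-0000_80_01.0.analog-stereo.monitor
Source #1
\tState: SUSPENDED
\tName: alsa_input.pci-0000_80_01.0.analog-stereo
Source #2
\tState: RUNNING
\tName: alsa_input.usb-046d_0809_52A63768-02.analog-mono
"""


def source_names(pactl_output, monitors=None):
    """Extract PulseAudio source names from `pactl list` output.

    Mirrors: grep -A2 'Source #' | grep 'Name: ' | cut -d" " -f2
    monitors=None keeps all sources, True only *.monitor, False no monitors.
    """
    names = []
    lines = pactl_output.splitlines()
    for i, line in enumerate(lines):
        if not line.startswith("Source #"):
            continue
        # Like grep -A2: only the two lines after the header are examined.
        for follow in lines[i + 1:i + 3]:
            stripped = follow.strip()
            if stripped.startswith("Name: "):
                name = stripped[len("Name: "):]
                is_monitor = name.endswith(".monitor")
                if monitors is None or monitors == is_monitor:
                    names.append(name)
                break
    return names
```

With a live system, the text could come from subprocess.run(["pactl", "list"], capture_output=True, text=True).stdout.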

Decoding and Demuxing

TBD

Network Streaming

TBD

MPEG-TS can be streamed over UDP (TBC)

Elementary video streams (e.g. H.264 without a container) can be packed into RTP before sending over UDP (TBC)

From man gst-launch:

Network streaming

Stream video using RTP and network elements. This command would be run on the transmitter:

gst-launch v4l2src ! video/x-raw-yuv,width=128,height=96,format='(fourcc)'UYVY ! ffmpegcolorspace ! ffenc_h263 ! \
video/x-h263 ! rtph263ppay pt=96 ! udpsink host=192.168.1.1 port=5000 sync=false

Use this command on the receiver:

gst-launch udpsrc port=5000 ! application/x-rtp, clock-rate=90000,payload=96 ! rtph263pdepay queue-delay=0 ! \
ffdec_h263 ! xvimagesink

This example does not work for me! See: http://cgit.freedesktop.org/gstreamer/gst-plugins-good/tree/gst/rtp/README#n251
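The receiver caps set clock-rate=90000: RTP timestamps for video payloads such as H.263 count ticks of a 90 kHz clock, and the RTP timestamp field is 32 bits wide, so it wraps around (RFC 3550). The sketch below only does this arithmetic and does not involve GStreamer; the helper names are hypothetical.

```python
RTP_VIDEO_CLOCK = 90_000  # Hz, the clock-rate in the receiver caps above


def ticks_per_frame(fps, clock_rate=RTP_VIDEO_CLOCK):
    """RTP timestamp increment between consecutive frames."""
    return clock_rate / fps


def rtp_delta_seconds(ts_earlier, ts_later, clock_rate=RTP_VIDEO_CLOCK):
    """Elapsed time between two RTP timestamps, allowing for the 32-bit wrap."""
    delta = (ts_later - ts_earlier) % (1 << 32)
    return delta / clock_rate
```

At 25 fps, consecutive frames are therefore 3600 ticks apart.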

Compositing

Picture in Picture

The videomixer[12] can be used to mix two or more video streams together forming a PiP effect. The following example will put a 200x150 pixels snow test pattern over a 640x360 pixels SMPTE pattern:

gst-launch -e videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! videomixer name=mix ! \
ffmpegcolorspace ! xvimagesink \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.

GstVideoMixerPad

According to the online documentation[12] the position and Z-order can be adjusted using GstVideoMixerPad properties[13]. These properties can be accessed using Python or C (see this post) or even from gst-launch using references to pads, namely sink_i::xpos, sink_i::ypos, sink_i::alpha and sink_i::zorder, where i is the input stream number starting from 0. The GstVideoMixerPad properties are specified together with the declaration of the videomixer:

gst-launch videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! \
videomixer name=mix sink_1::xpos=20 sink_1::ypos=20 sink_1::alpha=0.5 sink_1::zorder=3 sink_2::xpos=100 sink_2::ypos=100 sink_2::zorder=2 ! \
ffmpegcolorspace ! xvimagesink \
videotestsrc pattern=13 ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! mix. \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.

Thanks to Stefan Kost for this very useful tip.

The order in which input streams are connected to videomixer inputs is deterministic, though difficult to predict. We can have full control over which video stream is connected to which videomixer input by explicitly specifying the pads when we link:

gst-launch \
videomixer name=mix sink_1::xpos=20 sink_1::ypos=20 sink_1::alpha=0.5 sink_1::zorder=3 sink_2::xpos=100 sink_2::ypos=100 sink_2::zorder=2 ! \
ffmpegcolorspace ! xvimagesink \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.sink_0 \
videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! mix.sink_1 \
videotestsrc pattern=13 ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! mix.sink_2

Using this trick we can swap between the two small pictures by simply swapping mix.sink_1 with mix.sink_2.
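The pad properties and pad names follow a regular pattern (sink_N::property and mix.sink_N), so they can be generated from a small table. This is a sketch with hypothetical helper names. Swapping the two small pictures then amounts to swapping the pad indices passed to link_to_pad.

```python
def videomixer_description(pads, name="mix"):
    """Build a videomixer declaration with per-pad properties.

    pads maps a sink pad index to its properties, e.g.
    {1: {"xpos": 20, "ypos": 20, "alpha": 0.5, "zorder": 3}}.
    """
    props = [
        f"sink_{index}::{key}={value}"
        for index, settings in sorted(pads.items())
        for key, value in settings.items()
    ]
    return " ".join([f"videomixer name={name}", *props])


def link_to_pad(branch, index, name="mix"):
    """Terminate a source branch on an explicit mixer pad (mix.sink_N)."""
    return f"{branch} ! {name}.sink_{index}"
```

Passing the same two dictionaries used above reproduces the videomixer declaration from the pipeline.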

VideoBox

We can also move the small video anywhere using the videobox[14] element with a transparent border. The videobox is inserted between the source video and the mixer.

The following pipeline will move the small snow pattern 25 pixels to the right and 20 pixels down:

gst-launch -e videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! videobox border-alpha=0 top=-20 left=-25 ! \
videomixer name=mix ! ffmpegcolorspace ! xvimagesink \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.

Note that the top and left values are negative, which means that pixels will be added. A positive value means that pixels are cropped from the original image. If we had set border-alpha to 1.0, we would have seen a black border along the top and left of the child image.
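Positioning a child image at (x, y) therefore means adding x pixels of transparent border on the left and y pixels on top, i.e. negative left and top values. A minimal sketch (videobox_for_position is a hypothetical helper) that only handles non-negative positions:

```python
def videobox_for_position(x, y, border_alpha=0):
    """videobox settings that place a child image at (x, y) in the mixer
    output by adding a transparent border above and to the left of it."""
    if x < 0 or y < 0:
        raise ValueError("negative positions are not supported by this sketch")
    return f"videobox border-alpha={border_alpha} top={-y} left={-x}"
```

videobox_for_position(25, 20) reproduces the settings used in the pipeline above.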

Transparency of each input stream can be controlled by passing the stream through an alpha filter. This is useful for the main (background) image. For the child image we do not need an additional alpha filter because videobox has its own alpha property:

gst-launch -e videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! \
videobox border-alpha=0 alpha=0.6 top=-20 left=-25 ! videomixer name=mix ! ffmpegcolorspace ! xvimagesink \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.

A border can be added around the child image by adding an additional videobox[14] where the top/left/right/bottom values correspond to the desired border width and border-alpha is set to 1.0 (opaque):

gst-launch -e videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=10/1, width=200, height=150 ! \
videobox border-alpha=1.0 top=-2 bottom=-2 left=-2 right=-2 ! videobox border-alpha=0 alpha=0.6 top=-20 left=-25 ! \
videomixer name=mix ! ffmpegcolorspace ! xvimagesink \
videotestsrc ! video/x-raw-yuv, framerate=10/1, width=640, height=360 ! mix.

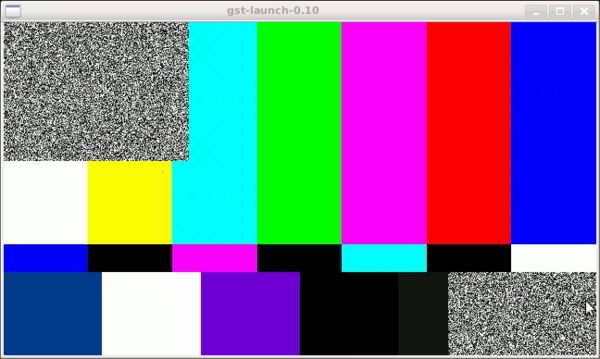

Video Wall

We can of course combine more than two incoming video streams. The following pipeline will take four incoming streams and mix them into a Video Matrix / Wall:

gst-launch -e videomixer name=mix ! ffmpegcolorspace ! xvimagesink \
videotestsrc pattern=1 ! video/x-raw-yuv, framerate=5/1, width=320, height=180 ! videobox border-alpha=0 top=0 left=0 ! mix. \
videotestsrc pattern=15 ! video/x-raw-yuv, framerate=5/1, width=320, height=180 ! videobox border-alpha=0 top=0 left=-320 ! mix. \
videotestsrc pattern=13 ! video/x-raw-yuv, framerate=5/1, width=320, height=180 ! videobox border-alpha=0 top=-180 left=0 ! mix. \
videotestsrc pattern=0 ! video/x-raw-yuv, framerate=5/1, width=320, height=180 ! videobox border-alpha=0 top=-180 left=-320 ! mix. \
videotestsrc pattern=3 ! video/x-raw-yuv, framerate=5/1, width=640, height=360 ! mix.

We had to use a fifth stream as a large background stream.
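The offsets in the video wall follow directly from the tile size: the tile in row r and column c gets top=-r*height and left=-c*width. The sketch below generates the same kind of description for any grid; video_wall is a hypothetical helper and, as in the example, it adds a full-size background stream using pattern 3.

```python
def video_wall(patterns, cols, tile_w, tile_h, fps=5):
    """gst-launch description for a grid of videotestsrc tiles, each moved
    into place with a transparent videobox border, plus a background."""
    caps = "video/x-raw-yuv, framerate={fps}/1, width={w}, height={h}"
    rows = (len(patterns) + cols - 1) // cols
    parts = ["videomixer name=mix ! ffmpegcolorspace ! xvimagesink"]
    for i, pattern in enumerate(patterns):
        row, col = divmod(i, cols)
        parts.append(
            f"videotestsrc pattern={pattern} ! "
            + caps.format(fps=fps, w=tile_w, h=tile_h)
            + f" ! videobox border-alpha=0 top={-row * tile_h} left={-col * tile_w} ! mix."
        )
    # Full-size background stream covering the whole grid.
    parts.append(
        "videotestsrc pattern=3 ! "
        + caps.format(fps=fps, w=cols * tile_w, h=rows * tile_h)
        + " ! mix."
    )
    return " ".join(parts)
```

Called with patterns [1, 15, 13, 0], two columns and 320x180 tiles, it produces the pipeline shown above (without the leading gst-launch -e).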

For a more complex example see Video Wall: Live from Pluto.

Text Overlay

The textoverlay[15] plugin can be used to add text to the video stream:

gst-launch videotestsrc ! video/x-raw-yuv,width=640,height=480,framerate=15/1 ! textoverlay text="Hello" ! ffmpegcolorspace ! ximagesink

It has many options for text positioning and alignment. The font can be set with the font-desc property, using a Pango font description string, e.g. "Sans Italic 24".

TODO: A few font description examples.

Time Overlay

Elapsed time can be added using the timeoverlay[16] plugin:

gst-launch videotestsrc ! timeoverlay ! xvimagesink

Timeoverlay inherits the properties of textoverlay, so its text can be configured with the same properties:

gst-launch -v videotestsrc ! video/x-raw-yuv, framerate=25/1, width=640, height=360 ! \
timeoverlay halign=left valign=bottom text="Stream time:" shaded-background=true ! xvimagesink

Alternatively, cairotimeoverlay[17] can be used but it doesn't seem to have any properties:

gst-launch videotestsrc ! cairotimeoverlay ! xvimagesink

Instead of elapsed time, the system date and time can be added using the clockoverlay[18] plugin:

gst-launch videotestsrc ! clockoverlay ! xvimagesink

Clockoverlay also inherits the properties of textoverlay. In addition, clockoverlay allows setting the time format:

gst-launch videotestsrc ! clockoverlay halign=right valign=bottom shaded-background=true time-format="%Y.%m.%d" ! ffmpegcolorspace ! ximagesink
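The time-format property takes strftime-style conversion codes, so Python's datetime.strftime, which uses the same C conversion codes on Linux, can preview a format before it goes into a pipeline. Note that %d is the day of the month, while %D is shorthand for %m/%d/%y and would repeat the month. preview is a hypothetical helper.

```python
from datetime import datetime


def preview(time_format, when=None):
    """Render a clockoverlay-style time-format string for a given moment
    (defaults to now) using Python's strftime."""
    if when is None:
        when = datetime.now()
    return when.strftime(time_format)
```

For a fixed moment such as datetime(2010, 6, 15, 8, 30, 5), "%Y.%m.%d" renders as 2010.06.15 and "%Y/%m/%d %H:%M:%S" as 2010/06/15 08:30:05.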

Complete Examples

Time-Lapse Video

From recorded video

A simple "surveillance camera" implementation using the Logitech QuickCam Vision Pro 9000 and Gstreamer. Frames from the camera are captured at 5 fps. The date, time and elapsed time are added. The stream is displayed on the screen at the captured rate and resolution and saved to an OGG file at 1 fps:

gst-launch -e v4l2src ! video/x-raw-yuv,format=\(fourcc\)YUY2,width=1280,height=720,framerate=5/1 ! \
ffmpegcolorspace ! \
timeoverlay halign=right valign=top ! clockoverlay halign=left valign=top time-format="%Y/%m/%d %H:%M:%S" ! \
tee name="splitter" ! queue ! xvimagesink sync=false splitter. ! \
queue ! videorate ! video/x-raw-yuv,framerate=1/1 ! \
theoraenc bitrate=256 ! oggmux ! filesink location=webcam.ogg

Note: We can replace theoraenc+oggmux with x264enc+someothermuxer but then the pipeline will freeze unless we make the queue [19] element in front of the xvimagesink leaky, i.e. "queue leaky=1".
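The difference between a normal and a leaky queue can be shown with a toy model (my simplification, not the queue element's code). In GStreamer, leaky=1 means leaky upstream (new incoming buffers are dropped when the queue is full) and leaky=2 means leaky downstream (the oldest queued buffers are dropped); a non-leaky queue blocks the thread that pushes into it. A plausible reason for the freeze, which I have not verified, is that x264enc holds back a number of frames before producing output, so the display queue fills up and blocks the tee; a leaky queue never blocks it. LeakyQueue is a hypothetical name.

```python
from collections import deque


class LeakyQueue:
    """Toy model of a bounded queue element.

    leaky=0: a full queue would block the producer (push returns False).
    leaky=1 (upstream): the incoming buffer is dropped.
    leaky=2 (downstream): the oldest queued buffer is dropped.
    """

    def __init__(self, max_size, leaky=0):
        self.items = deque()
        self.max_size = max_size
        self.leaky = leaky
        self.dropped = 0

    def push(self, item):
        if len(self.items) < self.max_size:
            self.items.append(item)
            return True
        if self.leaky == 1:
            self.dropped += 1          # discard the new buffer
            return True
        if self.leaky == 2:
            self.items.popleft()       # discard the oldest buffer
            self.items.append(item)
            self.dropped += 1
            return True
        return False                   # non-leaky: producer would block here

    def pop(self):
        return self.items.popleft()
```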

To create a time-lapse video we have to extract the individual frames from the recorded ogg file and then assemble them into a new video using the new framerate.

Extract the individual frames:

ffmpeg -i webcam.ogg -r 1 -sameq -f image2 img/webcam-%05d.jpg

Assemble frames to new video:

ffmpeg -r 50 -i img/webcam-%05d.jpg -vcodec libx264 -b 5000k -r 25 timelapse.mov

See the time-lapse video on YouTube.

The "time-lapse factor" can be controlled by setting the input rate. We recorded at 1 fps and specified an input rate of 50 fps while writing a 25 fps output, so ffmpeg drops every other frame: the effective capture rate becomes 0.5 fps, i.e. each output frame covers 2 seconds of real time. If we reduce the input framerate to 25, the time-lapse speed is halved, i.e. 1 second per frame:

ffmpeg -r 25 -i img/webcam-%05d.jpg -vcodec libx264 -b 5000k -r 25 timelapse.mov

See the half-speed time-lapse on YouTube.
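The arithmetic behind the time-lapse factor, as a sketch with hypothetical helper names: reading frames captured at capture_fps with ffmpeg's input rate -r input_rate speeds time up by input_rate/capture_fps, and writing at -r output_rate means each output frame covers speed-up/output_rate seconds of real time.

```python
def speedup(capture_fps, input_rate):
    """Real-time seconds shown per second of assembled video."""
    return input_rate / capture_fps


def seconds_per_output_frame(capture_fps, input_rate, output_rate):
    """Real time covered by one frame of the assembled video. When
    input_rate > output_rate, ffmpeg drops frames to reach output_rate."""
    return speedup(capture_fps, input_rate) / output_rate
```

With 1 fps capture this gives 50x and 2 seconds per frame for -r 50, and 25x and 1 second per frame for -r 25, matching the two videos above.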

Single frame capture

In the first example we recorded the captured frames to a Theora-encoded video file. In order to change the frame rate we had to convert the recorded video to single frames, then re-encode using ffmpeg. A better method is to skip the intermediate video and capture directly to single frames. We can do this using multifilesink[20] and multifilesrc[21].

This will record the camera stream to PNG files:

gst-launch -e v4l2src ! video/x-raw-yuv,format=\(fourcc\)YUY2,width=1280,height=720,framerate=5/1 ! ffmpegcolorspace ! \
timeoverlay halign=right valign=bottom ! clockoverlay halign=left valign=bottom time-format="%Y/%m/%d %H:%M:%S" ! \
videorate ! video/x-raw-rgb,framerate=1/1 ! ffmpegcolorspace ! pngenc snapshot=false ! multifilesink location="frame%05d.png"
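multifilesink substitutes a running frame index into the printf-style location pattern. Python's % operator applies the same %05d formatting, so the file names can be predicted as below; starting at 0 assumes the element's default index. The zero padding keeps alphabetical and numeric order identical up to 99999 frames, which at 1 fps is a little under 28 hours of capture. The helper names are hypothetical.

```python
def frame_files(pattern, count, start=0):
    """File names produced for `count` frames with a printf-style pattern."""
    return [pattern % i for i in range(start, start + count)]


def capacity_hours(digits, fps):
    """Capture time before a zero-padded index needs more digits."""
    return 10 ** digits / fps / 3600
```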

It was a quick hack and it might have been possible to avoid the two ffmpegcolorspace converters. We can convert to a time-lapse video as before (this time I used a different codec and framerate):

ffmpeg -i timelapse.mp3 -r 100 -i img/frame%05d.png -sameq -r 50 -ab 320k timelapse.mp4

Note that this time I generated an MP4 video with 50 fps. You can watch the result on YouTube or download the MP4 file here.

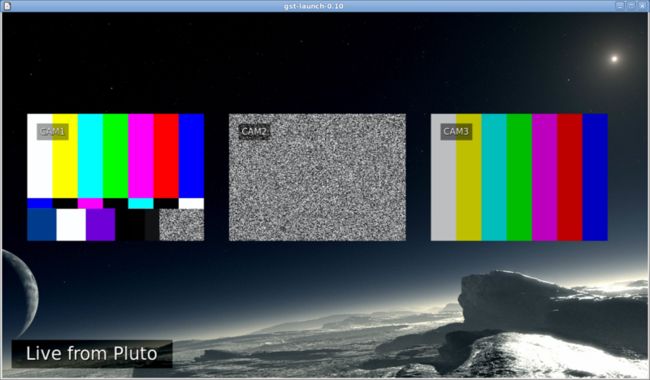

Video Wall: Live from Pluto

Our robotic spaceship has landed on Pluto and is ready to transmit awesome video from the three onboard cameras CAM1, CAM2 and CAM3. We want to show the images on a video wall with a nice background, something like this:

We can accomplish this using picture-in-picture compositing but it is now a little more complicated than the simple examples shown earlier. We have:

- Three small video feeds of size 350x250 pixels

- Each small video feed has a textoverlay showing CAMx

- A large background of 1280x720 pixels coming from a still image (JPG file)

- A textoverlay saying "Live from Pluto" at the bottom left of the main screen

- The three video feeds, CAM1, CAM2 and CAM3, are put on top of the main screen.

The diagram for the pipeline is shown below. The text above the arrows specifies the pixel formats for a given video stream in the pipeline. If the pipeline fails to launch with an error saying something like "streaming task paused, reason not-negotiated (-4)", it is very often due to an incompatible connection (mismatched caps) between two elements. Gstreamer is not always very good at telling you which one.

And here is the complete pipeline as entered on the command line:

gst-launch -e videomixer name=mix ! ffmpegcolorspace ! xvimagesink \
videotestsrc pattern=0 ! video/x-raw-yuv, framerate=1/1, width=350, height=250 ! \
textoverlay font-desc="Sans 24" text="CAM1" valign=top halign=left shaded-background=true ! \
videobox border-alpha=0 top=-200 left=-50 ! mix. \
videotestsrc pattern="snow" ! video/x-raw-yuv, framerate=1/1, width=350, height=250 ! \
textoverlay font-desc="Sans 24" text="CAM2" valign=top halign=left shaded-background=true ! \
videobox border-alpha=0 top=-200 left=-450 ! mix. \
videotestsrc pattern=13 ! video/x-raw-yuv, framerate=1/1, width=350, height=250 ! \
textoverlay font-desc="Sans 24" text="CAM3" valign=top halign=left shaded-background=true ! \
videobox border-alpha=0 top=-200 left=-850 ! mix. \
multifilesrc location="pluto.jpg" caps="image/jpeg,framerate=1/1" ! jpegdec ! \
textoverlay font-desc="Sans 26" text="Live from Pluto" halign=left shaded-background=true auto-resize=false ! \
ffmpegcolorspace ! video/x-raw-yuv,format=\(fourcc\)AYUV ! mix.

A few notes on the pipeline:

- For the large background image, which is a still frame, I wanted to use the imagefreeze block, which generates a video stream from a single image file. Unfortunately, it seems that this block is very new and not in the gstreamer package that comes with Ubuntu 10.04. Therefore, I had to do the trick with multifilesrc, making it read the same file over and over again.

- I had a hard time getting this pipeline to work. Eventually I found out that my problems were due to incompatible caps in the multifilesrc part of the pipeline. That's why there is an extra color conversion block between the textoverlay and the videomixer.

- The videobox elements are used to add a transparent border to the small video feeds, causing the real video to "move".
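The CAMx offsets in the pipeline come from a simple row layout: each 350-pixel feed is followed by a 50-pixel gap, starting 50 pixels from the left edge, with all feeds 200 pixels from the top. The sketch below (hypothetical helper names) computes those positions, checks that the row fits on the 1280-pixel-wide background, and formats the matching videobox settings.

```python
def row_positions(count, tile_w, canvas_w, left_margin, gap):
    """x offsets of `count` tiles placed left to right with a fixed gap."""
    xs = [left_margin + i * (tile_w + gap) for i in range(count)]
    if xs and xs[-1] + tile_w > canvas_w:
        raise ValueError("the row of tiles is wider than the canvas")
    return xs


def camera_boxes(xs, top):
    """videobox settings (transparent border) that move each feed into place."""
    return [f"videobox border-alpha=0 top={-top} left={-x}" for x in xs]
```

For three feeds this yields x offsets 50, 450 and 850; the last feed ends at 1200 pixels, leaving 80 pixels of background on the right.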

You can watch the Live from Pluto GStreamer video wall in action on YouTube.

References

- [1] Gstreamer documentation http://gstreamer.freedesktop.org/documentation/

- [2] GStreamer Base Plugins 0.10 Plugins Reference Manual – videotestsrc

- [3] GStreamer Good Plugins 0.10 Plugins Reference Manual – v4l2src

- [4] GStreamer Base Plugins 0.10 Plugins Reference Manual – videorate

- [5] FOURCC website – YUV formats

- [6] Gtk+ UVC Viewer: http://guvcview.berlios.de/

- [7] GStreamer Good Plugins 0.10 Plugins Reference Manual – aspectratiocrop

- [8] Elphel Development Blog – Interfacing Elphel cameras with GStreamer, OpenCV, OpenGL/GLSL and python.

- [9] GStreamer Base Plugins 0.10 Plugins Reference Manual – ffmpegcolorspace. The details are available via gst-inspect ffmpegcolorspace.

- [10] Pulseaudio FAQ – How do I record stuff? http://pulseaudio.org/wiki/FAQ#HowdoIrecordstuff

- [11] Pulseaudio FAQ – How do I record other programs' output? http://pulseaudio.org/wiki/FAQ#HowdoIrecordotherprogramsoutput

- [12] GStreamer Good Plugins 0.10 Plugins Reference Manual – videomixer

- [13] GStreamer Good Plugins 0.10 Plugins Reference Manual – GstVideoMixerPad

- [14] GStreamer Good Plugins 0.10 Plugins Reference Manual – videobox

- [15] GStreamer Base Plugins 0.10 Plugins Reference Manual – textoverlay

- [16] GStreamer Base Plugins 0.10 Plugins Reference Manual – timeoverlay

- [17] GStreamer Good Plugins 0.10 Plugins Reference Manual – cairotimeoverlay

- [18] GStreamer Base Plugins 0.10 Plugins Reference Manual – clockoverlay

- [19] GStreamer Core Plugins 0.10 Plugins Reference Manual – queue

- [20] GStreamer Good Plugins 0.10 Plugins Reference Manual – multifilesink

- [21] GStreamer Good Plugins 0.10 Plugins Reference Manual – multifilesrc

Other useful links:

- Camera controls example software

- GStreamer Plugin Writer's Guide: List of Defined Types

- http://www.twm-kd.com/computers/software/webcam-and-linux-gstreamer-tutorial/

- http://www.cin.ufpe.br/~cinlug/wiki/index.php/Introducing_GStreamer

- http://noraisin.net/~jan/diary/?p=40

- http://code.google.com/p/gst-gengui/
