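Official Examples Explained 4.1 (addition_rnn.py) - Keras Study Notes, Part 4

Addition with a recurrent neural network

Corrections are welcome.

Keras examples index

Glossary

Recurrent neural network (RNN)

Long short-term memory network (LSTM)

Annotated code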

# -*- coding: utf-8 -*-
'''An implementation of sequence to sequence learning for performing addition

Input: "535+61"
Output: "596"
Padding is handled by using a repeated sentinel character (space)

Input may optionally be inverted, shown to increase performance in many tasks in:
"Learning to Execute"
http://arxiv.org/abs/1410.4615
and
"Sequence to Sequence Learning with Neural Networks"
http://papers.nips.cc/paper/5346-sequence-to-sequence-learning-with-neural-networks.pdf
Theoretically it introduces shorter term dependencies between source and target.

Two digits inverted:
+ One layer LSTM (128 HN), 5k training examples = 99% train/test accuracy in 55 epochs

Three digits inverted:
+ One layer LSTM (128 HN), 50k training examples = 99% train/test accuracy in 100 epochs

Four digits inverted:
+ One layer LSTM (128 HN), 400k training examples = 99% train/test accuracy in 20 epochs

Five digits inverted:
+ One layer LSTM (128 HN), 550k training examples = 99% train/test accuracy in 30 epochs
'''

from __future__ import print_function
from keras.models import Sequential
from keras import layers
import numpy as np
from six.moves import range

class CharacterTable(object):
    """Given a set of characters:
    + Encode them to a one hot integer representation
    + Decode the one hot integer representation to their character output
    + Decode a vector of probabilities to their character output
    """
    def __init__(self, chars):
        """Initialize character table.

        # Arguments
            chars: Characters that can appear in the input.
        """
        self.chars = sorted(set(chars))
        # Two lookup tables: character -> index and index -> character.
        self.char_indices = dict((c, i) for i, c in enumerate(self.chars))
        self.indices_char = dict((i, c) for i, c in enumerate(self.chars))

    def encode(self, C, num_rows):
        """One hot encode given string C.

        # Arguments
            num_rows: Number of rows in the returned one hot encoding. This is
                used to keep the # of rows for each data the same.
        """
        x = np.zeros((num_rows, len(self.chars)))
        for i, c in enumerate(C):
            x[i, self.char_indices[c]] = 1
        return x

    def decode(self, x, calc_argmax=True):
        # With calc_argmax=True, x holds one-hot rows or probabilities;
        # otherwise x is already a sequence of class indices.
        if calc_argmax:
            x = x.argmax(axis=-1)
        return ''.join(self.indices_char[i] for i in x)


class colors:
    ok = '\033[92m'
    fail = '\033[91m'
    close = '\033[0m'

# Parameters for the model and dataset.
TRAINING_SIZE = 50000
DIGITS = 3
INVERT = True

# Maximum length of input is 'int + int' (e.g., '345+678', which is
# 3 + 1 + 3 = 7 characters). Maximum length of int is DIGITS (3 here).
MAXLEN = DIGITS + 1 + DIGITS  # 7

# All the numbers, plus sign and space for padding.
chars = '0123456789+ '
ctable = CharacterTable(chars)

'''
From chars = '0123456789+ ', CharacterTable builds two dicts:
char_indices = {' ': 0, '+': 1, '0': 2, '1': 3, '2': 4, '3': 5, '4': 6, '5': 7, '6': 8, '7': 9, '8': 10, '9': 11}
indices_char = {0: ' ', 1: '+', 2: '0', 3: '1', 4: '2', 5: '3', 6: '4', 7: '5', 8: '6', 9: '7', 10: '8', 11: '9'}
'''

questions = []
expected = []
seen = set()
print('Generating data...')
while len(questions) < TRAINING_SIZE:
    # f draws a random integer with 1 to DIGITS digits.
    f = lambda: int(''.join(np.random.choice(list('0123456789'))
                    for i in range(np.random.randint(1, DIGITS + 1))))
    a, b = f(), f()
    # Skip any addition questions we've already seen.
    # Also skip any such that x+Y == Y+x (hence the sorting).
    key = tuple(sorted((a, b)))
    if key in seen:
        continue
    seen.add(key)
    # Pad the data with spaces such that it is always MAXLEN
    # (DIGITS + 1 + DIGITS = 7 characters).
    q = '{}+{}'.format(a, b)
    query = q + ' ' * (MAXLEN - len(q))
    ans = str(a + b)
    # Answers can be of maximum size DIGITS + 1.
    ans += ' ' * (DIGITS + 1 - len(ans))
    if INVERT:
        # Reverse the query, e.g., '12+345 ' becomes ' 543+21'. (Note the
        # space used for padding; it moves to the front.)
        query = query[::-1]
    questions.append(query)
    expected.append(ans)
print('Total addition questions:', len(questions))

print('Vectorization...')
# dtype=bool replaces the np.bool alias, which newer NumPy releases removed.
x = np.zeros((len(questions), MAXLEN, len(chars)), dtype=bool)
y = np.zeros((len(questions), DIGITS + 1, len(chars)), dtype=bool)
for i, sentence in enumerate(questions):
    x[i] = ctable.encode(sentence, MAXLEN)
for i, sentence in enumerate(expected):
    y[i] = ctable.encode(sentence, DIGITS + 1)

# Shuffle (x, y) in unison as the later parts of x will almost all be larger
# digits. The same permutation is applied to both arrays, so each question
# stays paired with its answer.
indices = np.arange(len(y))
np.random.shuffle(indices)
x = x[indices]
y = y[indices]

# Explicitly set apart 10% for validation data that we never train over.
split_at = len(x) - len(x) // 10
(x_train, x_val) = x[:split_at], x[split_at:]
(y_train, y_val) = y[:split_at], y[split_at:]

print('Training Data:')
print(x_train.shape)
print(y_train.shape)

print('Validation Data:')
print(x_val.shape)
print(y_val.shape)

# Try replacing LSTM with GRU (gated recurrent unit) or SimpleRNN.
RNN = layers.LSTM
HIDDEN_SIZE = 128
BATCH_SIZE = 128
LAYERS = 1

print('Build model...')
model = Sequential()
# "Encode" the input sequence using an RNN, producing an output of HIDDEN_SIZE.
# Note: In a situation where your input sequences have a variable length,
# use input_shape=(None, num_feature).
model.add(RNN(HIDDEN_SIZE, input_shape=(MAXLEN, len(chars))))
# As the decoder RNN's input, repeatedly provide the last hidden state of the
# encoder RNN for each time step. Repeat 'DIGITS + 1' times as that's the
# maximum length of output, e.g., when DIGITS=3, max output is 999+999=1998.
model.add(layers.RepeatVector(DIGITS + 1))
# The decoder RNN could be multiple layers stacked or a single layer.
for _ in range(LAYERS):
    # By setting return_sequences to True, return not only the last output but
    # all the outputs so far in the form of (num_samples, timesteps,
    # output_dim). This is necessary as TimeDistributed below expects the
    # first dimension (after the batch axis) to be the timesteps.
    model.add(RNN(HIDDEN_SIZE, return_sequences=True))

# Apply a dense layer to every temporal slice of the input. For each step
# of the output sequence, decide which character should be chosen.
model.add(layers.TimeDistributed(layers.Dense(len(chars))))
model.add(layers.Activation('softmax'))
model.compile(loss='categorical_crossentropy',
              optimizer='adam',
              metrics=['accuracy'])
model.summary()

# Train the model each generation and show predictions against the validation
# dataset.
for iteration in range(1, 200):
    print()
    print('-' * 50)
    print('Iteration', iteration)
    model.fit(x_train, y_train,
              batch_size=BATCH_SIZE,
              epochs=1,
              validation_data=(x_val, y_val))
    # Select 10 samples from the validation set at random so we can visualize
    # errors.
    for i in range(10):
        ind = np.random.randint(0, len(x_val))
        rowx, rowy = x_val[np.array([ind])], y_val[np.array([ind])]
        # The original called model.predict_classes(rowx, verbose=0), which
        # recent Keras releases no longer provide. Taking the argmax of
        # predict() yields the same class indices.
        preds = model.predict(rowx, verbose=0).argmax(axis=-1)
        q = ctable.decode(rowx[0])
        correct = ctable.decode(rowy[0])
        guess = ctable.decode(preds[0], calc_argmax=False)
        print('Q', q[::-1] if INVERT else q, end=' ')
        print('T', correct, end=' ')
        if correct == guess:
            print(colors.ok + '☑' + colors.close, end=' ')
        else:
            print(colors.fail + '☒' + colors.close, end=' ')
        print(guess)
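The padding and inversion steps in the data generation loop are easier to follow in isolation. The sketch below repeats them for a single pair of numbers. The helper name make_example is hypothetical (it is not part of addition_rnn.py), and DIGITS = 3 is assumed, as in the listing.

```python
DIGITS = 3
MAXLEN = DIGITS + 1 + DIGITS  # 7


def make_example(a, b, invert=True):
    """Hypothetical helper: build one padded (query, answer) pair."""
    q = '{}+{}'.format(a, b)
    # Pad the question on the right up to MAXLEN characters.
    query = q + ' ' * (MAXLEN - len(q))
    ans = str(a + b)
    # Pad the answer on the right up to DIGITS + 1 characters.
    ans += ' ' * (DIGITS + 1 - len(ans))
    if invert:
        # Reversing moves the padding to the front of the query.
        query = query[::-1]
    return query, ans


print(make_example(12, 345))   # (' 543+21', '357 ')
print(make_example(999, 999))  # ('999+999', '1998')
```

Only the query is reversed. The answer keeps its normal digit order and its trailing padding.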

class colors:
    ok = '\033[92m'
    fail = '\033[91m'
    close = '\033[0m'

This class marks the validation results: ok is green and fail is red. It is used in this part of the code:

    print('Q', q[::-1] if INVERT else q, end=' ')
    print('T', correct, end=' ')
    if correct == guess:
        print(colors.ok + '☑' + colors.close, end=' ')
    else:
        print(colors.fail + '☒' + colors.close, end=' ')
    print(guess)

A correct guess is printed with a green ☑ and a wrong guess with a red ☒.
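The marking logic can be tried without training a model. In this minimal sketch the escape codes are copied from the colors class, while the helper name mark and the sample strings are made up for illustration. It assumes a terminal that interprets ANSI escape codes.

```python
class colors:
    ok = '\033[92m'     # switch to bright green
    fail = '\033[91m'   # switch to bright red
    close = '\033[0m'   # reset to the default color


def mark(correct, guess):
    """Hypothetical helper: return the colored check mark or cross."""
    if correct == guess:
        return colors.ok + '☑' + colors.close
    return colors.fail + '☒' + colors.close


# Illustrative values only, not taken from a real run.
print('T', '596 ', mark('596 ', '596 '), '596 ')
print('T', '596 ', mark('596 ', '586 '), '586 ')
```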

Example of what CharacterTable does

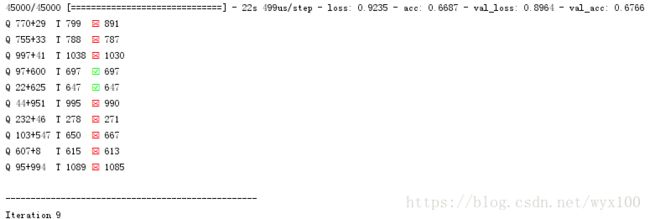
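The same encode and decode round trip can be reproduced in a few lines. This sketch mirrors the logic of CharacterTable with plain functions so that it runs on its own. The input string '12+3' is an arbitrary example.

```python
import numpy as np

chars = sorted(set('0123456789+ '))
char_indices = {c: i for i, c in enumerate(chars)}
indices_char = {i: c for i, c in enumerate(chars)}


def encode(s, num_rows):
    """One-hot encode s into a (num_rows, len(chars)) array."""
    x = np.zeros((num_rows, len(chars)))
    for i, c in enumerate(s):
        x[i, char_indices[c]] = 1
    return x


def decode(x, calc_argmax=True):
    """Turn one-hot rows (or class indices) back into a string."""
    if calc_argmax:
        x = x.argmax(axis=-1)
    return ''.join(indices_char[i] for i in x)


onehot = encode('12+3', 7)
print(onehot.shape)            # (7, 12)
print(onehot.argmax(axis=-1))  # [3 4 1 5 0 0 0]
print(repr(decode(onehot)))    # '12+3   '
```

The three unused rows are all zeros, so argmax returns index 0 for them, and index 0 is the space character. In the script itself every string is padded before encoding, so those rows hold an explicit one-hot space instead.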

Execution log

C:\ProgramData\Anaconda3\python.exe E:/keras-master/examples/addition_rnn.py

Using TensorFlow backend.

Generating data...

Total addition questions: 50000

Vectorization...

Training Data:

(45000, 7, 12)

(45000, 4, 12)

Validation Data:

(5000, 7, 12)

(5000, 4, 12)

Build model...

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

lstm_1 (LSTM) (None, 128) 72192

_________________________________________________________________

repeat_vector_1 (RepeatVecto (None, 4, 128) 0

_________________________________________________________________

lstm_2 (LSTM) (None, 4, 128) 131584

_________________________________________________________________

time_distributed_1 (TimeDist (None, 4, 12) 1548

_________________________________________________________________

activation_1 (Activation) (None, 4, 12) 0

=================================================================

Total params: 205,324

Trainable params: 205,324

Non-trainable params: 0

_________________________________________________________________

--------------------------------------------------

Iteration 1

Train on 45000 samples, validate on 5000 samples

Epoch 1/1

2018-02-19 22:44:31.792747: I C:\tf_jenkins\workspace\rel-win\M\windows\PY\36\tensorflow\core\platform\cpu_feature_guard.cc:137] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX

128/45000 [..............................] - ETA: 10:05 - loss: 2.4843 - acc: 0.0723

384/45000 [..............................] - ETA: 3:30 - loss: 2.4783 - acc: 0.1621

640/45000 [..............................] - ETA: 2:11 - loss: 2.4713 - acc: 0.1859

896/45000 [..............................] - ETA: 1:37 - loss: 2.4619 - acc: 0.1978

1152/45000 [..............................] - ETA: 1:18 - loss: 2.4510 - acc: 0.2036

1408/45000 [..............................] - ETA: 1:06 - loss: 2.4370 - acc: 0.2058

1664/45000 [>.............................] - ETA: 57s - loss: 2.4177 - acc: 0.2076

1920/45000 [>.............................] - ETA: 51s - loss: 2.3948 - acc: 0.2089

2176/45000 [>.............................] - ETA: 46s - loss: 2.3777 - acc: 0.2104

2432/45000 [>.............................] - ETA: 43s - loss: 2.3627 - acc: 0.2116

2688/45000 [>.............................] - ETA: 40s - loss: 2.3488 - acc: 0.2118

2944/45000 [>.............................] - ETA: 37s - loss: 2.3335 - acc: 0.2131

3200/45000 [=>............................] - ETA: 35s - loss: 2.3226 - acc: 0.2130

3456/45000 [=>............................] - ETA: 33s - loss: 2.3106 - acc: 0.2143

3712/45000 [=>............................] - ETA: 32s - loss: 2.3005 - acc: 0.2143

...

42880/45000 [===========================>..] - ETA: 0s - loss: 1.3089e-04 - acc: 1.0000

43136/45000 [===========================>..] - ETA: 0s - loss: 1.3101e-04 - acc: 1.0000

43392/45000 [===========================>..] - ETA: 0s - loss: 1.3093e-04 - acc: 1.0000

43648/45000 [============================>.] - ETA: 0s - loss: 1.3082e-04 - acc: 1.0000

43904/45000 [============================>.] - ETA: 0s - loss: 1.3075e-04 - acc: 1.0000

44160/45000 [============================>.] - ETA: 0s - loss: 1.3079e-04 - acc: 1.0000

44416/45000 [============================>.] - ETA: 0s - loss: 1.3073e-04 - acc: 1.0000

44672/45000 [============================>.] - ETA: 0s - loss: 1.3048e-04 - acc: 1.0000

44928/45000 [============================>.] - ETA: 0s - loss: 1.3037e-04 - acc: 1.0000

45000/45000 [==============================] - 10s 221us/step - loss: 1.3031e-04 - acc: 1.0000 - val_loss: 9.5955e-04 - val_acc: 0.9998

Q 478+869 T 1347 ☑ 1347

Q 36+34 T 70 ☑ 70

Q 303+382 T 685 ☑ 685

Q 1+611 T 612 ☑ 612

Q 674+72 T 746 ☑ 746

Q 803+32 T 835 ☑ 835

Q 444+948 T 1392 ☑ 1392

Q 634+34 T 668 ☑ 668

Q 4+208 T 212 ☑ 212

Q 8+970 T 978 ☑ 978

Process finished with exit code 0
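The parameter counts in the model summary above can be checked by hand. The sketch below assumes the standard LSTM layout (four gates, each with an input kernel, a recurrent kernel, and a bias vector) and a dense layer with a bias. The helper names lstm_params and dense_params are hypothetical.

```python
def lstm_params(input_dim, units):
    # 4 gates x (input kernel + recurrent kernel + bias)
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim, units):
    # weight matrix + bias
    return input_dim * units + units


encoder = lstm_params(12, 128)    # lstm_1: 12 input features per time step
decoder = lstm_params(128, 128)   # lstm_2: fed the repeated 128-dim vector
output = dense_params(128, 12)    # dense layer inside TimeDistributed

print(encoder, decoder, output, encoder + decoder + output)
# 72192 131584 1548 205324
```

RepeatVector and Activation have no weights, which matches the zeros in the Param # column.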