Hadoop Run Modes: Pseudo-Distributed Mode

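I. Starting HDFS and Running a MapReduce Program

1. Overview

(1) Configure the cluster

(2) Start the cluster and test create, delete, and list operations

(3) Run the wordcount example

2. Steps

(1) Configure the cluster

(a) Configure hadoop-env.sh

Get the JDK installation path on the Linux system:

[admin@hadoop101 ~]$ echo $JAVA_HOME

/opt/module/jdk1.8.0_144

Set JAVA_HOME in hadoop-env.sh to that path:

export JAVA_HOME=/opt/module/jdk1.8.0_144

(b) Configure core-site.xml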

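<!-- Address of the HDFS NameNode -->
<property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoop101:9000</value>
</property>

<!-- Base directory for files Hadoop generates at runtime -->
<property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/module/hadoop-2.7.2/data/tmp</value>
</property>

(c) Configure hdfs-site.xml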

<!-- Number of HDFS block replicas; 1 because there is only one DataNode -->
<property>
    <name>dfs.replication</name>
    <value>1</value>
</property>

(2) Start the cluster
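Before starting any daemon, note that the property snippets in step (1) are fragments: in each *-site.xml file they belong inside the single <configuration> root element. As a minimal sketch, the commands below write a complete core-site.xml and hdfs-site.xml into a scratch directory so the full layout can be compared with the files being edited. The conf_dir variable and the use of mktemp are assumptions for illustration; the real files live in etc/hadoop under the Hadoop installation.

```shell
# Write example site files into a scratch directory (not the live etc/hadoop).
conf_dir=$(mktemp -d)

cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://hadoop101:9000</value>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/opt/module/hadoop-2.7.2/data/tmp</value>
    </property>
</configuration>
EOF

cat > "$conf_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
</configuration>
EOF

# Each file should report exactly one <configuration> opening tag.
grep -c '<configuration>' "$conf_dir/core-site.xml" "$conf_dir/hdfs-site.xml"
```

Writing to a scratch directory keeps the sketch from overwriting a working configuration; copy the contents into etc/hadoop only after reviewing them.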

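(a) Format the NameNode (only before the first start; do not reformat on later starts, because formatting discards the existing HDFS metadata)

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs namenode -format

(b) Start the NameNode

[admin@hadoop101 hadoop-2.7.2]$ sbin/hadoop-daemon.sh start namenode

(c) Start the DataNode

[admin@hadoop101 hadoop-2.7.2]$ sbin/hadoop-daemon.sh start datanode

(3) View the cluster

(a) Check that the daemons started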

[admin@hadoop101 hadoop-2.7.2]$ jps

13586 NameNode

13668 DataNode

13786 Jps

(b) View the generated logs

Current directory: /opt/module/hadoop-2.7.2/logs

[admin@hadoop101 logs]$ ls

hadoop-admin-datanode-hadoop.admin.com.log

hadoop-admin-datanode-hadoop.admin.com.out

hadoop-admin-namenode-hadoop.admin.com.log

hadoop-admin-namenode-hadoop.admin.com.out

SecurityAuth-root.audit

[admin@hadoop101 logs]$ cat hadoop-admin-datanode-hadoop.admin.com.log

(c) View the HDFS file system in the web UI

http://192.168.1.101:50070/dfshealth.html#tab-overview
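The jps check in step (a) can also be scripted. The sketch below is one possible approach, not part of Hadoop: missing_daemons is a hypothetical helper that reads jps output on standard input and prints the name of every expected daemon that does not appear in it. It assumes the two-column "PID Name" format shown above.

```shell
# Print each expected daemon name (given as arguments) that is absent
# from the jps output supplied on standard input.
missing_daemons() {
  jps_output=$(cat)
  for name in "$@"; do
    # Column 2 of each jps line is the process name; match it exactly.
    if ! printf '%s\n' "$jps_output" | awk '{ print $2 }' | grep -qx "$name"; then
      printf '%s\n' "$name"
    fi
  done
}

# Usage on the cluster node:
#   jps | missing_daemons NameNode DataNode
# No output means both daemons are running.
```

Reading from standard input rather than calling jps inside the function keeps the helper easy to try against saved output.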

(4) Operate the cluster

(a) Create an input directory on HDFS

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -mkdir -p /user/admin/input

(b) Upload the test file to HDFS

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -put wcinput/wc.input /user/admin/input/

(c) Check that the file was uploaded correctly

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -ls /user/admin/input/

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/admin/input/wc.input

(d) Run the MapReduce program

[admin@hadoop101 hadoop-2.7.2]$ bin/hadoop jar

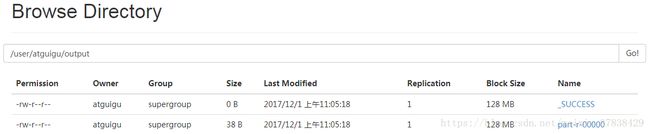

share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/admin/input/ /user/admin/output(e)查看输出结果

命令行查看:

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/admin/output/*(f)将测试文件内容下载到本地

[admin@hadoop101 hadoop-2.7.2]$ hadoop fs -get /user/admin/

output/part-r-00000 ./wcoutput/(g)删除输出结果

[admin@hadoop101 hadoop-2.7.2]$ hdfs dfs -rm -r /user/admin/output二、YARN上运行MapReduce 程序

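1. Overview

(1) Configure the cluster to run MapReduce on YARN

(2) Start the cluster and test create, delete, and list operations

(3) Run the wordcount example on YARN

2. Steps

(1) Configure the cluster

(a) Configure yarn-env.sh

Set JAVA_HOME:

export JAVA_HOME=/opt/module/jdk1.8.0_144

(b) Configure yarn-site.xml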

<!-- How reducers fetch map output -->
<property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
</property>

<!-- Host that runs the YARN ResourceManager -->
<property>
    <name>yarn.resourcemanager.hostname</name>
    <value>hadoop101</value>
</property>

(c) Configure mapred-env.sh

Set JAVA_HOME:

export JAVA_HOME=/opt/module/jdk1.8.0_144

(d) Configure mapred-site.xml (first rename mapred-site.xml.template to mapred-site.xml)

[admin@hadoop101 hadoop]$ mv mapred-site.xml.template mapred-site.xml

[admin@hadoop101 hadoop]$ vi mapred-site.xml

<!-- Run MapReduce on YARN -->
<property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
</property>

(2) Start the cluster

(a) Before starting, make sure the NameNode and DataNode are already running

(b) Start the ResourceManager

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start resourcemanager

(c) Start the NodeManager

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start nodemanager

(3) Operate the cluster

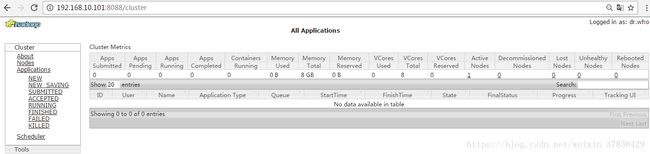

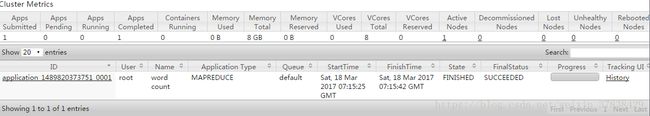

(a) View the YARN page in a browser, as shown in the figure

(b) Delete the output directory on HDFS

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -rm -R /user/admin/output

(c) Run the MapReduce program

[admin@hadoop101 hadoop-2.7.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/admin/input /user/admin/output

(d) View the results, as shown in the figure

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -cat /user/admin/output/*
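To cross-check the wordcount output, the same counts can be computed locally with standard tools. This is a rough sketch rather than a replacement for the job: local_wordcount is a hypothetical helper, and it assumes the input is plain text whose words are separated by whitespace, which is how the bundled wordcount example tokenizes lines. Sorting under the C locale is intended to approximate the byte-wise key order of the job output.

```shell
# Count whitespace-separated words from standard input and print
# "word<TAB>count" lines sorted by word.
local_wordcount() {
  tr -s '[:space:]' '\n' |        # one word per line
    grep -v '^$' |                # drop any empty line left by leading whitespace
    LC_ALL=C sort |               # group identical words, byte-wise order
    uniq -c |                     # prefix each word with its count
    awk '{ print $2 "\t" $1 }'    # reorder to word<TAB>count
}

# Usage from the hadoop-2.7.2 directory:
#   local_wordcount < wcinput/wc.input
```

If the local result and the contents of /user/admin/output differ, the input file on HDFS is the first thing to compare against the local copy.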

III. Configuring the History Server

1. Configure mapred-site.xml

[admin@hadoop101 hadoop]$ vi mapred-site.xml

<!-- JobHistory server address -->
<property>
    <name>mapreduce.jobhistory.address</name>
    <value>hadoop101:10020</value>
</property>

<!-- JobHistory server web UI address -->
<property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>hadoop101:19888</value>
</property>

2. Locate the history server start script

[admin@hadoop101 hadoop-2.7.2]$ ls sbin/ | grep mr

mr-jobhistory-daemon.sh

3. Start the history server

[admin@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

4. Check that the history server is running

[admin@hadoop101 hadoop-2.7.2]$ jps

5. View the JobHistory page

http://192.168.1.101:19888/jobhistory

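IV. Configuring Log Aggregation

Log aggregation: after an application finishes running, its logs are uploaded to HDFS.

Steps to enable log aggregation:

1. Configure yarn-site.xml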

[admin@hadoop101 hadoop]$ vi yarn-site.xml

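<!-- Enable log aggregation -->
<property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
</property>

<!-- Keep aggregated logs for 7 days (value in seconds) -->
<property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>604800</value>
</property>

2. Stop the ResourceManager, NodeManager, and HistoryServer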

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh stop resourcemanager

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh stop nodemanager

[admin@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh stop historyserver

3. Start the ResourceManager, NodeManager, and HistoryServer

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start resourcemanager

[admin@hadoop101 hadoop-2.7.2]$ sbin/yarn-daemon.sh start nodemanager

[admin@hadoop101 hadoop-2.7.2]$ sbin/mr-jobhistory-daemon.sh start historyserver4.删除hdfs上已经存在的hdfs文件

[admin@hadoop101 hadoop-2.7.2]$ bin/hdfs dfs -rm -R /user/admin/output5.执行wordcount程序

[admin@hadoop101 hadoop-2.7.2]$ hadoop jar

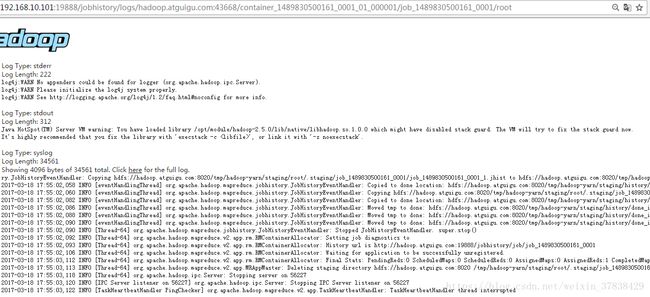

share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /user/admin/input /user/admin/output6.查看日志,如图
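The yarn.log-aggregation.retain-seconds value from step 1 is a retention period expressed in seconds. A small sketch of the arithmetic, using a hypothetical days_to_seconds helper, shows that 604800 corresponds to seven days and makes it easy to derive a value for a different period.

```shell
# Convert a whole number of days into seconds.
days_to_seconds() {
  echo $(( $1 * 24 * 60 * 60 ))
}

days_to_seconds 7   # prints 604800
```

Once aggregation is enabled, the yarn logs -applicationId <application ID> command can also print the aggregated logs of a finished application from the command line, as an alternative to the web page.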

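V. Configuration Files

Hadoop configuration files fall into two groups: default configuration files and custom (site) configuration files. A custom file needs editing only when you want to override a default value; set the corresponding property there.

(1) Default configuration files: packaged inside the corresponding Hadoop jar files

[core-default.xml]

hadoop-common-2.7.2.jar/core-default.xml

[hdfs-default.xml]

hadoop-hdfs-2.7.2.jar/hdfs-default.xml

[yarn-default.xml]

hadoop-yarn-common-2.7.2.jar/yarn-default.xml

[mapred-default.xml]

hadoop-mapreduce-client-core-2.7.2.jar/mapred-default.xml

(2) Custom configuration files: stored in $HADOOP_HOME/etc/hadoop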

core-site.xml

hdfs-site.xml

yarn-site.xml

mapred-site.xml
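The override relationship between the two groups of files can be illustrated with a simplified lookup: use the value from the site file if the property is set there, otherwise fall back to the default file. The sketch below is not how Hadoop itself loads configuration. get_prop and effective_prop are hypothetical helpers, the two XML files are small stand-ins created in a scratch directory, and the parsing assumes that each <name> and <value> element sits on its own line.

```shell
# Print the value of property $2 from Hadoop-style XML file $1 (nothing if absent).
get_prop() {
  awk -v key="$2" '
    /<name>/ { n = $0; gsub(/.*<name>|<\/name>.*/, "", n); hit = (n == key) }
    /<value>/ && hit { v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit }
  ' "$1"
}

# Resolve property $3: the site file ($1) wins, else the default file ($2).
effective_prop() {
  v=$(get_prop "$1" "$3")
  if [ -n "$v" ]; then
    printf '%s\n' "$v"
  else
    get_prop "$2" "$3"
  fi
}

# Stand-in files for the demo (not the real Hadoop files).
demo_dir=$(mktemp -d)
cat > "$demo_dir/hdfs-default.xml" <<'EOF'
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
  <property>
    <name>dfs.blocksize</name>
    <value>134217728</value>
  </property>
</configuration>
EOF
cat > "$demo_dir/hdfs-site.xml" <<'EOF'
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF

# Overridden in the site file:
effective_prop "$demo_dir/hdfs-site.xml" "$demo_dir/hdfs-default.xml" dfs.replication
# Not set in the site file, so the default applies:
effective_prop "$demo_dir/hdfs-site.xml" "$demo_dir/hdfs-default.xml" dfs.blocksize
```

On a configured node, hdfs getconf -confKey dfs.replication reports the value Hadoop actually resolves, which is the more reliable check.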