pytorh之卷积神经网络lenet的实现(CIFAR10数据集)

pytorh之lenet的实现(CIFAR10数据集)

import torch as t

import matplotlib.pyplot as plt

import torchvision as tv

import torchvision.transforms as transforms

from torch.autograd import Variable

from torchvision.transforms import ToPILImage

show = ToPILImage() # 可以把Tensor转成Image,方便可视化

第一次运行程序torchvision会自动下载CIFAR-10数据集,

大约100M,需花费一定的时间,

如果已经下载有CIFAR-10,可通过root参数指定

#定义对数据的预处理

transform = transforms.Compose([

transforms.ToTensor(), # 转为Tensor

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)), # 归一化

])

# 训练集

trainset = tv.datasets.CIFAR10(

root='./data/cifar/',

train=True,

download=True,

transform=transform)

trainloader = t.utils.data.DataLoader(trainset, batch_size=4, shuffle=True)

# 测试集

testset = tv.datasets.CIFAR10(

'./data/cifar/',

train=False,

download=True,

transform=transform)

testloader = t.utils.data.DataLoader(

testset,

batch_size=4,

shuffle=False,

)

classes = ('plane', 'car', 'bird', 'cat',

'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

(data, label) = trainset[100]

print(classes[label])

# (data + 1) / 2是为了还原被归一化的数据

show((data + 1) / 2).resize((100, 100))

dataiter = iter(trainloader)

images, labels = dataiter.next() # 返回4张图片及标签

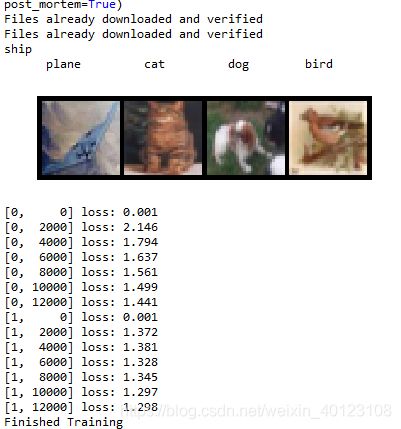

print(' '.join('%11s'%classes[labels[j]] for j in range(4)))

a=show(tv.utils.make_grid((images+1)/2)).resize((400,100))

plt.imshow(a,cmap='gray')

plt.axis('off')

plt.show()

import torch.nn as nn

import torch.nn.functional as F

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(3, 6, 5)

self.conv2 = nn.Conv2d(6, 16, 5)

self.fc1 = nn.Linear(16*5*5, 120)

self.fc2 = nn.Linear(120, 84)

self.fc3 = nn.Linear(84, 10)

def forward(self, x):

x = F.max_pool2d(F.relu(self.conv1(x)), (2, 2))

x = F.max_pool2d(F.relu(self.conv2(x)), 2)

x = x.view(x.size()[0], -1)

x = F.relu(self.fc1(x))

x = F.relu(self.fc2(x))

x = self.fc3(x)

return x

net = Net()

#print(net)

from torch import optim

optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)

criterion = nn.CrossEntropyLoss() # 交叉熵损失函数

#t.set_num_threads(8)

for epoch in range(2):

running_loss = 0.0

for i, data in enumerate(trainloader, 0):

# 输入数据

inputs, labels = data

inputs, labels = Variable(inputs), Variable(labels)

# 梯度清零

optimizer.zero_grad()

# forward + backward

outputs = net(inputs)

loss = criterion(outputs, labels)

loss.backward()

# 更新参数

optimizer.step()

# 打印log信息

running_loss += loss.item()

if i % 2000 == 0: # 每2000个batch打印一下训练状态

print('[%d, %5d] loss: %.3f' \

% (epoch, i, running_loss / 2000))

running_loss = 0.0

print('Finished Training')

函数DataLoader(dataset, batch_size=1, shuffle=False, sampler=None,

num_workers=0, collate_fn=default_collate, pin_memory=False,

drop_last=False)

- dataset:数据集

- batch_size:批处理量

- shuffle::打乱数据

- sampler: 样本抽样

- num_workers:使用多进程加载的进程数,0代表不使用多进程

- collate_fn: 如何将多个样本数据拼接成一个batch,一般使用默认的拼接方式即可

- pin_memory:是否将数据保存在pin memory区,pin memory中的数据转到GPU会快一些

- drop_last:dataset中的数据个数可能不是batch_size的整数倍,drop_last为True会将多出来不足一个batch的数据丢弃