TensorFlow 2.0: Classifying the CIFAR10 Dataset with ResNet18

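As a neural network gets deeper, vanishing and exploding gradients appear during training. The deeper the network, the more likely these problems are to occur and the more severe they become.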

The Skip Connection Mechanism

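Since shallow neural networks are less prone to these gradient problems, adding a Skip Connection that links a layer's input directly to its output gives a deep network the ability to fall back to a shallower one.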

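Take the VGG13 deep neural network as an example. Suppose no vanishing gradient is observed with a 10-layer network. We can then add a Skip Connection around the last two convolution layers, so that the model can choose automatically whether to transform the features through these two convolution layers, to skip them and pass the input straight to the output, or to combine the outputs of the two convolution layers and the Skip Connection.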
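The fallback behaviour can be written down in a few lines. The following is only a sketch: it assumes the skip path is a plain identity, ignores the activation applied after the addition, writes f for the two convolution layers and H for the block output, and introduces the symbols I (identity matrix) and \mathcal{L} (training loss) purely for illustration.

```latex
\begin{aligned}
H(x) &= f(x) + x \\
\frac{\partial H}{\partial x} &= \frac{\partial f}{\partial x} + I \\
\frac{\partial \mathcal{L}}{\partial x} &= \frac{\partial \mathcal{L}}{\partial H}\left(\frac{\partial f}{\partial x} + I\right)
\end{aligned}
```

If the two convolution layers learn f(x) ≈ 0, the block reduces to H(x) ≈ x, which is the "skip" case. The I term also gives the gradient a direct path back to x even when ∂f/∂x is small.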

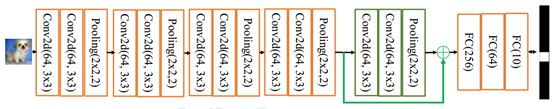

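For an input x, the final output H(x) is obtained by adding x element-wise to f(x), the output of the two convolution layers. For this addition to be valid, x must have the same shape as f(x). x therefore goes through an identity step, that is, a convolution that brings its shape in line with that of f(x). This is abstracted in the accompanying figure.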
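To make the shape requirement concrete, here is a small pure-Python sketch that compares the output shape of the f(x) path with that of the identity path. It assumes the layer configuration used in this article (two 3x3 convolutions with 'same' padding on the main path, one 1x1 convolution with the default 'valid' padding on the shortcut). The helper names `conv_out_size` and `block_shapes` are hypothetical and are not part of TensorFlow.

```python
import math


def conv_out_size(n, kernel, stride, padding):
    """Output size of a convolution along one spatial dimension,
    following the 'same' / 'valid' padding conventions of Keras Conv2D."""
    if padding == 'same':
        return math.ceil(n / stride)
    if padding == 'valid':
        return (n - kernel) // stride + 1
    raise ValueError(f"unknown padding: {padding}")


def block_shapes(h, w, filter_num, stride):
    """Return the (h, w, c) shapes of the f(x) path and of the shortcut path."""
    # f(x): 3x3 conv with the given stride, then 3x3 conv with stride 1
    fh = conv_out_size(conv_out_size(h, 3, stride, 'same'), 3, 1, 'same')
    fw = conv_out_size(conv_out_size(w, 3, stride, 'same'), 3, 1, 'same')
    # shortcut: a single 1x1 conv with the same stride
    sh = conv_out_size(h, 1, stride, 'valid')
    sw = conv_out_size(w, 1, stride, 'valid')
    return (fh, fw, filter_num), (sh, sw, filter_num)


main_path, shortcut = block_shapes(30, 30, filter_num=128, stride=2)
print(main_path, shortcut)  # both paths give (15, 15, 128)
```

Because the 1x1 convolution uses the same stride and the same number of filters as the main path, both outputs agree and can be added element-wise.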

Residual Block

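Using the Layer class from Keras in TensorFlow, we can define a new class based on Layer that wraps the process above, so that it can be reused easily later. It is implemented as follows: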

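import tensorflow as tf
from tensorflow.keras import layers, Sequential


class BasicBlock(layers.Layer):
    def __init__(self, filter_num, stride=1):
        super(BasicBlock, self).__init__()
        # f(x) consists of two ordinary convolution layers; create convolution layer 1
        self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')
        self.bn1 = layers.BatchNormalization()
        self.relu = layers.Activation('relu')
        # Create convolution layer 2
        self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')
        self.bn2 = layers.BatchNormalization()
        if stride != 1:  # insert the identity layer: a 1x1 convolution that matches the shape of f(x)
            self.downsample = Sequential()
            self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))
        else:  # otherwise connect directly (assumes the channel count is unchanged)
            self.downsample = lambda x: x

    def call(self, inputs, training=None):
        # Forward pass
        out = self.conv1(inputs)  # through the first convolution layer
        # Pass the training flag so that batch normalization switches between
        # batch statistics (training) and moving averages (inference)
        out = self.bn1(out, training=training)
        out = self.relu(out)
        out = self.conv2(out)  # through the second convolution layer
        out = self.bn2(out, training=training)
        # Transform the input through identity()
        identity = self.downsample(inputs)
        # Compute f(x) + x
        output = layers.add([out, identity])
        # Apply the activation function and return
        output = tf.nn.relu(output)
        return output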

ResNet

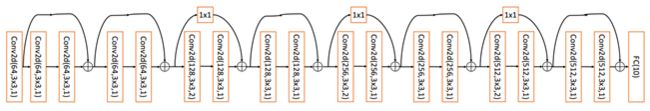

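The standard ResNet18 takes 224 × 224 images as input. To reduce the computational cost so that it can run on an ordinary computer, ResNet18 is adjusted slightly here so that the input size is 32 × 32 and the output dimension is 10. The adjusted ResNet18 network structure is as follows.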
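As a rough check of the adjusted structure, the pure-Python sketch below traces the feature-map shape of a 32 × 32 input through the stem, the four stages and the classifier used in the complete code that follows, and counts the weighted layers that give ResNet18 its name. The function names `trace_resnet_shapes` and `count_weighted_layers` are hypothetical helpers, and the counting convention (the 1x1 shortcut convolutions are left out) is the usual one rather than something stated in this article.

```python
import math


def trace_resnet_shapes(size=32, num_classes=10):
    """Trace (height, width, channels) through the adjusted network for a square input."""
    shapes = []
    # Stem: 3x3 conv, stride 1, default 'valid' padding shrinks each side by 2
    size = size - 3 + 1
    shapes.append(('stem conv', (size, size, 64)))
    # 2x2 max pooling with stride 1 and 'same' padding keeps the size
    shapes.append(('stem pool', (size, size, 64)))
    # Four stages; the first block of stages 2-4 downsamples with stride 2
    stages = [(64, 1), (128, 2), (256, 2), (512, 2)]
    for i, (channels, stride) in enumerate(stages, start=1):
        size = math.ceil(size / stride)  # 'same' padding
        shapes.append((f'layer{i}', (size, size, channels)))
    # Global average pooling removes the spatial dimensions
    shapes.append(('avgpool', (512,)))
    # Fully connected classifier
    shapes.append(('fc', (num_classes,)))
    return shapes


def count_weighted_layers(layer_dims):
    """1 stem conv + 2 convs per BasicBlock + 1 fully connected layer."""
    return 1 + 2 * sum(layer_dims) + 1


for name, shape in trace_resnet_shapes():
    print(name, shape)
print(count_weighted_layers([2, 2, 2, 2]), count_weighted_layers([3, 4, 6, 3]))
```

Under these assumptions the spatial size goes 32 → 30 → 30 → 15 → 8 → 4 before pooling, and the block counts [2, 2, 2, 2] and [3, 4, 6, 3] give 18 and 34 weighted layers respectively.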

Complete code:

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, Sequential

############################################
# Part 1: Building the network model
############################################

class BasicBlock(layers.Layer):
    # Residual block
    def __init__(self, filter_num, stride=1):
        super(BasicBlock, self).__init__()
        # First convolution unit
        self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')
        self.bn1 = layers.BatchNormalization()
        self.relu = layers.Activation('relu')
        # Second convolution unit
        self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')
        self.bn2 = layers.BatchNormalization()
        if stride != 1:  # match shapes with a 1x1 convolution
            self.downsample = Sequential()
            self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))
        else:  # shapes already match, connect directly
            self.downsample = lambda x: x

    def call(self, inputs, training=None):
        # [b, h, w, c]: pass through the first convolution unit
        out = self.conv1(inputs)
        # Forward the training flag so batch normalization behaves correctly
        out = self.bn1(out, training=training)
        out = self.relu(out)
        # Pass through the second convolution unit
        out = self.conv2(out)
        out = self.bn2(out, training=training)
        # Pass through the identity module
        identity = self.downsample(inputs)
        # Add the outputs of the two paths
        output = layers.add([out, identity])
        output = tf.nn.relu(output)  # activation function
        return output

class ResNet(keras.Model):
    # Generic ResNet implementation
    def __init__(self, layer_dims, num_classes=10):  # e.g. layer_dims = [2, 2, 2, 2]
        super(ResNet, self).__init__()
        # Stem network: preprocessing
        self.stem = Sequential([layers.Conv2D(64, (3, 3), strides=(1, 1)),
                                layers.BatchNormalization(),
                                layers.Activation('relu'),
                                layers.MaxPool2D(pool_size=(2, 2), strides=(1, 1), padding='same')
                                ])
        # Stack 4 stages; each stage contains several BasicBlocks and uses its own stride
        self.layer1 = self.build_resblock(64, layer_dims[0])
        self.layer2 = self.build_resblock(128, layer_dims[1], stride=2)
        self.layer3 = self.build_resblock(256, layer_dims[2], stride=2)
        self.layer4 = self.build_resblock(512, layer_dims[3], stride=2)
        # Global average pooling reduces height and width to 1x1
        self.avgpool = layers.GlobalAveragePooling2D()
        # Final fully connected layer for classification
        self.fc = layers.Dense(num_classes)

    def call(self, inputs, training=None):
        # Pass through the stem network
        x = self.stem(inputs, training=training)
        # Pass through the 4 stages in turn
        x = self.layer1(x, training=training)
        x = self.layer2(x, training=training)
        x = self.layer3(x, training=training)
        x = self.layer4(x, training=training)
        # Pass through the pooling layer
        x = self.avgpool(x)
        # Pass through the fully connected layer
        x = self.fc(x)
        return x

    def build_resblock(self, filter_num, blocks, stride=1):
        # Helper: stack `blocks` BasicBlocks, each with filter_num output channels
        res_blocks = Sequential()
        # Only the first BasicBlock may have a stride other than 1, to downsample
        res_blocks.add(BasicBlock(filter_num, stride))
        for _ in range(1, blocks):  # all other BasicBlocks use stride 1
            res_blocks.add(BasicBlock(filter_num, stride=1))
        return res_blocks


def resnet18():
    # Different ResNets are obtained by changing the number of BasicBlocks per stage
    return ResNet([2, 2, 2, 2])


def resnet34():
    # Different ResNets are obtained by changing the number of BasicBlocks per stage
    return ResNet([3, 4, 6, 3])

############################################
# Part 2: Training the network
############################################
import os

import tensorflow as tf
from tensorflow.keras import optimizers, datasets

# Reduce TensorFlow's C++ log output; this is normally set before TensorFlow is first imported
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'
tf.random.set_seed(2345)

def preprocess(x, y):
    # Map pixel values to the range [-1, 1]
    x = 2 * tf.cast(x, dtype=tf.float32) / 255. - 1
    y = tf.cast(y, dtype=tf.int32)  # type conversion
    return x, y


(x, y), (x_test, y_test) = datasets.cifar10.load_data()  # load the dataset
y = tf.squeeze(y, axis=1)  # remove the unnecessary dimension
y_test = tf.squeeze(y_test, axis=1)  # remove the unnecessary dimension
print(x.shape, y.shape, x_test.shape, y_test.shape)

train_db = tf.data.Dataset.from_tensor_slices((x, y))  # build the training set
# Shuffle, preprocess, batch
train_db = train_db.shuffle(1000).map(preprocess).batch(512)

test_db = tf.data.Dataset.from_tensor_slices((x_test, y_test))  # build the test set
# Preprocess and batch (the test set is not shuffled)
test_db = test_db.map(preprocess).batch(512)

# Draw one sample batch
sample = next(iter(train_db))
print('sample:', sample[0].shape, sample[1].shape,
      tf.reduce_min(sample[0]), tf.reduce_max(sample[0]))

def main():
    # [b, 32, 32, 3] => [b, 10]
    model = resnet18()  # ResNet18 network
    model.build(input_shape=(None, 32, 32, 3))
    model.summary()  # print the network parameters
    # Build the optimizer
    optimizer = optimizers.Adam(learning_rate=1e-4)

    # Train for 20 epochs
    for epoch in range(20):
        for step, (x, y) in enumerate(train_db):
            with tf.GradientTape() as tape:
                # [b, 32, 32, 3] => [b, 10], forward pass in training mode
                logits = model(x, training=True)
                # [b] => [b, 10], one-hot encoding
                y_onehot = tf.one_hot(y, depth=10)
                # Compute the cross-entropy
                loss = tf.losses.categorical_crossentropy(y_onehot, logits, from_logits=True)
                loss = tf.reduce_mean(loss)
            # Compute the gradients
            grads = tape.gradient(loss, model.trainable_variables)
            # Update the network parameters
            optimizer.apply_gradients(zip(grads, model.trainable_variables))

            if step % 50 == 0:
                print(epoch, step, 'loss:', float(loss))

        # Evaluate on the test set after each epoch
        total_num = 0
        total_correct = 0
        for x, y in test_db:
            logits = model(x, training=False)  # inference mode
            prob = tf.nn.softmax(logits, axis=1)
            pred = tf.argmax(prob, axis=1)
            pred = tf.cast(pred, dtype=tf.int32)
            correct = tf.cast(tf.equal(pred, y), dtype=tf.int32)
            correct = tf.reduce_sum(correct)
            total_num += x.shape[0]
            total_correct += int(correct)
        acc = total_correct / total_num
        print(epoch, 'acc:', acc)


if __name__ == '__main__':
    main()
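The training loop above computes a cross-entropy loss directly from logits and then measures accuracy with an argmax. The pure-Python sketch below spells out what those two quantities are for small hand-written inputs. It is an illustration only: the function names `softmax_cross_entropy`, `mean_loss` and `accuracy` are hypothetical and do not come from TensorFlow, and integer labels are used in place of one-hot vectors, which gives the same value.

```python
import math


def softmax_cross_entropy(logits, label):
    """Cross-entropy between a one-hot label and softmax(logits) for one sample."""
    # log-sum-exp, with the maximum subtracted for numerical stability
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    # -log(softmax(logits)[label])
    return log_sum_exp - logits[label]


def mean_loss(batch_logits, labels):
    """Average the per-sample losses over a batch."""
    losses = [softmax_cross_entropy(z, y) for z, y in zip(batch_logits, labels)]
    return sum(losses) / len(losses)


def accuracy(batch_logits, labels):
    """Fraction of samples whose largest logit is at the labelled class."""
    preds = [max(range(len(z)), key=z.__getitem__) for z in batch_logits]
    correct = sum(int(p == y) for p, y in zip(preds, labels))
    return correct / len(labels)


batch_logits = [[2.0, 1.0], [0.0, 3.0], [1.0, 0.0]]
labels = [0, 1, 1]
print(mean_loss(batch_logits, labels), accuracy(batch_logits, labels))
```

Since softmax preserves the ordering of the logits, taking the argmax of the logits gives the same prediction as taking the argmax of the softmax probabilities.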