Kubernetes Storage (Part 4): Configuring the NFS Client Provisioner, the StatefulSet Controller, and Deploying a MySQL Master-Slave Replication Cluster

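1. Introduction to StorageClass

A StorageClass provides a way to describe classes of storage. Different classes might map to different quality-of-service levels, backup policies, or other policies.

Every StorageClass contains the fields provisioner, parameters, and reclaimPolicy, which are used when a PersistentVolume belonging to the class needs to be dynamically provisioned.

Properties of a StorageClass

Provisioner: determines which volume plugin is used to provision PVs. This field is required. It can name either an internal or an external provisioner. The external provisioners are hosted at kubernetes-incubator/external-storage and include NFS, Ceph, and others.

Reclaim Policy: the reclaimPolicy field sets the reclaim policy of PersistentVolumes created by the class. It can be Delete or Retain, and defaults to Delete when not specified.

For more properties, see: https://kubernetes.io/zh/docs/concepts/storage/storage-classes/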

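2. Configuring the NFS Client Provisioner

NFS Client Provisioner is an automatic provisioner that uses NFS as its storage backend and automatically creates a PV for each PVC that requests one. It does not provide NFS storage itself; an NFS service must already exist.

On the NFS server, a provisioned PV is stored in a directory named ${namespace}-${pvcName}-${pvName}.

When a PV is reclaimed with archiving enabled, its directory on the NFS server is renamed to archived-${namespace}-${pvcName}-${pvName}.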
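
The two naming formats above can be sketched as a pair of shell helpers. This is only an illustration of the string layout: the function names pv_dir_name and archived_dir_name are made up for this example, and the PV name is the one that appears in the listing later in this article.

```shell
# Hypothetical helpers: they only build the directory names described above;
# they do not talk to the cluster or to the NFS server.

# Directory created on the NFS export for a provisioned PV.
pv_dir_name() {
  # $1 = namespace, $2 = PVC name, $3 = PV name
  printf '%s-%s-%s\n' "$1" "$2" "$3"
}

# Directory name after the PV is reclaimed with archiving enabled.
archived_dir_name() {
  printf 'archived-%s\n' "$(pv_dir_name "$1" "$2" "$3")"
}

pv_dir_name default pvc1 pvc-fd367a74-ef0b-4729-8f9e-0f57a10c949e
archived_dir_name default pvc1 pvc-fd367a74-ef0b-4729-8f9e-0f57a10c949e
```

The second call prints the same archived directory name that shows up under /nfsdata later in this article.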

nfs-client-provisioner source code:

https://github.com/kubernetes-incubator/external-storage/tree/master/nfs-client

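2.1 Example: dynamically provisioning PVs with NFS

First, modify the export configuration on the external host server1.

[root@server1 ~]# vim /etc/exports

no_root_squash: do not map the client's root user to an anonymous user. Dynamic provisioning needs this because it changes directory ownership and permissions.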

[root@server1 ~]# cat /etc/exports

/nfsdata *(rw,sync,no_root_squash)

[root@server1 ~]# exportfs -rv    # apply the changes

exporting *:/nfsdata

[root@server1 ~]# showmount -e

Export list for server1:

/nfsdata *

[root@server1 ~]# cd /nfsdata

[root@server1 nfsdata]# ls

index.html test.html

[root@server1 nfsdata]# rm -rf *

[root@server1 nfsdata]# ls

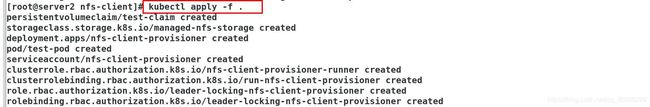

Configure authorization (RBAC):

First make sure the lab environment is clean:

[root@server2 ~]# kubectl get pod

No resources found in default namespace.

[root@server2 ~]# kubectl get cm

No resources found in default namespace.

[root@server2 ~]# kubectl get pv

No resources found in default namespace.

[root@server2 ~]# kubectl get pvc

No resources found in default namespace.

[root@server2 ~]# kubectl get all

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 3d10h

[root@server2 ~]# cd vol/

[root@server2 vol]# mkdir nfs-client

[root@server2 vol]# cd nfs-client/

[root@server2 nfs-client]#

[root@server2 nfs-client]# vim rbac.yml

[root@server2 nfs-client]# cat rbac.yml

apiVersion: v1

kind: ServiceAccount

metadata:

name: nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: nfs-client-provisioner-runner

rules:

- apiGroups: [""]

resources: ["persistentvolumes"]

verbs: ["get", "list", "watch", "create", "delete"]

- apiGroups: [""]

resources: ["persistentvolumeclaims"]

verbs: ["get", "list", "watch", "update"]

- apiGroups: ["storage.k8s.io"]

resources: ["storageclasses"]

verbs: ["get", "list", "watch"]

- apiGroups: [""]

resources: ["events"]

verbs: ["create", "update", "patch"]

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: run-nfs-client-provisioner

subjects:

- kind: ServiceAccount

name: nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

roleRef:

kind: ClusterRole

name: nfs-client-provisioner-runner

apiGroup: rbac.authorization.k8s.io

---

kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: leader-locking-nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

rules:

- apiGroups: [""]

resources: ["endpoints"]

verbs: ["get", "list", "watch", "create", "update", "patch"]

---

kind: RoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: leader-locking-nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

subjects:

- kind: ServiceAccount

name: nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

roleRef:

kind: Role

name: leader-locking-nfs-client-provisioner

apiGroup: rbac.authorization.k8s.io

In deployment.yml, remove the duplicated selector field:

[root@server2 nfs-client]# \vi deployment.yml

[root@server2 nfs-client]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nfs-client-provisioner

labels:

app: nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

spec:

replicas: 1

strategy:

type: Recreate

selector:

matchLabels:

app: nfs-client-provisioner

template:

metadata:

labels:

app: nfs-client-provisioner

spec:

serviceAccountName: nfs-client-provisioner

containers:

- name: nfs-client-provisioner

image: nfs-client-provisioner:latest # push this image to your registry beforehand

volumeMounts:

- name: nfs-client-root

mountPath: /persistentvolumes

env:

- name: PROVISIONER_NAME # name of the provisioner

value: westos.org/nfs # must match the provisioner field of the StorageClass

- name: NFS_SERVER

value: 172.25.254.31 # NFS server host

- name: NFS_PATH

value: /nfsdata # exported NFS path

volumes: # the external NFS volume

- name: nfs-client-root # volume name

nfs:

server: 172.25.254.31 # NFS server address

path: /nfsdata # exported path

Define the storage class:

[root@server2 nfs-client]# vim class.yml

[root@server2 nfs-client]# cat class.yml

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: managed-nfs-storage

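provisioner: westos.org/nfs # name of the NFS provisioner (PROVISIONER_NAME in deployment.yml)

parameters:

archiveOnDelete: "false" # data is not archived on deletion; set to "true" to archive it

Create the PVC: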

[root@server2 nfs-client]# vim pvc.yml

[root@server2 nfs-client]# cat pvc.yml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: test-claim

annotations:

volume.beta.kubernetes.io/storage-class: "managed-nfs-storage"

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 100Mi

[root@server2 nfs-client]# vim pod.yml    # create the Pod

[root@server2 nfs-client]# cat pod.yml

kind: Pod

apiVersion: v1

metadata:

name: test-pod

spec:

containers:

- name: test-pod

image: busybox:latest # make sure the image exists in your registry

command:

- "/bin/sh"

args:

- "-c"

- "touch /mnt/SUCCESS && exit 0 || exit 1"

volumeMounts:

- name: nfs-pvc

mountPath: "/mnt"

restartPolicy: "Never"

volumes:

- name: nfs-pvc

persistentVolumeClaim:

claimName: test-claim

[root@server2 nfs-client]# ls

claim.yml class.yml deployment.yml pod.yml rbac.yml

[root@server2 nfs-client]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

pvc1 Bound pvc-fd367a74-ef0b-4729-8f9e-0f57a10c949e 100Mi RWX managed-nfs-storage 26s

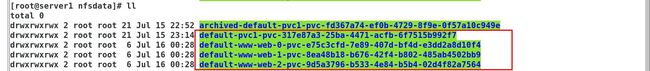

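Check the external storage:

The directory name is default (namespace) - pvc1 (PVC name) - pvc-c3be4a0e-bc0d-4908-9286-6e19c18c7f63 (PV name).

SUCCESS: the file created by the Pod.

[root@server2 ~]# kubectl delete pod test-pod    # deleting the Pod does not affect the PVC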

pod "test-pod" deleted

[root@server2 ~]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

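managed-nfs-storage westos.org/nfs Delete Immediate false 20h

The reclaim policy is Delete.

[root@server2 ~]# kubectl delete pvc pvc1    # delete the PVC

persistentvolumeclaim "pvc1" deleted

[root@server1 default-pvc1-pvc-c3be4a0e-bc0d-4908-9286-6e19c18c7f63]# ls    # the files created under the /nfsdata export have been deleted

archiveOnDelete controls what happens to the data on deletion: with "false" nothing is archived; change it to "true" to archive the directory when the PVC is deleted.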

[root@server2 nfs-client]# ls

class.yml deployment.yml pod.yml pvc.yml rbac.yml

[root@server2 nfs-client]# vim class.yml

[root@server2 nfs-client]# cat class.yml

#apiVersion: storage.k8s.io/v1

#kind: StorageClass

#metadata:

# name: managed-nfs-storage

#provisioner: westos.org/nfs

#parameters:

# archiveOnDelete: "false"

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: managed-nfs-storage

provisioner: westos.org/nfs

parameters:

archiveOnDelete: "true" # with "true", the data directory is archived after the PVC is deleted

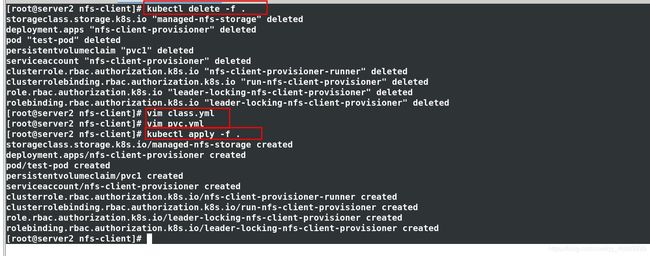

[root@server2 nfs-client]# kubectl patch storageclass managed-nfs-storage -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

storageclass.storage.k8s.io/managed-nfs-storage patched

[root@server2 nfs-client]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

managed-nfs-storage (default) westos.org/nfs Delete Immediate false 53s

[root@server2 nfs-client]# kubectl apply -f pvc.yml    # create the PVC again

[root@server2 nfs-client]# kubectl apply -f class.yml

[root@server2 nfs-client]# kubectl delete -f pvc.yml    # deleting the PVC now archives its data

persistentvolumeclaim "pvc1" deleted

[root@server1 ~]# cd /nfsdata/

[root@server1 nfsdata]# ls

archived-default-pvc1-pvc-fd367a74-ef0b-4729-8f9e-0f57a10c949e    # archived copy kept after deletion

default-pvc1-pvc-06ea0af3-c5fb-497d-8284-2ab6e2ccbfb4    # newly created

Change archiveOnDelete back to "false":

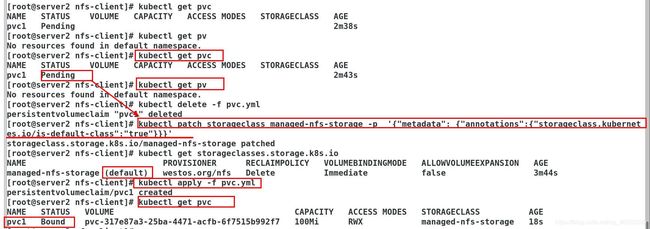

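2.2 The default StorageClass is used to dynamically provision storage for PersistentVolumeClaims that do not request a specific storage class (there can be only one default StorageClass)

If there is no default StorageClass and the PVC does not set storageClassName, the PVC can only bind to PVs whose storageClassName is also "".

With a default StorageClass set, a PVC binds even without specifying a storage class: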
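
To see which class a PVC without a storage class would fall back to, the "(default)" marker in the kubectl listing can be filtered out. The helper below is a small sketch with a made-up name (default_storage_classes); it only parses text, and the sample input is copied from the output shown in this article.

```shell
# Hypothetical helper: reads `kubectl get storageclass` output on stdin and
# prints the name of every class marked "(default)".
default_storage_classes() {
  awk 'NR > 1 && $2 == "(default)" { print $1 }'
}

# Sample output copied from this article; on a live cluster you would pipe
# `kubectl get storageclass` into the function instead.
default_storage_classes <<'EOF'
NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE
managed-nfs-storage (default) westos.org/nfs Delete Immediate false 53s
EOF
```

If the function prints more than one name, more than one class carries the default annotation and all but one should be unset.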

[root@server2 nfs-client]# kubectl get pv

No resources found in default namespace.

[root@server2 nfs-client]# kubectl get pvc

No resources found in default namespace.

[root@server2 nfs-client]# kubectl patch storageclass managed-nfs-storage -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

storageclass.storage.k8s.io/managed-nfs-storage patched

[root@server2 nfs-client]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

managed-nfs-storage (default) westos.org/nfs Delete Immediate false 53s

[root@server2 nfs-client]# cat class.yml

#apiVersion: storage.k8s.io/v1

#kind: StorageClass

#metadata:

# name: managed-nfs-storage

#provisioner: westos.org/nfs

#parameters:

# archiveOnDelete: "false"

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: managed-nfs-storage

provisioner: westos.org/nfs

parameters:

archiveOnDelete: "false" # do not archive

[root@server2 nfs-client]# cat pvc.yml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: pvc1

#annotations: # no storage class is specified

# volume.beta.kubernetes.io/storage-class: "managed-nfs-storage"

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 100Mi

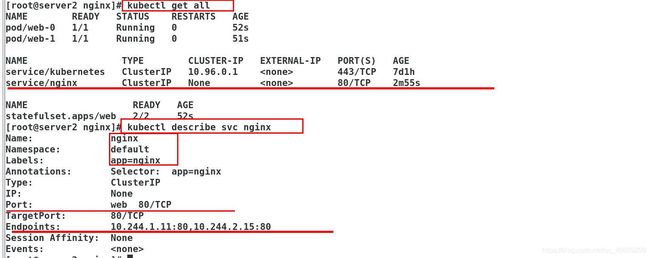

3. StatefulSet Controller

A StatefulSet, together with a headless Service, maintains the topology state of its Pods.

3.1 Create the Service

[root@server2 ~]# cd nginx/

[root@server2 nginx]# ls

service.yml

[root@server2 nginx]# cat service.yml

apiVersion: v1

kind: Service

metadata:

name: nginx

labels:

app: nginx

spec:

ports:

- port: 80

name: web

clusterIP: None

selector:

app: nginx

[root@server2 nginx]# kubectl apply -f service.yml

service/nginx created

[root@server2 nginx]# kubectl get svc    # the nginx headless Service shows CLUSTER-IP None

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 7d1h

nginx ClusterIP None <none> 80/TCP 12s

3.2 Create the StatefulSet controller

[root@server2 nginx]# vim statefulset.yml

[root@server2 nginx]# cat statefulset.yml

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

serviceName: "nginx"

replicas: 2

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

[root@server2 nginx]# kubectl apply -f statefulset.yml

statefulset.apps/web created

[root@server2 nginx]# kubectl get pod

NAME READY STATUS RESTARTS AGE

web-0 1/1 Running 0 9s

web-1 1/1 Running 0 8s

[root@server2 nginx]# vim /etc/resolv.conf

[root@server2 nginx]# cat /etc/resolv.conf

# Generated by NetworkManager

nameserver 114.114.114.114

nameserver 10.96.0.10

[root@server2 ~]# cd nginx/

[root@server2 nginx]# ls

service.yml statefulset.yml

[root@server2 nginx]# vim statefulset.yml

[root@server2 nginx]# cat statefulset.yml

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

serviceName: "nginx"

replicas: 4

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

[root@server2 nginx]# kubectl apply -f statefulset.yml

statefulset.apps/web configured

[root@server2 nginx]# kubectl get svc nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx ClusterIP None <none> 80/TCP 18m

[root@server2 nginx]# kubectl get pod

NAME READY STATUS RESTARTS AGE

web-0 1/1 Running 0 16m

web-1 1/1 Running 0 16m

web-2 1/1 Running 0 15s

web-3 1/1 Running 0 13s

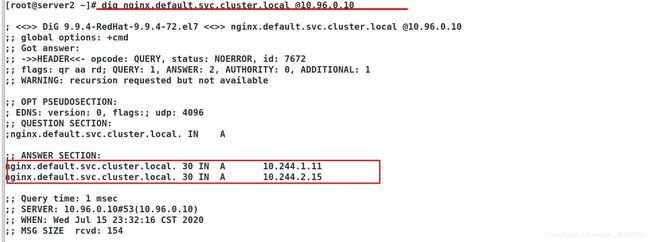

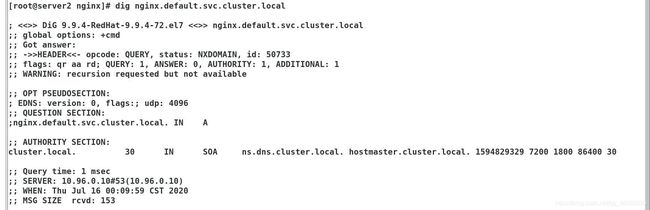

[root@server2 nginx]# dig nginx.default.svc.cluster.local

; <<>> DiG 9.9.4-RedHat-9.9.4-72.el7 <<>> nginx.default.svc.cluster.local

;; global options: +cmd

;; Got answer:

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 50140

;; flags: qr aa rd; QUERY: 1, ANSWER: 4, AUTHORITY: 0, ADDITIONAL: 1

;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

;; QUESTION SECTION:

;nginx.default.svc.cluster.local. IN A

;; ANSWER SECTION:

nginx.default.svc.cluster.local. 30 IN A 10.244.1.11

nginx.default.svc.cluster.local. 30 IN A 10.244.2.15

nginx.default.svc.cluster.local. 30 IN A 10.244.2.16

nginx.default.svc.cluster.local. 30 IN A 10.244.1.12

;; Query time: 1 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Jul 15 23:43:59 CST 2020

;; MSG SIZE rcvd: 248

[root@server2 nginx]# kubectl get pod -o wide    # the Pods are created in order

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web-0 1/1 Running 0 30m 10.244.2.15 server3 <none> <none>

web-1 1/1 Running 0 30m 10.244.1.11 server4 <none> <none>

web-2 1/1 Running 0 13m 10.244.2.16 server3 <none> <none>

web-3 1/1 Running 0 13m 10.244.1.12 server4 <none> <none>

[root@server2 nginx]# dig web-2.nginx.default.svc.cluster.local

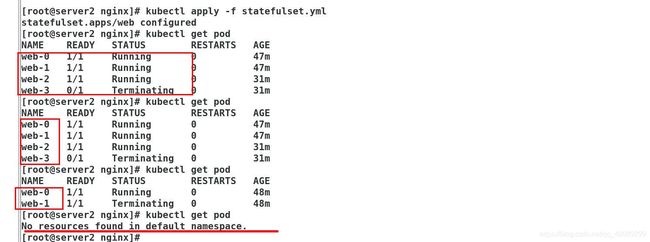

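3.3 Scaling Pods down with the StatefulSet controller (in reverse ordinal order)

StatefulSet abstracts application state into two kinds:

Topology state: application instances must start in a defined order, and a re-created Pod must keep the same network identity as the Pod it replaces.

Storage state: each instance of the application is bound to its own storage data.

StatefulSet numbers all of its Pods using the rule $(statefulset name)-$(ordinal), with ordinals starting from 0.

When a Pod is deleted and re-created, its network identity does not change. The topology state is fixed by the Pod's "name + ordinal", and each Pod gets a stable, unique access point: its own DNS record.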
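
The naming rule and the per-Pod DNS records can be sketched as follows. The helper name statefulset_pod_fqdns is made up for this example, and it assumes the default cluster domain cluster.local, which matches the dig queries earlier in this section.

```shell
# Hypothetical helper: prints the stable DNS name of each Pod in a StatefulSet.
# Arguments: StatefulSet name, headless Service name, namespace, replica count.
# Assumes the default cluster domain cluster.local.
statefulset_pod_fqdns() {
  i=0
  while [ "$i" -lt "$4" ]; do
    printf '%s-%s.%s.%s.svc.cluster.local\n' "$1" "$i" "$2" "$3"
    i=$((i + 1))
  done
}

# The four Pods of the "web" StatefulSet behind the "nginx" headless Service.
statefulset_pod_fqdns web nginx default 4
```

The third line of output, web-2.nginx.default.svc.cluster.local, is the name queried with dig above.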

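Before scaling a StatefulSet, first make sure the application can actually be scaled.

kubectl get statefulsets

Change the number of replicas: kubectl scale statefulsets <statefulset-name> --replicas=<new-replicas>

• If the StatefulSet was originally created with kubectl apply or kubectl create --save-config, update .spec.replicas in the StatefulSet manifest and then run kubectl apply: kubectl apply -f <updated-manifest-file>

• Alternatively, edit the field with kubectl edit: kubectl edit statefulsets <statefulset-name>

• Or use kubectl patch: kubectl patch statefulsets <statefulset-name> -p '{"spec":{"replicas":<new-replicas>}}'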
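
Quoting the JSON body by hand is the error-prone part of the patch variant. As a minimal sketch, the body can be generated by a small function; the name replicas_patch is hypothetical and the function only prints text.

```shell
# Hypothetical helper: builds the JSON patch body for a replica count and
# rejects anything that is not a non-negative integer.
replicas_patch() {
  case $1 in
    ''|*[!0-9]*)
      echo "replicas must be a non-negative integer" >&2
      return 1
      ;;
  esac
  printf '{"spec":{"replicas":%s}}\n' "$1"
}

# Print the patch body for three replicas.
replicas_patch 3
```

On a cluster it could be used as kubectl patch statefulsets web -p "$(replicas_patch 3)", where web is the StatefulSet from this section.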

[root@server2 nginx]# vim statefulset.yml

[root@server2 nginx]# cat statefulset.yml

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

serviceName: "nginx"

replicas: 0

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

[root@server2 nginx]# kubectl apply -f statefulset.yml

statefulset.apps/web configured

Once all Pods have been removed, the domain name can no longer be resolved:

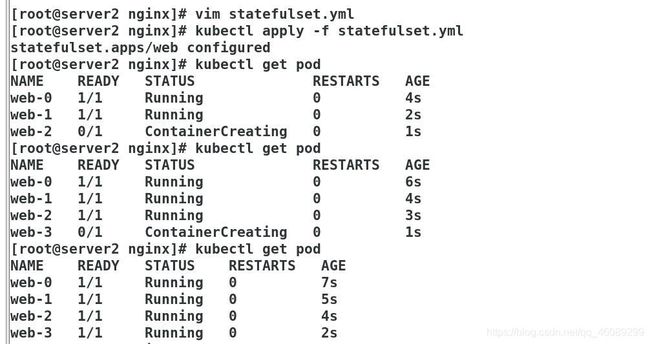

Create the StatefulSet again; the Pods are created one by one, in order:

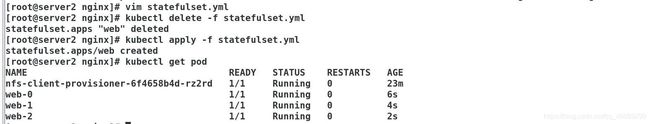

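3.4 StatefulSet with storage

The design of PV and PVC makes it possible for StatefulSet to manage storage state:

Pods are also created strictly in ordinal order. For example, until web-0 is Running and its Ready condition is true, web-1 stays Pending.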

[root@server2 nginx]# vim statefulset.yml

[root@server2 nginx]# cat statefulset.yml

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

serviceName: "nginx"

replicas: 3

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

volumeMounts:

- name: www

mountPath: /usr/share/nginx/html

volumeClaimTemplates:

- metadata:

name: www

spec:

storageClassName: managed-nfs-storage

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 1Gi

[root@server2 nginx]# kubectl apply -f statefulset.yml

statefulset.apps/web created

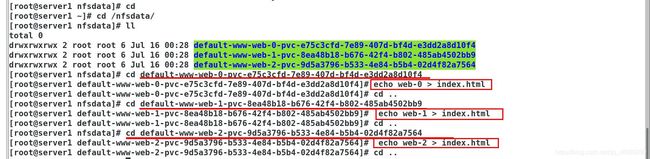

[root@server2 nginx]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

www-web-0 Bound pvc-e75c3cfd-7e89-407d-bf4d-e3dd2a8d10f4 1Gi RWO managed-nfs-storage 8m11s

www-web-1 Bound pvc-8ea48b18-b676-42f4-b802-485ab4502bb9 1Gi RWO managed-nfs-storage 8m7s

www-web-2 Bound pvc-9d5a3796-b533-4e84-b5b4-02d4f82a7564 1Gi RWO managed-nfs-storage 8m3s

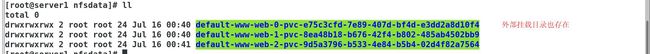

StatefulSet also creates a PVC with the same ordinal for each Pod. Kubernetes can then bind a PV to that PVC through the PersistentVolume mechanism, so every Pod gets its own independent volume.
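
Judging from the listing above, the PVC names follow the pattern <volumeClaimTemplate name>-<StatefulSet name>-<ordinal>. The sketch below reproduces those names; statefulset_pvc_names is a made-up helper that only prints strings.

```shell
# Hypothetical helper: prints the PVC name of each replica.
# Arguments: volumeClaimTemplate name, StatefulSet name, replica count.
statefulset_pvc_names() {
  i=0
  while [ "$i" -lt "$3" ]; do
    printf '%s-%s-%s\n' "$1" "$2" "$i"
    i=$((i + 1))
  done
}

# Template "www" in StatefulSet "web" with three replicas.
statefulset_pvc_names www web 3
```

Because the name is derived from the ordinal, a re-created web-1 looks up www-web-1 again and gets the same data back.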

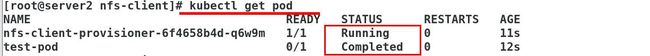

[root@server2 nginx]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nfs-client-provisioner-6f4658b4d-rz2rd 1/1 Running 0 2m1s

web-0 1/1 Running 0 56s

web-1 1/1 Running 0 52s

web-2 1/1 Running 0 48s

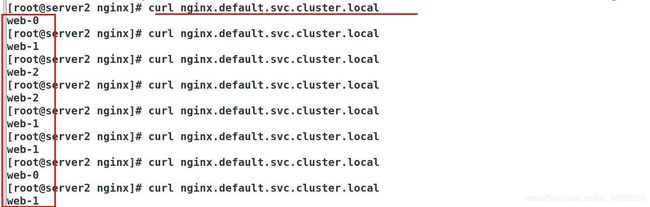

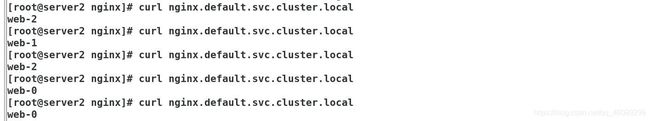

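Test load balancing: on the external NFS server, edit the files in each Pod's volume directory.

Requests are then load-balanced across the Pods.

Scale the StatefulSet back up to the previous replica count.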

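4. Deploying a MySQL master-slave cluster with StatefulSet

Reference:

https://kubernetes.io/zh/docs/tasks/run-application/run-replicated-stateful-application/

4.1 Create the ConfigMap

The ConfigMap provides my.cnf overrides that let you control the configuration of the MySQL master and slaves independently.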

[root@server2 ~]# mkdir mysql

[root@server2 ~]# cd mysql/

[root@server2 mysql]# \vi cm.yml

[root@server2 mysql]# vim cm.yml

[root@server2 mysql]# cat cm.yml

apiVersion: v1

kind: ConfigMap

metadata:

name: mysql

labels:

app: mysql

data:

master.cnf: |

# Apply this config only on the master.

[mysqld]

log-bin

slave.cnf: |

# Apply this config only on slaves.

[mysqld]

super-read-only

4.2 Create the Services

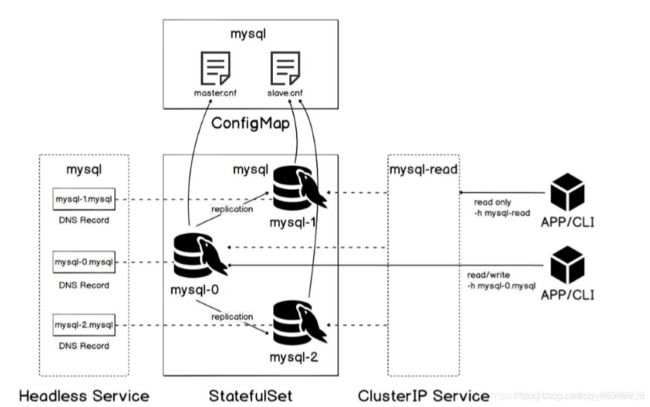

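The headless Service provides a home for the DNS entries that the StatefulSet controller creates for each Pod in the set. Because the headless Service is named mysql, the Pods are reachable by resolving <pod-name>.mysql from any other Pod in the same Kubernetes cluster and namespace.

The client Service, named mysql-read, is a normal Service with its own cluster IP that distributes connections across all MySQL Pods that report being Ready. The set of potential endpoints includes the MySQL master and all slaves.

Note that only read queries can use the load-balanced client Service. Because there is only one MySQL master, clients should connect directly to the master Pod (through its DNS entry within the headless Service) to execute writes.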
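
The read/write split described above can be sketched as a tiny lookup. The function name mysql_host_for is hypothetical; the two host names are the ones defined by the Services in this section.

```shell
# Hypothetical helper: picks the MySQL host for a given kind of query.
# Writes go to the master Pod through its headless-Service DNS entry,
# reads go to the load-balanced mysql-read Service.
mysql_host_for() {
  case $1 in
    write) echo "mysql-0.mysql" ;;
    read)  echo "mysql-read" ;;
    *)     echo "usage: mysql_host_for read|write" >&2; return 1 ;;
  esac
}

mysql_host_for write
mysql_host_for read
```

From a Pod in the same namespace, a read could then be issued with mysql -h "$(mysql_host_for read)" -e 'SELECT 1'.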

[root@server2 mysql]# \vi service.yml

[root@server2 mysql]# vim service.yml

[root@server2 mysql]# cat service.yml

# Headless service for stable DNS entries of StatefulSet members.

apiVersion: v1

kind: Service

metadata:

name: mysql

labels:

app: mysql

spec:

ports:

- name: mysql

port: 3306

clusterIP: None

selector:

app: mysql

---

# Client service for connecting to any MySQL instance for reads.

# For writes, you must instead connect to the master: mysql-0.mysql.

apiVersion: v1

kind: Service

metadata:

name: mysql-read

labels:

app: mysql

spec:

ports:

- name: mysql

port: 3306

selector:

app: mysql

[root@server2 mysql]# kubectl apply -f service.yml

service/mysql created

service/mysql-read created

[root@server2 mysql]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 7d3h

mysql ClusterIP None <none> 3306/TCP 18s

mysql-read ClusterIP 10.101.21.238 <none> 3306/TCP 18s

4.3 Create the StatefulSet

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: mysql

spec:

selector:

matchLabels:

app: mysql

serviceName: mysql

replicas: 3

template:

metadata:

labels:

app: mysql

spec:

initContainers:

- name: init-mysql

image: mysql:5.7 # the image must exist in your registry

command:

- bash

- "-c"

- |

set -ex

# Generate mysql server-id from pod ordinal index.

[[ `hostname` =~ -([0-9]+)$ ]] || exit 1

ordinal=${BASH_REMATCH[1]}

echo [mysqld] > /mnt/conf.d/server-id.cnf

# Add an offset to avoid reserved server-id=0 value.

echo server-id=$((100 + $ordinal)) >> /mnt/conf.d/server-id.cnf

# Copy appropriate conf.d files from config-map to emptyDir.

if [[ $ordinal -eq 0 ]]; then

cp /mnt/config-map/master.cnf /mnt/conf.d/

else

cp /mnt/config-map/slave.cnf /mnt/conf.d/

fi

volumeMounts:

- name: conf

mountPath: /mnt/conf.d

- name: config-map

mountPath: /mnt/config-map

- name: clone-mysql

image: xtrabackup:1.0

command:

- bash

- "-c"

- |

set -ex

# Skip the clone if data already exists.

[[ -d /var/lib/mysql/mysql ]] && exit 0

# Skip the clone on master (ordinal index 0).

[[ `hostname` =~ -([0-9]+)$ ]] || exit 1

ordinal=${BASH_REMATCH[1]}

[[ $ordinal -eq 0 ]] && exit 0

# Clone data from previous peer.

ncat --recv-only mysql-$(($ordinal-1)).mysql 3307 | xbstream -x -C /var/lib/mysql

# Prepare the backup.

xtrabackup --prepare --target-dir=/var/lib/mysql

volumeMounts:

- name: data

mountPath: /var/lib/mysql

subPath: mysql

- name: conf

mountPath: /etc/mysql/conf.d

containers:

- name: mysql

image: mysql:5.7

env:

- name: MYSQL_ALLOW_EMPTY_PASSWORD

value: "1"

ports:

- name: mysql

containerPort: 3306

volumeMounts:

- name: data

mountPath: /var/lib/mysql

subPath: mysql

- name: conf

mountPath: /etc/mysql/conf.d

resources:

requests:

cpu: 500m

memory: 1Gi

livenessProbe:

exec:

command: ["mysqladmin", "ping"]

initialDelaySeconds: 30

periodSeconds: 10

timeoutSeconds: 5

readinessProbe:

exec:

# Check we can execute queries over TCP (skip-networking is off).

command: ["mysql", "-h", "127.0.0.1", "-e", "SELECT 1"]

initialDelaySeconds: 5

periodSeconds: 2

timeoutSeconds: 1

- name: xtrabackup

image: xtrabackup:1.0 # the image must exist in your registry

ports:

- name: xtrabackup

containerPort: 3307

command:

- bash

- "-c"

- |

set -ex

cd /var/lib/mysql

# Determine binlog position of cloned data, if any.

if [[ -f xtrabackup_slave_info && "x$(<xtrabackup_slave_info)" != "x" ]]; then

# XtraBackup already generated a partial "CHANGE MASTER TO" query

# because we're cloning from an existing slave. (Need to remove the tailing semicolon!)

cat xtrabackup_slave_info | sed -E 's/;$//g' > change_master_to.sql.in

# Ignore xtrabackup_binlog_info in this case (it's useless).

rm -f xtrabackup_slave_info xtrabackup_binlog_info

elif [[ -f xtrabackup_binlog_info ]]; then

# We're cloning directly from master. Parse binlog position.

[[ `cat xtrabackup_binlog_info` =~ ^(.*?)[[:space:]]+(.*?)$ ]] || exit 1

rm -f xtrabackup_binlog_info xtrabackup_slave_info

echo "CHANGE MASTER TO MASTER_LOG_FILE='${BASH_REMATCH[1]}',\

MASTER_LOG_POS=${BASH_REMATCH[2]}" > change_master_to.sql.in

fi

# Check if we need to complete a clone by starting replication.

if [[ -f change_master_to.sql.in ]]; then

echo "Waiting for mysqld to be ready (accepting connections)"

until mysql -h 127.0.0.1 -e "SELECT 1"; do sleep 1; done

echo "Initializing replication from clone position"

mysql -h 127.0.0.1 \

-e "$(<change_master_to.sql.in), \

MASTER_HOST='mysql-0.mysql', \

MASTER_USER='root', \

MASTER_PASSWORD='', \

MASTER_CONNECT_RETRY=10; \

START SLAVE;" || exit 1

# In case of container restart, attempt this at-most-once.

mv change_master_to.sql.in change_master_to.sql.orig

fi

# Start a server to send backups when requested by peers.

exec ncat --listen --keep-open --send-only --max-conns=1 3307 -c \

"xtrabackup --backup --slave-info --stream=xbstream --host=127.0.0.1 --user=root"

volumeMounts:

- name: data

mountPath: /var/lib/mysql

subPath: mysql

- name: conf

mountPath: /etc/mysql/conf.d

resources:

requests:

cpu: 100m

memory: 100Mi

volumes:

- name: conf

emptyDir: {}

- name: config-map

configMap:

name: mysql

volumeClaimTemplates:

- metadata:

name: data

spec:

accessModes: ["ReadWriteOnce"]

resources:

requests:

storage: 10Gi
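
The ordinal handling in the init-mysql container can be tried outside the cluster. The sketch below uses POSIX parameter expansion instead of the [[ =~ ]] match in the manifest; the function names mysql_server_id and mysql_role_config are made up for this example.

```shell
# Hypothetical helper mirroring the init-mysql logic: take the ordinal from the
# Pod hostname, then add 100 so that ordinal 0 does not yield server-id=0.
mysql_server_id() {
  ordinal=${1##*-}
  case $ordinal in
    ''|*[!0-9]*)
      echo "no ordinal in hostname: $1" >&2
      return 1
      ;;
  esac
  echo $((100 + ordinal))
}

# Which ConfigMap file a Pod copies into conf.d: ordinal 0 is the master.
mysql_role_config() {
  if [ "${1##*-}" -eq 0 ]; then
    echo master.cnf
  else
    echo slave.cnf
  fi
}

mysql_server_id mysql-0
mysql_server_id mysql-2
mysql_role_config mysql-0
mysql_role_config mysql-2
```

Because the ordinal is stable across restarts, each Pod keeps the same server-id and the same role every time it is re-created.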

[root@server2 nfs-client]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

managed-nfs-storage (default) westos.org/nfs Delete Immediate false 2m11s

[root@server1 ~]# docker push reg.westos.org/library/xtrabackup:1.0

The push refers to repository [reg.westos.org/library/xtrabackup]

f85c58969eb0: Pushed

82d548d175dd: Pushed

fe4c16cbf7a4: Pushed

1.0: digest: sha256:39f106eb400e18dcb4bded651a7ab308b39c305578ce228ae35f3c76bc715510 size: 949

docker load fails when importing an image: open /var/lib/docker/tmp/docker-import-970689518/bin/json: no such file or directory

I tried to import a previously packaged MySQL 5.7 tar file into Docker with docker load and got the following error:

[root@server1 ~]# docker load -i mysql-5.7.17-1.el6.x86_64.rpm-bundle.tar

open /var/lib/docker/tmp/docker-import-578020953/repositories: no such file or directory

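The error means that this tar file lacks the metadata that docker load expects, such as the repositories file and the JSON files written by docker save. The file here is an RPM bundle, an ordinary tar archive rather than an image archive, so docker load cannot import it.

A workaround is docker import, which creates an image from the contents of a plain tar archive. Note that for an RPM bundle the resulting image only contains the .rpm files, not an installed MySQL server: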

[root@server1 ~]# cat mysql-5.7.17-1.el6.x86_64.rpm-bundle.tar | docker import - mysql-5.7

sha256:475b8072b16469a4a2020b7b7b5ac4681a2ee5a2d6960167cf4f92a50fbc2cef

[root@server1 ~]# docker images | grep mysql-5.7

mysql-5.7 latest 475b8072b164 About a minute ago 470MB
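
Before choosing between docker load and docker import, the archive can be inspected. The sketch below assumes that archives written by docker save contain a top-level manifest.json, which holds for current Docker releases; looks_like_docker_save_archive and the file name image.tar are made up for this example.

```shell
# Hypothetical helper: succeeds only if the tar file lists a top-level
# manifest.json, which archives written by `docker save` are assumed to contain.
looks_like_docker_save_archive() {
  tar -tf "$1" 2>/dev/null | grep -qx 'manifest.json'
}

# image.tar is a placeholder file name for this example.
archive=image.tar
if looks_like_docker_save_archive "$archive"; then
  echo "$archive looks like a docker save archive: use docker load -i"
else
  echo "$archive is not a docker save archive: docker load will reject it"
fi
```

The check only looks at file names inside the archive, so it is a quick hint rather than a validation of the image.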

To be continued.