VMware CentOS 7.4 + UDEV + ASM + ORACLE: Setting Up a RAC Environment

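Contents

1. Preparation

1.1 Server preparation

1.2 IP address planning

1.3 Users and groups

1.4 SSH user equivalence

1.5 Directories (crmtest1 and crmtest2)

1.6 RPM packages

1.7 Shared disks

1.8 System parameters

1.9 DNS server setup (optional)

2. Installing RAC

2.1 Installing Grid Infrastructure

2.2 Creating the DATA/FRA disk groups with asmca

2.3 Installing the database software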

1. Preparation

1.1 Server preparation

This RAC test environment runs on two CentOS virtual machines in VMware Workstation, which together form the cluster. The VMs are:

| Role | IP | Hostname | Domain suffix | OS users | Install directory |
|---|---|---|---|---|---|
| DB | 192.168.150.128 | crmtest1 | tp-link.net | oracle/grid | /u1/db |
| DB | 192.168.150.129 | crmtest2 | tp-link.net | oracle/grid | /u1/db |

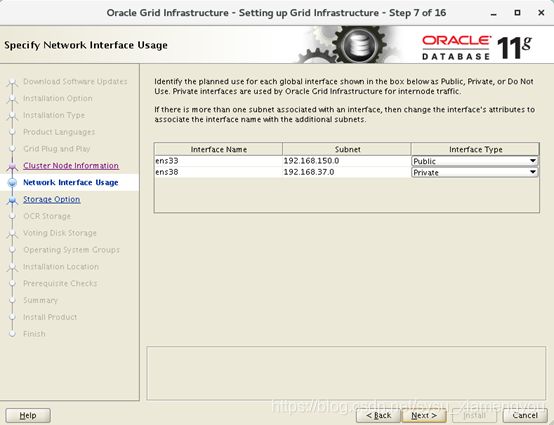

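1.2 IP address planning

The RAC IP address plan is shown below. Configure /etc/hosts before you build or clone an Oracle database. If you skip this, the database can stay stuck in the "connected" state.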

##public IP

192.168.150.128 crmtest1.tp-link.net crmtest1

192.168.150.129 crmtest2.tp-link.net crmtest2

##Virtual IP

192.168.150.130 crmtest1-vip.tp-link.net crmtest1-vip

192.168.150.131 crmtest2-vip.tp-link.net crmtest2-vip

##Private IP

192.168.37.128 crmtest1-priv.tp-link.net crmtest1-priv

192.168.37.129 crmtest2-priv.tp-link.net crmtest2-priv

##Scan IP

192.168.150.135 crmtest-scan.tp-link.net crmtest-scan

192.168.150.136 crmtest-scan.tp-link.net crmtest-scan

192.168.150.137 crmtest-scan.tp-link.net crmtest-scan
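To keep /etc/hosts identical on both nodes, the block above can be printed from one list instead of typed twice. This is a minimal sketch. The function names print_section and print_hosts and the DOMAIN variable are hypothetical. The addresses come from the plan, with crmtest2's public address at 192.168.150.129 as in the VM table in 1.1.

```sh
DOMAIN=tp-link.net

# Print "##TITLE", then one "IP FQDN short-name" line for each "IP name" pair.
print_section() {
    local title=$1
    shift
    printf '##%s\n' "$title"
    while [ "$#" -ge 2 ]; do
        printf '%s %s.%s %s\n' "$1" "$2" "$DOMAIN" "$2"
        shift 2
    done
}

# The complete hosts block for the RAC IP plan in section 1.2.
print_hosts() {
    print_section "public IP" 192.168.150.128 crmtest1 192.168.150.129 crmtest2
    print_section "Virtual IP" 192.168.150.130 crmtest1-vip 192.168.150.131 crmtest2-vip
    print_section "Private IP" 192.168.37.128 crmtest1-priv 192.168.37.129 crmtest2-priv
    print_section "Scan IP" 192.168.150.135 crmtest-scan 192.168.150.136 crmtest-scan \
        192.168.150.137 crmtest-scan
}
```

Review the output first. Then append it as root on each node (for example `print_hosts >> /etc/hosts`), after checking that the entries are not already present.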

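To implement this plan, add a second network adapter to each CentOS VM.

Network adapter 1:

Network adapter 2:

Then switch both adapters from DHCP-assigned addresses to static IP addresses: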

[root@crmtest1 network-scripts]# pwd

/etc/sysconfig/network-scripts

[root@crmtest1 network-scripts]# vim ifcfg-ens33

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO="static"

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

IPADDR=192.168.150.128

NAME=ens33

UUID=4747bd04-7e51-40b7-9d24-04969f2c5196

DEVICE=ens33

ONBOOT=yes

PREFIX=24

GATEWAY=192.168.150.2

[root@crmtest1 network-scripts]# vim ifcfg-Wired_connection_1

HWADDR=00:0C:29:88:E0:AB

MACADDR=00:0C:29:88:E0:AB

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=none

IPADDR=192.168.37.128

PREFIX=24

GATEWAY=192.168.37.2

DEFROUTE=yes

PEERDNS=no

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

NAME="Wired connection 1"

UUID=21cde08c-d753-3558-b7d4-6aa9f2604e45

ONBOOT=yes

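AUTOCONNECT_PRIORITY=-999

Repeat the same steps on crmtest2 to set static IPs. Do not bind the VIP addresses to an interface; Grid Infrastructure brings the VIPs up itself (see INS-40912 in 2.1).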
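Before restarting the network, each edited ifcfg file can be sanity-checked. This sketch assumes the plain KEY=value layout shown above; ifcfg_value and is_static_ifcfg are hypothetical helpers. BOOTPROTO=none is accepted as well as static, because the second file above uses none together with a fixed IPADDR.

```sh
# Print the value of KEY ($1) from ifcfg file $2, with double quotes removed.
# If KEY appears more than once, the last occurrence wins.
ifcfg_value() {
    sed -n "s/^$1=//p" "$2" | tail -n 1 | tr -d '"'
}

# Succeed if the file sets BOOTPROTO to static or none and also sets IPADDR.
is_static_ifcfg() {
    local proto addr
    proto=$(ifcfg_value BOOTPROTO "$1")
    addr=$(ifcfg_value IPADDR "$1")
    case $proto in
        static|none) [ -n "$addr" ] ;;
        *) return 1 ;;
    esac
}
```

For example: `for f in /etc/sysconfig/network-scripts/ifcfg-ens33 /etc/sysconfig/network-scripts/ifcfg-Wired_connection_1; do is_static_ifcfg "$f" && echo "$f: static" || echo "$f: not static"; done`.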

1.3 Users and groups

[root@crmtest1 ~]# groupadd -g 1000 oinstall

[root@crmtest1 ~]# groupadd -g 1200 asmadmin

[root@crmtest1 ~]# groupadd -g 1201 asmdba

[root@crmtest1 ~]# groupadd -g 1202 asmoper

[root@crmtest1 ~]# groupadd -g 1300 dba

[root@crmtest1 ~]# groupadd -g 1301 oper

[root@crmtest1 ~]# useradd -m -u 1100 -g oinstall -G asmadmin,asmdba,asmoper,dba -s /bin/bash -c "Grid Infrastructure Owner" grid

[root@crmtest1 ~]# useradd -m -u 1101 -g oinstall -G dba,oper,asmdba,asmadmin -s /bin/bash -c "Oracle Software Owner" oracle

[root@crmtest2 ~]# groupadd -g 1000 oinstall

[root@crmtest2 ~]# groupadd -g 1200 asmadmin

[root@crmtest2 ~]# groupadd -g 1201 asmdba

[root@crmtest2 ~]# groupadd -g 1202 asmoper

[root@crmtest2 ~]# groupadd -g 1300 dba

[root@crmtest2 ~]# groupadd -g 1301 oper

[root@crmtest2 ~]# useradd -m -u 1100 -g oinstall -G asmadmin,asmdba,asmoper,dba -s /bin/bash -c "Grid Infrastructure Owner" grid

[root@crmtest2 ~]# useradd -m -u 1101 -g oinstall -G dba,oper,asmdba,asmadmin -s /bin/bash -c "Oracle Software Owner" oracle| 软件组件 |

操作系统用户 |

主组 |

辅助组 |

Oracle基目录/Oracle主目录 |

| Grid Infrastructure |

grid |

oinstall |

asmadmin、asmdba、asmoper |

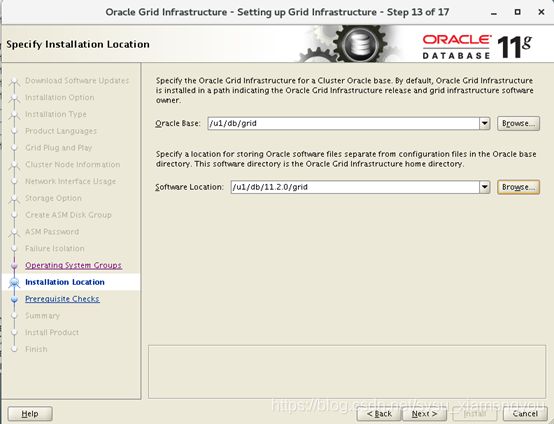

/u1/db/grid /u1/db/11.2.0/grid |

| Oracle RAC |

oracle |

oinstall |

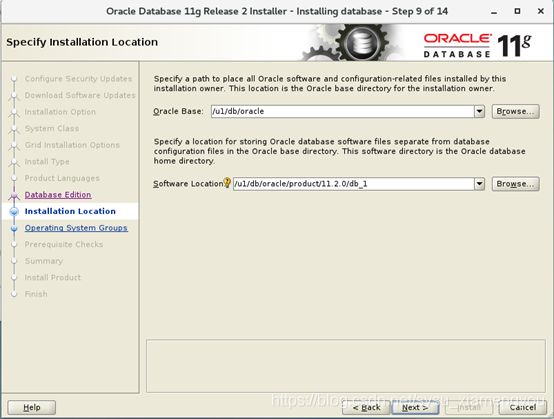

dba、oper、asmdba、asmadmin |

/u1/db/oracle /u1/db/oracle/product/11.2.0/db_1 |
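The UIDs and GIDs are fixed with -u/-g because grid and oracle must have the same UID, GID and group memberships on both nodes. The sketch below compares them across nodes. It assumes the SSH equivalence from 1.4 is already in place, so run it as grid or oracle afterwards. id_lines, id_normalize and compare_with_node are hypothetical names.

```sh
# Print the shell command that lists a user's uid, gid and group ids,
# one per line. The same command is run locally and on the remote node.
id_lines() {
    echo "id -u $1; id -g $1; id -G $1"
}

# Read those three lines on stdin and print "uid gid g1,g2,...," with the
# group list sorted, so the result does not depend on id's group order.
id_normalize() {
    local uid gid groups
    read -r uid
    read -r gid
    read -r groups
    printf '%s %s %s\n' "$uid" "$gid" \
        "$(printf '%s\n' "$groups" | tr ' ' '\n' | sort -n | tr '\n' ',')"
}

# Compare user $1 on this host with the same user on node $2 (needs ssh).
compare_with_node() {
    local user=$1 node=$2 here there
    here=$(sh -c "$(id_lines "$user")" | id_normalize)
    there=$(ssh "$node" "$(id_lines "$user")" | id_normalize)
    if [ "$here" = "$there" ]; then
        echo "$user: same on $node ($here)"
    else
        echo "$user: differs (local: $here, $node: $there)" >&2
        return 1
    fi
}
```

For example, on crmtest1: `compare_with_node grid crmtest2 && compare_with_node oracle crmtest2`.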

1.4 SSH user equivalence

Set up SSH equivalence for the oracle user

On crmtest1:

[oracle@crmtest1 ~]$ mkdir ~/.ssh

[oracle@crmtest1 ~]$ chmod 700 ~/.ssh

[oracle@crmtest1 ~]$ ssh-keygen -t rsa

[oracle@crmtest1 ~]$ ssh-keygen -t dsa

On crmtest2:

[oracle@crmtest2 ~]$ mkdir ~/.ssh

[oracle@crmtest2 ~]$ chmod 700 ~/.ssh

[oracle@crmtest2 ~]$ ssh-keygen -t rsa

[oracle@crmtest2 ~]$ ssh-keygen -t dsa

On crmtest1:

[oracle@crmtest1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[oracle@crmtest1 ~]$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[oracle@crmtest1 ~]$ ssh crmtest2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[oracle@crmtest1 ~]$ ssh crmtest2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[oracle@crmtest1 ~]$ scp ~/.ssh/authorized_keys oracle@crmtest2:~/.ssh/authorized_keys

Set up SSH equivalence for the grid user

On crmtest1:

[grid@crmtest1 ~]$ mkdir ~/.ssh

[grid@crmtest1 ~]$ chmod 700 ~/.ssh

[grid@crmtest1 ~]$ ssh-keygen -t rsa

[grid@crmtest1 ~]$ ssh-keygen -t dsa

On crmtest2:

[grid@crmtest2 ~]$ mkdir ~/.ssh

[grid@crmtest2 ~]$ chmod 700 ~/.ssh

[grid@crmtest2 ~]$ ssh-keygen -t rsa

[grid@crmtest2 ~]$ ssh-keygen -t dsa

On crmtest1:

[grid@crmtest1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[grid@crmtest1 ~]$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[grid@crmtest1 ~]$ ssh crmtest2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[grid@crmtest1 ~]$ ssh crmtest2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

[grid@crmtest1 ~]$ scp ~/.ssh/authorized_keys grid@crmtest2:~/.ssh/authorized_keys

1.5 Directories (crmtest1 and crmtest2)

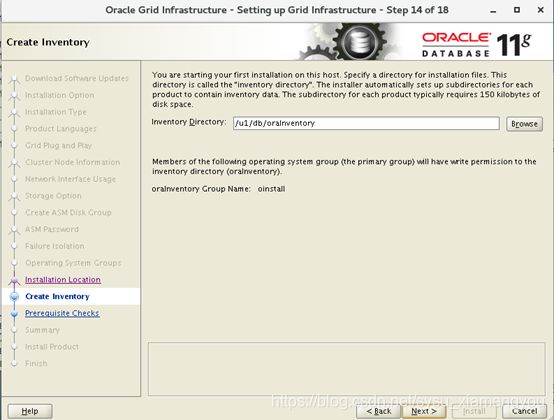

a) Create the inventory directory

[root@crmtest1 ~]# mkdir -p /u1/db/oraInventory

[root@crmtest1 ~]# chown -R grid:oinstall /u1/db/oraInventory/

[root@crmtest1 ~]# chmod -R 775 /u1/db/oraInventory/

b) Create the grid base and grid home directories

[root@crmtest1 ~]# mkdir -p /u1/db/grid

[root@crmtest1 ~]# chown -R grid:oinstall /u1/db/grid/

[root@crmtest1 ~]# chmod -R 775 /u1/db/grid/

[root@crmtest1 ~]# mkdir -p /u1/db/11.2.0/grid

[root@crmtest1 ~]# chown -R grid:oinstall /u1/db/11.2.0/

[root@crmtest1 ~]# chmod -R 775 /u1/db/11.2.0/

c) Create the oracle base and oracle home directories

[root@crmtest1 ~]# mkdir -p /u1/db/oracle/cfgtoollogs

[root@crmtest1 ~]# chown -R oracle:oinstall /u1/db/oracle/

[root@crmtest1 ~]# chmod -R 775 /u1/db/oracle/

[root@crmtest1 ~]# mkdir -p /u1/db/oracle/product/11.2.0/db_1

[root@crmtest1 ~]# chown -R oracle:oinstall /u1/db/oracle/product/11.2.0/db_1/

[root@crmtest1 ~]# chmod -R 775 /u1/db/oracle/product/11.2.0/db_1/

1.6 RPM packages

## I recommend putting the following commands in a yum.sh script and running that.
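Here is a minimal sketch of such a yum.sh, assuming it is run as root. It holds the same package names as the commands that follow and installs them in a single yum transaction instead of about fifty separate calls. PKGS, install_all and missing_pkgs are hypothetical names. The file only defines them, so source it and then call install_all, or call missing_pkgs to list what rpm does not yet report as installed.

```sh
# Package names copied from the yum list in section 1.6 (51 names).
PKGS="compat-glibc compat-glibc-headers
compat-libstdc++-296 compat-libstdc++-296.i686
compat-libstdc++-33 compat-libstdc++-33.i686
gcc gcc-c++ gdbm gdbm.i686
glibc glibc.i686 glibc-common glibc-devel glibc-devel.i686
libaio libaio.i686 libaio-devel libaio-devel.i686
libgcc libgcc.i686 libgomp libgomp.i686
libstdc++ libstdc++.i686 libstdc++-devel libstdc++-devel.i686
libXp libXp.i686 libXp-devel libXp-devel.i686
libXtst libXtst.i686 libXt libXt.i686 libXt-devel libXt-devel.i686
make sysstat
elfutils-libelf-devel elfutils-libelf-devel.i686
unixODBC unixODBC.i686 unixODBC-devel unixODBC-devel.i686
kernel-headers lrzsz libXrender compat-libcap1 binutils ksh"

# Install everything in one transaction ($PKGS is split on whitespace on purpose).
install_all() {
    yum install -y $PKGS
}

# Print each package name that rpm does not report as installed.
missing_pkgs() {
    local p
    for p in $PKGS; do
        rpm -q --quiet "$p" || echo "$p"
    done
}
```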

yum install -y compat-glibc

yum install -y compat-glibc-headers

yum install -y compat-libstdc++-296

yum install -y compat-libstdc++-296.i686

yum install -y compat-libstdc++-33.i686

yum install -y compat-libstdc++-33

yum install -y gcc

yum install -y gcc-c++

yum install -y gdbm

yum install -y gdbm.i686

yum install -y glibc

yum install -y glibc.i686

yum install -y glibc-common

yum install -y glibc-devel

yum install -y glibc-devel.i686

yum install -y libaio

yum install -y libaio.i686

yum install -y libaio-devel

yum install -y libaio-devel.i686

yum install -y libgcc

yum install -y libgcc.i686

yum install -y libgomp

yum install -y libgomp.i686

yum install -y libstdc++

yum install -y libstdc++.i686

yum install -y libstdc++-devel

yum install -y libstdc++-devel.i686

yum install -y libXp

yum install -y libXp.i686

yum install -y libXp-devel

yum install -y libXp-devel.i686

yum install -y libXtst

yum install -y libXtst.i686

yum install -y libXt

yum install -y libXt.i686

yum install -y libXt-devel

yum install -y libXt-devel.i686

yum install -y make

yum install -y sysstat

yum install -y elfutils-libelf-devel

yum install -y elfutils-libelf-devel.i686

yum install -y unixODBC

yum install -y unixODBC.i686

yum install -y unixODBC-devel

yum install -y unixODBC-devel.i686

yum install -y kernel-headers

yum install -y lrzsz

yum install -y libXrender

yum install -y compat-libcap1

yum install -y binutils

yum install -y ksh

During the yum installation, some dependency packages on CentOS 7.4 may be newer than required and have to be downgraded:

[root@crmtest1 ~]# yum list --showduplicates glibc

[root@crmtest1 ~]# yum downgrade glibc glibc-common glibc-devel glibc-headers

[root@crmtest1 ~]# yum downgrade gcc cpp libgomp

[root@crmtest1 ~]# yum downgrade libgcc

[root@crmtest1 ~]# yum downgrade libstdc++

[root@crmtest1 software]# rpm -ivh openmotif21-2.1.30-11.EL5.i386.rpm

[root@crmtest1 software]# rpm -ivh xorg-x11-libs-compat-6.8.2-1.EL.33.0.1.i386.rpm

1.7 Shared disks

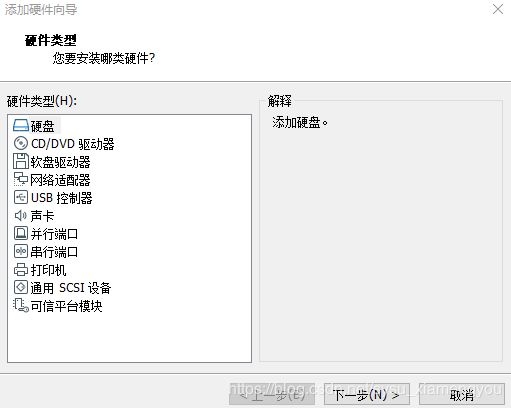

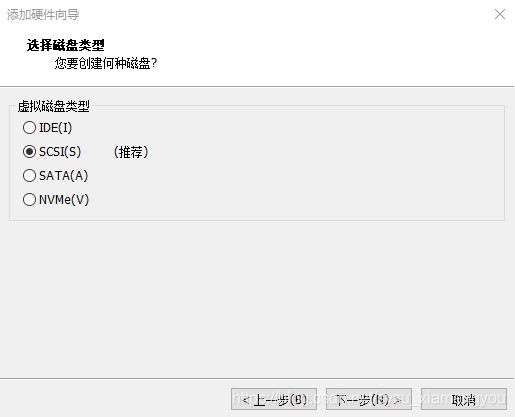

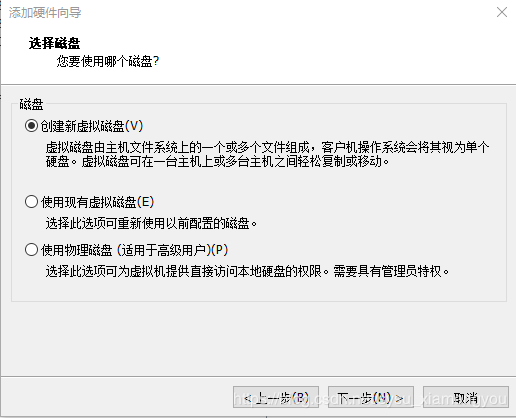

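Configure the shared RAC disks in VMware:

- On the first CentOS VM, create new virtual disks.
- On the second VM, add the VMDK files created by the first VM.
- Set the disks to persistent (independent) mode and attach them to the SCSI 1 controller.

When adding the disks on node 2, choose "Use an existing virtual disk".

Repeat these steps to add Hard Disk 2 (SCSI 1:1), Hard Disk 3 (SCSI 1:2) and Hard Disk 4 (SCSI 1:3).

Confirm the disk locations, then edit the VMX file of each VM: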

#shared disks configure

disk.EnableUUID="TRUE"

disk.locking="FALSE"

diskLib.dataCacheMaxSize="0"

diskLib.dataCacheMaxReadAheadSize="0"

diskLib.dataCacheMinReadAheadSize="0"

diskLib.dataCachePageSize="4096"

diskLib.maxUnsyncedWrites="0"

scsi1.sharedBus="VIRTUAL"

scsi1.virtualDev = "lsilogic"

scsi1:1.present = "TRUE"

scsi1:1.fileName = "E:\Centos-ASM\ASM1.vmdk"

scsi1:1.mode = "independent-persistent"

scsi1:1.deviceType = "disk"

scsi1:2.present = "TRUE"

scsi1:2.fileName = "E:\Centos-ASM\ASM2.vmdk"

scsi1:2.mode = "independent-persistent"

scsi1:2.deviceType = "disk"

scsi1:3.present = "TRUE"

scsi1:3.fileName = "E:\Centos-ASM\ASM3.vmdk"

scsi1:3.mode = "independent-persistent"

scsi1:3.deviceType = "disk"

scsi1:1.redo = ""

scsi1:3.redo = ""

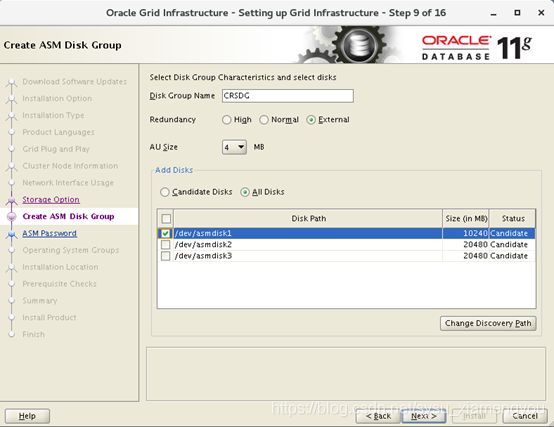

scsi1:2.redo = ""共享磁盘信息如下

+CRSDG: one 10 GB disk, /dev/sdb, dedicated to the CRS voting disk and OCR

+DATADG: one 20 GB disk, /dev/sdc, for data storage

+FRADG: one 20 GB disk, /dev/sdd, for archived logs

[root@crmtest1 ~]# fdisk -l | grep /dev

Disk /dev/sda: 42.9 GB, 42949672960 bytes, 83886080 sectors

/dev/sda1 * 2048 616447 307200 83 Linux

/dev/sda2 616448 8744959 4064256 82 Linux swap / Solaris

/dev/sda3 8744960 83886079 37570560 83 Linux

Disk /dev/sdd: 21.5 GB, 21474836480 bytes, 41943040 sectors

Disk /dev/sdb: 10.7 GB, 10737418240 bytes, 20971520 sectors

Disk /dev/sdc: 21.5 GB, 21474836480 bytes, 41943040 sectors

Creating the udev rules

Look up each disk's UUID (the SCSI ID reported by scsi_id):

[root@crmtest1 ~]# for i in b c d

>do

>/usr/lib/udev/scsi_id -g -u -d /dev/sd$i

>done

36000c2947a6468b10cc6cd64589e2028

36000c291f4d76644081f3089bc7701d3

36000c29e1859fa556a72c06fc8f48d2d

Edit the udev rules file:

vim /etc/udev/rules.d/99-oracle-asmdevices.rules
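Each rule differs only in the SCSI ID and the asmdisk number, so the file can be generated instead of typed. This sketch assumes the scsi_id path used above; asm_rule and generate_rules are hypothetical helpers. The rule text copies the hand-written rules (mknod /dev/asmdiskN, owner grid:asmadmin, mode 0660). Disks are numbered in the order they are passed, so /dev/sdb, /dev/sdc and /dev/sdd become asmdisk1 to asmdisk3.

```sh
# Print one udev rule that maps the disk with SCSI ID $2 to /dev/asmdisk$1.
# $devnode, $major and $minor stay literal (single-quoted format): udev expands them.
asm_rule() {
    local n=$1 id=$2 q="'"
    printf 'KERNEL=="sd*[!0-9]", ENV{DEVTYPE}=="disk", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d $devnode", RESULT=="%s", RUN+="/bin/sh -c %smknod /dev/asmdisk%s b $major $minor; chown grid:asmadmin /dev/asmdisk%s; chmod 0660 /dev/asmdisk%s%s"\n' \
        "$id" "$q" "$n" "$n" "$n" "$q"
}

# Look up the SCSI ID of each device argument and print a numbered rule for it.
generate_rules() {
    local n=1 dev id
    for dev in "$@"; do
        id=$(/usr/lib/udev/scsi_id -g -u -d "$dev") || return 1
        asm_rule "$n" "$id"
        n=$((n + 1))
    done
}
```

As root: `generate_rules /dev/sdb /dev/sdc /dev/sdd > /etc/udev/rules.d/99-oracle-asmdevices.rules`. The rules match on the SCSI ID rather than the sdX name. The same file should therefore work on crmtest2, provided scsi_id reports the same IDs there (check with the loop above).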

KERNEL=="sd*[!0-9]", ENV{DEVTYPE}=="disk", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d $devnode", RESULT=="36000c2947a6468b10cc6cd64589e2028", RUN+="/bin/sh -c 'mknod /dev/asmdisk1 b $major $minor; chown grid:asmadmin /dev/asmdisk1; chmod 0660 /dev/asmdisk1'"

KERNEL=="sd*[!0-9]", ENV{DEVTYPE}=="disk", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d $devnode", RESULT=="36000c291f4d76644081f3089bc7701d3", RUN+="/bin/sh -c 'mknod /dev/asmdisk2 b $major $minor; chown grid:asmadmin /dev/asmdisk2; chmod 0660 /dev/asmdisk2'"

KERNEL=="sd*[!0-9]", ENV{DEVTYPE}=="disk", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d $devnode", RESULT=="36000c29e1859fa556a72c06fc8f48d2d", RUN+="/bin/sh -c 'mknod /dev/asmdisk3 b $major $minor; chown grid:asmadmin /dev/asmdisk3; chmod 0660 /dev/asmdisk3'"

Trigger udev to apply the rules:

[root@crmtest1 rules.d]# /sbin/udevadm trigger --type=devices --action=change

Check the disks:

[root@crmtest1 rules.d]# ll -ltr /dev/asm*

brw-rw----. 1 grid asmadmin 8, 16 Oct 30 11:03 /dev/asmdisk1

brw-rw----. 1 grid asmadmin 8, 48 Oct 30 11:03 /dev/asmdisk3

brw-rw----. 1 grid asmadmin 8, 32 Oct 30 11:03 /dev/asmdisk2

1.8 System parameters

# Add the following parameters to /etc/sysctl.conf:

kernel.shmall = 33057936

kernel.shmmax = 67702652928

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

fs.file-max = 6815744

fs.aio-max-nr = 1048576

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048576
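The shared-memory values above fit a common rule of thumb, which is my assumption and is not stated in the original: kernel.shmmax is half of physical RAM in bytes, and kernel.shmall is all of RAM in pages.

As a worked check with 4096-byte pages:

- shmall × 4096 = 33057936 × 4096 = 135405305856 bytes.
- shmmax = 67702652928 = 135405305856 / 2.

That corresponds to a host with about 126 GiB of RAM, far more than these VMs. Both settings are limits rather than allocations, so oversizing them does not reserve memory. The sketch below derives the values for the machine at hand; shm_settings is a hypothetical helper.

```sh
# Print shmmax/shmall for MemTotal $1 (kB, as in /proc/meminfo) and page
# size $2 (bytes): shmmax = RAM/2 in bytes, shmall = RAM/pagesize in pages.
shm_settings() {
    local bytes
    bytes=$(($1 * 1024))
    echo "kernel.shmmax = $((bytes / 2))"
    echo "kernel.shmall = $((bytes / $2))"
}
```

For example: `shm_settings "$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)" "$(getconf PAGE_SIZE)"`. With MemTotal = 132231744 kB it reproduces the two values above.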

# Add the following to /etc/security/limits.conf:

* hard nofile 65536

* soft nofile 4096

* hard nproc 16384

* soft nproc 4096

Load the kernel parameters. The limits.conf entries apply to new login sessions.

[root@crmtest1 ~]# /sbin/sysctl -p

1.9 DNS server setup (optional)

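The RAC installation needs DNS resolution for several names, including the SCAN name, so I set up a DNS server on crmtest1.

[root@crmtest1 ~]# yum -y install 'bind*'

Open the main DNS configuration file: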

[root@crmtest1 ~]# vim /etc/named.conf

options {

# listen-on port 53 { 127.0.0.1; }; # with this line removed, named listens on UDP port 53 on all interfaces (recommended)

listen-on-v6 port 53 { ::1; };

directory "/var/named";

dump-file "/var/named/data/cache_dump.db";

statistics-file "/var/named/data/named_stats.txt";

memstatistics-file "/var/named/data/named_mem_stats.txt";

recursing-file "/var/named/data/named.recursing";

secroots-file "/var/named/data/named.secroots";

# allow-query { localhost; }; # with this line removed, named answers queries from all clients (recommended)

recursion yes; # enable recursive queries

dnssec-enable yes;

dnssec-validation yes;

/* Path to ISC DLV key */

bindkeys-file "/etc/named.iscdlv.key";

managed-keys-directory "/var/named/dynamic";

pid-file "/run/named/named.pid";

session-keyfile "/run/named/session.key";

};

logging {

channel default_debug {

file "data/named.run";

severity dynamic;

};

};

zone "." IN {

type hint;

file "named.ca";

};

include "/etc/named.rfc1912.zones";

include "/etc/named.root.key";

In /etc/named.rfc1912.zones, add a forward zone for the domain:

……

zone "tp-link.net" IN {

type master;

file "tp-link.net.zone";

allow-update { none; };

};

Create the tp-link.net.zone file:

[root@crmtest1 /]# cd /var/named/

[root@crmtest1 named]# cp named.localhost tp-link.net.zone

[root@crmtest1 named]# vim tp-link.net.zone

$TTL 1D

@ IN SOA tp-link.net. rname.invalid. (

0 ; serial

1D ; refresh

1H ; retry

1W ; expire

3H ) ; minimum

NS @

A 127.0.0.1

AAAA ::1

crmtest1 IN A 192.168.150.128

crmtest2 IN A 192.168.150.129

crmtest1-vip IN A 192.168.150.130

crmtest2-vip IN A 192.168.150.131

crmtest1-priv IN A 192.168.37.128

crmtest2-priv IN A 192.168.37.129

crmtest-scan IN A 192.168.150.135

crmtest-scan IN A 192.168.150.136

crmtest-scan IN A 192.168.150.137

Once all configuration files are written, check them with the following commands. Any syntax errors are reported one by one.

[root@crmtest1 /]# named-checkconf /etc/named.conf

[root@crmtest1 /]# named-checkzone tp-link.net /var/named/tp-link.net.zone

# Start the DNS server

[root@crmtest1 /]# systemctl start named.service

# Enable it at boot

[root@crmtest1 /]# systemctl enable named.service

2. Installing RAC

Download the Grid Infrastructure package p10404530_112030_Linux-x86-64_3of7.zip, unzip it, and run the pre-installation checks.

[grid@crmtest1 ~]$ unzip p10404530_112030_Linux-x86-64_3of7.zip

[grid@crmtest1 ~]$ ll grid/

total 56

drwxr-xr-x. 9 grid oinstall 178 Sep 22 2011 doc

drwxr-xr-x. 4 grid oinstall 4096 Sep 22 2011 install

-rwxr-xr-x. 1 grid oinstall 28122 Sep 22 2011 readme.html

drwxr-xr-x. 2 grid oinstall 30 Sep 22 2011 response

drwxr-xr-x. 2 grid oinstall 34 Sep 22 2011 rpm

-rwxr-xr-x. 1 grid oinstall 4878 Sep 22 2011 runcluvfy.sh

-rwxr-xr-x. 1 grid oinstall 3227 Sep 22 2011 runInstaller

drwxr-xr-x. 2 grid oinstall 29 Sep 22 2011 sshsetup

drwxr-xr-x. 14 grid oinstall 4096 Sep 22 2011 stage

-rwxr-xr-x. 1 grid oinstall 4326 Sep 2 2011 welcome.html

[grid@crmtest1 ~]$ cd grid/

[grid@crmtest1 grid]$ ./runcluvfy.sh stage -pre crsinst -n crmtest1,crmtest2 -verbose

Performing pre-checks for cluster services setup

Checking node reachability...

Check: Node reachability from node "crmtest1"

Destination Node Reachable?

------------------------------------ ------------------------

crmtest1 yes

crmtest2 yes

Result: Node reachability check passed from node "crmtest1"

Checking user equivalence...

Check: User equivalence for user "grid"

Node Name Status

------------------------------------ ------------------------

crmtest2 passed

crmtest1 failed

Result: PRVF-4007 : User equivalence check failed for user "grid"

WARNING:

User equivalence is not set for nodes:

crmtest1

Verification will proceed with nodes:

crmtest2

Checking node connectivity...

Checking hosts config file...

Node Name Status

------------------------------------ ------------------------

crmtest2 passed

Verification of the hosts config file successful

……

Check: TCP connectivity of subnet "192.168.122.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

crmtest1:192.168.122.1 crmtest2:192.168.122.1 failed

ERROR:

PRVF-7617 : Node connectivity between "crmtest1 : 192.168.122.1" and "crmtest2 : 192.168.122.1" failed

Result: TCP connectivity check failed for subnet "192.168.122.0"

……

Check: Package existence for "pdksh"

Node Name Available Required Status

------------ ------------------------ ------------------------ ----------

crmtest2 missing pdksh-5.2.14 failed

Result: Package existence check failed for "pdksh"

……

All nodes have one search entry defined in file "/etc/resolv.conf"

Checking DNS response time for an unreachable node

Node Name Status

------------------------------------ ------------------------

crmtest2 failed

crmtest1 failed

PRVF-5637 : DNS response time could not be checked on following nodes: crmtest2,crmtest1

File "/etc/resolv.conf" is not consistent across nodes

Fixing the pre-installation check failures

Result: PRVF-4007 : User equivalence check failed for user "grid"

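On both crmtest1 and crmtest2, ssh as grid once to every public, VIP and private name. This answers the first-connection host-key prompt for each name, which cluvfy cannot do by itself: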

[grid@crmtest1 ~]$ ssh crmtest1 date

[grid@crmtest1 ~]$ ssh crmtest1-vip date

[grid@crmtest1 ~]$ ssh crmtest1-priv date

[grid@crmtest1 ~]$ ssh crmtest2 date

[grid@crmtest1 ~]$ ssh crmtest2-vip date

[grid@crmtest1 ~]$ ssh crmtest2-priv date

[grid@crmtest2 ~]$ ssh crmtest1 date

[grid@crmtest2 ~]$ ssh crmtest1-vip date

[grid@crmtest2 ~]$ ssh crmtest1-priv date

[grid@crmtest2 ~]$ ssh crmtest2 date

[grid@crmtest2 ~]$ ssh crmtest2-vip date

[grid@crmtest2 ~]$ ssh crmtest2-priv date
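After that interactive round, the same connections can be re-checked with a loop that fails instead of prompting. BatchMode=yes makes ssh refuse to ask for a password or to accept an unknown host key. So a host listed as FAILED here is one that would still need interaction. This is a sketch: HOSTS is taken from the commands above, while SSH_CMD and check_equivalence are hypothetical names. SSH_CMD exists only so the loop can be dry-run.

```sh
# Names from the manual list above.
HOSTS="crmtest1 crmtest1-vip crmtest1-priv crmtest2 crmtest2-vip crmtest2-priv"
# Command used to connect; override it (e.g. SSH_CMD=true) for a dry run.
SSH_CMD=${SSH_CMD:-ssh}

# Run a non-interactive "date" on every host given, report each result,
# and return non-zero if any host failed.
check_equivalence() {
    local host failed=0
    for host in "$@"; do
        if $SSH_CMD -o BatchMode=yes "$host" date >/dev/null 2>&1; then
            echo "$host: OK"
        else
            echo "$host: FAILED" >&2
            failed=1
        fi
    done
    return "$failed"
}
```

Run it as grid (and as oracle) on each node: `check_equivalence $HOSTS`. $HOSTS is left unquoted so it splits into one argument per name.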

PRVF-7617 : Node connectivity between "crmtest1 : 192.168.122.1" and "crmtest2 : 192.168.122.1" failed

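This failure comes from the libvirt virtual bridge virbr0 (192.168.122.1), which the libvirtd service on these CentOS VMs creates by default. Remove it on both nodes: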

[root@crmtest1 ~]# ifconfig virbr0 down

[root@crmtest1 ~]# brctl delbr virbr0

[root@crmtest1 ~]# systemctl disable libvirtd.service

[root@crmtest2 ~]# ifconfig virbr0 down

[root@crmtest2 ~]# brctl delbr virbr0

[root@crmtest2 ~]# systemctl disable libvirtd.service

Result: Package existence check failed for "pdksh"

This can be ignored: pdksh is an old, obsolete package, and ksh is used instead.

PRVF-5637 : DNS response time could not be checked on following nodes: crmtest2,crmtest1

To fix this, I set up the DNS server on crmtest1 described in 1.9.

Edit /etc/resolv.conf on crmtest1 and crmtest2:

search tp-link.net

nameserver 192.168.150.128
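With resolv.conf pointing at the DNS server, check that the SCAN name resolves to all three addresses. This sketch uses dig from bind-utils (installed on crmtest1 with bind* in 1.9; crmtest2 may need `yum -y install bind-utils`). DNS_SERVER, SCAN_NAME, count_ipv4 and check_scan are hypothetical names.

```sh
DNS_SERVER=192.168.150.128
SCAN_NAME=crmtest-scan.tp-link.net

# Count the lines on stdin that look like dotted-quad IPv4 addresses.
count_ipv4() {
    grep -cE '^([0-9]{1,3}\.){3}[0-9]{1,3}$'
}

# Query the DNS server directly and expect exactly three A records.
check_scan() {
    local n
    n=$(dig +short "$SCAN_NAME" @"$DNS_SERVER" | count_ipv4)
    if [ "$n" -eq 3 ]; then
        echo "$SCAN_NAME: 3 addresses"
    else
        echo "$SCAN_NAME: expected 3 addresses, got $n" >&2
        return 1
    fi
}
```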

Then, on crmtest1 and crmtest2, replace /usr/bin/nslookup with a wrapper that always exits with status 0:

# mv /usr/bin/nslookup /usr/bin/nslookup.orig

# echo '#!/bin/bash

/usr/bin/nslookup.orig "$@"

exit 0' > /usr/bin/nslookup

# chmod a+x /usr/bin/nslookup

When the check is run again, everything passes except pdksh.

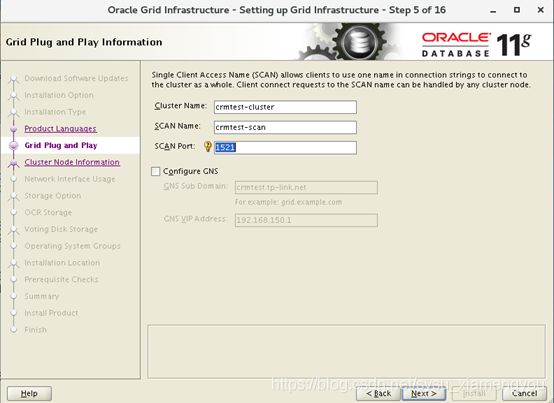

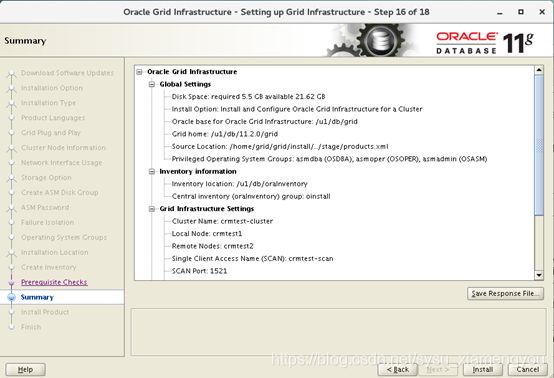

2.1 Installing Grid Infrastructure

Start the Grid Infrastructure installation:

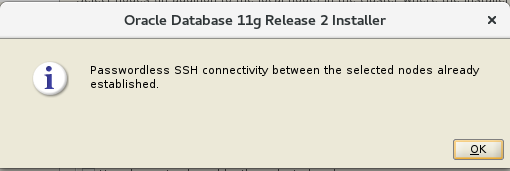

[grid@crmtest1 grid]$ ./runInstaller -jreLoc /etc/alternatives/jre_1.8.0

In the installer, test SSH connectivity between crmtest1 and crmtest2.

The test succeeds.

Click "Next".

The installer hangs at this point, and the log shows this error:

SEVERE: [FATAL] [INS-40912] Virtual host name: crmtest1-vip is assigned to another system on the network.

CAUSE: One or more virtual host names appeared to be assigned to another system on the network.

ACTION: Ensure that the virtual host names assigned to each of the nodes in the cluster are not currently in use, and the IP addresses are registered to the domain name you want to use as the virtual host name.

After some research: the VIP addresses must not be bound manually, because Grid Infrastructure configures them itself. Remove the VIP bindings on crmtest1 and crmtest2:

[root@crmtest1 ~]# ifdown ens33:1

[root@crmtest2 ~]# ifdown ens33:1

After that, the installation can continue.

Password:Oracle123

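Install the cvuqdisk-1.0.9-1.rpm package (from the grid/rpm directory) on both nodes, then rerun the checks.

Click "Ignore All".

At 76%, the installer asks you to run two scripts as root on crmtest1 and crmtest2: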

/u1/db/oraInventory/orainstRoot.sh

/u1/db/11.2.0/grid/root.sh

orainstRoot.sh runs without problems.

root.sh, however, fails as follows:

[root@crmtest1 grid]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u1/db/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /u1/db/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

User ignored Prerequisites during installation

OLR initialization - successful

root wallet

root wallet cert

root cert export

peer wallet

profile reader wallet

pa wallet

peer wallet keys

pa wallet keys

peer cert request

pa cert request

peer cert

pa cert

peer root cert TP

profile reader root cert TP

pa root cert TP

peer pa cert TP

pa peer cert TP

profile reader pa cert TP

profile reader peer cert TP

peer user cert

pa user cert

Adding Clusterware entries to inittab

ohasd failed to start

Failed to start the Clusterware. Last 20 lines of the alert log follow:

2019-10-31 11:19:36.572

[client(33002)]CRS-2101:The OLR was formatted using version 3.

Cause:

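On CentOS 7, ohasd must be set up as a systemd service before root.sh is run. root.sh only adds ohasd to /etc/inittab ("Adding Clusterware entries to inittab" above), and systemd does not read /etc/inittab.

As root, create the service file: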

[root@crmtest1 ~]# touch /usr/lib/systemd/system/ohas.service

[root@crmtest1 ~]# chmod 644 /usr/lib/systemd/system/ohas.service

Add the following content to the new ohas.service file:

[root@crmtest1 ~]# cat /usr/lib/systemd/system/ohas.service

[Unit]

Description=Oracle High Availability Services

After=syslog.target

[Service]

ExecStart=/etc/init.d/init.ohasd run >/dev/null 2>&1 Type=simple

Restart=always

[Install]

WantedBy=multi-user.target

Run the following commands as root:

[root@crmtest1 ~]# systemctl daemon-reload

[root@crmtest1 ~]# systemctl enable ohas.service

Created symlink from /etc/systemd/system/multi-user.target.wants/ohas.service to /usr/lib/systemd/system/ohas.service.

[root@crmtest1 ~]# systemctl start ohas.service

Check its status:

[root@crmtest1 ~]# systemctl status ohas.service

● ohas.service - Oracle High Availability Services

Loaded: loaded (/usr/lib/systemd/system/ohas.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2019-10-31 11:30:42 CST; 4s ago

Main PID: 35265 (init.ohasd)

CGroup: /system.slice/ohas.service

└─35265 /bin/sh /etc/init.d/init.ohasd run >/dev/null 2>&1 Type=si...

Oct 31 11:30:42 crmtest1 systemd[1]: Started Oracle High Availability Services.

Hint: Some lines were ellipsized, use -l to show in full.

Because root.sh had been run on crmtest1 and crmtest2 at the same time, deconfigure both nodes with the following commands:

[root@crmtest1 grid]# cd /u1/db/11.2.0/grid/crs/install/

[root@crmtest1 install]# /u1/db/11.2.0/grid/perl/bin/perl rootcrs.pl -deconfig -force -verbose

[root@crmtest1 install]# /u1/db/11.2.0/grid/perl/bin/perl roothas.pl -deconfig -force -verbose

[root@crmtest2 grid]# cd /u1/db/11.2.0/grid/crs/install/

[root@crmtest2 install]# /u1/db/11.2.0/grid/perl/bin/perl rootcrs.pl -deconfig -force -verbose

[root@crmtest2 install]# /u1/db/11.2.0/grid/perl/bin/perl roothas.pl -deconfig -force -verbose

Then run root.sh again: first on crmtest1, and only after it succeeds there, on the second node:

[root@crmtest1 grid]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u1/db/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /u1/db/11.2.0/grid/crs/install/crsconfig_params

User ignored Prerequisites during installation

CRS-2672: Attempting to start 'ora.mdnsd' on 'crmtest1'

CRS-2676: Start of 'ora.mdnsd' on 'crmtest1' succeeded

CRS-2672: Attempting to start 'ora.gpnpd' on 'crmtest1'

CRS-2676: Start of 'ora.gpnpd' on 'crmtest1' succeeded

CRS-2672: Attempting to start 'ora.cssdmonitor' on 'crmtest1'

CRS-2672: Attempting to start 'ora.gipcd' on 'crmtest1'

CRS-2676: Start of 'ora.cssdmonitor' on 'crmtest1' succeeded

CRS-2676: Start of 'ora.gipcd' on 'crmtest1' succeeded

CRS-2672: Attempting to start 'ora.cssd' on 'crmtest1'

CRS-2672: Attempting to start 'ora.diskmon' on 'crmtest1'

CRS-2676: Start of 'ora.diskmon' on 'crmtest1' succeeded

CRS-2676: Start of 'ora.cssd' on 'crmtest1' succeeded

ASM created and started successfully.

Disk Group CRSDG created successfully.

clscfg: -install mode specified

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

CRS-4256: Updating the profile

Successful addition of voting disk 9e32eb96f5ad4f65bf0142d05b027b4c.

Successfully replaced voting disk group with +CRSDG.

CRS-4256: Updating the profile

CRS-4266: Voting file(s) successfully replaced

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 9e32eb96f5ad4f65bf0142d05b027b4c (/dev/asmdisk1) [CRSDG]

Located 1 voting disk(s).

CRS-2672: Attempting to start 'ora.asm' on 'crmtest1'

CRS-2676: Start of 'ora.asm' on 'crmtest1' succeeded

CRS-2672: Attempting to start 'ora.CRSDG.dg' on 'crmtest1'

CRS-2676: Start of 'ora.CRSDG.dg' on 'crmtest1' succeeded

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Run root.sh on crmtest2:

[root@crmtest2 grid]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u1/db/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /u1/db/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

User ignored Prerequisites during installation

OLR initialization - successful

Adding Clusterware entries to inittab

CRS-4402: The CSS daemon was started in exclusive mode but found an active CSS daemon on node crmtest1, number 1, and is terminating

An active cluster was found during exclusive startup, restarting to join the cluster

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

The installer still reports an error. Its log shows the cause:

INFO: PRVF-5494 : The NTP Daemon or Service was not alive on all nodes

INFO: PRVF-5415 : Check to see if NTP daemon or service is running failed

INFO: Clock synchronization check using Network Time Protocol(NTP) failed

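INFO: PRVF-9652 : Cluster Time Synchronization Services check failed

Stop the NTP service on crmtest1 and crmtest2 and move /etc/ntp.conf aside, so that Oracle's Cluster Time Synchronization Service (CTSS) takes over time synchronization: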

[root@crmtest1 ~]# systemctl stop ntpd

[root@crmtest1 ~]# chkconfig ntpd off

[root@crmtest1 ~]# mv /etc/ntp.conf /etc/ntp.conf.original

[root@crmtest2 ~]# systemctl stop ntpd

[root@crmtest2 ~]# chkconfig ntpd off

[root@crmtest2 ~]# mv /etc/ntp.conf /etc/ntp.conf.original

At this point the RAC (Grid Infrastructure) software installation is complete.
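With ntpd stopped and no /etc/ntp.conf, CTSS should run in active mode rather than observer mode. This is a sketch of a check. Run it as grid with the Grid home's bin directory in PATH (see the environment in 2.2). ctss_is_active is a hypothetical helper that only looks for the words "Active mode" in the output of `crsctl check ctss`. Confirm the exact message text on your own system.

```sh
# Succeed if crsctl reports that CTSS is in active mode.
ctss_is_active() {
    crsctl check ctss | grep -q 'Active mode'
}
```

For example: `ctss_is_active && echo "CTSS active" || echo "CTSS not active (NTP still configured?)"`.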

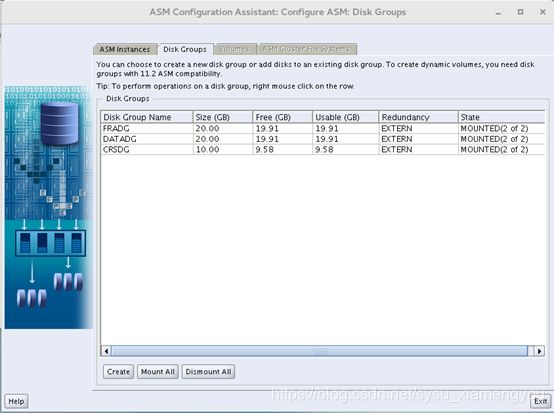

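2.2 Creating the DATA/FRA disk groups with asmca

On both database nodes, set the following environment variables for the grid user (for example in ~/.bash_profile):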

export ORACLE_BASE=/u1/db/grid

export ORACLE_HOME=/u1/db/11.2.0/grid

export ORACLE_SID=+ASM1 ## on node 2 use +ASM2

export PATH=$ORACLE_HOME/bin:$PATH:$ORACLE_HOME/OPatch

Check the cluster status. I configured three SCAN IPs, so there are three SCAN VIPs and three SCAN listeners:

[grid@crmtest1 ~]$ crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.CRSDG.dg

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

ora.DATADG.dg

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

ora.FRADG.dg

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

ora.LISTENER.lsnr

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

ora.asm

ONLINE ONLINE crmtest1 Started

ONLINE ONLINE crmtest2 Started

ora.gsd

OFFLINE OFFLINE crmtest1

OFFLINE OFFLINE crmtest2

ora.net1.network

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

ora.ons

ONLINE ONLINE crmtest1

ONLINE ONLINE crmtest2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.LISTENER_SCAN1.lsnr

1 ONLINE ONLINE crmtest2

ora.LISTENER_SCAN2.lsnr

1 ONLINE ONLINE crmtest1

ora.LISTENER_SCAN3.lsnr

1 ONLINE ONLINE crmtest1

ora.crmtest1.vip

1 ONLINE ONLINE crmtest1

ora.crmtest2.vip

1 ONLINE ONLINE crmtest2

ora.cvu

1 ONLINE ONLINE crmtest1

ora.oc4j

1 ONLINE ONLINE crmtest1

ora.scan1.vip

1 ONLINE ONLINE crmtest2

ora.scan2.vip

1 ONLINE ONLINE crmtest1

ora.scan3.vip

1 ONLINE ONLINE crmtest1

Create the DATA and FRA disk groups:

[grid@crmtest1 ~]$ source .bash_profile

[grid@crmtest1 ~]$ asmca

The result is three disk groups: CRSDG, DATADG and FRADG.
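For a scripted version of the lsdg check below, asmcmd also accepts the command as an argument (`asmcmd lsdg`). This sketch assumes the lsdg column layout shown below, with State first and the disk group name (ending in "/") last. Run it as grid with the environment above. mounted_dgs and check_dgs are hypothetical names.

```sh
# From lsdg output on stdin, print the names (without the trailing "/")
# of disk groups whose State column is MOUNTED.
mounted_dgs() {
    awk 'NR > 1 && $1 == "MOUNTED" { name = $NF; sub(/\/$/, "", name); print name }'
}

# Succeed only if every disk group named in the arguments is mounted.
check_dgs() {
    local mounted dg rc=0
    mounted=$(asmcmd lsdg | mounted_dgs)
    for dg in "$@"; do
        if printf '%s\n' "$mounted" | grep -qxF "$dg"; then
            echo "$dg: MOUNTED"
        else
            echo "$dg: not mounted" >&2
            rc=1
        fi
    done
    return "$rc"
}
```

For example: `check_dgs CRSDG DATADG FRADG`.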

Check them from the command line:

[grid@crmtest1 ~]$ asmcmd

ASMCMD> lsdg

State Type Rebal Sector Block AU Total_MB Free_MB Req_mir_free_MB Usable_file_MB Offline_disks Voting_files Name

MOUNTED EXTERN N 512 4096 4194304 10240 9808 0 9808 0 Y CRSDG/

MOUNTED EXTERN N 512 4096 1048576 20480 20385 0 20385 0 N DATADG/

MOUNTED EXTERN N 512 4096 1048576 20480 20385 0 20385 0 N FRADG/

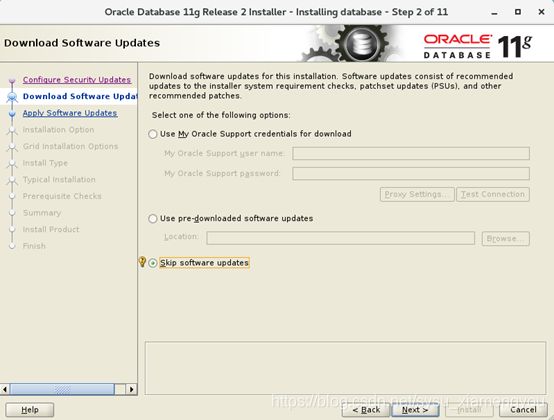

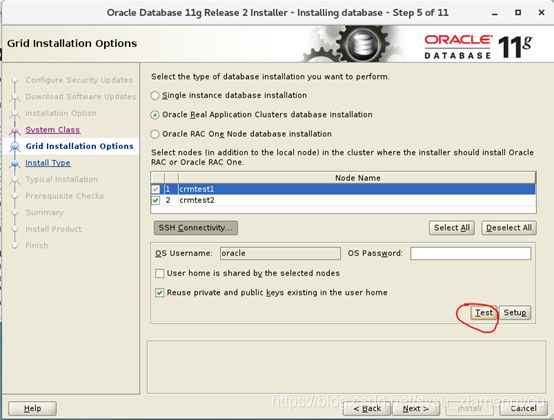

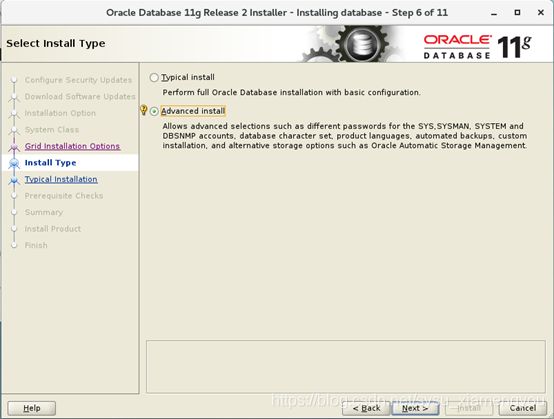

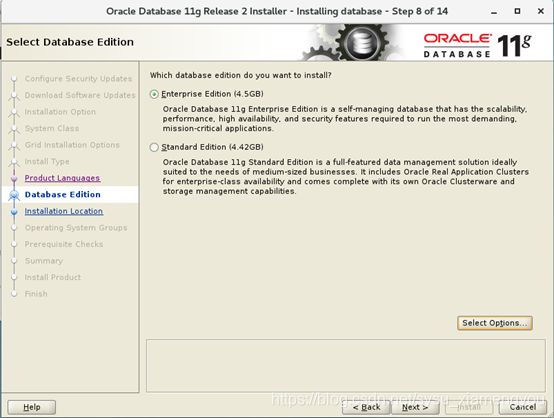

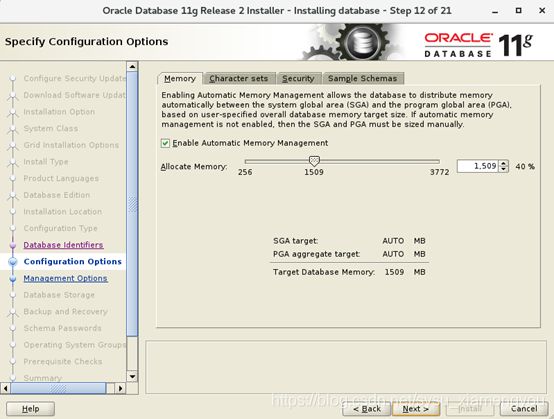

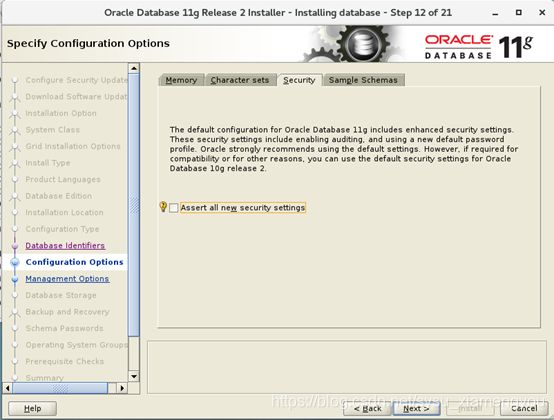

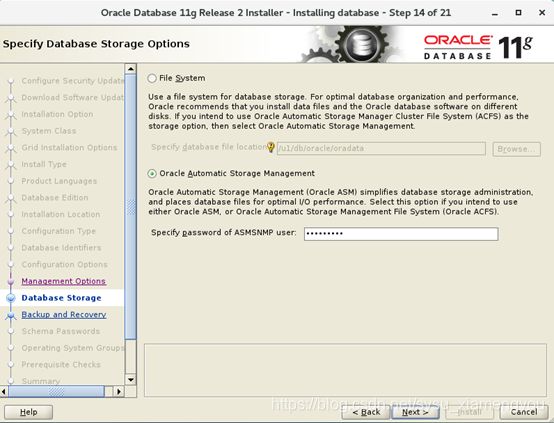

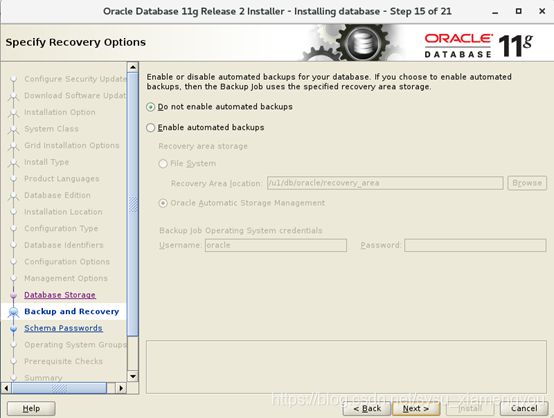

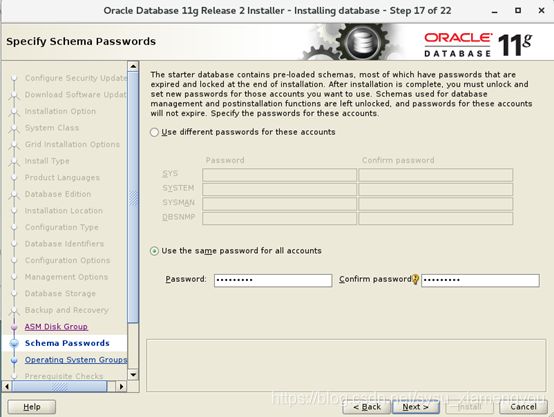

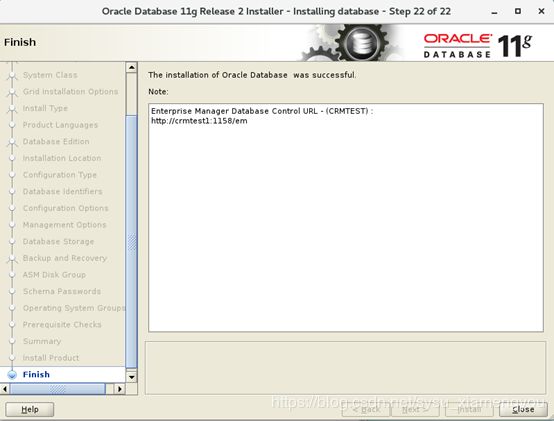

ASMCMD> quit

2.3 Installing the database software

As the oracle user, on crmtest1:

[oracle@crmtest1 ~]$ unzip p10404530_112030_Linux-x86-64_1of7.zip

[oracle@crmtest1 ~]$ unzip p10404530_112030_Linux-x86-64_2of7.zip

[oracle@crmtest1 ~]$ cd database

[oracle@crmtest1 database]$ ./runInstaller -jreLoc /etc/alternatives/jre_1.8.0


Password: Oracle123

Password: Oracle123

Run root.sh on crmtest1:

[root@crmtest1 db_1]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /u1/db/oracle/product/11.2.0/db_1

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Finished product-specific root actions.

Run root.sh on crmtest2:

[root@crmtest2 db_1]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /u1/db/oracle/product/11.2.0/db_1

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Finished product-specific root actions.

On both database nodes, set the following environment variables for the oracle user:

export ORACLE_BASE=/u1/db/oracle

export ORACLE_HOME=/u1/db/oracle/product/11.2.0/db_1

export ORACLE_SID=CRMTEST1 # on node 2 use CRMTEST2

export PATH=$ORACLE_HOME/perl/bin:$PATH:$ORACLE_HOME/bin

The installation is now complete.

Check the database status:

[oracle@crmtest1 ~]$ sqlplus / as sysdba

SQL> select instance_name,status from v$instance;

INSTANCE_NAME STATUS

---------------- ------------

CRMTEST1 OPEN

[oracle@crmtest2 ~]$ sqlplus / as sysdba

SQL> select instance_name,status from v$instance;

INSTANCE_NAME STATUS

---------------- ------------

CRMTEST2 OPEN

Checking the cluster status as the grid user also shows everything is normal.
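v$instance only shows the local instance, so the query above has to be run on each node. gv$instance returns one row per instance in the cluster, so a single query from either node covers both. This is a sketch. Run it as oracle with the environment from 2.3. instance_status and all_open are hypothetical helper names.

```sh
# Print "INSTANCE_NAME STATUS" for every instance in the cluster.
instance_status() {
    sqlplus -s / as sysdba <<'EOF'
set heading off feedback off pagesize 0
select instance_name || ' ' || status from gv$instance order by inst_id;
exit
EOF
}

# Read "NAME STATUS" lines on stdin (blank lines ignored). Fail if no
# instance was listed or if any instance is not OPEN.
all_open() {
    awk 'NF == 0 { next } { n++; if ($2 != "OPEN") bad++ } END { exit (n == 0 || bad > 0) }'
}
```

For example: `instance_status | all_open && echo "all instances OPEN"`.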