Locality-Sensitive Deconvolution Networks with Gated Fusion for RGB-D Indoor Semantic Segmentation

abstract

problem: indoor semantic segmentation using RGB-D data

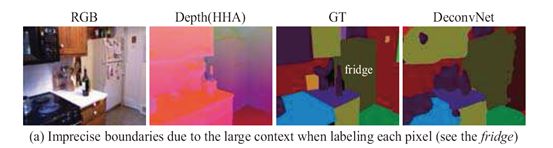

motivation: there is still room for improvements in two aspects:

- boundary segmentation: DeconvNet aggregates large context to predict the label of each pixel, which inherently limits the segmentation precision at object boundaries

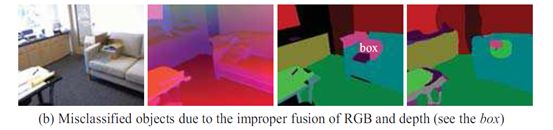

- RGB-D fusion: recent state-of-the-art methods generally fuse the RGB and depth networks with equal-weight score fusion, regardless of the varying contributions of the two modalities to delineating different categories in different scenes

method to address the problems above: Locality-sensitive DeconvNet; gated fusion layer

introduction

Kinect: captures high-quality synchronized visual (RGB data) and geometrical (depth data) cues to depict one scene

DeconvNet: learns to upsample the low-resolution label map of FCN into full resolution with more details

Panels (a) and (b) of the figure above show examples of the two aspects that still need improvement, produced by a two-stream DeconvNet followed by score fusion with an equal-weight sum, as in the FCN model [19]

This paper aims to augment DeconvNet for indoor semantic segmentation with RGB-D data

Related work

Again split into two aspects, matching the motivation

Refine Boundaries for Semantic Segmentation

post-processing method

- apply the superpixels generated by graph cuts to smooth the predictions[5,9]

- adopt fully connected conditional random fields (CRF) to optimize the holistic segmentation map [3,4]

designing particular deep learning models for dense prediction

- CRF is incorporated into FCN by [29, 17] to encourage spatial and appearance consistency in the labelling outputs

- Affinity CNNs [2, 20] embed an additional pixel-wise similarity loss into FCN for dense prediction (similarity CNNs?)

add one data driven pooling layer on top of DeconvNet to smooth the predictions in every superpixel[12]

Combine RGB and Depth Data for Semantic Segmentation

- [23, 22, 10] simply concatenate the handcrafted RGB and depth features to represent each pixel or superpixel

- [7, 15] incorporate both the RGB and depth cues into graphical models like MRFs or CRFs for semantic segmentation

- RNN[16]

three levels of fusion: early, middle, late

- [5] concatenate the RGB and depth image as four-channel input

- [11] use two CNNs to extract features from the RGB and depth images independently, then concatenate them

- Long et al. [19] also learn two independent CNN models but directly predict the score map of each modality, followed by score fusion with an equal-weight sum

Proposed approach

LSD-GF

overall architecture

Overall, the network consists of three components

FCN is to learn robust feature representation for each pixel by aggregating multi-scale contextual cues.

ASPP[4] derived from VGG16

LS-DeconvNet is used to restore high-resolution and precise scene details based on the coarse FCN map

a gated fusion layer is introduced to fuse the RGB and depth cues effectively for accurate scene semantic segmentation

concatenate the prediction maps of RGB and depth to learn a weighted gate array

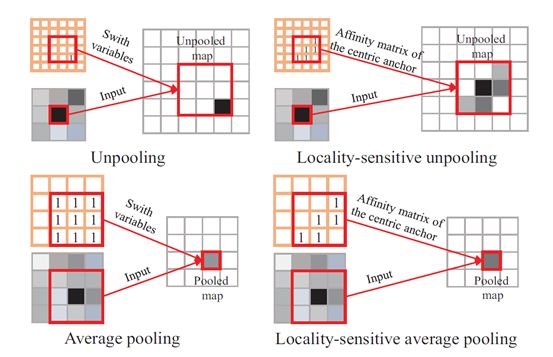

Locality-Sensitive DeconvNet

Locality-Sensitive Unpooling

Conventional unpooling is the inverse of max pooling. Although unpooling is helpful for reconstructing detailed object boundaries, its capability can be severely limited by its excessive dependence on the input response map with large context.

The affinity matrix is derived from the RGB-D pixels

It acts like a 2D linear interpolation that puts more emphasis on similar neighboring pixels

Deconvolution

Unpooling leaves discontinuous boundary responses; deconvolution makes up the missing details

See [21] for the deconvolution operation

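Locality-Sensitive Average Pooling

Further promotes continuity between similar pixels

Conventional average pooling has a drawback: it tends to blur object boundaries and results in an imprecise semantic segmentation map.

According to the affinity matrix, only similar pixels are included in the average pooling operation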

can achieve consistent and robust feature representation for the consecutive object structures.
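To make the "only similar pixels count" idea concrete, here is a minimal NumPy sketch. It is an illustration, not the paper's implementation: the function name `ls_average_pool`, the stride-1 `k x k` window, and the use of an over-segment label map `seg` as the source of binary affinity are all assumptions made for this example.

```python
import numpy as np

def ls_average_pool(feat, seg, k=3):
    """Average each pixel over the k x k neighbours that share its segment label.

    feat: (H, W) feature map; seg: (H, W) integer over-segment labels (assumed input).
    """
    h, w = feat.shape
    r = k // 2
    out = np.zeros_like(feat, dtype=float)
    for y in range(h):
        for x in range(w):
            y0, y1 = max(0, y - r), min(h, y + r + 1)
            x0, x1 = max(0, x - r), min(w, x + r + 1)
            window = feat[y0:y1, x0:x1]
            # Binary affinity to the centre pixel: 1 if same segment, else 0.
            mask = seg[y0:y1, x0:x1] == seg[y, x]
            # The centre pixel always matches itself, so the mask is never empty.
            out[y, x] = window[mask].mean()
    return out

# Two regions with a sharp boundary between columns 1 and 2.
feat = np.array([[1.0, 1.0, 9.0, 9.0],
                 [1.0, 1.0, 9.0, 9.0]])
seg = np.array([[0, 0, 1, 1],
                [0, 0, 1, 1]])

pooled = ls_average_pool(feat, seg)                 # boundary-aware average
plain = ls_average_pool(feat, np.zeros_like(seg))   # one segment everywhere = ordinary average
```

With the segment mask, the boundary stays sharp; with a single segment (ordinary average pooling), values next to the boundary are pulled toward the other region.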

Gated Fusion

3 layers: concatenation layer / convolution layer / sigmoid layer

Block diagram
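The three layers can be sketched in NumPy as follows. This is a hedged illustration rather than the paper's code: the 1x1 kernel size, the function name `gated_fusion`, the parameter shapes, and the use of the complementary gate `1 - gate` for the depth branch are assumptions made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gated_fusion(p_rgb, p_depth, weight, bias):
    """Fuse two (C, H, W) score maps with a learned gate.

    weight: (C, 2C) kernel of an assumed 1x1 convolution; bias: (C,).
    """
    # Concatenation layer: stack RGB and depth predictions along channels -> (2C, H, W).
    stacked = np.concatenate([p_rgb, p_depth], axis=0)
    # Convolution layer: a 1x1 convolution is a per-pixel linear map over channels.
    logits = np.einsum("oc,chw->ohw", weight, stacked) + bias[:, None, None]
    # Sigmoid layer: squash to (0, 1) to obtain the weighted gate array.
    gate = sigmoid(logits)
    # Assumed combination: gate weights RGB, its complement weights depth.
    fused = gate * p_rgb + (1.0 - gate) * p_depth
    return fused, gate

C, H, W = 3, 2, 2
p_rgb = np.arange(C * H * W, dtype=float).reshape(C, H, W)
p_depth = np.ones((C, H, W))

# Zero parameters give a gate of 0.5 everywhere, i.e. equal-weight fusion.
fused_equal, gate_equal = gated_fusion(p_rgb, p_depth, np.zeros((C, 2 * C)), np.zeros(C))
# A large positive bias saturates the gate, so the output follows the RGB branch.
fused_rgb, _ = gated_fusion(p_rgb, p_depth, np.zeros((C, 2 * C)), np.full(C, 50.0))
```

Under these assumptions, equal-weight score fusion is the special case where the gate is constant at 0.5; training the convolution lets the gate vary per pixel and per class.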

Implementation Details

- preprocessing

Computing the affinity matrix A

Following the method of [10], extract low-level RGB-D features (gradients over visual and geometrical cues) for each pixel, then employ gPb-ucm [1] to generate over-segments. These over-segments are used to calculate A by checking whether a pair of pixels belongs to the same over-segment (similarity is 1) or not (similarity is 0). Note that A is scaled to match the resolution of the corresponding feature maps.

- Optimization

two stages

- In the first stage, train two independent locality-sensitive DeconvNets on RGB and depth for semantic segmentation, without the gated fusion layer

- In the second stage, add the gated fusion layer, and then finetune the whole network on the synchronized RGB and depth data
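For the affinity matrix A in the preprocessing step, a minimal sketch of the binary rule (1 if two pixels share an over-segment, 0 otherwise) is given below. The over-segment label map `seg` is taken as given here, since gPb-ucm itself is not reproduced; the function names and the nearest-neighbour subsampling used to match a lower-resolution feature map are assumptions for illustration only.

```python
import numpy as np

def binary_affinity(seg):
    """Return an (N, N) matrix with A[i, j] = 1 when pixels i and j share a segment.

    seg: (H, W) integer over-segment labels; pixels are indexed in row-major order.
    """
    labels = seg.ravel()
    return (labels[:, None] == labels[None, :]).astype(np.uint8)

def downscale_labels(seg, stride):
    """Assumed rescaling: keep every `stride`-th label (nearest-neighbour subsampling)."""
    return seg[::stride, ::stride]

seg = np.array([[0, 0, 1],
                [2, 2, 1]])
A = binary_affinity(seg)              # shape (6, 6)
A_small = binary_affinity(downscale_labels(seg, 2))
```

A dense N x N matrix is only practical for toy inputs like this one; for real images one would presumably evaluate the same rule only inside local windows.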

Experiments

Set up

Datasets: two benchmark RGB-D datasets, the SUN RGB-D dataset [25] and the popular NYU-Depth v2 dataset

Metrics: pixel accuracy, mean accuracy, mean IoU and frequency-weighted IoU
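The four metrics can all be derived from a class confusion matrix. The sketch below uses the usual definitions from the FCN literature; the function names are hypothetical, and it assumes every pixel carries a valid label (no "ignore" class), which may differ from the actual evaluation protocol.

```python
import numpy as np

def confusion_matrix(gt, pred, num_classes):
    """conf[i, j] = number of pixels with ground-truth class i predicted as class j."""
    idx = gt.ravel() * num_classes + pred.ravel()
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)

def segmentation_metrics(conf):
    conf = conf.astype(float)
    tp = np.diag(conf)                   # correctly labelled pixels per class
    gt_count = conf.sum(axis=1)          # ground-truth pixels per class
    pred_count = conf.sum(axis=0)        # predicted pixels per class
    union = gt_count + pred_count - tp
    present = gt_count > 0               # skip classes absent from the ground truth
    iou = tp[present] / union[present]
    pixel_acc = tp.sum() / conf.sum()
    mean_acc = (tp[present] / gt_count[present]).mean()
    mean_iou = iou.mean()
    fw_iou = (gt_count[present] * iou).sum() / conf.sum()
    return pixel_acc, mean_acc, mean_iou, fw_iou

gt = np.array([[0, 0],
               [1, 1]])
pred = np.array([[0, 1],
                 [1, 1]])
conf = confusion_matrix(gt, pred, num_classes=2)
pixel_acc, mean_acc, mean_iou, fw_iou = segmentation_metrics(conf)
```

In this toy case three of four pixels are correct, so pixel accuracy is 0.75; the per-class IoUs are 1/2 and 2/3, giving a mean IoU of 7/12.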

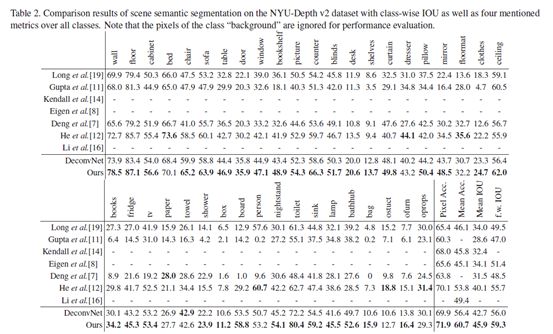

Overall Performance

table1

table2

ablation study

removing or replacing each component independently or both together for semantic segmentation on the NYU-Depth v2 dataset

table3

We owe the improvement to the accurate recognition of some hard objects in the scene by gated fusion, such as a box on a sofa or a chair in weak light.

Analysis of the results:

Visualized comparisons

figure4

Experimental results on the NYU-Depth v2 dataset

Analysis: improvements at object boundaries and in accurate object recognition

Conclusion

1) the locality-sensitive deconvolution networks, which are designed for simultaneously upsampling the coarse fully convolutional maps and refining object boundaries; 2) gated fusion, which can adapt to the varying contributions of RGB and depth for better fusion of the two modalities for object recognition.