flink入门实践批处理本地文件,socket,kafka流数据处理(java版 )

1.flink基本简介,详细介绍https://www.cnblogs.com/davidwang456/p/11256748.html

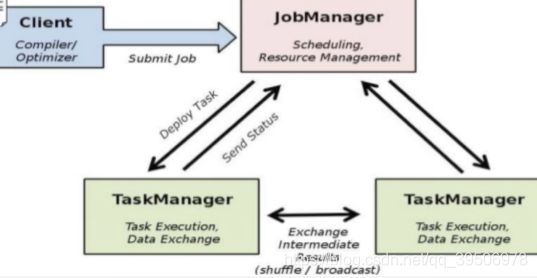

Apache Flink是一个框架和分布式处理引擎,用于对无界(无界流数据通常要求以特定顺序摄取,例如事件发生的顺序)和有界数据流(不需要有序摄取,因为可以始终对有界数据集进行排序)进行有状态计算。Flink设计为在所有常见的集群环境中运行,以内存速度和任何规模执行计算.核心角色就两个:jobmanager /taskManager

2.安装部署

官网:https://flink.apache.org/downloads.html

wget http://mirror.bit.edu.cn/apache/flink/flink-1.9.2/flink-1.9.2-bin-scala_2.11.tgz 然后解压后进入bin目录启动 ./start-cluster.sh

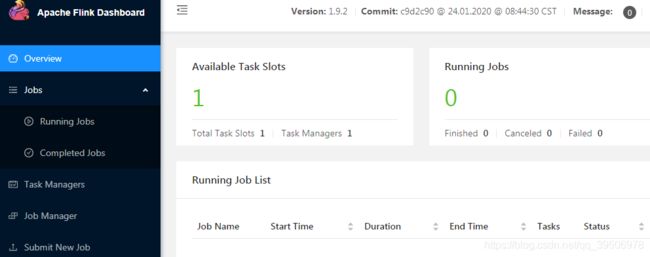

浏览器访问:http://ip:8081 进入flink的dashboard,可以查看当前job运行状态结果,添加新的job等

3.使用官方的demo启动一个job

1.首先安装一个socket工具: yum install nmap-ncat.x86_64 ,nc -l 9001 启动一个交互终端;

2. 换个窗口,启动flink的demo监听9001: /bin/flink run examples/streaming/SocketWindowWordCount.jar --port 9001 ,然后dashboard就可以可以看到这个job

3.这个job会将结果写入安装目录log下,文件名规则flink-用户名-taskexecutor-任务号-服务器ip.out,tail -22f flink-root-taskexecutor-1-192.168.203.131.out

4.然后到步骤1开启终端输入数据回车,步骤3即可看到flink处理后并输出到日志文件的结果4.自定义flink job : socket word count——StreamExecutionEnvironment

idea创建一个普通maven项目,非springboot,pom如下,指定打jar包,版本>= flink dashboard的版本一般没有问题。

4.0.0

test

test

1.0-SNAPSHOT

jar

1.10.0

org.apache.flink

flink-java

${flink.version}

org.apache.flink

flink-streaming-java_2.11

${flink.version}

org.apache.flink

flink-clients_2.11

${flink.version}

建个包test.flink,类SocketWindowWordCount,官方的demo类,main方法指定要连接的socket ip与port

package test.flink;/*

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.functions.ReduceFunction;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.util.Collector;

@SuppressWarnings("serial")

public class SocketWindowWordCount {

public static void main(String[] args) throws Exception {

final String hostname;

final int port;

try {

final ParameterTool params = ParameterTool.fromArgs(args);

hostname = params.has("hostname") ? params.get("hostname") : "192.168.203.131";

port = params.has("port")?params.getInt("port"):9001;

} catch (Exception e) {

System.err.println("No port specified. Please run 'SocketWindowWordCount " +

"--hostname --port ', where hostname (localhost by default) " +

"and port is the address of the text server");

System.err.println("To start a simple text server, run 'netcat -l ' and " +

"type the input text into the command line");

return;

}

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

DataStream text = env.socketTextStream(hostname,port,"\n");

//通过text对象转换得到新的DataStream对象,

//转换逻辑是分隔每个字符串,取得的所有单词都创建一个WordWithCount对象

DataStream windowCounts = text.flatMap(new FlatMapFunction() {

@Override

public void flatMap(String s, Collector collector) throws Exception {

for(String word : s.split("\\s")){ //按空格空字符切分单次

collector.collect(new WordWithCount(word, 1L));

}

}

})

.keyBy("word")//key为word字段

.timeWindow(Time.seconds(5)) //五秒一次的翻滚时间窗口

.reduce(new ReduceFunction() { //reduce策略

@Override

public WordWithCount reduce(WordWithCount a, WordWithCount b) throws Exception {

return new WordWithCount(a.word, a.count+b.count);

}

});

//单线程输出结果

windowCounts.print().setParallelism(1);

// 执行job

env.execute("Flink Streaming Java API Skeleton");

}

//pojo

public static class WordWithCount {

public String word;

public long count;

public WordWithCount() {}

public WordWithCount(String word, long count) {

this.word = word;

this.count = count;

}

@Override

public String toString() {

return word + " : " + count;

}

}

}

启动自定义JOB

1. 本地main方法启动job,nc -l 9001输入数据即可在控制台输出结果,本地job与flink dashbord无关的。

2.Linux服务器flink命令如demo启动job:使用命令./bin/flink ru-c test.flink.SocketWindowWordCount test-1.0-SNAPSHOT.jar -hostname 127.0.0.1 --port 9001 指定main方法位置,main方法的两个参数hostname /port

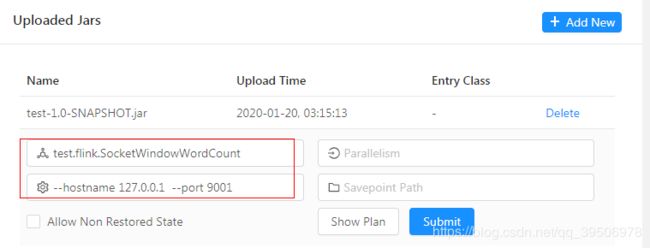

3.使用dashbord 的Submit New Job功能上传本地jar,并指定main方法位置及其它参数进行启动

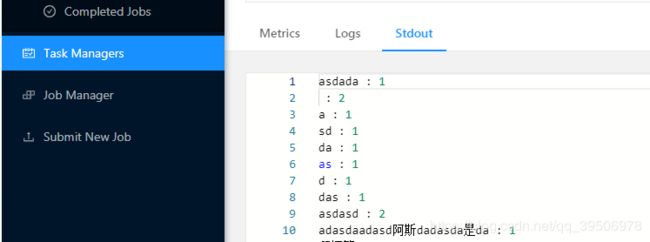

除了日志文件这里也可以看到处理结果

5.自定义flink job:localfile word count(启动同上,在同一个jar中,有限流批处理完自动退出任务)——ExecutionEnvironment

package test.flink;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.java.DataSet;

import org.apache.flink.api.java.ExecutionEnvironment;

import org.apache.flink.api.java.aggregation.Aggregations;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.util.Collector;

public class LocalFileWordCount {

public static void main(String[] args) throws Exception {

final ParameterTool params = ParameterTool.fromArgs(args);

final ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

env.getConfig().setGlobalJobParameters(params);

//获取有限流-批处理数据

DataSet text = env.readTextFile(params.get("input"));

DataSet> counts = text.flatMap(new Splitter()) // split up the lines in pairs (2-tuples) containing: (word,1)

.groupBy(0).aggregate(Aggregations.SUM, 1);// group by the tuple field "0" and sum up tuple field "1"

counts.writeAsText(params.get("output"));

env.execute("本地文件word count");

}

}

@SuppressWarnings("serial")

class Splitter implements FlatMapFunction> {

@Override

public void flatMap(String value, Collector> out) {

String[] tokens = value.split("\\W+");

for (String token : tokens) {

if (token.length() > 0) {

out.collect(new Tuple2(token, 1));

}

}

}

}

6.自定义flink job:kafka word count——StreamExecutionEnvironment

操作套路基本同上,只是数据源变了。kafka配置参数较多,建议外部文件引入而不是脚本参数引入ParameterTool fromArgs = ParameterTool.fromPropertiesFile("/home/kafka/kafka.properties")。处理后的数据可能不只是写本地文件,一般存入redis,es,hdfs,db等下游组件,需要导入连接相关的依赖,参考https://blog.csdn.net/boling_cavalry/article/details/85549434?depth_1-utm_source=distribute.pc_relevant.none-task&utm_source=distribute.pc_relevant.none-task

org.apache.flink

flink-connector-kafka-0.11_2.12

${flink.version}

package test.flink;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.functions.ReduceFunction;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer011;

import org.apache.flink.table.descriptors.Kafka;

import org.apache.flink.util.Collector;

import java.util.Properties;

public class KafkaFlinkStream {

public static void main(String[] args) throws Exception {

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.enableCheckpointing(5000); // 非常关键,一定要设置启动检查点!!

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

//kafka属性配置

Properties props = new Properties();

props.setProperty("bootstrap.servers", "192.168.0.101:9092");

props.setProperty("group.id", "demo");

props.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer"); //key 反序列化

props.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

props.setProperty("auto.offset.reset", "latest"); //value 反序列化

FlinkKafkaConsumer011 consumer = new FlinkKafkaConsumer011("demo",

new SimpleStringSchema(), // String 序列化

props);

DataStream kafkaCount = env.addSource(consumer).flatMap(new FlatMapFunction() {

@Override

public void flatMap(String s, Collector collector) throws Exception {

for (String word : s.split("\\s")) { //按空格空字符切分单词

collector.collect(new WordWithCount(word, 1L));

}

}

}) .filter(wordWithCount ->wordWithCount.word.length()>0) //过滤

.keyBy("word")//key为word字段

.reduce(new ReduceFunction() { //reduce累加

@Override

public WordWithCount reduce(WordWithCount a, WordWithCount b) throws Exception {

return new WordWithCount(a.word, a.count + b.count);

}

});

kafkaCount.print();

env.execute("Flink-Kafka测试");

}

public static class WordWithCount {

public String word;

public long count;

public WordWithCount() {

}

public WordWithCount(String word, long count) {

this.word = word;

this.count = count;

}

@Override

public String toString() {

return word + " : " + count;

}

}

}

测试方式:./kafka-console-producer.sh --broker-list localhost:9092 --topic demo

7.api总结

核心api如map flatmap reduce filter collector等基本类似jdk8,其它元祖Tupl1>>>Tupl25最高25元祖。其它优质API详解参考:https://blog.csdn.net/u014252478/article/details/102516060?ops_request_misc=%257B%2522request%255Fid%2522%253A%2522158358898019725222419850%2522%252C%2522scm%2522%253A%252220140713.130056874..%2522%257D&request_id=158358898019725222419850&biz_id=0&utm_source=distribute.pc_search_result.none-task

https://blog.csdn.net/u014252478/article/details/102516060?ops_request_misc=%257B%2522request%255Fid%2522%253A%2522158358898019725222419850%2522%252C%2522scm%2522%253A%252220140713.130056874..%2522%257D&request_id=158358898019725222419850&biz_id=0&utm_source=distribute.pc_search_result.none-task