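I recently needed to prepare some data, so I started practicing with a glue language (Python). After some trial and error I finally managed to scrape movie information from Douban, and I am sharing the result here.

Note that after extracting the movie information, the script also caches the movie cover and the cast and crew photos locally, then reads each image's properties and stores them in the database.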

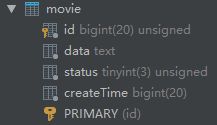

First, here are my two kinds of tables:

1. Movie table:

The `data` column stores the movie information as JSON, for example:

{"mActorRole": [{"name": "奥克塔维亚·斯宾瑟 ", "id": 1154263, "role": "暂无角色信息"},{"name": "约翰·浩克斯 ", "id": 1100849, "role": "暂无角色信息"},{"name": "凯蒂·洛茨 ", "id": 1232918, "role": "暂无角色信息"},{"name": "詹姆斯·拉夫尔提 ", "id": 1022640, "role": "暂无角色信息"},{"name": "小克利夫顿·克林斯 ", "id": 1019033, "role": "暂无角色信息"}], "mCoverId": 2499355591, "mDirector": [{"name": "Eshom Nelms ", "id": 1387484}], "mId": 26802500, "mLength": "92分钟", "mName": "小城犯罪 Small Town Crime", "mShowDate": "2017-03-11(美国)", "mSynopsis": " 奥克塔维亚·斯宾瑟将携约翰·浩克斯出演独立惊悚片《小城犯罪》。电影讲述由浩克斯扮演的酗酒前警察偶然发现一具女尸,并不慎将他的家庭至于危险之中,他不得不一边寻找凶手,一边与恶势力作斗争。该片由内尔姆斯兄弟执导,目前正在拍摄中。 ", "mType": "惊悚"}

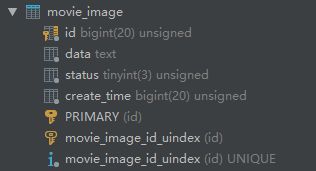
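As a rough illustration of how a record of this shape can be produced, the sketch below builds a small dictionary and serializes it with `json.dumps`. The helper name `movie_to_json` and the trimmed-down record are assumptions made for this example; the field names and values are taken from the sample above.

```python
import json


def movie_to_json(movie):
    """Serialize a movie dict; nested lists of dicts are handled by json.dumps itself."""
    # ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
    return json.dumps(movie, sort_keys=True, ensure_ascii=False)


# Trimmed-down sample record (values copied from the example above)
movie = {
    "mId": 26802500,
    "mName": "小城犯罪 Small Town Crime",
    "mType": "惊悚",
    "mDirector": [{"name": "Eshom Nelms ", "id": 1387484}],
}

text = movie_to_json(movie)
restored = json.loads(text)  # round trip back to Python objects
print(text)
```

Because the director and actor lists are passed as real lists of dictionaries, no string replacement is needed afterwards to turn the output into valid JSON.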

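2. Image tables:

There are two of these, with the same structure but different names. `id` is the id of a cast or crew member from the movie table (or the cover id, `mCoverId`, for cover images), and `data` is a JSON string describing the image, for example: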

{"format": "jpg", "height": 383, "size": 20016, "width": 270}

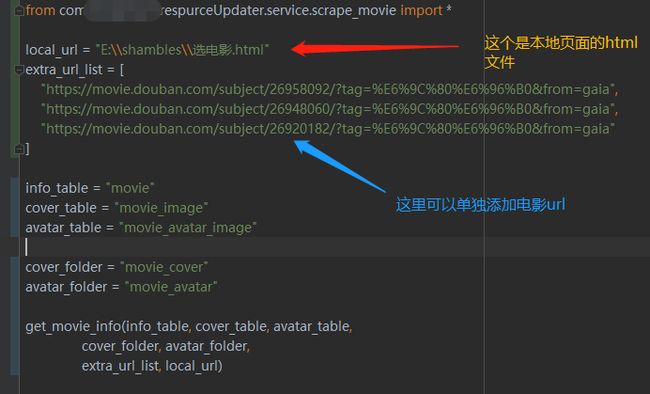
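The image record above can be derived from little more than the file name and the image dimensions. The following is a minimal sketch under the assumption that cover files are named `p<id>.<ext>` and avatar files `<id>.<ext>`; the helper name `build_image_record` is hypothetical, and the height, width and size are passed in directly here rather than read from the file.

```python
import json
import os


def build_image_record(file_name, height, width, size):
    """Return (image_id, json_text) for a file named like 'p2499355591.jpg' or '1154263.jpg'."""
    stem, ext = os.path.splitext(file_name)
    image_id = int(stem.lstrip("p"))  # assumed naming: cover files carry a leading 'p'
    data = {"format": ext.lstrip("."), "height": height, "size": size, "width": width}
    return image_id, json.dumps(data, sort_keys=True)


image_id, text = build_image_record("p2499355591.jpg", 383, 270, 20016)
print(image_id, text)
```

Using `os.path.splitext` avoids removing the extension text from the middle of a file name, which a plain string replacement could do.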

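The script is run by calling `get_movie_info`, which is defined at the end of the full script listing further down.

Note that its `local_url` argument is the path to a local HTML file saved from Douban's latest-movies page. It is optional and can be left out.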
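To make the role of the cached page more concrete, here is a small sketch of pulling movie links out of saved HTML. It deliberately uses the standard library's `html.parser` instead of BeautifulSoup so that it runs without extra packages; the class name `ItemLinkParser` and the HTML fragment are made up for illustration, and the `a.item` selector mirrors the one used in the script.

```python
from html.parser import HTMLParser


class ItemLinkParser(HTMLParser):
    """Collect href values of <a> tags whose class list contains 'item'."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        if "item" in classes and attr_map.get("href"):
            self.links.append(attr_map["href"])


# Hypothetical fragment standing in for the cached list page
cached_html = """
<div>
  <a class="item" href="https://movie.douban.com/subject/26802500/">A</a>
  <a class="other" href="https://example.com/ignored">B</a>
</div>
"""

parser = ItemLinkParser()
parser.feed(cached_html)
print(parser.links)
```

Only the first link is collected, because the second anchor does not carry the `item` class.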

# coding=utf-8
import json
import os
import re
import sys
import time

import cv2
import pymysql
import requests
from bs4 import BeautifulSoup

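def get_all_url(local_url, extra_url_list):
    """
    :param local_url: path to a locally saved copy of the movie list page. The list is
                      rendered dynamically, so the fully rendered page must be saved first. May be None.
    :param extra_url_list: sometimes there are too few new movies to fill a page,
                           so extra URLs are added by hand and passed in as a list
    :return: list of individual movie URLs
    """
    if local_url is not None:
        try:
            # NOTE open read-only and mind the encoding
            with open(local_url, 'r', encoding='UTF-8') as html_file:
                html_page = html_file.read()
        except IOError:
            print("-------------------- failed to read the local HTML file! ------------------------")
            sys.exit(1)  # NOTE returning None here would only crash the caller later
        soup = BeautifulSoup(html_page, 'html.parser')
        new_list = soup.findAll('a', {'class': 'item'})
        print('Movies expected to be updated in this run: %d' % (len(new_list) + len(extra_url_list)))
        for movie in new_list:
            movie_url = movie.get('href')
            extra_url_list.append(movie_url)
    if len(extra_url_list) == 0:
        print("No URLs received, please check the arguments!")
        sys.exit(0)
    prompt = 'About to update %d movies. Enter <1> to confirm or <0> to cancel.....\n' % len(extra_url_list)
    # NOTE three attempts in total; input is compared as text so non-numeric input cannot raise
    for attempts_left in (2, 1, 0):
        command = input(prompt).strip()
        if command == '1':
            return extra_url_list
        if command == '0':
            print("Update cancelled!")
            sys.exit(0)
        prompt = "Invalid input, %d attempt(s) left! Please try again..." % attempts_left
    print("Check your input method! Bye!")
    sys.exit(0)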

def frisk_image(folder, table):
    if not os.path.exists(folder):
        print("-------------------------- %s does not exist, please check! -------------------------------" % folder)
        return
    start_time = time.time()
    m_image = pymysql.Connect(
        host="database host", port=3306,
        user="username", passwd="password",
        db="image database name", charset='utf8')  # NOTE always set the charset
    image_cursor = m_image.cursor()
    count = 0
    for parent, dir_names, file_names in os.walk(folder):
        for file_name in file_names:
            full_name = os.path.join(parent, file_name)  # NOTE full path
            # NOTE cv2.imread does not raise on unreadable files, it returns None
            img = cv2.imread(full_name)
            if img is None:
                print("------------------------------- error reading <%s>! ------------------------------" % full_name)
                continue
            height, width = img.shape[:2]
            stem, ext = os.path.splitext(file_name)
            image_data = {"format": ext.lstrip("."), "height": int(height),
                          "size": int(os.path.getsize(full_name)), "width": int(width)}
            try:
                img_id = int(stem.lstrip("p"))  # NOTE cover files are named p<id>.<ext>
            except ValueError:
                print("---------------------- <%s> has no numeric id in its name, skipped ---------------------" % full_name)
                continue
            json_data = json.dumps(image_data, sort_keys=True, ensure_ascii=False)
            # NOTE skip ids that are already stored; the table name cannot be a query parameter, values can
            image_cursor.execute("select count(*) from " + table + " where id = %s", (img_id,))
            if image_cursor.fetchall()[0][0] != 0:
                print("---------------------- id <%d> already exists in the image table, skipped ---------------------" % img_id)
                continue
            sql = "INSERT INTO " + table + " (id, data, create_time) VALUES (%s, %s, %s)"
            try:
                image_cursor.execute(sql, (img_id, json_data, round(time.time() * 1000)))
                m_image.commit()
            except Exception as err:
                print(err)
                print("------------------- writing <%s> to the database failed, rolled back! ---------------------" % full_name)
                m_image.rollback()  # NOTE roll back on error
                continue
            print("%s stored successfully!" % file_name)
            count += 1
    m_image.close()  # NOTE do not forget to close the connection
    print("Done! Stored %d records in %d seconds" % (count, time.time() - start_time))

def save_avatar(img_url, person_id, avatar_folder, label, page_url):
    """Download one avatar to <avatar_folder>/<person_id>.<ext>; placeholder images are skipped."""
    if img_url.find("default") != -1:  # NOTE placeholder image paths appear to contain "default"
        return
    if not os.path.exists(avatar_folder):
        os.makedirs(avatar_folder)
    try:
        picture = requests.get(img_url, timeout=15)
        # NOTE file name is the numeric id plus the extension (the text after the last dot)
        file_name = "%s/%s.%s" % (avatar_folder, person_id, re.findall(r"[^.]+$", img_url)[0])
        with open(file_name, 'wb') as fp:
            fp.write(picture.content)
        print("%s image: %s saved locally!" % (label, file_name))
    except Exception as err:
        print(err)
        print("%s >>>>>>>>>>>>>>>>> error while handling the %s image!" % (page_url, label))


def get_movie_info(info_table, cover_table, avatar_table,
                   cover_folder, avatar_folder,
                   extra_url_list, local_url=None):
    start_time = time.time()
    url_list = get_all_url(local_url, extra_url_list)
    mask_tmp = pymysql.Connect(
        host="database host", port=3306,
        user="username", passwd="password",
        db="movie database name", charset='utf8')  # NOTE always set the charset
    movie_cursor = mask_tmp.cursor()
    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/47.0.2526.80 Safari/537.36'
    }
    count = 0
    for url in url_list:
        print(">>>>>>>>>>>>>>>>>>>>>> item " + str(count + 1) + " started <<<<<<<<<<<<<<<<<<<<<<<<<")
        data = requests.get(url, headers=headers).content
        row_id = 0
        soup = BeautifulSoup(data, 'html.parser')  # NOTE build a BeautifulSoup object
        movie_data = {}
        try:
            m_id = re.findall(r"ect/(.+?)/", url)[0]
            if m_id.isdigit():  # NOTE make sure mId is numeric
                movie_data['mId'] = int(m_id)  # NOTE reuse the id from the source site
            else:
                continue
        except Exception as err:
            print(err)
            print(str(url) + ">>>>>>>>>>>>>>>>>>>>>>>>>>>>> failed to parse the id!")
            continue
        # NOTE json.dumps writes '"mId": <id>,' with a space after the colon, so match that form
        has_movie = movie_cursor.execute(
            "select id from " + str(info_table) + " where data like %s",
            ('%mId": ' + m_id + ',%',))
        if has_movie != 0:
            row_id = int(movie_cursor.fetchall()[0][0])
            print("Duplicate movie found >>>>>>>>>>>>>>>>>> mId=<%d> row_id=<%d>!" % (int(m_id), row_id))
        try:
            # NOTE here and below: if a required tag is missing, skip this movie (or use a default value)
            name_tag = soup.find('span', {'property': 'v:itemreviewed'})
            if name_tag is None:
                continue
            movie_data['mName'] = name_tag.getText()  # NOTE movie title
            summary_tag = soup.find('span', {'property': 'v:summary'})
            if summary_tag is not None:
                # NOTE synopsis with layout characters removed
                movie_data['mSynopsis'] = re.sub(r"[\n\t\v\r]", "", summary_tag.getText())
            else:
                movie_data['mSynopsis'] = "暂无电影简介"  # stored default, "no synopsis yet"
            director__actor = soup.findAll('li', {'class': 'celebrity'})  # NOTE cast and crew share one list
            if len(director__actor) == 0:
                continue
            directors = []
            for single_director in director__actor:
                if single_director.find("span", {"title": "导演"}) is None:  # "导演" means director
                    continue
                a = single_director.find("a", {"class": "name"})
                if a is None:
                    continue
                director = {"name": a.getText(),
                            "id": int(re.findall(r"/celebrity/(.+?)/", a['href'])[0])}
                inner_all_url = re.findall(r"url\((.+?)\)",
                                           single_director.find("div", {"class": "avatar"})["style"])
                # NOTE some people have several images; the last one is the portrait
                save_avatar(inner_all_url[-1], director["id"], avatar_folder, "director", url)
                directors.append(director)
            movie_data["mDirector"] = directors  # NOTE directors, kept as a real list for json.dumps
            actors = []
            for single_actor in director__actor:
                role_tag = single_actor.find("span", {"class": "role"})
                if role_tag is not None and role_tag.getText().find("饰") == -1:
                    continue  # NOTE a role label without "饰" ("plays") does not belong to an actor
                a = single_actor.find("a", {"class": "name"})
                if a is None:
                    continue
                act = {"name": a.getText(),
                       "id": int(re.findall(r"/celebrity/(.+?)/", a['href'])[0]),
                       # "暂无角色信息" is the stored default, "no role information yet"
                       "role": role_tag.getText().replace("饰 ", "") if role_tag is not None else "暂无角色信息"}
                inner_all_url = re.findall(r"url\((.+?)\)",
                                           single_actor.find("div", {"class": "avatar"})["style"])
                save_avatar(inner_all_url[-1], act["id"], avatar_folder, "actor", url)
                actors.append(act)
            movie_data["mActorRole"] = actors  # NOTE actors
            m_type = soup.findAll('span', {'property': 'v:genre'})
            if len(m_type) == 0:
                continue
            # NOTE genres are joined like: 爱情 / 动作 / 伦理
            movie_data["mType"] = ' / '.join(single_type.getText() for single_type in m_type)
            show_date_list = soup.findAll('span', {'property': 'v:initialReleaseDate'})
            if len(show_date_list) == 0:
                continue
            movie_data["mShowDate"] = ' / '.join(single_date.getText() for single_date in show_date_list)
            runtime_tag = soup.find('span', {'property': 'v:runtime'})
            # NOTE running time; "暂无时长" is the stored default, "no running time yet"
            movie_data["mLength"] = runtime_tag.getText() if runtime_tag is not None else "暂无时长"
            img_url = soup.find("img", {"title": "点击看更多海报"})["src"]  # title text as it appears on the page
            movie_data["mCoverId"] = 0  # NOTE movies without a stored cover point at image 0
            if img_url.find("default") == -1:  # NOTE placeholder image paths appear to contain "default"
                img_name = re.findall(r"lic/.*", img_url)[0].replace("lic/", "")
                if not os.path.exists(cover_folder):
                    os.makedirs(cover_folder)
                try:
                    cover = requests.get(img_url, timeout=15)
                    file_name = "%s/%s" % (cover_folder, img_name)
                    with open(file_name, 'wb') as fp:
                        fp.write(cover.content)
                    print("Cover image: %s saved locally!" % file_name)
                    # NOTE the id is read from the URL, because img_name no longer contains "ic/"
                    movie_data["mCoverId"] = int(re.findall(r"ic/p(.+?)\.", img_url)[0])
                except Exception as err:
                    print(err)
                    print("Failed to store the cover for the movie at url ----> %s" % url)
            # NOTE json.dumps serializes the nested lists itself, no string patching needed
            json_data = json.dumps(movie_data, sort_keys=True, ensure_ascii=False)
        except Exception as err:
            print(err)
            print("-------------------------------- failed to parse page %s ----------------------------------" % url)
            continue
        if row_id != 0:
            sql = "update " + str(info_table) + " set data = %s where id = %s"
            params = (json_data, row_id)
        else:
            movie_cursor.execute("select id from %s order by id desc limit 1 " % info_table)
            rows = movie_cursor.fetchall()
            last_id = rows[0][0] if rows else 0  # NOTE id of the last row, 0 for an empty table
            sql = "INSERT INTO " + str(info_table) + " (id, data, createTime) VALUES (%s, %s, %s)"
            params = (last_id + 1, json_data, round(time.time() * 1000))
        try:
            movie_cursor.execute(sql, params)
            mask_tmp.commit()
        except Exception as err:
            print(err)
            print("--------------------------- writing %s to the database failed, rolled back! -------------------------------" % url)
            mask_tmp.rollback()  # NOTE roll back on error
            continue
        print("%s stored successfully!" % url)
        if row_id == 0:
            count += 1  # NOTE only newly inserted rows are counted
    mask_tmp.close()  # NOTE do not forget to close the connection
    print("Done! %d new records stored in %d seconds" % (count, time.time() - start_time))
    print("------------------------ divider -----------------------------")
    for seconds_left in range(5, 0, -1):
        print("About to scan the downloaded images............................. countdown %d" % seconds_left)
    print("Scanning the downloaded images now!")
    frisk_image(cover_folder, cover_table)  # process the cover images
    frisk_image(avatar_folder, avatar_table)  # process the avatar images
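The listing stores each row by first checking whether the id already exists and then inserting. The sketch below shows that pattern with query placeholders, so that text containing quotes cannot break the SQL statement. To keep it runnable anywhere it uses the standard library's `sqlite3` with an in-memory database instead of pymysql and MySQL; sqlite3 writes placeholders as `?` where pymysql uses `%s`. The table name `image`, the helper `insert_if_missing` and the `note` field are assumptions made for this example.

```python
import json
import sqlite3
import time


def insert_if_missing(conn, image_id, data):
    """Insert one image row unless the id already exists; return True when a row was added."""
    cur = conn.cursor()
    cur.execute("SELECT COUNT(*) FROM image WHERE id = ?", (image_id,))
    if cur.fetchone()[0] != 0:
        return False
    cur.execute(
        "INSERT INTO image (id, data, create_time) VALUES (?, ?, ?)",
        (image_id, json.dumps(data, ensure_ascii=False), round(time.time() * 1000)),
    )
    conn.commit()
    return True


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE image (id INTEGER PRIMARY KEY, data TEXT, create_time INTEGER)")

# A value containing a single quote would break a hand-built SQL string
record = {"format": "jpg", "note": "it's fine"}
first = insert_if_missing(conn, 1154263, record)
second = insert_if_missing(conn, 1154263, record)
row = conn.execute("SELECT data FROM image WHERE id = ?", (1154263,)).fetchone()
stored = json.loads(row[0])
print(first, second, stored)
```

The second call finds the existing id and leaves the table unchanged, which is the same skip-if-present behaviour the image scan relies on.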

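In my own testing the script worked reliably and handled nearly every Douban movie page I tried. Books and music can be scraped in much the same way, so I won't go into them here. Thanks for reading!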