PyTorch LSTM: Input and Output Parameters

1: Input and output parameters of the LSTM in PyTorch

nn.LSTM inherits from nn.RNNBase, whose initializer is defined as follows:

    class RNNBase(Module):
        ...
        def __init__(self, mode, input_size, hidden_size,
                     num_layers=1, bias=True, batch_first=False,
                     dropout=0., bidirectional=False):

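The PyTorch parameters and their meanings are as follows:

- input_size – the number of features in each input element, i.e. the length of each word vector in the earlier example

- hidden_size – the size of the hidden state (the number of hidden units); the output feature dimension per direction equals hidden_size

- num_layers – the number of recurrent layers. Default: 1

- bias – If False, then the layer does not use bias weights b_ih and b_hh. Default: True

- batch_first – Default: False, in which case the input and output tensors are laid out as (seq_length, batch, feature), unlike the batch-first layout common for CNN inputs. To put the batch in the first dimension as with a CNN, set this to True, giving (batch, seq_length, feature); in practice batch_first is commonly set to True. This flag does not change the layout of the hidden and cell state tensors.

- dropout – if non-zero, a Dropout layer is applied to the outputs of every layer except the last. Default: 0

- bidirectional – if True, the LSTM is bidirectional. Default: False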
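To make the effect of batch_first concrete, here is a minimal sketch that runs the same kind of LSTM with both layouts. The sizes (3 time steps, batch of 10, 28 input features, 4 hidden units) and the variable names are chosen here only for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)

seq_len, batch, input_size, hidden_size = 3, 10, 28, 4

# Default layout (batch_first=False): (seq_length, batch, feature)
lstm_seq_first = nn.LSTM(input_size, hidden_size)
out_sf, (h_sf, c_sf) = lstm_seq_first(torch.randn(seq_len, batch, input_size))

# batch_first=True layout: (batch, seq_length, feature)
lstm_batch_first = nn.LSTM(input_size, hidden_size, batch_first=True)
out_bf, (h_bf, c_bf) = lstm_batch_first(torch.randn(batch, seq_len, input_size))

print(out_sf.shape)  # torch.Size([3, 10, 4])
print(out_bf.shape)  # torch.Size([10, 3, 4])

# The state tensors stay (num_layers * num_directions, batch, hidden_size)
# in both cases; batch_first only affects the input and output tensors.
print(h_sf.shape, h_bf.shape)
```

Only the first two axes of the input and output swap; the hidden and cell states have the same shape either way.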

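2: Input data (batch_first=True, single layer, unidirectional)

Assume the input data is as follows:

- input dimension (input_size) = 28

The settings used with nn.LSTM in this example are as follows:

- time_steps = 3

- batch_first = True

- batch_size = 10

- hidden_size = 4

- num_layers = 1

- bidirectional = False

Note: we start with the simple case of num_layers=1 and bidirectional=False; how num_layers and bidirectional determine the structure of the LSTM network is covered later.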

In this section's example code, lstm_input is the input data. The initial hidden state h_init and the initial cell state c_init are explained as follows:

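h_init: has shape (num_layers * num_directions, batch, hidden_size):

- num_layers * num_directions: the number of LSTM layers times the number of directions. The number of directions is determined by the bidirectional parameter described above: it is 1 if bidirectional is False and 2 otherwise (see the figure below for what num_layers * num_directions means).

- batch: the batch size

- hidden_size: the number of hidden units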

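c_init: also has shape (num_layers * num_directions, batch, hidden_size), and each dimension means the same as for h_init. h_init is the initial hidden state and c_init is the initial cell (memory) state; every layer and direction keeps one vector of size hidden_size of each kind per sample, which is why the two shapes match.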

Note: if h_init and c_init are not passed in, then according to the source code both default to zeros.
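The note about default states can be checked with a small sketch that is separate from the article's main example: it calls one LSTM without an initial state, then again with explicit all-zero states, and compares the results. The names lstm, x and zeros are illustrative only.

```python
import torch
from torch import nn

torch.manual_seed(0)

lstm = nn.LSTM(input_size=28, hidden_size=4, num_layers=1, batch_first=True)
x = torch.randn(10, 3, 28)  # (batch, time_steps, input_size)

# Call without an initial state.
out_default, (h_default, c_default) = lstm(x)

# Call with explicit zero states of shape
# (num_layers * num_directions, batch, hidden_size).
zeros = torch.zeros(1, x.size(0), 4)
out_zero, (h_zero, c_zero) = lstm(x, (zeros, zeros.clone()))

same = (
    torch.allclose(out_default, out_zero)
    and torch.allclose(h_default, h_zero)
    and torch.allclose(c_default, c_zero)
)
print(same)
```

If the two calls agree, omitting the state tuple is equivalent to passing zeros for both the hidden and the cell state.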

    import torch
    from torch import nn

    input_size = 28
    hidden_size = 4

    # Build the LSTM: single layer, unidirectional, batch-first input
    lstm_seq = nn.LSTM(input_size, hidden_size, num_layers=1, batch_first=True)

    # Input of shape (batch_size=10, time_steps=3, input_size=28)
    lstm_input = torch.randn(10, 3, 28)

    # Initial hidden and cell states, one vector per sample in the batch:
    # shape (num_layers * num_directions, batch, hidden_size)
    h_init = torch.randn(1, lstm_input.size(0), hidden_size)
    c_init = torch.randn(1, lstm_input.size(0), hidden_size)

    # Pass the input together with the initial hidden and cell states
    out, (h, c) = lstm_seq(lstm_input, (h_init, c_init))

    print(lstm_seq.weight_ih_l0.shape)  # learnable input-to-hidden weights
    print(lstm_seq.weight_hh_l0.shape)  # learnable hidden-to-hidden weights
    print(out.shape, h.shape, c.shape)

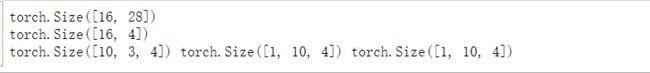

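The output is as follows:

    torch.Size([16, 28])
    torch.Size([16, 4])
    torch.Size([10, 3, 4]) torch.Size([1, 10, 4]) torch.Size([1, 10, 4])

Each result is explained below: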

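(1) lstm_seq.weight_ih_l0.shape is torch.Size([16, 28]). These are the learnable input-to-hidden weights, of shape (4*hidden_size, input_size). The factor of 4 appears because the weights of the four LSTM gates are stacked along the first dimension.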

(2) lstm_seq.weight_hh_l0.shape is torch.Size([16, 4]). These are the learnable hidden-to-hidden weights, of shape (4*hidden_size, hidden_size).

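(3) out.shape is torch.Size([10, 3, 4]). This is the output tensor rather than a set of learnable parameters; its shape is (batch, time_steps, num_directions * hidden_size). As with the input, its layout follows batch_first: with batch_first=False in the code, out.shape would be torch.Size([3, 10, 4]).

This output tensor contains the output features of the last LSTM layer at every time step. For example, if the LSTM has two layers, out holds the output of the second layer at each time step. If the input was packed as a torch.nn.utils.rnn.PackedSequence, the output is a packed sequence as well. For the unpacked case, the directions can be separated with output.view(seq_len, batch, num_directions, hidden_size), where forward and backward are direction 0 and 1 respectively (this axis order is for batch_first=False; with batch_first=True the first two axes are swapped). Similarly, the directions can be separated in the packed case.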

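h.shape is torch.Size([1, 10, 4]). This is the final hidden state h_n, of shape (num_layers * num_directions, batch, hidden_size); it contains only the hidden state of the last time step (as shown in the figure below).

c.shape is torch.Size([1, 10, 4]). This is the final cell state c_n, of shape (num_layers * num_directions, batch, hidden_size); likewise, it contains only the cell state of the last time step (as shown in the figure below).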
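The relationship between out and h described above can be verified directly. The sketch below is an illustration under the same sizes as the example (batch 10, 3 time steps, 28 features, 4 hidden units), not part of the original code. It checks that, for a single unidirectional layer, h equals the last time step of out, and it separates the two directions of a single-layer bidirectional LSTM by reshaping the feature axis. Because batch_first=True is used here, the reshape puts batch before seq_len.

```python
import torch
from torch import nn

torch.manual_seed(0)
batch, seq_len, input_size, hidden_size = 10, 3, 28, 4
x = torch.randn(batch, seq_len, input_size)

# Single layer, unidirectional: h is the output at the last time step.
uni = nn.LSTM(input_size, hidden_size, batch_first=True)
out, (h, c) = uni(x)
uni_match = torch.allclose(out[:, -1, :], h[-1])

# Single layer, bidirectional: the last axis of out is the forward and
# backward outputs concatenated, so it can be split into
# (num_directions, hidden_size).
bi = nn.LSTM(input_size, hidden_size, batch_first=True, bidirectional=True)
out_bi, (h_bi, c_bi) = bi(x)
dirs = out_bi.reshape(batch, seq_len, 2, hidden_size)
forward_out = dirs[:, :, 0, :]   # direction 0: forward
backward_out = dirs[:, :, 1, :]  # direction 1: backward

# The forward pass finishes at the last time step,
# the backward pass finishes at the first time step.
fwd_match = torch.allclose(forward_out[:, -1, :], h_bi[0])
bwd_match = torch.allclose(backward_out[:, 0, :], h_bi[1])

print(uni_match, fwd_match, bwd_match)
```

Note that in the bidirectional case out[:, -1, :] is not the same as the final hidden states: the backward half of that slice has only seen the last time step, which is why the backward state is compared against time step 0.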

3: Input data (batch_first=True, two layers, bidirectional)

    # batch_first = True: input layout is (batch, seq, feature)
    # bidirectional = True
    # num_layers = 2
    import torch
    from torch import nn

    num_layers = 2
    bidirectional_set = True
    num_directions = 2 if bidirectional_set else 1

    input_size = 28
    hidden_size = 4

    # Build the LSTM: two layers, bidirectional, batch-first input
    lstm_seq = nn.LSTM(input_size, hidden_size, num_layers=num_layers,
                       bidirectional=bidirectional_set, batch_first=True)

    lstm_input = torch.randn(10, 3, 28)  # input: (batch, seq, feature)

    # Initial hidden and cell states:
    # shape (num_layers * num_directions, batch, hidden_size)
    h_init = torch.randn(num_layers * num_directions, lstm_input.size(0), hidden_size)
    c_init = torch.randn(num_layers * num_directions, lstm_input.size(0), hidden_size)

    out, (h, c) = lstm_seq(lstm_input, (h_init, c_init))  # forward pass

    print(lstm_seq.weight_ih_l0.shape)
    print(lstm_seq.weight_hh_l0.shape)
    print(out.shape, h.shape, c.shape)

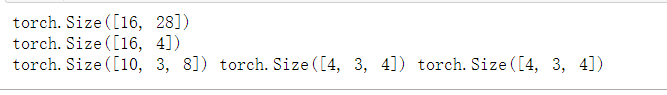

The output is as follows: