Deploying elasticsearch-7.2.0 + elasticsearch-head-master + kibana-7.2.0-linux-x86_64 + logstash-7.2.0 on Linux

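Business needs required us to upgrade our production elasticsearch-2.3 to the latest version. According to the official announcements, 7.2.0 is a major leap in speed and efficiency over earlier versions.

This post installs elasticsearch-7.2.0 together with its companion components.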

I. Download the following files from their official sites:

elasticsearch-7.2.0

elasticsearch-head-master

kibana-7.2.0-linux-x86_64

logstash-7.2.0

elasticsearch-analysis-ik-7.2.0

node-v8.16.0-linux-x64.tar

I won't paste the official URLs here; instead, here is a Baidu Cloud link to the copies I downloaded.

Link: https://pan.baidu.com/s/1qUSDGHaIRHfjjyfyZ7A9Pg

Extraction code: e54v

II. Pre-installation preparation

1. Elasticsearch refuses to start as root, so first create a dedicated user:

useradd elk

2. Raise the system resource limits

vim /etc/security/limits.conf

Add the following parameters:

* soft nofile 65536

* hard nofile 65536

* soft nproc 4096

* hard nproc 4096

vim /etc/sysctl.conf

Add:

vm.max_map_count=655360

Apply the setting with:

sysctl -p

vim /etc/security/limits.d/90-nproc.conf

Change the default line

* soft nproc 1024

to

* soft nproc 4096
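As a quick sanity check of the limits above, here is a minimal sketch (my own helper, not part of Elasticsearch) that compares the current values against the minimums that, as far as I know, the Elasticsearch 7.x bootstrap checks enforce: 65535 open files, 4096 threads, and a vm.max_map_count of 262144. The function name check_min is hypothetical. Run it as the elk user in a fresh login session, because limits.conf only applies to new sessions.

```shell
#!/bin/sh
# check_min NAME ACTUAL REQUIRED
# Prints whether ACTUAL meets REQUIRED; returns 1 when it does not.
check_min() {
    name=$1; actual=$2; required=$3
    if [ "$actual" = "unlimited" ] || [ "$actual" -ge "$required" ] 2>/dev/null; then
        echo "$name OK ($actual >= $required)"
    else
        echo "$name TOO LOW ($actual < $required)"
        return 1
    fi
}

# Thresholds are assumptions taken from the 7.x bootstrap check messages.
check_min nofile "$(ulimit -n 2>/dev/null || echo 0)" 65535 || true
check_min nproc "$(ulimit -u 2>/dev/null || echo 0)" 4096 || true
check_min vm.max_map_count "$(cat /proc/sys/vm/max_map_count 2>/dev/null || echo 0)" 262144 || true
```

Any line reporting TOO LOW points at the setting to revisit before starting Elasticsearch.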

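III. Installation and deployment

1. Install elasticsearch

The downloads are prebuilt binary packages, so nothing has to be compiled and they can be used as-is.

Extract: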

tar -xvf elasticsearch-7.2.0-linux-x86_64.tar.gz

Move the directory to a suitable location:

mv elasticsearch-7.2.0 /home/elk/

Change the owner to elk:

chown -R elk:elk /home/elk/elasticsearch-7.2.0

Edit the elasticsearch configuration file:

vim /home/elk/elasticsearch-7.2.0/config/elasticsearch.yml

cluster.name: xavito

transport.tcp.compress: true

cluster.initial_master_nodes: ["vito248","vito203"]

discovery.seed_hosts: ["192.168.1.248", "192.168.1.203"]

node.name: vito203

http.port: 9200

bootstrap.memory_lock: false

bootstrap.system_call_filter: false

network.host: 0.0.0.0

http.cors.enabled: true

http.cors.allow-origin: "*"

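This is a two-node cluster. Note that the cluster settings are written differently from earlier versions: 7.x uses cluster.initial_master_nodes and discovery.seed_hosts in place of the old discovery.zen.* settings. On a single node these two settings can be dropped; with network.host bound to a non-loopback address, set discovery.type: single-node instead.

A mistake here will keep the service from starting, or keep elasticsearch-head-master from connecting in the browser later on.

You can also set the data and log paths; by default they live under the Elasticsearch home directory.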

#path.data: /path/to/data

#path.logs: /path/to/logs

Start it as the elk user:

su elk

/home/elk/elasticsearch-7.2.0/bin/elasticsearch -d (-d runs it in the background)

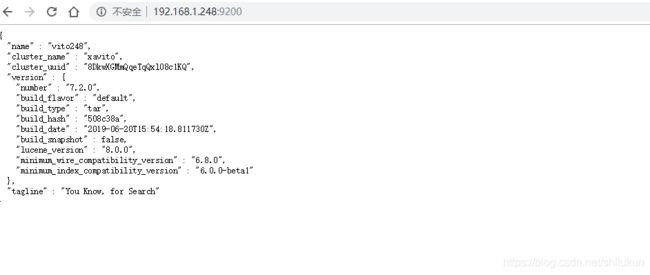

启动后可以用curl或者浏览其访问9200端口:

表示启动已经成功了
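Beyond looking at port 9200 by eye, the cluster health endpoint gives a one-word answer. The sketch below is my own illustration, not something Elasticsearch ships: the hypothetical helper cluster_status pulls the status field out of the JSON returned by _cluster/health using sed, so it needs no extra tools. The host in the usage comment is one of the two nodes from the config above.

```shell
#!/bin/sh
# cluster_status: reads a _cluster/health JSON document on stdin
# and prints the value of its "status" field (green, yellow or red).
cluster_status() {
    sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# Usage (run once Elasticsearch is up):
#   curl -s 'http://192.168.1.203:9200/_cluster/health' | cluster_status
```

With both nodes joined, I would expect green; yellow usually means replicas are not yet allocated, for example while the second node is still down.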

2. Install elasticsearch-head-master.zip

Extract:

unzip elasticsearch-head-master.zip

mv elasticsearch-head-master /home/elk/

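chown -R elk:elk /home/elk/elasticsearch-head-master

Install:

elasticsearch-head needs Node.js. If it is not installed yet, install it first; node only has to be reachable through the PATH.

Install node as follows:

Upload the package to a directory of your choice (I usually use /usr/local/application/) and extract it: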

tar -xvf node-v10.6.0-linux-x64.tar.xz

Rename the directory:

mv node-v10.6.0-linux-x64 nodejs

Make the binaries available globally through symlinks:

ln -s /usr/local/application/nodejs/bin/npm /usr/bin/

ln -s /usr/local/application/nodejs/bin/node /usr/bin/

Check that the installation succeeded with node -v:

node -v

v10.6.0

Skip the steps above if node is already installed.

cd /home/elk/elasticsearch-head-master

Run npm install

Run npm run start (or npm run start & to keep it in the background)

netstat -nltp

If port 9100 is listening, the installation succeeded.

You can now open it in a browser.
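If you would rather script the netstat check than read the output by eye, here is a minimal sketch. The helper name port_listening is hypothetical; it reads netstat -nlt style output on stdin and succeeds only when a TCP socket in LISTEN state is bound to the given port.

```shell
#!/bin/sh
# port_listening PORT
# Reads `netstat -nlt` (or -nltp) output on stdin; exit status 0 means
# some TCP socket is listening on PORT, 1 means none was found.
port_listening() {
    awk -v port="$1" '
        $1 ~ /^tcp/ && $6 == "LISTEN" && $4 ~ (":" port "$") { found = 1 }
        END { exit !found }
    '
}

# Usage:
#   netstat -nlt | port_listening 9100 && echo "head is up"
```

The same helper works for 9200 once Elasticsearch is running.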

3. Install kibana-7.2.0-linux-x86_64.tar.gz. Version 7.2 ships with official Chinese support, which is enabled in the configuration file.

Extract:

tar -xvf kibana-7.2.0-linux-x86_64.tar.gz

mv kibana-7.2.0-linux-x86_64 /home/elk/

chown -R elk:elk /home/elk/kibana-7.2.0-linux-x86_64

vim /home/elk/kibana-7.2.0-linux-x86_64/config/kibana.yml

Add the following parameters to the configuration file:

server.host: "192.168.1.248"

i18n.locale: "zh-CN"

This switches the interface to Chinese.

4. Install the elasticsearch-analysis-ik-7.2.0.zip Chinese analyzer

unzip elasticsearch-analysis-ik-7.2.0.zip -d elasticsearch-analysis-ik-7.2.0

chown -R elk:elk /home/elk/elasticsearch-analysis-ik-7.2.0

Move it into elasticsearch's plugins directory:

mv elasticsearch-analysis-ik-7.2.0 /home/elk/elasticsearch-7.2.0/plugins/ik

Restart elasticsearch to load the plugin.
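To confirm that the plugin was loaded after the restart, you can send a test string to the _analyze API. The sketch below is only an illustration: analyze_body and ik_analyze are hypothetical helper names, the analyzer names ik_smart and ik_max_word are the ones the IK plugin registers as far as I know, and the JSON is built with printf, so the text must not contain double quotes or backslashes.

```shell
#!/bin/sh
# analyze_body ANALYZER TEXT
# Builds the JSON request body for the _analyze API.
analyze_body() {
    printf '{"analyzer":"%s","text":"%s"}' "$1" "$2"
}

# ik_analyze BASE_URL ANALYZER TEXT
# Posts the body to Elasticsearch and prints the raw JSON token list.
ik_analyze() {
    curl -s -X POST "$1/_analyze" \
        -H 'Content-Type: application/json' \
        -d "$(analyze_body "$2" "$3")"
}

# Usage:
#   ik_analyze http://192.168.1.203:9200 ik_max_word "中华人民共和国"
```

If the plugin is missing, Elasticsearch should answer with an error about an unknown analyzer instead of a token list.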

5. Install logstash-7.2.0.tar.gz

Extract:

tar -xvf logstash-7.2.0.tar.gz

mv logstash-7.2.0 /home/elk/

chown -R elk:elk /home/elk/logstash-7.2.0

Edit the configuration file to suit your needs.

For example:

vim format.cnf

input {
    file {
        path => "/mnt/new/tomcat/LOGS/WebLogs/user/*.log"
        type => "userlog"
        start_position => "beginning"
        sincedb_path => "/root/logstash-7.2.0/sincedb"
    }
    file {
        path => "/mnt/new/tomcat/LOGS/*/output/*.log"
        type => "syslog"
        # Lines that do not start with a digit are appended to the previous event
        codec => multiline {
            pattern => "^\d"
            negate => true
            what => "previous"
        }
        start_position => "beginning"
        sincedb_path => "/root/logstash-7.2.0/sincedb"
    }
    file {
        path => "/mnt/new/tomcat/LOGS/*/pay/*.log"
        type => "pay"
        codec => multiline {
            pattern => "^\d"
            negate => true
            what => "previous"
        }
        start_position => "beginning"
        sincedb_path => "/root/logstash-7.2.0/sincedb"
    }
}

filter {
    if [type] == "userlog" {
        grok {
            patterns_dir => "/root/logstash-7.2.0/reg"
            match => {
                "message" => "%{logdatetime:times} %{MSG_:msg}"
            }
        }
        json {
            source => "msg"
            target => "msg"
        }
        if [msg][uri] == "/base/msg/tips/queryMsgTipsByUser.htm" {
            drop {}
        }
        # Only look up GeoIP data for addresses outside the loopback and private ranges
        if [msg][ip] !~ "^127\.|^192\.168\.|^172\.1[6-9]\.|^172\.2[0-9]\.|^172\.3[01]\.|^10\." {
            geoip {
                source => "[msg][ip]"
                database => "/root/logstash-7.2.0/vendor/bundle/jruby/2.5.0/gems/logstash-filter-geoip-6.0.1-java/vendor/GeoLite2-City.mmdb"
                target => "geoip"
                fields => ["region_name","city_name","location"]
            }
        }
        useragent {
            source => "[msg][ua]"
            target => "ua"
        }
        date {
            locale => "cn"
            match => ["times", "yyyy-MM-dd HH:mm:ss.SSS","UNIX"]
            target => "@timestamp"
        }
        mutate {
            remove_field => "@version"
            remove_field => "message"
            remove_field => "[msg][ua]"
            remove_field => "times"
        }
    }
    if [type] == "syslog" {
        grok {
            patterns_dir => "/root/logstash-7.2.0/reg"
            match => ["message","%{logdatetime:times} .*%{LOGLEVEL:loglevel} {0,4}%{CLASSNAME:classname} {0,4}- {0,4}%{INFOMSG:infomsg}"]
        }
        # Drop noisy framework loggers and all DEBUG output
        if [classname] =~ "^com.alibaba.dubbo.config.AbstractConfig" {
            drop {}
        }
        if [classname] =~ "^org.springframework.web.servlet.mvc.annotation.DefaultAnnotationHandlerMapping" {
            drop {}
        }
        if [classname] =~ "^o.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerMapping" {
            drop {}
        }
        if [loglevel] == "DEBUG" {
            drop {}
        }
        date {
            locale => "cn"
            match => ["times", "yyyy-MM-dd HH:mm:ss.SSS","UNIX"]
            target => "@timestamp"
        }
        mutate {
            remove_field => "@version"
            remove_field => "times"
            remove_field => "message"
            remove_field => "tags"
        }
    }
    if [type] == "pay" {
        grok {
            patterns_dir => "/root/logstash-7.2.0/reg"
            match => ["message","%{logdatetime:times} .*%{LOGLEVEL:loglevel} {0,4}%{CLASSNAME:classname} {0,4}- {0,4}%{INFOMSG:infomsg}"]
        }
        date {
            locale => "cn"
            match => ["times", "yyyy-MM-dd HH:mm:ss.SSS","UNIX"]
            target => "@timestamp"
        }
        mutate {
            remove_field => "@version"
            remove_field => "times"
            remove_field => "message"
            remove_field => "tags"
        }
    }
}

output {
    elasticsearch {
        hosts => ["192.168.1.248:9200","192.168.1.203:9200"]
        codec => "json"
        # One index per log type and month, e.g. log-pay-201907
        index => "log-%{type}-%{+YYYYMM}"
        manage_template => true
        template_overwrite => true
        template_name => "my_logstash"
        template => "/root/logstash-7.2.0/bin/logstash.json"
    }
}
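The multiline codec in the two file inputs above (pattern "^\d", negate => true, what => "previous") is the part people most often get backwards, so here is a rough emulation of what it does. This is my own awk sketch, not Logstash code: every line that starts with a digit opens a new event, and any other line (a Java stack trace, for example) is glued onto the previous event. The helper name join_multiline is hypothetical, and joined lines are separated by a literal \n so that each event prints on one line.

```shell
#!/bin/sh
# join_multiline: reads log lines on stdin and prints one event per line.
# A line starting with a digit begins a new event; every other line is
# appended to the current event, separated by a literal backslash-n.
join_multiline() {
    awk '
        /^[0-9]/ { if (started) print buf; buf = $0; started = 1; next }
                 { buf = (started ? buf "\\n" $0 : $0); started = 1 }
        END      { if (started) print buf }
    '
}

# Usage:
#   join_multiline < /mnt/new/tomcat/LOGS/app/output/app.log | head
```

Feeding it a sample of your real log is a cheap way to see whether the timestamp at the start of each entry really begins with a digit before pointing Logstash at the file.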

Start:

/home/elk/logstash-7.2.0/bin/logstash -f /path/format.cnf

The installation is now complete!