OpenCV Image Stitching: stitching and stitching_detailed

The Stitcher class and the detail namespace

OpenCV wraps its high-level stitching functions in the Stitcher class, which is convenient to use and hides most of the details.

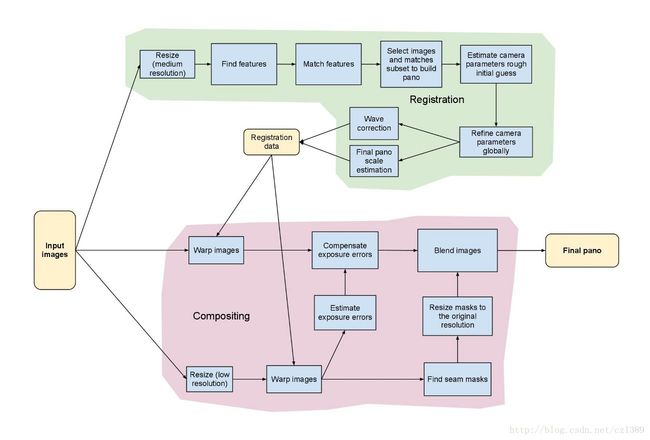

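The low-level functions live in the detail namespace. They expose many of the steps and details of OpenCV's implementation, so users who are familiar with the stitching pipeline below can customize it themselves.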

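As this shows, OpenCV's stitching module is intricate and complex. Its results are very good, but it is also slow, and nowhere near fast enough for real-time use.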

While studying the detail source code, my own limited skill kept me from freely picking and choosing among its components.

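The official stitching and stitching_detailed samples show the correct way to use the high-level and low-level interfaces, respectively. Both produce the same stitching result, but the latter lets the user choose the algorithm for each stage when the parameters are initialized.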

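This involves the following steps:

1. Invoke the program from the command line with the source images and the program parameters.
2. Detect feature points, using either SURF or ORB (SURF is the default).
3. Match feature points between images using the nearest and second-nearest neighbors, and store the confidence of the two best matches.
4. Sort the images and group those with high-confidence matches into the same set, discarding matches between images whose confidence is low. This yields a sequence of images that match correctly, with all matches above the confidence threshold merged into one set.
5. Roughly estimate the camera parameters for all images, then compute the rotation matrices.
6. Refine the rotation matrices with bundle adjustment.
7. Apply wave correction, horizontal or vertical.
8. Stitch the images.
9. Blend the result: multi-band blending and exposure compensation.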
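The matching step relies on comparing each feature's nearest neighbor with its second-nearest neighbor. The following is a minimal sketch of that idea using only the standard library. The `Descriptor` and `Match` types, the function names, and the `ratio` parameter are all hypothetical and chosen for illustration; this is not OpenCV's matcher, and the exact acceptance rule OpenCV applies may differ.

```cpp
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical minimal types for illustration; not OpenCV's own.
using Descriptor = std::vector<float>;

struct Match {
    std::size_t queryIdx;  // index into the first image's descriptors
    std::size_t trainIdx;  // index of the nearest neighbor in the second image
    float distance;        // squared L2 distance to that neighbor
};

// Squared Euclidean distance between two descriptors of equal length.
float squaredDistance(const Descriptor& a, const Descriptor& b) {
    float sum = 0.f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float d = a[i] - b[i];
        sum += d * d;
    }
    return sum;
}

// For each query descriptor, find the nearest and second-nearest train
// descriptors by brute force and keep the match only when the nearest is
// clearly better: best < ratio * secondBest (on squared distances).
std::vector<Match> ratioTestMatch(const std::vector<Descriptor>& query,
                                  const std::vector<Descriptor>& train,
                                  float ratio) {
    std::vector<Match> good;
    if (train.size() < 2) return good;  // two neighbors are needed to compare
    for (std::size_t q = 0; q < query.size(); ++q) {
        float best = std::numeric_limits<float>::max();
        float second = std::numeric_limits<float>::max();
        std::size_t bestIdx = 0;
        for (std::size_t t = 0; t < train.size(); ++t) {
            const float d = squaredDistance(query[q], train[t]);
            if (d < best) {
                second = best;
                best = d;
                bestIdx = t;
            } else if (d < second) {
                second = d;
            }
        }
        if (best < ratio * second) good.push_back({q, bestIdx, best});
    }
    return good;
}
```

A query point that sits equally close to two candidates is rejected as ambiguous, which is the purpose of looking at the second-nearest neighbor at all.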

Stitcher class usage example

The getfiles() function in this example returns the paths of all files in a directory, which makes it possible to read image paths in batches on Windows.

stitching_detailed usage example

// ...

Ptr<FeaturesFinder> finder;

if (features_type == "surf")

{

#if defined(HAVE_OPENCV_NONFREE) && defined(HAVE_OPENCV_GPU)

if (try_gpu && gpu::getCudaEnabledDeviceCount() > 0)

finder = new SurfFeaturesFinderGpu();

else

#endif

finder = new SurfFeaturesFinder();

}

else if (features_type == "orb")

{

finder = new OrbFeaturesFinder();

}

else

{

cout << "Unknown 2D features type: '" << features_type << "'.\n";

return -1;

}

Mat full_img, img;

// ...

Ptr<detail::BundleAdjusterBase> adjuster;

if (ba_cost_func == "reproj") adjuster = new detail::BundleAdjusterReproj();

else if (ba_cost_func == "ray") adjuster = new detail::BundleAdjusterRay();

else

{

cout << "Unknown bundle adjustment cost function: '" << ba_cost_func << "'.\n";

return -1;

}

adjuster->setConfThresh(conf_thresh);

Mat_<uchar> refine_mask = Mat::zeros(3, 3, CV_8U);

if (ba_refine_mask[0] == 'x') refine_mask(0,0) = 1;

if (ba_refine_mask[1] == 'x') refine_mask(0,1) = 1;

if (ba_refine_mask[2] == 'x') refine_mask(0,2) = 1;

if (ba_refine_mask[3] == 'x') refine_mask(1,1) = 1;

if (ba_refine_mask[4] == 'x') refine_mask(1,2) = 1;

adjuster->setRefinementMask(refine_mask);

(*adjuster)(features, pairwise_matches, cameras);

// Find median focal length

vector<double> focals;

for (size_t i = 0; i < cameras.size(); ++i)

{

LOGLN("Camera #" << indices[i]+1 << ":\n" << cameras[i].K());

focals.push_back(cameras[i].focal);

}

sort(focals.begin(), focals.end());

float warped_image_scale;

if (focals.size() % 2 == 1)

warped_image_scale = static_cast<float>(focals[focals.size() / 2]);

else

warped_image_scale = static_cast<float>(focals[focals.size() / 2 - 1] + focals[focals.size() / 2]) * 0.5f;

if (do_wave_correct)

{

// ...

}

// ...

Ptr<WarperCreator> warper_creator;

#if defined(HAVE_OPENCV_GPU)

if (try_gpu && gpu::getCudaEnabledDeviceCount() > 0)

{

if (warp_type == "plane") warper_creator = new cv::PlaneWarperGpu();

else if (warp_type == "cylindrical") warper_creator = new cv::CylindricalWarperGpu();

else if (warp_type == "spherical") warper_creator = new cv::SphericalWarperGpu();

}

else

#endif

{

if (warp_type == "plane") warper_creator = new cv::PlaneWarper();

else if (warp_type == "cylindrical") warper_creator = new cv::CylindricalWarper();

else if (warp_type == "spherical") warper_creator = new cv::SphericalWarper();

else if (warp_type == "fisheye") warper_creator = new cv::FisheyeWarper();

else if (warp_type == "stereographic") warper_creator = new cv::StereographicWarper();

else if (warp_type == "compressedPlaneA2B1") warper_creator = new cv::CompressedRectilinearWarper(2, 1);

else if (warp_type == "compressedPlaneA1.5B1") warper_creator = new cv::CompressedRectilinearWarper(1.5, 1);

else if (warp_type == "compressedPlanePortraitA2B1") warper_creator = new cv::CompressedRectilinearPortraitWarper(2, 1);

else if (warp_type == "compressedPlanePortraitA1.5B1") warper_creator = new cv::CompressedRectilinearPortraitWarper(1.5, 1);

else if (warp_type == "paniniA2B1") warper_creator = new cv::PaniniWarper(2, 1);

else if (warp_type == "paniniA1.5B1") warper_creator = new cv::PaniniWarper(1.5, 1);

else if (warp_type == "paniniPortraitA2B1") warper_creator = new cv::PaniniPortraitWarper(2, 1);

else if (warp_type == "paniniPortraitA1.5B1") warper_creator = new cv::PaniniPortraitWarper(1.5, 1);

else if (warp_type == "mercator") warper_creator = new cv::MercatorWarper();

else if (warp_type == "transverseMercator") warper_creator = new cv::TransverseMercatorWarper();

}

if (warper_creator.empty())

{

cout << "Can't create the following warper '" << warp_type << "'\n";

return 1;

}

Ptr<RotationWarper> warper = warper_creator->create(static_cast<float>(warped_image_scale * seam_work_aspect));

for (int i = 0; i < num_images; ++i)

{

Mat_<float> K;

cameras[i].K().convertTo(K, CV_32F);

float swa = (float)seam_work_aspect;

K(0,0) *= swa; K(0,2) *= swa;

K(1,1) *= swa; K(1,2) *= swa;

corners[i] = warper->warp(images[i], K, cameras[i].R, INTER_LINEAR, BORDER_REFLECT, images_warped[i]);

sizes[i] = images_warped[i].size();

warper->warp(masks[i], K, cameras[i].R, INTER_NEAREST, BORDER_CONSTANT, masks_warped[i]);

}

// ...

Ptr<ExposureCompensator> compensator = ExposureCompensator::createDefault(expos_comp_type);

compensator->feed(corners, images_warped, masks_warped);

Ptr<SeamFinder> seam_finder;

if (seam_find_type == "no")

seam_finder = new detail::NoSeamFinder();

else if (seam_find_type == "voronoi")

seam_finder = new detail::VoronoiSeamFinder();

else if (seam_find_type == "gc_color")

{

#if defined(HAVE_OPENCV_GPU)

if (try_gpu && gpu::getCudaEnabledDeviceCount() > 0)

seam_finder = new detail::GraphCutSeamFinderGpu(GraphCutSeamFinderBase::COST_COLOR);

else

#endif

seam_finder = new detail::GraphCutSeamFinder(GraphCutSeamFinderBase::COST_COLOR);

}

else if (seam_find_type == "gc_colorgrad")

{

#if defined(HAVE_OPENCV_GPU)

if (try_gpu && gpu::getCudaEnabledDeviceCount() > 0)

seam_finder = new detail::GraphCutSeamFinderGpu(GraphCutSeamFinderBase::COST_COLOR_GRAD);

else

#endif

seam_finder = new detail::GraphCutSeamFinder(GraphCutSeamFinderBase::COST_COLOR_GRAD);

}

else if (seam_find_type == "dp_color")

seam_finder = new detail::DpSeamFinder(DpSeamFinder::COLOR);

else if (seam_find_type == "dp_colorgrad")

seam_finder = new detail::DpSeamFinder(DpSeamFinder::COLOR_GRAD);

if (seam_finder.empty())

{

cout << "Can't create the following seam finder '" << seam_find_type << "'\n";

return 1;

}

seam_finder->find(images_warped_f, corners, masks_warped);

// Release unused memory

images.clear();

images_warped.clear();

images_warped_f.clear();

masks.clear();

LOGLN("Compositing...");

#if ENABLE_LOG

t = getTickCount();

#endif

Mat img_warped, img_warped_s;

Mat dilated_mask, seam_mask, mask, mask_warped;

Ptr<Blender> blender;

//double compose_seam_aspect = 1;

double compose_work_aspect = 1;

for (int img_idx = 0; img_idx < num_images; ++img_idx)

{

LOGLN("Compositing image #" << indices[img_idx]+1);

// Read image and resize it if necessary

full_img = imread(img_names[img_idx]);

if (!is_compose_scale_set)

{

if (compose_megapix > 0)

compose_scale = min(1.0, sqrt(compose_megapix * 1e6 / full_img.size().area()));

is_compose_scale_set = true;

// Compute relative scales

//compose_seam_aspect = compose_scale / seam_scale;

compose_work_aspect = compose_scale / work_scale;

// Update warped image scale

warped_image_scale *= static_cast<float>(compose_work_aspect);

warper = warper_creator->create(warped_image_scale);

// Update corners and sizes

for (int i = 0; i < num_images; ++i)

{

// Update intrinsics

cameras[i].focal *= compose_work_aspect;

cameras[i].ppx *= compose_work_aspect;

cameras[i].ppy *= compose_work_aspect;

// Update corner and size

Size sz = full_img_sizes[i];

if (std::abs(compose_scale - 1) > 1e-1)

{

sz.width = cvRound(full_img_sizes[i].width * compose_scale);

sz.height = cvRound(full_img_sizes[i].height * compose_scale);

}

Mat K;

cameras[i].K().convertTo(K, CV_32F);

Rect roi = warper->warpRoi(sz, K, cameras[i].R);

corners[i] = roi.tl();

sizes[i] = roi.size();

}

}

if (abs(compose_scale - 1) > 1e-1)

resize(full_img, img, Size(), compose_scale, compose_scale);

else

img = full_img;

full_img.release();

Size img_size = img.size();

Mat K;

cameras[img_idx].K().convertTo(K, CV_32F);

// Warp the current image

warper->warp(img, K, cameras[img_idx].R, INTER_LINEAR, BORDER_REFLECT, img_warped);

// Warp the current image mask

mask.create(img_size, CV_8U);

mask.setTo(Scalar::all(255));

warper->warp(mask, K, cameras[img_idx].R, INTER_NEAREST, BORDER_CONSTANT, mask_warped);

// Compensate exposure

compensator->apply(img_idx, corners[img_idx], img_warped, mask_warped);

img_warped.convertTo(img_warped_s, CV_16S);

img_warped.release();

img.release();

mask.release();

dilate(masks_warped[img_idx], dilated_mask, Mat());

resize(dilated_mask, seam_mask, mask_warped.size());

mask_warped = seam_mask & mask_warped;

if (blender.empty())

{

blender = Blender::createDefault(blend_type, try_gpu);

Size dst_sz = resultRoi(corners, sizes).size();

float blend_width = sqrt(static_cast<float>(dst_sz.area())) * blend_strength / 100.f;

if (blend_width < 1.f)

blender = Blender::createDefault(Blender::NO, try_gpu);

else if (blend_type == Blender::MULTI_BAND)

{

MultiBandBlender* mb = dynamic_cast<MultiBandBlender*>(static_cast<Blender*>(blender));

mb->setNumBands(static_cast<int>(ceil(log(blend_width)/log(2.)) - 1.));

LOGLN("Multi-band blender, number of bands: " << mb->numBands());

}

else if (blend_type == Blender::FEATHER)

{

FeatherBlender* fb = dynamic_cast<FeatherBlender*>(static_cast<Blender*>(blender));

fb->setSharpness(1.f/blend_width);

LOGLN("Feather blender, sharpness: " << fb->sharpness());

}

blender->prepare(corners, sizes);

}

// Blend the current image

blender->feed(img_warped_s, mask_warped, corners[img_idx]);

}

Mat result, result_mask;

blender->blend(result, result_mask);

LOGLN("Compositing, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

imwrite(result_name, result);

// Convert to 8-bit for display; convertTo uses only the depth and keeps the channel count

result.convertTo(result, CV_8U);

imshow("stitch",result);

ttt = ((double)getTickCount() - ttt) / getTickFrequency();

cout << "Total stitching time: " << ttt << endl;

waitKey(0);

LOGLN("Finished, total time: " << ((getTickCount() - app_start_time) / getTickFrequency()) << " sec");

return 0;

}

Stitching result

With 4 input images, each 327×245 pixels, the total stitching time was 9.25 s.
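Two small numeric steps from the stitching_detailed listing are easy to isolate and check by hand: the median focal length used as warped_image_scale, and the number of bands given to the multi-band blender. The sketch below restates both with the standard library only. The function names are hypothetical, and the empty-input guard in `medianFocal` is an addition that the listing does not have.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Median of the estimated focal lengths, following the same odd/even rule
// as the listing. Takes the vector by value because it sorts its copy.
float medianFocal(std::vector<double> focals) {
    if (focals.empty()) return 0.f;  // guard added for this sketch only
    std::sort(focals.begin(), focals.end());
    const std::size_t n = focals.size();
    if (n % 2 == 1) return static_cast<float>(focals[n / 2]);
    return static_cast<float>(focals[n / 2 - 1] + focals[n / 2]) * 0.5f;
}

// Blend width in pixels: blendStrength percent of the square root of the
// panorama area, as computed in the listing.
float blendWidth(int panoWidth, int panoHeight, float blendStrength) {
    const float area =
        static_cast<float>(panoWidth) * static_cast<float>(panoHeight);
    return std::sqrt(area) * blendStrength / 100.f;
}

// Number of pyramid bands: ceil(log2(width)) - 1. Only meaningful for
// width >= 1; below that the listing falls back to no blending.
int numBands(float width) {
    return static_cast<int>(std::ceil(std::log(width) / std::log(2.)) - 1.);
}
```

For a 1000×1000 panorama and a blend strength of 5, the blend width is 50 pixels, and since log2(50) is about 5.64 the blender would be given 5 bands.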