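Data Mining Task 3: Feature Engineering

Feature Engineering

Goals

Analyze the features further and process the data accordingly.

Complete the feature-engineering analysis, summarize the data with charts or text, and post the result as the task check-in.

Content

Common feature-engineering steps include: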

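1. Outlier handling:

Remove outliers identified with a box plot (or the 3-sigma rule);

Box-Cox transformation (for skewed distributions);

Long-tail truncation;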
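As a rough illustration of the three options above, the sketch below applies a 3-sigma filter, a Box-Cox transform with a fixed lambda, and percentile clipping to a made-up skewed series. The data, the choice of lambda = 0, and the 1%/99% cut-offs are assumptions for illustration only, not values taken from the competition data.

```python
import numpy as np
import pandas as pd

# Hypothetical right-skewed sample with one extreme value (not from the competition data).
rng = np.random.default_rng(0)
s = pd.Series(np.append(rng.lognormal(mean=4.0, sigma=0.5, size=1000), 20000.0))

# 1) 3-sigma rule: keep points within three standard deviations of the mean.
mean, std = s.mean(), s.std()
kept = s[(s - mean).abs() <= 3 * std]

# 2) Box-Cox transform with a fixed lambda (lambda = 0 reduces to log x); requires x > 0.
def box_cox(x, lam):
    return np.log(x) if lam == 0 else (np.power(x, lam) - 1.0) / lam

transformed = box_cox(s, 0)

# 3) Long-tail truncation: clip to the 1st and 99th percentiles instead of deleting rows.
low, high = s.quantile(0.01), s.quantile(0.99)
clipped = s.clip(lower=low, upper=high)

print(len(s), len(kept), len(clipped))
```

Deleting rows only makes sense for the training set; clipping or transforming keeps the row count unchanged, so it can also be applied to test data.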

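2. Feature normalization/standardization:

Standardization (rescale to zero mean and unit variance; the result is standard normal only if the input was already normal);

Normalization (map to the [0, 1] interval);

For power-law distributions, the following formula can be used: $\log\left(\frac{1+x}{1+\mathrm{median}}\right)$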
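The three rescaling options above can be sketched in a few lines. The sample values are hypothetical, and the population standard deviation (`ddof=0`) is one possible convention among several.

```python
import numpy as np
import pandas as pd

x = pd.Series([1.0, 10.0, 100.0, 1000.0, 10000.0])  # hypothetical power-law-like values

standardized = (x - x.mean()) / x.std(ddof=0)    # zero mean, unit variance
min_max = (x - x.min()) / (x.max() - x.min())    # mapped to [0, 1]
log_ratio = np.log((1 + x) / (1 + x.median()))   # log((1 + x) / (1 + median))

print(min_max.round(4).tolist())
print(log_ratio.round(4).tolist())
```

With the log-ratio formula the median value maps to exactly 0, and values above or below the median become positive or negative.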

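3. Data binning:

Equal-frequency binning;

Equal-width binning;

Best-KS binning (similar to repeated binary splitting with the Gini index);

Chi-square binning;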
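To make the difference between the first two binning schemes concrete, here is a small sketch using `pd.cut` (equal width) and `pd.qcut` (equal frequency) on made-up values with one large outlier.

```python
import pandas as pd

values = pd.Series([1, 2, 3, 4, 5, 6, 7, 8, 9, 100])  # hypothetical data with one large value

equal_width = pd.cut(values, bins=5, labels=False)  # five bins of equal width over [min, max]
equal_freq = pd.qcut(values, q=5, labels=False)     # five bins with (roughly) equal counts

print(equal_width.value_counts().sort_index().to_dict())
print(equal_freq.value_counts().sort_index().to_dict())
```

Equal-width binning puts almost everything into the first bin when the data is skewed, while equal-frequency binning spreads the rows evenly.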

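4. Missing-value handling:

Leave as-is (for tree models such as XGBoost);

Delete (when too much data is missing);

Imputation, including mean/median/mode, model-based prediction, multiple imputation, compressed-sensing completion, matrix completion, etc.;

Binning, with missing values placed in a bin of their own;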
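A minimal sketch of simple imputation and of giving missing values their own bin. The rows are hypothetical, and coding the missing bin as -1 is just one convention.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({"bodyType": [0.0, 1.0, np.nan, 1.0, np.nan],
                   "power": [75.0, np.nan, 110.0, 90.0, 60.0]})  # hypothetical rows

df["power_missing"] = df["power"].isnull().astype(int)            # keep a flag before filling
df["power"] = df["power"].fillna(df["power"].median())            # median imputation
df["bodyType"] = df["bodyType"].fillna(df["bodyType"].mode()[0])  # mode imputation

# Binning with a dedicated bucket for missing values (coded as -1 here by convention).
raw = pd.Series([10.0, np.nan, 250.0])
binned = pd.cut(raw, bins=[0, 100, 200, 300], labels=False).fillna(-1).astype(int)

print(df)
print(binned.tolist())
```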

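5. Feature construction:

Statistical features, including counts, sums, ratios, standard deviations, etc.;

Time features, including relative and absolute time, holidays, weekends, etc.;

Geographic information, using methods such as binning and distribution encoding;

Nonlinear transformations, including log, square, square root, etc.;

Feature combinations and feature crosses;

Which of these to use is a judgment call; different practitioners make different choices.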
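The sketch below shows one possible way to build a few of these feature types with pandas. The rows and the new column names (`reg_year`, `reg_is_weekend`, `brand_count`, `brand_price_mean`, `brand_x_year`) are made up for illustration.

```python
import pandas as pd

df = pd.DataFrame({
    "brand": [0, 0, 1, 1, 1],
    "regDate": ["20040402", "20030301", "20120103", "20110502", "20090602"],
    "price": [1850, 3600, 6222, 2400, 5200],
})  # hypothetical rows

# Time features: absolute parts of the date and a weekend flag.
reg = pd.to_datetime(df["regDate"], format="%Y%m%d")
df["reg_year"] = reg.dt.year
df["reg_is_weekend"] = (reg.dt.dayofweek >= 5).astype(int)

# Statistical features: per-brand count and mean price, broadcast back to each row.
df["brand_count"] = df.groupby("brand")["price"].transform("count")
df["brand_price_mean"] = df.groupby("brand")["price"].transform("mean")

# Feature cross: combine two categorical-like columns into one key.
df["brand_x_year"] = df["brand"].astype(str) + "_" + df["reg_year"].astype(str)

print(df)
```

Statistics that involve the target (such as a per-brand mean price) should be computed from training rows only, otherwise the target leaks into the features.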

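6. Feature selection:

Filter: select features first, then train the learner. Common methods are Relief, variance thresholding, correlation coefficients, the chi-square test, and mutual information;

Wrapper: use the performance of the final learner directly as the criterion for evaluating a feature subset. A common method is LVW (Las Vegas Wrapper);

Embedded: combines the filter and wrapper ideas; feature selection happens automatically while the learner is trained. A common example is lasso regression;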
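As a small illustration of the filter approach only, the sketch below drops a constant column by variance and then keeps columns whose rank correlation with the target exceeds a threshold. The synthetic data and the 0.3 threshold are assumptions; Spearman correlation is computed here as Pearson correlation on ranks.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 200
X = pd.DataFrame({
    "useful": rng.normal(size=n),
    "noise": rng.normal(size=n),
    "constant": np.ones(n),
})  # synthetic features
y = 3.0 * X["useful"] + rng.normal(scale=0.1, size=n)

# Variance filter: drop (near-)constant columns.
variances = X.var()
X_var = X.loc[:, variances > 1e-8]

# Correlation filter: Spearman correlation = Pearson correlation of the ranks.
corr = X_var.apply(lambda col: col.rank().corr(y.rank())).abs()
selected = corr[corr > 0.3].index.tolist()

print(selected)
```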

7. Dimensionality reduction:

PCA/LDA/ICA;

Feature selection is also a form of dimensionality reduction.
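For reference, PCA can be sketched directly with NumPy's SVD. The matrix below is synthetic, with a third column that is almost a linear combination of the first two, so two components should capture nearly all of the variance.

```python
import numpy as np

rng = np.random.default_rng(0)
base = rng.normal(size=(100, 2))
# Synthetic 3-column matrix; the third column is nearly the sum of the first two.
X = np.column_stack([base[:, 0], base[:, 1],
                     base[:, 0] + base[:, 1] + 0.01 * rng.normal(size=100)])

Xc = X - X.mean(axis=0)                            # PCA works on centered data
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)  # rows of Vt are the principal axes
explained_ratio = S**2 / np.sum(S**2)              # share of variance per component
X_2d = Xc @ Vt[:2].T                               # project onto the top two components

print(explained_ratio.round(4))
```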

Code

import pandas as pd

import numpy as np

import matplotlib

import matplotlib.pyplot as plt

import seaborn as sns

from operator import itemgetter

path = 'C:/Users/lenovo/Desktop/data/'

train = pd.read_csv(path+'used_car_train_20200313.csv', sep=' ')

test = pd.read_csv(path+'used_car_testA_20200313.csv', sep=' ')

print('Train data shape:', train.shape)

print('Test data shape:', test.shape)

Train data shape: (150000, 31)

Test data shape: (50000, 30)

#train.head()

#test.head()

train.columns

Index(['SaleID', 'name', 'regDate', 'model', 'brand', 'bodyType', 'fuelType',

'gearbox', 'power', 'kilometer', 'notRepairedDamage', 'regionCode',

'seller', 'offerType', 'creatDate', 'price', 'v_0', 'v_1', 'v_2', 'v_3',

'v_4', 'v_5', 'v_6', 'v_7', 'v_8', 'v_9', 'v_10', 'v_11', 'v_12',

'v_13', 'v_14'],

dtype='object')

test.columns

Index(['SaleID', 'name', 'regDate', 'model', 'brand', 'bodyType', 'fuelType',

'gearbox', 'power', 'kilometer', 'notRepairedDamage', 'regionCode',

'seller', 'offerType', 'creatDate', 'v_0', 'v_1', 'v_2', 'v_3', 'v_4',

'v_5', 'v_6', 'v_7', 'v_8', 'v_9', 'v_10', 'v_11', 'v_12', 'v_13',

'v_14'],

dtype='object')

data_series = train['power']

data_series.shape[0]

150000

def outliers_proc(data, col_name, scale=3):

"""

用于清洗异常值,默认用 box_plot(scale=3)进行清洗

:param data: 接收 pandas 数据格式

:param col_name: pandas 列名

:param scale: 尺度

:return:

"""

def box_plot_outliers(data_ser, box_scale):

"""

利用箱线图去除异常值

:param data_ser: 接收 pandas.Series 数据格式

:param box_scale: 箱线图尺度,

:return:

"""

iqr = box_scale * (data_ser.quantile(0.75) - data_ser.quantile(0.25))

val_low = data_ser.quantile(0.25) - iqr

val_up = data_ser.quantile(0.75) + iqr

print('下界限、上界线分别是', val_low, val_up)

rule_low = (data_ser < val_low) #低于最低数的异常值

rule_up = (data_ser > val_up) #高于最高数的异常值

return (rule_low, rule_up), (val_low, val_up)

data_n = data.copy()

data_series = data_n[col_name]

rule,value = box_plot_outliers(data_series, box_scale=scale)

index = np.arange(data_series.shape[0])[rule[0]|rule[1]]#得到异常值的索引

print('Delete number is:{}'.format(len(index)))

data_n = data_n.drop(index) #【删除】有异常值的样本

print(data_n.shape)

data_n.reset_index(drop=True, inplace=True)

print('now column number is {}'.format(data_n.shape[0])) #剩下的样本数

index_low = np.arange(data_series.shape[0])[rule[0]]

outliers = data_series.iloc[index_low] #有异常值的样本列

print("Description of data less than the lower bound is:")

print(pd.Series(outliers).describe())

index_up = np.arange(data_series.shape[0])[rule[1]]

outliers = data_series.iloc[index_up] #有异常值的样本列

print("Description of data larger than the upper bound is:")

print(pd.Series(outliers).describe())

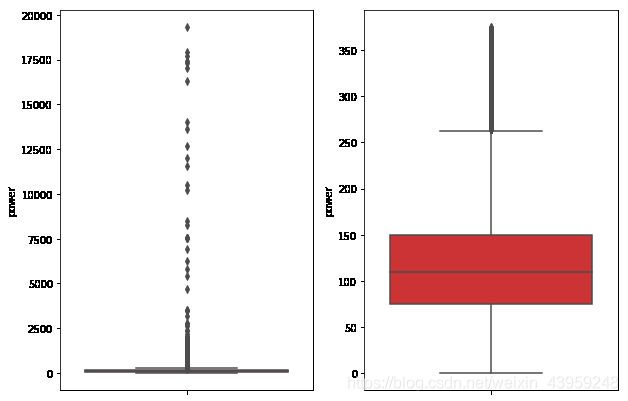

fig, ax = plt.subplots(1,2,figsize=(10,7))

sns.boxplot(y=data[col_name], data=data, palette="Set1", ax=ax[0])

sns.boxplot(y=data_n[col_name], data=data_n, palette="Set1", ax=ax[1])

return data_n

train = outliers_proc(train, 'power', scale=3)

下界限、上界线分别是 -150.0 375.0

Delete number is:963

(149037, 31)

now column number is 149037

Description of data less than the lower bound is:

count 0.0

mean NaN

std NaN

min NaN

25% NaN

50% NaN

75% NaN

max NaN

Name: power, dtype: float64

Description of data larger than the upper bound is:

count 963.000000

mean 846.836968

std 1929.418081

min 376.000000

25% 400.000000

50% 436.000000

75% 514.000000

max 19312.000000

Name: power, dtype: float64

# Put the training and test sets together so features are constructed the same way for both

train['train'] = 1

test['train'] = 0

data = pd.concat([train, test], ignore_index=True, sort=False)

# Compute how long the car has been in use (in days); in general, the shorter the usage time, the higher the price

data['used_time'] = (pd.to_datetime(data['creatDate'], format='%Y%m%d', errors='coerce') -

pd.to_datetime(data['regDate'], format='%Y%m%d', errors='coerce')).dt.days

data['used_time'].isnull().sum()
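The `errors='coerce'` argument matters here: any date string that cannot be parsed becomes `NaT`, and the resulting `used_time` becomes `NaN`, which is what the `isnull().sum()` check counts. A tiny sketch with made-up date strings, one of which has month "00":

```python
import pandas as pd

reg = pd.Series(["20040402", "20070009", "20160404"])  # hypothetical; the middle value has month "00"
parsed = pd.to_datetime(reg, format="%Y%m%d", errors="coerce")

print(parsed.isnull().sum())          # number of unparseable dates
print((parsed[2] - parsed[0]).days)   # day difference between two valid dates
```

Rows with a missing `used_time` can be left as-is for tree models such as XGBoost, or filled before fitting a linear model.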

# Extract city information from the region code (postal code)

data['city'] = data['regionCode'].apply(lambda x: str(x)[:-3])
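It may help to see what `str(x)[:-3]` does on a few values: it drops the last three characters, so any code with three or fewer digits yields an empty string. The codes below are made up.

```python
codes = [1046, 4366, 419, 35]          # hypothetical regionCode values
cities = [str(c)[:-3] for c in codes]  # drop the last three digits
print(cities)
```

The empty strings form their own category, which may or may not be what is wanted; treating them as missing is a reasonable alternative.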

train_gb = train.groupby('brand')  # group the training samples by brand
all_info = {}
for kind, kind_data in train_gb:
    info = {}
    kind_data = kind_data[kind_data['price'] > 0]
    # number of cars of this brand
    info['brand_amount'] = len(kind_data)
    info['brand_price_max'] = kind_data.price.max()
    info['brand_price_median'] = kind_data.price.median()
    info['brand_price_min'] = kind_data.price.min()
    info['brand_price_sum'] = kind_data.price.sum()
    info['brand_price_std'] = kind_data.price.std()
    # note: divides by (count + 1), so this is slightly below the plain mean
    info['brand_price_average'] = round(kind_data.price.sum() / (len(kind_data) + 1), 2)
    all_info[kind] = info  # price statistics for each brand
brand_fe = pd.DataFrame(all_info).T.reset_index().rename(columns={'index': 'brand'})
#print(brand_fe.head())
#print(data.head())
data = pd.merge(data, brand_fe, how='left', on='brand')  # attach each brand's statistics to its rows
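The same per-brand statistics can also be computed without an explicit loop, using `groupby(...).agg(...)` with named aggregations. This is an alternative sketch on a toy frame, not the code that produced the outputs in this post; it keeps the same column names and the same `sum / (count + 1)` average.

```python
import pandas as pd

toy = pd.DataFrame({"brand": [0, 0, 1, 1, 1],
                    "price": [1000, 3000, 500, 0, 1500]})  # hypothetical rows
valid = toy[toy["price"] > 0]  # same filter as the loop: ignore non-positive prices

brand_stats = (
    valid.groupby("brand")["price"]
    .agg(brand_amount="count", brand_price_max="max", brand_price_median="median",
         brand_price_min="min", brand_price_sum="sum", brand_price_std="std")
    .reset_index()
)
# Same smoothed average as the loop: sum / (count + 1).
brand_stats["brand_price_average"] = (
    brand_stats["brand_price_sum"] / (brand_stats["brand_amount"] + 1)
).round(2)

merged = toy.merge(brand_stats, how="left", on="brand")
print(merged)
```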

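bins = [i*10 for i in range(31)]  # bin edges for power: 0, 10, ..., 300 (named bins to avoid shadowing the built-in bin)

data['power_bin'] = pd.cut(data['power'], bins, labels=False)  # assign each row to a power bin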

data[['power_bin', 'power']].head()
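One detail of `pd.cut` worth knowing: the intervals are right-closed by default, so with edges 0, 10, ..., 300 a value of exactly 0 and any value above 300 fall outside every bin and become `NaN`. A small sketch with made-up power values:

```python
import pandas as pd

power = pd.Series([0, 10, 75, 300, 600])  # hypothetical values
edges = [i * 10 for i in range(31)]       # 0, 10, ..., 300
power_bin = pd.cut(power, edges, labels=False)
print(power_bin.tolist())
```

Passing `include_lowest=True` would place 0 in the first bin; values above the last edge stay `NaN` unless they are clipped or a wider final edge is added.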

# Drop the raw columns creatDate, regDate and regionCode

data = data.drop(['creatDate', 'regDate', 'regionCode'], axis=1)

print(data.shape)

(199037, 39)

print(data.columns)

Index(['SaleID', 'name', 'model', 'brand', 'bodyType', 'fuelType', 'gearbox',

'power', 'kilometer', 'notRepairedDamage', 'seller', 'offerType',

'price', 'v_0', 'v_1', 'v_2', 'v_3', 'v_4', 'v_5', 'v_6', 'v_7', 'v_8',

'v_9', 'v_10', 'v_11', 'v_12', 'v_13', 'v_14', 'train', 'used_time',

'city', 'brand_amount', 'brand_price_average', 'brand_price_max',

'brand_price_median', 'brand_price_min', 'brand_price_std',

'brand_price_sum', 'power_bin'],

dtype='object')

# Export the data for use with tree models

data.to_csv('data_for_tree.csv', index=0)

# Build features for the linear regression (LR) model

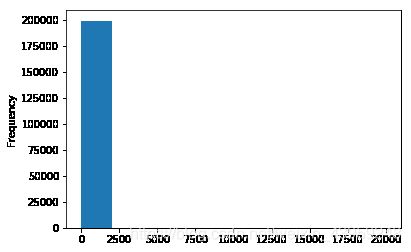

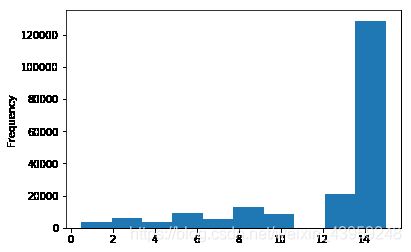

data['power'].plot.hist()

train['power'].plot.hist()

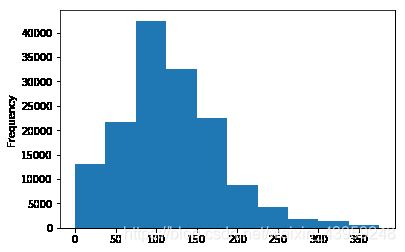

# Take the log of power, then apply min-max normalization

from sklearn import preprocessing

min_max_scaler = preprocessing.MinMaxScaler()

data['power'] = np.log(data['power'] + 1)

data['power'] = ((data['power'] - np.min(data['power'])) / (np.max(data['power']) - np.min(data['power'])))

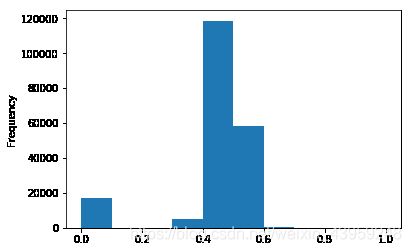

data['power'].plot.hist()

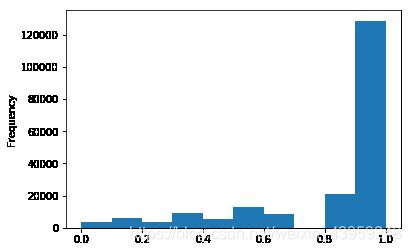

# kilometer has already been binned

data['kilometer'].plot.hist()

# So normalize it directly

data['kilometer'] = ((data['kilometer'] - np.min(data['kilometer'])) /

(np.max(data['kilometer']) - np.min(data['kilometer'])))

data['kilometer'].plot.hist()

# Normalize all of the brand statistics constructed above

data['brand_amount'] = ((data['brand_amount'] - np.min(data['brand_amount'])) /

(np.max(data['brand_amount']) - np.min(data['brand_amount'])))

data['brand_price_average'] = ((data['brand_price_average'] - np.min(data['brand_price_average'])) /

(np.max(data['brand_price_average']) - np.min(data['brand_price_average'])))

data['brand_price_max'] = ((data['brand_price_max'] - np.min(data['brand_price_max'])) /

(np.max(data['brand_price_max']) - np.min(data['brand_price_max'])))

data['brand_price_median'] = ((data['brand_price_median'] - np.min(data['brand_price_median'])) /

(np.max(data['brand_price_median']) - np.min(data['brand_price_median'])))

data['brand_price_min'] = ((data['brand_price_min'] - np.min(data['brand_price_min'])) /

(np.max(data['brand_price_min']) - np.min(data['brand_price_min'])))

data['brand_price_std'] = ((data['brand_price_std'] - np.min(data['brand_price_std'])) /

(np.max(data['brand_price_std']) - np.min(data['brand_price_std'])))

data['brand_price_sum'] = ((data['brand_price_sum'] - np.min(data['brand_price_sum'])) /

(np.max(data['brand_price_sum']) - np.min(data['brand_price_sum'])))
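The repeated min-max expressions above could be folded into a small helper and a loop. The function name `min_max` and the toy frame are hypothetical; the guard for constant columns is an addition that the original expressions do not have.

```python
import pandas as pd

def min_max(series):
    """Scale a numeric Series to [0, 1]; a constant column maps to 0 to avoid dividing by zero."""
    span = series.max() - series.min()
    if span == 0:
        return series * 0.0
    return (series - series.min()) / span

toy = pd.DataFrame({"brand_amount": [10.0, 20.0, 30.0],
                    "brand_price_max": [5.0, 5.0, 5.0]})  # hypothetical values
for col in ["brand_amount", "brand_price_max"]:
    toy[col] = min_max(toy[col])

print(toy)
```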

# One-hot encode the categorical features

data = pd.get_dummies(data, columns=['model', 'brand', 'bodyType', 'fuelType',

'gearbox', 'notRepairedDamage', 'power_bin'])  # each category becomes its own column, set to 1 for rows in that category

#data.head()

print(data.columns)

Index(['SaleID', 'name', 'power', 'kilometer', 'seller', 'offerType', 'price',

'v_0', 'v_1', 'v_2',

...

'power_bin_20.0', 'power_bin_21.0', 'power_bin_22.0', 'power_bin_23.0',

'power_bin_24.0', 'power_bin_25.0', 'power_bin_26.0', 'power_bin_27.0',

'power_bin_28.0', 'power_bin_29.0'],

dtype='object', length=370)

data.shape

#data.head()

(199037, 370)
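A toy example of what `pd.get_dummies` does with a float-coded categorical column that contains a missing value, which also explains column names such as `power_bin_29.0` above. The frame is made up.

```python
import pandas as pd

toy = pd.DataFrame({"gearbox": [0.0, 1.0, None], "power": [60, 110, 75]})  # hypothetical rows
encoded = pd.get_dummies(toy, columns=["gearbox"])
print(encoded.columns.tolist())
print(encoded)
```

By default a missing value gets no column of its own, so that row is zero in every dummy column; `dummy_na=True` adds an explicit indicator column instead.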

#data.to_csv('data_for_lr.csv',index=0)

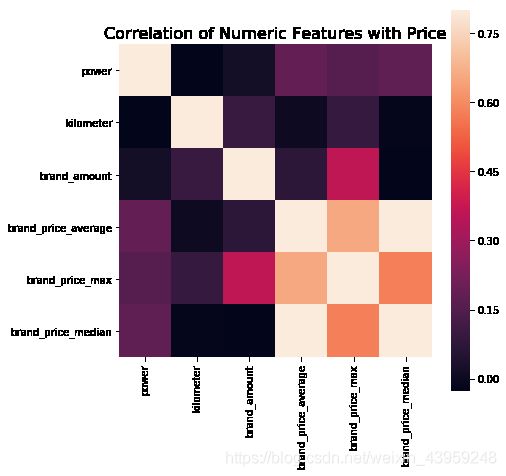

# Feature selection

# Filter method: correlation analysis

print(data['power'].corr(data['price'], method='spearman'))

print(data['kilometer'].corr(data['price'], method='spearman'))

print(data['brand_amount'].corr(data['price'], method='spearman'))

print(data['brand_price_average'].corr(data['price'], method='spearman'))

print(data['brand_price_max'].corr(data['price'], method='spearman'))

print(data['brand_price_median'].corr(data['price'], method='spearman'))

0.5728285196051496

-0.4082569701616764

0.058156610025581514

0.3834909576057687

0.259066833880992

0.38691042393409447
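Spearman correlation is used above because it measures monotonic rather than strictly linear association. A small sketch on synthetic data shows the difference; here Spearman is computed as Pearson correlation on ranks, which is equivalent.

```python
import numpy as np
import pandas as pd

x = pd.Series(np.arange(1, 11, dtype=float))
y = np.exp(x)                        # monotonic in x, but strongly nonlinear

pearson = x.corr(y)                  # default method is Pearson
spearman = x.rank().corr(y.rank())   # Spearman = Pearson correlation of the ranks

print(round(pearson, 4), round(spearman, 4))
```

Note that `price` is missing for the test rows in the combined frame; pandas ignores pairs with missing values when computing a correlation, so the numbers above are based on the training rows only.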

# We can also simply look at a plot

data_numeric = data[['power', 'kilometer', 'brand_amount', 'brand_price_average',

'brand_price_max', 'brand_price_median']]

correlation = data_numeric.corr()

f , ax = plt.subplots(figsize = (7, 7))

plt.title('Correlation of Numeric Features with Price',y=1,size=16)

sns.heatmap(correlation,square = True, vmax=0.8)

# Wrapper method

# A large k_features is very slow to run; with no server available, the run was interrupted early

from mlxtend.feature_selection import SequentialFeatureSelector as SFS

from sklearn.linear_model import LinearRegression

sfs = SFS(LinearRegression(),

k_features=10,

forward=True,

floating=False,

scoring = 'r2',

cv = 0)

x = data.drop(['price'], axis=1)

x = x.fillna(0)

y = data['price']

sfs.fit(x, y)

sfs.k_feature_names_

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

in ()

1 # Wrapper method

2 # A large k_features is very slow to run; with no server available, the run was interrupted early

----> 3 from mlxtend.feature_selection import SequentialFeatureSelector as SFS

4 from sklearn.linear_model import LinearRegression

5 sfs = SFS(LinearRegression(),

ModuleNotFoundError: No module named 'mlxtend'
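The traceback only means that the `mlxtend` package is not installed in this environment; installing it (for example with `pip install mlxtend`) is the usual fix. If installing is not an option, greedy forward selection is simple enough to sketch with NumPy alone. The version below is a simplified stand-in and not mlxtend's implementation: it scores each candidate by in-sample R² of an ordinary least-squares fit, and the helper names and synthetic data are hypothetical.

```python
import numpy as np

def r2_score_ols(X, y):
    """Fit ordinary least squares with an intercept and return the in-sample R^2."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return 1.0 - resid.var() / y.var()

def forward_select(X, y, k):
    """Greedily add the column that most improves R^2 until k columns are chosen."""
    chosen, remaining = [], list(range(X.shape[1]))
    while len(chosen) < k:
        scores = {j: r2_score_ols(X[:, chosen + [j]], y) for j in remaining}
        best = max(scores, key=scores.get)
        chosen.append(best)
        remaining.remove(best)
    return chosen

# Synthetic data: only columns 1 and 4 actually drive the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = 2.0 * X[:, 1] - 3.0 * X[:, 4] + rng.normal(scale=0.1, size=300)

chosen = forward_select(X, y, k=2)
print(chosen)
```

Each step refits one model per remaining column, so the cost grows quickly with the number of features; on the 370-column frame above, a small `k` keeps the run time manageable.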

# Plot the result to see the marginal gain from each added feature

from mlxtend.plotting import plot_sequential_feature_selection as plot_sfs

import matplotlib.pyplot as plt

fig1 = plot_sfs(sfs.get_metric_dict(), kind='std_dev')

plt.grid()

plt.show()