Offline installation of CDH 5.6.0 on Ubuntu 14.04

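Official installation documentation: http://www.cloudera.com/documentation/enterprise/5-6-x/topics/installation.html

Download locations for the required packages:

Cloudera Manager: http://archive.cloudera.com/cm5/cm/5/

CDH parcels: http://archive.cloudera.com/cdh5/parcels/5.6.0/

Because our operating system is Ubuntu 14.04 (trusty), the following files are needed: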

CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel

CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel.sha1

manifest.json

The entire installation is performed as root.
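Since the parcel is carried to an offline machine, it may be worth checking that the download is intact first. The following is only a sketch: it assumes the .sha1 file holds the hex SHA-1 digest as its first whitespace-separated field and that sha1sum (GNU coreutils) is installed. The function name verify_parcel is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare a parcel's SHA-1 with the digest stored next to it.
# Assumption: the first field of the .sha1 file is the hex digest.
verify_parcel() {
    local parcel="$1"
    local sha_file="${2:-$parcel.sha1}"
    local expected actual
    expected=$(awk '{print $1; exit}' "$sha_file") || return 2
    actual=$(sha1sum "$parcel" | awk '{print $1}') || return 2
    # An empty expected digest is treated as a mismatch
    if [ -n "$expected" ] && [ "$expected" = "$actual" ]; then
        echo "OK: $parcel"
    else
        echo "MISMATCH: $parcel" >&2
        return 1
    fi
}
```

Usage: verify_parcel CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel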

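Machine configuration

1. The three machines have the following IP addresses and hostnames:

- 192.168.10.236 hadoop-1 (16 GB RAM)

- 192.168.10.237 hadoop-2 (8 GB RAM)

- 192.168.10.238 hadoop-3 (8 GB RAM)

hadoop-1 serves as the master node.

2. Configure /etc/hosts on every node so that the nodes can reach each other by the name hadoop-X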
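One possible way to script step 2 is sketched below. It appends the three entries listed in step 1 and skips any hostname that is already present. The function name add_cluster_hosts and its optional file argument are illustrative assumptions; the argument exists only so the function can be tried on a copy of the hosts file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append the cluster entries to a hosts file,
# skipping any hostname that is already listed.
add_cluster_hosts() {
    local hosts_file="${1:-/etc/hosts}"
    local ip name
    while read -r ip name; do
        # -w matches the hostname as a whole word, -F treats it literally
        if ! grep -qwF -- "$name" "$hosts_file" 2>/dev/null; then
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
        fi
    done <<'EOF'
192.168.10.236 hadoop-1
192.168.10.237 hadoop-2
192.168.10.238 hadoop-3
EOF
}
```

Run add_cluster_hosts as root on each of the three machines. Running it twice should leave the file unchanged the second time.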

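3. Configure passwordless root SSH login from the master node to the other nodes (the reverse direction, from the slave nodes to the master, is not needed)

3.1 On hadoop-1, run ssh-keygen -t rsa -P '' to generate a key pair with an empty passphrase

3.2 Append the public key to the authorized keys file: cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

3.3 Copy the authorized keys file to /root/.ssh/ on hadoop-2 and hadoop-3 so that the master node can log in to the slave nodes without a password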
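As an alternative to copying authorized_keys by hand, the same result can usually be reached with ssh-copy-id, which appends the public key to the remote authorized_keys file. This is a swapped-in technique rather than the procedure described above, and the sketch assumes the OpenSSH client tools are installed. The run wrapper, the DRY_RUN variable and the function name are illustrative assumptions.

```shell
#!/usr/bin/env bash
# Sketch of steps 3.1-3.3 using ssh-copy-id.
# With DRY_RUN=1 the commands are only printed, not executed.
SLAVES="hadoop-2 hadoop-3"

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_passwordless_ssh() {
    local key="${1:-/root/.ssh/id_rsa}"
    local host
    # Generate the key pair only if it does not exist yet
    if [ ! -f "$key" ]; then
        run ssh-keygen -t rsa -P '' -f "$key"
    fi
    for host in $SLAVES; do
        # Asks for the root password of each slave node once
        run ssh-copy-id -i "$key.pub" "root@$host"
    done
}
```

Calling DRY_RUN=1 setup_passwordless_ssh first shows what would be executed. Afterwards, ssh root@hadoop-2 hostname should return without a password prompt.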

4. Configure the JDK

4.1 Install oracle-j2sdk1.7 (on the master and the slave nodes; choose the JDK that matches your CDH version)

$ apt-get install oracle-j2sdk1.7

$ update-alternatives --install /usr/bin/java java /usr/lib/jvm/java-7-oracle-cloudera/bin/java 300

$ update-alternatives --install /usr/bin/javac javac /usr/lib/jvm/java-7-oracle-cloudera/bin/javac 300

4.2 $ vim /etc/profile

Append at the end:

export JAVA_HOME=/usr/lib/jvm/java-7-oracle-cloudera

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

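export PATH=$PATH:${JAVA_HOME}/bin:${JRE_HOME}/bin

4.3 $ vim /root/.bashrc

Append at the end:

source /etc/profile

5. Install MariaDB 5.5 (check the official documentation for compatibility; for details, see the article "Installing MariaDB 10.0 on Ubuntu 14.04 and allowing remote access")

5.1 Run $ apt-get install mariadb-server-5.5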

5.2 Database settings (recommended by the official documentation)

$ vim /etc/mysql/my.cnf

The officially recommended configuration is:

[mysqld]

transaction-isolation = READ-COMMITTED

# Disabling symbolic-links is recommended to prevent assorted security risks;

# to do so, uncomment this line:

# symbolic-links = 0

key_buffer = 16M

key_buffer_size = 32M

max_allowed_packet = 32M

thread_stack = 256K

thread_cache_size = 64

query_cache_limit = 8M

query_cache_size = 64M

query_cache_type = 1

max_connections = 550

#expire_logs_days = 10

#max_binlog_size = 100M

#log_bin should be on a disk with enough free space. Replace '/var/lib/mysql/mysql_binary_log' with an appropriate path for your system

#and chown the specified folder to the mysql user.

log_bin=/var/lib/mysql/mysql_binary_log

binlog_format = mixed

read_buffer_size = 2M

read_rnd_buffer_size = 16M

sort_buffer_size = 8M

join_buffer_size = 8M

# InnoDB settings

innodb_file_per_table = 1

innodb_flush_log_at_trx_commit = 2

innodb_log_buffer_size = 64M

innodb_buffer_pool_size = 4G

innodb_thread_concurrency = 8

innodb_flush_method = O_DIRECT

innodb_log_file_size = 512M

[mysqld_safe]

log-error=/var/log/mysqld.log

pid-file=/var/run/mysqld/mysqld.pid

Restart the service: service mysql restart

5.3 Create the required databases

Open the mysql command line: $ mysql -u root -p

Once inside, copy the whole block below and paste it:

create database amon DEFAULT CHARACTER SET utf8;

grant all on amon.* TO 'amon'@'%' IDENTIFIED BY 'amon_password';

grant all on amon.* TO 'amon'@'CDH' IDENTIFIED BY 'amon_password';

create database smon DEFAULT CHARACTER SET utf8;

grant all on smon.* TO 'smon'@'%' IDENTIFIED BY 'smon_password';

grant all on smon.* TO 'smon'@'CDH' IDENTIFIED BY 'smon_password';

create database rman DEFAULT CHARACTER SET utf8;

grant all on rman.* TO 'rman'@'%' IDENTIFIED BY 'rman_password';

grant all on rman.* TO 'rman'@'CDH' IDENTIFIED BY 'rman_password';

create database hmon DEFAULT CHARACTER SET utf8;

grant all on hmon.* TO 'hmon'@'%' IDENTIFIED BY 'hmon_password';

grant all on hmon.* TO 'hmon'@'CDH' IDENTIFIED BY 'hmon_password';

create database hive DEFAULT CHARACTER SET utf8;

grant all on hive.* TO 'hive'@'%' IDENTIFIED BY 'hive_password';

grant all on hive.* TO 'hive'@'CDH' IDENTIFIED BY 'hive_password';

create database oozie DEFAULT CHARACTER SET utf8;

grant all on oozie.* TO 'oozie'@'%' IDENTIFIED BY 'oozie_password';

grant all on oozie.* TO 'oozie'@'CDH' IDENTIFIED BY 'oozie_password';

create database metastore DEFAULT CHARACTER SET utf8;

grant all on metastore.* TO 'hive'@'%' IDENTIFIED BY 'hive_password';

grant all on metastore.* TO 'hive'@'CDH' IDENTIFIED BY 'hive_password';

GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY 'gaoying' WITH GRANT OPTION;

flush privileges;
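The statements above follow one pattern per database: create it, then grant access from any host and from the host named CDH. A sketch of generating the same statements from a list of database:user pairs is shown below. The function names are hypothetical, the passwords follow the <user>_password placeholders used above, and the root grant is deliberately left out.

```shell
#!/usr/bin/env bash
# Print the CREATE DATABASE / GRANT statements for one database.
# Arguments: database name, user name, password.
emit_db_sql() {
    local db="$1" user="$2" pass="$3"
    cat <<EOF
create database $db DEFAULT CHARACTER SET utf8;
grant all on $db.* TO '$user'@'%' IDENTIFIED BY '$pass';
grant all on $db.* TO '$user'@'CDH' IDENTIFIED BY '$pass';
EOF
}

# database:user pairs taken from the statements above
# (the metastore database belongs to the hive user)
emit_all_sql() {
    local pair db user
    for pair in amon:amon smon:smon rman:rman hmon:hmon hive:hive oozie:oozie metastore:hive; do
        db="${pair%%:*}"
        user="${pair##*:}"
        emit_db_sql "$db" "$user" "${user}_password"
    done
    echo "flush privileges;"
}
```

The output can be reviewed first and then piped into the client, for example emit_all_sql | mysql -u root -p.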

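5.4 Install the MariaDB JDBC driver

$ apt-get install libmysql-java

5.5 Use the Cloudera script to prepare the Cloudera Manager database in mysql (do this only after completing step 6.1)

$ /opt/cloudera-manager/cm-5.6.0/share/cmf/schema/scm_prepare_database.sh mysql -uroot -p --scm-host localhost scm scm scm_password

6. Install Cloudera Manager Server and Agents

6.1 Extract the installation package on the master node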

$ mkdir /opt/cloudera-manager

Extract the downloaded cloudera-manager-trusty-cm5.6.0_amd64.tar.gz into /opt/cloudera-manager

6.2 Create the user

$ sudo useradd --system --home=/opt/cloudera-manager/cm-5.6.0/run/cloudera-scm-server --no-create-home --shell=/bin/false --comment "Cloudera SCM User" cloudera-scm

// --home points to your cloudera-scm-server directory

6.3 Create the local data storage directory for the Cloudera Manager Server (master node)

$ sudo mkdir /var/log/cloudera-scm-server

$ sudo chown cloudera-scm:cloudera-scm /var/log/cloudera-scm-server

6.4 Configure server_host on every Cloudera Manager Agent node (master and slaves)

$ vim /opt/cloudera-manager/cm-5.6.0/etc/cloudera-scm-agent/config.ini

// change server_host to the hostname of the master node

server_host=hadoop-1

6.5 Copy cloudera-manager to the corresponding directory (/opt) on each slave node

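$ scp -r /opt/cloudera-manager root@hadoop-2:/opt

Repeat the command for hadoop-3.

6.6 Create the Parcel directories

6.6.1 On the master node:

Create the parcel repository directory: mkdir -p /opt/cloudera/parcel-repo

Place the CDH5 parcel files in /opt/cloudera/parcel-repo/ on the master node: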

CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel

CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel.sha1

manifest.json

Finally, rename CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel.sha1 to CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel.sha. This step is essential; otherwise the system will try to download the CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel.sha1 file again.
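The copy and rename can be combined so that the .sha1 name never ends up in the repository. A minimal sketch, assuming the three downloaded files sit together in one source directory (the function name stage_parcel and its arguments are illustrative):

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the parcel, its checksum and manifest.json into
# the local parcel repository, storing the checksum under the .sha name.
stage_parcel() {
    local src_dir="$1"
    local repo_dir="${2:-/opt/cloudera/parcel-repo}"
    local parcel="CDH-5.6.0-1.cdh5.6.0.p0.45-trusty.parcel"
    mkdir -p "$repo_dir" || return 1
    cp "$src_dir/$parcel" "$repo_dir/" || return 1
    cp "$src_dir/manifest.json" "$repo_dir/" || return 1
    # Copy the .sha1 file directly to its new name (.sha)
    cp "$src_dir/$parcel.sha1" "$repo_dir/$parcel.sha" || return 1
}
```

For example, stage_parcel /root/downloads would fill /opt/cloudera/parcel-repo, where /root/downloads is only a placeholder path.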

6.6.2 On the slave nodes: mkdir -p /opt/cloudera/parcels
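Going back to step 6.4: the server_host edit can also be made without opening an editor, which is convenient when it has to be repeated on several nodes. The sketch below assumes GNU sed and that config.ini already contains a line starting with server_host=. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set server_host in a Cloudera Manager Agent config.ini.
set_server_host() {
    local host="$1"
    local ini="${2:-/opt/cloudera-manager/cm-5.6.0/etc/cloudera-scm-agent/config.ini}"
    # Replace the value of the existing server_host= line; other lines stay untouched
    sed -i "s/^server_host=.*/server_host=$host/" "$ini"
}
```

Usage: set_server_host hadoop-1, followed by grep server_host on the file to confirm the change.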

6.7 Install the dependencies on every node

Install the following dependencies with apt-get install:

lsb-base

psmisc

bash

libsasl2-modules

libsasl2-modules-gssapi-mit

zlib1g

libxslt1.1

libsqlite3-0

libfuse2

fuse-utils or fuse

rpcbind

6.8 Start the server and the agents

On the master node:

/opt/cloudera-manager/cm-5.6.0/etc/init.d/cloudera-scm-server start

/opt/cloudera-manager/cm-5.6.0/etc/init.d/cloudera-scm-agent start

On the slave nodes:

/opt/cloudera-manager/cm-5.6.0/etc/init.d/cloudera-scm-agent start

If startup fails, check the logs under /opt/cloudera-manager/cm-5.6.0/log

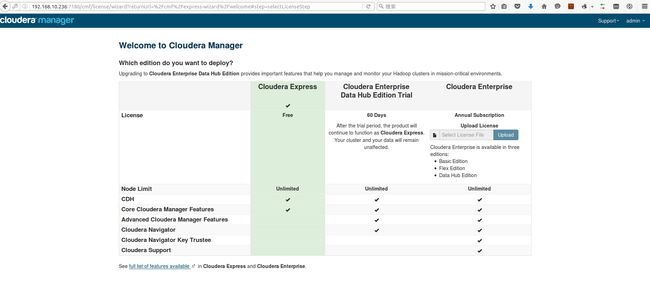

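If there are no errors, wait a few minutes and then open the Cloudera Manager Admin Console in a browser. My master node's IP is 192.168.10.236, so the address is http://192.168.10.236:7180. The default username and password are both admin.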

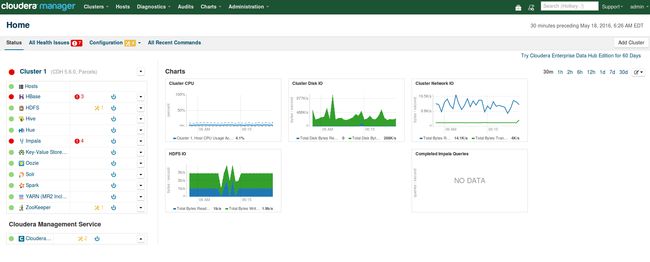
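Instead of guessing how long "a few minutes" is, the console port can be polled until it accepts connections. This is a rough sketch that relies on bash's /dev/tcp pseudo-device, so it needs bash rather than plain sh. The function name, the default timeout and the polling interval are arbitrary assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll a TCP port until it accepts connections
# or the time budget (in seconds) runs out.
wait_for_port() {
    local host="$1" port="$2" max_seconds="${3:-300}" interval="${4:-5}"
    local waited=0
    while [ "$waited" -lt "$max_seconds" ]; do
        # The subshell opens a connection on fd 3 and closes it on exit
        if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
            echo "port $port on $host is open"
            return 0
        fi
        sleep "$interval"
        waited=$((waited + interval))
    done
    echo "gave up after $max_seconds seconds" >&2
    return 1
}
```

Usage: wait_for_port 192.168.10.236 7180. An open port only shows that the server is listening; the login page may still need a moment to respond.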

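7 Installing and configuring CDH5

In the New Hosts tab, search for hadoop-[1-3] to find the hosts that will form the cluster

Select the nodes to install on and click Continue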

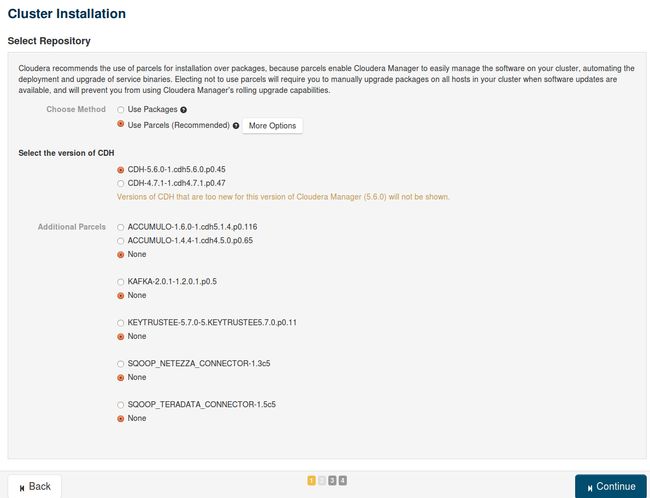

If the following package names appear, the local parcel files are set up correctly; click Continue

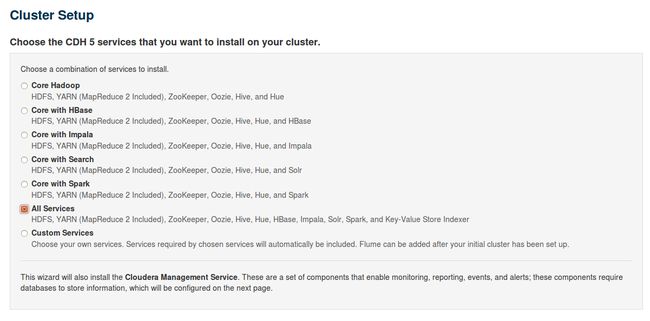

Install all services

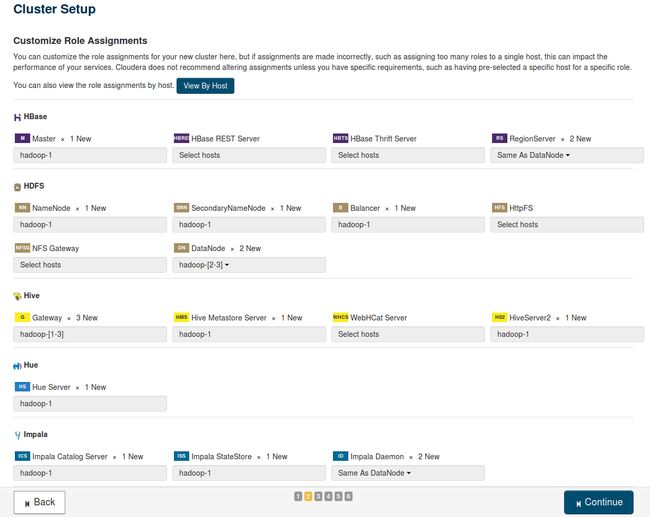

Keep the default values

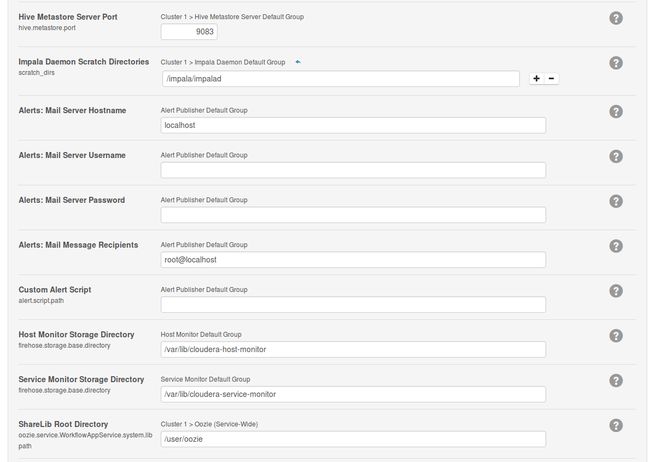

Database configuration

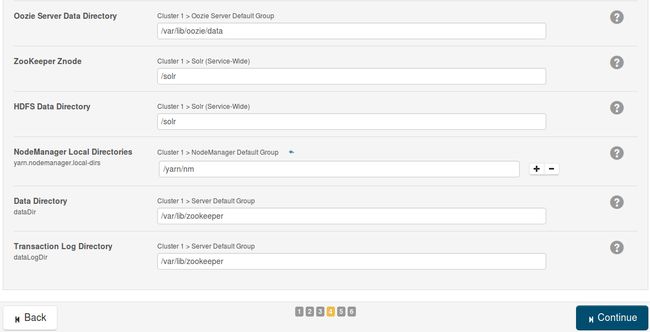

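Keep the defaults here as well

Wait for the installation to finish

Installation succeeded

8 Simple test

8.1 Run a MapReduce job on Hadoop

Run the following in a terminal on the master node: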

sudo -u hdfs hadoop jar \

/opt/cloudera/parcels/CDH/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar \

pi 10 100

The terminal prints the progress of the job:

root@hadoop-1:~# sudo -u hdfs hadoop jar /opt/cloudera/parcels/CDH/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar pi 10 100

Number of Maps = 10

Samples per Map = 100

Wrote input for Map #0

Wrote input for Map #1

Wrote input for Map #2

Wrote input for Map #3

Wrote input for Map #4

Wrote input for Map #5

Wrote input for Map #6

Wrote input for Map #7

Wrote input for Map #8

Wrote input for Map #9

Starting Job

16/05/18 21:26:58 INFO client.RMProxy: Connecting to ResourceManager at hadoop-1/192.168.10.236:8032

16/05/18 21:26:58 INFO input.FileInputFormat: Total input paths to process : 10

16/05/18 21:26:58 INFO mapreduce.JobSubmitter: number of splits:10

16/05/18 21:26:58 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1463558073107_0001

16/05/18 21:26:58 INFO impl.YarnClientImpl: Submitted application application_1463558073107_0001

16/05/18 21:26:59 INFO mapreduce.Job: The url to track the job: http://hadoop-1:8088/proxy/application_1463558073107_0001/

16/05/18 21:26:59 INFO mapreduce.Job: Running job: job_1463558073107_0001

16/05/18 21:27:05 INFO mapreduce.Job: Job job_1463558073107_0001 running in uber mode : false

16/05/18 21:27:05 INFO mapreduce.Job: map 0% reduce 0%

16/05/18 21:27:10 INFO mapreduce.Job: map 10% reduce 0%

16/05/18 21:27:14 INFO mapreduce.Job: map 20% reduce 0%

16/05/18 21:27:15 INFO mapreduce.Job: map 40% reduce 0%

16/05/18 21:27:18 INFO mapreduce.Job: map 50% reduce 0%

16/05/18 21:27:20 INFO mapreduce.Job: map 70% reduce 0%

16/05/18 21:27:22 INFO mapreduce.Job: map 80% reduce 0%

16/05/18 21:27:24 INFO mapreduce.Job: map 100% reduce 0%

16/05/18 21:27:27 INFO mapreduce.Job: map 100% reduce 100%

16/05/18 21:27:27 INFO mapreduce.Job: Job job_1463558073107_0001 completed successfully

16/05/18 21:27:27 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=96

FILE: Number of bytes written=1272025

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=2630

HDFS: Number of bytes written=215

HDFS: Number of read operations=43

HDFS: Number of large read operations=0

HDFS: Number of write operations=3

Job Counters

Launched map tasks=10

Launched reduce tasks=1

Data-local map tasks=10

Total time spent by all maps in occupied slots (ms)=37617

Total time spent by all reduces in occupied slots (ms)=2866

Total time spent by all map tasks (ms)=37617

Total time spent by all reduce tasks (ms)=2866

Total vcore-seconds taken by all map tasks=37617

Total vcore-seconds taken by all reduce tasks=2866

Total megabyte-seconds taken by all map tasks=38519808

Total megabyte-seconds taken by all reduce tasks=2934784

Map-Reduce Framework

Map input records=10

Map output records=20

Map output bytes=180

Map output materialized bytes=340

Input split bytes=1450

Combine input records=0

Combine output records=0

Reduce input groups=2

Reduce shuffle bytes=340

Reduce input records=20

Reduce output records=0

Spilled Records=40

Shuffled Maps =10

Failed Shuffles=0

Merged Map outputs=10

GC time elapsed (ms)=602

CPU time spent (ms)=12210

Physical memory (bytes) snapshot=4803805184

Virtual memory (bytes) snapshot=15372648448

Total committed heap usage (bytes)=4912578560

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=1180

File Output Format Counters

Bytes Written=97

Job Finished in 29.482 seconds

Estimated value of Pi is 3.14800000000000000000
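When the smoke test is repeated, for example after adding a node, the last line of the output can be checked automatically. The sketch below reads the job output on stdin and succeeds only if the reported estimate is close to pi. The function name and the default tolerance of 0.1 are arbitrary choices.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read job output on stdin and check the reported estimate.
# Exit status: 0 = within tolerance, 1 = too far off, 2 = no estimate found.
check_pi_estimate() {
    local tolerance="${1:-0.1}"
    awk -v tol="$tolerance" '
        /Estimated value of Pi is/ {
            est = $NF + 0              # the last field is the estimate
            diff = est - 3.14159265
            if (diff < 0) diff = -diff
            found = 1
        }
        END {
            if (!found) { print "no estimate found"; exit 2 }
            if (diff > tol) { print "estimate " est " is off by " diff; exit 1 }
            print "estimate " est " is within " tol
        }'
}
```

Usage: append 2>&1 | check_pi_estimate to the hadoop jar command from step 8.1.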

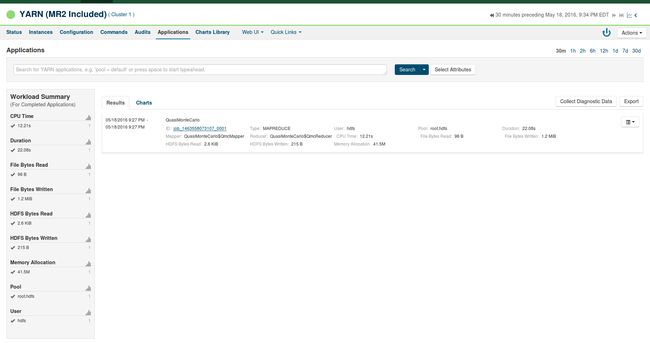

The job can also be viewed in the web UI under Clusters > Cluster 1 > Activities > YARN Applications
