- C# 调用 VITS,推理模型 将文字转wav音频调试 -数字人分支

未来之窗软件服务

c#开发语言人工智能数字人

Microsoft.ML.OnnxRuntime.OnnxRuntimeException:[ErrorCode:InvalidArgument]Inputname:'input_name'isnotinthemetadata在Microsoft.ML.OnnxRuntime.InferenceSession.LookupInputMetadata(StringnodeName)位置D:\a\_w

- nested exception is redis.clients.jedis.exceptions.JedisDataException: NOAUTH Authentication requir

qianyel

springbootredis

springboot1.5X升级2.0时,redis配置密码报错org.springframework.dao.InvalidDataAccessApiUsageException:NOAUTHAuthenticationrequired.;nestedexceptionisredis.clients.jedis.exceptions.JedisDataException:NOAUTHAuthen

- SpringBoot中Redis报错:NOAUTH Authentication required.; nested exception is redis.clients.jedis.exceptio

大象_

本地缓存DB-NoSQL数据仓库

SpringBoot中Redis报错:NOAUTHAuthenticationrequired.;nestedexceptionisredis.clients.jedis.exceptions.JedisDataException:NOAUTHAuthenticationrequired.1、复现org.springframework.dao.InvalidDataAccessApiUsageEx

- Spring Boot集成Redis并设置密码后报错: NOAUTH Authentication required

ta叫我小白

JavaSpringBootRedisspringbootredis

报错信息:io.lettuce.core.RedisCommandExecutionException:NOAUTHAuthenticationrequired.Redis密码配置确认无误,但是只要使用Redis存储就报这个异常。很可能是因为配置的spring.redis.password没有被读取到。基本依赖:implementation'org.springframework.boot:spr

- kafka生产消息失败 ...has passed since batch creation plus linger time

Lichenpar

#记录BUG解决kafka网络安全java

背景:公司要使用华为云的kafka服务,我负责进行技术预研,后期要封装kafka组件。从华为云下载了demo,完全按照开发者文档来进行配置文件配置,但是会报以下错误。org.apache.kafka.common.errors.TimeoutException:Expiring10record(s)fortopic-0:30015mshaspassedsincebatchcreationplusl

- 【从零开始学习JAVA】异常体系介绍

Cools0613

从0开始学Java学习

前言:本文我们将为大家介绍一下异常的整个体系,而我们学习异常,不是为了敲代码的时候不出异常,而是为了能够熟练的处理异常,如何解决代码中的异常。异常的两大分类:我们就以这张图作为线索来详细介绍一下Java中的异常:1.Exceptions(异常)在Java中,Exception(异常)是一种表示非致命错误或异常情况的类或接口。Exception通常是由应用程序引发的,可以被程序员捕获、处理或抛出。E

- 《java面向对象(5)》<不含基本语法>

java小白板

java开发语言

本笔记基于黑马程序员java教程整理,仅供参考1.异常1.1异常分类1.1.1Error指系统级别的错误,程序员无法解决,不必理会1.1.2Exception(异常)分为两类:RuntimeException:运行时异常,编译时程序不会报错,运行时报错,如数组越界其他异常:编译时异常,编译时就会报错运行时异常:publicclassText{publicstaticvoidmain(String[

- JAVA泛型的作用

时光呢

javawindowspython

1.类型安全(TypeSafety)在泛型出现之前,集合类(如ArrayList、HashMap)只能存储Object类型元素,导致以下问题:问题:从集合中取出元素时,需手动强制类型转换,容易因类型不匹配导致运行时错误(如ClassCastException)。//JDK1.4时代:非泛型示例Listlist=newArrayList();list.add("Hello");Integer

- Java 双亲委派模型(Parent Delegation Model)

重生之我在成电转码

java开发语言jvm

一、什么是双亲委派模型?双亲委派模型是Java类加载器(ClassLoader)的一种设计机制:✅避免重复加载✅保证核心类安全、避免被篡改✅提高类加载效率核心思想:类加载请求从子加载器逐级向上委托父加载器,只有父加载器加载失败(ClassNotFoundException)后,子加载器才会尝试自己加载。二、双亲委派的加载流程(核心)当某个类加载器接收到类加载请求时:1️⃣先检查自己是否加载过(缓存

- 大数据学习(75)-大数据组件总结

viperrrrrrr

大数据impalayarnhdfshiveCDHmapreduce

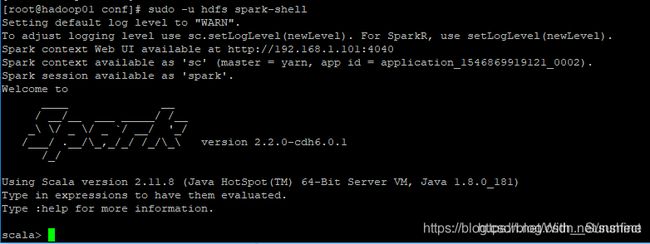

大数据学习系列专栏:哲学语录:用力所能及,改变世界。如果觉得博主的文章还不错的话,请点赞+收藏⭐️+留言支持一下博主哦一、CDHCDH(ClouderaDistributionIncludingApacheHadoop)是由Cloudera公司提供的一个集成了ApacheHadoop以及相关生态系统的发行版本。CDH是一个大数据平台,简化和加速了大数据处理分析的部署和管理。CDH提供Hadoop的

- C++ :try 语句块和异常处理

愚戏师

c++java开发语言

C++异常处理机制:try、catch和throw异常处理是C++中处理运行时错误的机制,通过分离正常逻辑与错误处理提升代码可读性和健壮性。1.基本结构异常处理由三个关键字组成:try:包裹可能抛出异常的代码块。catch:捕获并处理特定类型的异常。throw:主动抛出异常对象。try{//可能抛出异常的代码if(error_condition){throwexception_object;//抛

- 【面试场景题-你知道readTimeOutException,会引发oom异常吗】

F_windy

java面试

今天面试,我讲一个oom的场景。大致是这样:因为我们有一个需要调用第三方接口的http请求,然后因为线程池配置不合理,并且超时时间设置过长,导致线程堆积,最终oom异常。我觉得这个很好理解,然后,面试官一直问,我好像没有讲很清楚。他也有点呆,问我进阻塞队列的线程会运行吗?怎么就oom了?我说,大哥,线程创建出来就要占用内存了呀。他好像还是不懂。然后总结了一下。当系统出现readtimeout异常时

- excel文件有两列,循环读取文件两列赋值到字典列表。字典的有两个key,分别为question和answer。将最终结果输出到json文件

大霞上仙

pythonexceljsonpython

importpandasaspdimportjson#1.读取Excel文件(假设列名为question和answer)try:df=pd.read_excel("input.xlsx",usecols=["question","answer"])#明确指定列exceptExceptionase:print(f"读取文件失败:{str(e)}")exit()#2.转换为字典列表result=[{"

- gradle报错异常【groovy.lang.MissingPropertyException】

北漂老男孩

graldejavasqloracle

报错信息如下:Causedby:groovy.lang.MissingPropertyException:Couldnotsetunknownproperty‘allowInsecureProtocol’forrepositorycontaineroftypeorg.gradle.api.internal.artifacts.dsl.DefaultRepositoryHandler.atorg.g

- 尚硅谷电商数仓6.0,hive on spark,spark启动不了

新时代赚钱战士

hivesparkhadoop

在datagrip执行分区插入语句时报错[42000][40000]Errorwhilecompilingstatement:FAILED:SemanticExceptionFailedtogetasparksession:org.apache.hadoop.hive.ql.metadata.HiveException:FailedtocreateSparkclientforSparksessio

- Java泛型

lgily-1225

日常积累java开发语言后端

Java泛型是Java5引入的一项重要特性,旨在增强类型安全、减少代码冗余,并支持更灵活的代码设计。以下是对泛型的详细介绍及使用指南:一、泛型核心概念泛型允许在类、接口、方法中使用类型参数(如),使得代码可以处理多种数据类型,而无需重复编写逻辑。解决的问题类型安全:避免运行时ClassCastException。消除强制类型转换:编译器自动处理类型转换。代码复用:同一逻辑可处理不同类型的数据。二、

- java 实现数据库备份

李逍遙️

mysql数据库javamysql

importcom.guangyi.project.model.system.DataBaseInFo;importjava.io.BufferedReader;importjava.io.File;importjava.io.FileOutputStream;importjava.io.IOException;importjava.io.InputStream;importjava.io.Inp

- RuoYi框架连接SQL Server时解决“SSL协议不支持”和“加密协议错误”

专注代码十年

ssl网络协议网络

RuoYi框架连接SQLServer时解决“SSL协议不支持”和“加密协议错误”在使用RuoYi框架进行开发时,与SQLServer数据库建立连接可能会遇到SSL协议相关的问题。以下是两个常见的错误信息及其解决方案。错误信息1com.zaxxer.hikari.pool.HikariPool$PoolInitializationException:Failedtoinitializepool;'e

- java 离线语音_Java通过JNA&麦克风调离线语音唤醒

不吃芹菜的鸭梨君

java离线语音

packagecom.day.iFlyInterface.commonUtil.dll.ivw;importjava.io.File;importjava.io.FileInputStream;importjava.io.FileNotFoundException;importjava.io.IOException;importjava.util.Arrays;importjavax.sound.

- python3实现爬取淘宝页面的商品的数据信息(selenium+pyquery+mongodb)

flood_d

mongodbpythonseleniumpyquery爬虫

1.环境须知做这个爬取的时候需要安装好python3.6和selenium、pyquery等等一些比较常用的爬取和解析库,还需要安装MongoDB这个分布式数据库。2.直接上代码spider.pyimportrefromconfigimport*importpymongofromseleniumimportwebdriverfromselenium.common.exceptionsimportT

- 数据中台(二)数据中台相关技术栈

Yuan_CSDF

#数据中台

1.平台搭建1.1.Amabari+HDP1.2.CM+CDH2.相关的技术栈数据存储:HDFS,HBase,Kudu等数据计算:MapReduce,Spark,Flink交互式查询:Impala,Presto在线实时分析:ClickHouse,Kylin,Doris,Druid,Kudu等资源调度:YARN,Mesos,Kubernetes任务调度:Oozie,Azakaban,AirFlow,

- Springboot启动失败:解决「org.yaml.snakeyaml.error.YAMLException」报错全记录

-天凉好秋-

springbootjavaideavisualstudiocode

##关键字Java、Springboot、vscode、idea、nacos启动失败、YAMLException、字符集配置---##背景环境###项目架构-**框架**:SSM(Spring+SpringMVC+MyBatis)-**中间件**:Nacos(配置管理+服务发现)-**配置存储**:Nacos中存储了Springboot的配置,包括:数据库连接信息、Redis连接信息、服务配置等。

- io.lettuce.core.RedisCommandExecutionException: NOAUTH Authentication required可能不是密码问题

专注_每天进步一点点

08Redisjavaredis

背景我用的版本是:io.lettucelettuce-core6.1.10.RELEASE问题描述本地(windows环境)和测试环境redis连接都没有问题,生产环境报错:io.lettuce.core.RedisCommandExecutionException:NOAUTHAuthenticationrequired解决办法(1)第一反应肯定是密码错误,然而检查了密码并没有问题(2)客户端版

- 多线程(4)

噼里啪啦啦.

java算法前端

接着介绍多线程安全问题.由于线程是随机调度,抢占式执行的,随机性就会导致程序的执行顺序产生不同的结果,从而产生BUG.下面是一个线程不安全的例子.packageDemo4;publicclassDemo1{privatestaticintcount=0;publicstaticvoidmain(String[]args)throwsInterruptedException{Threadt1=new

- 解决Python中递归报错的问题

硫酸锌01

Pythonpython

1、问题背景Duringhandlingoftheaboveexception,anotherexceptionoccurred:有没有见到过这个报错?当出现这个报错的时候,意味着报错信息特别特别地长,难以关注到有效信息。那么这种报错是如何产生的?以及如何设计才能避免产生这种冗长的报错?2、我的需求如果我有一个Python的多维数组列表:lst=[[[1,2],[3,4]],[[5,6],[7,8

- Android 高频面试必问之Java基础

2401_83641443

程序员android面试java

BootstrapClassLoader:Bootstrap类加载器负责加载rt.jar中的JDK类文件,它是所有类加载器的父加载器。Bootstrap类加载器没有任何父类加载器,如果调用String.class.getClassLoader(),会返回null,任何基于此的代码会抛出NUllPointerException异常,因此Bootstrap加载器又被称为初始类加载器。ExtClassL

- 一文掌握python异常处理(try...except...)

程序员neil

pythonpython开发语言

目录1、基础结构2、try块3、except块4、else块5、finally块6、自定义异常7、抛出异常8、常用的内置异常类型1)、Exception:捕捉所有异常。2)、BaseException:所有异常的基类。通常不应该直接捕获这个类的实例,除非你确实打算捕获所有异常。3)、SyntaxError:Python语法错误,比如拼写错误或不正确的语句结构。4)、ImportError:尝试导入

- python中 except与 except Exception as e的区别

东木月

pythonpython性能提升python开发语言

python中except与exceptExceptionase的区别1、捕获所有异常使用except#-*-coding:utf-8-*-"""@contact:微信1257309054@file:except与exceptExceptionase的区别.py@time:2024/4/1313:26@author:LDC"""importsysdeffun1():try:sys<

- 编程提示异常就不用挨个度娘了——Python初识必备

爱码小士

Python网络爬虫机器学习web开发人工智能

相信对于很多小白,新手对一些异常提示,都不一定明白其含义,所以给大家整理了这样一份中英对照表,对大家一定有所帮助,当然最好都能熟记于心,这样就不用再去一个个度娘了,觉得这个表不错就点个赞加转发吧,文末更多福利异常名称描述BaseException所有异常的基类SystemExit解释器请求退出KeyboardInterrupt用户中断执行(通常是输入^C)Exception常规错误的基类StopI

- 通过查看Windbg中变量的值,快速定位因内存不足引发bad alloc异常(C++ EH exception - code e06d7363)导致程序崩溃的问题

dvlinker

C/C++实战专栏C++软件调试codee06d7363Windbg内存不足badalloc内存申请失败

目录1、概述2、C++EHexception-codee06d7363与标准C++异常2.1、C++EHexception-codee06d7363说明2.2、C++标准库与C++异常2.2.1、C++抛出异常与捕获异常2.2.2、C++异常类3、查看函数调用堆栈,发现抛出了badalloc内存分配失败的异常4、在调用堆栈中看到CreateBmp创建位图的接口,怀疑可能是使用了异常大的宽高值,导致

- 矩阵求逆(JAVA)初等行变换

qiuwanchi

矩阵求逆(JAVA)

package gaodai.matrix;

import gaodai.determinant.DeterminantCalculation;

import java.util.ArrayList;

import java.util.List;

import java.util.Scanner;

/**

* 矩阵求逆(初等行变换)

* @author 邱万迟

*

- JDK timer

antlove

javajdkschedulecodetimer

1.java.util.Timer.schedule(TimerTask task, long delay):多长时间(毫秒)后执行任务

2.java.util.Timer.schedule(TimerTask task, Date time):设定某个时间执行任务

3.java.util.Timer.schedule(TimerTask task, long delay,longperiod

- JVM调优总结 -Xms -Xmx -Xmn -Xss

coder_xpf

jvm应用服务器

堆大小设置JVM 中最大堆大小有三方面限制:相关操作系统的数据模型(32-bt还是64-bit)限制;系统的可用虚拟内存限制;系统的可用物理内存限制。32位系统下,一般限制在1.5G~2G;64为操作系统对内存无限制。我在Windows Server 2003 系统,3.5G物理内存,JDK5.0下测试,最大可设置为1478m。

典型设置:

java -Xmx

- JDBC连接数据库

Array_06

jdbc

package Util;

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.ResultSet;

import java.sql.SQLException;

import java.sql.Statement;

public class JDBCUtil {

//完

- Unsupported major.minor version 51.0(jdk版本错误)

oloz

java

java.lang.UnsupportedClassVersionError: cn/support/cache/CacheType : Unsupported major.minor version 51.0 (unable to load class cn.support.cache.CacheType)

at org.apache.catalina.loader.WebappClassL

- 用多个线程处理1个List集合

362217990

多线程threadlist集合

昨天发了一个提问,启动5个线程将一个List中的内容,然后将5个线程的内容拼接起来,由于时间比较急迫,自己就写了一个Demo,希望对菜鸟有参考意义。。

import java.util.ArrayList;

import java.util.List;

import java.util.concurrent.CountDownLatch;

public c

- JSP简单访问数据库

香水浓

sqlmysqljsp

学习使用javaBean,代码很烂,仅为留个脚印

public class DBHelper {

private String driverName;

private String url;

private String user;

private String password;

private Connection connection;

privat

- Flex4中使用组件添加柱状图、饼状图等图表

AdyZhang

Flex

1.添加一个最简单的柱状图

? 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28

<?xml version=

"1.0"&n

- Android 5.0 - ProgressBar 进度条无法展示到按钮的前面

aijuans

android

在低于SDK < 21 的版本中,ProgressBar 可以展示到按钮前面,并且为之在按钮的中间,但是切换到android 5.0后进度条ProgressBar 展示顺序变化了,按钮再前面,ProgressBar 在后面了我的xml配置文件如下:

[html]

view plain

copy

<RelativeLa

- 查询汇总的sql

baalwolf

sql

select list.listname, list.createtime,listcount from dream_list as list , (select listid,count(listid) as listcount from dream_list_user group by listid order by count(

- Linux du命令和df命令区别

BigBird2012

linux

1,两者区别

du,disk usage,是通过搜索文件来计算每个文件的大小然后累加,du能看到的文件只是一些当前存在的,没有被删除的。他计算的大小就是当前他认为存在的所有文件大小的累加和。

- AngularJS中的$apply,用还是不用?

bijian1013

JavaScriptAngularJS$apply

在AngularJS开发中,何时应该调用$scope.$apply(),何时不应该调用。下面我们透彻地解释这个问题。

但是首先,让我们把$apply转换成一种简化的形式。

scope.$apply就像一个懒惰的工人。它需要按照命

- [Zookeeper学习笔记十]Zookeeper源代码分析之ClientCnxn数据序列化和反序列化

bit1129

zookeeper

ClientCnxn是Zookeeper客户端和Zookeeper服务器端进行通信和事件通知处理的主要类,它内部包含两个类,1. SendThread 2. EventThread, SendThread负责客户端和服务器端的数据通信,也包括事件信息的传输,EventThread主要在客户端回调注册的Watchers进行通知处理

ClientCnxn构造方法

&

- 【Java命令一】jmap

bit1129

Java命令

jmap命令的用法:

[hadoop@hadoop sbin]$ jmap

Usage:

jmap [option] <pid>

(to connect to running process)

jmap [option] <executable <core>

(to connect to a

- Apache 服务器安全防护及实战

ronin47

此文转自IBM.

Apache 服务简介

Web 服务器也称为 WWW 服务器或 HTTP 服务器 (HTTP Server),它是 Internet 上最常见也是使用最频繁的服务器之一,Web 服务器能够为用户提供网页浏览、论坛访问等等服务。

由于用户在通过 Web 浏览器访问信息资源的过程中,无须再关心一些技术性的细节,而且界面非常友好,因而 Web 在 Internet 上一推出就得到

- unity 3d实例化位置出现布置?

brotherlamp

unity教程unityunity资料unity视频unity自学

问:unity 3d实例化位置出现布置?

答:实例化的同时就可以指定被实例化的物体的位置,即 position

Instantiate (original : Object, position : Vector3, rotation : Quaternion) : Object

这样你不需要再用Transform.Position了,

如果你省略了第二个参数(

- 《重构,改善现有代码的设计》第八章 Duplicate Observed Data

bylijinnan

java重构

import java.awt.Color;

import java.awt.Container;

import java.awt.FlowLayout;

import java.awt.Label;

import java.awt.TextField;

import java.awt.event.FocusAdapter;

import java.awt.event.FocusE

- struts2更改struts.xml配置目录

chiangfai

struts.xml

struts2默认是读取classes目录下的配置文件,要更改配置文件目录,比如放在WEB-INF下,路径应该写成../struts.xml(非/WEB-INF/struts.xml)

web.xml文件修改如下:

<filter>

<filter-name>struts2</filter-name>

<filter-class&g

- redis做缓存时的一点优化

chenchao051

redishadooppipeline

最近集群上有个job,其中需要短时间内频繁访问缓存,大概7亿多次。我这边的缓存是使用redis来做的,问题就来了。

首先,redis中存的是普通kv,没有考虑使用hash等解结构,那么以为着这个job需要访问7亿多次redis,导致效率低,且出现很多redi

- mysql导出数据不输出标题行

daizj

mysql数据导出去掉第一行去掉标题

当想使用数据库中的某些数据,想将其导入到文件中,而想去掉第一行的标题是可以加上-N参数

如通过下面命令导出数据:

mysql -uuserName -ppasswd -hhost -Pport -Ddatabase -e " select * from tableName" > exportResult.txt

结果为:

studentid

- phpexcel导出excel表简单入门示例

dcj3sjt126com

PHPExcelphpexcel

先下载PHPEXCEL类文件,放在class目录下面,然后新建一个index.php文件,内容如下

<?php

error_reporting(E_ALL);

ini_set('display_errors', TRUE);

ini_set('display_startup_errors', TRUE);

if (PHP_SAPI == 'cli')

die('

- 爱情格言

dcj3sjt126com

格言

1) I love you not because of who you are, but because of who I am when I am with you. 我爱你,不是因为你是一个怎样的人,而是因为我喜欢与你在一起时的感觉。 2) No man or woman is worth your tears, and the one who is, won‘t

- 转 Activity 详解——Activity文档翻译

e200702084

androidUIsqlite配置管理网络应用

activity 展现在用户面前的经常是全屏窗口,你也可以将 activity 作为浮动窗口来使用(使用设置了 windowIsFloating 的主题),或者嵌入到其他的 activity (使用 ActivityGroup )中。 当用户离开 activity 时你可以在 onPause() 进行相应的操作 。更重要的是,用户做的任何改变都应该在该点上提交 ( 经常提交到 ContentPro

- win7安装MongoDB服务

geeksun

mongodb

1. 下载MongoDB的windows版本:mongodb-win32-x86_64-2008plus-ssl-3.0.4.zip,Linux版本也在这里下载,下载地址: http://www.mongodb.org/downloads

2. 解压MongoDB在D:\server\mongodb, 在D:\server\mongodb下创建d

- Javascript魔法方法:__defineGetter__,__defineSetter__

hongtoushizi

js

转载自: http://www.blackglory.me/javascript-magic-method-definegetter-definesetter/

在javascript的类中,可以用defineGetter和defineSetter_控制成员变量的Get和Set行为

例如,在一个图书类中,我们自动为Book加上书名符号:

function Book(name){

- 错误的日期格式可能导致走nginx proxy cache时不能进行304响应

jinnianshilongnian

cache

昨天在整合某些系统的nginx配置时,出现了当使用nginx cache时无法返回304响应的情况,出问题的响应头: Content-Type:text/html; charset=gb2312 Date:Mon, 05 Jan 2015 01:58:05 GMT Expires:Mon , 05 Jan 15 02:03:00 GMT Last-Modified:Mon, 05

- 数据源架构模式之行数据入口

home198979

PHP架构行数据入口

注:看不懂的请勿踩,此文章非针对java,java爱好者可直接略过。

一、概念

行数据入口(Row Data Gateway):充当数据源中单条记录入口的对象,每行一个实例。

二、简单实现行数据入口

为了方便理解,还是先简单实现:

<?php

/**

* 行数据入口类

*/

class OrderGateway {

/*定义元数

- Linux各个目录的作用及内容

pda158

linux脚本

1)根目录“/” 根目录位于目录结构的最顶层,用斜线(/)表示,类似于

Windows

操作系统的“C:\“,包含Fedora操作系统中所有的目录和文件。 2)/bin /bin 目录又称为二进制目录,包含了那些供系统管理员和普通用户使用的重要

linux命令的二进制映像。该目录存放的内容包括各种可执行文件,还有某些可执行文件的符号连接。常用的命令有:cp、d

- ubuntu12.04上编译openjdk7

ol_beta

HotSpotjvmjdkOpenJDK

获取源码

从openjdk代码仓库获取(比较慢)

安装mercurial Mercurial是一个版本管理工具。 sudo apt-get install mercurial

将以下内容添加到$HOME/.hgrc文件中,如果没有则自己创建一个: [extensions] forest=/home/lichengwu/hgforest-crew/forest.py fe

- 将数据库字段转换成设计文档所需的字段

vipbooks

设计模式工作正则表达式

哈哈,出差这么久终于回来了,回家的感觉真好!

PowerDesigner的物理数据库一出来,设计文档中要改的字段就多得不计其数,如果要把PowerDesigner中的字段一个个Copy到设计文档中,那将会是一件非常痛苦的事情。