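Hadoop 2.4: Compiling, Installing, and a WordCount Test

I'll skip the Linux installation; everything here was done on CentOS 6.5.

First, compiling Hadoop 2.4

I was on a 64-bit system, so I had no choice but to compile it myself: the native libraries bundled with the official binary release are built for 32-bit.

This mainly follows http://blog.csdn.net/wangmuming/article/details/26594923

Install the JDK

Hadoop is written in Java, so a JDK is required to compile it.

If the system ships with OpenJDK, remove it before installing the JDK. First list the installed Java packages: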

rpm -qa | grep java

The output looks like this:

java-1.4.2-gcj-compat-1.4.2.0-40jpp.115

java-1.6.0-openjdk-1.6.0.0-1.7.b09.el5

Uninstall them:

rpm -e --nodeps java-1.4.2-gcj-compat-1.4.2.0-40jpp.115

rpm -e --nodeps java-1.6.0-openjdk-1.6.0.0-1.7.b09.el5
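The removal step can also be scripted. The sketch below is only an illustration: the function names filter_bundled_java and print_removal_commands are made up here, and the pattern assumes the bundled packages have openjdk or gcj in their names, as in the listing above. It prints the rpm commands instead of running them, so they can be reviewed first.

```shell
#!/usr/bin/env bash
# Hypothetical helpers, not part of rpm or of the original post.

# Read an `rpm -qa` listing on stdin and keep only bundled Java packages.
# Assumption: their names contain "openjdk" or "gcj".
filter_bundled_java() {
    grep -E 'openjdk|gcj' || true   # "|| true": no match is not an error
}

# Print (do not execute) one removal command per matching package.
print_removal_commands() {
    filter_bundled_java | while IFS= read -r pkg; do
        printf 'rpm -e --nodeps %s\n' "$pkg"
    done
}
```

Typical use would be: rpm -qa | print_removal_commands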

Download the JDK from the Oracle website at http://www.oracle.com/technetwork/java/javase/downloads/jdk7-downloads-1880260.html and choose jdk-7u60-linux-x64.tar.gz.

Extract the JDK with:

tar -zxvf jdk-7u60-linux-x64.tar.gz

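This creates a folder named jdk1.7.0_60. Next, add it to the environment variables.

(Do not use JDK 1.8 here. Supposedly 1.8 is not backward compatible; in any case, my build failed with 1.8, so a friendly tip: use 1.7.)

Run vi /etc/profile and add the following lines, with JAVA_HOME pointing at wherever jdk1.7.0_60 was extracted:

export JAVA_HOME=/usr/java/jdk1.7.0_60

export JAVA_OPTS="-Xms1024m -Xmx1024m"

export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar:$CLASSPATH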

export PATH=$JAVA_HOME/bin:$PATH

After saving and exiting, run:

source /etc/profile

java -version

If it prints the version information, the setup is correct.
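Since this build was done with JDK 1.7 and failed for me with 1.8, it can help to check the release automatically. This is a minimal sketch: java_release_of and is_jdk7 are hypothetical names, and the parsing assumes the usual banner format such as java version "1.7.0_60".

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking the JDK release.

# Extract the "major.minor" release (e.g. 1.7) from a banner line such as:
#   java version "1.7.0_60"
# Prints nothing when the line does not look like a version banner.
java_release_of() {
    printf '%s\n' "$1" | sed -n 's/.*version "\([0-9]*\.[0-9]*\).*/\1/p'
}

# Succeed only for the 1.7 line that this post builds with.
is_jdk7() {
    [ "$(java_release_of "$1")" = "1.7" ]
}
```

java -version writes its banner to standard error, so a call would look like: is_jdk7 "$(java -version 2>&1 | head -n 1)" && echo "JDK 1.7 found"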

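Install Maven

The Hadoop source tree is managed with Maven, so Maven must be installed. Download it from the Maven website at http://maven.apache.org/download.cgi and choose apache-maven-3.1.0-bin.tar.gz rather than another 3.1 release.

Extract Maven with: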

tar -zxvf apache-maven-3.1.0-bin.tar.gz

This creates a folder named apache-maven-3.1.0. Next, add it to the environment variables.

Run vi /etc/profile and add the following lines:

MAVEN_HOME=/usr/maven/apache-maven-3.1.0

export MAVEN_HOME

export PATH=${PATH}:${MAVEN_HOME}/bin

After saving and exiting, run:

source /etc/profile

mvn -version

If mvn prints its version information, the configuration is correct.
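One side effect of this approach: every time /etc/profile is sourced again, the same directory is appended to PATH once more. A small guard avoids the duplicates. This is a sketch only, and append_once is a hypothetical helper name, not something the shell provides.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a directory to a colon-separated list
# only if it is not already in the list.
#   $1 = current list (for example "$PATH"), $2 = directory to add
append_once() {
    case ":$1:" in
        *":$2:"*) printf '%s\n' "$1" ;;            # already present: unchanged
        *)        printf '%s\n' "${1:+$1:}$2" ;;   # absent: append with a colon
    esac
}
```

In /etc/profile this would be used as: PATH="$(append_once "$PATH" "$MAVEN_HOME/bin")"; export PATH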

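Install FindBugs (optional)

FindBugs is a static analysis tool that the build uses when generating documentation. If you do not need the documentation, skip this step. Download it from http://sourceforge.jp/projects/sfnet_findbugs/releases/ and choose findbugs-3.0.0-dev-20131204-e3cbbd5.tar.gz.

Extract FindBugs with: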

tar -zxvf findbugs-3.0.0-dev-20131204-e3cbbd5.tar.gz

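This creates a folder named findbugs-3.0.0-dev-20131204-e3cbbd5. Next, add it to the environment variables.

Run vi /etc/profile and add the following lines; FINDBUGS_HOME must point at the extracted folder (the value below assumes it was moved to /usr/local/findbugs):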

FINDBUGS_HOME=/usr/local/findbugs

export FINDBUGS_HOME

export PATH=${PATH}:${FINDBUGS_HOME}/bin

After saving and exiting, run:

source /etc/profile

findbugs -version

If findbugs prints its version number, the configuration is correct.

Install protoc

Hadoop uses Protocol Buffers for communication. Download it from https://code.google.com/p/protobuf/downloads/list and choose protobuf-2.5.0.tar.gz.

Building protoc requires a few tools; run the following commands in order:

yum install gcc

yum install gcc-c++

yum install make

On Red Hat 6, gcc and make are already installed. While these commands run, you will be prompted to type "y" several times.

Then extract protobuf with:

tar -zxvf protobuf-2.5.0.tar.gz

This creates a folder named protobuf-2.5.0. Compile protobuf with:

cd protobuf-2.5.0

./configure --prefix=/usr/local/protoc/

make && make install

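As long as there are no errors, you are fine.

When it finishes, the compiled files are under /usr/local/protoc/. Now set the environment variable.

Run vi /etc/profile and add the following line: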

export PATH=${PATH}:/usr/local/protoc/bin

After saving and exiting, run:

source /etc/profile

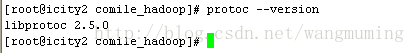

protoc --version

If it prints libprotoc 2.5.0, the configuration is correct.
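This post uses protobuf 2.5.0, and as far as I know the Hadoop 2.4 build expects exactly that version, so a mismatched protoc is worth catching before the long Maven run. A minimal sketch, with hypothetical function names and assuming the banner format libprotoc 2.5.0:

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking the protoc version.

# Extract the version number from a banner such as: libprotoc 2.5.0
# Prints nothing when the text is not a libprotoc banner.
protoc_version_of() {
    printf '%s\n' "$1" | awk '$1 == "libprotoc" { print $2 }'
}

# Succeed only for the 2.5.0 release used in this post.
is_expected_protoc() {
    [ "$(protoc_version_of "$1")" = "2.5.0" ]
}
```

A call would look like: is_expected_protoc "$(protoc --version)" || echo "unexpected protoc version" >&2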

Install the other dependencies

Run the following commands in order:

yum install cmake

yum install openssl-devel

yum install ncurses-devel

Once they are installed, you are done.
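Before starting the build, it is cheap to confirm that every tool installed so far is actually on PATH. The sketch below uses only the standard command -v builtin; missing_tools is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each named command that cannot be found on PATH.
# Prints nothing when everything is available.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}
```

For the tools in this post, the call would be: missing_tools java mvn protoc gcc g++ make cmake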

Compile the Hadoop 2.4 source

Download the 2.4 stable release from the mirror at http://apache.fayea.com/apache-mirror/hadoop/common/hadoop-2.4.0/ and choose hadoop-2.4.0-src.tar.gz.

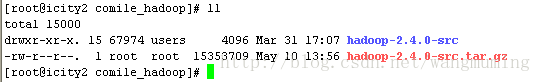

Extract the Hadoop source with:

tar -zxvf hadoop-2.4.0-src.tar.gz

Change into the extracted hadoop-2.4.0-src directory and run:

mvn package -DskipTests -Pdist,native,docs

If FindBugs is not installed, drop docs from the command above and the documentation will not be generated.
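The choice between the two command lines can be made by a script. This is a sketch under one assumption: FindBugs counts as installed when the directory passed in (normally $FINDBUGS_HOME) exists. The function name hadoop_build_command is hypothetical, and it only prints the command rather than running it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the mvn command line for this build.
# The docs profile is included only when the directory given as $1
# (a FINDBUGS_HOME candidate) is non-empty and exists.
hadoop_build_command() {
    hbc_profiles="dist,native"
    if [ -n "${1:-}" ] && [ -d "$1" ]; then
        hbc_profiles="$hbc_profiles,docs"
    fi
    printf 'mvn package -DskipTests -P%s\n' "$hbc_profiles"
}
```

Running hadoop_build_command "$FINDBUGS_HOME" inside the source directory shows which of the two commands applies on the current machine.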

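This command downloads the dependency JARs from the Internet and compiles the Hadoop source, which takes a long time.

After a long wait, you should see output like this: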

main:

[INFO] Executed tasks

[INFO]

[INFO] --- maven-javadoc-plugin:2.8.1:jar (module-javadocs) @ hadoop-dist ---

[INFO] Building jar: /usr/local/comile_hadoop/hadoop-2.4.0-src/hadoop-dist/target/hadoop-dist-2.4.0-javadoc.jar

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary:

[INFO]

[INFO] Apache Hadoop Main ................................ SUCCESS [30.591s]

[INFO] Apache Hadoop Project POM ......................... SUCCESS [8.024s]

[INFO] Apache Hadoop Annotations ......................... SUCCESS [19.005s]

[INFO] Apache Hadoop Assemblies .......................... SUCCESS [0.908s]

[INFO] Apache Hadoop Project Dist POM .................... SUCCESS [16.797s]

[INFO] Apache Hadoop Maven Plugins ....................... SUCCESS [14.676s]

[INFO] Apache Hadoop MiniKDC ............................. SUCCESS [20.931s]

[INFO] Apache Hadoop Auth ................................ SUCCESS [52.092s]

[INFO] Apache Hadoop Auth Examples ....................... SUCCESS [10.980s]

[INFO] Apache Hadoop Common .............................. SUCCESS [11:32.369s]

[INFO] Apache Hadoop NFS ................................. SUCCESS [24.092s]

[INFO] Apache Hadoop Common Project ...................... SUCCESS [0.066s]

[INFO] Apache Hadoop HDFS ................................ SUCCESS [23:42.723s]

[INFO] Apache Hadoop HttpFS .............................. SUCCESS [59.023s]

[INFO] Apache Hadoop HDFS BookKeeper Journal ............. SUCCESS [13.856s]

[INFO] Apache Hadoop HDFS-NFS ............................ SUCCESS [18.362s]

[INFO] Apache Hadoop HDFS Project ........................ SUCCESS [0.095s]

[INFO] hadoop-yarn ....................................... SUCCESS [0.153s]

[INFO] hadoop-yarn-api ................................... SUCCESS [2:19.762s]

[INFO] hadoop-yarn-common ................................ SUCCESS [1:09.344s]

[INFO] hadoop-yarn-server ................................ SUCCESS [0.214s]

[INFO] hadoop-yarn-server-common ......................... SUCCESS [16.529s]

[INFO] hadoop-yarn-server-nodemanager .................... SUCCESS [39.870s]

[INFO] hadoop-yarn-server-web-proxy ...................... SUCCESS [7.958s]

[INFO] hadoop-yarn-server-applicationhistoryservice ...... SUCCESS [10.571s]

[INFO] hadoop-yarn-server-resourcemanager ................ SUCCESS [30.395s]

[INFO] hadoop-yarn-server-tests .......................... SUCCESS [3.403s]

[INFO] hadoop-yarn-client ................................ SUCCESS [10.963s]

[INFO] hadoop-yarn-applications .......................... SUCCESS [0.073s]

[INFO] hadoop-yarn-applications-distributedshell ......... SUCCESS [5.617s]

[INFO] hadoop-yarn-applications-unmanaged-am-launcher .... SUCCESS [8.685s]

[INFO] hadoop-yarn-site .................................. SUCCESS [0.415s]

[INFO] hadoop-yarn-project ............................... SUCCESS [10.655s]

[INFO] hadoop-mapreduce-client ........................... SUCCESS [0.498s]

[INFO] hadoop-mapreduce-client-core ...................... SUCCESS [1:09.961s]

[INFO] hadoop-mapreduce-client-common .................... SUCCESS [35.913s]

[INFO] hadoop-mapreduce-client-shuffle ................... SUCCESS [9.373s]

[INFO] hadoop-mapreduce-client-app ....................... SUCCESS [24.999s]

[INFO] hadoop-mapreduce-client-hs ........................ SUCCESS [20.270s]

[INFO] hadoop-mapreduce-client-jobclient ................. SUCCESS [22.128s]

[INFO] hadoop-mapreduce-client-hs-plugins ................ SUCCESS [2.831s]

[INFO] Apache Hadoop MapReduce Examples .................. SUCCESS [16.102s]

[INFO] hadoop-mapreduce .................................. SUCCESS [8.448s]

[INFO] Apache Hadoop MapReduce Streaming ................. SUCCESS [14.307s]

[INFO] Apache Hadoop Distributed Copy .................... SUCCESS [20.261s]

[INFO] Apache Hadoop Archives ............................ SUCCESS [5.800s]

[INFO] Apache Hadoop Rumen ............................... SUCCESS [22.152s]

[INFO] Apache Hadoop Gridmix ............................. SUCCESS [11.213s]

[INFO] Apache Hadoop Data Join ........................... SUCCESS [5.208s]

[INFO] Apache Hadoop Extras .............................. SUCCESS [6.728s]

[INFO] Apache Hadoop Pipes ............................... SUCCESS [6.053s]

[INFO] Apache Hadoop OpenStack support ................... SUCCESS [25.927s]

[INFO] Apache Hadoop Client .............................. SUCCESS [8.744s]

[INFO] Apache Hadoop Mini-Cluster ........................ SUCCESS [0.154s]

[INFO] Apache Hadoop Scheduler Load Simulator ............ SUCCESS [15.622s]

[INFO] Apache Hadoop Tools Dist .......................... SUCCESS [9.695s]

[INFO] Apache Hadoop Tools ............................... SUCCESS [0.110s]

[INFO] Apache Hadoop Distribution ........................ SUCCESS [3:59.323s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 56:17.413s

[INFO] Finished at: Thu May 22 17:13:49 CST 2014

[INFO] Final Memory: 88M/371M

[INFO] ------------------------------------------------------------------------
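With this many modules, scanning the summary by eye is error-prone. If the Maven output was saved to a file, a couple of grep calls can do the check. This is a sketch: the function names are hypothetical, and it assumes Maven's usual summary format, where the module name is followed by a run of dots and then the status.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking a saved Maven log file ($1).

# Succeed if the log contains Maven's final success banner.
build_succeeded() {
    grep -q 'BUILD SUCCESS' "$1"
}

# Print the reactor-summary lines whose status is FAILURE or SKIPPED.
# Prints nothing when every module succeeded.
unfinished_modules() {
    grep -E '\.{2,} (FAILURE|SKIPPED)' "$1" || true
}
```

For example, run the build as mvn package -DskipTests -Pdist,native 2>&1 | tee build.log (build.log is just an example file name), then: build_succeeded build.log || unfinished_modules build.log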

That's it, the build is complete.

The compiled output is under hadoop-2.4.0-src/hadoop-dist/target.
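Since the whole point of compiling was the 64-bit environment, it is worth confirming that the native library really came out 64-bit. The sketch below classifies one line of output from the standard file utility; is_64bit_elf is a hypothetical name, and the library path in the usage line is my assumption about where the build places it, so adjust it to what you find under target.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if a line of `file` output describes
# a 64-bit ELF object, fail otherwise.
is_64bit_elf() {
    case "$1" in
        *"ELF 64-bit"*) return 0 ;;
        *)              return 1 ;;
    esac
}
```

A call might look like: is_64bit_elf "$(file -L hadoop-dist/target/hadoop-2.4.0/lib/native/libhadoop.so)" && echo "64-bit native library"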

To be continued...