LinkedIn Databus

Why?

Relational databases are still the mainstream choice for the primary data store

Relational Databases have been around for a long time and have become a trusted storage medium for all of a company's data.

The traditional data-warehouse approach: ETL and OLAP

Data is pulled off this primary data store, transformed, and then stored in a secondary data store, such as a data warehouse.

The industry typically uses ETL to run nightly jobs to give executives a view of the previous day's, week's, month's, year's business performance.

OLTP (Online Transaction Processing) vs. OLAP (Online Analytical Processing):

This differentiates between their uses -- OLTP for primary data serving, OLAP for analytic processing of a modified copy of the primary data.

But recently, a large number of near-real-time data needs have emerged

At LinkedIn, the primary data store also feeds real-time search indexes, real-time network graph indexes, cache coherency, database read replicas, etc... These are examples of LinkedIn's near-real-time data needs.

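For needs like these, ETL and OLAP cannot deliver the required timeliness.

So the question is: how do we move data from the primary data store to another system for processing in near real time?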

How?

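LinkedIn Databus brings change-event latency down to the low milliseconds and handles thousands of change events per second per server, while also supporting infinite lookback and rich change-subscription functionality.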

How are changes captured?

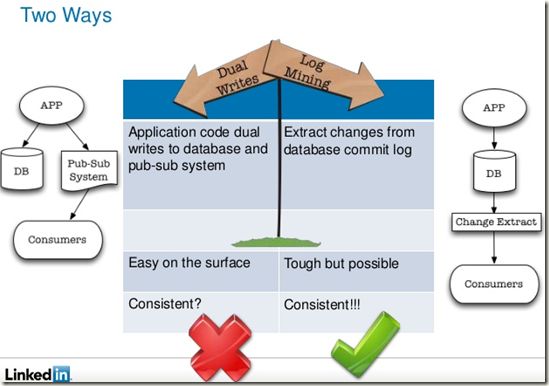

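There are two common ways to handle this need:

Application-driven dual writes: the application layer writes to both the database and a separate messaging system. This looks simple to implement, because the application code that writes to the database is under your control. However, it introduces a consistency problem: without a complex coordination protocol (such as two-phase commit or Paxos), it is hard to guarantee that the database and the messaging system stay exactly in lock-step when something fails. Both systems must apply exactly the same writes and serialize them in exactly the same order. Things get even harder if the writes are conditional or have partial-update semantics.

Database log mining: treat the database as the single source of truth and extract changes from its transaction or commit log. This solves the consistency problem but is hard to implement, because databases such as Oracle and MySQL have private transaction log formats and replication solutions, and nothing guarantees that these remain usable across version upgrades. Because the goal is to take data changes initiated by application code and write them into another database, the replication system has to operate at the user level and be source-independent. For a fast-moving technology company, this independence from the data source is very important: it avoids technology lock-in of the application stack and avoids being tied to a binary format.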

If the requirements are not very strict, the first approach is acceptable: write to the pub-sub system only after the DB write has succeeded.
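To make the consistency risk of dual writes concrete, here is a minimal sketch of the "write the DB, then publish" pattern. Everything in it is hypothetical: `FlakyBus` is an in-process stand-in for a pub-sub system, a plain dict stands in for the database, and the failure is injected by hand. It is not how Databus or any real messaging client works.

```python
class FlakyBus:
    """Hypothetical in-process stand-in for a pub-sub system."""

    def __init__(self, fail_on=()):
        self.events = []
        self.fail_on = set(fail_on)  # keys whose publish should fail

    def publish(self, key, value):
        if key in self.fail_on:
            raise ConnectionError(f"publish failed for {key!r}")
        self.events.append((key, value))


def dual_write(db, bus, key, value):
    """Write to the DB first, then publish; return True if both succeeded."""
    db[key] = value              # step 1: the primary store is updated
    try:
        bus.publish(key, value)  # step 2: best-effort notification
    except ConnectionError:
        return False             # the DB and the bus have now diverged
    return True


db = {}
bus = FlakyBus(fail_on={"member:2"})
results = [dual_write(db, bus, f"member:{i}", f"v{i}") for i in (1, 2, 3)]

# Keys that exist in the DB but were never announced to subscribers.
missing = sorted(set(db) - {key for key, _ in bus.events})
print(results, missing)
```

The write for `member:2` is committed to the database but never reaches the bus, so downstream consumers silently miss it. Whether that is tolerable depends on how strict the requirements are, which is exactly the trade-off described above.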

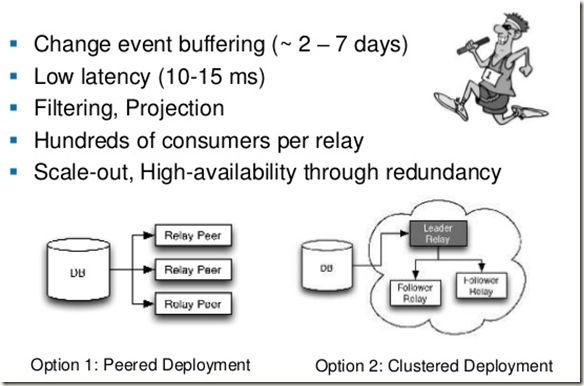

How are changes delivered with such low latency?

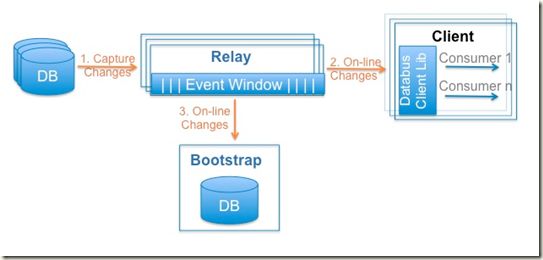

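Relays

A relay is essentially a memory buffer, which is once again a space-for-time trade-off. To be fast enough, there must be enough relays, placed close enough to the clients, because the speed at which a client reads from a relay's memory buffer cannot be optimized much further. There are two ways to organize a relay cluster: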

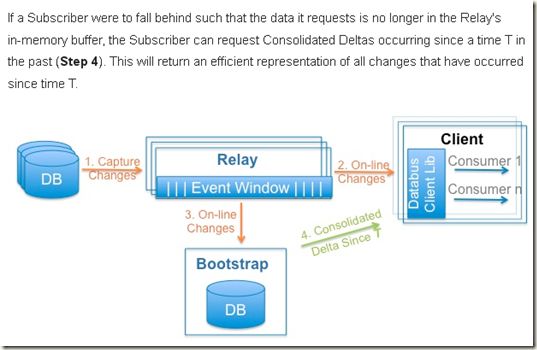

A Databus Relay will pull the recently committed transactions from the source Database (e.g. Oracle, MySQL, etc...) (Step 1).

The Relay will serialize this data into a compact form (Avro etc...) and store the result in a circular in-memory buffer.

Clients (subscribers) listening for events will pull recent online changes as they appear in the Relay (Step 2).

A Bootstrap component is also listening to online changes as they appear in the Relay (Step 3).

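First, for efficiency, the change data is converted into a compact format (such as Avro) and placed in a circular in-memory buffer.

Next, clients (subscribers) listen for updates and pull them from the Relay's memory buffer. A push model does not work here, because consumers may process changes at very different speeds.

In the Relay, data lives in a memory buffer, and memory is limited, so the buffer is circular.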
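The pull model and the circular buffer described above can be sketched as follows. This is an illustration under stated assumptions, not the Databus implementation: `RelayBuffer`, its integer sequence numbers, and the `LookupError` signal are all hypothetical names chosen for the example.

```python
from collections import deque


class RelayBuffer:
    """Bounded in-memory event buffer; the oldest events are overwritten."""

    def __init__(self, capacity):
        self.events = deque(maxlen=capacity)  # (seq, payload) pairs
        self.next_seq = 1

    def append(self, payload):
        self.events.append((self.next_seq, payload))
        self.next_seq += 1

    def oldest_seq(self):
        return self.events[0][0] if self.events else self.next_seq

    def pull(self, after_seq, limit=100):
        """Return up to `limit` events with seq > after_seq.

        Raises LookupError if the event right after `after_seq` has
        already been overwritten, i.e. the client fell too far behind.
        """
        if after_seq + 1 < self.oldest_seq():
            raise LookupError("requested events are no longer buffered")
        return [e for e in self.events if e[0] > after_seq][:limit]


relay = RelayBuffer(capacity=3)
for i in range(1, 6):
    relay.append(f"change-{i}")  # events 1 and 2 get overwritten

fast_batch = relay.pull(after_seq=3)  # a client that has kept up
try:
    relay.pull(after_seq=0)           # a client that fell behind
    slow_client_ok = True
except LookupError:
    slow_client_ok = False
print(fast_batch, slow_client_ok)
```

Each client tracks its own position and pulls at its own pace, so a slow consumer never blocks a fast one. The cost is that a client which falls behind the buffer window can no longer be served from the relay alone.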

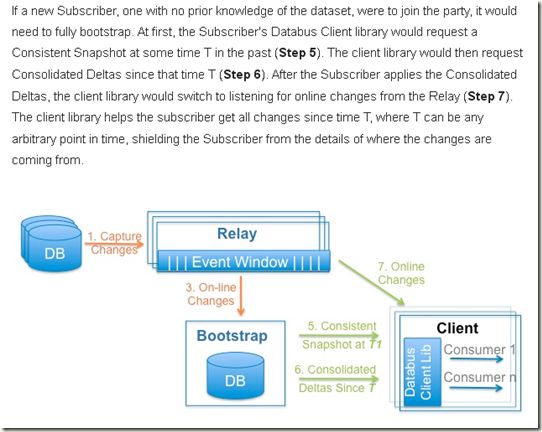

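The problem is that every client has different requirements, so there is no way to know when data has truly expired. There has to be a way to keep historical data, and that is Bootstrap.

Clients use Bootstrap in two situations:

1. Slow client: the data it needs has already been overwritten in the relay, so it has to fetch it from Bootstrap.

2. New client: it needs all of the historical data, which is where Bootstrap gets its name.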

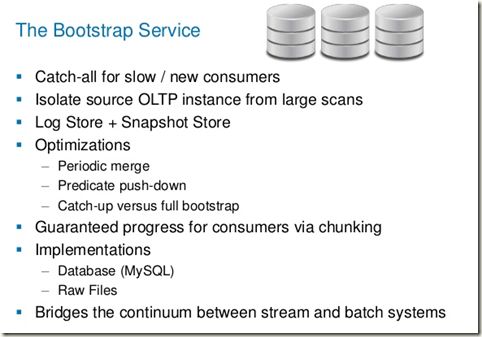

Databus' Bootstrap

One of the most innovative features of Databus is its Bootstrap component.

Data Change Capture systems have existed for a long time (e.g. Oracle Streams). However, all of these systems put load on the primary data store when a consumer falls behind.

Bootstrapping a brand new consumer is another problem. It typically involves a very manual process -- i.e. restore the previous night's snapshot on a temporary Oracle instance, transform the data and transfer it to the consumer, then apply changes since the snapshot, etc...

Databus' Bootstrap component handles both of the above use-cases in a seamless, automated fashion.

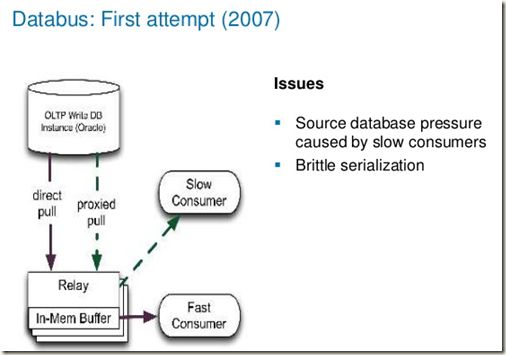

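The most innovative part of Databus is Bootstrap. Data Change Capture systems have existed for a long time, but, as in the first version of Databus, they share a fairly serious problem:

The Relay can only buffer the most recent data. For older data, the Relay acts as a proxy, fetching the data directly from the primary data store and returning it to the client.

So a slow client greatly increases the load on the primary data store.

Likewise, a new client that needs the full data set faces a cumbersome, very manual process.

Bootstrap solves all of the above problems seamlessly, which does count as an innovation.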

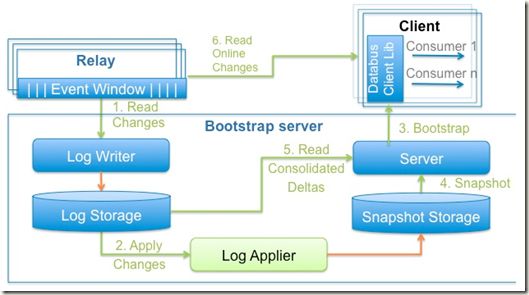

How Does Databus' Bootstrap Component Work?

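Bootstrap continuously reads updates into Log Storage and then applies them in batches to Snapshot Storage.

This design is driven by efficiency: the snapshot can be implemented with raw files, while Log Storage is updated continuously and needs something like a database.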

The Databus Bootstrap component is made up of 2 types of storage:

Log Storage serves Consolidated Deltas

Snapshot Storage serves Consistent Snapshots

1. As shown earlier, the Bootstrap component listens for online changes as they occur in the Relay. A LogWriter appends these changes to Log Storage.

2. A Log Applier applies recent operations in Log Storage to Snapshot Storage

3. If a new subscriber connects to Databus, the subscriber will bootstrap from the Bootstrap Server running inside the Bootstrap component

4. The client will first get a Consistent Snapshot from Snapshot Storage

5. The client will then get outstanding Consolidated Deltas from Log Storage

6. Once the client has caught up to within the Relay's in-memory buffer window, the client will switch to reading from the Relay
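The six steps above can be sketched in a few lines. This is a toy model under stated assumptions, not the Databus code: the class and method names are hypothetical, Log Storage is a Python list, Snapshot Storage is a dict, and a "Consolidated Delta" is modeled as the latest value per key among the outstanding log entries.

```python
class Bootstrap:
    """Toy Bootstrap: an append-only log plus a key -> value snapshot."""

    def __init__(self):
        self.log = []         # Log Storage: (seq, key, value) in seq order
        self.snapshot = {}    # Snapshot Storage: latest applied value per key
        self.applied_seq = 0  # highest seq folded into the snapshot

    def log_writer(self, seq, key, value):
        """Step 1: append a change seen on the Relay to Log Storage."""
        self.log.append((seq, key, value))

    def log_applier(self, upto_seq):
        """Step 2: fold not-yet-applied entries up to `upto_seq` into the snapshot."""
        for seq, key, value in self.log:
            if self.applied_seq < seq <= upto_seq:
                self.snapshot[key] = value
                self.applied_seq = seq

    def read_snapshot(self):
        return dict(self.snapshot), self.applied_seq

    def consolidated_delta(self, after_seq):
        """Latest value per key among log entries with seq > after_seq."""
        delta, last = {}, after_seq
        for seq, key, value in self.log:
            if seq > after_seq:
                delta[key] = value
                last = seq
        return delta, last


def bootstrap_client(bootstrap, relay_oldest_seq):
    state, seq = bootstrap.read_snapshot()          # step 4
    delta, seq = bootstrap.consolidated_delta(seq)  # step 5
    state.update(delta)
    can_switch = seq + 1 >= relay_oldest_seq        # step 6
    return state, seq, can_switch


b = Bootstrap()
for change in [(1, "a", "x1"), (2, "b", "y1"), (3, "a", "x2"),
               (4, "c", "z1"), (5, "b", "y2")]:
    b.log_writer(*change)
b.log_applier(upto_seq=3)

state, seq, can_switch = bootstrap_client(b, relay_oldest_seq=4)
print(state, seq, can_switch)
```

The new client ends up with the latest value of every key without ever touching the primary data store, and it switches to the Relay once the next event it needs is still inside the Relay's buffer window.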

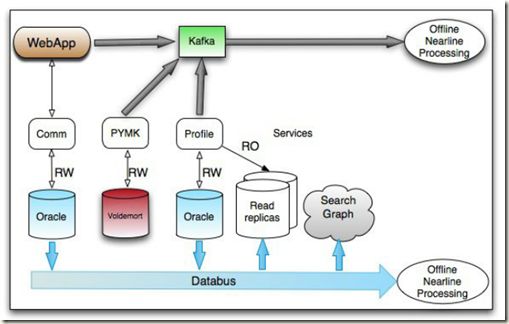

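How does it differ from Kafka?

Whereas Databus is used for database change capture and replication, Kafka is used for application-level data streams.

In LinkedIn's own architecture, the two relate as follows:

As things stand today, Databus focuses more on DB change capture, and because it is entirely memory-based, its latency should be better.

For other scenarios, Kafka is the more general-purpose choice.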

GitHub

https://github.com/linkedin/databus

LinkedIn: Creating a Low Latency Change Data Capture System with Databus

Databus: LinkedIn's Change Data Capture Pipeline SOCC 2012

http://www.slideshare.net/ShirshankaDas/databus-socc-2012

LinkedIn Data Infrastructure (QCon London 2012)

http://www.slideshare.net/r39132/linkedin-data-infrastructure-qcon-london-2012