HashMap: Principles, Source Code, and Interview Questions

HashMap Principles

- Key points

- Source code analysis

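- Constructors

- Internal structure of HashMap

- Index calculation and storage

- Finding a value by key

- Conversion between red-black trees and linked lists

Key points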

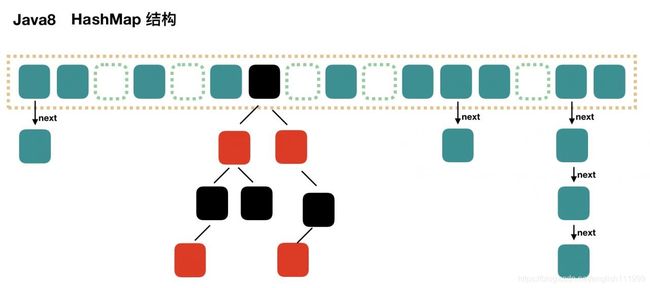

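Structure: an array plus linked lists (since Java 1.8, a long list in a bucket can also become a red-black tree).

Hashing: a method of converting a string of characters into a fixed-length (usually shorter) numeric value or index.

Resizing: each resize doubles the array length. The load factor defaults to 0.75, so by default a resize is triggered once the number of entries exceeds three quarters of the array length. Both the load factor and the initial array length can be customized.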
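As a rough illustration of the doubling rule, the sketch below computes the resize threshold (capacity × load factor) across a few doublings. It is plain arithmetic rather than HashMap's internal code; the class name `ResizeThresholdDemo` and the helper `thresholdFor` are made up for this example.

```java
class ResizeThresholdDemo {
    // Hypothetical helper: entry count above which a table of this capacity would resize.
    static int thresholdFor(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        float loadFactor = 0.75f; // HashMap's default load factor
        int capacity = 16;        // HashMap's default initial capacity
        for (int i = 0; i < 4; i++) {
            System.out.println("capacity=" + capacity
                    + " threshold=" + thresholdFor(capacity, loadFactor));
            capacity <<= 1; // each resize doubles the array length
        }
    }
}
```

With the defaults, a 16-slot table resizes once it holds more than 12 entries, a 32-slot table once it holds more than 24, and so on.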

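Changes since 1.8: linked lists and red-black trees convert into each other, with thresholds of 8 and 6. Before Java 1.8 there was no conversion between red-black trees and linked lists.

Lookup time complexity: O(log n) for a red-black tree, O(n) for a singly linked list.

Key index calculation: XOR the high 16 bits of the hash code with the low 16 bits, then AND the result with the array length minus one. Because the array length is a power of two, this is equivalent to taking the remainder modulo the array length.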

Source code analysis

Constructors:

    /**
     * Constructs an empty HashMap with the specified initial
     * capacity and load factor.
     *
     * @param initialCapacity the initial capacity
     * @param loadFactor the load factor
     * @throws IllegalArgumentException if the initial capacity is negative
     * or the load factor is nonpositive
     */
    public HashMap(int initialCapacity, float loadFactor) {
        if (initialCapacity < 0)
            throw new IllegalArgumentException("Illegal initial capacity: " +
                                               initialCapacity);
        if (initialCapacity > MAXIMUM_CAPACITY)
            initialCapacity = MAXIMUM_CAPACITY;
        if (loadFactor <= 0 || Float.isNaN(loadFactor))
            throw new IllegalArgumentException("Illegal load factor: " +
                                               loadFactor);
        this.loadFactor = loadFactor; // load factor
        // threshold temporarily holds the initial array length (a power of two);
        // the array itself is allocated on the first insertion
        this.threshold = tableSizeFor(initialCapacity);
    }

    /**
     * Constructs an empty HashMap with the specified initial
     * capacity and the default load factor (0.75).
     *
     * @param initialCapacity the initial capacity.
     * @throws IllegalArgumentException if the initial capacity is negative.
     */
    public HashMap(int initialCapacity) {
        this(initialCapacity, DEFAULT_LOAD_FACTOR);
    }

    /**
     * Constructs an empty HashMap with the default initial capacity
     * (16) and the default load factor (0.75).
     */
    public HashMap() {
        this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted
    }

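Both the load factor and the length of the array (the buckets) can be customized. The load factor can be any positive value, but the bucket array length is not used as given: it is computed, and the key is the tableSizeFor() method: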

    /**
     * Returns a power of two size for the given target capacity.
     */
    static final int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

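Inputs 9 to 16 give 16, inputs 17 to 32 give 32, and so on: the result is always the smallest power of two that is greater than or equal to the input, so the array length is not fully user-defined. The smallest possible result is 1 (for inputs 0 and 1); 16 is only the default capacity used by the no-argument constructor.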
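To check the rounding behaviour directly, the sketch below copies the tableSizeFor() method shown above into a standalone class and prints its result for a few inputs. The class name `TableSizeDemo` is made up for this example; `MAXIMUM_CAPACITY` is set to `1 << 30`, the value used by java.util.HashMap.

```java
class TableSizeDemo {
    static final int MAXIMUM_CAPACITY = 1 << 30; // same value as in java.util.HashMap

    // Copy of the JDK 8 method: smear the highest set bit of (cap - 1)
    // into every lower bit, then add one to reach a power of two.
    static int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    public static void main(String[] args) {
        int[] inputs = {0, 1, 2, 3, 9, 16, 17, 1000};
        for (int cap : inputs) {
            System.out.println(cap + " -> " + tableSizeFor(cap));
        }
    }
}
```

For example, tableSizeFor(16) returns 16 and tableSizeFor(17) returns 32; subtracting one first is what keeps an exact power of two from being doubled.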

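Internal structure of HashMap:

Below is a simplified HashMap-like structure. The layout is the same as HashMap's; it just does not use a hash function to determine the index and position.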

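    public class Node<K, V> {
        // The structure is simple: a Node holds the hash, the key, the value,
        // and a pointer to the next node. The key and value types are not fixed,
        // hence the generics.
        final int hash;
        final K key;
        V value;
        Node<K, V> next; // pointer to the next node in the linked list

        // Constructor
        Node(int hash, K key, V value, Node<K, V> next) {
            this.hash = hash;
            this.key = key;
            this.value = value;
            this.next = next;
        }

        public static void main(String[] args) {
            Node<?, ?>[] nodes = new Node<?, ?>[16];
            // The first constructor argument is hard-coded to 0; in a real HashMap it
            // is computed by the hash function. It is hard-coded here to keep things simple.
            // All three nodes have hash 0, so they collide, are chained into a linked
            // list, and are all stored at nodes[0]. The keys are distinct, because a
            // real map keeps only one entry per key.
            Node<String, String> node01 = new Node<>(0, "key01", "value01", null);
            Node<String, String> node02 = new Node<>(0, "key02", "value02", node01);
            Node<String, String> node03 = new Node<>(0, "key03", "value03", node02);
            nodes[0] = node03;
            // These nodes all have hash 1, so they are stored at index 1 of the array.
            Node<String, String> node11 = new Node<>(1, "key11", "value11", null);
            Node<String, String> node12 = new Node<>(1, "key12", "value12", node11);
            Node<String, String> node13 = new Node<>(1, "key13", "value13", node12);
            nodes[1] = node13;
            // This is a tiny HashMap-like structure. HashMap gets its name from using
            // a hash function to compute each entry's index.
        }
    }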

Index calculation and storage

    static final int hash(Object key) {
        // A null key hashes to 0 (so it lands at index 0); otherwise
        // XOR the high 16 bits of hashCode() into the low 16 bits.
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

Then, in putVal():

    final V putVal(int hash, K key, V value, boolean onlyIfAbsent,
                   boolean evict) {
        // The method is long; only the key part is shown here
        Node<K,V>[] tab; Node<K,V> p; int n, i;
        if ((tab = table) == null || (n = tab.length) == 0)
            n = (tab = resize()).length;
        if ((p = tab[i = (n - 1) & hash]) == null) // the key part: i = (n - 1) & hash
            tab[i] = newNode(hash, key, value, null);
        // ... (rest of the method omitted)
    }

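Here n is the array length, and the index is the bitwise AND of n - 1 and the hash. Because the length is always a power of two, $n = 2^k$, this gives the same result as the non-negative remainder of the hash divided by n: $hash \bmod 2^k = hash \mathbin{\&} (2^k - 1)$. A bitwise AND is also much faster than a remainder operation.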
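The identity can be checked with a small sketch. It reuses the hash() spreading step shown above under the name `spread`; the class name `IndexDemo`, the helper `indexFor`, and the sample keys are made up for this example. `Math.floorMod` is used for the comparison because Java's `%` operator returns a negative result for a negative hash.

```java
class IndexDemo {
    // Same spreading step as HashMap.hash(): XOR the high 16 bits into the low 16 bits.
    static int spread(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    // Bucket index for a table whose length n is a power of two.
    static int indexFor(int hash, int n) {
        return (n - 1) & hash;
    }

    public static void main(String[] args) {
        int n = 16; // table length, must be a power of two
        for (String key : new String[] {"apple", "banana", "cherry"}) {
            int hash = spread(key);
            int byMask = indexFor(hash, n);
            int byMod = Math.floorMod(hash, n); // non-negative remainder
            System.out.println(key + ": mask=" + byMask + " floorMod=" + byMod);
        }
    }
}
```

The two columns always agree when n is a power of two, including for negative hash values; for any other n the mask would skip some buckets entirely.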

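The key expression is: tab[i = (n - 1) & hash]

tab is the array, and the expression inside the brackets computes the index. The hash has already been computed, so only the final AND remains, and that gives the entry's index. The code then checks whether that slot already holds a node: if not, the new node is placed there; if so, the list is walked to its tail (or until a node with an equal key is found), as in the following code, which is also in putVal():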

    for (int binCount = 0; ; ++binCount) {
        if ((e = p.next) == null) {
            p.next = newNode(hash, key, value, null);
            if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st
                treeifyBin(tab, hash);
            break;
        }
        if (e.hash == hash &&
            ((k = e.key) == key || (key != null && key.equals(k))))
            break;
        p = e;
    }

Finding a value by key

Lookup works the same way as insertion: compute the index first, then traverse the linked list.
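The shared steps can be seen side by side in a minimal sketch. This is not HashMap's implementation: it uses a fixed-size table with no resizing and no red-black trees, and the names `MiniMapDemo`, `spread`, and `indexFor` are made up for this example. The keys "Aa" and "BB" are chosen because they have the same hashCode, so they end up in the same bucket.

```java
class MiniMapDemo {
    // Minimal bucket node, mirroring the fields of HashMap's Node.
    static final class Node<K, V> {
        final int hash;
        final K key;
        V value;
        Node<K, V> next;

        Node(int hash, K key, V value, Node<K, V> next) {
            this.hash = hash;
            this.key = key;
            this.value = value;
            this.next = next;
        }
    }

    // Fixed-size table (illustration only: no resize, no trees).
    private final Node<String, Integer>[] table;

    @SuppressWarnings("unchecked")
    MiniMapDemo(int powerOfTwoLength) {
        table = (Node<String, Integer>[]) new Node[powerOfTwoLength];
    }

    static int spread(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    // Step 1 for both put and get: compute the bucket index.
    int indexFor(int hash) {
        return (table.length - 1) & hash;
    }

    // Step 2 for put: walk the chain; overwrite on an equal key, else append at the tail.
    Integer put(String key, Integer value) {
        int hash = spread(key);
        int i = indexFor(hash);
        Node<String, Integer> p = table[i];
        if (p == null) {
            table[i] = new Node<>(hash, key, value, null);
            return null;
        }
        while (true) {
            if (p.hash == hash && (p.key == key || (key != null && key.equals(p.key)))) {
                Integer old = p.value;
                p.value = value;
                return old;
            }
            if (p.next == null) {
                p.next = new Node<>(hash, key, value, null);
                return null;
            }
            p = p.next;
        }
    }

    // Step 2 for get: walk the same chain and return the value of the matching key.
    Integer get(String key) {
        int hash = spread(key);
        for (Node<String, Integer> e = table[indexFor(hash)]; e != null; e = e.next) {
            if (e.hash == hash && (e.key == key || (key != null && key.equals(e.key)))) {
                return e.value;
            }
        }
        return null;
    }

    public static void main(String[] args) {
        MiniMapDemo map = new MiniMapDemo(16);
        map.put("Aa", 1); // "Aa" and "BB" share a hashCode, so they share a bucket
        map.put("BB", 2);
        System.out.println(map.get("Aa")); // prints 1
        System.out.println(map.get("BB")); // prints 2
        System.out.println(map.get("CC")); // prints null
    }
}
```

Both methods compare the stored hash first and call equals() only when the hashes match, which is the same check used in the putVal() loop above.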

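Conversion between red-black trees and linked lists

The conversion between a red-black tree and a singly linked list is governed by two thresholds. A singly linked list is converted to a red-black tree when its length reaches 8 (and the array length is at least 64; for a smaller array HashMap resizes instead).

A red-black tree is converted back to a singly linked list when it shrinks to 6 nodes or fewer.

This can be seen in the following code and comments: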

    /**
     * The bin count threshold for using a tree rather than list for a
     * bin. Bins are converted to trees when adding an element to a
     * bin with at least this many nodes. The value must be greater
     * than 2 and should be at least 8 to mesh with assumptions in
     * tree removal about conversion back to plain bins upon
     * shrinkage.
     */
    static final int TREEIFY_THRESHOLD = 8;

    /**
     * The bin count threshold for untreeifying a (split) bin during a
     * resize operation. Should be less than TREEIFY_THRESHOLD, and at
     * most 6 to mesh with shrinkage detection under removal.
     */
    static final int UNTREEIFY_THRESHOLD = 6;

A comment in the HashMap source explains why the thresholds are 8 and 6:

    /*
     * Because TreeNodes are about twice the size of regular nodes, we
     * use them only when bins contain enough nodes to warrant use
     * (see TREEIFY_THRESHOLD). And when they become too small (due to
     * removal or resizing) they are converted back to plain bins. In
     * usages with well-distributed user hashCodes, tree bins are
     * rarely used. Ideally, under random hashCodes, the frequency of
     * nodes in bins follows a Poisson distribution
     * (http://en.wikipedia.org/wiki/Poisson_distribution) with a
     * parameter of about 0.5 on average for the default resizing
     * threshold of 0.75, although with a large variance because of
     * resizing granularity. Ignoring variance, the expected
     * occurrences of list size k are (exp(-0.5) * pow(0.5, k) /
     * factorial(k)). The first values are:
     *
     * 0: 0.60653066
     * 1: 0.30326533
     * 2: 0.07581633
     * 3: 0.01263606
     * 4: 0.00157952
     * 5: 0.00015795
     * 6: 0.00001316
     * 7: 0.00000094
     * 8: 0.00000006
     * more: less than 1 in ten million
     */

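A tree node takes about twice the space of a regular list node (a list node only needs a pointer to the next node, while a binary tree node also needs left and right pointers to its child nodes). A bin is therefore converted to a red-black tree only when it contains enough nodes to justify it, as a trade-off between time and space, and "enough" is determined by TREEIFY_THRESHOLD. When the number of nodes in the bin shrinks, it is converted back to a plain bin. The source shows that a list is converted to a red-black tree when its length reaches 8, and back to a plain bin when it drops to 6.

What the comment above says is this: with well-distributed hash codes, the probability that the list in a bin reaches 8 elements is 0.00000006, which is practically an impossible event. If such an event does occur, the bin holds many nodes and lookups in it are inefficient. As for 7, it acts as a buffer between the two thresholds and prevents frequent conversion back and forth between list and tree.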
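The table in the source comment can be reproduced from the formula it gives, exp(-0.5) * pow(0.5, k) / factorial(k). The sketch below is only a check of that arithmetic; the class name `PoissonBinDemo` and the method `poisson` are made up for this example.

```java
import java.util.Locale;

class PoissonBinDemo {
    // Poisson probability P(k) = exp(-lambda) * lambda^k / k!,
    // built up term by term to avoid computing the factorial separately.
    static double poisson(double lambda, int k) {
        double p = Math.exp(-lambda);
        for (int i = 1; i <= k; i++) {
            p *= lambda / i;
        }
        return p;
    }

    public static void main(String[] args) {
        double lambda = 0.5; // parameter quoted in the HashMap source comment
        for (int k = 0; k <= 8; k++) {
            System.out.println(String.format(Locale.ROOT, "%d: %.8f", k, poisson(lambda, k)));
        }
    }
}
```

The printed values match the list in the comment, ending with roughly 0.00000006 for k = 8.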

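Roughly speaking: if the threshold were 2, HashMap would convert between linked lists and red-black trees all the time. With a threshold of 8, a conversion is very unlikely, which saves the conversion cost. The same reasoning explains why a tree converts back to a list at 6 rather than 8. There is also a commonly cited answer online:

The average search length of a red-black tree is log2(n), so for a length of 8 it is log2(8) = 3. The average search length of a linked list is n/2, so for a length of 8 it is 8/2 = 4, and only then is converting to a tree worthwhile. For a list length of 6 or less, 6/2 = 3 and log2(6) ≈ 2.6; the tree would still be fast, but the time needed to convert to a tree structure and build it is not negligible.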
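The arithmetic in that argument can be tabulated with a short sketch. It only evaluates the two rough cost estimates quoted above (log2(n) for a tree, n/2 for a list) and does not measure real lookup times; the class and method names are made up for this example.

```java
import java.util.Locale;

class SearchCostDemo {
    // Rough average search length of a balanced tree with n nodes: log2(n).
    static double treeCost(int n) {
        return Math.log(n) / Math.log(2);
    }

    // Rough average search length of a linked list with n nodes: n / 2.
    static double listCost(int n) {
        return n / 2.0;
    }

    public static void main(String[] args) {
        for (int n : new int[] {6, 8}) {
            System.out.println(String.format(Locale.ROOT,
                    "n=%d tree=%.2f list=%.2f", n, treeCost(n), listCost(n)));
        }
    }
}
```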

Opinions differ on this point.