Academic Frontier Trend Analysis, Task 1: Paper Data Statistics, Notes 1

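Contents

- Task description
- Dataset introduction
- arXiv paper categories
- Code implementation and walkthrough
  - Importing packages and reading the raw data
    - Mini summary
  - Data preprocessing
    - Mini summary
  - Data analysis and visualization
- Summary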

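Task description

- Task topic: paper count statistics, i.e. counting the number of papers in each computer science subfield for the whole of 2019;

- Task content: understanding the competition problem, then using Pandas to read the data and compute statistics;

- Expected outcome: learning the basic operations of Pandas;

- Reference material: the joyful-pandas project from the open-source organization Datawhale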

Dataset introduction

- Dataset source: dataset link;

- The dataset has the following fields:

- id: arXiv ID, which can be used to access the paper;
- submitter: the person who submitted the paper;
- authors: the authors of the paper;
- title: the title of the paper;
- comments: number of pages, figures and other information;
- journal-ref: information about the journal the paper was published in;
- doi: digital object identifier, https://www.doi.org;
- report-no: report number;
- categories: the categories or tags of the paper in the arXiv system;
- license: the license of the paper;
- abstract: the abstract of the paper;
- versions: the versions of the paper;
- authors_parsed: the parsed author information.

A single record looks like this:

{
  "id": "0704.0001",
  "submitter": "Pavel Nadolsky",
  "authors": "C. Bal\\'azs, E. L. Berger, P. M. Nadolsky, C.-P. Yuan",
  "title": "Calculation of prompt diphoton production cross sections at Tevatron and LHC energies",
  "comments": "37 pages, 15 figures; published version",
  "journal-ref": "Phys.Rev.D76:013009,2007",
  "doi": "10.1103/PhysRevD.76.013009",
  "report-no": "ANL-HEP-PR-07-12",
  "categories": "hep-ph",
  "license": null,
  "abstract": " A fully differential calculation in perturbative quantum chromodynamics is presented for the production of massive photon pairs at hadron colliders. All next-to-leading order perturbative contributions from quark-antiquark, gluon-(anti)quark, and gluon-gluon subprocesses are included, as well as all-orders resummation of initial-state gluon radiation valid at next-to-next-to leading logarithmic accuracy. The region of phase space is specified in which the calculation is most reliable. Good agreement is demonstrated with data from the Fermilab Tevatron, and predictions are made for more detailed tests with CDF and DO data. Predictions are shown for distributions of diphoton pairs produced at the energy of the Large Hadron Collider (LHC). Distributions of the diphoton pairs from the decay of a Higgs boson are contrasted with those produced from QCD processes at the LHC, showing that enhanced sensitivity to the signal can be obtained with judicious selection of events.",
  "versions": [
    {"version": "v1", "created": "Mon, 2 Apr 2007 19:18:42 GMT"},
    {"version": "v2", "created": "Tue, 24 Jul 2007 20:10:27 GMT"}
  ],
  "update_date": "2008-11-26",
  "authors_parsed": [
    ["Balázs", "C.", ""],
    ["Berger", "E. L.", ""],
    ["Nadolsky", "P. M.", ""],
    ["Yuan", "C. -P.", ""]
  ]
}
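To get a feel for the nested fields, here is a minimal sketch that parses one record with the standard json module. The line is abridged from the sample record (only a few fields are kept), and the variable names are mine, not part of the original notes.

```python
import json

# One line of the snapshot file is one JSON object. This line is abridged
# from the sample record: only a few fields and two authors are kept.
line = (
    '{"id": "0704.0001", "categories": "hep-ph", "update_date": "2008-11-26", '
    '"versions": [{"version": "v1", "created": "Mon, 2 Apr 2007 19:18:42 GMT"}, '
    '{"version": "v2", "created": "Tue, 24 Jul 2007 20:10:27 GMT"}], '
    '"authors_parsed": [["Balázs", "C.", ""], ["Berger", "E. L.", ""]]}'
)

record = json.loads(line)

# Nested fields come back as ordinary Python lists and dicts.
latest_version = record["versions"][-1]["version"]

# Each authors_parsed entry is a three-item list; in the sample the first
# item is the family name and the second the given name or initials.
author_names = [f"{first} {last}".strip() for last, first, _ in record["authors_parsed"]]

print(latest_version)  # v2
print(author_names)    # ['C. Balázs', 'E. L. Berger']
```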

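arXiv paper categories

The category names and their descriptions below were looked up on the official arXiv site.

Source: the Subject Classifications part of section 5.3 of https://arxiv.org/help/api/user-manual, or https://arxiv.org/category_taxonomy. An excerpt of the 153 paper categories follows: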

'astro-ph': 'Astrophysics',

'astro-ph.CO': 'Cosmology and Nongalactic Astrophysics',

'astro-ph.EP': 'Earth and Planetary Astrophysics',

'astro-ph.GA': 'Astrophysics of Galaxies',

'cs.AI': 'Artificial Intelligence',

'cs.AR': 'Hardware Architecture',

'cs.CC': 'Computational Complexity',

'cs.CE': 'Computational Engineering, Finance, and Science',

'cs.CV': 'Computer Vision and Pattern Recognition',

'cs.CY': 'Computers and Society',

'cs.DB': 'Databases',

'cs.DC': 'Distributed, Parallel, and Cluster Computing',

'cs.DL': 'Digital Libraries',

'cs.NA': 'Numerical Analysis',

'cs.NE': 'Neural and Evolutionary Computing',

'cs.NI': 'Networking and Internet Architecture',

'cs.OH': 'Other Computer Science',

'cs.OS': 'Operating Systems',

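Code implementation and walkthrough

Importing packages and reading the raw data

# Import the required packages
import seaborn as sns  # plotting
from bs4 import BeautifulSoup  # parsing the HTML scraped from arXiv
import re  # regular expressions, for matching string patterns
import requests  # HTTP requests, for fetching a page by URL
import json  # reading the data, which is in JSON format
import pandas as pd  # data processing and analysis
import matplotlib.pyplot as plt  # plotting
import time  # timing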

The package versions used here are as follows (Python 3.7.4):

- seaborn:0.9.0

- BeautifulSoup:4.8.0

- requests:2.22.0

- json:0.8.5

- pandas:0.25.1

- matplotlib:3.1.1

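# Read the data
data = []

# Advantages of the with statement: 1. the file handle is closed automatically; 2. the file is closed even if an exception is raised while reading
with open("./arxiv-metadata-oai-snapshot.json", 'r') as f:
    for idx, line in enumerate(f):
        # Read only the first 100 lines; loading the whole file needs about 8 GB of memory
        if idx >= 100:
            break
        data.append(json.loads(line))

data = pd.DataFrame(data)  # convert the list to a DataFrame for analysis with pandas
data.shape  # show the size of the data

(100, 14)

data.head()  # show the first five rows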

| | id | submitter | authors | title | comments | journal-ref | doi | report-no | categories | license | abstract | versions | update_date | authors_parsed |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0704.0001 | Pavel Nadolsky | C. Bal\'azs, E. L. Berger, P. M. Nadolsky, C.-... | Calculation of prompt diphoton production cros... | 37 pages, 15 figures; published version | Phys.Rev.D76:013009,2007 | 10.1103/PhysRevD.76.013009 | ANL-HEP-PR-07-12 | hep-ph | None | A fully differential calculation in perturba... | [{'version': 'v1', 'created': 'Mon, 2 Apr 2007... | 2008-11-26 | [[Balázs, C., ], [Berger, E. L., ], [Nadolsky,... |

| 1 | 0704.0002 | Louis Theran | Ileana Streinu and Louis Theran | Sparsity-certifying Graph Decompositions | To appear in Graphs and Combinatorics | None | None | None | math.CO cs.CG | http://arxiv.org/licenses/nonexclusive-distrib... | We describe a new algorithm, the $(k,\ell)$-... | [{'version': 'v1', 'created': 'Sat, 31 Mar 200... | 2008-12-13 | [[Streinu, Ileana, ], [Theran, Louis, ]] |

| 2 | 0704.0003 | Hongjun Pan | Hongjun Pan | The evolution of the Earth-Moon system based o... | 23 pages, 3 figures | None | None | None | physics.gen-ph | None | The evolution of Earth-Moon system is descri... | [{'version': 'v1', 'created': 'Sun, 1 Apr 2007... | 2008-01-13 | [[Pan, Hongjun, ]] |

| 3 | 0704.0004 | David Callan | David Callan | A determinant of Stirling cycle numbers counts... | 11 pages | None | None | None | math.CO | None | We show that a determinant of Stirling cycle... | [{'version': 'v1', 'created': 'Sat, 31 Mar 200... | 2007-05-23 | [[Callan, David, ]] |

| 4 | 0704.0005 | Alberto Torchinsky | Wael Abu-Shammala and Alberto Torchinsky | From dyadic $\Lambda_{\alpha}$ to $\Lambda_{\a... | None | Illinois J. Math. 52 (2008) no.2, 681-689 | None | None | math.CA math.FA | None | In this paper we show how to compute the $\L... | [{'version': 'v1', 'created': 'Mon, 2 Apr 2007... | 2013-10-15 | [[Abu-Shammala, Wael, ], [Torchinsky, Alberto, ]] |

def readArxivFile(path, columns=['id', 'submitter', 'authors', 'title', 'comments', 'journal-ref', 'doi',
                                 'report-no', 'categories', 'license', 'abstract', 'versions',
                                 'update_date', 'authors_parsed'], count=None):
    '''
    Read the arXiv metadata file into a DataFrame.
    path: path to the file
    columns: the columns to keep
    count: number of lines to read (None reads the whole file)
    '''
    data = []
    with open(path, 'r') as f:
        for idx, line in enumerate(f):
            if idx == count:
                break
            d = json.loads(line)
            d = {col: d[col] for col in columns}  # keep only the requested columns
            data.append(d)
    data = pd.DataFrame(data)
    return data

data = readArxivFile('arxiv-metadata-oai-snapshot.json', ['id', 'categories', 'update_date'])
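The column-selection idea in readArxivFile can be tried without the full snapshot file. The sketch below is a variant of my own, not the tutorial's function: iter_records is a hypothetical helper that reads from any iterable of lines, uses itertools.islice for the row limit, and uses .get() so that a missing key becomes None instead of raising KeyError (the original d[col] would raise). The title value "t1" is made up.

```python
import io
import json
from itertools import islice


def iter_records(lines, columns, count=None):
    """Yield one dict per JSON line, keeping only the requested columns."""
    for line in islice(lines, count):  # count=None means no limit
        record = json.loads(line)
        # .get() returns None for a missing key instead of raising KeyError
        yield {col: record.get(col) for col in columns}


# Two lines standing in for the real snapshot file (the title is invented).
fake_file = io.StringIO(
    '{"id": "0704.0001", "categories": "hep-ph", "update_date": "2008-11-26", "title": "t1"}\n'
    '{"id": "0704.0002", "categories": "math.CO cs.CG", "update_date": "2008-12-13"}\n'
)

rows = list(iter_records(fake_file, ["id", "categories", "title"], count=2))
print(rows)
```

Because the helper is a generator, rows are produced one at a time; passing it straight to pd.DataFrame(...) would still build the whole table in memory, so the memory saving comes only from dropping unused columns early.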

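Mini summary

1. Pandas can convert a list into a DataFrame, which is neat: https://blog.csdn.net/claroja/article/details/64439735

The list being converted here contains dictionaries: each dictionary becomes one row, and its keys are mapped to the column names.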
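A small sketch of that mapping, using two made-up records. Note how a key that is missing from one dictionary simply becomes a missing value in that row.

```python
import pandas as pd

# Two toy records; the second one has an extra "license" key.
records = [
    {"id": "0704.0001", "categories": "hep-ph"},
    {"id": "0704.0002", "categories": "math.CO cs.CG", "license": None},
]

df = pd.DataFrame(records)

print(df.shape)                  # (2, 3)
print(df["license"].isna().all())  # True: absent keys and None both count as missing
```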

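Data preprocessing

First, a rough look at the category information of the papers:

count: the number of elements in the column; unique: the number of distinct values in the column; top: the most frequent value in the column; freq: the number of times the most frequent value occurs.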

data["categories"].describe()

count 1796911

unique 62055

top astro-ph

freq 86914

Name: categories, dtype: object
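To make the four fields reported by describe() concrete, here is a plain-Python sketch that computes the same quantities for a toy list with collections.Counter. The data is invented, and this only mirrors describe() for a string column with no missing values.

```python
from collections import Counter

# Toy stand-in for the categories column.
categories = ["astro-ph", "hep-ph", "astro-ph", "math.CO cs.CG", "astro-ph"]

counts = Counter(categories)
top, freq = counts.most_common(1)[0]  # most frequent value and its count

summary = {
    "count": len(categories),  # number of elements
    "unique": len(counts),     # number of distinct values
    "top": top,
    "freq": freq,
}
print(summary)  # {'count': 5, 'unique': 3, 'top': 'astro-ph', 'freq': 3}
```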

data["categories"].value_counts()

astro-ph 86914

hep-ph 73550

quant-ph 53966

hep-th 53287

cond-mat.mtrl-sci 30107

...

cond-mat.stat-mech adap-org chao-dyn cs.CC nlin.AO nlin.CD 1

physics.comp-ph cond-mat.soft cond-mat.stat-mech hep-lat 1

cs.CE math.CO 1

nlin.CD astro-ph.EP astro-ph.SR physics.space-ph 1

hep-th cond-mat.soft cond-mat.str-el hep-ph 1

Name: categories, Length: 62055, dtype: int64

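The results above show that there are 1796911 records and 62055 distinct category combinations. (A paper can carry several categories, so a paper tagged cs.AI and cs.MM and a paper tagged cs.AI and cs.OS count as different combinations; this is only a rough count.) The most common value is astro-ph, i.e. Astrophysics, which occurs 86914 times.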

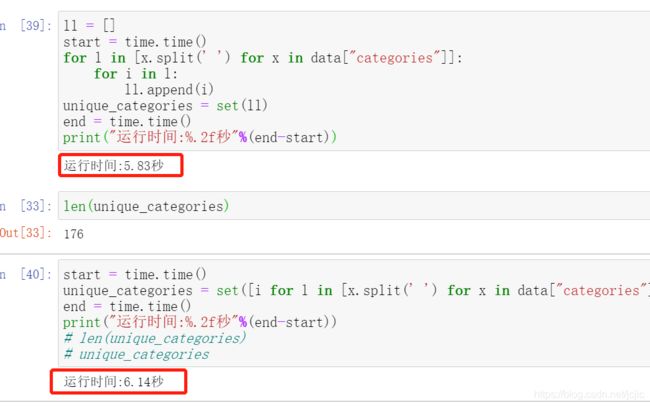

Because some papers belong to more than one category, we next determine how many distinct categories appear in this dataset.

start = time.time()
ll = []
for l in [x.split(' ') for x in data["categories"]]:
    for i in l:
        ll.append(i)
unique_categories = set(ll)
end = time.time()
print("Elapsed time: %.2f s" % (end - start))

Elapsed time: 5.89 s

start = time.time()
unique_categories = set([i for l in [x.split(' ') for x in data["categories"]] for i in l])
end = time.time()
print("Elapsed time: %.2f s" % (end - start))
len(unique_categories)
unique_categories

Elapsed time: 5.79 s

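Here the split function separates a multi-category string on " " (a space) into a list, the for loops collect every individual category, and set removes the duplicates, leaving all the distinct paper categories.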
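The same split-then-deduplicate step can be written without building the intermediate lists. This is a sketch on toy strings, using itertools.chain.from_iterable as an alternative I am swapping in; it is not what the tutorial code uses.

```python
from itertools import chain

# Toy category strings; real values look the same, separated by single spaces.
category_strings = ["hep-ph", "math.CO cs.CG", "cs.CG cs.AI", "hep-ph"]

# Split each string lazily, flatten the pieces, and let set drop duplicates.
unique_categories = set(chain.from_iterable(s.split(" ") for s in category_strings))

print(sorted(unique_categories))  # ['cs.AI', 'cs.CG', 'hep-ph', 'math.CO']
```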

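These results show 176 paper categories in total, more than the number listed in the Subject Classifications part of section 5.3 of https://arxiv.org/help/api/user-manual or at https://arxiv.org/category_taxonomy. This means the dataset contains some categories that are not on the official site, a small detail worth noting. It does not affect the computer science papers, though: they still fall into the 40 categories below, and the categories extracted from the raw data correspond one-to-one with those from the official site.

The task asks us to analyze papers from 2019 onward, so we first preprocess the time feature to obtain papers of all categories from 2019 onward: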

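Mini summary

1. Advantages of nesting for loops inside a list comprehension

(1) It shortens the code and makes it tidier

(2) It ran slightly faster than the plain loop here (5.79 s versus 5.89 s), which can matter on large datasets

2. Further reading

http://www.zzvips.com/article/84285.html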
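A single time.time() measurement is noisy, so the speed comparison is easier to trust when repeated. The sketch below uses timeit on synthetic data (the strings and sizes are my own choice); the numbers it prints will vary by machine, and it makes no claim about how large the gap is.

```python
import timeit

# Synthetic data: 30000 category strings built from three patterns.
category_strings = ["math.CO cs.CG", "hep-ph", "cs.AI cs.LG stat.ML"] * 10000


def with_loop():
    flat = []
    for parts in [s.split(" ") for s in category_strings]:
        for cat in parts:
            flat.append(cat)
    return set(flat)


def with_comprehension():
    return set([cat for parts in [s.split(" ") for s in category_strings] for cat in parts])


# Both versions must agree before their speed is worth comparing.
assert with_loop() == with_comprehension()

# Take the best of three runs of five calls each to reduce noise.
loop_seconds = min(timeit.repeat(with_loop, number=5, repeat=3))
comp_seconds = min(timeit.repeat(with_comprehension, number=5, repeat=3))
print(f"loop: {loop_seconds:.3f} s, comprehension: {comp_seconds:.3f} s")
```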

pandas.core.series.Series

data["year"] = pd.to_datetime(data["update_date"]).dt.year  # convert update_date from a str such as 2019-02-20 to datetime and extract the year
del data["update_date"]  # drop update_date now that it has served its purpose
data = data[data["year"] >= 2019]  # keep only the rows whose year is 2019 or later
data.groupby(['categories','year'])  # only builds a GroupBy object; the result is discarded, so data is neither sorted nor changed
data.reset_index(drop=True, inplace=True)  # renumber the index
data  # show the result

| | id | categories | year |

|---|---|---|---|

| 0 | 0704.0297 | astro-ph | 2019 |

| 1 | 0704.0342 | math.AT | 2019 |

| 2 | 0704.0360 | astro-ph | 2019 |

| 3 | 0704.0525 | gr-qc | 2019 |

| 4 | 0704.0535 | astro-ph | 2019 |

| ... | ... | ... | ... |

| 395118 | quant-ph/9911051 | quant-ph | 2020 |

| 395119 | solv-int/9511005 | solv-int nlin.SI | 2019 |

| 395120 | solv-int/9809008 | solv-int nlin.SI | 2019 |

| 395121 | solv-int/9909010 | solv-int adap-org hep-th nlin.AO nlin.SI | 2019 |

| 395122 | solv-int/9909014 | solv-int nlin.SI | 2019 |

395123 rows × 3 columns
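The year filter is easy to try on a toy frame. In the sketch below all ids and dates are invented. It also uses sort_values for ordering, on the assumption that sorting by category and year was the intent of the groupby line; groupby by itself does not reorder the frame.

```python
import pandas as pd

# Toy data: ids and dates are made up for illustration.
df = pd.DataFrame({
    "id": ["paper-1", "paper-2", "paper-3"],
    "categories": ["cs.CV", "hep-ph", "astro-ph"],
    "update_date": ["2020-01-03", "2008-11-26", "2019-08-19"],
})

# Parse the date strings and keep only the year.
df["year"] = pd.to_datetime(df["update_date"]).dt.year

# Keep 2019 and later, drop the raw date, then sort explicitly.
recent = df[df["year"] >= 2019].drop(columns="update_date")
recent = recent.sort_values(["categories", "year"]).reset_index(drop=True)

print(recent)
```

Assigning the filtered, sorted result back to a new name avoids modifying a slice of the original frame in place.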

We now have all the papers from 2019 onward. Next, we pick out all the papers in the computer science field:

# Scrape all the categories
website_url = requests.get('https://arxiv.org/category_taxonomy').text  # fetch the page text
soup = BeautifulSoup(website_url, 'lxml')  # parse the page; the lxml parser is used for speed
root = soup.find('div', {'id': 'category_taxonomy_list'})  # find the entry tag of the taxonomy list
tags = root.find_all(["h2", "h3", "h4", "p"], recursive=True)  # collect the tags

# Initialize the str and list variables
level_1_name = ""
level_2_name = ""
level_2_code = ""
level_1_names = []
level_2_codes = []
level_2_names = []
level_3_codes = []
level_3_names = []
level_3_notes = []

# Walk through the tags in page order
for t in tags:
    if t.name == "h2":
        level_1_name = t.text
        level_2_code = t.text
        level_2_name = t.text
    elif t.name == "h3":
        raw = t.text
        level_2_code = re.sub(r"(.*)\((.*)\)", r"\2", raw)  # regex: pattern (.*)\((.*)\); replacement "\2"; input string: raw
        level_2_name = re.sub(r"(.*)\((.*)\)", r"\1", raw)
    elif t.name == "h4":
        raw = t.text
        level_3_code = re.sub(r"(.*) \((.*)\)", r"\1", raw)
        level_3_name = re.sub(r"(.*) \((.*)\)", r"\2", raw)
    elif t.name == "p":
        # A <p> closes one category entry, so record a full row here
        notes = t.text
        level_1_names.append(level_1_name)
        level_2_names.append(level_2_name)
        level_2_codes.append(level_2_code)
        level_3_names.append(level_3_name)
        level_3_codes.append(level_3_code)
        level_3_notes.append(notes)

# Build a DataFrame from the information above
df_taxonomy = pd.DataFrame({
    'group_name' : level_1_names,
    'archive_name' : level_2_names,
    'archive_id' : level_2_codes,
    'category_name' : level_3_names,
    'categories' : level_3_codes,
    'category_description': level_3_notes
})

# groupby only returns a GroupBy object; the result is discarded here, so df_taxonomy is left unchanged
df_taxonomy.groupby(["group_name","archive_name"])
df_taxonomy

| | group_name | archive_name | archive_id | category_name | categories | category_description |

|---|---|---|---|---|---|---|

| 0 | Computer Science | Computer Science | Computer Science | Artificial Intelligence | cs.AI | Covers all areas of AI except Vision, Robotics... |

| 1 | Computer Science | Computer Science | Computer Science | Hardware Architecture | cs.AR | Covers systems organization and hardware archi... |

| 2 | Computer Science | Computer Science | Computer Science | Computational Complexity | cs.CC | Covers models of computation, complexity class... |

| 3 | Computer Science | Computer Science | Computer Science | Computational Engineering, Finance, and Science | cs.CE | Covers applications of computer science to the... |

| 4 | Computer Science | Computer Science | Computer Science | Computational Geometry | cs.CG | Roughly includes material in ACM Subject Class... |

| ... | ... | ... | ... | ... | ... | ... |

| 150 | Statistics | Statistics | Statistics | Computation | stat.CO | Algorithms, Simulation, Visualization |

| 151 | Statistics | Statistics | Statistics | Methodology | stat.ME | Design, Surveys, Model Selection, Multiple Tes... |

| 152 | Statistics | Statistics | Statistics | Machine Learning | stat.ML | Covers machine learning papers (supervised, un... |

| 153 | Statistics | Statistics | Statistics | Other Statistics | stat.OT | Work in statistics that does not fit into the ... |

| 154 | Statistics | Statistics | Statistics | Statistics Theory | stat.TH | stat.TH is an alias for math.ST. Asymptotics, ... |

155 rows × 6 columns

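A note on the regular-expression calls in the code above: re.sub replaces the matches of a pattern in a string.

- pattern: the regular-expression pattern.

- repl: the replacement string; it can also be a function.

- string: the original string to search and replace in.

- count: the maximum number of replacements; the default 0 means replace all matches.

- flags: the matching flags, given as an integer.

- pattern, repl and string are required arguments

re.sub(pattern, repl, string, count=0, flags=0)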

An example:

import re

phone = "2004-959-559 # this is a phone number"

# Remove the comment
num = re.sub(r'#.*$', "", phone)
print("Phone number : ", num)

# Remove everything that is not a digit
num = re.sub(r'\D', "", phone)
print("Phone number : ", num)

Phone number :  2004-959-559 

Phone number :  2004959559

For details, see: https://www.runoob.com/python3/python3-reg-expressions.html

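For our code:

re.sub(r"(.*)\((.*)\)",r"\2", " Astrophysics(astro-ph)")

'astro-ph'

The arguments are:

- The pattern has the form "any characters" + "(" + "any characters" + ")".

- The replacement string repl is the content of the second group.

- The string to search is the raw scraped text.

Here is an online regular-expression tester worth recommending: https://tool.oschina.net/regex/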
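One thing to keep in mind with re.sub is that it returns the input unchanged when the pattern does not match, so a heading without parentheses would be stored as both name and code. As a sketch of an alternative (my own helper, not the tutorial's code), a single re.match can return both groups at once and make the no-match case explicit. The pattern below is slightly different from the one above: it also trims surrounding whitespace.

```python
import re

# "<name>(<code>)" with optional whitespace around the parts.
HEADING = re.compile(r"\s*(.*?)\s*\((.*)\)\s*$")


def split_heading(raw):
    """Return (text before the parentheses, text inside them), or (text, None)."""
    m = HEADING.match(raw)
    if m is None:
        return raw.strip(), None  # no parentheses found
    return m.group(1), m.group(2)


print(split_heading(" Astrophysics(astro-ph)"))          # ('Astrophysics', 'astro-ph')
print(split_heading("cs.AI (Artificial Intelligence)"))  # ('cs.AI', 'Artificial Intelligence')
print(split_heading("Physics"))                          # ('Physics', None)
```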

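Data analysis and visualization

First, let's look at the distribution of paper counts across the top-level groups:

We use the merge function to join the two DataFrames on their shared column "categories", group by "group_name", put the count in the "id" column, and sort by it.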

_df = data.merge(df_taxonomy, on="categories", how="left").drop_duplicates(["id","group_name"]).groupby("group_name").agg({"id":"count"}).sort_values(by="id",ascending=False).reset_index()

_df

| | group_name | id |

|---|---|---|

| 0 | Physics | 79985 |

| 1 | Mathematics | 51567 |

| 2 | Computer Science | 40067 |

| 3 | Statistics | 4054 |

| 4 | Electrical Engineering and Systems Science | 3297 |

| 5 | Quantitative Biology | 1994 |

| 6 | Quantitative Finance | 826 |

| 7 | Economics | 576 |
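One detail of this merge is worth checking on toy data. As far as I can tell, joining on the raw categories string only matches papers whose string is exactly one taxonomy code, so a paper tagged "math.CO cs.CG" finds no group and is dropped by groupby. The sketch below (invented ids, a four-row stand-in taxonomy) contrasts that with splitting and exploding the categories first. The explode variant is my own alternative, it would give different totals from the table above, and it needs pandas 0.25 or later.

```python
import pandas as pd

papers = pd.DataFrame({
    "id": ["p1", "p2", "p3"],
    "categories": ["cs.AI", "math.CO cs.CG", "cs.AI cs.CV"],
})
taxonomy = pd.DataFrame({
    "categories": ["cs.AI", "cs.CG", "cs.CV", "math.CO"],
    "group_name": ["Computer Science", "Computer Science", "Computer Science", "Mathematics"],
})

# Same pipeline as in the notes: only p1 matches a taxonomy code exactly.
exact_counts = (
    papers.merge(taxonomy, on="categories", how="left")
    .drop_duplicates(["id", "group_name"])
    .groupby("group_name")
    .agg({"id": "count"})
)

# Alternative: one row per (paper, single category) before merging.
exploded = papers.assign(categories=papers["categories"].str.split(" ")).explode("categories")
per_group = (
    exploded.merge(taxonomy, on="categories", how="left")
    .drop_duplicates(["id", "group_name"])  # count a paper once per group
    .groupby("group_name")
    .agg({"id": "count"})
)

print(exact_counts["id"].to_dict())  # {'Computer Science': 1}
print(per_group["id"].to_dict())     # {'Computer Science': 3, 'Mathematics': 1}
```

With the exploded version a cross-listed paper is counted once in every group it belongs to, so the group totals can add up to more than the number of papers.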

Next, we visualize the results in the table above as a pie chart:

fig = plt.figure(figsize=(15,12))

explode = (0, 0, 0, 0.2, 0.3, 0.3, 0.2, 0.1)

plt.pie(_df["id"], labels=_df["group_name"], autopct='%1.2f%%', startangle=160, explode=explode)

plt.tight_layout()

plt.show()

[Figure: pie chart of paper counts by group (output_33_0.png)]

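Next we count the papers from 2019 onward in each computer science subfield. Again we use merge to join the two DataFrames on their shared column categories, then filter with query. After that we aggregate and reshape the data to get the following result: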

group_name="Computer Science"

cats = data.merge(df_taxonomy, on="categories").query("group_name == @group_name")

cats.groupby(["year","category_name"]).count().reset_index().pivot(index="category_name", columns="year",values="id")

| category_name | 2019 | 2020 |

|---|---|---|

| Artificial Intelligence | 558 | 757 |

| Computation and Language | 2153 | 2906 |

| Computational Complexity | 131 | 188 |

| Computational Engineering, Finance, and Science | 108 | 205 |

| Computational Geometry | 199 | 216 |

| Computer Science and Game Theory | 281 | 323 |

| Computer Vision and Pattern Recognition | 5559 | 6517 |

| Computers and Society | 346 | 564 |

| Cryptography and Security | 1067 | 1238 |

| Data Structures and Algorithms | 711 | 902 |

| Databases | 282 | 342 |

| Digital Libraries | 125 | 157 |

| Discrete Mathematics | 84 | 81 |

| Distributed, Parallel, and Cluster Computing | 715 | 774 |

| Emerging Technologies | 101 | 84 |

| Formal Languages and Automata Theory | 152 | 137 |

| General Literature | 5 | 5 |

| Graphics | 116 | 151 |

| Hardware Architecture | 95 | 159 |

| Human-Computer Interaction | 420 | 580 |

| Information Retrieval | 245 | 331 |

| Logic in Computer Science | 470 | 504 |

| Machine Learning | 177 | 538 |

| Mathematical Software | 27 | 45 |

| Multiagent Systems | 85 | 90 |

| Multimedia | 76 | 66 |

| Networking and Internet Architecture | 864 | 783 |

| Neural and Evolutionary Computing | 235 | 279 |

| Numerical Analysis | 40 | 11 |

| Operating Systems | 36 | 33 |

| Other Computer Science | 67 | 69 |

| Performance | 45 | 51 |

| Programming Languages | 268 | 294 |

| Robotics | 917 | 1298 |

| Social and Information Networks | 202 | 325 |

| Software Engineering | 659 | 804 |

| Sound | 7 | 4 |

| Symbolic Computation | 44 | 36 |

| Systems and Control | 415 | 133 |

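The results show that Computer Vision and Pattern Recognition is the CS subcategory with the most papers, far ahead of the other CS subcategories, and its paper count is still growing year over year. In addition, Computation and Language, Cryptography and Security, and Robotics each had more than 1000 or close to 1000 papers in 2019, which matches our expectations.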
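The groupby, count, pivot chain that produced the table can be followed step by step on a toy frame (all values invented). The sketch also shows pd.crosstab, a shortcut I am adding for comparison, which fills empty cells with 0 where pivot leaves them missing.

```python
import pandas as pd

cats = pd.DataFrame({
    "id": ["p1", "p2", "p3", "p4"],
    "category_name": ["Robotics", "Robotics", "Databases", "Robotics"],
    "year": [2019, 2020, 2019, 2019],
})

# Count ids per (year, category), then spread the years into columns.
counts = cats.groupby(["year", "category_name"]).count().reset_index()
table = counts.pivot(index="category_name", columns="year", values="id")

# A combination that never occurs (Databases, 2020) is missing after pivot.
filled = table.fillna(0).astype(int)

# crosstab counts co-occurrences directly and uses 0 for empty cells.
crosstab = pd.crosstab(cats["category_name"], cats["year"])

print(filled)
```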

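Summary

- What was covered: understanding the competition problem, reading data with Pandas, and data statistics;

- Outcome: learned the basics of Pandas;

- Reflections: my web-scraping knowledge is weak, and using merge in practice still differs quite a bit from the practice exercises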