Contents

- References
- Example Description
- Introduction to AVAudioFifo
- Example Code

1. References

- [1] FFmpeg/doc/examples/transcoding.c

- [2] FFmpeg/doc/examples/transcode_aac.c

2. Example Description

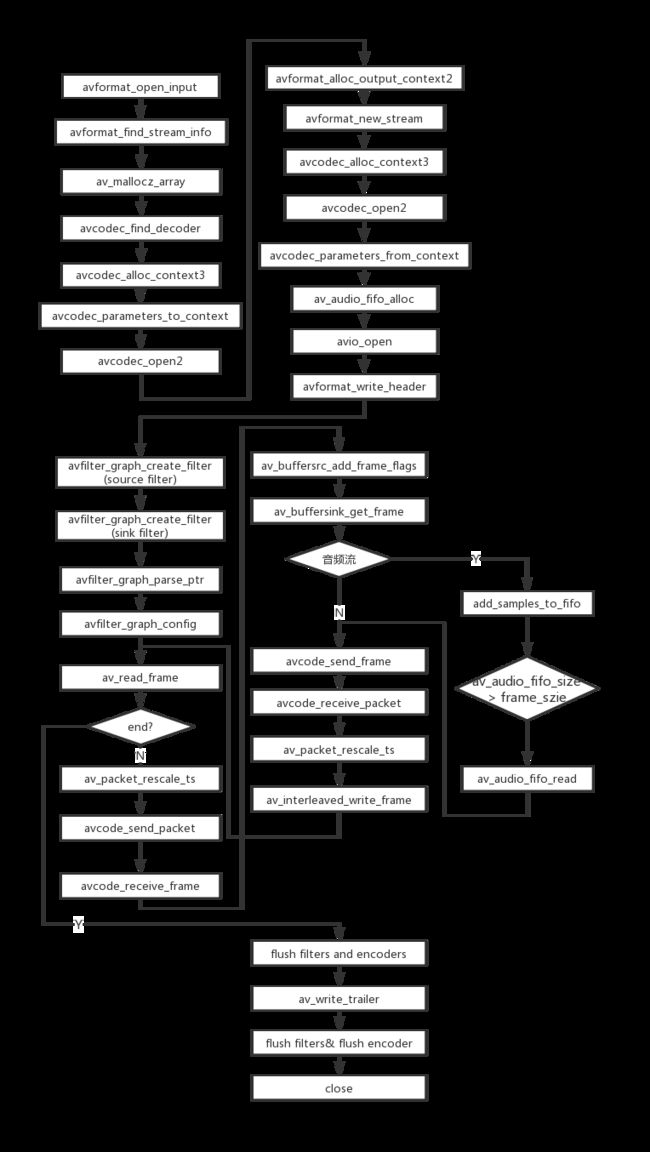

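The example implements a "demux -> decode -> filter -> encode -> mux" pipeline.

It is adapted from [1], with the following changes:

- The deprecated encode/decode APIs used in [1] are replaced with the newer send/receive APIs (for example avcodec_send_frame()/avcodec_receive_packet()).

- Before encoding, audio data passes through an AVAudioFifo so that every frame handed to the audio encoder matches the encoder's frame size. Without this, encoding to AAC fails with the error "more samples than frame size". AVAudioFifo provides a first-in, first-out audio buffer queue.

- After encoding, the pts and dts of each AVPacket are additionally rescaled to account for the encoder and decoder using different time_base values. Without this step, the encoded AVPackets carry incorrect pts and dts.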

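The figure above shows the flowchart of the example.

- For details on the encoding/decoding APIs, see "FFmpeg Audio Decoding".

- For details on the remuxing flow, see "FFmpeg Remuxing".

- For an introduction to the filtering flow, see "FFmpeg libavfilter Usage Example: Processing YUV Data".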

3. Introduction to AVAudioFifo

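AVAudioFifo provides a first-in, first-out audio buffer queue with the following properties:

- It operates at the sample level rather than the byte level.

- It supports multi-channel formats, both planar and packed.

- It automatically reallocates memory when data is written to a full buffer.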

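The main AVAudioFifo functions are:

- av_audio_fifo_alloc(): creates an AVAudioFifo from a sample format, a channel count and an initial size in samples.

- av_audio_fifo_realloc(): reallocates an AVAudioFifo to a new size in samples.

- av_audio_fifo_write(): writes data into the AVAudioFifo. If the available space is smaller than the nb_samples argument, the AVAudioFifo is reallocated automatically.

- av_audio_fifo_size(): returns the number of samples currently available for reading.

- av_audio_fifo_read(): reads data from the AVAudioFifo.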

av_audio_fifo_read() is declared in libavutil/audio_fifo.h as follows:

```c
/**
 * Read data from an AVAudioFifo.
 *
 * @see enum AVSampleFormat
 * The documentation for AVSampleFormat describes the data layout.
 *
 * @param af          AVAudioFifo to read from
 * @param data        audio data plane pointers
 * @param nb_samples  number of samples to read
 * @return            number of samples actually read, or negative AVERROR code
 *                    on failure. The number of samples actually read will not
 *                    be greater than nb_samples, and will only be less than
 *                    nb_samples if av_audio_fifo_size is less than nb_samples.
 */
int av_audio_fifo_read(AVAudioFifo *af, void **data, int nb_samples);
```

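Notes:

- data takes the array of data plane pointers. For example, if the samples are stored in an AVFrame, pass AVFrame.data. A planar format has one plane per channel, while a packed format has a single plane with the channels interleaved.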

4. Example Code

The following code is adapted from [1].

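```c
/**
 * @file
 * API example for demuxing, decoding, filtering, encoding and muxing
 * @example transcoding.c
 */

#include <libavcodec/avcodec.h>
#include <libavformat/avformat.h>
#include <libavfilter/buffersink.h>
#include <libavfilter/buffersrc.h>
#include <libavutil/audio_fifo.h>
#include <libavutil/channel_layout.h>
#include <libavutil/opt.h>
#include <libavutil/pixdesc.h>

static AVFormatContext *ifmt_ctx;
static AVFormatContext *ofmt_ctx;

typedef struct FilteringContext {
    AVFilterContext *buffersink_ctx;
    AVFilterContext *buffersrc_ctx;
    AVFilterGraph *filter_graph;
} FilteringContext;
static FilteringContext *filter_ctx;

typedef struct StreamContext {
    AVCodecContext *dec_ctx;
    AVCodecContext *enc_ctx;
    AVAudioFifo *fifo;   /* regroups audio samples into encoder-sized frames */
    int64_t pts_audio;   /* next audio pts in samples (encoder time base 1/sample_rate) */
} StreamContext;
static StreamContext *stream_ctx;
```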

```c
static int open_input_file(const char *filename)
{
    int ret;
    unsigned int i;

    ifmt_ctx = NULL;
    if ((ret = avformat_open_input(&ifmt_ctx, filename, NULL, NULL)) < 0) {
        av_log(NULL, AV_LOG_ERROR, "Cannot open input file\n");
        return ret;
    }

    if ((ret = avformat_find_stream_info(ifmt_ctx, NULL)) < 0) {
        av_log(NULL, AV_LOG_ERROR, "Cannot find stream information\n");
        return ret;
    }

    stream_ctx = av_mallocz_array(ifmt_ctx->nb_streams, sizeof(*stream_ctx));
    if (!stream_ctx)
        return AVERROR(ENOMEM);

    for (i = 0; i < ifmt_ctx->nb_streams; i++) {
        AVStream *stream = ifmt_ctx->streams[i];
        AVCodec *dec = avcodec_find_decoder(stream->codecpar->codec_id);
        AVCodecContext *codec_ctx;
        if (!dec) {
            av_log(NULL, AV_LOG_ERROR, "Failed to find decoder for stream #%u\n", i);
            return AVERROR_DECODER_NOT_FOUND;
        }
        codec_ctx = avcodec_alloc_context3(dec);
        if (!codec_ctx) {
            av_log(NULL, AV_LOG_ERROR, "Failed to allocate the decoder context for stream #%u\n", i);
            return AVERROR(ENOMEM);
        }
        ret = avcodec_parameters_to_context(codec_ctx, stream->codecpar);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Failed to copy decoder parameters to input decoder context "
                   "for stream #%u\n", i);
            return ret;
        }
        /* Reencode video & audio and remux subtitles etc. */
        if (codec_ctx->codec_type == AVMEDIA_TYPE_VIDEO
                || codec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {
            if (codec_ctx->codec_type == AVMEDIA_TYPE_VIDEO)
                codec_ctx->framerate = av_guess_frame_rate(ifmt_ctx, stream, NULL);
            /* Open decoder */
            ret = avcodec_open2(codec_ctx, dec, NULL);
            if (ret < 0) {
                av_log(NULL, AV_LOG_ERROR, "Failed to open decoder for stream #%u\n", i);
                return ret;
            }
        }
        stream_ctx[i].dec_ctx = codec_ctx;
    }

    av_dump_format(ifmt_ctx, 0, filename, 0);
    return 0;
}
```

```c
static int open_output_file(const char *filename)
{
    AVStream *out_stream;
    AVStream *in_stream;
    AVCodecContext *dec_ctx, *enc_ctx;
    AVCodec *encoder;
    int ret;
    unsigned int i;

    ofmt_ctx = NULL;
    avformat_alloc_output_context2(&ofmt_ctx, NULL, NULL, filename);
    if (!ofmt_ctx) {
        av_log(NULL, AV_LOG_ERROR, "Could not create output context\n");
        return AVERROR_UNKNOWN;
    }

    for (i = 0; i < ifmt_ctx->nb_streams; i++) {
        out_stream = avformat_new_stream(ofmt_ctx, NULL);
        if (!out_stream) {
            av_log(NULL, AV_LOG_ERROR, "Failed allocating output stream\n");
            return AVERROR_UNKNOWN;
        }

        in_stream = ifmt_ctx->streams[i];
        dec_ctx = stream_ctx[i].dec_ctx;

        if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO
                || dec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {
            /* in this example, we choose transcoding to same codec */
            encoder = avcodec_find_encoder(dec_ctx->codec_id);
            if (!encoder) {
                av_log(NULL, AV_LOG_FATAL, "Necessary encoder not found\n");
                return AVERROR_INVALIDDATA;
            }
            enc_ctx = avcodec_alloc_context3(encoder);
            if (!enc_ctx) {
                av_log(NULL, AV_LOG_FATAL, "Failed to allocate the encoder context\n");
                return AVERROR(ENOMEM);
            }

            /* In this example, we transcode to same properties (picture size,
             * sample rate etc.). These properties can be changed for output
             * streams easily using filters */
            if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO) {
                enc_ctx->height = dec_ctx->height;
                enc_ctx->width = dec_ctx->width;
                enc_ctx->sample_aspect_ratio = dec_ctx->sample_aspect_ratio;
                /* take first format from list of supported formats */
                if (encoder->pix_fmts)
                    enc_ctx->pix_fmt = encoder->pix_fmts[0];
                else
                    enc_ctx->pix_fmt = dec_ctx->pix_fmt;
                /* video time_base can be set to whatever is handy and supported by encoder */
                enc_ctx->time_base = av_inv_q(dec_ctx->framerate);
                av_log(NULL, AV_LOG_DEBUG, "enc_ctx->time_base=%d/%d\n",
                       enc_ctx->time_base.num, enc_ctx->time_base.den);
            } else {
                enc_ctx->sample_rate = dec_ctx->sample_rate;
                enc_ctx->channel_layout = dec_ctx->channel_layout;
                enc_ctx->channels = av_get_channel_layout_nb_channels(enc_ctx->channel_layout);
                /* take first format from list of supported formats */
                enc_ctx->sample_fmt = encoder->sample_fmts[0];
                enc_ctx->time_base = (AVRational){1, enc_ctx->sample_rate};
                av_log(NULL, AV_LOG_DEBUG, "enc_ctx->time_base=%d/%d, "
                       "enc_ctx->sample_fmt=%s, dec_ctx->sample_fmt=%s\n",
                       enc_ctx->time_base.num, enc_ctx->time_base.den,
                       av_get_sample_fmt_name(enc_ctx->sample_fmt),
                       av_get_sample_fmt_name(dec_ctx->sample_fmt));
            }

            if (ofmt_ctx->oformat->flags & AVFMT_GLOBALHEADER)
                enc_ctx->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

            /* Third parameter can be used to pass settings to encoder */
            ret = avcodec_open2(enc_ctx, encoder, NULL);
            if (ret < 0) {
                av_log(NULL, AV_LOG_ERROR, "Cannot open video encoder for stream #%u\n", i);
                return ret;
            }
            ret = avcodec_parameters_from_context(out_stream->codecpar, enc_ctx);
            if (ret < 0) {
                av_log(NULL, AV_LOG_ERROR, "Failed to copy encoder parameters to output stream #%u\n", i);
                return ret;
            }

            out_stream->time_base = enc_ctx->time_base;
            stream_ctx[i].enc_ctx = enc_ctx;
            stream_ctx[i].pts_audio = 0;

            /* Only audio encoders with a fixed frame_size need the FIFO.
             * Encoders with AV_CODEC_CAP_VARIABLE_FRAME_SIZE may report
             * frame_size == 0; they get no FIFO and take frames as-is. */
            if (enc_ctx->codec_type == AVMEDIA_TYPE_AUDIO && enc_ctx->frame_size > 0) {
                stream_ctx[i].fifo = av_audio_fifo_alloc(enc_ctx->sample_fmt,
                                                         enc_ctx->channels,
                                                         enc_ctx->frame_size);
                if (!stream_ctx[i].fifo) {
                    av_log(NULL, AV_LOG_ERROR, "Could not allocate FIFO for stream #%u\n", i);
                    return AVERROR(ENOMEM);
                }
            }
        } else if (dec_ctx->codec_type == AVMEDIA_TYPE_UNKNOWN) {
            av_log(NULL, AV_LOG_FATAL, "Elementary stream #%u is of unknown type, cannot proceed\n", i);
            return AVERROR_INVALIDDATA;
        } else {
            /* if this stream must be remuxed */
            ret = avcodec_parameters_copy(out_stream->codecpar, in_stream->codecpar);
            if (ret < 0) {
                av_log(NULL, AV_LOG_ERROR, "Copying parameters for stream #%u failed\n", i);
                return ret;
            }
            out_stream->time_base = in_stream->time_base;
        }
    }
    av_dump_format(ofmt_ctx, 0, filename, 1);

    if (!(ofmt_ctx->oformat->flags & AVFMT_NOFILE)) {
        ret = avio_open(&ofmt_ctx->pb, filename, AVIO_FLAG_WRITE);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Could not open output file '%s'\n", filename);
            return ret;
        }
    }

    /* init muxer, write output file header */
    ret = avformat_write_header(ofmt_ctx, NULL);
    if (ret < 0) {
        av_log(NULL, AV_LOG_ERROR, "Error occurred when opening output file\n");
        return ret;
    }

    return 0;
}
```

```c
static int init_filter(FilteringContext* fctx, AVCodecContext *dec_ctx,
        AVCodecContext *enc_ctx, const char *filter_spec)
{
    char args[512];
    int ret = 0;
    const AVFilter *buffersrc = NULL;
    const AVFilter *buffersink = NULL;
    AVFilterContext *buffersrc_ctx = NULL;
    AVFilterContext *buffersink_ctx = NULL;
    AVFilterInOut *outputs = avfilter_inout_alloc();
    AVFilterInOut *inputs  = avfilter_inout_alloc();
    AVFilterGraph *filter_graph = avfilter_graph_alloc();

    if (!outputs || !inputs || !filter_graph) {
        ret = AVERROR(ENOMEM);
        goto end;
    }

    if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO) {
        buffersrc = avfilter_get_by_name("buffer");
        buffersink = avfilter_get_by_name("buffersink");
        if (!buffersrc || !buffersink) {
            av_log(NULL, AV_LOG_ERROR, "filtering source or sink element not found\n");
            ret = AVERROR_UNKNOWN;
            goto end;
        }

        snprintf(args, sizeof(args),
                "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                dec_ctx->width, dec_ctx->height, dec_ctx->pix_fmt,
                dec_ctx->time_base.num, dec_ctx->time_base.den,
                dec_ctx->sample_aspect_ratio.num,
                dec_ctx->sample_aspect_ratio.den);

        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",
                args, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer source\n");
            goto end;
        }

        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",
                NULL, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer sink\n");
            goto end;
        }

        ret = av_opt_set_bin(buffersink_ctx, "pix_fmts",
                (uint8_t*)&enc_ctx->pix_fmt, sizeof(enc_ctx->pix_fmt),
                AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output pixel format\n");
            goto end;
        }
    } else if (dec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {
        buffersrc = avfilter_get_by_name("abuffer");
        buffersink = avfilter_get_by_name("abuffersink");
        if (!buffersrc || !buffersink) {
            av_log(NULL, AV_LOG_ERROR, "filtering source or sink element not found\n");
            ret = AVERROR_UNKNOWN;
            goto end;
        }

        if (!dec_ctx->channel_layout)
            dec_ctx->channel_layout =
                av_get_default_channel_layout(dec_ctx->channels);
        snprintf(args, sizeof(args),
                "time_base=%d/%d:sample_rate=%d:sample_fmt=%s:channel_layout=0x%"PRIx64,
                dec_ctx->time_base.num, dec_ctx->time_base.den, dec_ctx->sample_rate,
                av_get_sample_fmt_name(dec_ctx->sample_fmt),
                dec_ctx->channel_layout);
        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",
                args, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create audio buffer source\n");
            goto end;
        }

        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",
                NULL, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create audio buffer sink\n");
            goto end;
        }

        ret = av_opt_set_bin(buffersink_ctx, "sample_fmts",
                (uint8_t*)&enc_ctx->sample_fmt, sizeof(enc_ctx->sample_fmt),
                AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output sample format\n");
            goto end;
        }

        ret = av_opt_set_bin(buffersink_ctx, "channel_layouts",
                (uint8_t*)&enc_ctx->channel_layout,
                sizeof(enc_ctx->channel_layout), AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output channel layout\n");
            goto end;
        }

        ret = av_opt_set_bin(buffersink_ctx, "sample_rates",
                (uint8_t*)&enc_ctx->sample_rate, sizeof(enc_ctx->sample_rate),
                AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output sample rate\n");
            goto end;
        }
    } else {
        ret = AVERROR_UNKNOWN;
        goto end;
    }

    /* Endpoints for the filter graph. */
    outputs->name       = av_strdup("in");
    outputs->filter_ctx = buffersrc_ctx;
    outputs->pad_idx    = 0;
    outputs->next       = NULL;

    inputs->name       = av_strdup("out");
    inputs->filter_ctx = buffersink_ctx;
    inputs->pad_idx    = 0;
    inputs->next       = NULL;

    if (!outputs->name || !inputs->name) {
        ret = AVERROR(ENOMEM);
        goto end;
    }

    if ((ret = avfilter_graph_parse_ptr(filter_graph, filter_spec,
                    &inputs, &outputs, NULL)) < 0)
        goto end;

    if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0)
        goto end;

    /* Fill FilteringContext */
    fctx->buffersrc_ctx = buffersrc_ctx;
    fctx->buffersink_ctx = buffersink_ctx;
    fctx->filter_graph = filter_graph;

end:
    avfilter_inout_free(&inputs);
    avfilter_inout_free(&outputs);

    return ret;
}
```

```c
/**
 * Add converted input audio samples to the FIFO buffer for later processing.
 * @param fifo                    Buffer to add the samples to
 * @param converted_input_samples Samples to be added. The dimensions are channel
 *                                (for multi-channel audio), sample.
 * @param frame_size              Number of samples to be added
 * @return Error code (0 if successful)
 */
static int add_samples_to_fifo(AVAudioFifo *fifo,
                               uint8_t **converted_input_samples,
                               const int frame_size)
{
    int error;

    /* Make the FIFO as large as it needs to be to hold both,
     * the old and the new samples. */
    if ((error = av_audio_fifo_realloc(fifo, av_audio_fifo_size(fifo) + frame_size)) < 0) {
        av_log(NULL, AV_LOG_ERROR, "Could not reallocate FIFO\n");
        return error;
    }

    /* Store the new samples in the FIFO buffer. */
    if (av_audio_fifo_write(fifo, (void **)converted_input_samples,
                            frame_size) < frame_size) {
        av_log(NULL, AV_LOG_ERROR, "Could not write data to FIFO\n");
        return AVERROR_EXIT;
    }
    return 0;
}
```

```c
static int init_filters(void)
{
    const char *filter_spec;
    unsigned int i;
    int ret;
    filter_ctx = av_malloc_array(ifmt_ctx->nb_streams, sizeof(*filter_ctx));
    if (!filter_ctx)
        return AVERROR(ENOMEM);

    for (i = 0; i < ifmt_ctx->nb_streams; i++) {
        filter_ctx[i].buffersrc_ctx  = NULL;
        filter_ctx[i].buffersink_ctx = NULL;
        filter_ctx[i].filter_graph   = NULL;
        if (!(ifmt_ctx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO
                || ifmt_ctx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO))
            continue;

        if (ifmt_ctx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO)
            filter_spec = "null"; /* passthrough (dummy) filter for video */
        else
            filter_spec = "anull"; /* passthrough (dummy) filter for audio */
        ret = init_filter(&filter_ctx[i], stream_ctx[i].dec_ctx,
                stream_ctx[i].enc_ctx, filter_spec);
        if (ret)
            return ret;
    }
    return 0;
}
```

```c
/**
 * Initialize one output frame for writing to the output file.
 * The frame will be exactly frame_size samples large.
 * @param[out] frame                Frame to be initialized
 * @param      output_codec_context Codec context of the output file
 * @param      frame_size           Size of the frame
 * @return Error code (0 if successful)
 */
static int init_output_frame(AVFrame **frame,
                             AVCodecContext *output_codec_context,
                             int frame_size)
{
    int error;

    /* Create a new frame to store the audio samples. */
    if (!(*frame = av_frame_alloc())) {
        av_log(NULL, AV_LOG_ERROR, "Could not allocate output frame\n");
        return AVERROR_EXIT;
    }

    /* Set the frame's parameters, especially its size and format.
     * av_frame_get_buffer needs this to allocate memory for the
     * audio samples of the frame.
     * Channel layout, sample format and sample rate are taken
     * from the encoder context. */
    (*frame)->nb_samples     = frame_size;
    (*frame)->channel_layout = output_codec_context->channel_layout;
    (*frame)->format         = output_codec_context->sample_fmt;
    (*frame)->sample_rate    = output_codec_context->sample_rate;

    /* Allocate the samples of the created frame. This call will make
     * sure that the audio frame can hold as many samples as specified. */
    if ((error = av_frame_get_buffer(*frame, 0)) < 0) {
        av_log(NULL, AV_LOG_ERROR, "Could not allocate output frame samples (error '%s')\n",
               av_err2str(error));
        av_frame_free(frame);
        return error;
    }

    return 0;
}
```

```c
static int encode_write_frame(AVFrame *filt_frame, unsigned int stream_index)
{
    int ret;
    AVPacket enc_pkt;

    /* encode filtered frame */
    enc_pkt.data = NULL;
    enc_pkt.size = 0;
    av_init_packet(&enc_pkt);

    /* Audio pts counts samples, i.e. it is expressed in the encoder
     * time base 1/sample_rate. */
    if (stream_ctx[stream_index].enc_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {
        if (filt_frame) {
            filt_frame->pts = stream_ctx[stream_index].pts_audio;
            stream_ctx[stream_index].pts_audio += filt_frame->nb_samples;
        }
    }

    ret = avcodec_send_frame(stream_ctx[stream_index].enc_ctx, filt_frame);
    if (ret < 0) {
        av_log(NULL, AV_LOG_ERROR, "Error submitting the frame to the encoder, %s\n", av_err2str(ret));
        return ret;
    }

    while (1) {
        ret = avcodec_receive_packet(stream_ctx[stream_index].enc_ctx, &enc_pkt);
        if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF) {
            /* the encoder needs more input, or has been fully flushed */
            return 0;
        } else if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Error during encoding, %s\n", av_err2str(ret));
            return ret;
        }

        /* prepare packet for muxing */
        enc_pkt.stream_index = stream_index;
        av_packet_rescale_ts(&enc_pkt,
                             stream_ctx[stream_index].dec_ctx->time_base,
                             stream_ctx[stream_index].enc_ctx->time_base);
        av_packet_rescale_ts(&enc_pkt,
                             stream_ctx[stream_index].enc_ctx->time_base,
                             ofmt_ctx->streams[stream_index]->time_base);

        AVRational enc_timebase = stream_ctx[stream_index].enc_ctx->time_base;
        AVRational ofmt_timebase = ofmt_ctx->streams[stream_index]->time_base;
        av_log(NULL, AV_LOG_DEBUG, "Muxing frame, enc_pkt->dts=%"PRId64", enc_pkt->pts=%"PRId64","
               " enc_ctx->time_base=%d/%d, ofmt_ctx->time_base=%d/%d\n", enc_pkt.dts, enc_pkt.pts,
               enc_timebase.num, enc_timebase.den, ofmt_timebase.num, ofmt_timebase.den);

        /* mux encoded frame */
        ret = av_interleaved_write_frame(ofmt_ctx, &enc_pkt);
        av_packet_unref(&enc_pkt);
        if (ret < 0)
            return ret;
    }
}
```

```c
static int encode_write_frame_fifo(AVFrame *filt_frame, unsigned int stream_index)
{
    StreamContext *sc = &stream_ctx[stream_index];
    int output_frame_size;
    int ret;

    /* Video, and audio encoders that accept any frame size (no FIFO was
     * allocated for them), take the filtered frame as-is. */
    if (!sc->fifo)
        return encode_write_frame(filt_frame, stream_index);

    output_frame_size = sc->enc_ctx->frame_size;

    /* Since the decoder's and the encoder's frame size may differ, store the
     * filtered samples in the FIFO until at least one full encoder frame
     * (output_frame_size samples) is available. */
    ret = add_samples_to_fifo(sc->fifo, filt_frame->data, filt_frame->nb_samples);
    if (ret < 0)
        return ret;

    /* Encode every full frame the FIFO holds. Leftover samples (fewer than
     * output_frame_size) stay in the FIFO until more input arrives. */
    while (av_audio_fifo_size(sc->fifo) >= output_frame_size) {
        AVFrame *output_frame;

        /* Initialize temporary storage for one output frame. */
        ret = init_output_frame(&output_frame, sc->enc_ctx, output_frame_size);
        if (ret < 0)
            return ret;

        /* Read exactly one frame worth of samples from the FIFO into the frame. */
        if (av_audio_fifo_read(sc->fifo, (void **)output_frame->data,
                               output_frame_size) < output_frame_size) {
            av_log(NULL, AV_LOG_ERROR, "Could not read data from FIFO\n");
            av_frame_free(&output_frame);
            return AVERROR_EXIT;
        }

        ret = encode_write_frame(output_frame, stream_index);
        av_frame_free(&output_frame);
        if (ret < 0)
            return ret;
    }

    return 0;
}
```

```c
static int filter_encode_write_frame(AVFrame *frame, unsigned int stream_index)
{
    int ret;
    AVFrame *filt_frame;

    av_log(NULL, AV_LOG_INFO, "Pushing decoded frame to filters\n");
    /* push the decoded frame into the filtergraph */
    ret = av_buffersrc_add_frame_flags(filter_ctx[stream_index].buffersrc_ctx,
            frame, 0);
    if (ret < 0) {
        av_log(NULL, AV_LOG_ERROR, "Error while feeding the filtergraph\n");
        return ret;
    }

    /* pull filtered frames from the filtergraph */
    while (1) {
        filt_frame = av_frame_alloc();
        if (!filt_frame)
            return AVERROR(ENOMEM);
        av_log(NULL, AV_LOG_INFO, "Pulling filtered frame from filters\n");
        ret = av_buffersink_get_frame(filter_ctx[stream_index].buffersink_ctx,
                filt_frame);
        if (ret < 0) {
            /* if no more frames for output - returns AVERROR(EAGAIN)
             * if flushed and no more frames for output - returns AVERROR_EOF
             * rewrite retcode to 0 to show it as normal procedure completion
             */
            if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)
                ret = 0;
            av_frame_free(&filt_frame);
            break;
        }

        filt_frame->pict_type = AV_PICTURE_TYPE_NONE;
        ret = encode_write_frame_fifo(filt_frame, stream_index);
        /* The samples were copied into the FIFO, or the encoder took its own
         * reference in avcodec_send_frame(), so free the frame every
         * iteration to avoid leaking it. */
        av_frame_free(&filt_frame);
        if (ret < 0)
            break;
    }

    return ret;
}
```

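```c
static int flush_encoder(unsigned int stream_index)
{
    StreamContext *sc = &stream_ctx[stream_index];
    int ret;

    /* Encode the samples still left in the audio FIFO (fewer than frame_size)
     * as a shorter final frame; libavcodec accepts a short last frame and pads
     * it when the encoder requires a full one. */
    if (sc->fifo && av_audio_fifo_size(sc->fifo) > 0) {
        const int nb_samples = av_audio_fifo_size(sc->fifo);
        AVFrame *last_frame;

        if ((ret = init_output_frame(&last_frame, sc->enc_ctx, nb_samples)) < 0)
            return ret;
        if (av_audio_fifo_read(sc->fifo, (void **)last_frame->data, nb_samples) < nb_samples) {
            av_log(NULL, AV_LOG_ERROR, "Could not read data from FIFO\n");
            av_frame_free(&last_frame);
            return AVERROR_EXIT;
        }
        ret = encode_write_frame(last_frame, stream_index);
        av_frame_free(&last_frame);
        if (ret < 0)
            return ret;
    }

    if (!(sc->enc_ctx->codec->capabilities & AV_CODEC_CAP_DELAY))
        return 0;

    av_log(NULL, AV_LOG_INFO, "Flushing stream #%u encoder\n", stream_index);
    ret = encode_write_frame(NULL, stream_index);
    return ret;
}
```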

```c
int main(int argc, char **argv)
{
    int ret;
    //av_log_set_level(AV_LOG_DEBUG);
    AVPacket packet = { .data = NULL, .size = 0 };
    AVFrame *frame = NULL;
    enum AVMediaType type;
    unsigned int stream_index;
    unsigned int i;
    int got_frame;

    if (argc != 3) {
        av_log(NULL, AV_LOG_ERROR, "Usage: %s <input file> <output file>\n", argv[0]);
        return 1;
    }
    ...
```

Notes:

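- To encode H.264, FFmpeg must be built with support for an H.264 encoder; otherwise an error like the one below is reported. In that log, avcodec_find_encoder() returned the h264_v4l2m2m encoder, which could not find a usable device, so avcodec_open2() failed. Likewise, any other third-party encoder must be enabled when FFmpeg is built. For build instructions, see "FFmpeg Build: Linux Platform".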

```
[h264_v4l2m2m @ 0x205a940] Could not find a valid device
[h264_v4l2m2m @ 0x205a940] can't configure encoder
Cannot open video encoder for stream #0
Error occurred: Invalid argument
```