SparkCore: RDD Programming

1. Programming Model

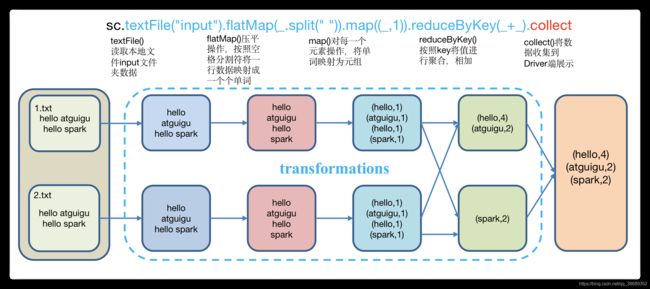

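- In Spark, an RDD is represented as an object, and you transform it by calling methods on that object. Once an RDD has been defined through a series of transformations, you call an action to trigger its computation. An action either returns a result to the application or saves data to a storage system. Spark computes an RDD only when it reaches an action (lazy evaluation).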
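Lazy evaluation can be shown without Spark, because Scala's own `Iterator` behaves the same way. `map` only records the function, and nothing runs until a terminal operation such as `toList` consumes the iterator, much as an action does for an RDD. The object name `LazyAnalogy` is hypothetical. This is an analogy for the idea, not a description of how Spark schedules jobs.

```scala
object LazyAnalogy {
  /** Returns (calls before the "action", calls after it, the computed result). */
  def run(): (Int, Int, List[Int]) = {
    var calls = 0 // counts how often the mapping function actually runs
    // "Transformation": Iterator.map only records the function, nothing runs yet
    val doubled = Iterator(1, 2, 3, 4).map { x => calls += 1; x * 2 }
    val before = calls
    // "Action": toList forces the whole pipeline to execute
    val result = doubled.toList
    (before, calls, result)
  }

  def main(args: Array[String]): Unit = {
    val (before, after, result) = run()
    println(s"calls before action: $before, after action: $after, result: $result")
  }
}
```

The function passed to `map` runs zero times before `toList` and four times after it.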

2. Creating RDDs

2.1 Preparing the IDEA environment

- Create a Maven project

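- Add the following dependency and build plugins to the pom file:

```xml
<dependencies>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.11</artifactId>
        <version>2.1.1</version>
    </dependency>
</dependencies>

<build>
    <finalName>SparkCoreTest</finalName>
    <plugins>
        <plugin>
            <groupId>net.alchim31.maven</groupId>
            <artifactId>scala-maven-plugin</artifactId>
            <version>3.4.6</version>
            <executions>
                <execution>
                    <goals>
                        <goal>compile</goal>
                        <goal>testCompile</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-assembly-plugin</artifactId>
            <version>3.0.0</version>
            <configuration>
                <archive>
                    <manifest>
                        <mainClass>com.spark.day01.WordCount</mainClass>
                    </manifest>
                </archive>
                <descriptorRefs>
                    <descriptorRef>jar-with-dependencies</descriptorRef>
                </descriptorRefs>
            </configuration>
            <executions>
                <execution>
                    <id>make-assembly</id>
                    <phase>package</phase>
                    <goals>
                        <goal>single</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
    </plugins>
</build>
```

- Add Scala framework support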

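- Create a scala folder and mark it as Source Root

- Create a package

2.2 Creating an RDD from a collection

- Spark provides two main functions for creating an RDD from a collection: parallelize and makeRDD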

```scala
package com.spark.day02

import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

/*
 * Creates an RDD from data held in an in-memory collection
 */
object Spark01_CreateRDD_mem {
  def main(args: Array[String]): Unit = {
    // Create the Spark configuration object
    val conf = new SparkConf().setAppName("Spark01_CreateRDD_mem").setMaster("local[*]")
    // Create the SparkContext from the configuration
    val sc = new SparkContext(conf)
    // Create a collection
    val list: List[Int] = List(1, 2, 3, 4)
    // Option 1: create the RDD from the collection with parallelize
    // val rdd: RDD[Int] = sc.parallelize(list)
    // Option 2: makeRDD, which simply calls parallelize internally
    val rdd: RDD[Int] = sc.makeRDD(list)
    rdd.collect().foreach(println)
    // Close the connection
    sc.stop()
  }
}
```

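2.3 Creating an RDD from a dataset in an external storage system

- External storage systems include the local file system and any data source supported by Hadoop, such as HDFS and HBase.

- Prepare the data

- Right-click the SparkCoreTest1 project name => create a folder named input => right-click the input folder => create two files, 1.txt and 2.txt. Put some words in each file.

- Create the RDD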

```scala
package com.spark.day02

import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object Spark02_Create_file {
  def main(args: Array[String]): Unit = {
    // Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // Create the RDD by reading a local file
    // val rdd: RDD[String] = sc.textFile("/Users/tiger/Desktop/JinWWProject/Spark_Project/input/1.txt")
    // Create the RDD by reading data from an HDFS server
    val rdd: RDD[String] = sc.textFile("hdfs://172.23.4.221:9000/input")
    // textFile is lazy: an action such as collect is needed to actually read the data
    rdd.collect().foreach(println)
    // Close the connection
    sc.stop()
  }
}
```

2.4 Creating an RDD from another RDD

- Running an operation on an existing RDD produces a new RDD.

3. Partitioning Rules

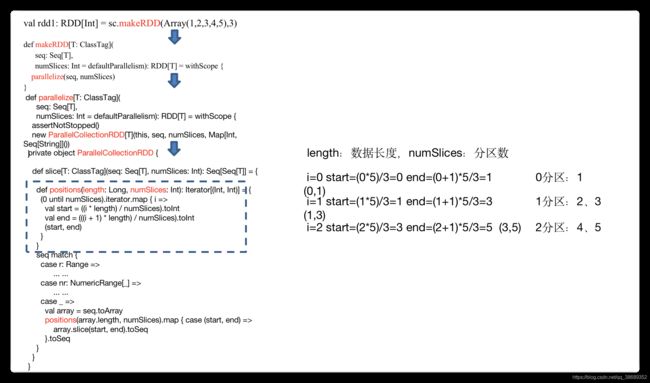

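3.1 Default partitioning source code (RDD created from a collection)

- Source code walkthrough for the default number of partitions

- Verification in code (8 partitions were produced; with local[*], the default parallelism equals the number of CPU cores)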

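```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object partition01_default {
  def main(args: Array[String]): Unit = {
    val conf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("SparkCoreTest1")
    val sc: SparkContext = new SparkContext(conf)
    // No partition count is given, so the default parallelism is used
    val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4))
    // One part-xxxxx file is written per partition; the output directory must not exist yet
    rdd.saveAsTextFile("output")
    sc.stop()
  }
}
```

3.2 Partitioning source code (RDD created from a collection)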

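- Partitioning test (RDD created from a collection)

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object partition02_Array {
  def main(args: Array[String]): Unit = {
    val conf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("SparkCoreTest1")
    val sc: SparkContext = new SparkContext(conf)
    // 1) 4 elements, 4 partitions. Output: partition 0 -> 1, partition 1 -> 2, partition 2 -> 3, partition 3 -> 4
    //val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4), 4)
    // 2) 4 elements, 3 partitions. Output: partition 0 -> 1, partition 1 -> 2, partition 2 -> 3, 4
    //val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4), 3)
    // 3) 5 elements, 3 partitions. Output: partition 0 -> 1, partition 1 -> 2, 3, partition 2 -> 4, 5
    val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4, 5), 3)
    rdd.saveAsTextFile("output")
    sc.stop()
  }
}
```

- Partitioning source code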

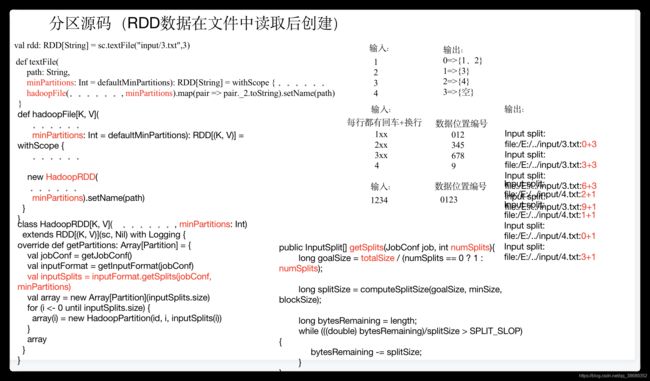
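The three outputs listed above follow from simple index arithmetic. As far as I can tell from the Spark source, `ParallelCollectionRDD` assigns partition `i` the half-open index range `[i * length / numSlices, (i + 1) * length / numSlices)`, using integer division. The plain-Scala object below re-implements only that arithmetic, so it can be checked without a cluster. The name `CollectionSlicing` is hypothetical; this is a sketch, not Spark's class.

```scala
object CollectionSlicing {
  // Partition i covers the index range [i * length / numSlices, (i + 1) * length / numSlices)
  def positions(length: Long, numSlices: Int): Seq[(Int, Int)] =
    (0 until numSlices).map { i =>
      val start = ((i * length) / numSlices).toInt
      val end = (((i + 1) * length) / numSlices).toInt
      (start, end)
    }

  // Splits the data according to positions(); each inner Seq is one partition
  def slice[T](data: Seq[T], numSlices: Int): Seq[Seq[T]] =
    positions(data.length.toLong, numSlices).map { case (start, end) => data.slice(start, end) }

  def main(args: Array[String]): Unit = {
    println(slice(Seq(1, 2, 3, 4), 4))
    println(slice(Seq(1, 2, 3, 4), 3))
    println(slice(Seq(1, 2, 3, 4, 5), 3))
  }
}
```

Because of the integer division, the extra elements land in the later partitions in these examples. That is why 3 and 4 share partition 2 in the second case.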

3.3 Partitioning source code (RDD created by reading a file)

- Partitioning test

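```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object partition03_file {
  def main(args: Array[String]): Unit = {
    val conf: SparkConf = new SparkConf().setMaster("local[*]").setAppName("SparkCoreTest1")
    val sc: SparkContext = new SparkContext(conf)
    // 1) Default number of partitions: math.min(defaultParallelism, 2),
    //    i.e. the smaller of the current core count and 2 under local[*]
    //val rdd: RDD[String] = sc.textFile("input")
    // The second argument of textFile is only the MINIMUM number of partitions;
    // in both cases below, 4 partitions are actually created
    // 2) Input: the numbers 1-4, one per line. Output: 0 => {1, 2}, 1 => {3}, 2 => {4}, 3 => {empty}
    //val rdd: RDD[String] = sc.textFile("input/3.txt", 3)
    // 3) Input: 1234 on a single line. Output: 0 => {1234}, 1 => {empty}, 2 => {empty}, 3 => {empty}
    val rdd: RDD[String] = sc.textFile("input/4.txt", 3)
    rdd.saveAsTextFile("output")
    sc.stop()
  }
}
```

- Source code analysis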

- Note: getSplits only returns the split plan. The data is actually read in the compute method, which creates a LineRecordReader. Two key variables are start = split.getStart() and end = start + split.getLength.
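The 3.3 outputs can be reproduced by hand in two steps: plan the byte ranges, then decide which split reads each line. The plain-Scala sketch below does both. It is not Hadoop's code, and it rests on several assumptions:

- It follows the old-API `FileInputFormat.getSplits` arithmetic: `goalSize = totalSize / numSplits`, and a new split is cut only while `remaining / splitSize > 1.1`.
- It covers a single uncompressed file smaller than one block, containing single-byte characters.
- It assumes the 1-4 test file uses Windows `\r\n` line endings (10 bytes in total). Under that assumption the arithmetic yields the output listed in the comments above.

The line-assignment rule approximates `LineRecordReader`: every split except the first skips its first line, and every split keeps reading while its position is `<= end`. The object and method names are hypothetical.

```scala
import scala.collection.mutable.{ArrayBuffer, ListBuffer}

object TextFileSplits {
  // A split is only cut while remainingBytes / splitSize > 1.1
  val SplitSlop = 1.1

  // (startOffset, length) of every split for ONE file smaller than a block
  def splits(totalSize: Long, numSplits: Int, minSize: Long = 1L): List[(Long, Long)] = {
    val goalSize = totalSize / math.max(numSplits, 1)
    val splitSize = math.max(minSize, goalSize) // min(goalSize, blockSize) == goalSize here
    val buf = ArrayBuffer.empty[(Long, Long)]
    var remaining = totalSize
    while (remaining.toDouble / splitSize > SplitSlop) {
      buf += ((totalSize - remaining, splitSize))
      remaining -= splitSize
    }
    if (remaining != 0) buf += ((totalSize - remaining, remaining))
    buf.toList
  }

  // (byte offset, text without line terminator) of every line; assumes ASCII content
  def lineOffsets(content: String): List[(Long, String)] = {
    val lines = ListBuffer.empty[(Long, String)]
    var start = 0
    while (start < content.length) {
      val nl = content.indexOf('\n', start)
      val end = if (nl == -1) content.length else nl + 1
      lines += ((start.toLong, content.substring(start, end).filterNot(c => c == '\r' || c == '\n')))
      start = end
    }
    lines.toList
  }

  // A line starting at offset s is read by the first split whose end (start + length) >= s
  def assignLines(content: String, numSplits: Int): List[List[String]] = {
    val ends = splits(content.length.toLong, numSplits).map { case (start, len) => start + len }
    val buckets = Array.fill(ends.size)(ListBuffer.empty[String])
    for ((offset, text) <- lineOffsets(content)) {
      val idx = ends.indexWhere(_ >= offset)
      if (idx >= 0) buckets(idx) += text
    }
    buckets.map(_.toList).toList
  }

  def main(args: Array[String]): Unit = {
    println(splits(10L, 3))                        // byte ranges for the 10-byte file
    println(assignLines("1\r\n2\r\n3\r\n4", 3))    // lines per partition, case 2)
    println(assignLines("1234", 3))                // lines per partition, case 3)
  }
}
```

With 10 bytes and a minimum of 3 partitions, `goalSize` is 3. The remaining single byte is not below the 1.1 slop threshold, so it gets its own split, which is why 4 partitions appear instead of 3.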

4. Transformation Operators

- Transformation operators on RDDs fall into three groups overall: Value type, double-Value type, and Key-Value type.

4.1 Value type

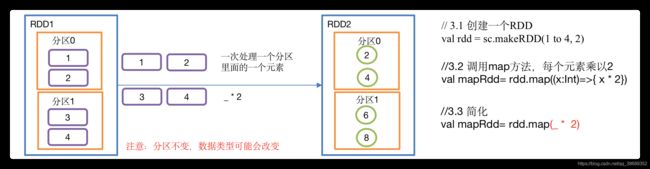

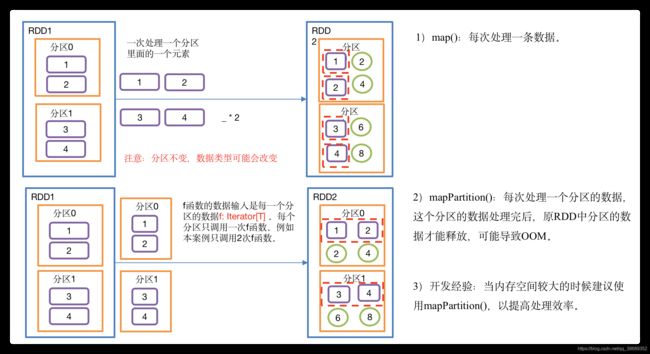

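4.1.1 map(): mapping

- Function signature

```scala
def map[U: ClassTag](f: T => U): RDD[U]
```

- Description: the parameter f is a function that takes one argument. When map is called on an RDD, it iterates over every element of the RDD and applies f to each one in turn, producing a new RDD. In other words, every element of the new RDD is the result of applying f to the corresponding element of the original RDD.

- Example: create an RDD from the array 1-4 with two partitions, and multiply every element by 2 to form a new RDD

- Implementation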

object value01_map {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(1 to 4,2)

// 3.2 调用map方法,每个元素乘以2

val mapRdd: RDD[Int] = rdd.map(_ * 2)

// 3.3 打印修改后的RDD中数据

mapRdd.collect().foreach(println)

//4.关闭连接

sc.stop()

}

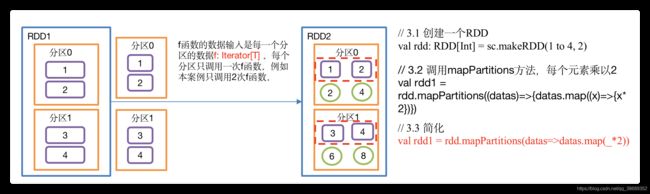

}4.1.2 mapPartitions()以分区为单位执行Map

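- Function signature

```scala
def mapPartitions[U: ClassTag](
    f: Iterator[T] => Iterator[U],
    preservesPartitioning: Boolean = false): RDD[U]
```

- Description: map processes one element at a time, whereas mapPartitions processes the data of a whole partition at a time.

- Example: create an RDD with 4 elements in 2 partitions, and multiply every element by 2 to form a new RDD

- Implementation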

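```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object value02_mapPartitions {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc = new SparkContext(conf)
    // 3. Business logic
    // 3.1 Create an RDD
    val rdd: RDD[Int] = sc.makeRDD(1 to 4, 2)
    // 3.2 Call mapPartitions: f receives a whole partition as an Iterator and doubles every element
    val rdd1 = rdd.mapPartitions(x => x.map(_ * 2))
    // 3.3 Print the data of the transformed RDD
    rdd1.collect().foreach(println)
    // 4. Close the connection
    sc.stop()
  }
}
```

4.1.3 Differences between map() and mapPartitions()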

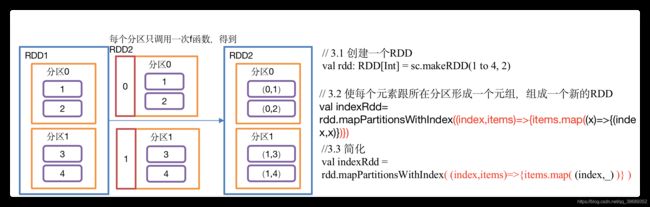
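The difference can be made concrete without Spark. The plain-Scala sketch below models two in-memory partitions, like `makeRDD(1 to 4, 2)`. It counts how often a hypothetical setup step runs; this step stands in for something expensive, such as opening a database connection. With a per-element function (map style) the setup runs for every element. With a per-partition function (mapPartitions style) it runs once per partition. A commonly cited trade-off is that per-partition code which materializes the whole partition can use much more memory when partitions are large. The names are illustrative only.

```scala
object MapVsMapPartitions {
  // Two in-memory "partitions", like makeRDD(1 to 4, 2)
  val partitions: List[List[Int]] = List(List(1, 2), List(3, 4))

  // map style: the function, including any setup inside it, runs once per element
  def perElement(): (List[List[Int]], Int) = {
    var setups = 0
    val out = partitions.map(_.map { x =>
      setups += 1 // setup repeated for every single element
      x * 2
    })
    (out, setups)
  }

  // mapPartitions style: the function receives a whole partition as an Iterator,
  // so the setup can happen once before the partition's elements are processed
  def perPartition(): (List[List[Int]], Int) = {
    var setups = 0
    val out = partitions.map { part =>
      setups += 1 // one setup for the whole partition
      part.iterator.map(_ * 2).toList
    }
    (out, setups)
  }

  def main(args: Array[String]): Unit = {
    println(perElement())   // same data, 4 setups
    println(perPartition()) // same data, 2 setups
  }
}
```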

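4.1.4 mapPartitionsWithIndex(): with the partition index

- Function signature

```scala
def mapPartitionsWithIndex[U: ClassTag](
    f: (Int, Iterator[T]) => Iterator[U], // the Int is the partition index
    preservesPartitioning: Boolean = false): RDD[U]
```

- Description: similar to mapPartitions, but f takes an extra Int parameter: the partition index

- Example: create an RDD, pair every element with the index of its partition as a tuple, and form a new RDD from the tuples

- Implementation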

object value03_mapPartitionsWithIndex {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(1 to 4, 2)

// 3.2 创建一个RDD,使每个元素跟所在分区号形成一个元组,组成一个新的RDD

val indexRdd = rdd.mapPartitionsWithIndex( (index,items)=>{items.map( (index,_) )} )

//扩展功能:第二个分区元素*2,其余分区不变

// 3.3 打印修改后的RDD中数据

indexRdd.collect().foreach(println)

//4.关闭连接

sc.stop()

}

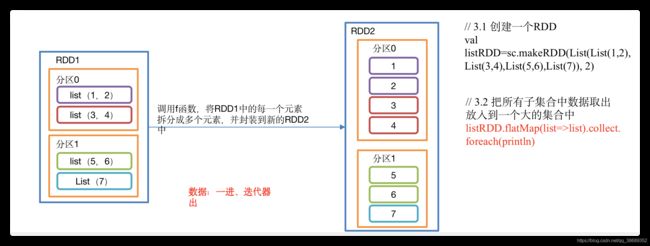

}4.1.5 flatmap()压平

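- Function signature

```scala
def flatMap[U: ClassTag](f: T => TraversableOnce[U]): RDD[U]
```

- Description: similar to map, each element of the RDD is converted by applying f, and the results are wrapped into a new RDD. The difference is that in flatMap, f returns a collection, and every element of that collection is extracted and placed into the new RDD individually.

- Example: create a collection whose elements are themselves sub-collections, and put the data of all the sub-collections into one large collection.

- Implementation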

object value04_flatMap {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val listRDD=sc.makeRDD(List(List(1,2),List(3,4),List(5,6),List(7)), 2)

// 3.2 把所有子集合中数据取出放入到一个大的集合中

listRDD.flatMap(list=>list).collect.foreach(println)

//4.关闭连接

sc.stop()

}

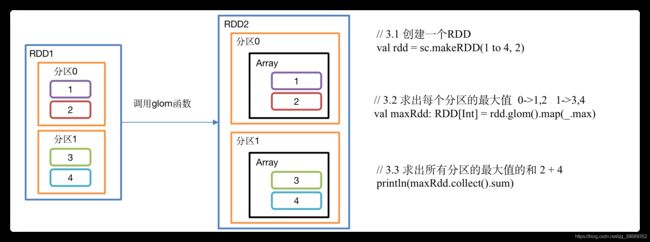

}4.1.6 glom()分区转换数组

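- Function signature

```scala
def glom(): RDD[Array[T]]
```

- Description: turns every partition of the RDD into an array and places the arrays in a new RDD; the array elements have the same type as the elements of the original partition

- Example: create an RDD with 2 partitions, put each partition's data into an array, and find the maximum of each partition

- Implementation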

object value05_glom {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd = sc.makeRDD(1 to 4, 2)

// 3.2 求出每个分区的最大值 0->1,2 1->3,4

val maxRdd: RDD[Int] = rdd.glom().map(_.max)

// 3.3 求出所有分区的最大值的和 2 + 4

println(maxRdd.collect().sum)

//4.关闭连接

sc.stop()

}

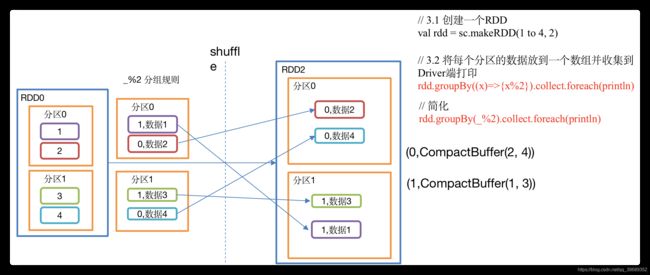

}4.1.7 groupBy()分组

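- Function signature

```scala
def groupBy[K](f: T => K)(implicit kt: ClassTag[K]): RDD[(K, Iterable[T])]
```

- Description: groups elements by the return value of the given function; the values that share the same key are put into one iterator.

- Example: create an RDD and group its elements by their value modulo 2.

- Implementation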

object value06_groupby {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd = sc.makeRDD(1 to 4, 2)

// 3.2 将每个分区的数据放到一个数组并收集到Driver端打印

rdd.groupBy(_ % 2).collect().foreach(println)

// 3.3 创建一个RDD

val rdd1: RDD[String] = sc.makeRDD(List("hello","hive","hadoop","spark","scala"))

// 3.4 按照首字母第一个单词相同分组

rdd1.groupBy(str=>str.substring(0,1)).collect().foreach(println)

sc.stop()

}

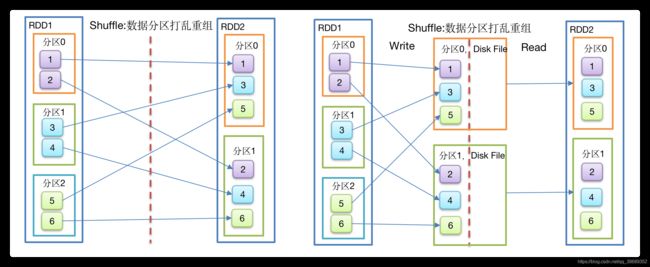

}- 注意:groupBy会存在shuffle过程。

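- Shuffle: the process of redistributing and regrouping data across partitions

- A shuffle always writes data to disk. You can run the program in local mode and observe this in the Spark web UI on port 4040, which is available while the application is running.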

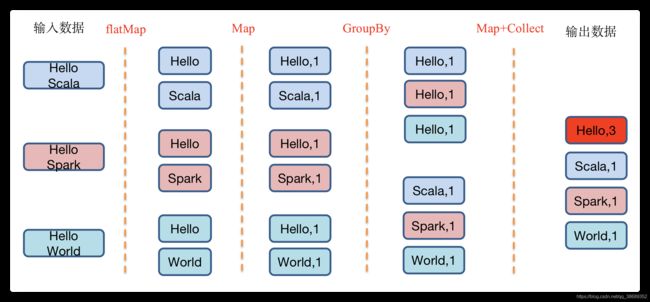
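A shuffle like the one `groupBy` triggers can be pictured as two steps:

1. Each input partition decides, record by record, which output partition each key belongs to.
2. Each output partition then groups the records it received.

The sketch below simulates both steps in memory with plain Scala; `GroupByShuffleSketch` and its `groupBy` are hypothetical names, not Spark APIs. It routes keys using the same non-negative modulo of `hashCode` that Spark's `HashPartitioner` uses (its source is shown in 4.3.1). The sketch leaves out what the notes above stress: real Spark writes the shuffled data to disk between the two steps.

```scala
object GroupByShuffleSketch {
  // Same arithmetic as the nonNegativeMod helper used by Spark's HashPartitioner
  def nonNegativeMod(x: Int, mod: Int): Int = {
    val rawMod = x % mod
    rawMod + (if (rawMod < 0) mod else 0)
  }

  // Simulated groupBy(f) over in-memory partitions
  def groupBy[T, K](partitions: List[List[T]], numOut: Int)(f: T => K): List[Map[K, List[T]]] = {
    // Step 1 ("map side"): compute each record's key and target partition
    val routed: List[(Int, K, T)] = for {
      part <- partitions
      record <- part
    } yield {
      val key = f(record)
      // HashPartitioner sends null keys to partition 0
      val target = if (key == null) 0 else nonNegativeMod(key.hashCode, numOut)
      (target, key, record)
    }
    // Step 2 ("reduce side"): each target partition groups what it received by key
    (0 until numOut).toList.map { p =>
      routed.filter(_._1 == p)
        .groupBy(_._2)
        .map { case (k, rs) => k -> rs.map(_._3) }
    }
  }

  def main(args: Array[String]): Unit = {
    // Same data as makeRDD(1 to 4, 2).groupBy(_ % 2), with 2 output partitions
    groupBy(List(List(1, 2), List(3, 4)), 2)(_ % 2).zipWithIndex.foreach {
      case (groups, p) => println(s"partition $p: $groups")
    }
  }
}
```

Records with the same key end up in the same output partition even though they started in different input partitions. This cross-partition movement is what makes the step a shuffle.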

4.1.8 WordCount with groupBy

- Implementation

object value06_groupby {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val strList: List[String] = List("Hello Scala", "Hello Spark", "Hello World")

val rdd = sc.makeRDD(strList)

// 3.2 将字符串拆分成一个一个的单词

val wordRdd: RDD[String] = rdd.flatMap(str=>str.split(" "))

// 3.3 将单词结果进行转换:word=>(word,1)

val wordToOneRdd: RDD[(String, Int)] = wordRdd.map(word=>(word, 1))

// 3.4 将转换结构后的数据分组

val groupRdd: RDD[(String, Iterable[(String, Int)])] = wordToOneRdd.groupBy(t=>t._1)

// 3.5 将分组后的数据进行结构的转换

val wordToSum: RDD[(String, Int)] = groupRdd.map {

case (word, list) => {

(word, list.size)

}

}

wordToSum.collect().foreach(println)

sc.stop()

}

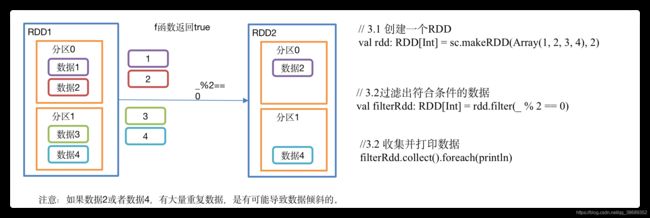

}4.1.9 filter()过滤

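- Function signature

```scala
def filter(f: T => Boolean): RDD[T]
```

- Description: takes a function that returns a Boolean. When filter is called on an RDD, f is applied to every element, and an element is added to the new RDD if f returns true.

- Example: create an RDD of integers and filter it into a new RDD that contains only the even numbers

- Implementation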

object value07_filter {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3.创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4),2)

//3.1 过滤出符合条件的数据

val filterRdd: RDD[Int] = rdd.filter(_ % 2 == 0)

//3.2 收集并打印数据

filterRdd.collect().foreach(println)

//4 关闭连接

sc.stop()

}

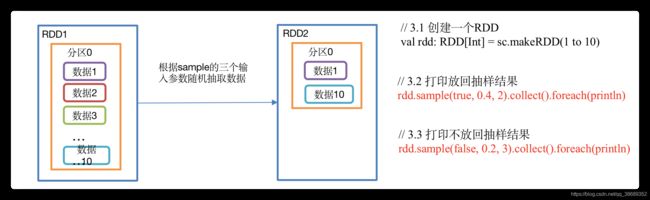

}4.1.10 sample()采样

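- Function signature

```scala
def sample(
    withReplacement: Boolean, // whether sampled elements are put back: true = with replacement, false = without
    fraction: Double,         // withReplacement = false: probability of selecting each element, must be in [0, 1];
                              // withReplacement = true: expected number of times each element is selected, must be >= 0
    seed: Long = Utils.random.nextLong): RDD[T] // seed for the random number generator
```

- Description: draws a sample from a large dataset

- Example: create an RDD of 1-10 and sample it both with and without replacement

- Implementation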

object value08_sample {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(1 to 10)

// 3.2 打印放回抽样结果

rdd.sample(true, 0.4, 2).collect().foreach(println)

// 3.3 打印不放回抽样结果

rdd.sample(false, 0.2, 3).collect().foreach(println)

//4.关闭连接

sc.stop()

}

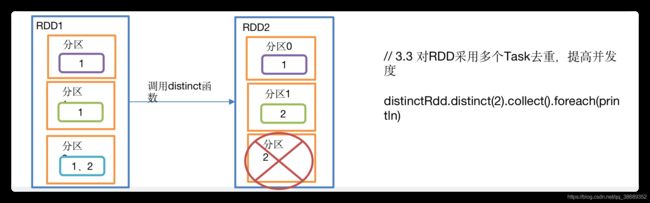

}4.1.11 distinct()去重

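- Function signatures

```scala
def distinct(): RDD[T] // by default, the result has the same number of partitions as the original RDD
def distinct(numPartitions: Int)(implicit ord: Ordering[T] = null): RDD[T] // sets the number of partitions after de-duplication
```

- Description: removes duplicate elements and puts the de-duplicated elements into a new RDD.

- Example

- Implementation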

object value09_distinct {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val distinctRdd: RDD[Int] = sc.makeRDD(List(1,2,1,5,2,9,6,1))

// 3.2 打印去重后生成的新RDD

distinctRdd.distinct().collect().foreach(println)

// 3.3 对RDD采用多个Task去重,提高并发度

distinctRdd.distinct(2).collect().foreach(println)

//4.关闭连接

sc.stop()

}

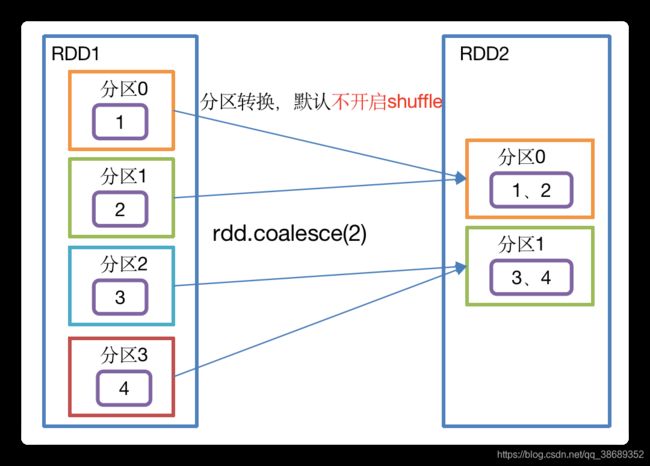

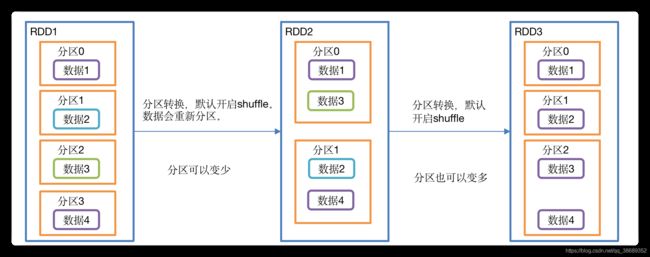

}4.1.12 coalesce()重新分区

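- coalesce can be configured either to perform a shuffle or not to.

- Function signature

```scala
def coalesce(numPartitions: Int, shuffle: Boolean = false, // shuffle = false: converts an RDD with more partitions into one with fewer
             partitionCoalescer: Option[PartitionCoalescer] = Option.empty)
            (implicit ord: Ordering[T] = null): RDD[T]
```

- Description: reduces the number of partitions. This is useful after filtering a large dataset, so that the resulting small dataset is processed more efficiently.

- Example: merge 4 partitions into 2 (the active code below merges 3 partitions into 2)

- Implementation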

object value10_coalesce {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3.创建一个RDD

//val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4), 4)

//3.1 缩减分区

//val coalesceRdd: RDD[Int] = rdd.coalesce(2)

//4. 创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4, 5, 6), 3)

//4.1 缩减分区

val coalesceRdd: RDD[Int] = rdd.coalesce(2)

//5 打印查看对应分区数据

val indexRdd: RDD[Int] = coalesceRdd.mapPartitionsWithIndex(

(index, datas) => {

// 打印每个分区数据,并带分区号

datas.foreach(data => {

println(index + "=>" + data)

})

// 返回分区的数据

datas

}

)

indexRdd.collect()

//6. 关闭连接

sc.stop()

}

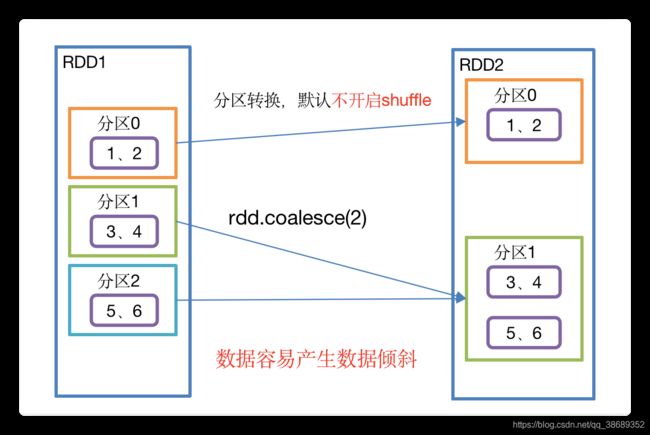

}- 示例:3个分区合并为2个分区

- 具体实现

```scala
// 3. Create an RDD
val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4, 5, 6), 3)
// 3.1 Reduce it to 2 partitions with a shuffle
val coalesceRdd: RDD[Int] = rdd.coalesce(2, true)
```

- Shuffle principle

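4.1.13 repartition(): repartitioning (always shuffles)

- Function signature

```scala
def repartition(numPartitions: Int)(implicit ord: Ordering[T] = null): RDD[T]
```

- Description: internally, this operation simply calls coalesce with shuffle set to true. repartition can convert an RDD with many partitions into one with fewer, or one with few partitions into one with more, because it always goes through a shuffle.

- Example: create an RDD with 3 partitions and repartition it into 2

- Implementation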

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object value11_repartition {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // 3. Create an RDD
    val rdd: RDD[Int] = sc.makeRDD(Array(1, 2, 3, 4, 5, 6), 3)
    // 3.1 Reduce the partitions with coalesce plus a shuffle
    //val coalesceRdd: RDD[Int] = rdd.coalesce(2, true)
    // 3.2 Repartition
    val repartitionRdd: RDD[Int] = rdd.repartition(2)
    // 4. Print every element together with its partition index
    val indexRdd: RDD[Int] = repartitionRdd.mapPartitionsWithIndex(
      (index, datas) => {
        // map prints each element and still returns it, so the iterator is not exhausted
        datas.map(data => {
          println(index + "=>" + data)
          data
        })
      }
    )
    indexRdd.collect()
    // 5. Close the connection
    sc.stop()
  }
}
```

4.1.14 Differences between coalesce and repartition

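- coalesce repartitions and lets you choose whether to shuffle, controlled by the parameter shuffle: Boolean = false/true.

- repartition actually calls coalesce with a shuffle. The source code:

```scala
def repartition(numPartitions: Int)(implicit ord: Ordering[T] = null): RDD[T] = withScope {
  coalesce(numPartitions, shuffle = true)
}
```

- coalesce is generally used to reduce the number of partitions. Increasing the number of partitions without a shuffle has no effect (the partition count stays the same), so use repartition, which shuffles, to increase it.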

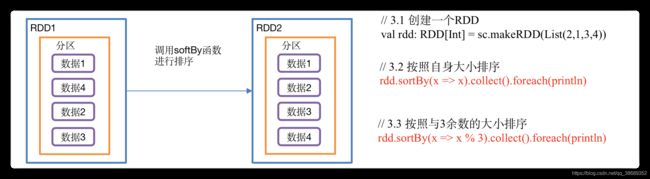

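4.1.15 sortBy

- Function signature

```scala
def sortBy[K](
    f: (T) => K,
    ascending: Boolean = true, // ascending order by default
    numPartitions: Int = this.partitions.length)
    (implicit ord: Ordering[K], ctag: ClassTag[K]): RDD[T]
```

- Description: sorts the data. Before sorting, each element can be processed by f; the data is then sorted by the result of f, in ascending order by default. By default, the new RDD has the same number of partitions as the original RDD.

- Example: create an RDD and sort it by different rules

- Implementation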

object value12_sortBy {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

// 3.1 创建一个RDD

val rdd: RDD[Int] = sc.makeRDD(List(2, 1, 3, 4, 6, 5))

// 3.2 默认是升序排

val sortRdd: RDD[Int] = rdd.sortBy(num => num)

sortRdd.collect().foreach(println)

// 3.3 配置为倒序排

val sortRdd2: RDD[Int] = rdd.sortBy(num => num, false)

sortRdd2.collect().foreach(println)

// 3.4 创建一个RDD

val strRdd: RDD[String] = sc.makeRDD(List("1", "22", "12", "2", "3"))

// 3.5 按照字符的int值排序

strRdd.sortBy(num => num.toInt).collect().foreach(println)

// 3.5 创建一个RDD

val rdd3: RDD[(Int, Int)] = sc.makeRDD(List((2, 1), (1, 2), (1, 1), (2, 2)))

// 3.6 先按照tuple的第一个值排序,相等再按照第2个值排

rdd3.sortBy(t=>t).collect().foreach(println)

//4.关闭连接

sc.stop()

}

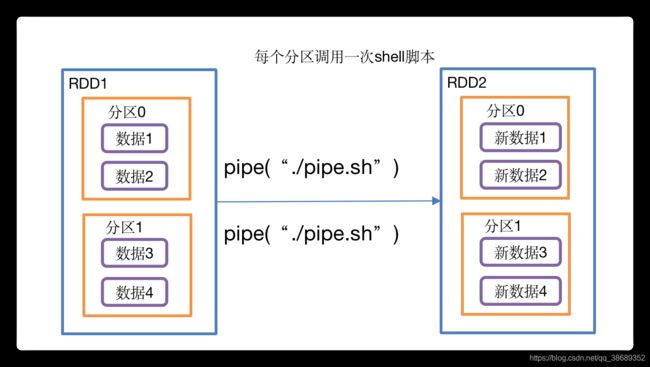

}4.1.16 pipe()调用脚本

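- Function signature

```scala
def pipe(command: String): RDD[String]
```

- Description: a pipe. The shell script is called once for each partition: the partition's elements are written to the script's standard input, and the lines the script prints to standard output form the returned RDD.

- Note: put the script in a location that the Worker nodes can access

- Example: write a script and apply it to an RDD through a pipe.

- Write a script named pipe.sh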

```shell
#!/bin/sh
echo "Start"
while read LINE; do
   echo ">>>"${LINE}
done
```

- Create an RDD with only one partition

```scala
scala> val rdd = sc.makeRDD(List("hi","Hello","how","are","you"),1)
```

- Apply the script to the RDD and print the result

```scala
scala> rdd.pipe("/opt/module/spark/pipe.sh").collect()
res18: Array[String] = Array(Start, >>>hi, >>>Hello, >>>how, >>>are, >>>you)
```

- Create an RDD with two partitions

```scala
scala> val rdd = sc.makeRDD(List("hi","Hello","how","are","you"),2)
```

- Apply the script to the RDD and print the result

```scala
scala> rdd.pipe("/opt/module/spark/pipe.sh").collect()
res19: Array[String] = Array(Start, >>>hi, >>>Hello, Start, >>>how, >>>are, >>>you)
```

4.2 Double-Value type interaction

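4.2.1 union(): union

- Function signature

```scala
def union(other: RDD[T]): RDD[T]
```

- Description: returns a new RDD containing the union of the source RDD and the argument RDD (duplicates are kept)

- Example: create two RDDs and compute their union

- Implementation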

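```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object DoubleValue01_union {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // 3. Business logic
    // 3.1 Create the first RDD
    val rdd1: RDD[Int] = sc.makeRDD(1 to 4)
    // 3.2 Create the second RDD
    val rdd2: RDD[Int] = sc.makeRDD(4 to 8)
    // 3.3 Compute the union of the two RDDs
    rdd1.union(rdd2).collect().foreach(println)
    // 4. Close the connection
    sc.stop()
  }
}
```

4.2.2 subtract(): difference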

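- Function signature

```scala
def subtract(other: RDD[T]): RDD[T]
```

- Description: computes a difference: removes the elements of the source RDD that also appear in the other RDD and keeps the rest

- Example: create two RDDs and compute the difference of the first RDD minus the second

- Implementation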

object DoubleValue02_subtract {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd: RDD[Int] = sc.makeRDD(1 to 4)

//3.2 创建第二个RDD

val rdd1: RDD[Int] = sc.makeRDD(4 to 8)

//3.3 计算第一个RDD与第二个RDD的差集并打印

rdd.subtract(rdd1).collect().foreach(println)

//4.关闭连接

sc.stop()

}

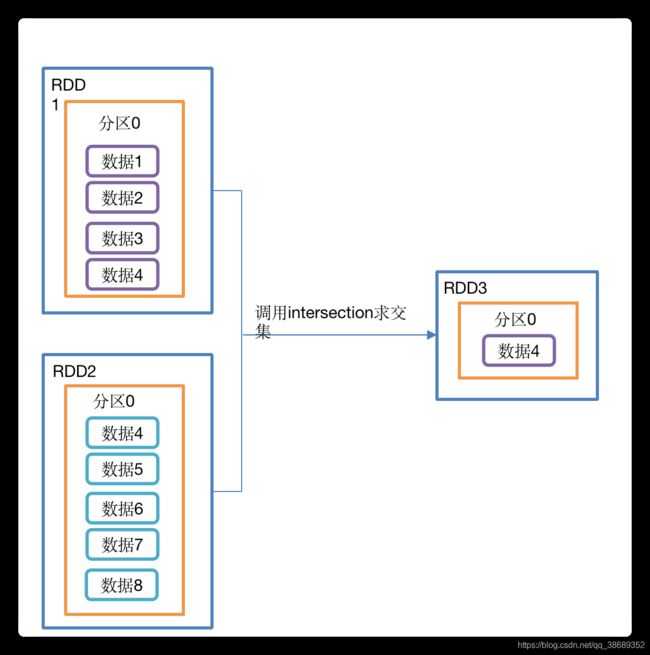

}4.2.3 intersection()交集

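- Function signature

```scala
def intersection(other: RDD[T]): RDD[T]
```

- Description: returns a new RDD containing the intersection of the source RDD and the argument RDD (the result contains no duplicates)

- Example: create two RDDs and compute their intersection

- Implementation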

object DoubleValue03_intersection {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd1: RDD[Int] = sc.makeRDD(1 to 4)

//3.2 创建第二个RDD

val rdd2: RDD[Int] = sc.makeRDD(4 to 8)

//3.3 计算第一个RDD与第二个RDD的差集并打印

rdd1.intersection(rdd2).collect().foreach(println)

//4.关闭连接

sc.stop()

}

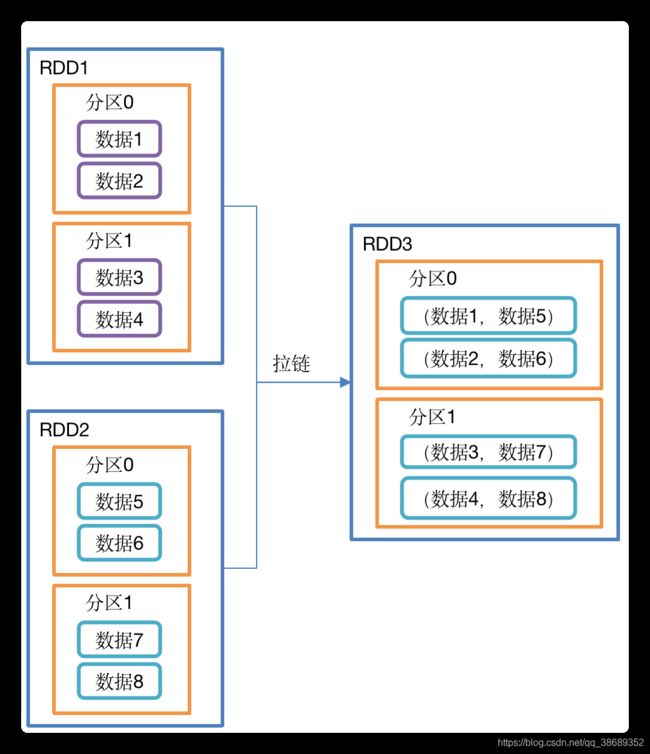

}4.2.4 zip()拉链

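- Function signature

```scala
def zip[U: ClassTag](other: RDD[U]): RDD[(T, U)]
```

- Description: combines the elements of two RDDs into key-value pairs. The key is an element of the first RDD and the value is the corresponding element of the second RDD. The two RDDs must have the same number of partitions and the same number of elements in each partition; otherwise an exception is thrown.

- Example: create two RDDs and combine them into a (k, v) RDD

- Implementation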

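```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object DoubleValue04_zip {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // 3. Business logic
    // 3.1 Create the first RDD
    val rdd1: RDD[Int] = sc.makeRDD(Array(1, 2, 3), 3)
    // 3.2 Create the second RDD
    val rdd2: RDD[String] = sc.makeRDD(Array("a", "b", "c"), 3)
    // 3.3 Zip the first RDD with the second and print
    rdd1.zip(rdd2).collect().foreach(println)
    // 3.4 Zip the second RDD with the first and print
    rdd2.zip(rdd1).collect().foreach(println)
    // 3.5 Create a third RDD (same number of partitions as rdd1, but fewer elements)
    val rdd3: RDD[String] = sc.makeRDD(Array("a", "b"), 3)
    // 3.6 The per-partition element counts differ, so zip fails (uncomment to see):
    // Can only zip RDDs with same number of elements in each partition
    // rdd1.zip(rdd3).collect().foreach(println)
    // 3.7 Create a fourth RDD (different number of partitions from rdd1 and rdd2)
    val rdd4: RDD[String] = sc.makeRDD(Array("a", "b", "c"), 2)
    // 3.8 The partition counts differ, so zip fails (uncomment to see):
    // Can't zip RDDs with unequal numbers of partitions: List(3, 2)
    // rdd1.zip(rdd4).collect().foreach(println)
    // 4. Close the connection
    sc.stop()
  }
}
```

4.3 Key-Value type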

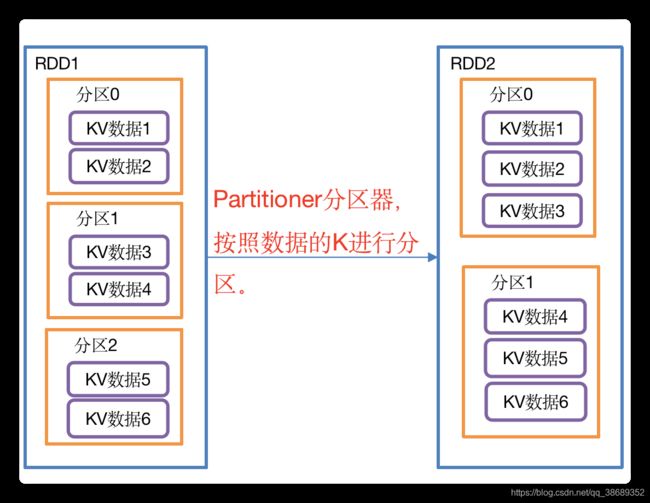

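4.3.1 partitionBy(): repartitioning by key

- Function signature

```scala
def partitionBy(partitioner: Partitioner): RDD[(K, V)]
```

- Description: repartitions an RDD[K, V] by key K using the given Partitioner. If the RDD's existing partitioner equals the given one, no repartitioning happens; otherwise a shuffle occurs.

- Example: create an RDD with 3 partitions and repartition it

- Implementation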

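```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object KeyValue01_partitionBy {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // 3. Business logic
    // 3.1 Create the first RDD
    val rdd: RDD[(Int, String)] = sc.makeRDD(Array((1, "aaa"), (2, "bbb"), (3, "ccc")), 3)
    // 3.2 Repartition the RDD into 2 partitions with a HashPartitioner
    val rdd2: RDD[(Int, String)] = rdd.partitionBy(new org.apache.spark.HashPartitioner(2))
    // 3.3 Print the number of partitions of the new RDD
    println(rdd2.partitions.size)
    // 4. Close the connection
    sc.stop()
  }
}
```

- HashPartitioner source code walkthrough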

```scala
class HashPartitioner(partitions: Int) extends Partitioner {
  require(partitions >= 0, s"Number of partitions ($partitions) cannot be negative.")

  def numPartitions: Int = partitions

  def getPartition(key: Any): Int = key match {
    case null => 0
    case _ => Utils.nonNegativeMod(key.hashCode, numPartitions)
  }

  override def equals(other: Any): Boolean = other match {
    case h: HashPartitioner =>
      h.numPartitions == numPartitions
    case _ =>
      false
  }

  override def hashCode: Int = numPartitions
}
```

- Custom partitioner

package com.spark.day04

import org.apache.spark.rdd.RDD

import org.apache.spark.{HashPartitioner, Partitioner, SparkConf, SparkContext}

/*

* 转换算子-parittionBy

* 对KV类型的RDD按key进行重新分区

*

* */

object Spark01_Transformation_partitionBy {

def main(args: Array[String]): Unit = {

// 创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 创建SparkContext对象,该对象是提交Spark App的入口

val sc = new SparkContext(conf)

// 创建RDD

// 注意:RDD本身是没有partitionBy这个算子的,通过隐式转换动态给kv类型的RDD扩展的功能

// val rdd: RDD[Int] = sc.makeRDD(List(1, 2, 3, 4))

val rdd: RDD[(Int, String)] = sc.makeRDD(List((1, "aaa"), (2, "bbb"), (3, "ccc")), 3)

rdd.mapPartitionsWithIndex(

(index, datas) => {

println(index + "--->" + datas.mkString(","))

datas

}

).collect()

// rdd.partitionBy(new HashPartitioner(2)).mapPartitionsWithIndex(

// (index, datas) => {

// println(index + "---------->" + datas.mkString(","))

// datas

// }

// ).collect()

rdd.partitionBy(new MyPartiioner(2)).mapPartitionsWithIndex(

(index, datas) => {

println(index + "---------->" + datas.mkString(","))

datas

}

).collect()

// 关闭连接

sc.stop()

}

}

// 自定义分区器

class MyPartiioner(partitions: Int) extends Partitioner {

// 获取分区的个数

override def numPartitions: Int = partitions

// 指定分区规则,返回值Int表示分区编号,从0开始

override def getPartition(key: Any): Int = {

// 8

// val key: String = key.asInstanceOf[String]

// if (key.startsWith("135")) {

// 0

// } else {

// 1

// }

1

}

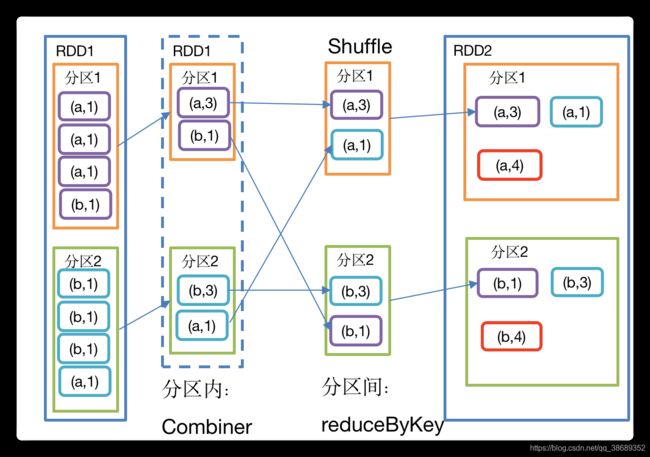

}4.3.2 reduceByKey()按照Key聚合Value

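- Function signatures

```scala
def reduceByKey(func: (V, V) => V): RDD[(K, V)]
def reduceByKey(func: (V, V) => V, numPartitions: Int): RDD[(K, V)]
```

- Description: aggregates the values V of an RDD[K, V] that share the same key K. There are several overloads, one of which also sets the number of partitions of the new RDD.

- Example: count how many times each word occurs

- Implementation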

object KeyValue02_reduceByKey {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd = sc.makeRDD(List(("a",1),("b",5),("a",5),("b",2)))

//3.2 计算相同key对应值的相加结果

val reduce: RDD[(String, Int)] = rdd.reduceByKey((x,y) => x+y)

//3.3 打印结果

reduce.collect().foreach(println)

//4.关闭连接

sc.stop()

}

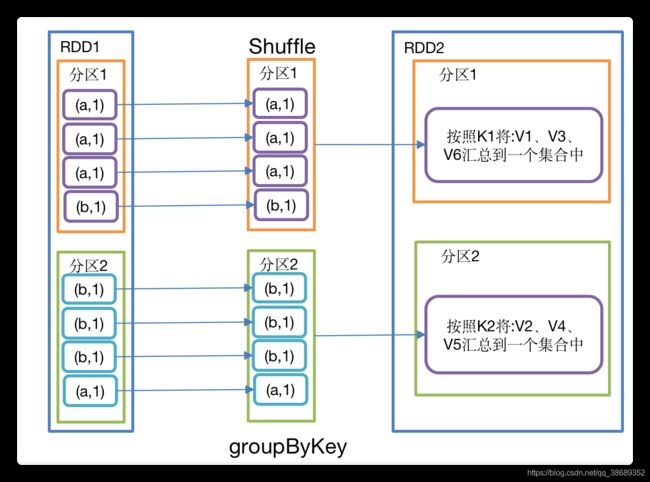

}4.3.3 groupBykey()按照Key重新分组

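- Function signature

```scala
def groupByKey(): RDD[(K, Iterable[V])]
```

- Description: groupByKey operates on each key, but it only collects the values of each key into one sequence and does not aggregate them. Overloads let you specify a partitioner or a number of partitions (HashPartitioner is used by default).

- Example: create a pair RDD, gather the values of each key into one sequence, and compute the sum of the values for each key

- Implementation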

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object KeyValue03_groupByKey {
  def main(args: Array[String]): Unit = {
    // 1. Create a SparkConf and set the app name
    val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")
    // 2. Create the SparkContext, the entry point for submitting a Spark app
    val sc: SparkContext = new SparkContext(conf)
    // 3. Business logic
    // 3.1 Create the first RDD
    val rdd = sc.makeRDD(List(("a", 1), ("b", 5), ("a", 5), ("b", 2)))
    // 3.2 Gather the values of each key into one sequence
    val group: RDD[(String, Iterable[Int])] = rdd.groupByKey()
    // 3.3 Print the result
    group.collect().foreach(println)
    // 3.4 Add up the values of each key
    group.map(t => (t._1, t._2.sum)).collect().foreach(println)
    // 4. Close the connection
    sc.stop()
  }
}
```

4.3.4 Differences between reduceByKey and groupByKey

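- reduceByKey: aggregates by key and performs a combine (pre-aggregation) before the shuffle; the result is an RDD[(K, V)].

- groupByKey: groups by key and shuffles the data directly, without pre-aggregation.

- Development guidance: prefer reduceByKey whenever pre-aggregation does not change the business logic. Summation is unaffected by pre-aggregation; averaging is affected, because an average of partial averages is generally not the overall average.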

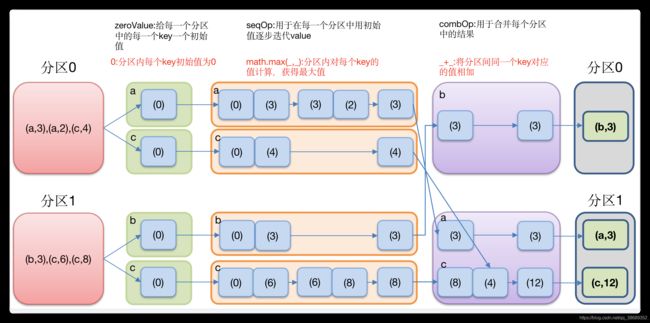

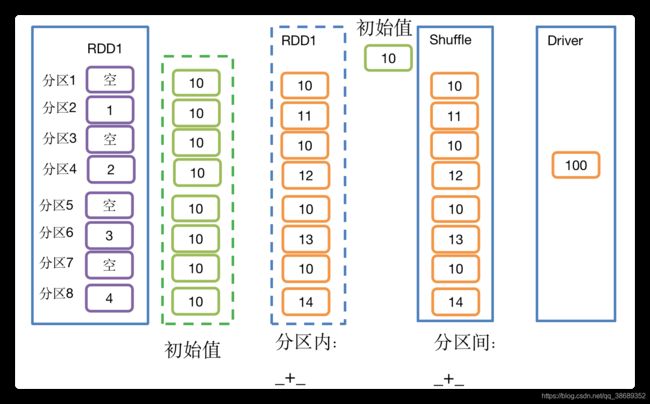
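The benefit of map-side combine can be counted with a small in-memory model. The sketch below is plain Scala with hypothetical names, not Spark code. It uses the word-count data from 4.3.6, split the way `makeRDD(list, 2)` would split 8 elements. Shuffling every record (groupByKey style) sends 8 records. Combining inside each partition first (reduceByKey style) sends 4 records, and the final counts are the same. The last two functions show why averaging is different: averaging per-partition averages does not give the overall average.

```scala
object CombineBeforeShuffle {
  type Partition = List[(String, Int)]

  // groupByKey style: every record crosses the shuffle unchanged
  def shuffledWithoutCombine(parts: List[Partition]): List[(String, Int)] = parts.flatten

  // reduceByKey style: each partition first reduces the values of each key locally,
  // so at most one record per key per partition crosses the shuffle
  def shuffledWithCombine(parts: List[Partition])(f: (Int, Int) => Int): List[(String, Int)] =
    parts.flatMap(_.groupBy(_._1).map { case (k, kvs) => (k, kvs.map(_._2).reduce(f)) })

  // Reduce side: merge whatever arrived for each key
  def reduceSide(records: List[(String, Int)])(f: (Int, Int) => Int): Map[String, Int] =
    records.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2).reduce(f) }

  // Averaging per-partition averages (what naive pre-aggregation would do)
  def averageOfAverages(parts: List[List[Double]]): Double = {
    val partials = parts.map(p => p.sum / p.size)
    partials.sum / partials.size
  }

  // The correct overall average
  def trueAverage(parts: List[List[Double]]): Double = {
    val all = parts.flatten
    all.sum / all.size
  }

  def main(args: Array[String]): Unit = {
    val parts = List(
      List(("a", 1), ("a", 1), ("a", 1), ("b", 1)),
      List(("b", 1), ("b", 1), ("b", 1), ("a", 1)))
    println(s"records shuffled without combine: ${shuffledWithoutCombine(parts).size}")
    println(s"records shuffled with combine: ${shuffledWithCombine(parts)(_ + _).size}")
    println(reduceSide(shuffledWithCombine(parts)(_ + _))(_ + _))
    val values = List(List(1.0, 2.0, 3.0), List(10.0))
    println(s"average of averages: ${averageOfAverages(values)}, true average: ${trueAverage(values)}")
  }
}
```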

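4.3.5 aggregateByKey(): separate logic within and across partitions, by key

- Function signature

- zeroValue (initial value): gives each key in each partition an initial value

- seqOp (within a partition): folds the values of each key in a partition into the accumulator, starting from the initial value

- combOp (across partitions): merges the results of the partitions.

```scala
def aggregateByKey[U: ClassTag](zeroValue: U)(seqOp: (U, V) => U, combOp: (U, U) => U): RDD[(K, U)]
```

- Description: processes the data by key, with one function within partitions and another across partitions.

- Example: take the maximum value of each key within each partition, then add those maxima together

- Implementation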

object KeyValue04_aggregateByKey {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd: RDD[(String, Int)] = sc.makeRDD(List(("a", 3), ("a", 2), ("c", 4), ("b", 3), ("c", 6), ("c", 8)), 2)

//3.2 取出每个分区相同key对应值的最大值,然后相加

rdd.aggregateByKey(0)(math.max(_, _), _ + _).collect().foreach(println)

//4.关闭连接

sc.stop()

}

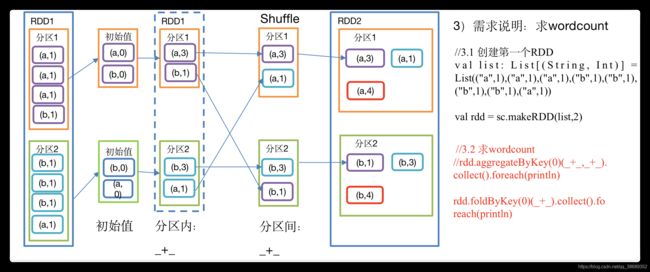

}4.3.6 foldByKey()分区内和分区间相同的aggregateByKey()

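- Function signature

- zeroValue: an initial value, of the same type V as the values

- func: a function whose two input parameters have the same type

```scala
def foldByKey(zeroValue: V)(func: (V, V) => V): RDD[(K, V)]
```

- Description: a simplified aggregateByKey in which seqOp and combOp are the same; that is, the logic within partitions is the same as the logic across partitions.

- Example: word count

- Implementation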

bject KeyValue05_foldByKey {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val list: List[(String, Int)] = List(("a",1),("a",1),("a",1),("b",1),("b",1),("b",1),("b",1),("a",1))

val rdd = sc.makeRDD(list,2)

//3.2 求wordcount

//rdd.aggregateByKey(0)(_+_,_+_).collect().foreach(println)

rdd.foldByKey(0)(_+_).collect().foreach(println)

//4.关闭连接

sc.stop()

}

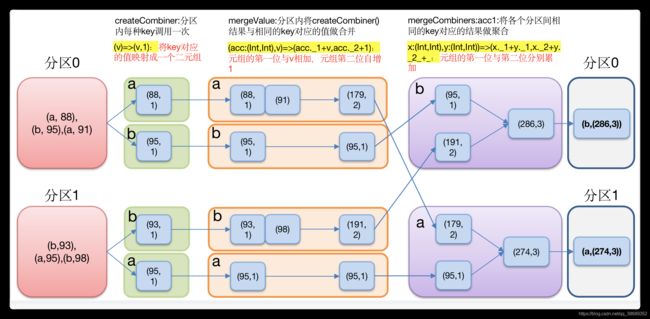

}4.3.7 combineByKey()转换结构后分区和分区间操作

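- Function signature

- createCombiner: V => C: creates the combiner. Put simply, it initializes the data as it is read in: it is called on the first value of a key in each partition, takes that value as its argument, and can transform it into the format we want.

- mergeValue: (C, V) => C: the merge function within a partition, applied inside each partition. It merges a value V into the existing combiner C. Note that C is the format produced by createCombiner, while V is the original, untransformed value format.

- mergeCombiners: (C, C) => C: merges across partitions by combining two C values into one C; for example, two C values of type Int are added to produce a single Int.

```scala
def combineByKey[C](
    createCombiner: V => C,
    mergeValue: (C, V) => C,
    mergeCombiners: (C, C) => C): RDD[(K, C)]
```

- Description: for each key K, combines its values V into a single combined value of type C

- Example: for a pair RDD, compute the average of each key (sum of its values / number of occurrences)

- Implementation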

object KeyValue06_combineByKey {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3.1 创建第一个RDD

val list: List[(String, Int)] = List(("a", 88), ("b", 95), ("a", 91), ("b", 93), ("a", 95), ("b", 98))

val input: RDD[(String, Int)] = sc.makeRDD(list, 2)

//3.2 将相同key对应的值相加,同时记录该key出现的次数,放入一个二元组

val combineRdd: RDD[(String, (Int, Int))] = input.combineByKey(

(_, 1),

(acc: (Int, Int), v) => (acc._1 + v, acc._2 + 1),

(acc1: (Int, Int), acc2: (Int, Int)) => (acc1._1 + acc2._1, acc1._2 + acc2._2)

)

//3.3 打印合并后的结果

combineRdd.collect().foreach(println)

//3.4 计算平均值

combineRdd.map {

case (key, value) => {

(key, value._1 / value._2.toDouble)

}

}.collect().foreach(println)

//4.关闭连接

sc.stop()

}

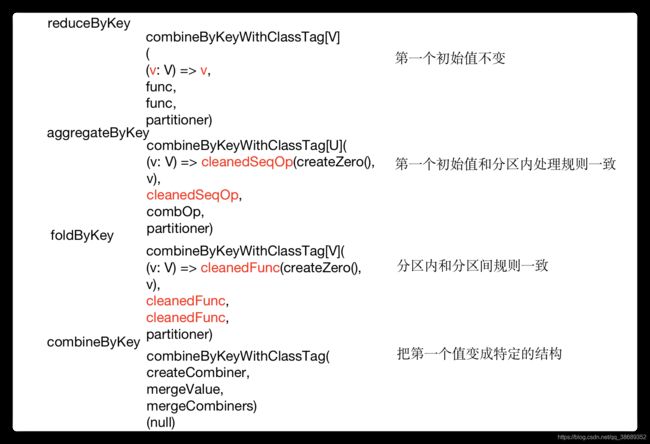

}4.3.8 reduceByKey、aggregateByKey、foldByKey、combineByKey

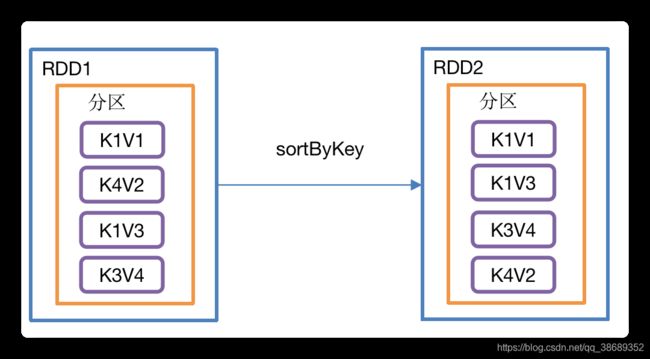
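The comparison can also be read off a small local model. Below, `combineByKey` is re-implemented over in-memory partitions in plain Scala. `aggregateByKey`, `foldByKey` and `reduceByKey` are then expressed through it, which mirrors in simplified form how Spark's `PairRDDFunctions` builds all of them on a common combine step. All names belong to a hypothetical `CombineByKeyFamily` object, not to Spark. The partition contents assume the 2-partition layout that `makeRDD` produces for the lists used in 4.3.5 to 4.3.7.

```scala
object CombineByKeyFamily {
  type Parts[K, V] = List[List[(K, V)]]

  // Inside each partition, the first value of a key goes through createCombiner and
  // later values through mergeValue; per-partition combiners are merged with mergeCombiners
  def combineByKey[K, V, C](parts: Parts[K, V])(
      createCombiner: V => C,
      mergeValue: (C, V) => C,
      mergeCombiners: (C, C) => C): Map[K, C] = {
    val perPartition: List[Map[K, C]] = parts.map { part =>
      part.foldLeft(Map.empty[K, C]) { case (acc, (k, v)) =>
        acc.get(k) match {
          case Some(c) => acc.updated(k, mergeValue(c, v))
          case None    => acc.updated(k, createCombiner(v))
        }
      }
    }
    perPartition.flatten.groupBy(_._1).map { case (k, kcs) => k -> kcs.map(_._2).reduce(mergeCombiners) }
  }

  // aggregateByKey: the zero value seeds each key once per partition
  def aggregateByKey[K, V, U](parts: Parts[K, V])(zero: U)(seqOp: (U, V) => U, combOp: (U, U) => U): Map[K, U] =
    combineByKey(parts)((v: V) => seqOp(zero, v), seqOp, combOp)

  // foldByKey: aggregateByKey with one function for both levels
  def foldByKey[K, V](parts: Parts[K, V])(zero: V)(f: (V, V) => V): Map[K, V] =
    aggregateByKey(parts)(zero)(f, f)

  // reduceByKey: no zero value; the first value of a key is its initial combiner
  def reduceByKey[K, V](parts: Parts[K, V])(f: (V, V) => V): Map[K, V] =
    combineByKey(parts)((v: V) => v, f, f)

  def main(args: Array[String]): Unit = {
    // Layout of the 4.3.7 scores under makeRDD(list, 2)
    val scores: Parts[String, Int] = List(
      List(("a", 88), ("b", 95), ("a", 91)),
      List(("b", 93), ("a", 95), ("b", 98)))
    val sumCount = combineByKey(scores)(
      (v: Int) => (v, 1),
      (acc: (Int, Int), v: Int) => (acc._1 + v, acc._2 + 1),
      (x: (Int, Int), y: (Int, Int)) => (x._1 + y._1, x._2 + y._2))
    sumCount.foreach { case (k, (sum, count)) => println(s"$k -> ${sum.toDouble / count}") }
  }
}
```

Each of the four operators reduces to the same three roles: how a key's first value in a partition is initialized, how further values in that partition are merged, and how partition results are merged. They differ only in which of these you specify.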

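4.3.9 sortByKey(): sorting by key

- Function signature

```scala
def sortByKey(
    ascending: Boolean = true, // ascending by default
    numPartitions: Int = self.partitions.length): RDD[(K, V)]
```

- Description: called on an RDD of (K, V) pairs, where K must be sortable (an implicit Ordering[K] must be available, for example because K implements Ordered); returns an RDD of (K, V) pairs sorted by key

- Example: create a pair RDD and sort it by key in ascending and descending order

- Implementation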

object KeyValue07_sortByKey {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd: RDD[(Int, String)] = sc.makeRDD(Array((3,"aa"),(6,"cc"),(2,"bb"),(1,"dd")))

//3.2 按照key的正序(默认顺序)

rdd.sortByKey(true).collect().foreach(println)

//3.3 按照key的倒序

rdd.sortByKey(false).collect().foreach(println)

//4.关闭连接

sc.stop()

}

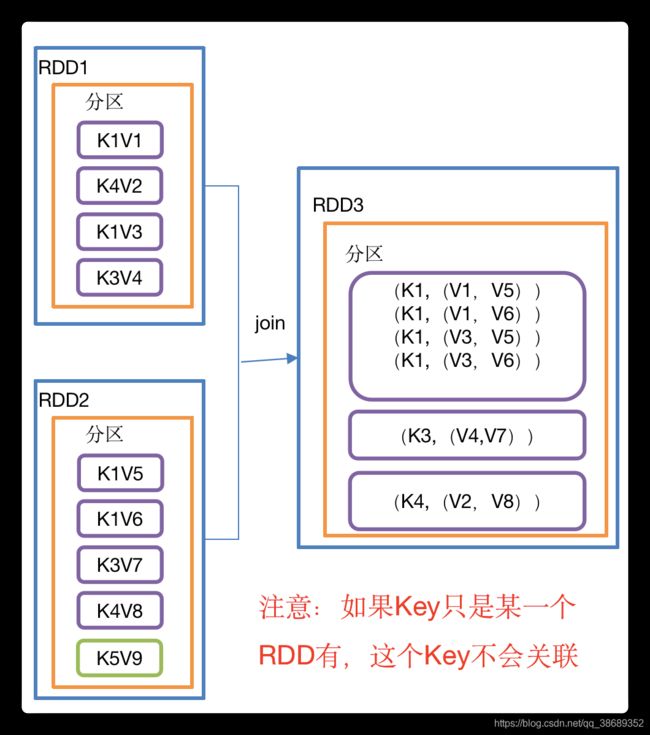

}4.3.10 join()连接将相同key对应的多个value关联在一起

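- Function signatures

```scala
def join[W](other: RDD[(K, W)]): RDD[(K, (V, W))]
def join[W](other: RDD[(K, W)], numPartitions: Int): RDD[(K, (V, W))]
```

- Description: called on RDDs of type (K, V) and (K, W); returns an RDD of (K, (V, W)) containing every pair of elements that share the same key. Keys present in only one of the RDDs are dropped (inner join).

- Example: create two pair RDDs and combine the data with the same key into a tuple.

- Implementation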

object KeyValue09_join {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd: RDD[(Int, String)] = sc.makeRDD(Array((1, "a"), (2, "b"), (3, "c")))

//3.2 创建第二个pairRDD

val rdd1: RDD[(Int, Int)] = sc.makeRDD(Array((1, 4), (2, 5), (4, 6)))

//3.3 join操作并打印结果

rdd.join(rdd1).collect().foreach(println)

//4.关闭连接

sc.stop()

}

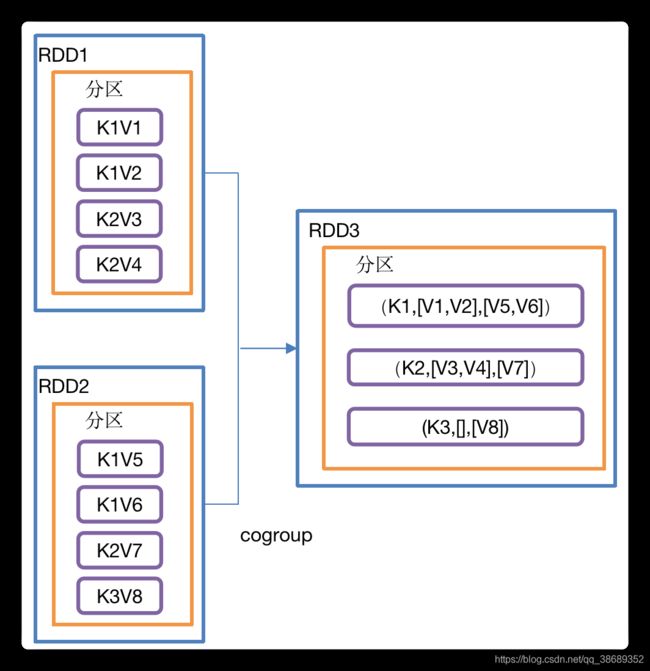

}4.3.11 cogroup()类似全连接,但是在同一个RDD中对key聚合

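- Function signature

```scala
def cogroup[W](other: RDD[(K, W)]): RDD[(K, (Iterable[V], Iterable[W]))]
```

- Description: called on RDDs of type (K, V) and (K, W); returns an RDD of type (K, (Iterable[V], Iterable[W])).

- Example: create two pair RDDs and gather the data with the same key into iterators.

- Implementation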

object KeyValue10_cogroup {

def main(args: Array[String]): Unit = {

//1.创建SparkConf并设置App名称

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

//2.创建SparkContext,该对象是提交Spark App的入口

val sc: SparkContext = new SparkContext(conf)

//3具体业务逻辑

//3.1 创建第一个RDD

val rdd: RDD[(Int, String)] = sc.makeRDD(Array((1,"a"),(2,"b"),(3,"c")))

//3.2 创建第二个RDD

val rdd1: RDD[(Int, Int)] = sc.makeRDD(Array((1,4),(2,5),(3,6)))

//3.3 cogroup两个RDD并打印结果

rdd.cogroup(rdd1).collect().foreach(println)

//4.关闭连接

sc.stop()

}

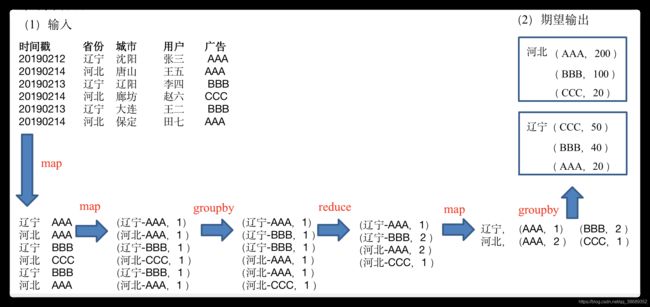

}4.4 案例

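- Data preparation: each line contains a timestamp, province, city, user and ad, separated by spaces.

- Requirement: for each province, find the top 3 ads by number of clicks

- Requirement analysis

- Implementation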

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object Demo_top3 {
  def main(args: Array[String]): Unit = {
    // 1. Initialize the Spark configuration and connect to Spark
    val sparkConf = new SparkConf().setMaster("local[*]").setAppName("Test")
    val sc = new SparkContext(sparkConf)
    // 2. Read the log file to get the raw data
    val dataRDD: RDD[String] = sc.textFile("input/agent.log")
    // 3. Transform the structure of the raw data: String => (prv-adv, 1)
    val prvAndAdvToOneRDD: RDD[(String, Int)] = dataRDD.map { line =>
      val datas: Array[String] = line.split(" ")
      (datas(1) + "-" + datas(4), 1)
    }
    // 4. Aggregate the transformed data: (prv-adv, 1) => (prv-adv, sum)
    val prvAndAdvToSumRDD: RDD[(String, Int)] = prvAndAdvToOneRDD.reduceByKey(_ + _)
    // 5. Transform the structure of the counts: (prv-adv, sum) => (prv, (adv, sum))
    val prvToAdvAndSumRDD: RDD[(String, (String, Int))] = prvAndAdvToSumRDD.map {
      case (prvAndAdv, sum) =>
        val ks: Array[String] = prvAndAdv.split("-")
        (ks(0), (ks(1), sum))
    }
    // 6. Group by province: (prv, (adv, sum)) => (prv, Iterable[(adv, sum)])
    val groupRDD: RDD[(String, Iterable[(String, Int)])] = prvToAdvAndSumRDD.groupByKey()
    // 7. Sort the ads of each province by click count (descending) and take the top 3
    val mapValuesRDD: RDD[(String, List[(String, Int)])] = groupRDD.mapValues { datas =>
      datas.toList.sortWith((left, right) => left._2 > right._2).take(3)
    }
    // 8. Print the result
    mapValuesRDD.collect().foreach(println)
    // 9. Close the connection to Spark
    sc.stop()
  }
}
```

5. Action Operators

- An action operator triggers the execution of the whole job, because transformation operators are lazy and do not run immediately.

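5.1 reduce(): aggregation

- Function signature

```scala
def reduce(f: (T, T) => T): T
```

- Description: aggregates all elements of the RDD with f, first within each partition and then across partitions.

- Example: create an RDD and aggregate all of its elements into a single result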

object action01_reduce {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Aggregate the data

val reduceResult: Int = rdd.reduce(_+_)

println(reduceResult)

// 4. Stop the SparkContext

sc.stop()

}

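}

5.2 collect(): Return the Dataset as an Array

- Function signature

def collect(): Array[T]

- Description: returns all elements of the dataset to the driver program as an Array.

- Note: all data is pulled to the driver, so use it with care; collecting a large RDD can exhaust the driver's memory.

- Example: create an RDD, collect its contents to the driver, and print them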

object action02_collect {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Collect the data to the driver

rdd.collect().foreach(println)

// 4. Stop the SparkContext

sc.stop()

}

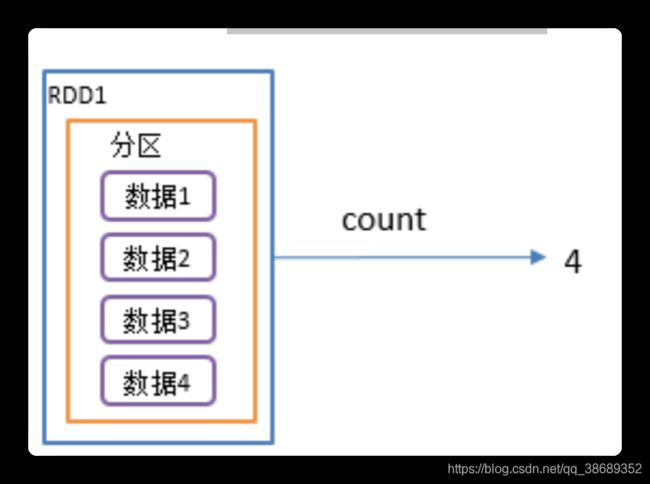

}5.3 count()返回RDD中元素个数

-

函数签名

def count(): Long- 功能说明:返回RDD中元素的个数

- 示例:创建一个RDD,统计该RDD的条数

object action03_count {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Count the elements in the RDD

val countResult: Long = rdd.count()

println(countResult)

// 4. Stop the SparkContext

sc.stop()

}

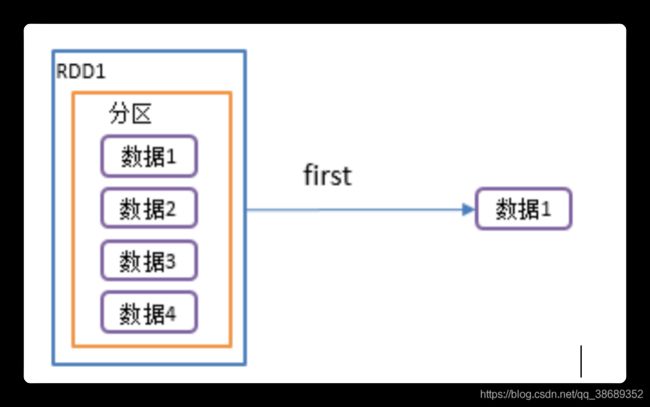

}5.4 first()返回RDD中的第一个元素

- 函数签名

def first(): T- 功能说明:返回RDD中的第一个元素

- 示例:创建一个RDD,返回该RDD中的第一个元素

object action04_first {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Return the first element of the RDD

val firstResult: Int = rdd.first()

println(firstResult)

// 4. Stop the SparkContext

sc.stop()

}

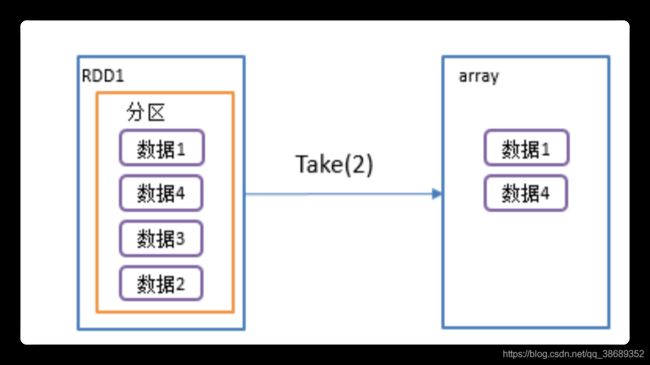

}5.5 take()返回由RDD前n个元素组成的数组

- 函数签名

def take(num: Int): Array[T]- 功能说明:返回一个由RDD的前n个元素组成的数组

- 示例:创建一个RDD,统计该RDD的条数

object action05_take {

def main(args: Array[String]): Unit = {

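// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Take the first 2 elements

val takeResult: Array[Int] = rdd.take(2)

// mkString prints the elements; println on an Array alone would print its object reference

println(takeResult.mkString(","))

// 4. Stop the SparkContext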

sc.stop()

}

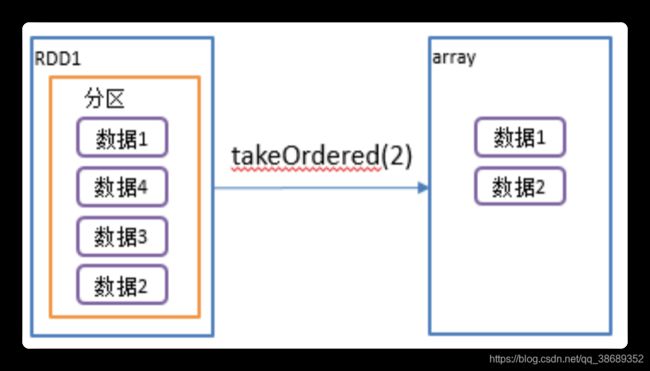

}5.6 takeOrdered()返回该RDD排序后前n个元素组成的数组

- 函数签名

def takeOrdered(num: Int)(implicit ord: Ordering[T]): Array[T]- 功能说明:返回该RDD排序后的前n个元素组成的数组

- 示例:创建一个RDD,获取该RDD排序后的前2个元素

object action06_takeOrdered{

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,3,2,4))

// 3.2 Take the 2 smallest elements

val result: Array[Int] = rdd.takeOrdered(2)

println(result.mkString(","))

// 4. Stop the SparkContext

sc.stop()

}

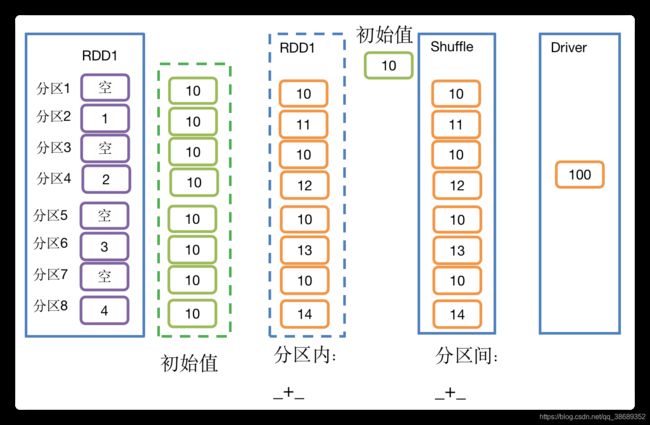

}5.7 aggregate()

- 函数签名

def aggregate[U: ClassTag](zeroValue: U)(seqOp: (U, T) => U, combOp: (U, U) => U): U

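- Description: aggregate first combines the elements of each partition using the intra-partition function (seqOp) starting from the initial value (zeroValue), then combines the per-partition results using the inter-partition function (combOp), again starting from zeroValue. Note: applying zeroValue again in the inter-partition step is what distinguishes aggregate from aggregateByKey, which uses it only within partitions.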
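The two-phase behavior of reduce, aggregate, and fold can be modeled with plain Scala collections, where each inner Seq stands for one partition. This is a simplified sketch, not Spark's implementation: `PartitionAggregation` is a hypothetical helper, and `slice` assumes the index arithmetic we believe ParallelCollectionRDD uses to split a Seq into partitions. It reproduces the result of the example that follows: with 8 partitions, every partition (including empty ones) starts from zeroValue, and the final merge adds zeroValue once more.

```scala
object PartitionAggregation {
  // Splits data into numSlices partitions (assumed to match ParallelCollectionRDD's slicing)
  def slice[T](data: Seq[T], numSlices: Int): Seq[Seq[T]] =
    (0 until numSlices).map { i =>
      val start = ((i.toLong * data.length) / numSlices).toInt
      val end = (((i + 1).toLong * data.length) / numSlices).toInt
      data.slice(start, end)
    }

  // reduce: combine inside each non-empty partition, then combine the partial results
  def reduce[T](parts: Seq[Seq[T]])(f: (T, T) => T): T =
    parts.filter(_.nonEmpty).map(_.reduce(f)).reduce(f)

  // aggregate: zero seeds every partition (even empty ones) AND the final merge
  def aggregate[T, U](parts: Seq[Seq[T]])(zero: U)(seqOp: (U, T) => U, combOp: (U, U) => U): U =
    parts.map(_.foldLeft(zero)(seqOp)).foldLeft(zero)(combOp)

  // fold: aggregate with the same function for both phases
  def fold[T](parts: Seq[Seq[T]])(zero: T)(op: (T, T) => T): T =
    aggregate(parts)(zero)(op, op)
}
```

With `slice(List(1, 2, 3, 4), 8)`, four partitions are empty and `aggregate(...)(10)(_ + _, _ + _)` gives 8 × 10 + (1 + 2 + 3 + 4) + 10 = 100; `fold` with zeroValue 10 over 2 partitions gives 40. The model also shows why `reduce(_ - _)` is unsafe: subtraction is not associative, so the result depends on how the data is partitioned (and, in Spark, on the order in which partition results arrive).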

- Example: create an RDD and sum all of its elements

- Implementation

object action07_aggregate {

def main(args: Array[String]): Unit = {

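// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create an RDD with 8 partitions

val rdd: RDD[Int] = sc.makeRDD(List(1, 2, 3, 4),8)

// 3.2 Sum all elements of the RDD

// With zeroValue 0 the result is 10

//val result: Int = rdd.aggregate(0)(_ + _, _ + _)

// With zeroValue 10 the result is 100: 10 for each of the 8 partitions, 10 for the elements, and 10 more in the final merge

val result: Int = rdd.aggregate(10)(_ + _, _ + _)

println(result)

// 4. Stop the SparkContext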

sc.stop()

}

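}

5.8 fold()

- Function signature

def fold(zeroValue: T)(op: (T, T) => T): T

- Description: a fold operation, the simplified form of aggregate in which the intra-partition and inter-partition logic are the same

- Example: create an RDD and sum all of its elements

- Implementation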

object action08_fold {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1, 2, 3, 4))

// 3.2 Sum all elements of the RDD

val foldResult: Int = rdd.fold(0)(_+_)

println(foldResult)

// 4. Stop the SparkContext

sc.stop()

}

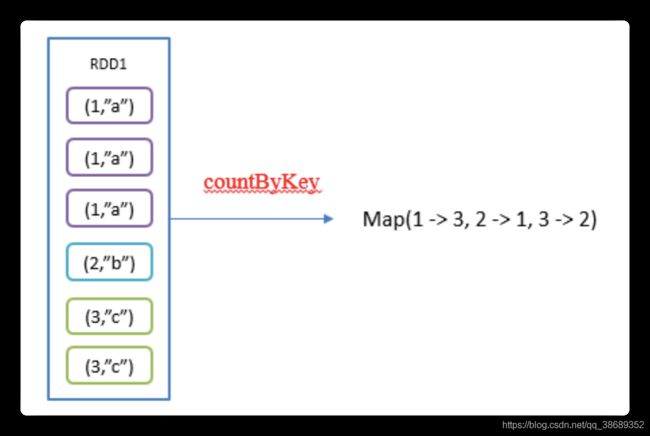

}5.9 countByKey()统计每种key的个数

- 函数签名

def countByKey(): Map[K, Long]- 功能说明:统计每种key的个数

-

示例:创建一个PairRDD,统计每种key的个数

object action09_countByKey {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[(Int, String)] = sc.makeRDD(List((1, "a"), (1, "a"), (1, "a"), (2, "b"), (3, "c"), (3, "c")))

// 3.2 Count the elements for each key

val result: collection.Map[Int, Long] = rdd.countByKey()

println(result)

// 4. Stop the SparkContext

sc.stop()

}

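}

5.10 Save Operators

- saveAsTextFile(path): save as a text file

- Description: saves the elements of the dataset as a text file on HDFS or another supported file system. For each element, Spark calls toString to convert it into a line of text in the file.

- saveAsSequenceFile(path): save as a SequenceFile

- Description: saves the elements of the dataset as a Hadoop SequenceFile in the given directory, which can be on HDFS or any other Hadoop-supported file system.

- Note: only key-value RDDs have this operation; single-value RDDs do not.

- saveAsObjectFile(path): serialize the elements as objects and save them to a file

- Description: serializes the elements of the RDD as objects and stores them in a file.

- Implementation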

object action10_save {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4),2)

// 3.2 Save as a text file

rdd.saveAsTextFile("output")

// 3.3 Serialize as objects and save to a file

rdd.saveAsObjectFile("output1")

// 3.4 Save as a SequenceFile (requires a key-value RDD)

rdd.map((_,1)).saveAsSequenceFile("output2")

// 4. Stop the SparkContext

sc.stop()

}

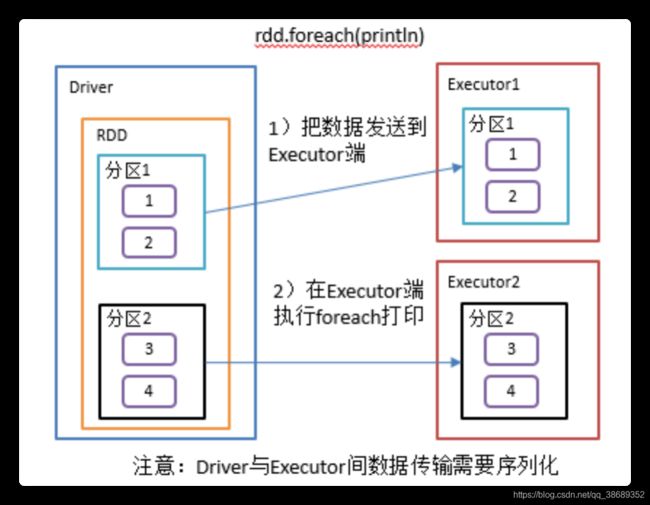

}5.11 foreach(f)遍历RDD中每一个元素

- 函数签名

def foreach(f: T => Unit): Unit- 功能说明:遍历RDD中的每一个元素,并依次应用f函数

- 具体实现

object action11_foreach {

def main(args: Array[String]): Unit = {

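// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Business logic

// 3.1 Create the RDD

// val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4),2)

val rdd: RDD[Int] = sc.makeRDD(List(1,2,3,4))

// 3.2 Collect to the driver, then print (in order)

rdd.map(num=>num).collect().foreach(println)

println("****************")

// 3.3 Distributed printing: each executor prints its own elements, so the order is not guaranteed

rdd.foreach(println)

// 4. Stop the SparkContext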

sc.stop()

}

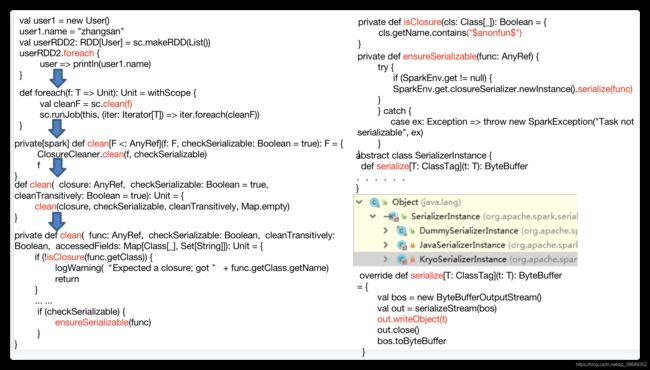

}六、RDD序列化

- 在实际开发中我们往往需要自己定义一些对于RDD的操作,那么此时需要注意的是,初始化工作是在Driver端进行的,而实际运行程序是在Executor端进行的,这就涉及到了跨进程通信,是需要序列化的。下面我们看几个例子:

6.1 闭包检查

- 闭包引入

object serializable01_object {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Create two objects

val user1 = new User()

user1.name = "zhangsan"

val user2 = new User()

user2.name = "lisi"

val userRDD1: RDD[User] = sc.makeRDD(List(user1, user2))

// 3.1 Print: fails with java.io.NotSerializableException if User does not extend Serializable

//userRDD1.foreach(user => println(user.name))

// 3.2 Print: works, because the RDD is empty and the closure captures nothing

val userRDD2: RDD[User] = sc.makeRDD(List())

//userRDD2.foreach(user => println(user.name))

// 3.3 Print: fails with "Task not serializable" if User does not extend Serializable; the closure captures user1, and the error is raised before anything runs

userRDD2.foreach(user => println(user1.name))

// 4. Stop the SparkContext

sc.stop()

}

}

// Version without Serializable: triggers the errors in 3.1 and 3.3

//class User {

// var name: String = _

//}

class User extends Serializable {

var name: String = _

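}

- Closure check: a function passed to an operator is a closure, and the objects it captures are shipped to the executors together with it. Before submitting the job, Spark checks whether the closure can be serialized. This is why case 3.3 fails even though the RDD is empty and no task ever runs.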
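The closure check can be reproduced with plain JDK serialization. The sketch below (no Spark involved) tries to Java-serialize Scala functions, roughly what has to succeed before Spark can ship a closure to the executors. `PlainUser`, `PlainSearch`, and `ClosureCheck` are hypothetical names; `PlainSearch` mirrors the `Search` class of 6.2 without `extends Serializable`. It assumes Scala 2.12 or later, where function literals are serializable and capture only what they reference.

```scala
import java.io.{ByteArrayOutputStream, NotSerializableException, ObjectOutputStream}

// Hypothetical class that does NOT extend Serializable (like User before the fix)
class PlainUser {
  var name: String = "zhangsan"
}

// Hypothetical counterpart of Search from 6.2, also NOT Serializable
class PlainSearch(query: String) {
  // Reading the field query means the function captures `this`
  def viaField: String => Boolean = s => s.contains(query)

  // Copying the field into a local val first means only the String is captured
  def viaLocal: String => Boolean = {
    val q = query
    s => s.contains(q)
  }
}

object ClosureCheck {
  // True if Java serialization of obj succeeds, false if a captured object is not Serializable
  def isSerializable(obj: AnyRef): Boolean =
    try {
      new ObjectOutputStream(new ByteArrayOutputStream()).writeObject(obj)
      true
    } catch {
      case _: NotSerializableException => false
    }

  // A function that captures a PlainUser, like user => println(user1.name) in case 3.3
  def printName(u: PlainUser): String => Unit = _ => println(u.name)
}
```

A function that reads `u.name` from a `PlainUser`, or that reads the field `query` (and therefore captures `this`), cannot be serialized. Copying the field into a local `val` first makes the function serializable again, which is the same fix discussed in 6.2.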

6.2 Serializing Methods and Fields

- Explanation

- Driver: code outside of operators runs on the driver

- Executor: code inside operators runs on the executors

- Implementation

object serializable02_function {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Create an RDD

val rdd: RDD[String] = sc.makeRDD(Array("hello world", "hello spark", "hive", "atguigu"))

// 3.1 Create a Search object

val search = new Search("hello")

// Driver: code outside of operators runs on the driver

// Executor: code inside operators runs on the executors

// 3.2 Passing a method: fails with "Task not serializable" if Search does not extend Serializable

search.getMatch1(rdd).collect().foreach(println)

// 3.3 Passing a field: fails with "Task not serializable" if Search does not extend Serializable

search.getMatche2(rdd).collect().foreach(println)

// 4. Stop the SparkContext

sc.stop()

}

}

class Search(query:String) extends Serializable {

def isMatch(s: String): Boolean = {

s.contains(query)

}

// Method serialization case: the filter calls this.isMatch

def getMatch1 (rdd: RDD[String]): RDD[String] = {

//rdd.filter(this.isMatch)

rdd.filter(isMatch)

}

// Field serialization case: the filter reads this.query

def getMatche2(rdd: RDD[String]): RDD[String] = {

//rdd.filter(x => x.contains(this.query))

rdd.filter(x => x.contains(query))

//val q = query

//rdd.filter(x => x.contains(q))

}

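}

- About getMatch1()

- The method isMatch() called here is defined in the Search class, so the call is really this.isMatch(), where this is the Search object. At run time, the Search object therefore has to be serialized and sent to the executors.

- Solution: make the class extend scala.Serializable

class Search() extends Serializable {...}

- About getMatche2()

- query, used in this method, is a field defined in the Search class, so the access is really this.query, where this is the Search object. At run time, the Search object therefore has to be serialized and sent to the executors.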

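- Solution 1: make the class extend scala.Serializable, or copy the field query into a local variable so that the closure captures only that String

- Change getMatche2 to:

// Keep only the strings that contain query

def getMatche2(rdd: RDD[String]): RDD[String] = {

val q = this.query // copy the field into a local variable

rdd.filter(x => x.contains(q))

}

- Solution 2: turn Search into a case class; case classes are serializable by default.

case class Search(query: String) {...}

6.3 The Kryo Serialization Framework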

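- Java serialization can serialize any class that implements Serializable, but it is heavyweight: the serialized objects are large.

- For performance, Spark also supports the Kryo serialization library, which is often up to 10 times faster than Java serialization. Since Spark 2.0, Spark already uses Kryo internally when shuffling RDDs of simple types, arrays of simple types, or strings.

- Note: even when using Kryo, classes must still extend Serializable, because closures are still serialized with Java serialization.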
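To make "heavyweight" concrete, the sketch below Java-serializes a single boxed Int with the JDK's ObjectOutputStream and compares the byte count with the 4 bytes of actual data; the serialized form also carries class descriptors such as class names and serialVersionUIDs. It does not use Kryo itself (that requires the separate Kryo library), and `JavaSerOverhead` is a hypothetical name. For comparison, Kryo has to store the full class name with each object only for classes that are not registered, which is why the example below calls registerKryoClasses.

```scala
import java.io.{ByteArrayOutputStream, ObjectOutputStream}

object JavaSerOverhead {
  // Number of bytes that Java serialization produces for obj
  def javaSerializedSize(obj: AnyRef): Int = {
    val bytes = new ByteArrayOutputStream()
    val out = new ObjectOutputStream(bytes)
    out.writeObject(obj)
    out.close()
    bytes.size()
  }

  def main(args: Array[String]): Unit = {
    val boxed: Integer = Integer.valueOf(42) // 4 bytes of real data
    println(s"raw: ${Integer.BYTES} bytes, Java-serialized: ${javaSerializedSize(boxed)} bytes")
  }
}
```

The exact byte count depends on the JDK, but it is many times larger than the 4-byte payload.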

object serializable03_Kryo {

def main(args: Array[String]): Unit = {

val conf: SparkConf = new SparkConf()

.setAppName("SerDemo")

.setMaster("local[*]")

// Replace the default serializer

.set("spark.serializer", "org.apache.spark.serializer.KryoSerializer")

// Register the custom classes to be serialized with Kryo

.registerKryoClasses(Array(classOf[Searcher]))

val sc = new SparkContext(conf)

val rdd: RDD[String] = sc.makeRDD(Array("hello world", "hello atguigu", "atguigu", "hahah"), 2)

val searcher = new Searcher("hello")

val result: RDD[String] = searcher.getMatchedRDD1(rdd)

result.collect.foreach(println)

// Stop the SparkContext

sc.stop()

}

}

case class Searcher(val query: String) {

def isMatch(s: String) = {

s.contains(query)

}

def getMatchedRDD1(rdd: RDD[String]) = {

rdd.filter(isMatch)

}

def getMatchedRDD2(rdd: RDD[String]) = {

val q = query

rdd.filter(_.contains(q))

}

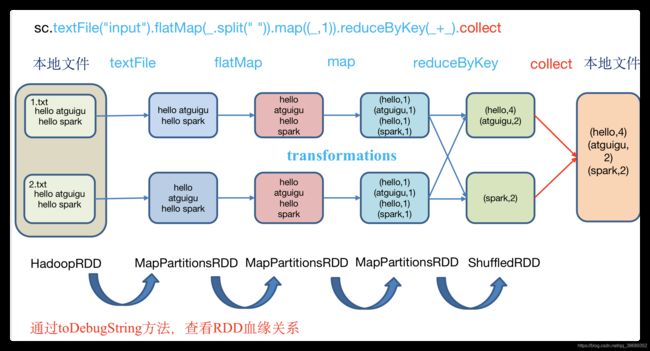

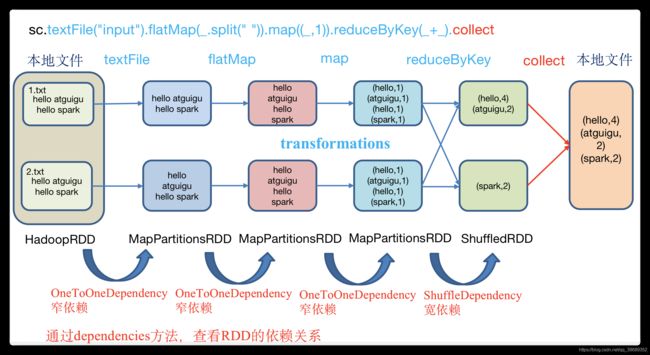

}七、RDD依赖关系

7.1 查看血缘关系

- RDD只支持粗粒度转换,即在大量记录上执行的单个操作。将创建RDD的一系列Lineage(血统)记录下来,以便恢复丢失的分区。RDD的Lineage会记录RDD的元数据信息和转换行为,当该RDD的部分分区数据丢失时,它可以根据这些信息来重新运算和恢复丢失的数据分区。

- 示例

- 具体实现

object Lineage01 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

val fileRDD: RDD[String] = sc.textFile("input/1.txt")

println(fileRDD.toDebugString)

println("----------------------")

val wordRDD: RDD[String] = fileRDD.flatMap(_.split(" "))

println(wordRDD.toDebugString)

println("----------------------")

val mapRDD: RDD[(String, Int)] = wordRDD.map((_,1))

println(mapRDD.toDebugString)

println("----------------------")

val resultRDD: RDD[(String, Int)] = mapRDD.reduceByKey(_+_)

println(resultRDD.toDebugString)

resultRDD.collect()

// 4. Stop the SparkContext

sc.stop()

}

}

- Output

- Note: the number in parentheses is the RDD's parallelism, that is, its number of partitions

(2) input/1.txt MapPartitionsRDD[1] at textFile at Lineage01.scala:15 []

| input/1.txt HadoopRDD[0] at textFile at Lineage01.scala:15 []

----------------------

(2) MapPartitionsRDD[2] at flatMap at Lineage01.scala:19 []

| input/1.txt MapPartitionsRDD[1] at textFile at Lineage01.scala:15 []

| input/1.txt HadoopRDD[0] at textFile at Lineage01.scala:15 []

----------------------

(2) MapPartitionsRDD[3] at map at Lineage01.scala:23 []

| MapPartitionsRDD[2] at flatMap at Lineage01.scala:19 []

| input/1.txt MapPartitionsRDD[1] at textFile at Lineage01.scala:15 []

| input/1.txt HadoopRDD[0] at textFile at Lineage01.scala:15 []

----------------------

(2) ShuffledRDD[4] at reduceByKey at Lineage01.scala:27 []

+-(2) MapPartitionsRDD[3] at map at Lineage01.scala:23 []

| MapPartitionsRDD[2] at flatMap at Lineage01.scala:19 []

| input/1.txt MapPartitionsRDD[1] at textFile at Lineage01.scala:15 []

| input/1.txt HadoopRDD[0] at textFile at Lineage01.scala:15 []

7.2 Viewing Dependencies

- Example

- Implementation

object Lineage02 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

val fileRDD: RDD[String] = sc.textFile("input/1.txt")

println(fileRDD.dependencies)

println("----------------------")

val wordRDD: RDD[String] = fileRDD.flatMap(_.split(" "))

println(wordRDD.dependencies)

println("----------------------")

val mapRDD: RDD[(String, Int)] = wordRDD.map((_,1))

println(mapRDD.dependencies)

println("----------------------")

val resultRDD: RDD[(String, Int)] = mapRDD.reduceByKey(_+_)

println(resultRDD.dependencies)

resultRDD.collect()

// 4. Stop the SparkContext

sc.stop()

}

}

- Output

List(org.apache.spark.OneToOneDependency@f2ce6b)

----------------------

List(org.apache.spark.OneToOneDependency@692fd26)

----------------------

List(org.apache.spark.OneToOneDependency@627d8516)

----------------------

List(org.apache.spark.ShuffleDependency@a518813)

- Searching the source code for org.apache.spark.OneToOneDependency shows:

class OneToOneDependency[T](rdd: RDD[T]) extends NarrowDependency[T](rdd) {

override def getParents(partitionId: Int): List[Int] = List(partitionId)

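}

- Note: the key to understanding how RDDs work is understanding transformations. The relationship between RDDs can be viewed from two angles: which RDDs an RDD is derived from (its parent RDD(s)), and which partitions of the parent RDD(s) it depends on. This relationship is the dependency between RDDs.

- An RDD relates to its parent RDD(s) in one of two ways: a narrow dependency or a wide dependency.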

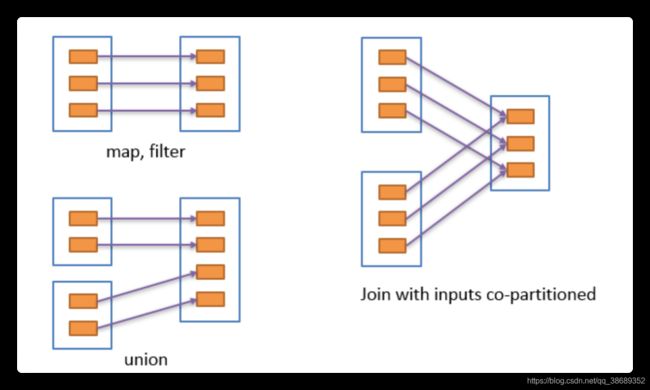

7.3 窄依赖

- 窄依赖表示每一个父RDD的Partition最多被子RDD的一个Partition使用

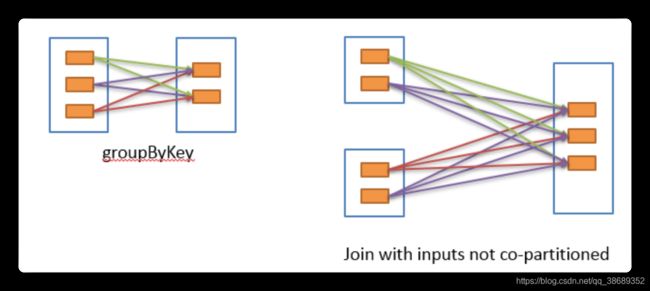

7.4 宽依赖

- 宽依赖表示同一个父RDD的Partition被多个子RDD的Partition依赖,会引起Shuffle

- 具有宽依赖的transformations包括:sort,reduceByKey,groupByKey,join和调用rePartition函数的任何操作

- 宽依赖对Spark去评估一个transformations有更加重要的影响,比如对性能的影响。

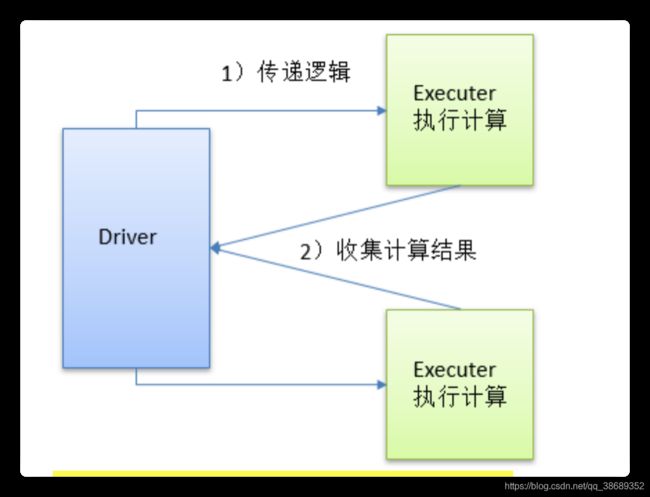

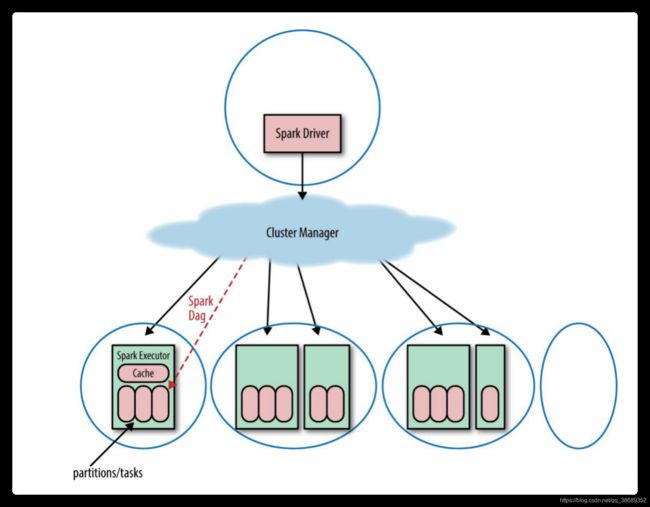

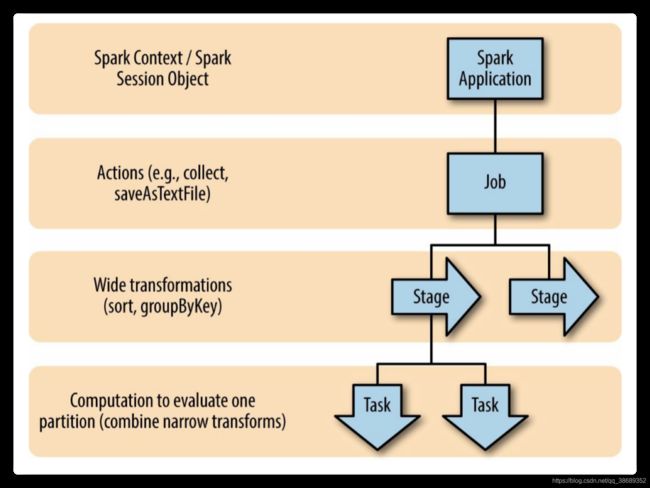
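The narrow/wide distinction can be checked mechanically. The plain-Scala sketch below is a simplified model, not Spark's classes: `Dep.parents` plays the role of `getParents` in the OneToOneDependency source shown in 7.2, and `AllToAll` is a hypothetical stand-in for a shuffle in which every child partition may read every parent partition. `isNarrow` applies the definition from 7.3: no parent partition may be used by more than one child partition.

```scala
// Simplified dependency model (not Spark's API)
sealed trait Dep {
  // Parent partitions that child partition p reads from
  def parents(p: Int): List[Int]
}

// Like OneToOneDependency: child partition p reads parent partition p only
case object OneToOne extends Dep {
  def parents(p: Int): List[Int] = List(p)
}

// Shuffle-like: every child partition reads every parent partition
final case class AllToAll(numParentPartitions: Int) extends Dep {
  def parents(p: Int): List[Int] = (0 until numParentPartitions).toList
}

object DepCheck {
  // Narrow if no parent partition is used by more than one child partition
  def isNarrow(dep: Dep, numChildPartitions: Int): Boolean = {
    val uses = (0 until numChildPartitions).flatMap(dep.parents)
    uses.groupBy(identity).values.forall(_.size <= 1)
  }
}
```

With two child partitions, `OneToOne` is narrow and `AllToAll(2)` is wide. `AllToAll(2)` with a single child partition counts as narrow under this definition; that is the situation of merging partitions without a shuffle, as coalesce does.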

7.5 Spark中的Job调度

- 一个Spark应用包含一个驱动进程(driver process,在这个进程中写Spark的逻辑代码)和多个执行器进程(executor process,跨越集群中的多个节点)。Spark 程序自己是运行在驱动节点, 然后发送指令到执行器节点。

- 一个Spark集群可以同时运行多个Spark应用, 这些应用是由集群管理器(cluster manager)来调度。

- Spark应用可以并发的运行多个job, job对应着给定的应用内的在RDD上的每个 action操作。

7.5.1 Spark应用

- 一个Spark应用可以包含多个Spark job, Spark job是在驱动程序中由SparkContext 来定义的。

- 当启动一个 SparkContext 的时候, 就开启了一个 Spark 应用。 一个驱动程序被启动了, 多个执行器在集群中的多个工作节点(worker nodes)也被启动了。 一个执行器就是一个 JVM, 一个执行器不能跨越多个节点, 但是一个节点可以包括多个执行器。

- 一个 RDD 会跨多个执行器被并行计算. 每个执行器可以有这个 RDD 的多个分区, 但是一个分区不能跨越多个执行器.

7.5.2 Spark的Job划分

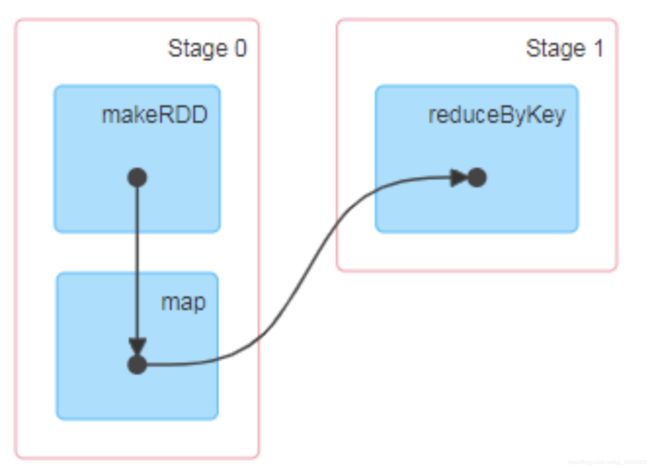

- 由于Spark的懒执行, 在驱动程序调用一个action之前, Spark 应用不会做任何事情,针对每个action,Spark 调度器就创建一个执行图(execution graph)和启动一个 Spark job。

- 每个 job 由多个stages 组成, 这些 stages 就是实现最终的 RDD 所需的数据转换的步骤。一个宽依赖划分一个stage。每个 stage 由多个 tasks 来组成, 这些 tasks 就表示每个并行计算, 并且会在多个执行器上执行。

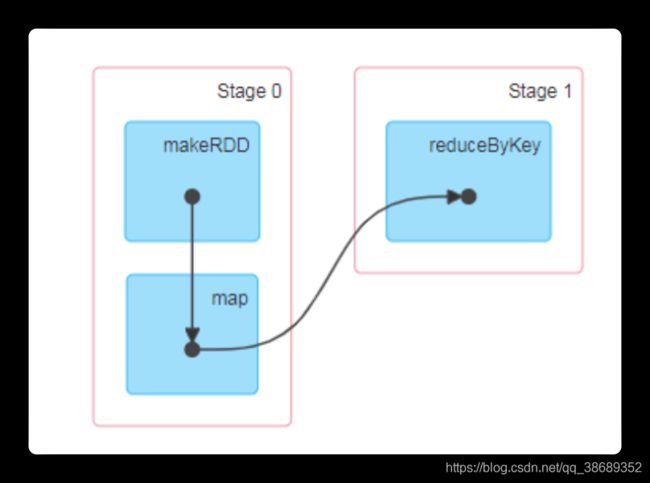

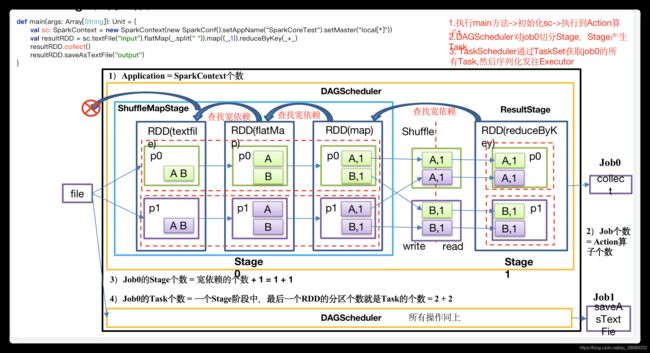

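7.6 Stage Division

- DAG (directed acyclic graph)

- A DAG (Directed Acyclic Graph) is a topological graph made of vertices and edges; its edges have directions and it contains no cycles. The original RDD forms a DAG through a series of transformations, and the DAG is divided into stages according to the dependencies between RDDs. For narrow dependencies, the partition transformations are completed within one stage. For wide dependencies, because of the shuffle, the next computation can start only after the parent RDD has been fully processed, so wide dependencies are where stages are split. In other words, the DAG records both the transformation steps of the RDDs and the stages of the job.

- RDD work is divided into four levels: Application, Job, Stage, and Task

- Application: initializing a SparkContext creates an Application;

- Job: each action operator creates a Job;

- Stage: the number of stages in a job equals the number of wide dependencies plus 1;

- Task: within a stage, the number of partitions of the last RDD is the number of tasks.

- Note: Application -> Job -> Stage -> Task is one-to-many at every level.

- Implementation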

object Stage01 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Application: initializing a SparkContext creates an Application

val sc: SparkContext = new SparkContext(conf)

// 3. Create an RDD with 2 partitions

val dataRDD: RDD[Int] = sc.makeRDD(List(1,2,3,4,1,2),2)

// 3.1 Aggregate

val resultRDD: RDD[(Int, Int)] = dataRDD.map((_,1)).reduceByKey(_+_)

// Job: each action creates a Job

// 3.2 job1: print to the console

resultRDD.collect().foreach(println)

// 3.3 job2: write to disk

resultRDD.saveAsTextFile("output")

// Keep the application running so the web UI at http://localhost:4040 can be inspected

Thread.sleep(1000000)

// 4. Stop the SparkContext

sc.stop()

}

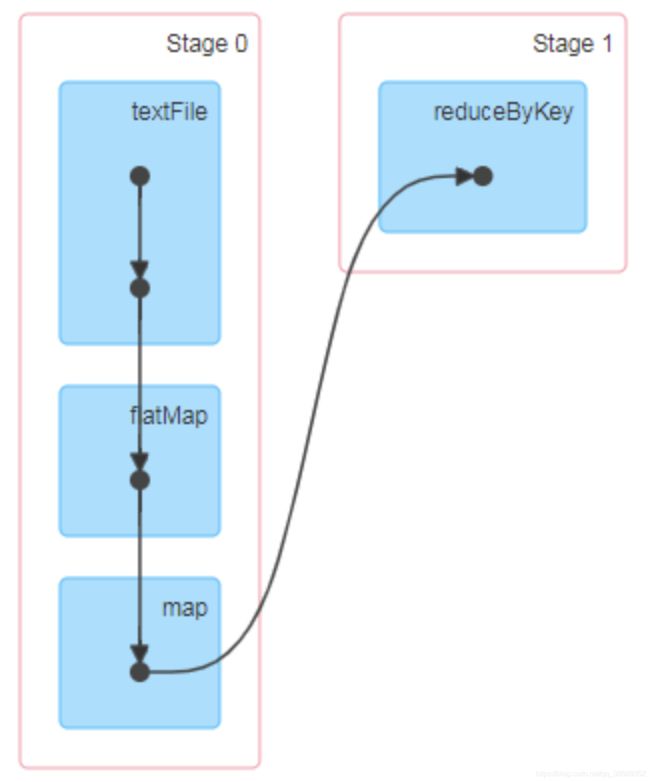

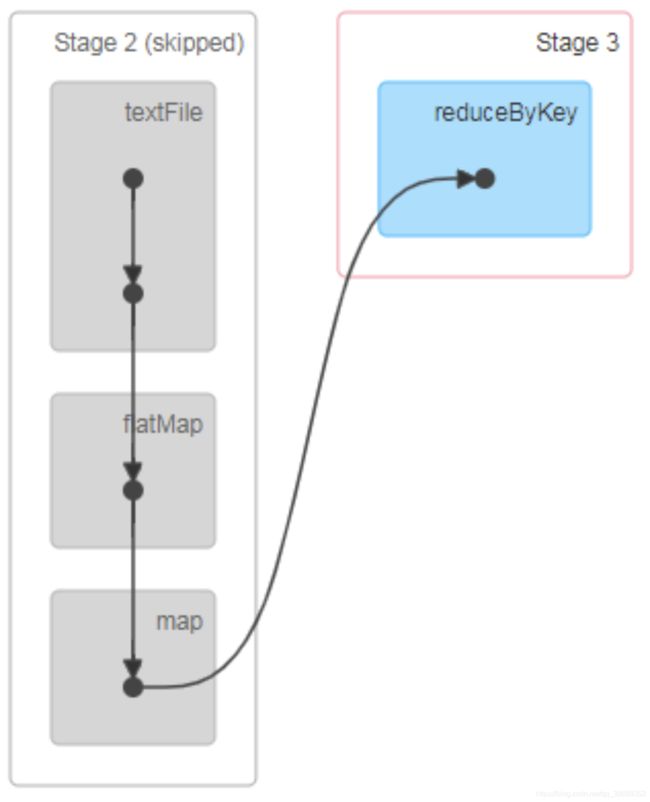

}- 查看Job个数:查看http://localhost:4040/jobs/,发现Job有两个。

- 查看Stage个数:

- 查看Job0的Stage。由于只有1个Shuffle阶段,所以Stage个数为2。

- 查看Job1的Stage。由于只有1个Shuffle阶段,所以Stage个数为2。

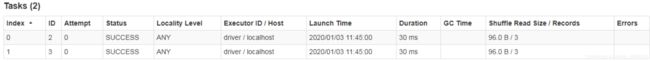

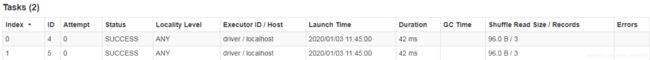

- Task个数

- 查看Job0的Stage0的Task个数

- 查看Job0的Stage1的Task个数

- 查看Job1的Stage2的Task个数

- 查看Job1的Stage3的Task个数

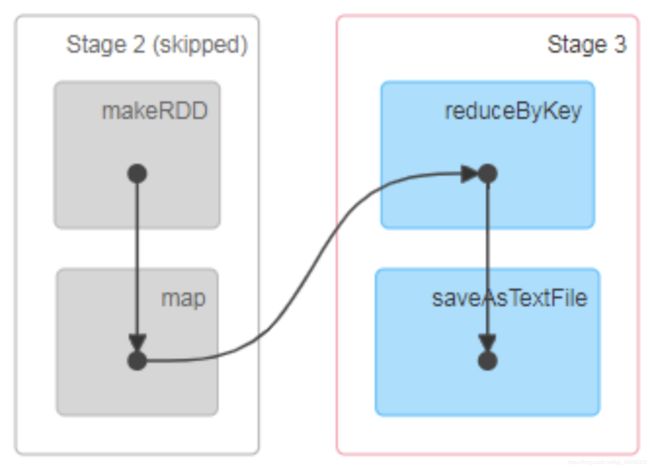

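- Note: when there is a shuffle, Spark automatically keeps the shuffle output so that a later job can reuse it; the UI shows the reused part as skipped.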

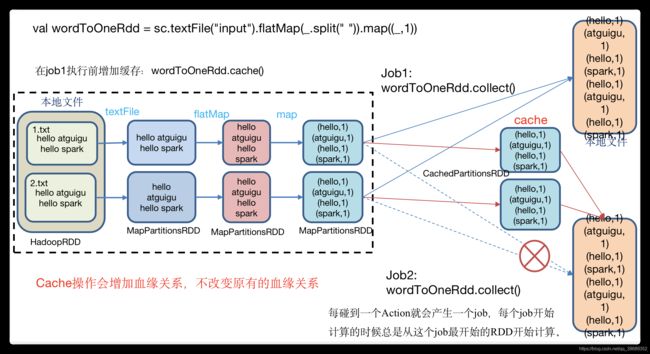
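The counting rules above (stages = wide dependencies + 1, tasks = partitions of the last RDD in each stage) can be written as a small plain-Scala model. This is a simplified sketch, not Spark's DAGScheduler: `Step` and `StagePlanner` are hypothetical, and the job is modeled as a linear chain of RDDs. The sample chain mirrors Stage01: makeRDD with 2 partitions, map, then reduceByKey.

```scala
// One RDD in a linear job: its partition count and whether it depends on its parent through a shuffle
final case class Step(name: String, partitions: Int, wide: Boolean)

object StagePlanner {
  // A wide dependency starts a new stage; a narrow one joins the current stage
  def stages(steps: List[Step]): List[List[Step]] =
    steps.foldLeft(Vector.empty[Vector[Step]]) { (acc, s) =>
      if (acc.isEmpty || s.wide) acc :+ Vector(s)
      else acc.init :+ (acc.last :+ s)
    }.map(_.toList).toList

  // One task per partition of the last RDD in each stage
  def taskCount(steps: List[Step]): Int =
    stages(steps).map(_.last.partitions).sum

  // The chain from Stage01: makeRDD(..., 2) -> map -> reduceByKey
  val stage01Job: List[Step] = List(
    Step("makeRDD", 2, wide = false),
    Step("map", 2, wide = false),
    Step("reduceByKey", 2, wide = true)
  )
}
```

This gives 2 stages and 4 tasks per job. In Stage01, the second job (saveAsTextFile) reuses the shuffle output of the first, so its first stage is shown as skipped and only its 2 result tasks actually run.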

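VIII. RDD Persistence

8.1 RDD Cache

- An RDD can cache the results of earlier computations through the cache or persist method. By default (storage level MEMORY_ONLY), the data is cached as deserialized objects in the JVM heap. The data is not cached at the moment these methods are called; the RDD is cached in the memory of the compute nodes when a later action is triggered, and is then reused by subsequent actions.

- Example: RDD cache

- Implementation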

object cache01 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Create an RDD by reading the given file (contents: hello atguigu atguigu)

val lineRdd: RDD[String] = sc.textFile("input1")

// 3.1 Business logic

val wordRdd: RDD[String] = lineRdd.flatMap(line => line.split(" "))

val wordToOneRdd: RDD[(String, Int)] = wordRdd.map {

word => {

println("************")

(word, 1)

}

}

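// 3.5 cache adds an entry to the lineage but does not change the existing lineage

println(wordToOneRdd.toDebugString)

// 3.4 Cache the data

wordToOneRdd.cache()

// 3.6 The storage level can also be changed (needs import org.apache.spark.storage.StorageLevel)

// wordToOneRdd.persist(StorageLevel.MEMORY_AND_DISK_2)

// 3.2 Trigger execution

wordToOneRdd.collect()

println("-----------------")

println(wordToOneRdd.toDebugString)

// 3.3 Trigger execution again

wordToOneRdd.collect()

// 4. Stop the SparkContext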

sc.stop()

}

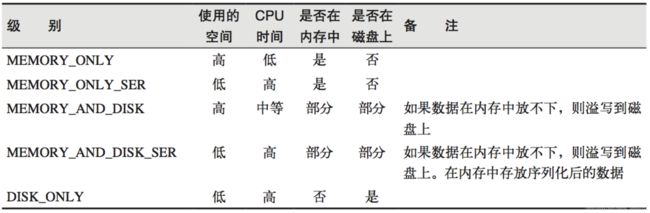

}- 源码解析

mapRdd.cache()

def cache(): this.type = persist()

def persist(): this.type = persist(StorageLevel.MEMORY_ONLY)

// Constructor parameters: useDisk, useMemory, useOffHeap, deserialized, replication (default 1)

object StorageLevel {

val NONE = new StorageLevel(false, false, false, false)

val DISK_ONLY = new StorageLevel(true, false, false, false)

val DISK_ONLY_2 = new StorageLevel(true, false, false, false, 2)

val MEMORY_ONLY = new StorageLevel(false, true, false, true)

val MEMORY_ONLY_2 = new StorageLevel(false, true, false, true, 2)

val MEMORY_ONLY_SER = new StorageLevel(false, true, false, false)

val MEMORY_ONLY_SER_2 = new StorageLevel(false, true, false, false, 2)

val MEMORY_AND_DISK = new StorageLevel(true, true, false, true)

val MEMORY_AND_DISK_2 = new StorageLevel(true, true, false, true, 2)

val MEMORY_AND_DISK_SER = new StorageLevel(true, true, false, false)

val MEMORY_AND_DISK_SER_2 = new StorageLevel(true, true, false, false, 2)

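val OFF_HEAP = new StorageLevel(true, true, true, false, 1)

}

- Note: the default storage level, MEMORY_ONLY, stores a single copy in memory only. A "_2" suffix on a storage level means the persisted data is stored as two replicas.

- Caches can be lost, and data held in memory can be evicted when memory runs short. RDD's fault-tolerance mechanism still guarantees correct results when a cache is lost: the lost data is recomputed from the RDD's series of transformations. Because the partitions of an RDD are independent of each other, only the lost partitions need to be recomputed, not all of them.

- Built-in caching: Spark automatically persists some intermediate data of shuffle operations (for example reduceByKey). This avoids recomputing the whole input if a node fails during the shuffle. However, if you want to reuse data, it is still recommended to call persist or cache.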

object cache02 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Create an RDD by reading the given file (contents: hello atguigu atguigu)

val lineRdd: RDD[String] = sc.textFile("input1")

// 3.1 Business logic

val wordRdd: RDD[String] = lineRdd.flatMap(line => line.split(" "))

val wordToOneRdd: RDD[(String, Int)] = wordRdd.map {

word => {

println("************")

(word, 1)

}

}

// reduceByKey keeps its shuffle output automatically (built-in caching)

val wordByKeyRDD: RDD[(String, Int)] = wordToOneRdd.reduceByKey(_+_)

// 3.5 cache adds an entry to the lineage but does not change the existing lineage

println(wordByKeyRDD.toDebugString)

// 3.4 Cache the data

//wordByKeyRDD.cache()

// 3.2 Trigger execution

wordByKeyRDD.collect()

println("-----------------")

println(wordByKeyRDD.toDebugString)

// 3.3 Trigger execution again

wordByKeyRDD.collect()

// 4. Stop the SparkContext

sc.stop()

}

}

- Open http://localhost:4040/jobs/ and look at the DAGs of the first and second jobs. The lineage is still there after caching, but the second job reads its data from the cache.
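The effect of caching on recomputation can be imitated with a toy model in plain Scala. This is not Spark's implementation: `ToyRDD` and `CacheDemo` are hypothetical, the whole dataset stands in for the RDD's partitions, and `collect()` plays the role of an action. It mirrors cache01: without cache, each action re-runs the map function (the `************` lines in the example); with cache, the first action stores the result and the second one reuses it.

```scala
// Toy model of cache(): NOT Spark's implementation
final class ToyRDD[T](compute: () => Seq[T]) {
  private var cacheEnabled = false
  private var cached: Option[Seq[T]] = None

  // Like RDD.cache(): only marks the RDD, nothing is computed here
  def cache(): this.type = { cacheEnabled = true; this }

  // Like an action: computes the data, or reuses data stored by an earlier action
  def collect(): Seq[T] = cached match {
    case Some(data) => data
    case None =>
      val data = compute()
      if (cacheEnabled) cached = Some(data)
      data
  }
}

object CacheDemo {
  // Runs two actions and returns how many times the map function ran
  def mapCalls(useCache: Boolean): Int = {
    var calls = 0
    val words = Seq("hello", "atguigu", "atguigu")
    val rdd = new ToyRDD(() => words.map { w => calls += 1; (w, 1) })
    if (useCache) rdd.cache()
    rdd.collect()
    rdd.collect()
    calls
  }
}
```

For the three words, `mapCalls(useCache = false)` returns 6 (two full computations) and `mapCalls(useCache = true)` returns 3.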

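8.2 RDD Checkpoint

- Checkpoint: writes the intermediate result of an RDD to disk.

- Why checkpoint?

- A long lineage makes fault recovery expensive. It is better to checkpoint at an intermediate stage: if a node fails after the checkpoint, the lineage can be replayed from the checkpoint, which reduces the cost.

- Checkpoint storage: checkpoint data is usually stored in a fault-tolerant, highly available file system such as HDFS

- Checkpoint data format: binary files

- Checkpointing truncates the lineage: during checkpointing, all information about the RDD's dependencies on its parent RDDs is removed.

- When the checkpoint runs: calling checkpoint on an RDD does not execute anything immediately; an action must trigger it. To be safe, the checkpoint is then written by a separate job that recomputes the RDD from the beginning of its lineage (unless the RDD is cached).

- Steps to set up a checkpoint

- Set the checkpoint directory: sc.setCheckpointDir("./checkpoint1")

- Call the checkpoint method: wordToOneRdd.checkpoint()

- Implementation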

object checkpoint01 {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// The checkpoint directory must be set, otherwise an exception is thrown: Checkpoint directory has not been set in the SparkContext

sc.setCheckpointDir("./checkpoint1")

// 3. Create an RDD by reading the given file (contents: hello atguigu atguigu)

val lineRdd: RDD[String] = sc.textFile("input1")

// 3.1 Business logic

val wordRdd: RDD[String] = lineRdd.flatMap(line => line.split(" "))

val wordToOneRdd: RDD[(String, Long)] = wordRdd.map {

word => {

(word, System.currentTimeMillis())

}

}

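// 3.5 Add a cache so that the checkpoint job reads from the cache instead of recomputing the RDD

// wordToOneRdd.cache()

// 3.4 Checkpoint wordToOneRdd

wordToOneRdd.checkpoint()

// 3.2 Trigger execution

wordToOneRdd.collect().foreach(println)

// Right after this first action, a new job is started just to compute the checkpoint

// 3.3 Trigger execution again

wordToOneRdd.collect().foreach(println)

wordToOneRdd.collect().foreach(println)

// Keep the application running so the web UI at http://localhost:4040 can be inspected

Thread.sleep(10000000)

// 4. Stop the SparkContext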

sc.stop()

}

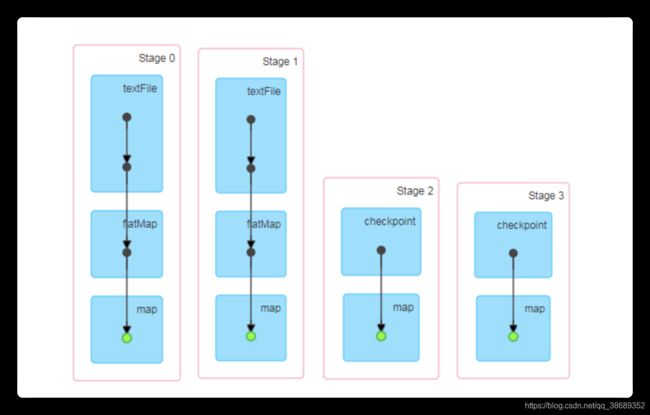

}- 执行结果:访问http://localhost:4040/jobs/页面,查看4个job的DAG图。其中第2个图是checkpoint的job运行DAG图。第3、4张图说明,检查点切断了血缘依赖关系。

- 只增加checkpoint,没有增加Cache缓存打印

- 第1个job执行完,触发了checkpoint,第2个job运行checkpoint,并把数据存储在检查点上。第3、4个job,数据从检查点上直接读取。

- 增加checkpoint,也增加Cache缓存打印

- 第1个job执行完,数据就保存到Cache里面了,第2个job运行checkpoint,直接读取Cache里面的数据,并把数据存储在检查点上。第3、4个job,数据从检查点上直接读取。


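8.3 Differences Between Cache and Checkpoint

- cache only stores the data and does not truncate the lineage; checkpoint truncates the lineage.

- Cached data is usually stored on local disk or in memory, so its reliability is low. Checkpoint data is usually stored in a fault-tolerant, highly available file system such as HDFS, so its reliability is high.

- It is recommended to cache an RDD that will be checkpointed. The checkpoint job then only reads the data from the cache; otherwise it has to recompute the RDD from scratch.

- When you no longer need a cache, release it with the unpersist() method.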
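The lineage difference between cache and checkpoint can be sketched as a toy graph in plain Scala. `Node` and `LineageDemo` are hypothetical; the node names follow the toDebugString output from 7.1, and the `ReliableCheckpointRDD` parent reflects our understanding of what Spark puts in place of the old lineage after a checkpoint (treat that name as an assumption). cache leaves the lineage as it is, while checkpoint replaces the parents with the checkpointed data.

```scala
// Toy lineage graph: a node and the nodes it was derived from (not Spark's classes)
final case class Node(name: String, parents: List[Node]) {
  // Length of the longest chain from this node back to a source
  def depth: Int = 1 + (0 :: parents.map(_.depth)).max
}

object LineageDemo {
  val hadoop = Node("HadoopRDD", Nil)
  val lines  = Node("MapPartitionsRDD (textFile)", List(hadoop))
  val words  = Node("MapPartitionsRDD (flatMap)", List(lines))
  val pairs  = Node("MapPartitionsRDD (map)", List(words))

  // cache(): the data is kept, but the lineage is unchanged
  def cached(n: Node): Node = n

  // checkpoint(): once the data is written, the old parents are dropped and replaced
  // by a node that reads the checkpoint files (assumed name)
  def checkpointed(n: Node): Node =
    n.copy(parents = List(Node("ReliableCheckpointRDD", Nil)))
}
```

The cached `pairs` node still has a lineage depth of 4, while the checkpointed one has a depth of 2: recovery no longer needs to go back to the source file.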

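8.4 Storing Checkpoints on an HDFS Cluster

- Note: when storing checkpoint data on an HDFS cluster, configure the user name used to access the cluster; otherwise a permission exception occurs.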

object checkpoint02 {

def main(args: Array[String]): Unit = {

// Set the user name used to access the HDFS cluster

System.setProperty("HADOOP_USER_NAME","atguigu")

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// Set the directory; the /checkpoint path must be created on the HDFS cluster beforehand

sc.setCheckpointDir("hdfs://hadoop102:9000/checkpoint")

// 3. Create an RDD by reading the given file (contents: hello atguigu atguigu)

val lineRdd: RDD[String] = sc.textFile("input1")

// 3.1 Business logic

val wordRdd: RDD[String] = lineRdd.flatMap(line => line.split(" "))

val wordToOneRdd: RDD[(String, Long)] = wordRdd.map {

word => {

(word, System.currentTimeMillis())

}

}

// 3.4 Add a cache so that the checkpoint job reads from the cache instead of recomputing the RDD

wordToOneRdd.cache()

// 3.3 Checkpoint wordToOneRdd

wordToOneRdd.checkpoint()

// 3.2 Trigger execution

wordToOneRdd.collect().foreach(println)

// 4. Stop the SparkContext

sc.stop()

}

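}

IX. Partitioning of Key-Value RDDs

- Spark currently supports hash partitioning, range partitioning, and user-defined partitioning. Hash partitioning is the default. The partitioner directly determines the number of partitions of an RDD, which partition each record goes to after a shuffle, and the number of reduce tasks.

- Notes

- Only key-value RDDs can have a partitioner; for non-key-value RDDs the partitioner is None

- Partition IDs of an RDD range from 0 to numPartitions-1; the partitioner decides which partition each record belongs to.

- Getting an RDD's partitioner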

object partitioner01_get {

def main(args: Array[String]): Unit = {

// 1. Create a SparkConf and set the app name

val conf: SparkConf = new SparkConf().setAppName("SparkCoreTest").setMaster("local[*]")

// 2. Create the SparkContext, the entry point for submitting a Spark app

val sc: SparkContext = new SparkContext(conf)

// 3. Create an RDD

val pairRDD: RDD[(Int, Int)] = sc.makeRDD(List((1,1),(2,2),(3,3)))

// 3.1 Print the partitioner (None for an RDD created with makeRDD)

println(pairRDD.partitioner)

// 3.2 Repartition the RDD with a HashPartitioner (import org.apache.spark.HashPartitioner)

val partitionRDD: RDD[(Int, Int)] = pairRDD.partitionBy(new HashPartitioner(2))

// 3.3 Print the partitioner

println(partitionRDD.partitioner)

// 4. Stop the SparkContext

sc.stop()

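}

}

9.1 Hash Partitioning

- How it works: for a given key, compute its hashCode modulo the number of partitions. If the remainder is negative, add the number of partitions (otherwise add 0). The result is the ID of the partition the key belongs to.

- Drawback: the amount of data per partition can be uneven; in extreme cases one partition may hold all of the RDD's data.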
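The rule above fits in a few lines of plain Scala. `HashPartitionSketch` is a hypothetical name; the logic follows the description (hashCode modulo the partition count, shifted into range when negative), and it assumes, as we understand Spark's HashPartitioner does, that null keys go to partition 0.

```scala
object HashPartitionSketch {
  // x mod n, shifted into [0, n) when the remainder is negative
  def nonNegativeMod(x: Int, mod: Int): Int = {
    val rawMod = x % mod
    rawMod + (if (rawMod < 0) mod else 0)
  }

  // null keys go to partition 0; other keys are placed by their hashCode
  def getPartition(key: Any, numPartitions: Int): Int = key match {
    case null => 0
    case _    => nonNegativeMod(key.hashCode, numPartitions)
  }
}
```

For example, key -7 with 3 partitions gives -7 % 3 = -1, which becomes -1 + 3 = 2. Keys 0, 2, 4, 6 with 2 partitions all land in partition 0, which illustrates the skew drawback.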

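9.2 Range Partitioning

- Purpose: map the keys within a given range to one partition while keeping the amount of data per partition as even as possible. Partitions are ordered relative to each other: every element in one partition is smaller (or larger) than every element in another partition, but the order of elements within a partition is not guaranteed.

- Process

- First, draw a sample from the whole RDD with reservoir sampling, sort the sample, and compute the maximum key of each partition, which forms an Array[KEY] called rangeBounds;

- Then find which range of rangeBounds a key falls into, which gives the key's partition ID in the next RDD. This partitioner requires the RDD's key type to be sortable.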
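The two steps above can be sketched in plain Scala. This is a simplified model, not Spark's RangePartitioner: `RangePartitionSketch` is hypothetical, `reservoirSample` is textbook reservoir sampling (Algorithm R), and `rangeBounds` simply picks evenly spaced values from the sorted sample, whereas Spark's real bound computation also weights the samples. `getPartition` scans the bounds linearly; as we understand it, Spark does the same for small bound arrays and switches to binary search for large ones.

```scala
import scala.collection.mutable.ArrayBuffer
import scala.util.Random

object RangePartitionSketch {
  // Reservoir sampling: a uniform sample of k items from a stream of unknown length
  def reservoirSample[T](input: Iterator[T], k: Int, seed: Long): Vector[T] = {
    val rnd = new Random(seed)
    val reservoir = ArrayBuffer.empty[T]
    var seen = 0L
    input.foreach { item =>
      seen += 1
      if (reservoir.size < k) reservoir += item
      else {
        val j = (rnd.nextDouble() * seen).toLong // uniform in [0, seen)
        if (j < k) reservoir(j.toInt) = item
      }
    }
    reservoir.toVector
  }

  // Simplified rangeBounds: evenly spaced values of the sorted sample (numPartitions - 1 bounds)
  def rangeBounds[K: Ordering](sample: Seq[K], numPartitions: Int): Vector[K] = {
    val sorted = sample.sorted.toVector
    (1 until numPartitions).map(i => sorted(i * sorted.length / numPartitions)).toVector
  }

  // First partition whose upper bound is >= key; keys above all bounds go to the last partition
  def getPartition[K](key: K, bounds: Vector[K])(implicit ord: Ordering[K]): Int = {
    var p = 0
    while (p < bounds.length && ord.gt(key, bounds(p))) p += 1
    p
  }
}
```

With the values 1 to 100 as the sample and 4 partitions, the bounds are 26, 51, 76: keys up to 26 go to partition 0, 27 to 51 to partition 1, and so on, so each partition covers a contiguous, ordered range of keys.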

9.3 Custom Partitioning

- See 4.3.1