A Brief Look at Wide and Narrow Dependencies in Spark

1. What are wide and narrow dependencies in Spark

1.1 What the official source code says

1.1.1 NarrowDependency (narrow dependency)

```scala
/**
 * :: DeveloperApi ::
 * Base class for dependencies where each partition of the child RDD depends on a small number
 * of partitions of the parent RDD. Narrow dependencies allow for pipelined execution.
 */
@DeveloperApi
abstract class NarrowDependency[T](_rdd: RDD[T]) extends Dependency[T] {
  /**
   * Get the parent partitions for a child partition.
   * @param partitionId a partition of the child RDD
   * @return the partitions of the parent RDD that the child partition depends upon
   */
  def getParents(partitionId: Int): Seq[Int]

  override def rdd: RDD[T] = _rdd
}
```

As the source comment says, this is the base class for dependencies in which each partition of the child RDD depends on a small number of partitions of the parent RDD, and narrow dependencies allow the computation to be executed as a pipeline.

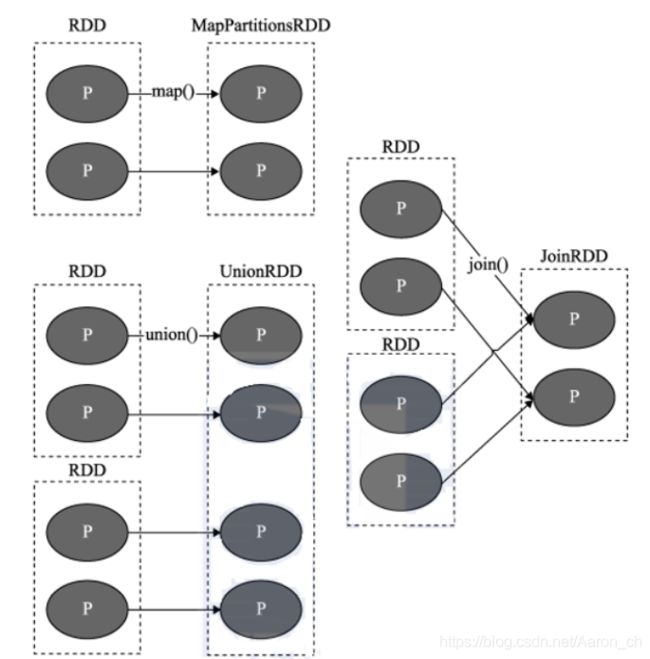

总结来说:父RDD的一个分区只会被子RDD的1个分区所依赖,并且其中是不会产生shuffle过程的。

下图可以帮助理解
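To make `getParents` concrete, here is a minimal, stand-alone Java sketch that does not need Spark. It assumes the semantics of Spark's `OneToOneDependency` (child partition i reads parent partition i, as for map or filter) and `RangeDependency` (used by union); the names `NarrowDependencySketch`, `NarrowDep`, `OneToOne` and `Range` are hypothetical stand-ins, not Spark's API.

```java
import java.util.Collections;
import java.util.List;

// Illustrative sketch, not Spark code: mirrors the getParents contract of NarrowDependency
// using the semantics of Spark's OneToOneDependency and RangeDependency.
public class NarrowDependencySketch {

    // Simplified stand-in for NarrowDependency: child partition id -> parent partition ids
    public interface NarrowDep {
        List<Integer> getParents(int partitionId);
    }

    // Like OneToOneDependency (map, filter, ...): child partition i reads only parent partition i
    public static final class OneToOne implements NarrowDep {
        @Override
        public List<Integer> getParents(int partitionId) {
            return Collections.singletonList(partitionId);
        }
    }

    // Like RangeDependency (used by union): parent partitions [inStart, inStart + length)
    // become child partitions [outStart, outStart + length)
    public static final class Range implements NarrowDep {
        private final int inStart;
        private final int outStart;
        private final int length;

        public Range(int inStart, int outStart, int length) {
            this.inStart = inStart;
            this.outStart = outStart;
            this.length = length;
        }

        @Override
        public List<Integer> getParents(int partitionId) {
            if (partitionId >= outStart && partitionId < outStart + length) {
                return Collections.singletonList(partitionId - outStart + inStart);
            }
            // This child partition does not come from this parent at all
            return Collections.emptyList();
        }
    }

    public static void main(String[] args) {
        NarrowDep mapLike = new OneToOne();
        // union(A, B) where A has 3 partitions and B has 2: B's partitions 0..1 become child 3..4
        NarrowDep unionParentB = new Range(0, 3, 2);
        for (int child = 0; child < 5; child++) {
            System.out.println("child " + child
                    + ": map parent " + mapLike.getParents(child)
                    + ", union parent B " + unionParentB.getParents(child));
        }
    }
}
```

Every child partition lists at most one parent partition, and no parent partition is listed by two different children, which is why such steps can be pipelined without a shuffle.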

1.1.2 ShuffleDependency (wide dependency)

```scala
/**
 * :: DeveloperApi ::
 * Represents a dependency on the output of a shuffle stage. Note that in the case of shuffle,
 * the RDD is transient since we don't need it on the executor side.
 *
 * @param _rdd the parent RDD
 * @param partitioner partitioner used to partition the shuffle output
 * @param serializer [[org.apache.spark.serializer.Serializer Serializer]] to use. If not set
 *                   explicitly then the default serializer, as specified by `spark.serializer`
 *                   config option, will be used.
 * @param keyOrdering key ordering for RDD's shuffles
 * @param aggregator map/reduce-side aggregator for RDD's shuffle
 * @param mapSideCombine whether to perform partial aggregation (also known as map-side combine)
 */
@DeveloperApi
class ShuffleDependency[K: ClassTag, V: ClassTag, C: ClassTag](
    @transient private val _rdd: RDD[_ <: Product2[K, V]],
    val partitioner: Partitioner,
    val serializer: Serializer = SparkEnv.get.serializer,
    val keyOrdering: Option[Ordering[K]] = None,
    val aggregator: Option[Aggregator[K, V, C]] = None,
    val mapSideCombine: Boolean = false)
  extends Dependency[Product2[K, V]] {

  if (mapSideCombine) {
    require(aggregator.isDefined, "Map-side combine without Aggregator specified!")
  }

  override def rdd: RDD[Product2[K, V]] = _rdd.asInstanceOf[RDD[Product2[K, V]]]

  private[spark] val keyClassName: String = reflect.classTag[K].runtimeClass.getName
  private[spark] val valueClassName: String = reflect.classTag[V].runtimeClass.getName
  // Note: It's possible that the combiner class tag is null, if the combineByKey
  // methods in PairRDDFunctions are used instead of combineByKeyWithClassTag.
  private[spark] val combinerClassName: Option[String] =
    Option(reflect.classTag[C]).map(_.runtimeClass.getName)

  val shuffleId: Int = _rdd.context.newShuffleId()

  val shuffleHandle: ShuffleHandle = _rdd.context.env.shuffleManager.registerShuffle(
    shuffleId, _rdd.partitions.length, this)

  _rdd.sparkContext.cleaner.foreach(_.registerShuffleForCleanup(this))
}
```

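The source comment can be read as follows: this class represents a dependency on the output of a shuffle stage. Note that in the shuffle case the parent RDD field (`_rdd`) is marked `@transient`, meaning it is not serialized along with the dependency, because executors do not need it. The class name already says it all: a dependency counts as wide only when a shuffle takes place.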

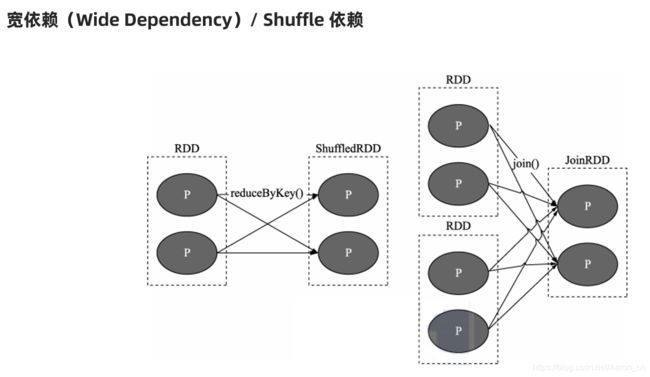

可以总结如下:父RDD的一个分区会被子RDD的多个分区所依赖

下图可以帮助理解
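The "one parent partition, many child partitions" shape comes from how shuffle output is routed. The stand-alone Java sketch below (no Spark needed) routes keys the way Spark's HashPartitioner does (a non-negative `key.hashCode() % numPartitions`) and records which parent partitions each child partition has to read. The class and method names are hypothetical, and Integer keys are used only because their hash code is the value itself, which keeps the result easy to check.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

// Illustrative sketch, not Spark code: shows why a hash shuffle makes every child
// partition depend on many parent partitions.
public class ShuffleDependencySketch {

    // Same rule as HashPartitioner.getPartition: non-negative key.hashCode() modulo numPartitions
    public static int partitionFor(Object key, int numPartitions) {
        int rawMod = key.hashCode() % numPartitions;
        return rawMod < 0 ? rawMod + numPartitions : rawMod;
    }

    // For each child (reduce-side) partition, the set of parent (map-side) partitions
    // that hold at least one key routed to it
    public static List<Set<Integer>> childToParents(List<List<Integer>> parentPartitions, int numChildPartitions) {
        List<Set<Integer>> deps = new ArrayList<>();
        for (int c = 0; c < numChildPartitions; c++) {
            deps.add(new TreeSet<>());
        }
        for (int p = 0; p < parentPartitions.size(); p++) {
            for (Integer key : parentPartitions.get(p)) {
                deps.get(partitionFor(key, numChildPartitions)).add(p);
            }
        }
        return deps;
    }

    public static void main(String[] args) {
        // Two parent partitions holding Integer keys
        List<List<Integer>> parent = Arrays.asList(
                Arrays.asList(0, 1, 2, 3),
                Arrays.asList(4, 5, 6, 7));
        List<Set<Integer>> deps = childToParents(parent, 2);
        for (int c = 0; c < deps.size(); c++) {
            System.out.println("child partition " + c + " reads from parent partitions " + deps.get(c));
        }
    }
}
```

Here both child partitions read from parent partitions [0, 1], so every parent partition feeds every child partition. That many-to-many pattern is what requires a shuffle and, with it, a stage boundary.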

2. Why wide and narrow dependencies matter

2.1 The DAG and Stage concepts

2.1.1 DAG

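Spark's DAG: the flow graph that describes how a Spark job or program executes.

Start of the DAG: the creation of an RDD.

End of the DAG: an action.

Each action triggers a job, so a Spark program generally has as many DAGs as it has actions. How do you tell whether an operator is an action or a transformation? As a rule of thumb, if the API returns an RDD it is a transformation; otherwise it is an action.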

2.1.2 Stage

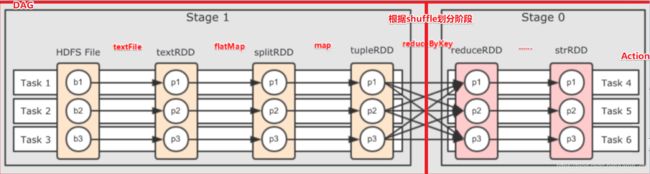

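Stage: a phase of the DAG, delimited by shuffles. A later stage can only run after the earlier stages it depends on have finished, while the tasks within one stage run in parallel without waiting for each other.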

2.2 How wide and narrow dependencies split a job into stages

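As shown in the figure:

Summary:

Narrow dependency: parallelism + fault tolerance (the operators are pipelined inside one stage and run in parallel, partition by partition; if a partition is lost, only the parent partitions it depends on have to be recomputed)

Wide dependency: defines the stage boundaries (the stage after a shuffle has to wait until the stage before the shuffle has finished computing)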
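The stage-cutting rule can be sketched in a few lines of Java. This is a simplified, stand-alone model, assuming the same idea Spark's DAGScheduler follows: walk back from the final RDD, keep narrow parents in the current stage, and start a new parent stage at every shuffle dependency. `StageSplitSketch` and its `Node` type are hypothetical, not Spark classes.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Illustrative sketch, not Spark code: cuts a lineage graph into stages at shuffle dependencies.
public class StageSplitSketch {

    // Hypothetical RDD node: parents are split by the kind of dependency on them
    public static final class Node {
        private final String name;
        private final List<Node> narrowParents = new ArrayList<>();
        private final List<Node> shuffleParents = new ArrayList<>();

        public Node(String name) {
            this.name = name;
        }

        public Node narrow(Node parent) {
            narrowParents.add(parent);
            return this;
        }

        public Node shuffle(Node parent) {
            shuffleParents.add(parent);
            return this;
        }
    }

    // Returns the stages in a valid execution order (parent stages first), each as a list of RDD names
    public static List<List<String>> splitStages(Node finalRdd) {
        Map<Node, List<String>> stages = new LinkedHashMap<>();
        buildStage(finalRdd, stages);
        return new ArrayList<>(stages.values());
    }

    private static void buildStage(Node last, Map<Node, List<String>> stages) {
        if (stages.containsKey(last)) {
            return; // stage already built through another path
        }
        List<String> members = new ArrayList<>();
        List<Node> shuffleInputs = new ArrayList<>();
        collect(last, members, shuffleInputs, new HashSet<>());
        // A shuffle input must be fully computed first, so its stage is registered before this one
        for (Node input : shuffleInputs) {
            buildStage(input, stages);
        }
        stages.put(last, members);
    }

    // Narrow parents are pulled into the current stage (pipelined); shuffle parents are only recorded
    private static void collect(Node node, List<String> members, List<Node> shuffleInputs, Set<Node> seen) {
        if (!seen.add(node)) {
            return;
        }
        for (Node parent : node.narrowParents) {
            collect(parent, members, shuffleInputs, seen);
        }
        shuffleInputs.addAll(node.shuffleParents);
        members.add(node.name); // added after its narrow parents, i.e. in pipeline order
    }

    public static void main(String[] args) {
        Node textFile = new Node("textFile");
        Node flatMap = new Node("flatMap").narrow(textFile);
        Node map = new Node("map").narrow(flatMap);
        Node reduceByKey = new Node("reduceByKey").shuffle(map);
        System.out.println(splitStages(reduceByKey));
    }
}
```

For this word-count style lineage (names illustrative), `main` prints `[[textFile, flatMap, map], [reduceByKey]]`: the three narrow steps are pipelined into one stage, and the stage containing reduceByKey waits for it.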

3. Which operators produce wide dependencies and which produce narrow ones

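map, flatMap, filter and the like are normally narrow dependencies and do not cause a shuffle.

reduceByKey, groupByKey and the like are normally wide dependencies and do cause a shuffle.

In reality, though, wide and narrow dependencies cannot be told apart by operator name alone; they have to be identified from the definition. A join, for example, is sometimes a narrow dependency and sometimes a wide one, depending on whether its inputs are already partitioned with the same partitioner as the join output. The reliable way to tell is to look at how the stages are actually split at execution time.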
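What decides the outcome for join is the partitioner of each input. In Spark, join is built on CoGroupedRDD, which gives each parent a one-to-one (narrow) dependency when that parent's partitioner equals the join's partitioner, and a ShuffleDependency otherwise. The stand-alone Java sketch below models only that decision. `HashPartitionerSpec` is a hypothetical stand-in for HashPartitioner (whose equality likewise compares only the number of partitions), and the sketch ignores how Spark picks the default partitioner for a join.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Optional;

// Illustrative sketch, not Spark code: models only the per-parent decision CoGroupedRDD makes.
public class JoinDependencySketch {

    // Hypothetical stand-in for HashPartitioner: equal iff the partition counts match
    public static final class HashPartitionerSpec {
        private final int numPartitions;

        public HashPartitionerSpec(int numPartitions) {
            this.numPartitions = numPartitions;
        }

        @Override
        public boolean equals(Object other) {
            return other instanceof HashPartitionerSpec
                    && ((HashPartitionerSpec) other).numPartitions == numPartitions;
        }

        @Override
        public int hashCode() {
            return numPartitions;
        }
    }

    public enum DepType { NARROW_ONE_TO_ONE, SHUFFLE }

    // One result per join input: narrow only if the input is already partitioned like the join output
    public static List<DepType> joinDependencies(HashPartitionerSpec joinPartitioner,
                                                 List<Optional<HashPartitionerSpec>> parentPartitioners) {
        List<DepType> deps = new ArrayList<>();
        for (Optional<HashPartitionerSpec> parent : parentPartitioners) {
            boolean coPartitioned = parent.isPresent() && parent.get().equals(joinPartitioner);
            deps.add(coPartitioned ? DepType.NARROW_ONE_TO_ONE : DepType.SHUFFLE);
        }
        return deps;
    }

    public static void main(String[] args) {
        HashPartitionerSpec joinPartitioner = new HashPartitionerSpec(2);

        // Like section 4.1: both inputs went through partitionBy(new HashPartitioner(2))
        List<Optional<HashPartitionerSpec>> partitioned =
                Arrays.asList(Optional.of(new HashPartitionerSpec(2)), Optional.of(new HashPartitionerSpec(2)));
        System.out.println(joinDependencies(joinPartitioner, partitioned));

        // Like section 4.2: mapToPair output carries no partitioner, so both inputs are shuffled
        // (the join partitioner is only a placeholder here; the result does not depend on it)
        List<Optional<HashPartitionerSpec>> unpartitioned = Arrays.asList(Optional.empty(), Optional.empty());
        System.out.println(joinDependencies(joinPartitioner, unpartitioned));
    }
}
```

The first call prints [NARROW_ONE_TO_ONE, NARROW_ONE_TO_ONE] and the second prints [SHUFFLE, SHUFFLE]. A mixed case (one co-partitioned input, one without a partitioner) yields one narrow and one shuffle dependency.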

4. Reading the Spark UI: when is join a narrow dependency and when is it a wide one

4.1 join as a narrow dependency

When the join is placed in the same stage as the parent RDDs that precede it, the join can be considered a narrow dependency.

SparkConf conf = new SparkConf().setAppName("Java-Test-WordCount").setMaster("local[*]");

JavaSparkContext jsc = new JavaSparkContext(conf);

List> tuple2List1 = Arrays.asList(new Tuple2<>("Alice", 15), new Tuple2<>("Bob", 18), new Tuple2<>("Thomas", 20), new Tuple2<>("Catalina", 25));

List> tuple3List = Arrays.asList(new Tuple3<>("Alice", "Female", "NanJ"), new Tuple3<>("Thomas", "Male", "ShangH"), new Tuple3<>("Tom", "Male", "BeiJ"));

//通过parallelize构建第一个RDD

JavaRDD> javaRDD1 = jsc.parallelize(tuple2List1);

//通过parallelize构建第二个RDD

JavaRDD> javaRDD2 = jsc.parallelize(tuple3List);

//通过mapToPair根据第一个RDD构建第三个RDD

JavaPairRDD javaRDD3 = javaRDD1.mapToPair(new PairFunction, String, Integer>() {

@Override

public Tuple2 call(Tuple2 tuple2) {

return tuple2;

}

});

//通过partitionBy根据第三个RDD构建第五个RDD

JavaPairRDD javaRDD31 = javaRDD3.partitionBy(new HashPartitioner(2));

//通过mapToPair根据第二个RDD构建第四个RDD

JavaPairRDD> javaRDD4 = javaRDD2.mapToPair(new PairFunction, String, Tuple2>() {

@Override

public Tuple2> call(Tuple3 tuple3) {

return new Tuple2<>(tuple3._1(), new Tuple2<>(tuple3._2(), tuple3._3()));

}

});

//通过partitionBy根据第四个RDD构建第六个RDD

JavaPairRDD> javaRDD41 = javaRDD4.partitionBy(new HashPartitioner(2));

//通过join 根据第五和第六个RDD构建出第七个RDD

JavaPairRDD>> javaRDD6 = javaRDD31.join(javaRDD41);

javaRDD6.foreach(new VoidFunction>>>() {

@Override

public void call(Tuple2>> stringTuple2Tuple2) throws Exception {

System.out.print(stringTuple2Tuple2);

}

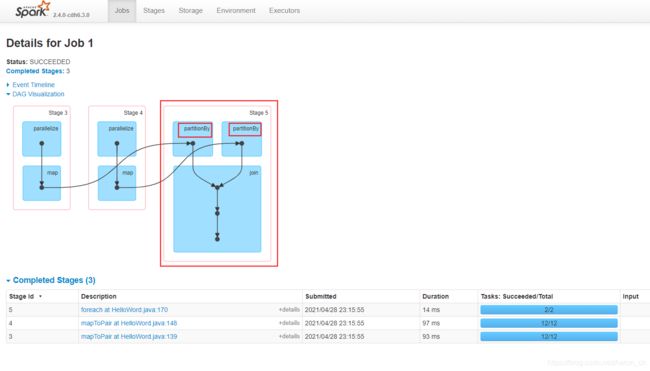

}); 查看Spark Web UI

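Note: The UI above shows that in Stage 5, partitionBy and join are in the same stage, and join is the operator that produces the child RDD. We can therefore conclude that, in this stage, join is a narrow dependency. This works because partitionBy has already hash-partitioned both inputs with HashPartitioner(2), so each partition of the join only needs the matching partition of each parent and no further shuffle is required.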

4.2 join as a wide dependency

SparkConf conf = new SparkConf().setAppName("Java-Test-WordCount").setMaster("local[*]");

JavaSparkContext jsc = new JavaSparkContext(conf);

List> tuple2List1 = Arrays.asList(new Tuple2<>("Alice", 15), new Tuple2<>("Bob", 18), new Tuple2<>("Thomas", 20), new Tuple2<>("Catalina", 25));

List> tuple3List = Arrays.asList(new Tuple3<>("Alice", "Female", "NanJ"), new Tuple3<>("Thomas", "Male", "ShangH"), new Tuple3<>("Tom", "Male", "BeiJ"));

//通过parallelize构建第一个RDD

JavaRDD> javaRDD1 = jsc.parallelize(tuple2List1);

//通过parallelize构建第二个RDD

JavaRDD> javaRDD2 = jsc.parallelize(tuple3List);

//通过mapToPair根据第一个RDD构建第三个RDD

JavaPairRDD javaRDD3 = javaRDD1.mapToPair(new PairFunction, String, Integer>() {

@Override

public Tuple2 call(Tuple2 tuple2) {

return tuple2;

}

});

//通过mapToPair根据第二个RDD构建第四个RDD

JavaPairRDD> javaRDD4 = javaRDD2.mapToPair(new PairFunction, String, Tuple2>() {

@Override

public Tuple2> call(Tuple3 tuple3) {

return new Tuple2<>(tuple3._1(), new Tuple2<>(tuple3._2(), tuple3._3()));

}

});

//通过join 根据第三个RDD和第四个RDD构建得出第五个RDD

JavaPairRDD>> javaRDD5 = javaRDD3.join(javaRDD4);

javaRDD5.foreach(new VoidFunction>>>() {

@Override

public void call(Tuple2>> stringTuple2Tuple2) throws Exception {

System.out.print(stringTuple2Tuple2);

}

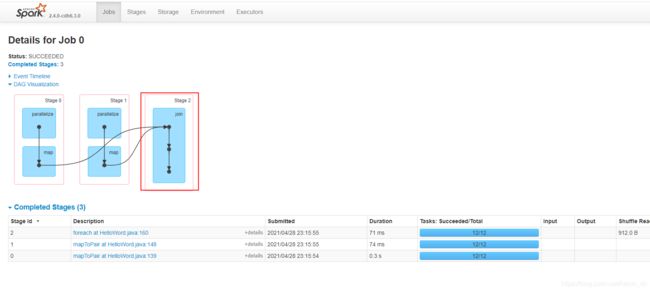

}); 查看Spark Web UI

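Note: The UI above shows that Stage 2 contains only the join operator, so here join is a wide dependency. Neither javaRDD3 nor javaRDD4 has a partitioner (mapToPair does not set one), so join has to shuffle both inputs, and that shuffle boundary starts a new stage.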