YOLOv4算法详解

YOLOv4: Optimal Speed and Accuracy of Object Detection-论文链接-代码链接

目录

-

- 1、需求解读

- 2、YOLOv4算法简介

- 3、YOLOv4算法详解

-

- 3.1 YOLOv4网络架构

- 3.2 YOLOv4实现细节详解

-

- 3.2.1 YOLOv4基础组件

- 3.2.2 输入端细节详解

- 3.2.3 基准网络细节详解

- 3.2.3 Neck网络细节详解

- 3.2.4 Head网络细节详解

- 4、YOLOv4代码实现

- 5、YOLOv4效果展示与分析

-

- 5.1、YOLOv4主观效果展示与分析

- 5.2、YOLOv4客观效果展示与分析

- 6、总结与分析

- 参考资料

- 注意事项

1、需求解读

作为一种经典的单阶段目标检测框架,YOLO系列的目标检测算法得到了学术界与工业界们的广泛关注。由于YOLO系列属于单阶段目标检测,因而具有较快的推理速度,能够更好的满足现实场景的需求。随着YOLOv3算法的出现,使得YOLO系列的检测算达到了高潮。YOLOv4则是在YOLOv3算法的基础上增加了很多实用的技巧,使得它的速度与精度都得到了极大的提升,本文将对YOLOv4算法的细节进行分析。

2、YOLOv4算法简介

YOLOv4是一种单阶段目标检测算法,该算法在YOLOv3的基础上添加了一些新的改进思路,使得其速度与精度都得到了极大的性能提升。主要的改进思路如下所示:

- 输入端:在模型训练阶段,做了一些改进操作,主要包括Mosaic数据增强、cmBN、SAT自对抗训练;

- BackBone基准网络:融合其它检测算法中的一些新思路,主要包括:CSPDarknet53、Mish激活函数、Dropblock;

- Neck中间层:目标检测网络在BackBone与最后的Head输出层之间往往会插入一些层,Yolov4中添加了SPP模块、FPN+PAN结构;

- Head输出层:输出层的锚框机制与YOLOv3相同,主要改进的是训练时的损失函数CIOU_Loss,以及预测框筛选的DIOU_nms。

3、YOLOv4算法详解

3.1 YOLOv4网络架构

上图展示了YOLOv4目标检测算法的整体框图。对于一个目标检测算法而言,我们通常可以将其划分为4个通用的模块,具体包括:输入端、基准网络、Neck网络与Head输出端,对应于上图中的4个红色模块。

- 输入端-输入端表示输入的图片。该网络的输入图像大小为608*608,该阶段通常包含一个图像预处理阶段,即将输入图像缩放到网络的输入大小,并进行归一化等操作。在网络训练阶段,YOLOv4使用Mosaic数据增强操作提升了模型的训练速度和网络的精度;利用cmBN及SAT自对抗训练来提升网络的泛化性能;

- 基准网络-基准网络通常是一些性能优异的分类器种的网络,该模块用来提取一些通用的特征表示。YOLOv4中使用了CSPDarknet53作为基准网络;利用Mish激活函数代替原始的RELU激活函数;并在该模块中增加了Dropblock块来进一步提升模型的泛化能力 。

- Neck网络-Neck网络通常位于基准网络和头网络的中间位置,利用它可以进一步提升特征的多样性及鲁棒性。YOLOv4利用SPP模块来融合不同尺度大小的特征图;利用自顶向下的FPN特征金字塔于自底向上的PAN特征金字塔来提升网络的特征提取能力。

- Head输出端-Head用来完成目标检测结果的输出。针对不同的检测算法,输出端的分支个数不尽相同,通常包含一个分类分支和一个回归分支。YOLOv4利用CIOU_Loss来代替Smooth L1 Loss函数,并利用DIOU_nms来代替传统的NMS操作,从而进一步提升算法的检测精度。

3.2 YOLOv4实现细节详解

3.2.1 YOLOv4基础组件

- CBM-CBM是Yolov4网络结构中的最小组件,由Conv+BN+Mish激活函数组成,如上图中模块1所示。

- CBL-CBL模块由Conv+BN+Leaky_relu激活函数组成,如上图中的模块2所示。

- Res unit-借鉴ResNet网络中的残差结构,用来构建深层网络,CBM是残差模块中的子模块,如上图中的模块3所示。

- CSPX-借鉴CSPNet网络结构,由卷积层和X个Res unint模块Concate组成而成,如上图中的模块4所示。

- SPP-采用1×1、5×5、9×9和13×13的最大池化方式,进行多尺度特征融合,如上图中的模块5所示。

3.2.2 输入端细节详解

- Mosaic数据增强-YOLOv4中在训练模型阶段使用了Mosaic数据增强方法,该算法是在CutMix数据增强方法的基础上改进而来的。CutMix仅仅利用了两张图片进行拼接,而Mosaic数据增强方法则采用了4张图片,并且按照随机缩放、随机裁剪和随机排布的方式进行拼接而成,具体的效果如下图所示。这种增强方法可以将几张图片组合成一张,这样不仅可以丰富数据集的同时极大的提升网络的训练速度,而且可以降低模型的内存需求。

3.2.3 基准网络细节详解

- CSPDarknet53-它是在YOLOv3主干网络Darknet53的基础上,借鉴了2019年发表的CSPNet算法的经验,所形成的一种Backbone结构,其中包含了5个CSP模块。每个CSP模型的实现细节如3.2.1所述。CSP模块可以先将基础层的特征映射划分为两部分,然后通过跨阶段层次结构将它们合并起来,这样不仅减少了计算量,而且可以保证模型的准确率。它的优点包括:(1)增强CNN网络的学习能力,轻量化模型的同时保持模型的精度;(2)降低整个模型的计算瓶颈;(3)降低算法的内存成本。有关CSP模块的更多细节请看该论文。

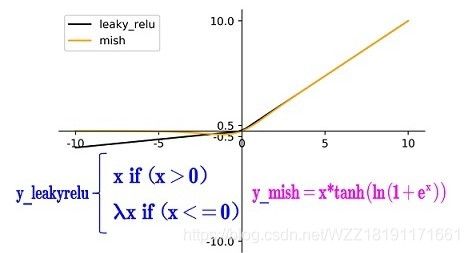

- Mish激活函数-该激活函数是在2019年提出的,该函数是在Leaky_relu算法的基础上改进而来的,具体的比较请看下图。当x>0时,Leaky_relu与Mish激活函数基本相同;当x<0时,Mish函数基本为0,而Leaky_relu函数为 λ x \lambda x λx。YOLOv4的Backbone中均使用了Mish激活函数,而后面的Neck网络中则使用了leaky_relu激活函数。总而言之,Mish函数更加平滑一些,可以进一步提升模型的精度。更多的细节请看该论文。

- Dropblock-Dropblock是一种解决模型过拟合的正则化方法,它的作用与Dropout基本相同。Dropout的主要思路是随机的使网络中的一些神经元失活,从而形成一个新的网络。如下图所示,最左边表示原始的输入图片,中间表示经过Dropout操作之后的结果,它使得图像中的一些位置随机失活,Dropblock的作者认为:由于卷积层通常是三层结构,即卷积+激活+池化层,池化层本身就是对相邻单元起作用,因而卷积层对于这种随机丢弃并不敏感。除此之外,即使是随机丢弃,卷积层仍然可以从相邻的激活单元学习到相同的信息。因此,在全连接层上效果很好的Dropout在卷积层上效果并不好。最右边表示经过Dropblock操作之后的结果,我们可以发现该操作直接对整个局部区域进行失活(连续的几个位置)。

Dropblock是在Cutout数据增强方式的基础上改进而来的,Cutout的主要思路是将输入图像的部分区域清零,而Dropblock的创新点则是将Cutout应用到每一个特征图上面。而且并不是用固定的归零比率,而是在训练时以一个小的比率开始,随着训练过程线性的增加这个比率。与Cutout相比,Dropblck主要具有以下的优点:(1)实验效果表明Dropblock的效果优于Cutout;(2)Cutout只能应用到输入层,而Dropblock则是将Cutout应用到网络中的每一个特征图上面;(3)Dropblock可以定制各种组合,在训练的不同阶段可以灵活的修改删减的概率,不管是从空间层面还是从时间层面来讲,Dropblock更优一些。

3.2.3 Neck网络细节详解

为了获得更鲁棒的特征表示,通常会在基准网络和输出层之间插入一些层,YOLOv4的主要添加了SPP模块与FPN+PAN2种方式。

-

SPP模块-SPP模块通过融合不同大小的最大池化层来获得鲁棒的特征表示,YOLOv4中的k={1*1,5*5,9*9,13*13}包含这4种形式。这里的最大池化层采用padding操作,移动步长为1,比如输入特征图的大小为13x13,使用的池化核大小为5x5,padding=2,因此池化后的特征图大小仍然是13×13。YOLOv4论文表明:(1)与单纯的使用k*k最大池化的方式相比,采用SPP模块的方式能够更有效的增加主干特征的接收范围,显著的分离了最重要的上下文特征。(2)在COCO目标检测任务中,当输入图片的大小为608*608时,只需要额外花费0.5%的计算代价就可以将AP50提升2.7%,因此YOLOv4算法中也采用了SPP模块。

-

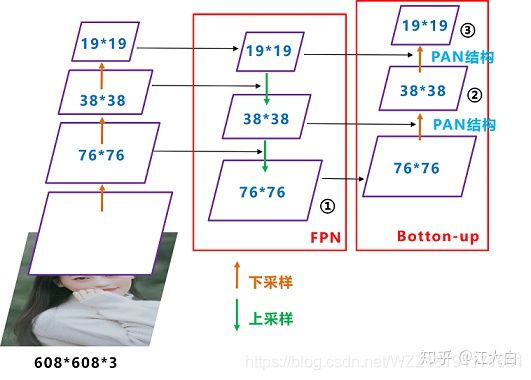

FPN+PAN-所谓的FPN,即特征金字塔网络,通过在特征图上面构建金字塔,可以更好的解决目标检测中尺度问题。PAN则是借鉴了图像分割领域PANet算法中的创新点,它是一种自底向上的结构,它在FPN的基础上增加了两个PAN结构,如下图中的2和3所示。(1)整个网络的输入图像大小为608*608;然后经过CSP块之后生成一个76*76大小的特征映射,经过下采样操作之后生成38*38的特征映射,经过下采样操作之后生成19*19的特征映射;(2)接着将其传入FPN结构中,依次对19*19、38*38、76*76执行融合操作,即先对比较小的特征映射层执行上采样操作,将其调整成相同大小,然后将两个同等大小的特征映射叠加起来。通过FPN操作可以将19*19大小的特征映射调整为76*76大小,这样不仅提升了特征映射的大小,可以更好的解决检测中尺度 问题,而且增加了网络的深度,提升了网络的鲁棒性。(3)接着将其传入PAN结构中,PANet网络的PAN结构是将两个相同大小的特征映射执行按位加操作,YOLOv4中使用Concat操作来代替它。经过两个PAN结构,我们将76*76大小的特征映射重新调整为19*19大小,这样可以在一定程度上提升该算法的目标定位能力。FPN层自顶向下可以捕获强语义特征,而PAF则通过自底向上传达强定位特征,通过组合这两个模块,可以很好的完成目标定位的功能。

3.2.4 Head网络细节详解

- CIOU_loss-目标检测任务的损失函数一般由分类损失函数和回归损失函数两部分构成,回归损失函数的发展过程主要包括:最原始的Smooth L1 Loss函数、2016年提出的IoU Loss、2019年提出的GIoU Loss、2020年提出的DIoU Loss和最新的CIoU Loss函数。

1、IoU Loss-所谓的IoU Loss,即预测框与GT框之间的交集/预测框与GT框之间的并集。这种损失会存在一些问题,具体的问题如下图所示,(1)如状态1所示,当预测框和GT框不相交时,即IOU=0,此时无法反映两个框之间的距离,此时该 损失函数不可导,即IOU_Loss无法优化两个框不相交的情况。(2)如状态2与状态3所示,当两个预测框大小相同时,那么这两个IOU也相同,IOU_Loss无法区分两者相交这种情况。

2、GIOU_Loss-为了解决以上的问题,GIOU损失应运而生。GIOU_Loss中增加了相交尺度的衡量方式,缓解了单纯IOU_Loss时存在的一些问题。

但是这种方法并不能完全解决这种问题,仍然存在着其它的问题。具体的问题如下所示,状态1、2、3都是预测框在GT框内部且预测框大小一致的情况,这时预测框和GT框的差集都是相同的,因此这三种状态的GIOU值也都是相同的,这时GIOU退化成了IOU,无法区分相对位置关系。

3、DIOU_Loss-针对IOU和GIOU损失所存在的问题,DIOU为了解决如何最小化预测框和GT框之间的归一化距离这个问题,DIOU_Loss考虑了预测框与GT框的重叠面积和中心点距离,当GT框包裹预测框的时候,直接度量2个框的距离,因此DIOU_Loss的收敛速度更快一些。

3、DIOU_Loss-针对IOU和GIOU损失所存在的问题,DIOU为了解决如何最小化预测框和GT框之间的归一化距离这个问题,DIOU_Loss考虑了预测框与GT框的重叠面积和中心点距离,当GT框包裹预测框的时候,直接度量2个框的距离,因此DIOU_Loss的收敛速度更快一些。

如下图所示,当GT框包裹预测框时,此时预测框的中心点的位置都是一样的,因此按照DIOU_Loss的计算公式,三者的值都是相同的。为了解决这个问题,CIOU_Loss应运而生。

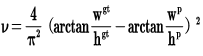

4、CIOU_Loss-CIOU_Loss在DIOU_Loss的基础上增加了一个影响因子,将预测框和GT框的长宽比也考虑了进来。具体的计算方法如下式所示,即CIOU_Loss将GT框的重叠面积、中心点距离和长宽比全都考虑进来了。

总而言之,IOU_Loss主要考虑了检测框和GT框之间的重叠面积;GIOU_Loss在IOU的基础上,解决边界框不重合时出现的问题;DIOU_Loss在IOU和GIOU的基础上,同时考虑了边界框中心点距离信息;CIOU_Loss在DIOU的基础上,又考虑了边界框宽高比的尺度信息。 - DIOU_NMS-如下图所示,对于重叠的摩托车检测任务而言,传统的NMS操作会遗漏掉一些中间的摩托车;由于DIOU_NMS考虑到边界框中心点的位置信息,因而得到了更准确的检测结果,适合处理密集场景下的目标检测问题。

4、YOLOv4代码实现

# 导入对应的python三方包

import torch

from torch import nn

import torch.nn.functional as F

from tool.torch_utils import *

from tool.yolo_layer import YoloLayer

# Mish激活函数类

class Mish(torch.nn.Module):

def __init__(self):

super().__init__()

def forward(self, x):

x = x * (torch.tanh(torch.nn.functional.softplus(x)))

return x

# 上采样操作类

class Upsample(nn.Module):

def __init__(self):

super(Upsample, self).__init__()

def forward(self, x, target_size, inference=False):

assert (x.data.dim() == 4)

# _, _, tH, tW = target_size

if inference:

#B = x.data.size(0)

#C = x.data.size(1)

#H = x.data.size(2)

#W = x.data.size(3)

return x.view(x.size(0), x.size(1), x.size(2), 1, x.size(3), 1).\

expand(x.size(0), x.size(1), x.size(2), target_size[2] // x.size(2), x.size(3), target_size[3] // x.size(3)).\

contiguous().view(x.size(0), x.size(1), target_size[2], target_size[3])

else:

return F.interpolate(x, size=(target_size[2], target_size[3]), mode='nearest')

# Conv+BN+Activation模块

class Conv_Bn_Activation(nn.Module):

def __init__(self, in_channels, out_channels, kernel_size, stride, activation, bn=True, bias=False):

super().__init__()

pad = (kernel_size - 1) // 2

self.conv = nn.ModuleList()

if bias:

self.conv.append(nn.Conv2d(in_channels, out_channels, kernel_size, stride, pad))

else:

self.conv.append(nn.Conv2d(in_channels, out_channels, kernel_size, stride, pad, bias=False))

if bn:

self.conv.append(nn.BatchNorm2d(out_channels))

if activation == "mish":

self.conv.append(Mish())

elif activation == "relu":

self.conv.append(nn.ReLU(inplace=True))

elif activation == "leaky":

self.conv.append(nn.LeakyReLU(0.1, inplace=True))

elif activation == "linear":

pass

else:

print("activate error !!! {} {} {}".format(sys._getframe().f_code.co_filename,

sys._getframe().f_code.co_name, sys._getframe().f_lineno))

def forward(self, x):

for l in self.conv:

x = l(x)

return x

# Res残差块类

class ResBlock(nn.Module):

"""

Sequential residual blocks each of which consists of \

two convolution layers.

Args:

ch (int): number of input and output channels.

nblocks (int): number of residual blocks.

shortcut (bool): if True, residual tensor addition is enabled.

"""

def __init__(self, ch, nblocks=1, shortcut=True):

super().__init__()

self.shortcut = shortcut

self.module_list = nn.ModuleList()

for i in range(nblocks):

resblock_one = nn.ModuleList()

resblock_one.append(Conv_Bn_Activation(ch, ch, 1, 1, 'mish'))

resblock_one.append(Conv_Bn_Activation(ch, ch, 3, 1, 'mish'))

self.module_list.append(resblock_one)

def forward(self, x):

for module in self.module_list:

h = x

for res in module:

h = res(h)

x = x + h if self.shortcut else h

return x

# 下采样方法1

class DownSample1(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv_Bn_Activation(3, 32, 3, 1, 'mish')

self.conv2 = Conv_Bn_Activation(32, 64, 3, 2, 'mish')

self.conv3 = Conv_Bn_Activation(64, 64, 1, 1, 'mish')

# [route]

# layers = -2

self.conv4 = Conv_Bn_Activation(64, 64, 1, 1, 'mish')

self.conv5 = Conv_Bn_Activation(64, 32, 1, 1, 'mish')

self.conv6 = Conv_Bn_Activation(32, 64, 3, 1, 'mish')

# [shortcut]

# from=-3

# activation = linear

self.conv7 = Conv_Bn_Activation(64, 64, 1, 1, 'mish')

# [route]

# layers = -1, -7

self.conv8 = Conv_Bn_Activation(128, 64, 1, 1, 'mish')

def forward(self, input):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x2)

# route -2

x4 = self.conv4(x2)

x5 = self.conv5(x4)

x6 = self.conv6(x5)

# shortcut -3

x6 = x6 + x4

x7 = self.conv7(x6)

# [route]

# layers = -1, -7

x7 = torch.cat([x7, x3], dim=1)

x8 = self.conv8(x7)

return x8

# 下采样方法2

class DownSample2(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv_Bn_Activation(64, 128, 3, 2, 'mish')

self.conv2 = Conv_Bn_Activation(128, 64, 1, 1, 'mish')

# r -2

self.conv3 = Conv_Bn_Activation(128, 64, 1, 1, 'mish')

self.resblock = ResBlock(ch=64, nblocks=2)

# s -3

self.conv4 = Conv_Bn_Activation(64, 64, 1, 1, 'mish')

# r -1 -10

self.conv5 = Conv_Bn_Activation(128, 128, 1, 1, 'mish')

def forward(self, input):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x1)

r = self.resblock(x3)

x4 = self.conv4(r)

x4 = torch.cat([x4, x2], dim=1)

x5 = self.conv5(x4)

return x5

# 下采样方法3

class DownSample3(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv_Bn_Activation(128, 256, 3, 2, 'mish')

self.conv2 = Conv_Bn_Activation(256, 128, 1, 1, 'mish')

self.conv3 = Conv_Bn_Activation(256, 128, 1, 1, 'mish')

self.resblock = ResBlock(ch=128, nblocks=8)

self.conv4 = Conv_Bn_Activation(128, 128, 1, 1, 'mish')

self.conv5 = Conv_Bn_Activation(256, 256, 1, 1, 'mish')

def forward(self, input):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x1)

r = self.resblock(x3)

x4 = self.conv4(r)

x4 = torch.cat([x4, x2], dim=1)

x5 = self.conv5(x4)

return x5

# 下采样方法4

class DownSample4(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv_Bn_Activation(256, 512, 3, 2, 'mish')

self.conv2 = Conv_Bn_Activation(512, 256, 1, 1, 'mish')

self.conv3 = Conv_Bn_Activation(512, 256, 1, 1, 'mish')

self.resblock = ResBlock(ch=256, nblocks=8)

self.conv4 = Conv_Bn_Activation(256, 256, 1, 1, 'mish')

self.conv5 = Conv_Bn_Activation(512, 512, 1, 1, 'mish')

def forward(self, input):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x1)

r = self.resblock(x3)

x4 = self.conv4(r)

x4 = torch.cat([x4, x2], dim=1)

x5 = self.conv5(x4)

return x5

# 下采样方法5

class DownSample5(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv_Bn_Activation(512, 1024, 3, 2, 'mish')

self.conv2 = Conv_Bn_Activation(1024, 512, 1, 1, 'mish')

self.conv3 = Conv_Bn_Activation(1024, 512, 1, 1, 'mish')

self.resblock = ResBlock(ch=512, nblocks=4)

self.conv4 = Conv_Bn_Activation(512, 512, 1, 1, 'mish')

self.conv5 = Conv_Bn_Activation(1024, 1024, 1, 1, 'mish')

def forward(self, input):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x1)

r = self.resblock(x3)

x4 = self.conv4(r)

x4 = torch.cat([x4, x2], dim=1)

x5 = self.conv5(x4)

return x5

# Neck网络类

class Neck(nn.Module):

def __init__(self, inference=False):

super().__init__()

self.inference = inference

self.conv1 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

self.conv2 = Conv_Bn_Activation(512, 1024, 3, 1, 'leaky')

self.conv3 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

# SPP

self.maxpool1 = nn.MaxPool2d(kernel_size=5, stride=1, padding=5 // 2)

self.maxpool2 = nn.MaxPool2d(kernel_size=9, stride=1, padding=9 // 2)

self.maxpool3 = nn.MaxPool2d(kernel_size=13, stride=1, padding=13 // 2)

# R -1 -3 -5 -6

# SPP

self.conv4 = Conv_Bn_Activation(2048, 512, 1, 1, 'leaky')

self.conv5 = Conv_Bn_Activation(512, 1024, 3, 1, 'leaky')

self.conv6 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

self.conv7 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

# UP

self.upsample1 = Upsample()

# R 85

self.conv8 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

# R -1 -3

self.conv9 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv10 = Conv_Bn_Activation(256, 512, 3, 1, 'leaky')

self.conv11 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv12 = Conv_Bn_Activation(256, 512, 3, 1, 'leaky')

self.conv13 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv14 = Conv_Bn_Activation(256, 128, 1, 1, 'leaky')

# UP

self.upsample2 = Upsample()

# R 54

self.conv15 = Conv_Bn_Activation(256, 128, 1, 1, 'leaky')

# R -1 -3

self.conv16 = Conv_Bn_Activation(256, 128, 1, 1, 'leaky')

self.conv17 = Conv_Bn_Activation(128, 256, 3, 1, 'leaky')

self.conv18 = Conv_Bn_Activation(256, 128, 1, 1, 'leaky')

self.conv19 = Conv_Bn_Activation(128, 256, 3, 1, 'leaky')

self.conv20 = Conv_Bn_Activation(256, 128, 1, 1, 'leaky')

def forward(self, input, downsample4, downsample3, inference=False):

x1 = self.conv1(input)

x2 = self.conv2(x1)

x3 = self.conv3(x2)

# SPP

m1 = self.maxpool1(x3)

m2 = self.maxpool2(x3)

m3 = self.maxpool3(x3)

spp = torch.cat([m3, m2, m1, x3], dim=1)

# SPP end

x4 = self.conv4(spp)

x5 = self.conv5(x4)

x6 = self.conv6(x5)

x7 = self.conv7(x6)

# UP

up = self.upsample1(x7, downsample4.size(), self.inference)

# R 85

x8 = self.conv8(downsample4)

# R -1 -3

x8 = torch.cat([x8, up], dim=1)

x9 = self.conv9(x8)

x10 = self.conv10(x9)

x11 = self.conv11(x10)

x12 = self.conv12(x11)

x13 = self.conv13(x12)

x14 = self.conv14(x13)

# UP

up = self.upsample2(x14, downsample3.size(), self.inference)

# R 54

x15 = self.conv15(downsample3)

# R -1 -3

x15 = torch.cat([x15, up], dim=1)

x16 = self.conv16(x15)

x17 = self.conv17(x16)

x18 = self.conv18(x17)

x19 = self.conv19(x18)

x20 = self.conv20(x19)

return x20, x13, x6

# Head网络类

class Yolov4Head(nn.Module):

def __init__(self, output_ch, n_classes, inference=False):

super().__init__()

self.inference = inference

self.conv1 = Conv_Bn_Activation(128, 256, 3, 1, 'leaky')

self.conv2 = Conv_Bn_Activation(256, output_ch, 1, 1, 'linear', bn=False, bias=True)

self.yolo1 = YoloLayer(

anchor_mask=[0, 1, 2], num_classes=n_classes,

anchors=[12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401],

num_anchors=9, stride=8)

# R -4

self.conv3 = Conv_Bn_Activation(128, 256, 3, 2, 'leaky')

# R -1 -16

self.conv4 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv5 = Conv_Bn_Activation(256, 512, 3, 1, 'leaky')

self.conv6 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv7 = Conv_Bn_Activation(256, 512, 3, 1, 'leaky')

self.conv8 = Conv_Bn_Activation(512, 256, 1, 1, 'leaky')

self.conv9 = Conv_Bn_Activation(256, 512, 3, 1, 'leaky')

self.conv10 = Conv_Bn_Activation(512, output_ch, 1, 1, 'linear', bn=False, bias=True)

self.yolo2 = YoloLayer(

anchor_mask=[3, 4, 5], num_classes=n_classes,

anchors=[12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401],

num_anchors=9, stride=16)

# R -4

self.conv11 = Conv_Bn_Activation(256, 512, 3, 2, 'leaky')

# R -1 -37

self.conv12 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

self.conv13 = Conv_Bn_Activation(512, 1024, 3, 1, 'leaky')

self.conv14 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

self.conv15 = Conv_Bn_Activation(512, 1024, 3, 1, 'leaky')

self.conv16 = Conv_Bn_Activation(1024, 512, 1, 1, 'leaky')

self.conv17 = Conv_Bn_Activation(512, 1024, 3, 1, 'leaky')

self.conv18 = Conv_Bn_Activation(1024, output_ch, 1, 1, 'linear', bn=False, bias=True)

self.yolo3 = YoloLayer(

anchor_mask=[6, 7, 8], num_classes=n_classes,

anchors=[12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401],

num_anchors=9, stride=32)

def forward(self, input1, input2, input3):

x1 = self.conv1(input1)

x2 = self.conv2(x1)

x3 = self.conv3(input1)

# R -1 -16

x3 = torch.cat([x3, input2], dim=1)

x4 = self.conv4(x3)

x5 = self.conv5(x4)

x6 = self.conv6(x5)

x7 = self.conv7(x6)

x8 = self.conv8(x7)

x9 = self.conv9(x8)

x10 = self.conv10(x9)

# R -4

x11 = self.conv11(x8)

# R -1 -37

x11 = torch.cat([x11, input3], dim=1)

x12 = self.conv12(x11)

x13 = self.conv13(x12)

x14 = self.conv14(x13)

x15 = self.conv15(x14)

x16 = self.conv16(x15)

x17 = self.conv17(x16)

x18 = self.conv18(x17)

if self.inference:

y1 = self.yolo1(x2)

y2 = self.yolo2(x10)

y3 = self.yolo3(x18)

return get_region_boxes([y1, y2, y3])

else:

return [x2, x10, x18]

# 整个Yolov4网络类

class Yolov4(nn.Module):

def __init__(self, yolov4conv137weight=None, n_classes=80, inference=False):

super().__init__()

output_ch = (4 + 1 + n_classes) * 3

# backbone

self.down1 = DownSample1()

self.down2 = DownSample2()

self.down3 = DownSample3()

self.down4 = DownSample4()

self.down5 = DownSample5()

# neck

self.neek = Neck(inference)

# yolov4conv137

if yolov4conv137weight:

_model = nn.Sequential(self.down1, self.down2, self.down3, self.down4, self.down5, self.neek)

pretrained_dict = torch.load(yolov4conv137weight)

model_dict = _model.state_dict()

# 1. filter out unnecessary keys

pretrained_dict = {

k1: v for (k, v), k1 in zip(pretrained_dict.items(), model_dict)}

# 2. overwrite entries in the existing state dict

model_dict.update(pretrained_dict)

_model.load_state_dict(model_dict)

# head

self.head = Yolov4Head(output_ch, n_classes, inference)

def forward(self, input):

d1 = self.down1(input)

d2 = self.down2(d1)

d3 = self.down3(d2)

d4 = self.down4(d3)

d5 = self.down5(d4)

x20, x13, x6 = self.neek(d5, d4, d3)

output = self.head(x20, x13, x6)

return output

5、YOLOv4效果展示与分析

5.1、YOLOv4主观效果展示与分析

Yolov4视频检测实例

5.2、YOLOv4客观效果展示与分析

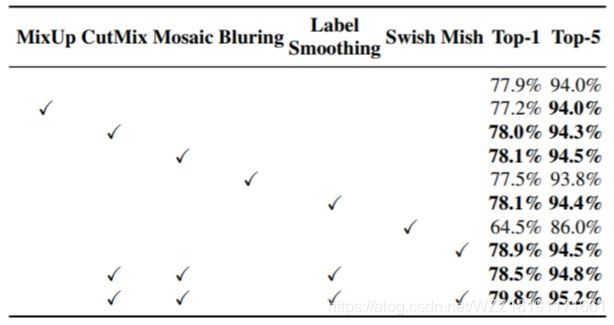

上图展示了不同的数据增强操作对CSPResNeXt-50分类器的精度影响。通过观察我们可以发现:(1)使用CutMix数据增强操作之后,CSPResNeXt-50分类器的top1与top5精度都得到了较大的提升;(2)与CutMix数据增强操作相比,使用Mosaic数据增强操作之后,CSPResNeXt-50分类器能够获得进一步的提升;(3)同时使用CutMix、Mosaic、Label Smoothing与Mish操作之后,CSPResNeXt-50分类器的top1与top5精度得到了最高的精度。

上图展示了使用不同的优化策略之后,对YOLOv4算法的AP指标所产生的影响。其中S表示消除梯度敏感度,M表示使用Mosia数据增强操作,IT表示IoU阈值,GA表示遗传算法,LS表示类别标签平滑操作,CBS表示最小Batch归一化操作,CA表示模拟退火机制,DM表示动态最小Batch大小,OA表示优化的锚点框,loss表示回归分支的损失函数。通过观察我们可以得出以下的初步结论:(1)与没有使用任何优化操作的AP指标相比,使用Mosia数据增强操作之后的AP指标提升了1.1%;(2)与没有使用任何优化操作的AP指标相比,使用GA来选择最优的超参数的方法可以将AP指标提升到38.9%;(3)同时使用消失梯度敏感度、Mosaic数据增强操作、IoU阈值、遗传算法、优化的锚点框和CIoU损失之后,可以获得最高的AP指标。

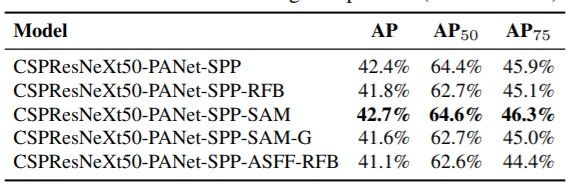

上图展示了PAN、SPP、RFB与SAM模块对YOLOv4算法的影响。通过观察我们可以发现:在基准网络CSPResNeXt50的基础上,同时利用PAN、SPP与SAM模块,可以获得最高的AP指标。

上图展示了YOLOv4与其它state-of-srt目标检测算法在MS COCO数据集上面的各项指标。具体包括目标检测的Backbone网络、模型Size大小、模型帧率FPS、模型评估指标AP、AP50、AP75、APS、APM与APL。通过观察上图,我们可以得出以下的初步结论:(1)与YOLOv3相比,YOLOv4几乎具有相同的输入分辨率,具有相似的模型大小,各项AP指标却得到了极大的性能提升,平均提升了10个百分点左右;(2)与性能优异的RetinaNet相比,YOLOv4不仅具有较小的输入分辨率,而且性能得到了极大的提升,平均提升了5个百分点左右;(3)与基于Anchor-free的目标检测算法CornerNet相比,虽然YOLOv4的模型较大一些,但是YOLOv4的性能提升了3个百分点左右。

上图展示了YOLOv4与其它state-of-srt目标检测算法在MS COCO数据集上面的各项指标。通过观察上图,我们可以得出以下的初步结论:(1)与CenterMask-Lite算法相比,虽然CenterMask-Lite具有更大的分辨率,但是YOLOv4算法的精度提升了3个百分点左右;(2)与HSD目标检测算法相比,两者的分辨率大小基本相同,虽然HSD算法使用了更深的基准网络,但是算法精度并不如YOLOv4算法;(3)与双阶段目标检测算法Faster R-CNN、RetinaNet与Cascade R-CNN相比,YOLOv4算法的AP指标都得到了极大的性能提升;(4)与基于Anchor-free的目标检测算法Centernet相比,当使用了基准网络Hourglass-104之后,虽然Centernet获得了更高的AP指标,但是其推理速度并不能满足很多设备的要求。

上图展示了YOLOv4与其它state-of-srt目标检测算法在MS COCO数据集上面的各项指标。通过观察上图,我们可以得出以下的初步结论:(1)与轻量级目标检测算法EfficientDet相比,当其具有相似的分辨率时,获得了相似的AP指标,但是YOLOv4算法的运行速度更快一些;(2)与添加了ASFF模块的YOLOv3算法相比,虽然它们具有相同的输入分辨率,但是YOLOv4在精度与速度方面优于前者;(3)与SM-NAS E3算法相比,虽然SE-NAS的输入图像更大一些,但是其速度与AP精度均不如YOLOv4检测算法。(4)与NAS-FPN算法相比,该算法不仅利用到NAS技术来寻找最优的目标检测网络架构,而且利用FPN操作来解决目标检测中的尺度问题。当输入图像大小为1024*1024时,虽然NAS-FPN在AP指标上面更高一些,但是它的运行速度变得很慢。

6、总结与分析

YOLOv4是一种单阶段目标检测算法,该算法在YOLOv3的基础上添加了一些新的改进思路,使得其速度与精度都得到了极大的性能提升,具体包括:输入端的Mosaic数据增强、cmBN、SAT自对抗训练操作;基准端的CSPDarknet53、Mish激活函数、Dropblock操作;Neck端的SPP与FPN+PAN结构;输出端的损失函数CIOU_Loss以及预测框筛选的DIOU_nms。实际测试发现YOLOv4算法确定具有较大的性能提升。除此之外,YOLOv4中的各种改进思路仍然可以应用到其它的目标检测算法中。

参考资料

[1] 原始论文

[2] 博客链接1

注意事项

[1] 该博客是本人原创博客,如果您对该博客感兴趣,想要转载该博客,请与我联系(qq邮箱:[email protected]),我会在第一时间回复大家,谢谢大家的关注。

[2] 由于个人能力有限,该博客可能存在很多的问题,希望大家能够提出改进意见。

[3] 如果您在阅读本博客时遇到不理解的地方,希望您可以联系我,我会及时的回复您,和您交流想法和意见,谢谢。

[4] 本文中部分图像的版权归江大白所有。

[5] 本人业余时间承接各种本科毕设设计和各种项目,包括图像处理(数据挖掘、机器学习、深度学习等)、matlab仿真、python算法及仿真等,非诚勿扰,有需要的请加QQ:1575262785详聊,备注“项目”!!!