之前的两篇文章是搭建Spark环境,准备工作做好之后接下来写一个简单的demo,功能是统计本地某个文件中每个单词出现的次数。开发环境为Idea+Maven,开发语言为scala,首先我们要在Idea中下载scala的插件,具体如下:

一、Idea开发环境准备

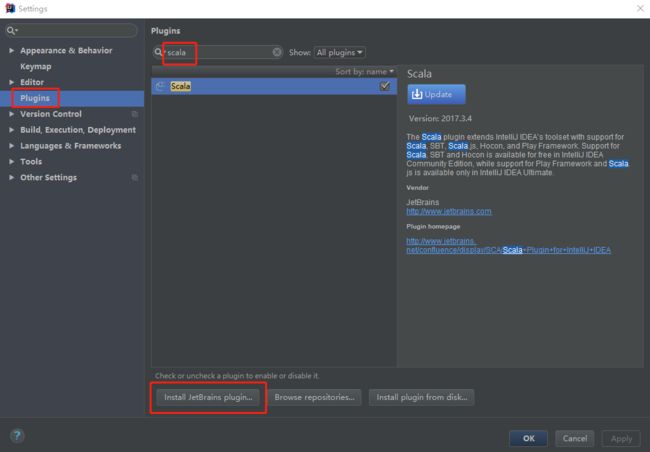

1.下载scala插件

安装插件之前需确保Idea的JDK已经安装并配置好,然后打开Idea,选择File--->Settings,在新窗口中选择Plugins,在右边的输入框中输入“scala”关键字进行搜索,然后在搜索结果中点击下面的Install JetBrains plugin...进行安装。

安装完成之后需要重启Idea。

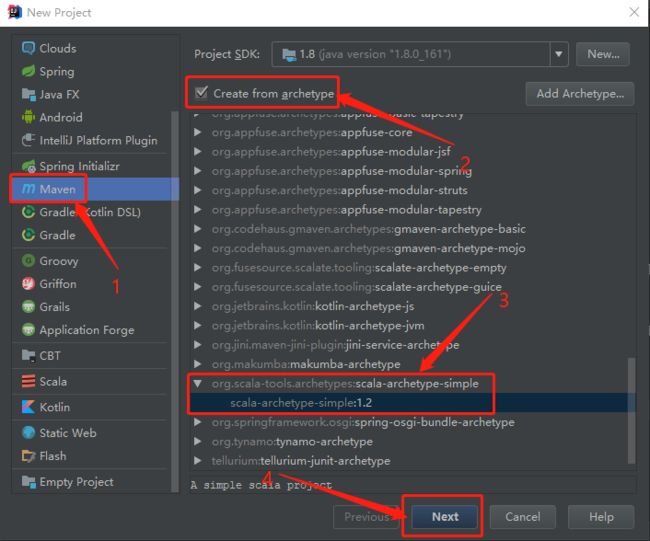

二、新建项目工程

打开Idea,选择File--->New--->Project,在新窗口中选择Maven,勾选右边的Create from archetype,找到scala-archetype-simple展开选择1.2,然后点击Next。

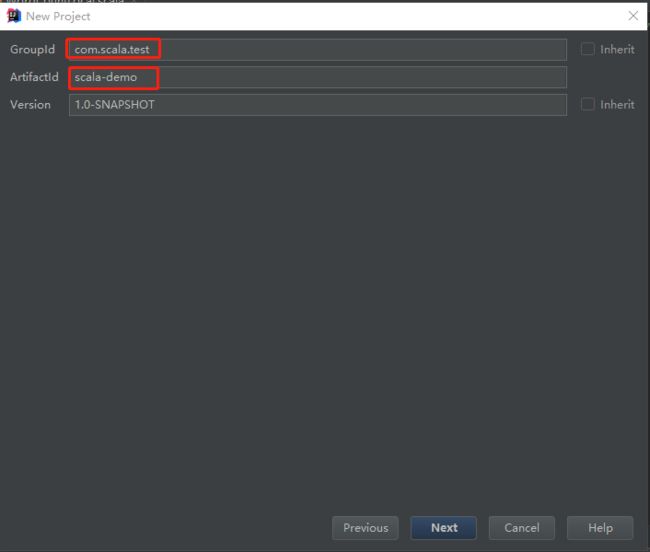

输入GroupId和ArtifactId,然后继续Next,之后选择maven、repository路径并输入项目名称。

pom文件如下:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd"> <modelVersion>4.0.0modelVersion> <groupId>com.testgroupId> <artifactId>testartifactId> <version>1.0-SNAPSHOTversion> <inceptionYear>2008inceptionYear> <properties> <project.build.sourceEncoding>UTF-8project.build.sourceEncoding> <spark.version>2.3.2spark.version> <scala.version>2.11scala.version> <hadoop.version>2.7.0hadoop.version> properties> <dependencies> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-core_${scala.version}artifactId> <version>${spark.version}version> dependency> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-sql_${scala.version}artifactId> <version>${spark.version}version> dependency> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-hive_${scala.version}artifactId> <version>${spark.version}version> dependency> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-streaming_${scala.version}artifactId> <version>${spark.version}version> dependency> <dependency> <groupId>org.apache.hadoopgroupId> <artifactId>hadoop-clientartifactId> <version>2.6.0version> dependency> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-streaming-kafka_${scala.version}artifactId> <version>1.6.3version> dependency> <dependency> <groupId>org.apache.sparkgroupId> <artifactId>spark-mllib_${scala.version}artifactId> <version>${spark.version}version> dependency> <dependency> <groupId>mysqlgroupId> <artifactId>mysql-connector-javaartifactId> <version>5.1.39version> dependency> <dependency> <groupId>junitgroupId> <artifactId>junitartifactId> <version>4.12version> dependency> dependencies> <pluginRepositories> <pluginRepository> <id>scala-tools.orgid> <name>Scala-Tools Maven2 Repositoryname> <url>http://scala-tools.org/repo-releasesurl> pluginRepository> pluginRepositories> <build> <sourceDirectory>src/main/scalasourceDirectory> <testSourceDirectory>src/test/scalatestSourceDirectory> <plugins> <plugin> <groupId>org.scala-toolsgroupId> <artifactId>maven-scala-pluginartifactId> <executions> <execution> <goals> <goal>compilegoal> <goal>testCompilegoal> goals> execution> executions> <configuration> <scalaVersion>${scala.version}scalaVersion> <args> <arg>-target:jvm-1.5arg> args> configuration> plugin> <plugin> <groupId>org.apache.maven.pluginsgroupId> <artifactId>maven-eclipse-pluginartifactId> <configuration> <downloadSources>truedownloadSources> <buildcommands> <buildcommand>ch.epfl.lamp.sdt.core.scalabuilderbuildcommand> buildcommands> <additionalProjectnatures> <projectnature>ch.epfl.lamp.sdt.core.scalanatureprojectnature> additionalProjectnatures> <classpathContainers> <classpathContainer>org.eclipse.jdt.launching.JRE_CONTAINERclasspathContainer> <classpathContainer>ch.epfl.lamp.sdt.launching.SCALA_CONTAINERclasspathContainer> classpathContainers> configuration> plugin> plugins> build> project>

接下来我们要实现分析并统计文件中的单词出现的次数,类文件代码如下:

package com.test import org.apache.spark.SparkContext import org.apache.spark.SparkConf object WordCountLocal { def main(args: Array[String]) { /** * SparkContext 的初始化需要一个SparkConf对象 * SparkConf包含了Spark集群的配置的各种参数 */ val conf=new SparkConf() .setMaster("local")//启动本地化计算 .setAppName("testRdd")//设置本程序名称 //Spark程序的编写都是从SparkContext开始的 val sc=new SparkContext(conf) //以上的语句等价与val sc=new SparkContext("local","testRdd") val data=sc.textFile("D:\\tmp\\hello.txt")//读取本地文件 data.flatMap(_.split(" "))//下划线是占位符,flatMap是对行操作的方法,对读入的数据进行分割 .map((_,1))//将每一项转换为key-value,数据是key,value是1 .reduceByKey(_+_)//将具有相同key的项相加合并成一个 .collect()//将分布式的RDD返回一个单机的scala array,在这个数组上运用scala的函数操作,并返回结果到驱动程序 .foreach(println)//循环打印 } }

hello.txt文件内容可以随便填写,我的如下:

hello scala hello world hello nihao i am scala this is a spark example running program is ok

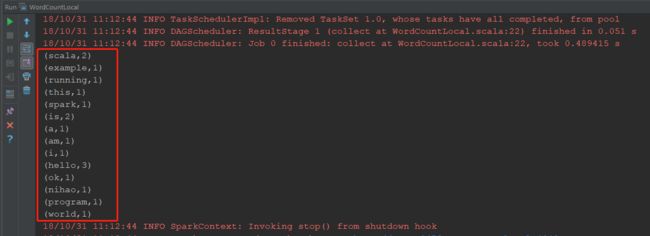

三、运行工程

右键WordCountLocal类,选择Run,如果运行失败并出现java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.请确认下本地hadoop-x.x.x/bin目录下有没有winutils.exe这个文件,如果没有请到github上下载,

地址:https://github.com/srccodes/hadoop-common-2.2.0-bin

下载并解压成功后配置环境变量,增加用户变量HADOOP_HOME,值是下载的zip包解压的目录,然后在系统变量path里增加%HADOOP_HOME%\bin 即可。大功告成之后再次执行成功,结果如下:

一个简单的数据统计demo就完成了。