1)首先启动hadoop2个进程,进入hadoop/sbin目录下,依次启动如下命令

[root@node02 sbin]# pwd /usr/server/hadoop/hadoop-2.7.0/sbin

sh start-dfs.sh sh start-yarn.sh jps

2)通过jps查看是否正确启动,确保启动如下6个程序

[root@node02 sbin]# jps 10096 DataNode 6952 NodeManager 9962 NameNode 10269 SecondaryNameNode 12526 Jps 6670 ResourceManager

3)如果启动带有文件的话,将文件加入到hdfs 的 /input下,如果出现如下错误的话,

[root@node02 hadoop-2.7.0]# hadoop fs -put sample.txt /input 21/01/02 01:13:15 WARN util.NativeCodeLoader: Unable to load native-hadoop library for atform... using builtin-java classes where applicable

在环境变量中添加如下字段

[root@node02 ~]# vim /etc/profile

export HADOOP_COMMON_LIB_NATIVE_DIR=${HADOOP_PREFIX}/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_PREFIX/lib"

4)进入到hadoop根目录,根据存放位置决定

[root@node02 hadoop-2.7.0]# pwd /usr/server/hadoop/hadoop-2.7.0

5)新建hadoop hdfs 文件系统上的 /input 文件夹(用于存放输入文件)

hadoop fs -mkdir /input

6)传入测试文件,测试文件需要自己上传到根目录下(仅供测试,生产环境下存放到指定目录)

[root@node02 hadoop-2.7.0]# hadoop fs -put sample.txt /input

7)查看传入文件是否存在

[root@node02 hadoop-2.7.0]# hadoop fs -ls /input -rw-r--r-- 1 root supergroup 529 2021-01-02 01:13 /input/sample.txt

8)上传jar包到根目录下(生产环境下,放入指定目录下),测试jar包为study_demo.jar

[root@node02 hadoop-2.7.0]# ll 总用量 1968 drwxr-xr-x. 2 10021 10021 4096 4月 11 2015 bin drwxr-xr-x. 3 10021 10021 4096 4月 11 2015 etc drwxr-xr-x. 2 10021 10021 4096 4月 11 2015 include drwxr-xr-x. 3 10021 10021 4096 4月 11 2015 lib drwxr-xr-x. 2 10021 10021 4096 4月 11 2015 libexec -rw-r--r--. 1 10021 10021 15429 4月 11 2015 LICENSE.txt drwxr-xr-x. 3 root root 4096 1月 2 01:36 logs -rw-r--r--. 1 10021 10021 101 4月 11 2015 NOTICE.txt -rw-r--r--. 1 10021 10021 1366 4月 11 2015 README.txt drwxr-xr-x. 2 10021 10021 4096 4月 11 2015 sbin drwxr-xr-x. 4 10021 10021 4096 4月 11 2015 share -rw-r--r--. 1 root root 1956989 6月 14 2021 study_demo.jar

9)使用hadoop 运行 java jar包,Main函数一定要加上全限定类名

hadoop jar study_demo.jar com.ncst.hadoop.MaxTemperature /input/sample.txt /output

10)运行结果缩略图

21/01/02 01:37:54 INFO mapreduce.Job: Counters: 49 File System Counters FILE: Number of bytes read=61 FILE: Number of bytes written=342877 FILE: Number of read operations=0 FILE: Number of large read operations=0 FILE: Number of write operations=0 HDFS: Number of bytes read=974 HDFS: Number of bytes written=17 HDFS: Number of read operations=9 HDFS: Number of large read operations=0 HDFS: Number of write operations=2 Job Counters Launched map tasks=2 Launched reduce tasks=1 Data-local map tasks=2 Total time spent by all maps in occupied slots (ms)=14668 Total time spent by all reduces in occupied slots (ms)=4352 Total time spent by all map tasks (ms)=14668 Total time spent by all reduce tasks (ms)=4352 Total vcore-seconds taken by all map tasks=14668 Total vcore-seconds taken by all reduce tasks=4352 Total megabyte-seconds taken by all map tasks=15020032 Total megabyte-seconds taken by all reduce tasks=4456448 Map-Reduce Framework Map input records=5 Map output records=5 Map output bytes=45 Map output materialized bytes=67 Input split bytes=180 Combine input records=0 Combine output records=0 Reduce input groups=2 Reduce shuffle bytes=67 Reduce input records=5 Reduce output records=2 Spilled Records=10 Shuffled Maps =2 Failed Shuffles=0 Merged Map outputs=2 GC time elapsed (ms)=525 CPU time spent (ms)=2510 Physical memory (bytes) snapshot=641490944 Virtual memory (bytes) snapshot=6241415168 Total committed heap usage (bytes)=476053504 Shuffle Errors BAD_ID=0 CONNECTION=0 IO_ERROR=0 WRONG_LENGTH=0 WRONG_MAP=0 WRONG_REDUCE=0 File Input Format Counters Bytes Read=794 File Output Format Counters Bytes Written=17

10)运行成功后执行命令查看,此时多出一个 /output 文件夹

[root@node02 hadoop-2.7.0]# hadoop fs -ls / drwxr-xr-x - root supergroup 0 2021-01-02 01:13 /input drwxr-xr-x - root supergroup 0 2021-01-02 01:37 /output drwx------ - root supergroup 0 2021-01-02 01:37 /tmp

11)查看 /output文件夹的文件

[root@node02 hadoop-2.7.0]# hadoop fs -ls /output -rw-r--r-- 1 root supergroup 0 2021-01-02 01:37 /output/_SUCCESS -rw-r--r-- 1 root supergroup 17 2021-01-02 01:37 /output/part-00000

12)查看part-r-00000 文件夹中的内容,我这个测试用例用来获取1949年和1950年的最高气温(华氏度)

[root@node02 hadoop-2.7.0]# hadoop fs -cat /output/part-00000 1949 111 1950 22

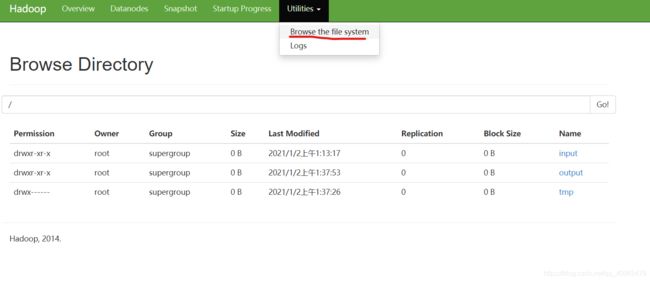

13)在浏览器端访问端口可以观看可视化界面,对应的是hadoop服务器地址和自己设置的端口,通过可视化界面查看input文件夹面刚刚上传的sample.txt文件

http://192.168.194.XXX:50070/

14)测试程序jar包和测试文件已上传到github上面,此目录有面经和我自己总结的面试题

GitHub

如有兴趣的同学也可以查阅我的秒杀系统

秒杀系统

以上就是hadoop如何运行java程序(jar包)运行时动态指定参数的详细内容,更多关于hadoop运行java程序的资料请关注脚本之家其它相关文章!