k8s Cluster Management: A Prometheus + Grafana Monitoring Solution

1. System Environment

CentOS Linux release 7.9.2009 (Core)

kubectl-1.20.4-0.x86_64

kubelet-1.20.4-0.x86_64

kubeadm-1.20.4-0.x86_64

kubernetes-cni-0.8.7-0.x86_64

2. k8s Architecture

| Role | IP address | Hostname |
|---|---|---|
| master | 192.168.10.127 | minio-4 |
| node01 | 192.168.10.124 | minio-1 |
| node02 | 192.168.10.125 | minio-2 |
| node03 | 192.168.10.126 | minio-3 |
| NFS storage | 192.168.10.143 | |

3. Prometheus Overview

3.1 Introduction to Prometheus

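Main features:

- A multi-dimensional data model in which time series are identified by a metric name and key-value pairs.

- PromQL, a flexible query language.

- No dependence on distributed storage; each server node is autonomous.

- Monitoring data is collected by pulling over HTTP.

- Pushing time series is supported through an intermediary gateway.

- Multiple graphing and dashboard options, such as Grafana.

The Prometheus ecosystem consists of the following components:

- Prometheus Server: scrapes monitoring data, stores it as time series, and provides queries over it.

- Client SDKs: libraries for instrumenting applications to work with Prometheus.

- Push Gateway: a gateway component that accepts pushed metrics.

- Third-party exporters: expose metrics from various external systems so that Prometheus can scrape them.

- Alertmanager: handles alerts.

- Various other support tools.

In a Kubernetes cluster, Prometheus Server:

- obtains the resources and services to monitor from the Kubernetes master;

- scrapes (pulls) metrics from the various exporters and stores them in its time series database (TSDB);

- exposes an HTTP API that other systems can query;

- answers queries written in PromQL;

- can push alerts to Alertmanager, among other things.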

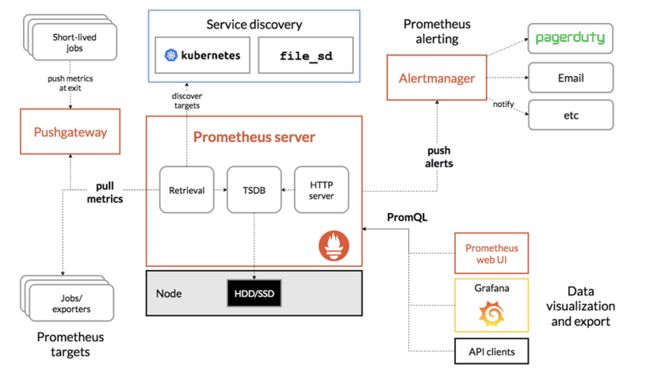

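3.2 Prometheus Component Architecture

- The Prometheus server periodically scrapes metrics from configured jobs or exporters. Short-lived jobs push their metrics to the Pushgateway, which Prometheus then scrapes in the same way.

- The Prometheus server stores the collected metrics locally and can aggregate them.

- It evaluates the configured rules (alert.rules), either recording new time series or pushing alerts to Alertmanager.

- Alertmanager processes the alerts it receives according to its configuration file and sends notifications via email and other channels.

- Grafana and other visualization tools query the monitoring data and display it graphically.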
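Rule evaluation and alert delivery are configured in prometheus.yml. The following is a minimal sketch that makes two assumptions: the rule files are mounted under a hypothetical directory `/etc/prometheus/rules/`, and an Alertmanager is reachable at the hypothetical address `alertmanager.example.com:9093` (9093 is Alertmanager's default port). Rules are evaluated every `global.evaluation_interval`, which the ConfigMap in section 5.4 sets to 10s.

```yaml
# Fragment of prometheus.yml (paths and hosts are hypothetical).
global:
  evaluation_interval: 10s        # how often recording and alerting rules are evaluated
# Rule files to load; each file may contain recording and alerting rules.
rule_files:
  - /etc/prometheus/rules/*.yml
# Where firing alerts are pushed.
alerting:
  alertmanagers:
    - static_configs:
        - targets: ['alertmanager.example.com:9093']
```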

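3.3 Prometheus Monitoring Layers

- Infrastructure layer: monitors the resources of every host, including both Kubernetes nodes and hosts outside Kubernetes. Typical metrics are CPU, memory, network throughput and bandwidth usage, disk I/O, and disk usage.

- Middleware layer: monitors middleware deployed separately outside the Kubernetes cluster, such as MySQL, Redis, RabbitMQ, Elasticsearch, and Nginx.

- Kubernetes cluster: monitors the key metrics of the Kubernetes cluster itself.

- Applications deployed on the Kubernetes cluster: monitors the applications running in the cluster.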
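Hosts and middleware outside the cluster are not found through Kubernetes service discovery, so they are usually added as static targets. The sketch below adds two extra `scrape_configs` jobs. It assumes node_exporter runs on each host on its default port 9100, and that a mysqld_exporter runs on its default port 9104 on the hypothetical host `mysql-exporter.example.com`. Neither exporter is deployed elsewhere in this article.

```yaml
# Fragment for the scrape_configs section of prometheus.yml.
# Assumption: node_exporter is running on every host (default port 9100).
- job_name: 'node-exporter'
  static_configs:
    - targets:
        - '192.168.10.124:9100'   # minio-1
        - '192.168.10.125:9100'   # minio-2
        - '192.168.10.126:9100'   # minio-3
        - '192.168.10.127:9100'   # minio-4 (master)
        - '192.168.10.143:9100'   # NFS server, outside Kubernetes
# Assumption: a mysqld_exporter on a hypothetical host (default port 9104).
- job_name: 'mysql'
  static_configs:
    - targets: ['mysql-exporter.example.com:9104']
```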

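4. Prometheus Concepts

4.1 Data Model

- Metric name and labels: every time series is uniquely identified by its metric name and a set of key-value pairs called labels.

- Samples: each sample consists of a float64 value and a millisecond-precision timestamp.

- Format: `<metric name>{<label name>=<label value>, ...}`, for example `api_http_requests_total{method="POST", handler="/messages"}`.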

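4.2 Metric Types

- Counter: a cumulative value that only increases, or is reset to zero when the process restarts.

- Gauge: a single value that can go up and down.

- Histogram: samples observations (usually request durations or response sizes) and counts them in configurable buckets. A histogram with the base metric name `<basename>` exposes multiple time series during a scrape:

  - cumulative counters for the observation buckets, exposed as `<basename>_bucket{le="<upper inclusive bound>"}`;

  - the total sum of all observed values, exposed as `<basename>_sum`;

  - the count of events that have been observed, exposed as `<basename>_count` (identical to `<basename>_bucket{le="+Inf"}`).

- Summary: like a histogram, a summary samples observations (usually request durations and response sizes). It also provides the total count of observations and the sum of all observed values, but in addition it calculates configurable quantiles over a sliding time window. A summary with the base metric name `<basename>` exposes multiple time series during a scrape:

  - streaming φ-quantiles (0 ≤ φ ≤ 1) of observed events, exposed as `<basename>{quantile="<φ>"}`;

  - the total sum of all observed values, exposed as `<basename>_sum`;

  - the count of events that have been observed, exposed as `<basename>_count`.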
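Histogram buckets are normally turned into quantiles on the Prometheus side with PromQL's `histogram_quantile()`. That function needs the `le` label to survive aggregation. Below is a sketch of a recording rule. It assumes an application exposes a hypothetical histogram named `http_request_duration_seconds`.

```yaml
# Hypothetical rules file, loaded via rule_files in prometheus.yml.
groups:
  - name: latency-recording-rules
    rules:
      # 95th-percentile latency over the last 5 minutes, per job.
      # The per-bucket rates are summed by (job, le) so that histogram_quantile
      # still sees the bucket boundaries.
      - record: job:http_request_duration_seconds:p95_5m
        expr: histogram_quantile(0.95, sum by (job, le) (rate(http_request_duration_seconds_bucket[5m])))
```

A summary's `{quantile="<φ>"}` series work differently because they are computed on the client. As a result, they cannot be meaningfully aggregated across instances.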

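4.3 Jobs and Instances

In Prometheus terms, an endpoint that can be scraped is called an instance and usually corresponds to a single process. A collection of instances with the same purpose, such as the replicas of one service, is called a job.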

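4.4 Labels and Time Series

When Prometheus scrapes a target, it automatically attaches the following labels to the scraped time series:

- job: the configured job name that the target belongs to.

- instance: the `<host>:<port>` part of the target URL that was scraped.

For each instance scrape, Prometheus also stores a sample in the following time series:

- `up{job="<job-name>", instance="<instance-id>"}`: 1 if the instance is healthy (reachable), or 0 if the scrape failed.

- `scrape_duration_seconds{job="<job-name>", instance="<instance-id>"}`: the duration of the scrape.

- `scrape_samples_post_metric_relabeling{job="<job-name>", instance="<instance-id>"}`: the number of samples remaining after metric relabeling was applied.

- `scrape_samples_scraped{job="<job-name>", instance="<instance-id>"}`: the number of samples the target exposed.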
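Prometheus records `up` for every target, which makes it a natural basis for an availability alert. The following is a sketch of an alerting rule file, loaded via `rule_files` in prometheus.yml. The group name, the 5-minute duration, and the severity label are assumptions.

```yaml
# Hypothetical rules file for availability alerts.
groups:
  - name: availability
    rules:
      - alert: InstanceDown
        # up is 0 when the most recent scrape of the target failed
        expr: up == 0
        # fire only if the condition has held for 5 minutes
        for: 5m
        labels:
          severity: critical
        annotations:
          summary: "{{ $labels.instance }} of job {{ $labels.job }} has been down for more than 5 minutes"
```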

5. Deploying Prometheus

5.1 Get the Deployment Files

Log in to the master server:

# git clone https://github.com/prometheus/prometheus

# cd prometheus/documentation/examples/

5.2 Create a Namespace

Here the namespace is named "monitoring":

# vim prometheus-namespace.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: monitoring

# kubectl create -f prometheus-namespace.yaml

5.3 Create RBAC Resources

# vim rbac-setup.yml

# To have Prometheus retrieve metrics from Kubelets with authentication and
# authorization enabled (which is highly recommended and included in security
# benchmarks) the following flags must be set on the kubelet(s):
#
# --authentication-token-webhook
# --authorization-mode=Webhook
#
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy   # Be sure to add this line; the original file does not seem to have it.
                  # Without it, the cAdvisor targets scraped through the API server proxy will not come UP.
  - nodes/metrics
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  - networking.k8s.io
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics", "/metrics/cadvisor"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring   # change to your namespace
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: monitoring   # change to your namespace

# kubectl create -f rbac-setup.yml
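The comment at the top of rbac-setup.yml refers to kubelet command-line flags. On kubeadm-built clusters like this one, the kubelet is usually configured through a KubeletConfiguration file instead, commonly /var/lib/kubelet/config.yaml. kubeadm normally enables both settings already. The following sketch shows the equivalent fields so you can check them on each node. Restart the kubelet after any change.

```yaml
# Fragment of a KubeletConfiguration file (commonly /var/lib/kubelet/config.yaml on kubeadm nodes).
# Equivalent to --authentication-token-webhook and --authorization-mode=Webhook.
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
authentication:
  webhook:
    enabled: true   # validate bearer tokens presented to the kubelet via the API server
authorization:
  mode: Webhook     # ask the API server whether the caller may access kubelet endpoints
```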

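5.4 Create the Prometheus ConfigMap

Start from the example prometheus-kubernetes.yml, drop its blank lines and comment lines, and then wrap the result in a ConfigMap:

# cat prometheus-kubernetes.yml | grep -v '^$' | grep -v '#' >> prometheus-configmap.yaml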

# vim prometheus-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-server-conf
  labels:
    name: prometheus-server-conf
  namespace: monitoring   # change to your namespace
data:
  prometheus.yml: |-
    global:
      scrape_interval: 10s
      evaluation_interval: 10s
    scrape_configs:
    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
    - job_name: 'kubernetes-nodes'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics
    - job_name: 'kubernetes-cadvisor'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
    - job_name: 'kubernetes-services'
      metrics_path: /probe
      params:
        module: [http_2xx]
      kubernetes_sd_configs:
      - role: service
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__address__]
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115   # placeholder: your blackbox exporter address
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-ingresses'
      kubernetes_sd_configs:
      - role: ingress
      relabel_configs:
      - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
        regex: (.+);(.+);(.+)
        replacement: ${1}://${2}${3}
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115   # placeholder: your blackbox exporter address
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_ingress_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_ingress_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name

# kubectl create -f prometheus-configmap.yaml
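The `kubernetes-service-endpoints` job in the ConfigMap above only keeps Services that carry `prometheus.io/*` annotations. The following sketch shows a Service that this job would pick up. It assumes a hypothetical application `my-app` that serves metrics over plain HTTP on port 8080 at `/metrics`.

```yaml
# Hypothetical Service; its annotations are read by the relabel_configs
# of the 'kubernetes-service-endpoints' job.
apiVersion: v1
kind: Service
metadata:
  name: my-app
  namespace: default
  annotations:
    prometheus.io/scrape: 'true'    # matched by the 'keep' rule (regex: true)
    prometheus.io/port: '8080'      # rewrites __address__ to <endpoint IP>:8080
    prometheus.io/path: '/metrics'  # copied into __metrics_path__
    prometheus.io/scheme: 'http'    # copied into __scheme__
spec:
  selector:
    app: my-app
  ports:
    - name: http-metrics
      port: 8080
      targetPort: 8080
```

With the endpoints role, `__address__` is the IP and port of each Pod behind the Service. As a result, every backing Pod becomes a separate target.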

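5.5 Create PV and PVC Storage

The PV here uses NFS as shared storage, so you must set up the NFS server first. That setup is not covered here; if needed, see my earlier article on PVs and PVCs.

Prometheus stores its data on a PVC, so you need to configure a PV and a PVC.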

# Create the PV

# vim prometheus-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: prometheus-pv
  labels:
    type: nfs
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: nfs
  nfs:
    path: "/pv"
    server: 192.168.10.143   # NFS server
    readOnly: false

# kubectl create -f prometheus-pv.yaml

# Create the PVC

# vim prometheus-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: prometheus-pvc
  namespace: monitoring
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 5Gi
  storageClassName: nfs

# kubectl create -f prometheus-pvc.yaml

# Check the PVC status; if it shows Bound, the PVC is ready to use

# kubectl get pvc -n monitoring

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

prometheus-pvc Bound prometheus-pv 5Gi RWX nfs 5d18h

5.6 Create the Prometheus Deployment

# vim prometheus-deployment.yaml

#apiVersion: apps/v1beta2
apiVersion: apps/v1   # adjust to your own cluster
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus-server
  namespace: monitoring   # change to your own namespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus-server
  template:
    metadata:
      labels:
        app: prometheus-server
    spec:
      containers:
      - name: prometheus-server
        # image: prom/prometheus:v2.14.0
        image: registry-op.test.cn/prometheus:v2.14.0   # change to your own registry
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus/"
        # deprecated in later 2.x releases in favor of --storage.tsdb.retention.time
        - "--storage.tsdb.retention=72h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - name: prometheus-config-volume
          mountPath: /etc/prometheus/
          #subPath: prometheus.yml
        - name: prometheus-storage-volume
          mountPath: /prometheus/
      serviceAccountName: prometheus
      # imagePullSecrets:
      # - name: regsecret
      imagePullSecrets:   # change to the credentials for your own private registry
      - name: registry-op.test.cn
      volumes:
      - name: prometheus-config-volume
        configMap:
          defaultMode: 420
          name: prometheus-server-conf
      #- name: prometheus-storage-volume
      #  emptyDir: {}
      - name: prometheus-storage-volume
        persistentVolumeClaim:
          claimName: prometheus-pvc   # data is stored on the PVC; see my earlier article on PVCs if needed

# kubectl create -f prometheus-deployment.yaml
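The Deployment above defines no health checks. Prometheus serves `/-/healthy` and `/-/ready` on its web port (9090 here). One option is to add probes like the following to the prometheus-server container, at the same level as `ports:`. This is a sketch; the delays and periods are assumptions to tune.

```yaml
# Fragment: add under the prometheus-server container, alongside ports:.
readinessProbe:
  httpGet:
    path: /-/ready       # returns 200 once Prometheus is ready to serve queries
    port: 9090
  initialDelaySeconds: 10
  periodSeconds: 10
livenessProbe:
  httpGet:
    path: /-/healthy     # returns 200 while the Prometheus process is healthy
    port: 9090
  initialDelaySeconds: 30
  periodSeconds: 15
```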

5.7 Create the Prometheus Service

# vim prometheus-service.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: prometheus-service
  name: prometheus-service
  namespace: monitoring   # change to your namespace
spec:
  type: NodePort
  selector:
    app: prometheus-server
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30909

# kubectl create -f prometheus-service.yaml

# Check the status

# kubectl get all -n monitoring

NAME READY STATUS RESTARTS AGE

pod/prometheus-server-79bc46f8f9-ls6vw 1/1 Running 1 26h

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/prometheus-service NodePort 10.10.193.198 <none> 9090:30909/TCP 2d

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/prometheus-server 1/1 1 1 26h

NAME DESIRED CURRENT READY AGE

replicaset.apps/prometheus-server-79bc46f8f9 1 1 1 26h

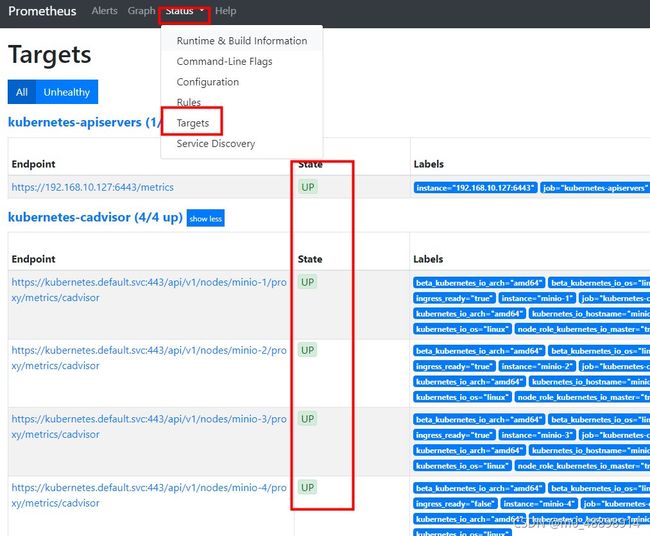

5.8 测试prometheus服务

#在浏览上直接访问http://192.168.10.127:30909/

#查看所有Kubernetes集群上的Endpoint通过服务发现的方式自动连接到了Prometheus,这时要注意这里所有的服务在状state要变成UP状态,如果是Down状态,就需要检查一下原因

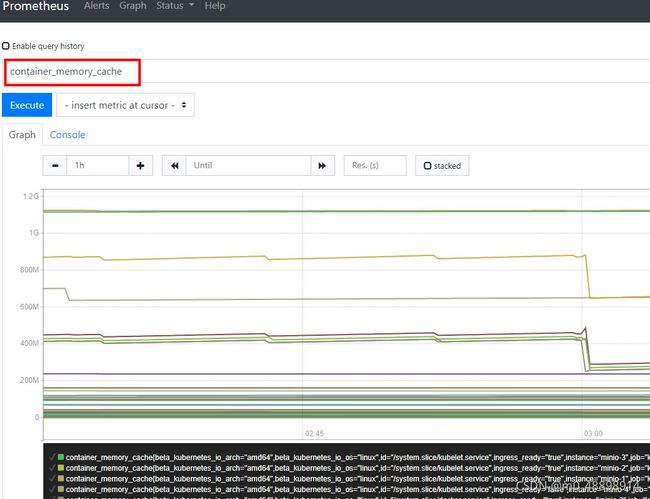

#查看内存数据

更多的请参考官方:https://prometheus.io/docs/prometheus/latest/configuration/configuration/

6. Deploying Grafana

6.1 Deploy Grafana

# cd /root/k8s/prometheus/documentation/examples/

# vim grafana-deploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: monitoring   # change to your namespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        task: monitoring
        app: grafana
        #k8s-app: grafana
    spec:
      containers:
      - name: grafana
        #image: daocloud.io/liukuan73/grafana:5.0.0
        image: registry-op.test.cn/grafana:5.0.0   # change to your own registry
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts:
        - mountPath: /var
          name: grafana-storage
        env:
        - name: INFLUXDB_HOST
          value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT
          value: "3000"
          # The following env variables are required to make Grafana accessible via
          # the kubernetes api-server proxy. On production clusters, we recommend
          # removing these env variables, setup auth for grafana, and expose the grafana
          # service using a LoadBalancer or a public IP.
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          # If you're only using the API Server proxy, set this value instead:
          # value: /api/v1/namespaces/monitoring/services/monitoring-grafana/proxy
          value: /
      imagePullSecrets:
      - name: registry-op.test.cn   # change to the credentials for your own registry
      volumes:
      - name: grafana-storage
        #emptyDir: {}
        persistentVolumeClaim:
          claimName: prometheus-pvc   # use PVC storage
      nodeSelector:
        node-role.kubernetes.io/master: "true"
      # tolerations:
      # - key: "node-role.kubernetes.io/master"
      #   effect: "NoSchedule"

# The following commands let Pods be scheduled onto nodes labeled as master:
# label the worker nodes so they match the nodeSelector above, and remove the master taint.
# kubectl label nodes minio-1 node-role.kubernetes.io/master=true
# kubectl label nodes minio-2 node-role.kubernetes.io/master=true
# kubectl label nodes minio-3 node-role.kubernetes.io/master=true
# kubectl taint nodes --all node-role.kubernetes.io/master-

# kubectl create -f grafana-deploy.yaml
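As written, the Grafana Pod mounts the same prometheus-pvc at /var. This has two side effects: Grafana's files share the root of the NFS share with the Prometheus TSDB, and the mount hides everything the image ships under /var. One alternative is to mount only Grafana's data directory and use a subPath. This assumes the image follows the official Grafana image's default data path, /var/lib/grafana; the directory name `grafana` is hypothetical.

```yaml
# Fragment: replaces the volumeMounts of the grafana container.
volumeMounts:
- name: grafana-storage
  mountPath: /var/lib/grafana   # default data directory of the official image (SQLite database, plugins)
  subPath: grafana              # hypothetical sub-directory on the shared PVC
```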

6.2 Create the Grafana Service

# vim grafana-service.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
    # If you are NOT using this as an addon, you should comment out this line.
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  annotations:
    prometheus.io/scrape: 'true'
    prometheus.io/tcp-probe: 'true'
    prometheus.io/tcp-probe-port: '80'
  name: monitoring-grafana
  namespace: monitoring   # change to your namespace
spec:
  type: NodePort
  # In a production setup, we recommend accessing Grafana through an external Loadbalancer
  # or through a public IP.
  # type: LoadBalancer
  # You could also use NodePort to expose the service at a randomly-generated port
  # type: NodePort
  ports:
  - port: 80
    targetPort: 3000
    nodePort: 30010   # fixed NodePort
  selector:
    app: grafana

# kubectl create -f grafana-service.yaml

# kubectl get all -n monitoring

NAME READY STATUS RESTARTS AGE

pod/monitoring-grafana-94d975947-4kf75 1/1 Running 1 43h

pod/prometheus-server-79bc46f8f9-ls6vw 1/1 Running 1 29h

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/monitoring-grafana NodePort 10.10.28.89 <none> 80:30010/TCP 43h

service/prometheus-service NodePort 10.10.193.198 <none> 9090:30909/TCP 2d3h

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/monitoring-grafana 1/1 1 1 43h

deployment.apps/prometheus-server 1/1 1 1 29h

NAME DESIRED CURRENT READY AGE

replicaset.apps/monitoring-grafana-94d975947 1 1 1 43h

replicaset.apps/prometheus-server-79bc46f8f9 1 1 1 29h

6.3 Verify the Grafana Service

# The Grafana Service configuration above uses NodePort 30010.

Enter http://192.168.10.127:30010/ in a browser and log in with the default username and password admin/admin.

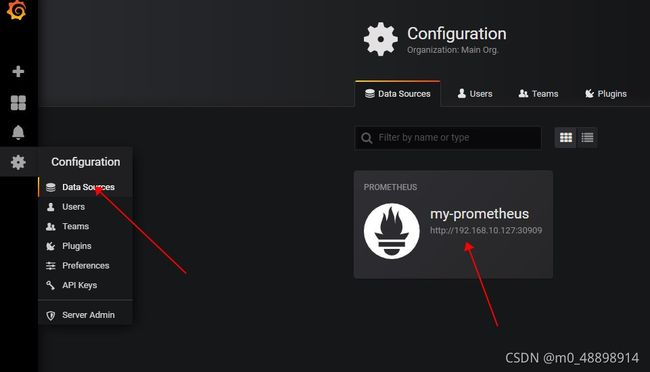

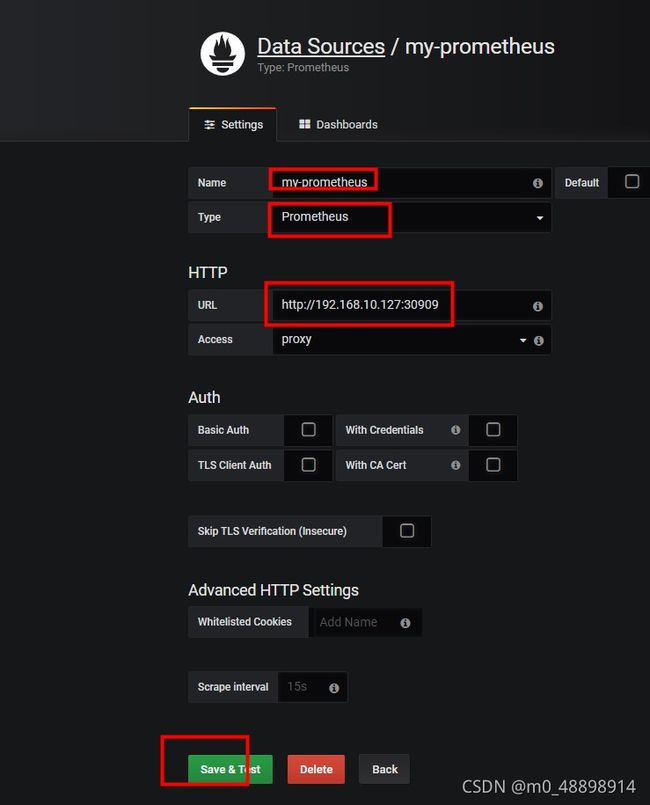

6.4 Add a Data Source

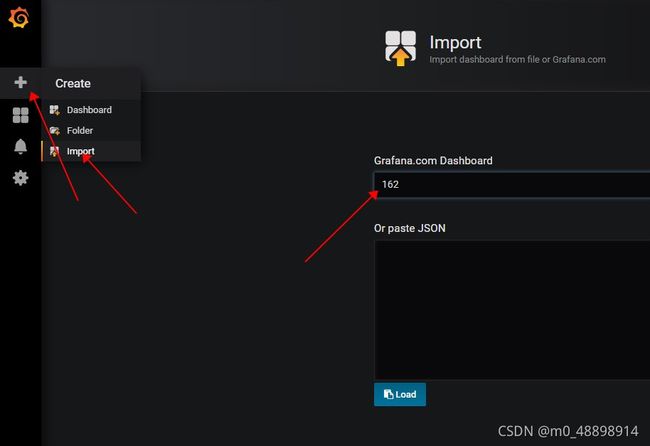
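The screenshot shows the data source being added through the web UI. Grafana 5.0 and later can also provision data sources from a file at startup. The sketch below makes two assumptions. First, the file is mounted into the Grafana container under `/etc/grafana/provisioning/datasources/`, for example from a ConfigMap. Second, Grafana reaches Prometheus through the in-cluster DNS name of the `prometheus-service` Service created in section 5.7.

```yaml
# Hypothetical file: /etc/grafana/provisioning/datasources/prometheus.yaml
apiVersion: 1
datasources:
  - name: Prometheus
    type: prometheus
    access: proxy   # the Grafana server, not the browser, sends the queries
    url: http://prometheus-service.monitoring.svc:9090   # <service>.<namespace>.svc:<port>
    isDefault: true
```

The same URL also works when you add the data source by hand in the UI.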

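6.5 Configure Grafana Dashboards

Other features, such as adding users, changing passwords, and setting the time zone, are not covered here.

# View the monitoring dashboard we just added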
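Besides importing dashboards through the web UI, Grafana 5.0 and later can load dashboard JSON files from disk through a dashboard provider. The sketch below assumes the provider file is mounted under `/etc/grafana/provisioning/dashboards/`. The dashboard directory `/var/lib/grafana/dashboards` is a hypothetical location.

```yaml
# Hypothetical file: /etc/grafana/provisioning/dashboards/default.yaml
apiVersion: 1
providers:
  - name: default
    orgId: 1
    folder: ''            # import into the General folder
    type: file
    options:
      path: /var/lib/grafana/dashboards   # directory scanned for dashboard JSON files
```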