A Record of a Freelance Web-Scraping Job: Collecting Data from Alibaba.com (the "International Taobao")

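1. Background

A few days ago I took on a web-scraping job. I finished it last Saturday and have already been paid (a decent amount, which made me quietly happy). The whole project took about two days of spare time to write.

2. Overview

The data collection steps are roughly: 1) enter a product name; 2) search for suppliers; 3) scrape every product of every matching supplier, along with each supplier's transaction data.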

Alibaba.com link:

https://www.alibaba.com/

1. This scraper collects data from the Alibaba.com website.

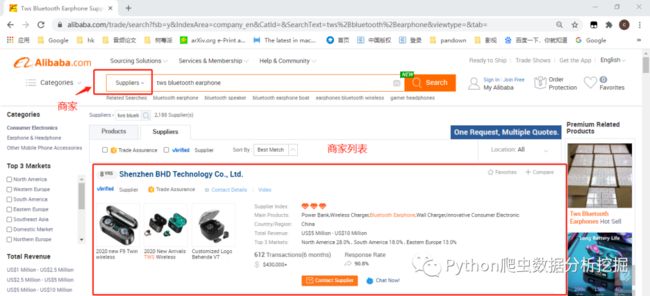

2. Search by product name, for example Bluetooth earphones:

tws+bluetooth+earphone

Link:

https://www.alibaba.com/trade/search?fsb=y&IndexArea=company_en&CatId=&SearchText=tws%2Bbluetooth%2Bearphone&viewtype=&tab=

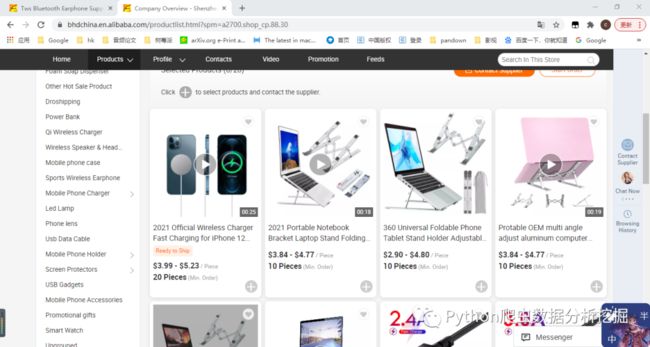

3. All products of one of the suppliers

Link:

https://bhdchina.en.alibaba.com/productlist.html?spm=a2700.shop_cp.88.30

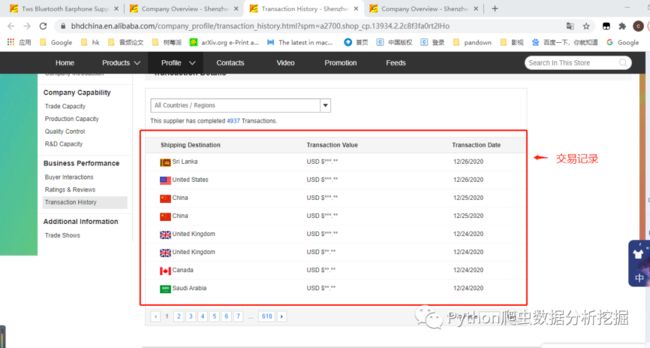

4. The corresponding transaction records

Link:

https://bhdchina.en.alibaba.com/company_profile/transaction_history.html?spm=a2700.shop_cp.13934.2.2c8f3fa0rt2lHo

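3. Scraping supplier information

Why scrape supplier information first? Both the product data and the transaction data are fetched by supplier name, so the suppliers have to be collected before anything else.

Import the libraries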

    import datetime
    import json
    import os
    import time

    import requests
    import xlwt
    from lxml import etree

Request headers for requests

    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:64.0) Gecko/20100101 Firefox/64.0'
    }

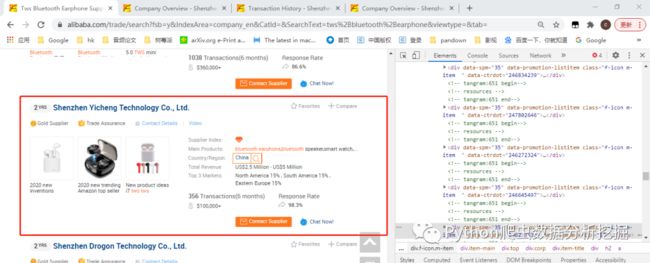

First, look at which fields need to be collected

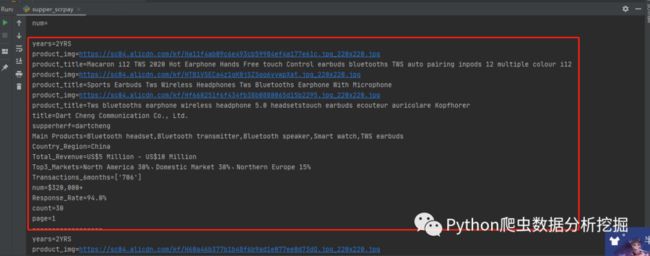

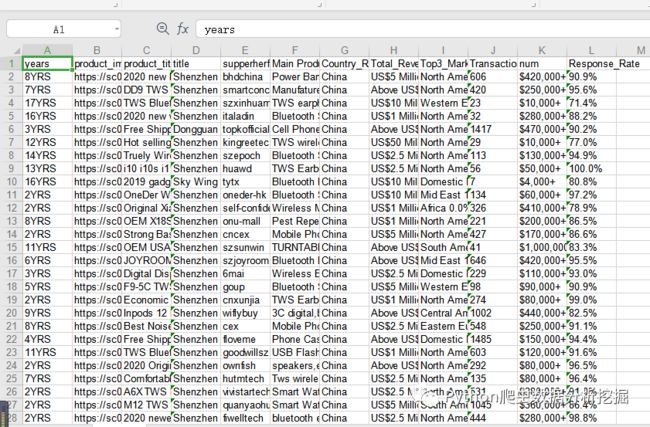

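All of the data inside the red boxes is needed (years, product_img, product_title, supperherf, Main Products, Country_Region, Total_Revenue, Top3_Markets, Transactions_6months, Response_Rate, ...).

Here supperherf is the supplier name extracted from the shop URL. It is needed later when scraping the product data and the transaction data.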
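The supplier name is simply the subdomain in front of ".en.alibaba.com". As a minimal sketch of that extraction (the helper name supplier_id_from_url is hypothetical, and it assumes shop links always use that host suffix), the standard-library URL parser can be used instead of manual string indexes:

```python
from urllib.parse import urlparse

SHOP_SUFFIX = ".en.alibaba.com"


def supplier_id_from_url(href):
    """Return the shop subdomain, e.g. 'bhdchina', or None for non-shop links."""
    # urlparse also understands protocol-relative links such as "//shop.en.alibaba.com/"
    host = urlparse(href).hostname or ""
    if not host.endswith(SHOP_SUFFIX):
        return None
    return host[: -len(SHOP_SUFFIX)]


print(supplier_id_from_url(
    "https://bhdchina.en.alibaba.com/productlist.html?spm=a2700.shop_cp.88.30"
))
```

Returning None for links that do not match makes it easy to skip cards that point somewhere other than a supplier shop.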

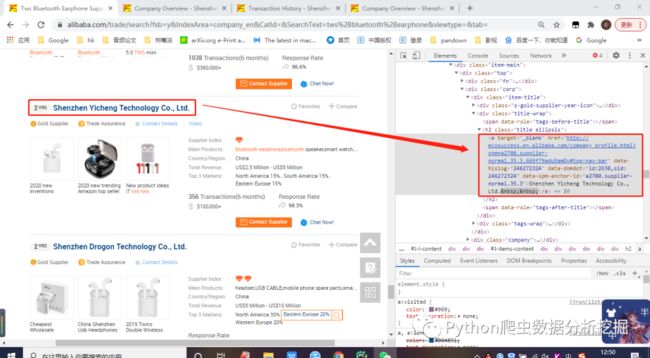

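Parsing the page tags

For example, the supplier name sits in a div whose class is "title ellipsis", and XPath can pull its content out in code. This part is fairly simple, so I only describe the principle; beginners who get stuck can study my earlier articles.

Requesting the URL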

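    # page and keyword come from the surrounding loop / user input
    url = "https://www.alibaba.com/trade/search?spm=a2700.supplier-normal.16.1.7b4779adaAmpGa&page=" + str(page) + "&f1=y&n=38&viewType=L&keyword=" + keyword + "&indexArea=company_en"
    r = requests.get(url, headers=headers)
    r.encoding = 'utf-8'
    s = r.text
    # Build the lxml tree that the XPath queries below run against
    selector = etree.HTML(s)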
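Concatenating the query string by hand works, but the keyword then has to be URL-safe already. A minimal sketch of building the same search URL with the standard library follows; build_search_url is a hypothetical helper, and it assumes the spm tracking parameter can be left out, which the original does not confirm:

```python
from urllib.parse import urlencode

SEARCH_BASE = "https://www.alibaba.com/trade/search"


def build_search_url(keyword, page):
    """Build the supplier search URL for one result page."""
    params = {
        "page": page,
        "f1": "y",
        "n": 38,
        "viewType": "L",
        "keyword": keyword,          # spaces are encoded as '+'
        "indexArea": "company_en",   # search suppliers rather than products
    }
    return SEARCH_BASE + "?" + urlencode(params)


print(build_search_url("tws bluetooth earphone", 1))
```

With this approach the user can type the product name with ordinary spaces, and urlencode produces the tws+bluetooth+earphone form.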

Parsing the field contents

    items = selector.xpath('//*[@class="f-icon m-item "]')
    if len(items) > 1:
        for item in items:
            try:
                # Years as a Gold Supplier
                years = item.xpath('.//*[@class="s-gold-supplier-year-icon"]/text()')
                print("years=" + str(years[0]) + "YRS")
                # Featured products shown on the supplier card
                for i in item.xpath('.//*[@class="product"]'):
                    product_img = i.xpath('.//*[@class="img-thumb"]/@data-big')[0]
                    product_title = i.xpath('.//a/@title')[0]
                    product_img = str(product_img)
                    # Cut the image URL out of the data-big attribute string
                    index1 = product_img.index("imgUrl:'")
                    index2 = product_img.index("title:")
                    product_img = "https:" + product_img[index1 + 8:index2 - 2]
                    print("product_img=" + str(product_img))
                    print("product_title=" + str(product_title))
                # Supplier name as displayed
                title = item.xpath('.//*[@class="title ellipsis"]/a/text()')
                print("title=" + str(title[0]))
                # Supplier subdomain taken from the shop link
                supperherf = item.xpath('.//*[@class="title ellipsis"]/a/@href')[0]
                index1 = supperherf.index("://")
                index2 = supperherf.index("en.alibaba")
                supperherf = supperherf[index1 + 3:index2 - 1]
                print("supperherf=" + str(supperherf))
                Main_Products = item.xpath('.//*[@class="value ellipsis ph"]/@title')
                Main_Products = "、".join(Main_Products)
                print("Main Products=" + str(Main_Products))
                # First entry is the country, second the revenue, the rest are markets
                CTT = item.xpath('.//*[@class="ellipsis search"]/text()')
                Country_Region = CTT[0]
                Total_Revenue = CTT[1]
                Top3_Markets = CTT[2:]
                Top3_Markets = "、".join(Top3_Markets)
                print("Country_Region=" + str(Country_Region))
                print("Total_Revenue=" + str(Total_Revenue))
                print("Top3_Markets=" + str(Top3_Markets))
                Transactions_6months = item.xpath('.//*[@class="lab"]/b/text()')
                print("Transactions_6months=" + str(Transactions_6months))
                num = item.xpath('.//*[@class="num"]/text()')[0]
                print("num=" + str(num))
                Response_Rate = item.xpath('.//*[@class="record util-clearfix"]/li[2]/div[2]/a/text()')[0]
                print("Response_Rate=" + str(Response_Rate))
                count = count + 1
                print("count=" + str(count))
                print("page=" + str(page))
                print("------------------")
            except Exception as e:
                # A card with a missing field is skipped instead of stopping the run
                print("skipping item: " + str(e))
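The index arithmetic used for product_img depends on the exact layout of the data-big attribute. As a hedged alternative, a regular expression can pull out whatever sits between imgUrl:' and the next quote. The helper name and the sample string below are hypothetical; the sample only mimics the layout that the slicing above implies:

```python
import re

# Captures the value inside imgUrl:'...'
IMG_URL_RE = re.compile(r"imgUrl:'([^']+)'")


def image_url_from_data_big(data_big):
    """Return the full image URL found in a data-big attribute, or None."""
    match = IMG_URL_RE.search(data_big)
    if match is None:
        return None
    url = match.group(1)
    # Protocol-relative URLs get an explicit scheme, as in the original code
    return "https:" + url if url.startswith("//") else url


sample = "{imgUrl:'//example.com/img/sample.jpg',title:'TWS earphone'}"
print(image_url_from_data_big(sample))
```

Unlike str.index, this does not raise when the attribute has an unexpected shape, so one malformed card does not need a try/except to be skipped.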

Parsing results

That completes the supplier data. Next comes the product data of each supplier.

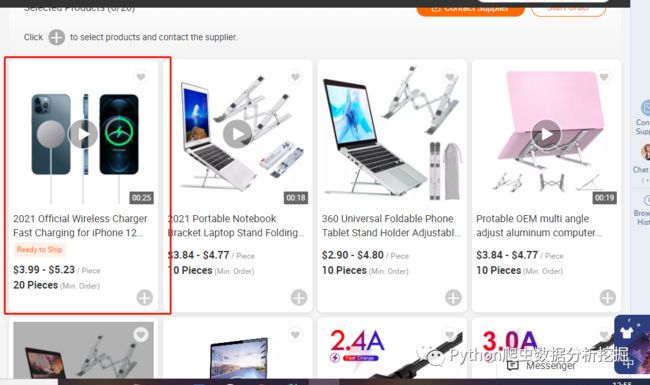

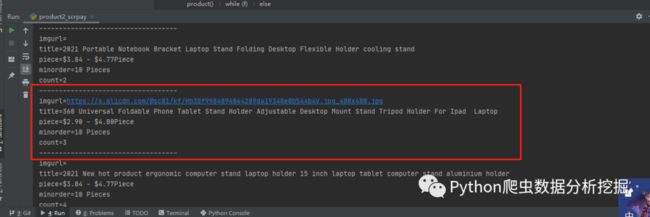

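4. Collecting product data

There are far fewer fields here: product image (imgurl), name (title), price (piece), and minimum order quantity (minorder).

Parsing the page tags

Requesting the page data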

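    # compayname is the supplier subdomain (supperherf) collected in the previous step
    url = "https://" + str(compayname) + ".en.alibaba.com/productlist-" + str(
        page) + ".html?spm=a2700.shop_pl.41413.41.140b44809b9ZBY&filterSimilar=true&filter=null&sortType=null"
    r = requests.get(url, headers=headers)
    r.encoding = 'utf-8'
    s = r.text
    # Build the lxml tree that the XPath queries below run against
    selector = etree.HTML(s)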

Parsing the tag contents

    items = selector.xpath('//*[@class="icbu-product-card vertical large product-item"]')
    if len(items) > 1:
        try:
            for item in items:
                imgurl = item.xpath(
                    './/*[@class="next-row next-row-no-padding next-row-justify-center next-row-align-center img-box"]/img/@src')
                title = item.xpath('.//*[@class="product-info"]/div/a/span/text()')
                piece = item.xpath('.//*[@class="product-info"]/div[@class="price"]/span/text()')
                minorder = item.xpath('.//*[@class="product-info"]/div[@class="moq"]/span/text()')
                print("imgurl=" + str("".join(imgurl)))
                print("title=" + str(title[0]))
                print("piece=" + str("".join(piece)))
                print("minorder=" + str(minorder[0]))
                print("count=" + str(count))
                print("-----------------------------------")
                count = count + 1
        except Exception as e:
            # A product card without a title or MOQ ends this page's parsing
            print("stopped parsing this page: " + str(e))
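The snippets above handle a single page. One common way to walk through every page is to keep requesting the next one until a page comes back empty. The sketch below shows that loop in isolation; collect_all_pages and the fake fetcher are hypothetical stand-ins, and a real fetch_page would wrap the request and XPath code shown above:

```python
def collect_all_pages(fetch_page, max_pages=100):
    """Call fetch_page(1), fetch_page(2), ... until a page returns no items."""
    results = []
    for page in range(1, max_pages + 1):
        items = fetch_page(page)
        if not items:
            break  # an empty page means the listing has ended
        results.extend(items)
    return results


# Stand-in for the real scraper: two pages of data, then nothing
fake_site = {1: ["product a", "product b"], 2: ["product c"]}
print(collect_all_pages(lambda page: fake_site.get(page, [])))
```

The max_pages cap is a safety net so that a site which never returns an empty page cannot keep the loop running forever.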

Scraping results

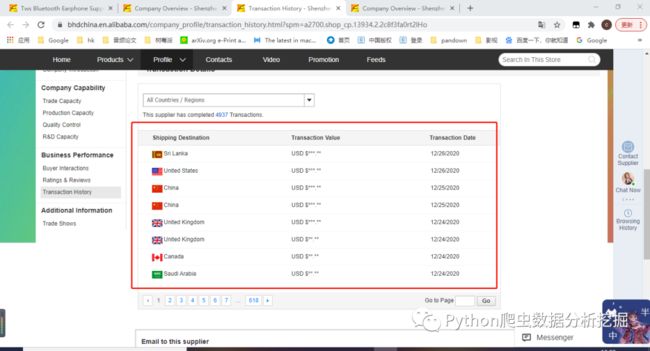

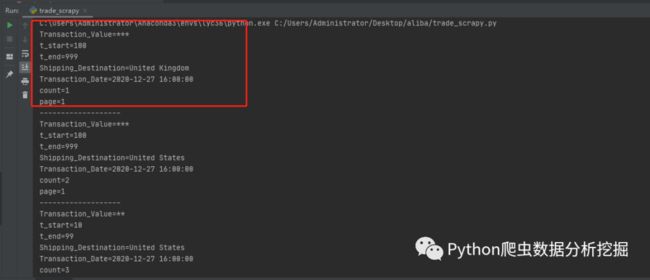

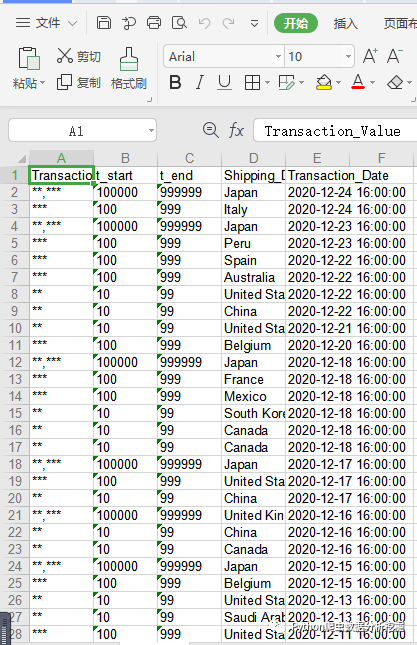

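5. Scraping transaction data

The transaction data also has very few fields, only three: transaction amount (Transaction_Value), the buyer's country (Shipping_Destination), and transaction time (Transaction_Date).

Notes

1) The amount has the form ***.**. The client asked for the part after the decimal point to be dropped and for the integer part to be expressed as a range by digit count: three digits becomes 100~999, two digits becomes 10~99.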

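    # Keep only the integer part of the amount
    Transaction_Value = Transaction_Value.split(".")[0]
    t_len = len(Transaction_Value)
    t_start = '1'
    t_end = '9'
    # Append one digit per extra place, e.g. two digits gives 10 and 99
    for j in range(1, t_len):
        t_start = t_start + "0"
        t_end = t_end + '9'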
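The same digit-count rule can be wrapped in a small function, which makes it easy to check against the examples in the requirement. This is a sketch of the rule as described above; the function name amount_range is hypothetical:

```python
def amount_range(amount):
    """Map an amount such as '123.45' to the (low, high) bounds for its digit count."""
    integer_part = str(amount).split(".")[0]
    digits = len(integer_part)
    if digits == 0:
        raise ValueError("amount has no integer part: %r" % (amount,))
    low = "1" + "0" * (digits - 1)   # 1, 10, 100, ...
    high = "9" * digits              # 9, 99, 999, ...
    return low, high


print(amount_range("123.45"))
print(amount_range("12.50"))
```

Only the number of characters before the decimal point matters, so the function gives the same result as the loop above for any input of the same length.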

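2) The page shows dates in a form like 12/26/2020, but the scraped value is a Unix timestamp such as 1607068800, which has to be converted into a readable date and time. That particular timestamp corresponds to 2020-12-04 16:00:00 in China Standard Time (UTC+8).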

    def todate(timeStamp):
        # timeStamp is in seconds, e.g. 1607068800
        # fromtimestamp() converts using the local time zone of the machine
        dateArray = datetime.datetime.fromtimestamp(timeStamp)
        otherStyleTime = dateArray.strftime("%Y-%m-%d %H:%M:%S")
        return otherStyleTime
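Because fromtimestamp() without a time zone uses the local zone of whichever machine runs the script, the same timestamp can print differently on the client's computer. A minimal sketch that pins the zone explicitly is shown below; ms_to_datetime_str is a hypothetical helper, and using UTC+8 as the default is an assumption about what the client expects:

```python
from datetime import datetime, timedelta, timezone

CHINA_TZ = timezone(timedelta(hours=8))  # fixed UTC+8 offset


def ms_to_datetime_str(timestamp_ms, tz=CHINA_TZ):
    """Format a millisecond Unix timestamp in the given time zone."""
    dt = datetime.fromtimestamp(timestamp_ms // 1000, tz=tz)
    return dt.strftime("%Y-%m-%d %H:%M:%S")


print(ms_to_datetime_str(1607068800000))                   # 2020-12-04 16:00:00
print(ms_to_datetime_str(1607068800000, tz=timezone.utc))  # 2020-12-04 08:00:00
```

Taking milliseconds directly also replaces the string slicing that strips the last three digits before conversion.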

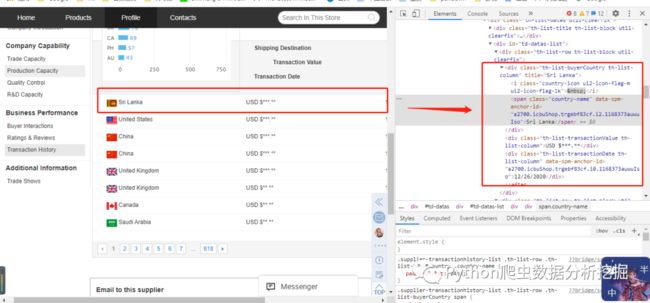

Parsing the page tags

Requesting the page data

    # This endpoint returns JSON, so no XPath is needed here
    url = "https://" + str(comapyname) + ".en.alibaba.com/core/CommonSupplierTransactionHistoryWidget/list.action?dmtrack_pageid=705d8dfe0b14ebf35fe2e8411768e5b42b8bf0e7a7&page=" + str(page) + "&size=8&aliMemberEncryptId=IDX1XQIkua5DjeLlKZC11XM1vlbptpTQfKxDA__pNkStGmQpqTMbPXOgDkVD6T7jySw3&_=1608706140145"
    r = requests.get(url)
    r.encoding = 'gbk'
    s = json.loads(r.text)
    items = s['data']['tradeList']['value']['resultList']

Parsing the contents

    if len(items) > 1:
        for i in items:
            # Amount: keep the integer part and derive the range bounds
            Transaction_Value = i['amt']
            Transaction_Value = str(Transaction_Value)
            Transaction_Value = Transaction_Value.split(".")[0]
            t_len = len(Transaction_Value)
            t_start = '1'
            t_end = '9'
            for j in range(1, t_len):  # e.g. two digits gives 10 and 99
                t_start = t_start + "0"
                t_end = t_end + '9'
            print("Transaction_Value=" + str(Transaction_Value))
            print("t_start=" + t_start)
            print("t_end=" + t_end)
            Shipping_Destination = i['countryFullName']
            print("Shipping_Destination=" + str(Shipping_Destination))
            # tradeDate is in milliseconds; drop the last three digits to get seconds
            Transaction_Date = i['tradeDate']
            Transaction_Date = int(str(Transaction_Date)[:-3])
            Transaction_Date = todate(Transaction_Date)
            print("Transaction_Date=" + str(Transaction_Date))
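Indexing s['data']['tradeList']['value']['resultList'] directly raises an error whenever a supplier has no transaction history or the response shape differs. The sketch below walks the same keys defensively. The helper names and the sample payload are hypothetical; only the key names are taken from the code above:

```python
import json


def dig(obj, *keys):
    """Follow nested dict keys, returning None as soon as one is missing."""
    for key in keys:
        if not isinstance(obj, dict):
            return None
        obj = obj.get(key)
    return obj


def parse_trades(raw_json):
    """Return a list of simplified trade records from the JSON response text."""
    payload = json.loads(raw_json)
    rows = dig(payload, "data", "tradeList", "value", "resultList") or []
    return [
        {
            "amount": str(row.get("amt", "")),
            "country": row.get("countryFullName", ""),
            "trade_date_ms": row.get("tradeDate"),
        }
        for row in rows
    ]


# Made-up payload that only mimics the structure used above
sample = json.dumps({"data": {"tradeList": {"value": {"resultList": [
    {"amt": "123.45", "countryFullName": "United States", "tradeDate": 1607068800000}
]}}}})
print(parse_trades(sample))
print(parse_trades('{"data": null}'))
```

An empty list for a missing branch lets the calling loop treat "no transactions" the same way as "no more pages".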

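Scraping results

At this point the data collection work is essentially complete.

6. Saving to a spreadsheet file

After the data has been collected it needs to be saved to a file. The code below uses xlwt, so the output is an Excel .xls workbook rather than a plain-text CSV file.

Import the xlwt library

    import xlwt

Writing the spreadsheet from Python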

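    # Create a workbook and set its encoding
    workbook = xlwt.Workbook(encoding='utf-8')
    worksheet = workbook.add_sheet('sheet1')
    # Arguments are row, column, value
    worksheet.write(0, 0, label="李运辰")
    # save() fails if the target folder is missing, so create it first
    os.makedirs("lyc", exist_ok=True)
    workbook.save("lyc/lyc_" + str("李运辰") + '.xls')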

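If you are not familiar with writing spreadsheets from Python, see this article:

One Article to Master Reading, Writing, and Processing Excel Sheets with Python (Handling xlsx Files)

Saving supplier data to the spreadsheet

Header row of the Excel sheet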

    # Create a worksheet
    worksheet = workbook.add_sheet('sheet1')
    # Arguments are row, column, value
    worksheet.write(0, 0, label='years')
    worksheet.write(0, 1, label='product_imgs')
    worksheet.write(0, 2, label='product_titles')
    worksheet.write(0, 3, label='title')
    worksheet.write(0, 4, label='supperherf')
    worksheet.write(0, 5, label='Main Products')
    worksheet.write(0, 6, label='Country_Region')
    worksheet.write(0, 7, label='Total_Revenue')
    worksheet.write(0, 8, label='Top3_Markets')
    worksheet.write(0, 9, label='Transactions_6months')
    worksheet.write(0, 10, label='num')
    worksheet.write(0, 11, label='Response_Rate')

Writing the data

    # count is the current row; product_imgs and product_titles are the lists
    # of image URLs and titles gathered for this supplier
    worksheet.write(count, 0, label=str(years[0]) + "YRS")
    worksheet.write(count, 1, label=str(" , ".join(product_imgs)))
    worksheet.write(count, 2, label=str(" , ".join(product_titles)))
    worksheet.write(count, 3, label=str(title[0]))
    worksheet.write(count, 4, label=str(supperherf))
    worksheet.write(count, 5, label=str(Main_Products))
    worksheet.write(count, 6, label=str(Country_Region))
    worksheet.write(count, 7, label=str(Total_Revenue))
    worksheet.write(count, 8, label=str(Top3_Markets))
    worksheet.write(count, 9, label=str(Transactions_6months[0]))
    worksheet.write(count, 10, label=str(num))
    worksheet.write(count, 11, label=str(Response_Rate))
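If a plain CSV file is what is actually wanted, the standard-library csv module can write the same rows without xlwt. The following is a minimal sketch, not the code used in this project: the function name, the reduced field list, and the sample row are all hypothetical.

```python
import csv
import io

# A shortened field list for illustration; the real sheet has twelve columns
FIELDS = ["years", "title", "supperherf", "Country_Region", "Total_Revenue"]


def write_suppliers_csv(rows, fileobj):
    """Write a header line followed by one CSV line per supplier dict."""
    writer = csv.DictWriter(fileobj, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)


buffer = io.StringIO()
write_suppliers_csv(
    [{"years": "5YRS", "title": "Example Co., Ltd.", "supperherf": "example",
      "Country_Region": "China", "Total_Revenue": "unknown"}],
    buffer,
)
print(buffer.getvalue())
```

To write a real file, pass open(path, "w", newline="", encoding="utf-8-sig") instead of the StringIO buffer; the utf-8-sig encoding helps Excel recognize non-ASCII text when it opens the CSV.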

Supplier data

Product data

Transaction data

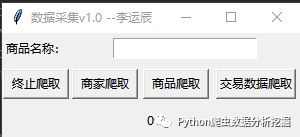

7. Wrapping up

To make the tool easy for the client to use, I also wrote a command-line menu.

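    if __name__ == '__main__':
        menu()
        f = 1
        while f:
            # Ask on every pass; reading the choice once before the loop
            # would repeat the same task forever
            me = int(input("Enter a choice: "))
            if me == 1:
                print("Start scraping product data")
                get_product()
            elif me == 2:
                ke = input("Enter a product name: ")
                print("Start scraping supplier data, keyword: " + str(ke))
                supper(ke)
            elif me == 3:
                print("Start scraping transaction data")
                get_trade()
            else:
                # Any other number exits
                f = 0
                break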
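As the number of menu entries grows, an if/elif chain can be replaced by a dictionary that maps each choice to a function. The sketch below illustrates that pattern with placeholder actions; run_menu and the scripted answers are hypothetical, and in the real tool the actions would be get_product, supper, and get_trade:

```python
def run_menu(actions, read_choice=input):
    """Prompt repeatedly; stop when the answer is not a known menu choice."""
    while True:
        choice = read_choice("Enter a choice: ").strip()
        action = actions.get(choice)
        if action is None:
            break
        action()


# Scripted answers stand in for keyboard input so the example runs unattended
calls = []
answers = iter(["1", "3", "q"])
run_menu(
    {
        "1": lambda: calls.append("product"),
        "2": lambda: calls.append("supplier"),
        "3": lambda: calls.append("trade"),
    },
    read_choice=lambda prompt: next(answers),
)
print(calls)
```

Keeping the choice as a string also avoids the ValueError that int(input(...)) raises when the user types something that is not a number.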

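tkinter interface

To make the tool easier to use on other machines, I also built a graphical interface in Python with tkinter.

Interface source code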

    import tkinter as tk

    # isstart, supper, product and get_trade are the button callbacks
    # defined elsewhere in the program
    master = tk.Tk()
    # Window title
    master.title("Data Collector v1.0 --李运辰")
    # Fixed width, resizable height
    master.resizable(width=False, height=True)
    tk.Label(master, text="Product name:").grid(row=0)
    # tk.Label(master, text="Author:").grid(row=1)
    e1 = tk.Entry(master)
    # e2 = tk.Entry(master)
    e1.grid(row=0, column=1, columnspan=4, padx=10, pady=5)
    # Status label (the original line was missing its closing parenthesis)
    w = tk.Label(master, text=str(0))
    tk.Button(master, text="Stop", width=8, command=isstart).grid(row=3, column=0, sticky="w", padx=1, pady=5)
    tk.Button(master, text="Suppliers", width=8, command=supper).grid(row=3, column=1, sticky="e", padx=2, pady=2)
    tk.Button(master, text="Products", width=8, command=product).grid(row=3, column=2, sticky="e", padx=3, pady=5)
    tk.Button(master, text="Transactions", width=10, command=get_trade).grid(row=3, column=3, sticky="e", padx=4, pady=5)
    w.grid(row=4, column=0, columnspan=4, sticky="nesw", padx=0, pady=5)
    master.mainloop()
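The interface has a stop button, but the callback behind it is not shown. One common way to make a long-running loop stoppable is a threading.Event that the loop checks before each page. The sketch below shows only that mechanism, without any GUI; the function and variable names are hypothetical and this is not necessarily how isstart is implemented:

```python
import threading


def scrape_until_stopped(stop_event, handle_page, max_pages=1000):
    """Process pages in order until stop_event is set; return the pages handled."""
    done = 0
    for page in range(1, max_pages + 1):
        if stop_event.is_set():
            break
        handle_page(page)
        done += 1
    return done


stop_event = threading.Event()
seen = []


def handle_page(page):
    seen.append(page)
    if page == 3:
        stop_event.set()  # simulates the user pressing the stop button


print(scrape_until_stopped(stop_event, handle_page))
print(seen)
```

In a tkinter program the scraping function would typically run in a threading.Thread so that the window stays responsive, and the stop button could use command=stop_event.set.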

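Summary

1. That covers the process and the work involved in this freelance job. I wrote this post to keep a record of it; looking back on it later will probably be enjoyable. I am also sharing it so that beginners can learn from it.

2. If you have any questions, leave a comment below so that we can learn from each other.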
