K8s Day 2: Binary Installation and Deployment of K8s


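K8S Binary Installation and Deployment

- kubernetes

How do k8s and Docker relate?

k8s is a container orchestration platform; Docker is a container runtime.

- Roles and deployment

Cluster roles:

- master node: manages the cluster

- node (worker) node: mainly runs application workloads

Components deployed on master nodes:

- kube-apiserver: the central API entry point through which the cluster is managed

- kube-controller-manager: runs the controllers that manage and monitor containers

- kube-scheduler: the scheduler, which assigns containers to nodes

- flannel: provides the cluster-wide network

- etcd: the cluster database

- kubelet

- kube-proxy

Components deployed on worker nodes:

- kubelet: starts and monitors containers

- kube-proxy: provides networking between containers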

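Tuning the Nodes and Installing Docker

- Run all of the following on every node

- Prepare the machines and plan the nodes

- Add the following entries to the hosts file on every node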

192.168.12.51 172.16.1.51 k8s-m1 m1

192.168.12.52 172.16.1.52 k8s-m2 m2

192.168.12.53 172.16.1.53 k8s-m3 m3

192.168.12.54 172.16.1.54 k8s-n1 n1

192.168.12.55 172.16.1.55 k8s-n2 n2

192.168.12.56 172.16.1.56 k8s-m-vip vip # virtual IP (VIP)
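Every later step relies on the short names (m1, n1, vip and so on) resolving on each node. A minimal sketch of a check, assuming a hypothetical helper `missing_hosts` that is not part of the original guide; it prints each name that does not appear on a non-comment line of the given hosts file:

```shell
#!/bin/sh
# Hypothetical helper: print every NAME that is absent from a hosts file.
# Usage: missing_hosts FILE NAME...
missing_hosts() {
    file=$1
    shift
    for name in "$@"; do
        # A name counts as present when it is a whole field (after the first
        # field, the IP) on a line that is not a comment.
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) { if ($i ~ /^#/) break; if ($i == n) found = 1 } }
            END { exit found ? 0 : 1 }' "$file"; then
            printf '%s\n' "$name"
        fi
    done
}

# Example: missing_hosts /etc/hosts m1 m2 m3 n1 n2 vip
```

An empty result means all names are present; any printed name still needs an entry.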

- Component layout for reference

# Master node components

kube-apiserver

kube-controller-manager

kube-scheduler

flannel

etcd

kubelet

kube-proxy

# Worker node components

kubelet

kube-proxy

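I. System Tuning

- Run all of the following on every node

# Disable SELinux immediately and across reboots

setenforce 0

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

systemctl disable --now firewalld

# Turn off swap

swapoff -a

# Edit /etc/fstab and comment out the swap entry so that swap stays off after a reboot

echo 'KUBELET_EXTRA_ARGS="--fail-swap-on=false"' > /etc/sysconfig/kubelet # let kubelet tolerate swap

# Set up passwordless SSH

[root@k8s-m-01 ~]# ssh-keygen -t rsa

[root@k8s-m-01 ~]# for i in m1 m2 m3 n1 n2;do ssh-copy-id -i ~/.ssh/id_rsa.pub root@$i; done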

# Synchronize the cluster time

# Configure the yum mirror

[root@k8s-m-01 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://repo.huaweicloud.com/repository/conf/CentOS-7-reg.repo

[root@k8s-m-01 ~]# yum clean all

[root@k8s-m-01 ~]# yum makecache

# Update the system

[root@k8s-m-01 ~]# yum update -y --exclude=kernel*

# Install common base packages

[root@k8s-m-01 ~]# yum install wget expect vim net-tools ntp bash-completion ipvsadm ipset jq iptables conntrack sysstat libseccomp -y

# Upgrade the kernel (Docker is demanding about the kernel version; 4.4+ is recommended)

[root@k8s-m-01 ~]# wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-5.4.107-1.el7.elrepo.x86_64.rpm

[root@k8s-m-01 ~]# wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-devel-5.4.107-1.el7.elrepo.x86_64.rpm

## Install the kernel

[root@k8s-m-01 ~]# yum localinstall -y kernel-lt*

## Make the new kernel the default boot entry

[root@k8s-m-01 ~]# grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

## Show the current default kernel

[root@k8s-m-01 ~]# grubby --default-kernel

## Reboot

[root@k8s-m-01 ~]# reboot

# Install IPVS

yum install -y conntrack-tools ipvsadm ipset conntrack libseccomp

## Load the IPVS modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_fo ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in \${ipvs_modules}; do

/sbin/modinfo -F filename \${kernel_module} > /dev/null 2>&1

if [ \$? -eq 0 ]; then

/sbin/modprobe \${kernel_module}

fi

done

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

# Set kernel parameters

cat > /etc/sysctl.d/k8s.conf << EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl = 15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.netfilter.nf_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

# Apply immediately

sysctl --system
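A misspelled sysctl key is easy to miss among the other output of `sysctl --system`. As a rough sketch (the helper names `sysctl_key_to_path` and `unknown_sysctl_keys` are made up for illustration), each key in a sysctl.d file can be mapped to its `/proc/sys` path and checked for existence. It assumes no key component itself contains a dot, which holds for the keys in this guide:

```shell
#!/bin/sh
# Map a sysctl key such as net.ipv4.ip_forward to its /proc/sys path.
sysctl_key_to_path() {
    printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# Print every key in a sysctl.d-style file that the running kernel does not expose.
unknown_sysctl_keys() {
    # Drop comments and blank lines, then keep only the key (the text before "=").
    sed -e 's/#.*//' -e '/^[[:space:]]*$/d' -e 's/[[:space:]]*=.*//' -e 's/^[[:space:]]*//' "$1" |
    while IFS= read -r key; do
        [ -e "$(sysctl_key_to_path "$key")" ] || printf '%s\n' "$key"
    done
}

# Example: unknown_sysctl_keys /etc/sysctl.d/k8s.conf
```

A key can also be reported simply because its kernel module is not loaded yet; the `net.bridge.*` keys, for instance, only appear once `br_netfilter` is loaded.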

II. Installing Docker

- Run all of the following on every node

# Remove any previously installed Docker

[root@k8s-m-01 ~]# sudo yum remove docker docker-common docker-selinux docker-engine

# Install the packages Docker depends on

[root@k8s-m-01 ~]# sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Add the Docker yum repository

[root@k8s-m-01 ~]# wget -O /etc/yum.repos.d/docker-ce.repo https://repo.huaweicloud.com/docker-ce/linux/centos/docker-ce.repo

# Install Docker

[root@k8s-m-01 ~]# yum install docker-ce -y

# Start Docker now and enable it at boot

[root@k8s-m-01 ~]# systemctl enable --now docker.service

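III. Generating and Issuing the Cluster Certificates

- The following commands only need to run on master1

1. Prepare the certificate tooling

# Download the certificate tools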

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

# Make them executable

chmod +x cfssl*

# Move them into /usr/local/bin

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl_linux-amd64 /usr/local/bin/cfssl

2. Create the root CA signing configuration

mkdir -p /opt/cert/ca

cat > /opt/cert/ca/ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "8760h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "8760h"

}

}

}

}

EOF
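The `expiry` values in the signing configuration are given in hours. A small conversion sketch (the function name `expiry_hours_to_days` is illustrative only) makes the validity period easier to read: 8760h is one year, and 87600h is roughly ten years.

```shell
#!/bin/sh
# Convert a cfssl-style expiry such as "8760h" into whole days.
expiry_hours_to_days() {
    hours=${1%h}            # drop the trailing "h"
    echo $(( hours / 24 ))
}

expiry_hours_to_days 8760h    # prints 365
expiry_hours_to_days 87600h   # prints 3650
```

Certificates signed with a one-year profile have to be reissued before they expire, so the value is worth choosing deliberately.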

3. Create the root CA certificate signing request

cat > /opt/cert/ca/ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names":[{

"C": "CN",

"ST": "ShangHai",

"L": "ShangHai"

}]

}

EOF

Certificate fields

| Field | Meaning |

|---|---|

| C | Country |

| ST | State or province |

| L | Locality (city) |

| O | Organization |

| OU | Organizational unit |

4. Generate the root certificate

[root@k8s-m-01 /opt/cert/ca]# cd /opt/cert/ca

[root@k8s-m-01 /opt/cert/ca]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# Output

2021/03/26 17:34:55 [INFO] generating a new CA key and certificate from CSR

2021/03/26 17:34:55 [INFO] generate received request

2021/03/26 17:34:55 [INFO] received CSR

2021/03/26 17:34:55 [INFO] generating key: rsa-2048

2021/03/26 17:34:56 [INFO] encoded CSR

2021/03/26 17:34:56 [INFO] signed certificate with serial number 661764636777400005196465272245416169967628201792

# List the generated files

[root@k8s-m-01 /opt/cert/ca]# ll

total 20

-rw-r--r-- 1 root root 285 Mar 26 17:34 ca-config.json

-rw-r--r-- 1 root root 960 Mar 26 17:34 ca.csr

-rw-r--r-- 1 root root 153 Mar 26 17:34 ca-csr.json

-rw------- 1 root root 1675 Mar 26 17:34 ca-key.pem

-rw-r--r-- 1 root root 1281 Mar 26 17:34 ca.pem

Parameter reference

| Parameter | Meaning |

|---|---|

| gencert | Generate a new key and a signed certificate |

| -initca | Initialize a new CA certificate |

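IV. Deploying the ETCD Cluster

- ETCD should be highly available: at least three nodes are generally recommended, and enterprises commonly run five, which tolerates the loss of two

- Run the commands on one master node only

- etcd does not have to live on the master or worker nodes; like MySQL, it only needs to be reachable over the network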
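The node counts above follow from Raft majority arithmetic: a cluster of n members needs floor(n/2)+1 of them to agree, so it survives floor((n-1)/2) failures. A short sketch of that calculation (function names are illustrative):

```shell
#!/bin/sh
# Majority (quorum) size of an n-member cluster, and how many members may fail.
etcd_quorum() { echo $(( $1 / 2 + 1 )); }
etcd_fault_tolerance() { echo $(( ($1 - 1) / 2 )); }

for n in 1 3 5; do
    echo "members=$n quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

Three members tolerate one failure and five tolerate two; an even member count adds no extra tolerance over the odd count below it.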

1. Node plan

192.168.12.51 etcd-1

192.168.12.52 etcd-2

192.168.12.53 etcd-3

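2. Create the ETCD cluster certificate request

- hosts: list the IP of every machine that runs etcd. The last IP must not be followed by a comma (JSON does not allow a trailing comma)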

mkdir -p /opt/cert/etcd

cd /opt/cert/etcd

cat > etcd-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.12.51",

"192.168.12.52",

"192.168.12.53"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "ShangHai",

"L": "ShangHai"

}

]

}

EOF

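Configuration options

| Option | Description |

|---|---|

| --name | Node name |

| --data-dir | Data storage directory of the node |

| --listen-peer-urls | Address used to communicate with the other cluster members |

| --listen-client-urls | Local address and port on which client requests are served |

| --initial-advertise-peer-urls | This member's peer URL, advertised to the other cluster members |

| --advertise-client-urls | This member's client URL, advertised to the rest of the cluster |

| --initial-cluster | All members of the cluster |

| --initial-cluster-token | Cluster token; must be identical across the whole cluster |

| --initial-cluster-state | Initial cluster state; defaults to new |

| --cert-file | Path to the TLS certificate file for client-to-server traffic |

| --key-file | Path to the TLS key file for client-to-server traffic |

| --peer-cert-file | Path to the TLS certificate file for peer traffic |

| --peer-key-file | Path to the TLS key file for peer traffic |

| --trusted-ca-file | CA certificate that signed the client certificates; used to verify them |

| --peer-trusted-ca-file | CA certificate that signed the peer certificates |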

3. Generate the ETCD certificate

[root@k8s-m-01 /opt/cert/etcd]# cfssl gencert -ca=../ca/ca.pem -ca-key=../ca/ca-key.pem -config=../ca/ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2021/03/26 17:38:57 [INFO] generate received request

2021/03/26 17:38:57 [INFO] received CSR

2021/03/26 17:38:57 [INFO] generating key: rsa-2048

2021/03/26 17:38:58 [INFO] encoded CSR

2021/03/26 17:38:58 [INFO] signed certificate with serial number 179909685000914921289186132666286329014949215773

2021/03/26 17:38:58 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

Parameter reference

| Parameter | Meaning |

|---|---|

| gencert | Generate a new key and a signed certificate |

| -initca | Initialize a new CA |

| -ca | The CA certificate |

| -ca-key | The CA private key |

| -config | The signing configuration file (ca-config.json) |

| -profile | The profile inside the -config file whose settings are used to issue the certificate |

4. Distribute the ETCD certificates

[root@k8s-m-01 /opt/cert/etcd]# for ip in m{1..3};do

ssh root@${ip} "mkdir -pv /etc/etcd/ssl"

scp ../ca/ca*.pem root@${ip}:/etc/etcd/ssl

scp ./etcd*.pem root@${ip}:/etc/etcd/ssl

done

mkdir: created directory ‘/etc/etcd’

mkdir: created directory ‘/etc/etcd/ssl’

ca-key.pem 100% 1675 299.2KB/s 00:00

ca.pem 100% 1281 232.3KB/s 00:00

etcd-key.pem 100% 1675 1.4MB/s 00:00

etcd.pem 100% 1379 991.0KB/s 00:00

mkdir: created directory ‘/etc/etcd’

mkdir: created directory ‘/etc/etcd/ssl’

ca-key.pem 100% 1675 1.1MB/s 00:00

ca.pem 100% 1281 650.8KB/s 00:00

etcd-key.pem 100% 1675 507.7KB/s 00:00

etcd.pem 100% 1379 166.7KB/s 00:00

mkdir: created directory ‘/etc/etcd’

mkdir: created directory ‘/etc/etcd/ssl’

ca-key.pem 100% 1675 109.1KB/s 00:00

ca.pem 100% 1281 252.9KB/s 00:00

etcd-key.pem 100% 1675 121.0KB/s 00:00

etcd.pem 100% 1379 180.4KB/s 00:00

# Check the distributed certificates

[root@k8s-m-01 /opt/cert/etcd]# ll /etc/etcd/ssl/

total 16

-rw------- 1 root root 1675 Mar 26 17:41 ca-key.pem

-rw-r--r-- 1 root root 1281 Mar 26 17:41 ca.pem

-rw------- 1 root root 1675 Mar 26 17:41 etcd-key.pem

-rw-r--r-- 1 root root 1379 Mar 26 17:41 etcd.pem

5. Deploy ETCD

# Download the ETCD package

wget https://mirrors.huaweicloud.com/etcd/v3.3.24/etcd-v3.3.24-linux-amd64.tar.gz

# Unpack it

tar xf etcd-v3.3.24-linux-amd64.tar.gz

# Distribute the binaries to all etcd nodes

for i in m1 m2 m3;do scp ./etcd-v3.3.24-linux-amd64/etcd* root@$i:/usr/local/bin/;done

# On each of the three nodes, check the ETCD version to confirm the copy succeeded

[root@k8s-m-01 /opt/etcd-v3.3.24-linux-amd64]# etcd --version

etcd Version: 3.3.24

Git SHA: bdd57848d

Go Version: go1.12.17

Go OS/Arch: linux/amd64

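6. Register the ETCD service

- etcd runs on the three masters here, so run this step on all three master nodes

- The hostname and IP are read into variables so that the same commands register the service on every master

- INITIAL_CLUSTER: lists the servers that run etcd (hostname=https://ip:2380). Each hostname must match the machine's real hostname; aliases do not work!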
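The INITIAL_CLUSTER value is a comma-separated list of `name=https://ip:2380` entries. Typing it by hand invites typos, so as a minimal sketch it can be assembled from name/IP pairs; the helper `build_initial_cluster` is hypothetical and not part of the original steps:

```shell
#!/bin/sh
# Build the --initial-cluster value from "name=ip" pairs.
build_initial_cluster() {
    out=""
    for pair in "$@"; do
        name=${pair%%=*}    # text before the first "="
        ip=${pair#*=}       # text after the first "="
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

build_initial_cluster k8s-m1=192.168.12.51 k8s-m2=192.168.12.52 k8s-m3=192.168.12.53
```

The result can be assigned with `INITIAL_CLUSTER=$(build_initial_cluster ...)` before the unit file is written.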

mkdir -pv /etc/kubernetes/conf/etcd

ETCD_NAME=`hostname`

INTERNAL_IP=`hostname -I | awk '{print $1}'`

INITIAL_CLUSTER=k8s-m1=https://192.168.12.51:2380,k8s-m2=https://192.168.12.52:2380,k8s-m3=https://192.168.12.53:2380

cat << EOF | sudo tee /usr/lib/systemd/system/etcd.service

[Unit]

Description=etcd

Documentation=https://github.com/coreos

[Service]

ExecStart=/usr/local/bin/etcd \\

--name ${ETCD_NAME} \\

--cert-file=/etc/etcd/ssl/etcd.pem \\

--key-file=/etc/etcd/ssl/etcd-key.pem \\

--peer-cert-file=/etc/etcd/ssl/etcd.pem \\

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \\

--trusted-ca-file=/etc/etcd/ssl/ca.pem \\

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \\

--peer-client-cert-auth \\

--client-cert-auth \\

--initial-advertise-peer-urls https://${INTERNAL_IP}:2380 \\

--listen-peer-urls https://${INTERNAL_IP}:2380 \\

--listen-client-urls https://${INTERNAL_IP}:2379,https://127.0.0.1:2379 \\

--advertise-client-urls https://${INTERNAL_IP}:2379 \\

--initial-cluster-token etcd-cluster \\

--initial-cluster ${INITIAL_CLUSTER} \\

--initial-cluster-state new \\

--data-dir=/var/lib/etcd

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

# Start the ETCD service

systemctl enable --now etcd

# If etcd fails to start on another master node, inspect it with: systemctl status etcd

6月 21 13:12:54 k8s-master2 systemd[1]: Unit etcd.service entered failed state.

6月 21 13:12:54 k8s-master2 systemd[1]: etcd.service failed.

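# Likely cause: the IP captured from hostname -I is wrong. Edit the unit file, using master1's file as the reference

vim /usr/lib/systemd/system/etcd.service

# Usually the IP in these four lines has to be changed to the server's own IP; the ports stay the same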

--initial-advertise-peer-urls https://172.23.0.222:2380 \

--listen-peer-urls https://172.23.0.222:2380 \

--listen-client-urls https://172.23.0.222:2379,https://127.0.0.1:2379 \

--advertise-client-urls https://172.23.0.222:2379 \

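# Save, then reload and restart

# Note: start etcd on the other nodes first and on master1 last; otherwise startup reports connection errors!

systemctl daemon-reload;systemctl start etcd;systemctl status etcd

# If it still fails, try changing this option as well

--initial-cluster-state=existing \ # change new to existing; etcd then starts normally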

7. Test the ETCD service

- Run on one master node only (e.g. master1)

1) First test method

ETCDCTL_API=3 etcdctl \

--cacert=/etc/etcd/ssl/ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.12.51:2379,https://192.168.12.52:2379,https://192.168.12.53:2379" \

endpoint status --write-out='table'

Test result

+----------------------------+------------------+---------+---------+-----------+-----------+------------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | RAFT TERM | RAFT INDEX |

+----------------------------+------------------+---------+---------+-----------+-----------+------------+

| https://192.168.12.51:2379 | 12b1a3a96a68c457 | 3.3.24 | 20 kB | false | 2 | 8 |

| https://192.168.12.52:2379 | 5864e8e236d495ab | 3.3.24 | 20 kB | false | 2 | 8 |

| https://192.168.12.53:2379 | 7afcc86f3afa7f1b | 3.3.24 | 20 kB | true | 2 | 8 |

+----------------------------+------------------+---------+---------+-----------+-----------+------------+

2) Second test method

ETCDCTL_API=3 etcdctl \

--cacert=/etc/etcd/ssl/ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.12.51:2379,https://192.168.12.52:2379,https://192.168.12.53:2379" \

member list --write-out='table'

Test result

+------------------+---------+--------+----------------------------+----------------------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS |

+------------------+---------+--------+----------------------------+----------------------------+

| 12b1a3a96a68c457 | started | k8s-m1 | https://192.168.12.51:2380 | https://192.168.12.51:2379 |

| 5864e8e236d495ab | started | k8s-m2 | https://192.168.12.52:2380 | https://192.168.12.52:2379 |

| 7afcc86f3afa7f1b | started | k8s-m3 | https://192.168.12.53:2380 | https://192.168.12.53:2379 |

+------------------+---------+--------+----------------------------+----------------------------+
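Besides `endpoint status` and `member list`, etcdctl v3 also offers `endpoint health`, which reports per-member reachability. The sketch below wraps it in two illustrative functions (`etcd_endpoints` and `etcd_health` are assumed names); it uses the certificate layout from this guide, with `--cacert` pointing at the CA file `ca.pem`:

```shell
#!/bin/sh
# Turn a list of IPs into the comma-separated value expected by --endpoints.
etcd_endpoints() {
    out=""
    for ip in "$@"; do
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
}

# Ask every listed member whether it is healthy.
etcd_health() {
    ETCDCTL_API=3 etcdctl \
        --cacert=/etc/etcd/ssl/ca.pem \
        --cert=/etc/etcd/ssl/etcd.pem \
        --key=/etc/etcd/ssl/etcd-key.pem \
        --endpoints="$(etcd_endpoints "$@")" \
        endpoint health
}

# Example (on a master): etcd_health 192.168.12.51 192.168.12.52 192.168.12.53
```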

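- Exercise: with etcd deployed, draw your own k8s architecture diagram

Deploying the Master Nodes

Goal of this part: get every component on the master nodes deployed and running

- Cluster plan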

192.168.12.51 172.16.1.51 k8s-m1 m1

192.168.12.52 172.16.1.52 k8s-m2 m2

192.168.12.53 172.16.1.53 k8s-m3 m3

kube-apiserver, controller manager, scheduler, flannel, etcd, kubelet, kube-proxy, DNS

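I. Creating Certificates

- Run on any one master node only (e.g. master1)

1. Create the cluster CA certificate

- The master nodes are the most important part of the cluster; they have the most components and are the most complex to deploy

- All certificates below are generated under /opt/cert/k8s

1) Create the cluster CA signing configuration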

mkdir /opt/cert/k8s

cd /opt/cert/k8s

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

2) Create the root certificate signing request

cat > ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ShangHai",

"ST": "ShangHai"

}

]

}

EOF

List the created files

ll

total 8

-rw-r--r-- 1 root root 294 Sep 13 19:59 ca-config.json

-rw-r--r-- 1 root root 212 Sep 13 20:01 ca-csr.json

3) Generate the root certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

Output

2020/09/13 20:01:45 [INFO] generating a new CA key and certificate from CSR

2020/09/13 20:01:45 [INFO] generate received request

2020/09/13 20:01:45 [INFO] received CSR

2020/09/13 20:01:45 [INFO] generating key: rsa-2048

2020/09/13 20:01:46 [INFO] encoded CSR

2020/09/13 20:01:46 [INFO] signed certificate with serial number 588993429584840635805985813644877690042550093427

# List the generated certificates

[root@kubernetes-master-01 k8s]# ll

total 20

-rw-r--r-- 1 root root 294 Sep 13 19:59 ca-config.json

-rw-r--r-- 1 root root 960 Sep 13 20:01 ca.csr

-rw-r--r-- 1 root root 212 Sep 13 20:01 ca-csr.json

-rw------- 1 root root 1679 Sep 13 20:01 ca-key.pem

-rw-r--r-- 1 root root 1273 Sep 13 20:01 ca.pem

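2. Create the component certificates

- These are the certificates the cluster components use to talk to each other

1) Create the kube-apiserver certificate

1> Create the certificate signing request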

cat > /opt/cert/k8s/server-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.12.51",

"192.168.12.52",

"192.168.12.53",

"192.168.12.54",

"192.168.12.55",

"192.168.12.56",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ShangHai",

"ST": "ShangHai"

}

]

}

EOF

2> Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

3> Output

2021/03/29 09:31:02 [INFO] generate received request

2021/03/29 09:31:02 [INFO] received CSR

2021/03/29 09:31:02 [INFO] generating key: rsa-2048

2021/03/29 09:31:02 [INFO] encoded CSR

2021/03/29 09:31:02 [INFO] signed certificate with serial number 475285860832876170844498652484239182294052997083

2021/03/29 09:31:02 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

4> List the generated files

ll

total 36

-rw-r--r-- 1 root root 294 Mar 29 09:13 ca-config.json

-rw-r--r-- 1 root root 960 Mar 29 09:16 ca.csr

-rw-r--r-- 1 root root 214 Mar 29 09:14 ca-csr.json

-rw------- 1 root root 1675 Mar 29 09:16 ca-key.pem

-rw-r--r-- 1 root root 1281 Mar 29 09:16 ca.pem

-rw-r--r-- 1 root root 1245 Mar 29 09:31 server.csr

-rw-r--r-- 1 root root 603 Mar 29 09:29 server-csr.json

-rw------- 1 root root 1675 Mar 29 09:31 server-key.pem

-rw-r--r-- 1 root root 1574 Mar 29 09:31 server.pem

2) Create the controller-manager certificate

1> Create the certificate signing request

- The hosts list contains the IPs of all kube-controller-manager nodes

- The VIP does not need to be listed

cat > kube-controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"hosts": [

"127.0.0.1",

"192.168.12.51",

"192.168.12.52",

"192.168.12.53"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ShangHai",

"ST": "ShangHai",

"O": "system:kube-controller-manager",

"OU": "System"

}

]

}

EOF

2> Generate the certificate

- The CA paths must be correct, otherwise generation fails!

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

3> Output

2021/03/29 09:33:31 [INFO] generate received request

2021/03/29 09:33:31 [INFO] received CSR

2021/03/29 09:33:31 [INFO] generating key: rsa-2048

2021/03/29 09:33:31 [INFO] encoded CSR

2021/03/29 09:33:31 [INFO] signed certificate with serial number 159207911625502250093013220742142932946474251607

2021/03/29 09:33:31 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements")

3) Create the kube-scheduler certificate

1> Create the certificate signing request

cat > kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.12.51",

"192.168.12.52",

"192.168.12.53",

"192.168.12.54",

"192.168.12.55",

"192.168.12.56"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

EOF

2> Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

3> Output

2021/03/29 09:34:57 [INFO] generate received request

2021/03/29 09:34:57 [INFO] received CSR

2021/03/29 09:34:57 [INFO] generating key: rsa-2048

2021/03/29 09:34:58 [INFO] encoded CSR

2021/03/29 09:34:58 [INFO] signed certificate with serial number 38647006614878532408684142936672497501281226307

2021/03/29 09:34:58 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

4) Create the kube-proxy certificate

1> Create the certificate signing request

cat > kube-proxy-csr.json << EOF

{

"CN":"system:kube-proxy",

"hosts":[],

"key":{

"algo":"rsa",

"size":2048

},

"names":[

{

"C":"CN",

"L":"BeiJing",

"ST":"BeiJing",

"O":"system:kube-proxy",

"OU":"System"

}

]

}

EOF

2> Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

3> Output

2021/03/29 09:37:44 [INFO] generate received request

2021/03/29 09:37:44 [INFO] received CSR

2021/03/29 09:37:44 [INFO] generating key: rsa-2048

2021/03/29 09:37:44 [INFO] encoded CSR

2021/03/29 09:37:44 [INFO] signed certificate with serial number 703321465371340829919693910125364764243453439484

2021/03/29 09:37:44 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

5) Create the cluster administrator certificate

1> Create the certificate signing request

cat > admin-csr.json << EOF

{

"CN":"admin",

"key":{

"algo":"rsa",

"size":2048

},

"names":[

{

"C":"CN",

"L":"BeiJing",

"ST":"BeiJing",

"O":"system:masters",

"OU":"System"

}

]

}

EOF

2> Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

3> Output

2021/03/29 09:36:26 [INFO] generate received request

2021/03/29 09:36:26 [INFO] received CSR

2021/03/29 09:36:26 [INFO] generating key: rsa-2048

2021/03/29 09:36:26 [INFO] encoded CSR

2021/03/29 09:36:26 [INFO] signed certificate with serial number 258862825289855717894394114308507213391711602858

2021/03/29 09:36:26 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

6) Distribute the certificates

- Issue the certificates to all master nodes

- Certificates required on the master nodes

- ca, kube-apiserver

- kube-controller-manager

- kube-scheduler

- user (admin) certificate

- Etcd certificates

for i in m1 m2 m3;do

ssh root@$i "mkdir -pv /etc/kubernetes/ssl"

scp ./{ca*pem,server*pem,kube-controller-manager*pem,kube-scheduler*.pem,kube-proxy*pem,admin*.pem} root@$i:/etc/kubernetes/ssl

done

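II. Downloading the Packages and Writing the Configuration Files

- Run on one master node only (e.g. master1)

1. Download the packages and distribute the components

1) Download the packages

1> Option 1: download the server package (recommended)

- To download the node or client package instead, replace server in the file name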

cd && wget https://dl.k8s.io/v1.18.8/kubernetes-server-linux-amd64.tar.gz

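2> Option 2: copy the package out of a container image

docker pull registry.cn-hangzhou.aliyuncs.com/k8sos/k8s:v1.18.8.1

# The container name k8s-pkg is arbitrary; any unused name works

docker run -d --name k8s-pkg registry.cn-hangzhou.aliyuncs.com/k8sos/k8s:v1.18.8.1

docker cp k8s-pkg:/kubernetes-server-linux-amd64.tar.gz .

2) Distribute the components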

tar -xf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin

for i in m1 m2 m3 ;do

scp kube-apiserver kube-controller-manager kube-proxy kubectl kubelet kube-scheduler root@$i:/usr/local/bin

done

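2. Create the cluster configuration files

- The commands can be copied and pasted as they are

- Mind the virtual IP you configured; here it is 192.168.12.56 (it must be an unused address, different from every master and worker node)

1) Create kube-controller-manager.kubeconfig

# Change into the certificate directory

cd /opt/cert/k8s

# Create kube-controller-manager.kubeconfig

# Define the kube-apiserver IP and port; the following steps reuse this variable

export KUBE_APISERVER="https://192.168.12.56:8443"

# Set the cluster parameters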

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-controller-manager.kubeconfig

# Set the client credentials

kubectl config set-credentials "kube-controller-manager" \

--client-certificate=/etc/kubernetes/ssl/kube-controller-manager.pem \

--client-key=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=kube-controller-manager.kubeconfig

# Set the context (the context ties the cluster entry and the user entry together)

kubectl config set-context default \

--cluster=kubernetes \

--user="kube-controller-manager" \

--kubeconfig=kube-controller-manager.kubeconfig

# Select the default context

kubectl config use-context default --kubeconfig=kube-controller-manager.kubeconfig

2) Create kube-scheduler.kubeconfig

# Create kube-scheduler.kubeconfig

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-scheduler.kubeconfig

# Set the client credentials

kubectl config set-credentials "kube-scheduler" \

--client-certificate=/etc/kubernetes/ssl/kube-scheduler.pem \

--client-key=/etc/kubernetes/ssl/kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=kube-scheduler.kubeconfig

# Set the context (the context ties the cluster entry and the user entry together)

kubectl config set-context default \

--cluster=kubernetes \

--user="kube-scheduler" \

--kubeconfig=kube-scheduler.kubeconfig

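# Select the default context

kubectl config use-context default --kubeconfig=kube-scheduler.kubeconfig

3) Create the kube-proxy.kubeconfig cluster configuration file

## Create the kube-proxy.kubeconfig cluster configuration file

# Set the cluster parameters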

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

# Set the client credentials

kubectl config set-credentials "kube-proxy" \

--client-certificate=/etc/kubernetes/ssl/kube-proxy.pem \

--client-key=/etc/kubernetes/ssl/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

# Set the context (the context ties the cluster entry and the user entry together)

kubectl config set-context default \

--cluster=kubernetes \

--user="kube-proxy" \

--kubeconfig=kube-proxy.kubeconfig

# Select the default context

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

4) Create the cluster configuration file for the super administrator

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=admin.kubeconfig

# Set the client credentials

kubectl config set-credentials "admin" \

--client-certificate=/etc/kubernetes/ssl/admin.pem \

--client-key=/etc/kubernetes/ssl/admin-key.pem \

--embed-certs=true \

--kubeconfig=admin.kubeconfig

# Set the context (the context ties the cluster entry and the user entry together)

kubectl config set-context default \

--cluster=kubernetes \

--user="admin" \

--kubeconfig=admin.kubeconfig

# Select the default context

kubectl config use-context default --kubeconfig=admin.kubeconfig
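The four kubeconfig files are produced by the same four `kubectl config` steps, differing only in the component name. A sketch of how the repetition could be folded into one function follows; `make_kubeconfig` is a hypothetical helper, and it assumes the certificates live under `/etc/kubernetes/ssl` and are named `NAME.pem` and `NAME-key.pem` as in this guide:

```shell
#!/bin/sh
# Create NAME.kubeconfig in the current directory.
# Arguments: NAME (certificate base name, also used as the user name) and
#            SERVER (the API server URL, e.g. the VIP address).
make_kubeconfig() {
    name=$1
    server=$2
    ssl=/etc/kubernetes/ssl
    kubectl config set-cluster kubernetes \
        --certificate-authority="$ssl/ca.pem" --embed-certs=true \
        --server="$server" --kubeconfig="$name.kubeconfig" &&
    kubectl config set-credentials "$name" \
        --client-certificate="$ssl/$name.pem" --client-key="$ssl/$name-key.pem" \
        --embed-certs=true --kubeconfig="$name.kubeconfig" &&
    kubectl config set-context default \
        --cluster=kubernetes --user="$name" --kubeconfig="$name.kubeconfig" &&
    kubectl config use-context default --kubeconfig="$name.kubeconfig"
}

# Example:
# for c in kube-controller-manager kube-scheduler kube-proxy admin; do
#     make_kubeconfig "$c" "https://192.168.12.56:8443"
# done
```

Chaining the steps with `&&` stops at the first failing command, so a missing certificate does not leave a half-written file unnoticed.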

5) Distribute the cluster configuration files

1> Distribute the cluster configuration files to the master nodes

cd /opt/cert/k8s

for i in m1 m2 m3; do

ssh root@$i "mkdir -pv /etc/kubernetes/cfg"

scp ./*.kubeconfig root@$i:/etc/kubernetes/cfg

done

2> Verify (on the other master nodes)

[root@k8s-m1 k8s]# ll /etc/kubernetes/cfg/

total 32

-rw------- 1 root root 6103 Mar 29 20:45 admin.kubeconfig

-rw------- 1 root root 6315 Mar 29 20:45 kube-controller-manager.kubeconfig

-rw------- 1 root root 6137 Mar 29 20:45 kube-proxy.kubeconfig

-rw------- 1 root root 6261 Mar 29 20:45 kube-scheduler.kubeconfig

6) Create the cluster token

- Create it on master1, then distribute it to the other master nodes

1> Create the cluster token

cd /opt/cert/k8s

TLS_BOOTSTRAPPING_TOKEN=`head -c 16 /dev/urandom | od -An -t x | tr -d ' '`

cat > token.csv << EOF

${TLS_BOOTSTRAPPING_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF
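The token written to token.csv is 16 random bytes rendered as 32 hexadecimal characters. As a hedged sketch, the generation can be paired with a sanity check before the file is distributed; both function names are illustrative, and `od -t x1` (one byte per group) is used here as a variation on the command above:

```shell
#!/bin/sh
# Generate a 128-bit token as 32 lowercase hex characters.
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Succeed only if the argument is exactly 32 lowercase hex characters.
is_valid_token() {
    case $1 in
        *[!0-9a-f]*) return 1 ;;
    esac
    [ "${#1}" -eq 32 ]
}

token=$(gen_bootstrap_token)
is_valid_token "$token" && echo "token looks well-formed (${#token} chars)"
```

The same check can be applied to the first comma-separated field of an existing token.csv.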

2> Distribute the cluster token (used for cluster TLS bootstrapping)

cd /opt/cert/k8s

for i in m1 m2 m3;do

scp token.csv root@$i:/etc/kubernetes/cfg/

done

3> Output

token.csv 100% 84 31.4KB/s 00:00

token.csv 100% 84 35.6KB/s 00:00

token.csv 100% 84 28.9KB/s 00:00

III. Deploying the Components

Install each component and get it running

1. Install kube-apiserver

- Run on all master nodes

1) Create the kube-apiserver configuration file

KUBE_APISERVER_IP=`hostname -I | awk '{print $1}'`

cat > /etc/kubernetes/cfg/kube-apiserver.conf << EOF

KUBE_APISERVER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--advertise-address=${KUBE_APISERVER_IP} \\

--default-not-ready-toleration-seconds=360 \\

--default-unreachable-toleration-seconds=360 \\

--max-mutating-requests-inflight=2000 \\

--max-requests-inflight=4000 \\

--default-watch-cache-size=200 \\

--delete-collection-workers=2 \\

--bind-address=0.0.0.0 \\

--secure-port=6443 \\

--allow-privileged=true \\

--service-cluster-ip-range=10.96.0.0/16 \\

--service-node-port-range=30000-52767 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--enable-bootstrap-token-auth=true \\

--token-auth-file=/etc/kubernetes/cfg/token.csv \\

--kubelet-client-certificate=/etc/kubernetes/ssl/server.pem \\

--kubelet-client-key=/etc/kubernetes/ssl/server-key.pem \\

--tls-cert-file=/etc/kubernetes/ssl/server.pem \\

--tls-private-key-file=/etc/kubernetes/ssl/server-key.pem \\

--client-ca-file=/etc/kubernetes/ssl/ca.pem \\

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/kubernetes/k8s-audit.log \\

--etcd-servers=https://192.168.12.51:2379,https://192.168.12.52:2379,https://192.168.12.53:2379 \\

--etcd-cafile=/etc/etcd/ssl/ca.pem \\

--etcd-certfile=/etc/etcd/ssl/etcd.pem \\

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem"

EOF

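Parameter details

| Option | Description |
|---|---|
| --logtostderr=false | Write logs to files instead of standard error |
| --v=2 | Log verbosity level |
| --advertise-address | IP address on which the apiserver is advertised to cluster members |
| --etcd-servers | List of etcd servers to connect to |
| --etcd-cafile | SSL CA file used for etcd communication |
| --etcd-certfile | SSL certificate file used for etcd communication |
| --etcd-keyfile | SSL key file used for etcd communication |
| --service-cluster-ip-range | CIDR range from which Service cluster IPs are allocated |
| --bind-address | IP address on which --secure-port listens; if empty, all interfaces (0.0.0.0) are used |
| --secure-port=6443 | Port that serves HTTPS with authentication and authorization; the default is 6443 |
| --allow-privileged | Whether privileged containers are allowed |
| --service-node-port-range | Port range available to NodePort Services |
| --default-not-ready-toleration-seconds | Toleration, in seconds, for the notReady state |
| --default-unreachable-toleration-seconds | Toleration, in seconds, for the unreachable state |
| --max-mutating-requests-inflight=2000 | Maximum number of mutating requests in flight at a given time; 0 means no limit (default 200) |
| --default-watch-cache-size=200 | Default watch cache size; 0 disables the watch cache for resources that have no default watch size set |
| --delete-collection-workers=2 | Number of workers for DeleteCollection calls, used to speed up namespace cleanup (default 1) |
| --enable-admission-plugins | Comma-separated list of admission plugins to enable, including the resource-limit plugins LimitRanger and ResourceQuota |
| --authorization-mode | Ordered, comma-separated list of plugins that perform authorization on the secure port |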
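The `--etcd-servers` value is just the etcd member IPs with a scheme and port attached, joined by commas. As a minimal sketch, the string could be built from the node list instead of typed by hand; `etcd_endpoints` is a hypothetical helper name, and port 2379 is taken from the configuration above.

```shell
#!/bin/sh
# etcd_endpoints is a hypothetical helper (not part of Kubernetes or etcd).
# It joins the given member IPs into the comma-separated form that
# --etcd-servers expects, assuming HTTPS on client port 2379.
etcd_endpoints() {
    result=""
    for ip in "$@"; do
        # Add a comma only when something is already in the list.
        result="${result:+$result,}https://${ip}:2379"
    done
    printf '%s\n' "$result"
}

etcd_endpoints 192.168.12.51 192.168.12.52 192.168.12.53
```

The output can then be substituted into the heredoc the same way `${KUBE_APISERVER_IP}` is.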

2) Register the kube-apiserver service

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=10

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Reload systemd, enable at boot, start, and show the status

systemctl daemon-reload;systemctl enable --now kube-apiserver;systemctl status kube-apiserver
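The heredocs in this guide mix two kinds of variables: `${KUBE_APISERVER_IP}` is expanded by the shell when the file is written, while `\$KUBE_APISERVER_OPTS` is escaped so that systemd resolves it later from the `EnvironmentFile`. The sketch below shows that behaviour on a throwaway file; `DEMO_IP` and the temporary file are illustrative only.

```shell
#!/bin/sh
# Unquoted heredoc: ${VAR} is expanded now, \$VAR is written literally,
# and \\ collapses to a single backslash.
DEMO_IP="192.168.12.51"
demo_file="$(mktemp)"

cat > "$demo_file" << EOF
expanded=${DEMO_IP}
literal=\$KUBE_APISERVER_OPTS
flag=--v=2 \\
EOF

cat "$demo_file"
```

This is why the unit files contain `\$KUBE_..._OPTS` and the option files end their lines with `\\`.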

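3) Make kube-apiserver highly available

1> Install the high-availability software

yum install -y keepalived haproxy

2> Edit the keepalived configuration file

- The configuration differs from node to node

- The commands below already detect the IP automatically, so they can be copied and pasted as-is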

mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf_bak

cd /etc/keepalived

KUBE_APISERVER_IP=`hostname -I | awk '{print $1}'`

cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_kubernetes {

script "/etc/keepalived/check_kubernetes.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface eth0

mcast_src_ip ${KUBE_APISERVER_IP}

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.12.56

}

}

EOF

systemctl enable --now keepalived;systemctl status keepalived
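The note above says the keepalived settings differ per node, while the file as written uses `state MASTER` and `priority 100` everywhere. One common convention, assumed here and not stated in the original, is to keep the first master as `MASTER` and give the others `BACKUP` with a lower priority. `keepalived_role` is a hypothetical helper that prints the pair for a node's position.

```shell
#!/bin/sh
# keepalived_role prints "<state> <priority>" for the N-th master (1-based).
# Assumption: node 1 is MASTER/100; each later node is BACKUP with the
# priority lowered by 10. Adjust to your own failover policy.
keepalived_role() {
    index="$1"
    if [ "$index" -eq 1 ]; then
        echo "MASTER 100"
    else
        echo "BACKUP $((100 - ($index - 1) * 10))"
    fi
}

for n in 1 2 3; do
    echo "master${n}: $(keepalived_role "$n")"
done
```

The two values would replace `state MASTER` and `priority 100` in the heredoc on the respective node.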

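3> Edit the haproxy configuration file

- High-availability software

- Only the IP addresses at the bottom need to be changed (to the master nodes, that is, the hosts made highly available with keepalived)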

cat > /etc/haproxy/haproxy.cfg <<EOF

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

listen stats

bind *:8006

mode http

stats enable

stats hide-version

stats uri /stats

stats refresh 30s

stats realm Haproxy\ Statistics

stats auth admin:admin

frontend k8s-master

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server kubernetes-master1 192.168.12.51:6443 check inter 2000 fall 2 rise 2 weight 100

server kubernetes-master2 192.168.12.52:6443 check inter 2000 fall 2 rise 2 weight 100

server kubernetes-master3 192.168.12.53:6443 check inter 2000 fall 2 rise 2 weight 100

EOF

systemctl enable --now haproxy;systemctl status haproxy
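Only the `server` lines at the bottom of haproxy.cfg depend on the environment. A small sketch of generating them from a list of master IPs follows; `haproxy_servers` is a hypothetical helper, and the check parameters are copied from the configuration above.

```shell
#!/bin/sh
# haproxy_servers prints one backend "server" line per master IP, using the
# same naming scheme and health-check settings as the file above.
haproxy_servers() {
    n=1
    for ip in "$@"; do
        echo "server kubernetes-master${n} ${ip}:6443 check inter 2000 fall 2 rise 2 weight 100"
        n=$((n + 1))
    done
}

haproxy_servers 192.168.12.51 192.168.12.52 192.168.12.53
```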

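2. Deploy TLS bootstrapping

- Run this on one master node only

- In Kubernetes, we need to create a configuration file that holds the cluster, user, namespace, and authentication settings

- The apiserver dynamically signs and issues certificates to the Node nodes, which automates certificate signing

1) Create the cluster configuration file

1> Look up your own token value and copy it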

cat /opt/cert/k8s/token.csv

ebec85a1c8555fe24fd1b39483a0ffc1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

# Note: the token value is ebec85a1c8555fe24fd1b39483a0ffc1

Substitute your own value wherever this token appears in the commands below
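Copying the token by hand is easy to get wrong. As a sketch, the first comma-separated field of token.csv can be read into a variable and passed to `--token=`; `read_bootstrap_token` is a hypothetical helper, and the demo uses a temporary copy of the sample line rather than the real file.

```shell
#!/bin/sh
# read_bootstrap_token prints field 1 (the token) of the first line of a
# token.csv-style file: token,user,uid,"group".
read_bootstrap_token() {
    head -n 1 "$1" | cut -d, -f1
}

# Demo on a temporary file holding the sample line from this guide.
sample_csv="$(mktemp)"
echo 'ebec85a1c8555fe24fd1b39483a0ffc1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > "$sample_csv"

TOKEN="$(read_bootstrap_token "$sample_csv")"
echo "token: ${TOKEN}"
```

On the real cluster the argument would be /opt/cert/k8s/token.csv, and `--token=${TOKEN}` would replace the pasted value.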

2> Create the file

# The address below is the chosen virtual IP

cd /etc/kubernetes/cfg/

export KUBE_APISERVER="https://192.168.12.56:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kubelet-bootstrap.kubeconfig

# Set the client credentials; the token must be the one from token.csv above
kubectl config set-credentials "kubelet-bootstrap" \
  --token=ebec85a1c8555fe24fd1b39483a0ffc1 \
  --kubeconfig=kubelet-bootstrap.kubeconfig

# Set the context (it ties the cluster parameters and the user parameters together)
kubectl config set-context default \
  --cluster=kubernetes \
  --user="kubelet-bootstrap" \
  --kubeconfig=kubelet-bootstrap.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

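2) Check that the token is correct

- If the two token values do not match, go back to step 1), look up the token again, recreate the file, and only then distribute it

[root@k8s-m1 cfg]# cat /etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig | grep token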

token: ebec85a1c8555fe24fd1b39483a0ffc1

[root@k8s-m1 cfg]# cat token.csv

ebec85a1c8555fe24fd1b39483a0ffc1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
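The comparison above is done by eye. A minimal sketch of doing it in a script follows; `kubeconfig_token`, `csv_token`, and `tokens_match` are hypothetical helpers, and the demo runs on temporary files that mimic the two real ones.

```shell
#!/bin/sh
# Print the value of the first "token:" entry in a kubeconfig file.
kubeconfig_token() {
    awk '$1 == "token:" {print $2; exit}' "$1"
}

# Print the token (field 1) from a token.csv-style file.
csv_token() {
    head -n 1 "$1" | cut -d, -f1
}

# Succeed only if the kubeconfig holds a non-empty token equal to the CSV one.
tokens_match() {
    kc="$(kubeconfig_token "$1")"
    csv="$(csv_token "$2")"
    [ -n "$kc" ] && [ "$kc" = "$csv" ]
}

# Demo files (illustrative stand-ins for the real kubeconfig and token.csv).
demo_dir="$(mktemp -d)"
printf '%s\n' 'users:' '- name: kubelet-bootstrap' '  user:' \
    '    token: ebec85a1c8555fe24fd1b39483a0ffc1' > "$demo_dir/kubeconfig"
printf '%s\n' 'ebec85a1c8555fe24fd1b39483a0ffc1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' \
    > "$demo_dir/token.csv"

if tokens_match "$demo_dir/kubeconfig" "$demo_dir/token.csv"; then
    echo "tokens match"
else
    echo "tokens differ"
fi
```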

3) Distribute the TLS bootstrap configuration

1> Copy it to the other master nodes

for i in m1 m2 m3; do
  scp kubelet-bootstrap.kubeconfig root@$i:/etc/kubernetes/cfg/
done

2> Successful transfer

kubelet-bootstrap.kubeconfig 100% 1990 1.6MB/s 00:00

kubelet-bootstrap.kubeconfig 100% 1990 749.1KB/s 00:00

kubelet-bootstrap.kubeconfig 100% 1990 745.3KB/s 00:00

4) Create the low-privilege TLS bootstrap user

kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap

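3. Deploy controller-manager

- Run this on all three master nodes

- The Controller Manager is the management and control center inside the cluster. It manages:

- Nodes, Pod replicas, service endpoints (Endpoint), namespaces (Namespace)

- Service accounts (ServiceAccount), resource quotas (ResourceQuota)

- When a Node goes down unexpectedly, the Controller Manager detects it promptly and runs an automated repair process, so that the cluster stays in its desired state.

- If several controller managers were active at the same time they would cause consistency problems, so kube-controller-manager can only be made highly available in active/standby mode. Kubernetes implements leader election with a lease lock, which requires --leader-elect=true in the startup flags.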

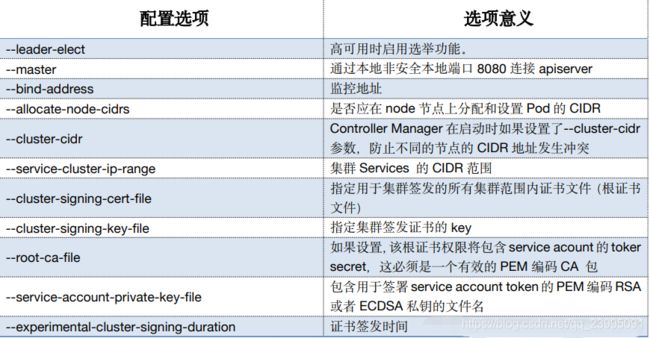

1) Edit the configuration file

cat > /etc/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--leader-elect=true \\

--cluster-name=kubernetes \\

--bind-address=127.0.0.1 \\

--allocate-node-cidrs=true \\

--cluster-cidr=10.244.0.0/12 \\

--service-cluster-ip-range=10.96.0.0/16 \\

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/etc/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \\

--kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig \\

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \\

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s \\

--controllers=*,bootstrapsigner,tokencleaner \\

--use-service-account-credentials=true \\

--node-monitor-grace-period=10s \\

--horizontal-pod-autoscaler-use-rest-clients=true"

EOF

2) Register the service

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

3) Start

systemctl daemon-reload;systemctl enable --now kube-controller-manager;systemctl status kube-controller-manager

4. Deploy kube-scheduler

- Run this on all three machines

1) Write the configuration file

cd /opt/cert/k8s

cat > /etc/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig \\

--leader-elect=true \\

--master=http://127.0.0.1:8080 \\

--bind-address=127.0.0.1 "

EOF

2) Register the service

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

3) Start

systemctl daemon-reload;systemctl enable --now kube-scheduler;systemctl status kube-scheduler

4) Check the cluster status

- The following output indicates success

[root@k8s-m-01 /opt/cert/k8s]# kubectl get cs
NAME                 STATUS    MESSAGE              ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-2               Healthy   {"health":"true"}
etcd-1               Healthy   {"health":"true"}
etcd-0               Healthy   {"health":"true"}

5. Deploy the kubelet service

- Run this on all three master nodes

1) Create the kubelet service configuration file

KUBE_HOSTNAME=`hostname`

cat > /etc/kubernetes/cfg/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--hostname-override=${KUBE_HOSTNAME} \\

--container-runtime=docker \\

--kubeconfig=/etc/kubernetes/cfg/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig \\

--config=/etc/kubernetes/cfg/kubelet-config.yml \\

--cert-dir=/etc/kubernetes/ssl \\

--image-pull-progress-deadline=15m \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/k8sos/pause:3.2"

EOF

2) Create kubelet-config.yml

KUBE_HOSTNAME=`hostname -I | awk '{print $1}'`

cat > /etc/kubernetes/cfg/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: ${KUBE_HOSTNAME}
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS:
- 10.96.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF

3) Register the kubelet service

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kubelet.conf

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

4) Start

systemctl daemon-reload;systemctl enable --now kubelet;systemctl status kubelet

6. Deploy kube-proxy

- Run this on all three master nodes

1) Create the configuration file

cat > /etc/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--config=/etc/kubernetes/cfg/kube-proxy-config.yml"

EOF

2) Create kube-proxy-config.yml

KUBE_HOSTNAME=`hostname -I | awk '{print $1}'`

HOSTNAME=`hostname`

cat > /etc/kubernetes/cfg/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: ${KUBE_HOSTNAME}
healthzBindAddress: ${KUBE_HOSTNAME}:10256
metricsBindAddress: ${KUBE_HOSTNAME}:10249
clientConnection:
  burst: 200
  kubeconfig: /etc/kubernetes/cfg/kube-proxy.kubeconfig
  qps: 100
hostnameOverride: ${HOSTNAME}
clusterCIDR: 10.96.0.0/16
enableProfiling: true
mode: "ipvs"
kubeProxyIPTablesConfiguration:
  masqueradeAll: false
kubeProxyIPVSConfiguration:
  scheduler: rr
  excludeCIDRs: []
EOF

3) Register the service

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-proxy.conf

ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

4) Start

systemctl daemon-reload;systemctl enable --now kube-proxy;systemctl status kube-proxy

7. Join the nodes to the cluster

- Run this on one master node only

1) List the join requests from the master nodes

[root@k8s-m1 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-PAtnqh5jryvN9V8ekWNaYEZNz3EiPkSy6eJRPJsJsbc 118m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-Q31QwPEvniS_ZVvUg_tRdB_0uYVlpPaD7rbO6Hd0lcU 119m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-feAuDyr8xL8D2j_HOVPKITUovsJUspgpc0CupZHMKEA 119m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

2) Approve the master nodes' join requests

[root@k8s-m1 ~]# kubectl certificate approve `kubectl get csr | grep "Pending" | awk '{print $1}'`

certificatesigningrequest.certificates.k8s.io/node-csr-PAtnqh5jryvN9V8ekWNaYEZNz3EiPkSy6eJRPJsJsbc approved

certificatesigningrequest.certificates.k8s.io/node-csr-Q31QwPEvniS_ZVvUg_tRdB_0uYVlpPaD7rbO6Hd0lcU approved

certificatesigningrequest.certificates.k8s.io/node-csr-feAuDyr8xL8D2j_HOVPKITUovsJUspgpc0CupZHMKEA approved

# Check the node information

[root@k8s-m1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-m1 Ready <none> 3s v1.18.8

k8s-m2 Ready <none> 4s v1.18.8

k8s-m3 Ready <none> 3s v1.18.8
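The approval command above selects requests with `grep "Pending"`, which matches the word anywhere on the line. A slightly stricter sketch is to test only the CONDITION column; `pending_csrs` is a hypothetical helper that reads `kubectl get csr` output on standard input, and the demo feeds it sample text (with shortened, made-up request names) rather than a live cluster.

```shell
#!/bin/sh
# pending_csrs prints the NAME of every row whose last column is exactly
# "Pending", skipping the header line.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" {print $1}'
}

# Sample input shaped like `kubectl get csr` output; names are illustrative.
sample_csr_output='NAME AGE SIGNERNAME REQUESTOR CONDITION
node-csr-aaa 118m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending
node-csr-bbb 119m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued
node-csr-ccc 119m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending'

printf '%s\n' "$sample_csr_output" | pending_csrs
```

On a real cluster the list would come from `kubectl get csr | pending_csrs` and be passed to `kubectl certificate approve`.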

8. Install the network plugin

This guide uses the flannel network plugin

Run this on one node only

1) Download the flannel package, unpack it, and push the binaries

[root@k8s-m1 opt]# wget https://github.com/coreos/flannel/releases/download/v0.13.1-rc1/flannel-v0.13.1-rc1-linux-amd64.tar.gz

# If the download fails, a netdisk link is available:

https://pan.baidu.com/s/1JNWPO4itvmcsdBEln8b41g (extraction code: uj2d)

[root@k8s-m1 opt]# tar -xf flannel-v0.13.1-rc1-linux-amd64.tar.gz

[root@k8s-m1 opt]# for i in m{1..3};do scp mk-docker-opts.sh flanneld root@$i:/usr/local/bin/;done

mk-docker-opts.sh 100% 2139 1.3MB/s 00:00

flanneld 100% 2139 2.5MB/s 00:00

mk-docker-opts.sh 100% 2139 616.0KB/s 00:00

flanneld 100% 2139 2.5MB/s 00:00

mk-docker-opts.sh 100% 2139 435.3KB/s 00:00

flanneld 100% 2139 2.5MB/s 00:00

2) Write the flannel configuration into the cluster database (etcd)

etcdctl \
  --ca-file=/etc/etcd/ssl/ca.pem \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --endpoints="https://192.168.12.51:2379,https://192.168.12.52:2379,https://192.168.12.53:2379" \
  mk /coreos.com/network/config '{"Network":"10.244.0.0/12", "SubnetLen": 21, "Backend": {"Type": "vxlan", "DirectRouting": true}}'

3) Register the flannel service (run on all master nodes)

cat > /usr/lib/systemd/system/flanneld.service << EOF

[Unit]

Description=Flanneld address

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/usr/local/bin/flanneld \\

-etcd-cafile=/etc/etcd/ssl/ca.pem \\

-etcd-certfile=/etc/etcd/ssl/etcd.pem \\

-etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\

-etcd-endpoints=https://192.168.12.51:2379,https://192.168.12.52:2379,https://192.168.12.53:2379 \\

-etcd-prefix=/coreos.com/network \\

-ip-masq

ExecStartPost=/usr/local/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=always

RestartSec=5

StartLimitInterval=0

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

EOF

4) Modify the docker unit file (run on all master nodes)

- Let flannel take over the docker network

sed -i '/ExecStart/s/\(.*\)/#\1/' /usr/lib/systemd/system/docker.service

sed -i '/ExecReload/a ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock' /usr/lib/systemd/system/docker.service

sed -i '/ExecReload/a EnvironmentFile=-/run/flannel/subnet.env' /usr/lib/systemd/system/docker.service

5) Start (run on all master nodes)

- Start flannel first, then restart docker

systemctl daemon-reload && systemctl enable --now flanneld && systemctl restart docker

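6) Verify the cluster network

- Ping each node's flannel address from the other cluster nodes

- On every master node, check whether docker0 is in the same subnet as the flannel network; if it is not, the cluster network will not work and the docker configuration must be fixed

- Look up the flannel address on every master node and ping between all of them; if the pings succeed the setup is correct, otherwise fix the docker configuration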

[root@k8s-master1 ~]# ip a | grep flannel | grep inet

inet 10.240.160.0/32 brd 10.240.160.0 scope global flannel.1

[root@k8s-master2 ~]# ip a | grep flannel | grep inet

inet 10.240.16.0/32 brd 10.240.16.0 scope global flannel.1

# Ping test in both directions

# master1:

[root@k8s-master1 ~]# ping 10.240.160.0

PING 10.240.160.0 (10.240.160.0) 56(84) bytes of data.

64 bytes from 10.240.160.0: icmp_seq=1 ttl=64 time=0.031 ms

64 bytes from 10.240.160.0: icmp_seq=2 ttl=64 time=0.030 ms

[root@k8s-master1 ~]# ping 10.240.16.0

PING 10.240.16.0 (10.240.16.0) 56(84) bytes of data.

64 bytes from 10.240.16.0: icmp_seq=1 ttl=64 time=0.639 ms

64 bytes from 10.240.16.0: icmp_seq=2 ttl=64 time=0.187 ms

# master2:

[root@k8s-master2 ~]# ping 10.240.160.0

PING 10.240.160.0 (10.240.160.0) 56(84) bytes of data.

64 bytes from 10.240.160.0: icmp_seq=1 ttl=64 time=0.214 ms

64 bytes from 10.240.160.0: icmp_seq=2 ttl=64 time=0.173 ms

[root@k8s-master2 ~]# ping 10.240.16.0

PING 10.240.16.0 (10.240.16.0) 56(84) bytes of data.

64 bytes from 10.240.16.0: icmp_seq=1 ttl=64 time=0.053 ms

64 bytes from 10.240.16.0: icmp_seq=2 ttl=64 time=0.025 ms
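The flannel addresses above start with 10.240 even though the configured network is written as 10.244.0.0/12. That is consistent: with a /12 mask only the top four bits of the second octet count, so 10.244.0.0/12 covers 10.240.0.0 through 10.255.255.255. The sketch below checks membership with plain shell arithmetic; `ip_to_int` and `in_cidr` are hypothetical helper names.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a single integer.
ip_to_int() {
    old_ifs="$IFS"
    IFS=.
    set -- $1          # split the address into its four octets
    IFS="$old_ifs"
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# in_cidr ADDRESS NETWORK/BITS: succeed if ADDRESS lies inside the network.
in_cidr() {
    addr="$(ip_to_int "$1")"
    net="$(ip_to_int "${2%/*}")"
    bits="${2#*/}"
    # Build the netmask, e.g. /12 -> 0xFFF00000 (4294967295 is 0xFFFFFFFF).
    mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
    [ $(( $addr & $mask )) -eq $(( $net & $mask )) ]
}

for a in 10.240.160.0 10.240.16.0 10.96.0.1; do
    if in_cidr "$a" 10.244.0.0/12; then
        echo "$a is inside 10.244.0.0/12"
    else
        echo "$a is outside 10.244.0.0/12"
    fi
done
```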

9. Install cluster DNS

- Run this on one node only

1) Download the DNS deployment files

[root@k8s-m-01 ~]# wget https://github.com/coredns/deployment/archive/refs/heads/master.zip

[root@k8s-m-01 ~]# unzip master.zip

[root@k8s-m-01 ~]# cd deployment-master/kubernetes

[root@k8s-m-01 kubernetes]# cat coredns.yaml.sed |grep image

image: coredns/coredns:1.8.4

imagePullPolicy: IfNotPresent

2) Run the deployment command

[root@k8s-m-01 ~/deployment-master/kubernetes]# ./deploy.sh -i 10.96.0.2 -s | kubectl apply -f -

3) Verify cluster DNS

[root@k8s-m-01 ~/deployment-master/kubernetes]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6ff445f54-m28gw 1/1 Running 0 48s

10. Verify the cluster

- Run this on one server only

1) Bind the super-admin user

[root@k8s-m-01 ~/deployment-master/kubernetes]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubernetes

clusterrolebinding.rbac.authorization.k8s.io/cluster-system-anonymous created

2) Verify that cluster DNS and the cluster network work

[root@k8s-m-01 ~/deployment-master/kubernetes]# kubectl run test -it --rm --image=busybox:1.28.3

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes

Server: 10.96.0.2

Address 1: 10.96.0.2 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

Deploy the Node nodes

Which components does a node need?

kubelet, kube-proxy, flannel

- Cluster plan

192.168.15.54 k8s-n-01 n1

192.168.15.55 k8s-n-02 n2

- Cluster preparation: set up passwordless SSH login between the nodes

- The following steps are run on master1 only

1. Distribute the binaries

- mk-docker-opts.sh

- flanneld

cd /usr/local/bin

for i in n1 n2;do scp flanneld mk-docker-opts.sh /usr/local/bin/kubelet /usr/local/bin/kube-proxy root@$i:/usr/local/bin; done

2. Distribute the certificates

for i in n1 n2; do ssh root@$i "mkdir -pv /etc/kubernetes/ssl"; scp -pr /etc/kubernetes/ssl/{ca*.pem,admin*pem,kube-proxy*pem} root@$i:/etc/kubernetes/ssl; done

3. Distribute the configuration files

- etcd certificates

- docker.service

for i in n1 n2 ;do ssh root@$i "mkdir -pv /etc/etcd/ssl"; scp /etc/etcd/ssl/* root@$i:/etc/etcd/ssl; done

for i in n1 n2;do scp /usr/lib/systemd/system/docker.service root@$i:/usr/lib/systemd/system/docker.service; scp /usr/lib/systemd/system/flanneld.service root@$i:/usr/lib/systemd/system/flanneld.service; done

4. Deploy kubelet

1) Copy the files, then on each node change the IP in kubelet-config.yml and the node name in kubelet.conf to that node's own values

for i in n1 n2 ;do

ssh root@$i "mkdir -pv /etc/kubernetes/cfg";

scp /etc/kubernetes/cfg/kubelet.conf root@$i:/etc/kubernetes/cfg/kubelet.conf;

scp /etc/kubernetes/cfg/kubelet-config.yml root@$i:/etc/kubernetes/cfg/kubelet-config.yml;

scp /usr/lib/systemd/system/kubelet.service root@$i:/usr/lib/systemd/system/kubelet.service;

scp /etc/kubernetes/cfg/kubelet.kubeconfig root@$i:/etc/kubernetes/cfg/kubelet.kubeconfig;

scp /etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig root@$i:/etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig;

scp /etc/kubernetes/cfg/token.csv root@$i:/etc/kubernetes/cfg/token.csv;

done
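The files copied above still carry master1's hostname and IP, which is why the heading asks for them to be changed on each node. A minimal sketch of that edit with `sed` follows; `retarget_kubelet` is a hypothetical helper, and the demo works on throwaway copies with the node name and IP taken from the cluster plan.

```shell
#!/bin/sh
# retarget_kubelet CONF YML NODE_NAME NODE_IP
# Rewrites --hostname-override in kubelet.conf and the top-level "address:"
# in kubelet-config.yml. Uses GNU sed -i, as elsewhere in this guide.
retarget_kubelet() {
    conf="$1"; yml="$2"; node_name="$3"; node_ip="$4"
    # Replace the flag value (everything up to the next space).
    sed -i "s|--hostname-override=[^ ]*|--hostname-override=${node_name}|" "$conf"
    # Replace the listen address.
    sed -i "s|^address: .*|address: ${node_ip}|" "$yml"
}

# Demo on temporary copies so nothing under /etc is touched.
demo_dir="$(mktemp -d)"
printf '%s\n' 'KUBELET_OPTS="--v=2 \' '--hostname-override=k8s-m1 \' \
    '--container-runtime=docker"' > "$demo_dir/kubelet.conf"
printf '%s\n' 'kind: KubeletConfiguration' 'address: 192.168.12.51' \
    'port: 10250' > "$demo_dir/kubelet-config.yml"

retarget_kubelet "$demo_dir/kubelet.conf" "$demo_dir/kubelet-config.yml" k8s-n-01 192.168.15.54
grep -h -e 'hostname-override' -e '^address' "$demo_dir/kubelet.conf" "$demo_dir/kubelet-config.yml"
```

On a node, the two paths would be /etc/kubernetes/cfg/kubelet.conf and /etc/kubernetes/cfg/kubelet-config.yml.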

2) Start kubelet (on all Node nodes)

systemctl enable --now kubelet;systemctl status kubelet

5. Deploy kube-proxy

1) Copy the files, then on each node change the IP and hostname in kube-proxy-config.yml to that node's own values

for i in n1 n2 ; do

scp /etc/kubernetes/cfg/kube-proxy.conf root@$i:/etc/kubernetes/cfg/kube-proxy.conf;

scp /etc/kubernetes/cfg/kube-proxy-config.yml root@$i:/etc/kubernetes/cfg/kube-proxy-config.yml;

scp /usr/lib/systemd/system/kube-proxy.service root@$i:/usr/lib/systemd/system/kube-proxy.service;

scp /etc/kubernetes/cfg/kube-proxy.kubeconfig root@$i:/etc/kubernetes/cfg/kube-proxy.kubeconfig;

done

2) Start kube-proxy (on all Node nodes)

systemctl enable --now kube-proxy;systemctl status kube-proxy

6. Join the cluster

1) Check the cluster status

[root@k8s-m1 ~]# kubectl get cs
NAME                 STATUS    MESSAGE              ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}
etcd-1               Healthy   {"health":"true"}
etcd-2               Healthy   {"health":"true"}

2) List the join requests

[root@k8s-m1 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-_yClVuQCNzDb566yZV5sFJmLsoU13Wba0FOhQ5pmVPY 12m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-m3kFnO7GPBYeBcen5GQ1RdTlt77_rhedLPe97xO_5hw 12m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

3) Approve the requests

[root@k8s-m1 ~]# kubectl certificate approve `kubectl get csr | grep "Pending" | awk '{print $1}'`

certificatesigningrequest.certificates.k8s.io/node-csr-_yClVuQCNzDb566yZV5sFJmLsoU13Wba0FOhQ5pmVPY approved

certificatesigningrequest.certificates.k8s.io/node-csr-m3kFnO7GPBYeBcen5GQ1RdTlt77_rhedLPe97xO_5hw approved

4) Check the request status

[root@k8s-m1 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-_yClVuQCNzDb566yZV5sFJmLsoU13Wba0FOhQ5pmVPY 14m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

node-csr-m3kFnO7GPBYeBcen5GQ1RdTlt77_rhedLPe97xO_5hw 14m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

5) List the joined nodes

- It takes a moment for the nodes to finish joining

[root@k8s-m1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-m-01 Ready <none> 21h v1.18.8

k8s-m-02 Ready <none> 21h v1.18.8

k8s-m-03 Ready <none> 21h v1.18.8

k8s-n-01 Ready <none> 36s v1.18.8

k8s-n-02 Ready <none> 36s v1.18.8

7. Set the cluster roles

1) Set the cluster roles

[root@k8s-m1 ~]# kubectl label nodes k8s-m1 node-role.kubernetes.io/master=k8s-m1

# The result looks like this:

node/k8s-m1 labeled

2) Continue with the remaining nodes in turn

kubectl label nodes k8s-m2 node-role.kubernetes.io/master=k8s-m2

node/k8s-m2 labeled

kubectl label nodes k8s-m3 node-role.kubernetes.io/master=k8s-m3

node/k8s-m3 labeled

kubectl label nodes k8s-n1 node-role.kubernetes.io/node=k8s-n1

node/k8s-n1 labeled

kubectl label nodes k8s-n2 node-role.kubernetes.io/node=k8s-n2

node/k8s-n2 labeled

3) Check the node information

[root@k8s-m1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-m-01 Ready master 21h v1.18.8

k8s-m-02 Ready master 21h v1.18.8

k8s-m-03 NotReady master 21h v1.18.8

k8s-n-01 Ready node 4m5s v1.18.8

k8s-n-02 Ready node 4m5s v1.18.8

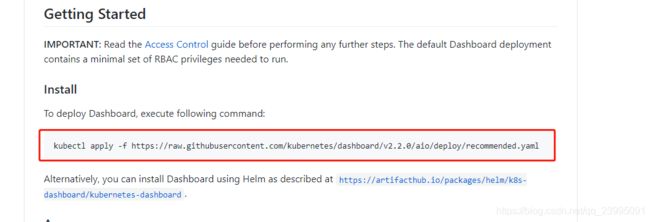

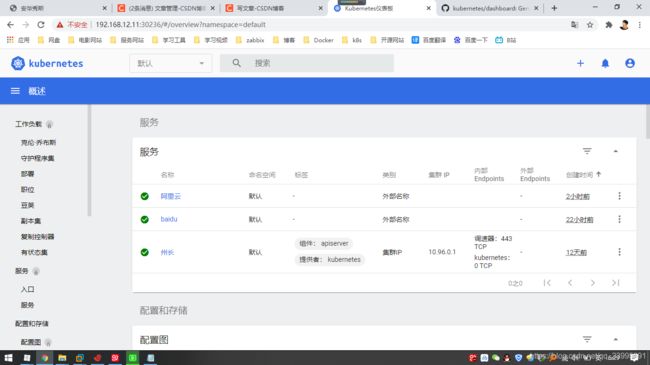

8. Install the cluster web UI (dashboard)

- Help is available at this site

https://github.com/kubernetes/dashboard

1) Install

[root@k8s-m1 ~]# kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.2.0/aio/deploy/recommended.yaml

2) Open a port for access

# Change the type at the end to NodePort, then save and exit

[root@k8s-m1 ~]# kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

···
    targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
  sessionAffinity: None
  type: NodePort
···

3) Check the port after the change

- The access port is 30236

[root@k8s-m1 ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.104.84.22 <none> 8000/TCP 15m

kubernetes-dashboard NodePort 10.101.227.244 <none> 443:30236/TCP 15m
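The NodePort is assigned by the cluster, so it will usually differ from 30236. As a sketch, it can be pulled out of the PORT(S) column instead of read by eye; `dashboard_node_port` is a hypothetical helper that reads `kubectl get svc` output on standard input, and the demo uses the sample output above.

```shell
#!/bin/sh
# dashboard_node_port prints the node port of the kubernetes-dashboard row,
# i.e. the middle part of a PORT(S) value such as "443:30236/TCP".
dashboard_node_port() {
    awk '$1 == "kubernetes-dashboard" { split($5, parts, "[:/]"); print parts[2] }'
}

sample_svc_output='NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
dashboard-metrics-scraper ClusterIP 10.104.84.22 <none> 8000/TCP 15m
kubernetes-dashboard NodePort 10.101.227.244 <none> 443:30236/TCP 15m'

printf '%s\n' "$sample_svc_output" | dashboard_node_port
```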

4) Create the token configuration file

cat > token.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system
EOF

5)部署token到集群

[root@k8s-m1 ~] kubectl apply -f token.yaml

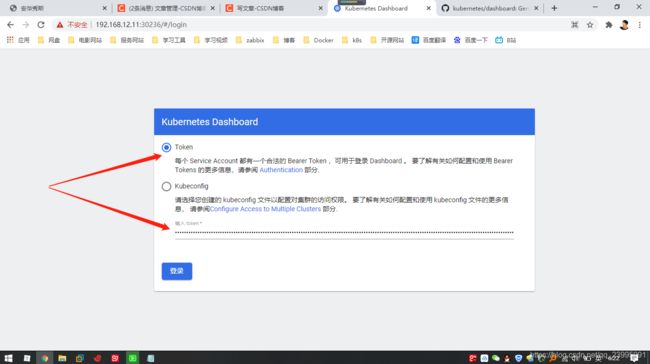

6)获取token

- 浏览器访问 https://192.168.12.11:30236/

- 复制一下token值输入到浏览器

[root@k8s-m1 ~] kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}') | grep token: | awk '{print $2}'

eyJhbGciOiJSUzI1NiIsImtpZCI6IndUZ3dqNXFxc1VKdl9LNVpEYk1EY1dqNFlYOGNMMmJaaV9COFVPbHluQzQifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLWNzbnpiIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiIyYjRmN2Q3YS0yNTc2LTQ3ZmQtYjUyOC00Mzg0ZjFhODQ3ZjgiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.KNQn8Q-sDJqheBU7pi6zc8LO3gmmwyHItssn_M0pD03EuOAVh1DGu2l5SAUzkF45TrszHe66oU8nWbRi52AVVvNTD4BvePnlo_pNuOpNe3MB5USU_iHV_u2vN1rg9eHNu46slyU_a3uzJwJxqvsCwhwbadnggB4PPMhYGuzF3bCaA8XoYZS6y_LZZS0uUdmBrAr4wRcLjLN7z38W6RJxuc6yr8pMGs-x8dA0_1TSESXl0Y5qFALpfJw8En2hpWEKGW0mNbbSTKgjduxNwzl_F83jouahZMDHjzmlR2hExJPMv4HOKaOeP0QC9aftpJvtliC7-jEhgBJ9cM-20bUejg

Appendix: enable command completion (all nodes)

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc