Converting a TensorFlow model to a TensorFlow Lite model

1. Converting the mobilenet_v1_1.0_224 model

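I had done this before, but it had been a long time and I had not written any notes back then, so I lost some time working it out again.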

The models that Google has already trained can be downloaded here:

https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet_v1.md

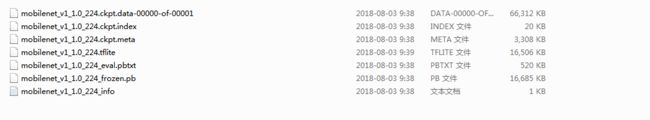

freeze_graph takes two kinds of input files: the checkpoint files mobilenet_v1_1.0_224.ckpt.* and the graph definition mobilenet_v1_1.0_224_eval.pbtxt.

The command used (Windows cmd, `^` continues the line):

```
freeze_graph ^
  --input_graph=C:\Users\judy.yuan\_bazel_judy.yuan\i7fa2ce7\execroot\org_tensorflow\bazel-out\x64_windows-opt\bin\tensorflow\lite\toco\test\mobilenet_v1_1.0_224_eval.pbtxt ^
  --input_checkpoint=C:\Users\judy.yuan\_bazel_judy.yuan\i7fa2ce7\execroot\org_tensorflow\bazel-out\x64_windows-opt\bin\tensorflow\lite\toco\test\mobilenet_v1_1.0_224.ckpt ^
  --output_graph=C:\Users\judy.yuan\_bazel_judy.yuan\i7fa2ce7\execroot\org_tensorflow\bazel-out\x64_windows-opt\bin\tensorflow\lite\toco\test\mobilenet_v1_1.0_224_frozen_judy.pb ^
  --output_node_names=MobilenetV1/Predictions/Reshape_1
```

Running this command writes the frozen graph file, mobilenet_v1_1.0_224_frozen_judy.pb.
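Conceptually, freezing does two things: it replaces every variable with a constant holding its checkpoint value, and it keeps only the nodes that the named output depends on. The sketch below illustrates this on a toy graph stored as plain Python dicts. The dict layout and the function name `freeze` are hypothetical; the real freeze_graph works on a GraphDef protobuf and handles many more cases (for example `/read` identity nodes and control dependencies).

```python
from collections import deque


def freeze(nodes, values, output_names):
    """Toy freeze (hypothetical, not the real freeze_graph).

    nodes:  name -> {"op": str, "inputs": [input node names]}
    values: variable name -> value restored from the checkpoint
    Returns a new graph with variables turned into constants and
    every node the outputs do not depend on dropped.
    """
    # 1. Replace each variable node with a constant carrying its value.
    frozen = {}
    for name, node in nodes.items():
        if node["op"] in ("Variable", "VariableV2"):
            frozen[name] = {"op": "Const", "inputs": [], "value": values[name]}
        else:
            frozen[name] = dict(node)

    # 2. Walk backwards from the requested outputs; keep what is reachable.
    keep = set()
    queue = deque(output_names)
    while queue:
        name = queue.popleft()
        if name in keep:
            continue
        keep.add(name)
        queue.extend(frozen[name]["inputs"])
    return {name: node for name, node in frozen.items() if name in keep}


# Toy graph: a saver node exists in the training graph but is not needed
# to compute output/add, so freezing drops it.
graph = {
    "input/input0": {"op": "Placeholder", "inputs": []},
    "weight/weight": {"op": "VariableV2", "inputs": []},
    "bias/b": {"op": "VariableV2", "inputs": []},
    "output/MatMul": {"op": "MatMul", "inputs": ["weight/weight", "input/input0"]},
    "output/add": {"op": "Add", "inputs": ["output/MatMul", "bias/b"]},
    "save/SaveV2": {"op": "SaveV2", "inputs": ["weight/weight", "bias/b"]},
}
frozen = freeze(graph, {"weight/weight": 0.5, "bias/b": 0.0}, ["output/add"])
print(sorted(frozen))
```

This is also why `--output_node_names` matters: anything the listed outputs do not need is pruned away.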

With the frozen graph in place, the next step is to generate the .tflite file with toco:

```
toco ^
  --input_file=C:\Users\judy.yuan\_bazel_judy.yuan\i7fa2ce7\execroot\org_tensorflow\bazel-out\x64_windows-opt\bin\tensorflow\lite\toco\test\mobilenet_v1_1.0_224_frozen_judy.pb ^
  --output_file=C:\Users\judy.yuan\_bazel_judy.yuan\i7fa2ce7\execroot\org_tensorflow\bazel-out\x64_windows-opt\bin\tensorflow\lite\toco\test\mobilenet_v1_1.0_224_frozen_judy.tflite ^
  --input_shape="1,224,224,3" ^
  --input_array=input ^
  --output_array=MobilenetV1/Predictions/Reshape_1
```
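`--input_shape` lists the dimensions of the input tensor; for MobileNet v1 these are batch, height, width and channels (one 224×224 image with 3 color channels). The small helper below is hypothetical and not part of toco: it parses such a shape string and computes how many bytes a float32 input buffer of that shape needs, which is handy when feeding the model on a device.

```python
def parse_shape(text):
    """Parse a toco-style shape string such as "1,224,224,3" into ints.

    Whitespace around each dimension is tolerated, so "1,224, 224,3"
    parses the same way.
    """
    return [int(dim.strip()) for dim in text.split(",")]


def float32_buffer_bytes(shape):
    """Bytes needed to hold one float32 tensor of the given shape."""
    count = 1
    for dim in shape:
        count *= dim
    return count * 4  # float32 is 4 bytes per element


shape = parse_shape("1,224,224,3")
print(shape)
print(float32_buffer_bytes(shape))
```

For this model that is 1 × 224 × 224 × 3 = 150528 floats, or 602112 bytes.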

2. Converting your own trained model

The first method is to call toco directly from the command line:

```
toco --input_file=****_frozen.pb --output_file=****.tflite --input_shape="1,49" --input_array=inputs/input --output_array=layer5/logits
```

This command has to be run from the directory that contains the toco executable (unless that directory is on your PATH).
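If you drive toco from a script, you can sidestep the working-directory requirement by passing the full path to the executable and absolute paths for the model files. A minimal sketch, assuming toco was built locally; `build_toco_args` and `run_toco` are hypothetical helpers, and they use only the flags that appear in the command above.

```python
import subprocess


def build_toco_args(toco, input_file, output_file, input_shape,
                    input_array, output_array):
    """Assemble the toco command line as an argument list.

    Because the arguments are passed as a list, no shell quoting is needed:
    the quotes around "1,49" in the typed command are only for the shell.
    """
    return [
        toco,  # ideally the full path to the toco executable
        "--input_file=" + input_file,
        "--output_file=" + output_file,
        "--input_shape=" + input_shape,
        "--input_array=" + input_array,
        "--output_array=" + output_array,
    ]


def run_toco(args):
    """Run toco and raise CalledProcessError if it exits with an error."""
    return subprocess.run(args, check=True)


# Hypothetical file names standing in for the ****_frozen.pb / ****.tflite above.
args = build_toco_args("toco", "model_frozen.pb", "model.tflite",
                       "1,49", "inputs/input", "layer5/logits")
print(args)
```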

The second method, which I am still experimenting with, uses the Python API:

```python
import tensorflow as tf  # TensorFlow 1.13.1

converter = tf.lite.TFLiteConverter.from_frozen_graph(
    "model_proc_mobile_fps.pb",
    input_arrays=["inputs/input"],
    output_arrays=["layer5/logits"],
    input_shapes={"inputs/input": [1, 49]})
converter.post_training_quantize = False  # keep the weights in float32
tflite_model = converter.convert()

with open("quantized_model.tflite", "wb") as f:
    f.write(tflite_model)
```

The TensorFlow version used here is 1.13.1.
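`post_training_quantize=False` keeps the weights as float32. Setting it to `True` makes the converter store the weights as 8-bit values, which can make the file up to roughly 4× smaller at some cost in precision. The sketch below shows a generic min/max affine 8-bit quantization of a list of weights to illustrate the idea; it is an assumption-level illustration, not TFLite's exact scheme, and the function names are hypothetical.

```python
def quantize_uint8(weights):
    """Map floats onto 0..255 using a scale and zero point from min/max."""
    lo, hi = min(weights), max(weights)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # make sure 0.0 is exactly representable
    scale = (hi - lo) / 255.0 if hi > lo else 1.0
    zero_point = round(-lo / scale)
    q = [max(0, min(255, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Recover approximate float values from the 8-bit codes."""
    return [(v - zero_point) * scale for v in q]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
q, scale, zero_point = quantize_uint8(weights)
restored = dequantize(q, scale, zero_point)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, zero_point, max_err)
```

Each weight now takes one byte instead of four, and the reconstruction error stays within about one quantization step (`scale`).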

For the toco conversion you need the values for --input_array= and --output_array=. You can find them by printing every node of the frozen graph with this script:

```python
import tensorflow as tf

gf = tf.GraphDef()
with open('save/model.pb', 'rb') as f:
    gf.ParseFromString(f.read())

# Print every node as "name ===> op type"
for n in gf.node:
    print(n.name + ' ===> ' + n.op)
```
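Reading the printed list by eye works for small graphs. As a rule of thumb, the input is usually a `Placeholder` node and the output is a node that no other node consumes. The sketch below applies that heuristic to (name, op, inputs) triples; the triple format and the name `guess_io` are hypothetical (with a real GraphDef you could fill them from `n.name`, `n.op` and `n.input`), and leftover training or saver nodes can fool it, so always confirm the result against the printed list.

```python
def guess_io(nodes):
    """nodes: iterable of (name, op, inputs). Returns (input_names, output_names)."""
    nodes = list(nodes)
    consumed = set()
    for _, _, inputs in nodes:
        for ref in inputs:
            # "^x" is a control dependency and "x:1" is the second output of x;
            # both refer to node x.
            consumed.add(ref.lstrip("^").split(":")[0])
    input_names = [name for name, op, _ in nodes if op == "Placeholder"]
    output_names = [name for name, op, _ in nodes
                    if name not in consumed
                    and op not in ("Placeholder", "Const", "NoOp")]
    return input_names, output_names


# Illustrative node list shaped like a small frozen linear model.
nodes = [
    ("input/input0", "Placeholder", []),
    ("bias/b", "Const", []),
    ("bias/b/read", "Identity", ["bias/b"]),
    ("weight/weight", "Const", []),
    ("weight/weight/read", "Identity", ["weight/weight"]),
    ("output/MatMul", "MatMul", ["weight/weight/read", "input/input0"]),
    ("output/add", "Add", ["output/MatMul", "bias/b/read"]),
]
print(guess_io(nodes))
```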

A complete example, which builds a small linear model, freezes it, lists its nodes and converts it to TFLite:

```python
import os

import numpy as np
import tensorflow as tf  # TensorFlow 1.x (1.13.1 was used here)
from tensorflow.python.framework import graph_util

os.makedirs("kk", exist_ok=True)  # Saver.save and GFile need the directory to exist

with tf.Session(graph=tf.Graph()) as sess:
    # Input placeholder of shape [1, 10]; x_data is random phony data from NumPy.
    with tf.name_scope("input"):
        x = tf.placeholder(tf.float32, [1, 10], name='input0')
    x_data = np.float32(np.random.rand(1, 10))  # random input
    print(x_data)

    # Build a linear model y = W x + b
    with tf.name_scope('bias'):
        b = tf.Variable(tf.zeros([1]), name='b')
        print(b)
    with tf.name_scope('weight'):
        W = tf.Variable(tf.random_uniform([1, 1], -1.0, 1.0), name='weight')
        print(W)
    with tf.name_scope('output'):
        # [1, 1] x [1, 10] -> [1, 10]; the "+" creates the op "output/add"
        y = tf.matmul(W, x) + b
        print(y)

    # Training is disabled in this example (kept for reference):
    # with tf.name_scope('mean'):
    #     loss = tf.reduce_mean(tf.square(y))  # minimize the variance
    # optimizer = tf.train.GradientDescentOptimizer(0.5)
    # train = optimizer.minimize(loss)

    # Initialize the variables
    sess.run(tf.global_variables_initializer())

    # Fitting loop; with training disabled it only prints W and b
    for step in range(0, 201):
        # sess.run(train, feed_dict)
        if step % 20 == 0:
            print(step, sess.run(W), sess.run(b))

    input_x = np.float32([[1, 2, 0, 0, 0, 0, 0, 0, 0, 0]])
    feed_dict = {x: input_x}
    print(sess.run(y, feed_dict))

    # Freeze: turn the variables into constants, keeping what output/add needs
    constant_graph = graph_util.convert_variables_to_constants(
        sess, sess.graph_def, ['output/add'])

    saver = tf.train.Saver()
    save_path = saver.save(sess, "kk/model.ckpt")

    with tf.gfile.GFile('kk/model.pb', mode='wb') as f:  # the frozen model is model.pb
        f.write(constant_graph.SerializeToString())

# List every node (name ===> op) to find the input/output array names
gf = tf.GraphDef()
with open('kk/model.pb', 'rb') as f:
    gf.ParseFromString(f.read())
print("\n\n\n")
for n in gf.node:
    print(n.name + ' ===> ' + n.op)

# Convert the frozen graph to TFLite
converter = tf.lite.TFLiteConverter.from_frozen_graph(
    "kk/model.pb",
    input_arrays=["input/input0"],
    output_arrays=["output/add"],
    input_shapes={"input/input0": [1, 10]})
converter.post_training_quantize = False
tflite_model = converter.convert()
with open("kk/model.tflite", "wb") as f:
    f.write(tflite_model)
```
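A quick sanity check on the written file: a TFLite model is a FlatBuffer whose 4-byte file identifier, stored at byte offset 4, is `TFL3`. The sketch below checks for it; `looks_like_tflite` and `check_file` are hypothetical helpers, and passing the check only means the file has the right container format, not that the converted model is correct.

```python
def looks_like_tflite(data: bytes) -> bool:
    """True if data carries the TFLite FlatBuffer identifier "TFL3" at offset 4."""
    # FlatBuffer layout: bytes 0-3 hold the offset of the root table,
    # bytes 4-7 hold the file identifier.
    return len(data) >= 8 and data[4:8] == b"TFL3"


def check_file(path):
    """Read only the first 8 bytes of the file and check the identifier."""
    with open(path, "rb") as f:
        return looks_like_tflite(f.read(8))
```

Usage: `check_file("kk/model.tflite")` should return `True` after a successful conversion.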

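The model takes 10 input values and returns 10 output values. Running the converted model on a phone with the input [1, 2, 0, 0, 0, 0, 0, 0, 0, 0] produced the following output: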

```
Loaded model model.tflite
resolved reporter
num 0batch 1
invoked
average time: 0.011 ms
Inference output 0 value is -0.0405481
Inference output 1 value is -0.0810962
Inference output 2 value is 0
Inference output 3 value is 0
Inference output 4 value is 0
Inference output 5 value is 0
Inference output 6 value is 0
Inference output 7 value is 0
Inference output 8 value is 0
Inference output 9 value is 0
grade(0-4), Inference grade is :2
num 1batch 1
```
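These numbers can be checked by hand. The model computes y = W·x + b with a 1×1 W and a 1×10 x, so each output element is W·x_j + b. Because the training code is disabled, b keeps its initial value of 0. The W below is read off output 0 (input value 1) and is specific to this run, since W is randomly initialized; `linear_model` is a hypothetical pure-Python stand-in for the graph.

```python
def linear_model(W, b, x):
    """y = W @ x + b for a 1x1 W, a 1xN x and a bias broadcast over N."""
    return [W * xj + b for xj in x]


W = -0.0405481  # taken from "Inference output 0" above
b = 0.0         # bias initialized to zero and never trained
x = [1, 2, 0, 0, 0, 0, 0, 0, 0, 0]
y = linear_model(W, b, x)
print(y)
```

Output 1 is exactly twice output 0 and the rest are 0, which matches the values printed on the phone.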