Python sklearn - Classification Algorithms - 3.4 Logistic Regression and Binary Classification

I. Application scenarios of logistic regression

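- Ad click-through rate (will a user click the ad?)

- Spam detection (is an email spam?)

- Disease diagnosis (does a patient have a disease?)

- Financial fraud detection

- Fake account detection

All of these are binary (yes/no) classification problems.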

II. How logistic regression works

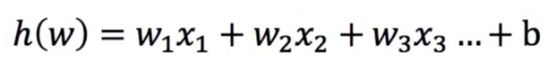

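1. Input

The input to logistic regression is the output of a linear regression: $h(w) = w_1x_1 + w_2x_2 + \dots + b$.

2. Activation function

The linear output is then passed through an activation function, the sigmoid $g(z) = \frac{1}{1 + e^{-z}}$, which maps it to a probability.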

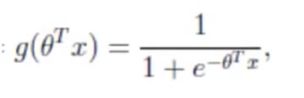

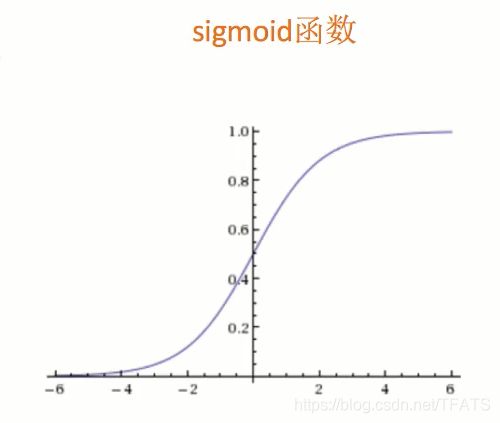

1) The sigmoid function

- Output: a probability value in the interval (0, 1); by default, 0.5 is used as the decision threshold
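The steps above (linear input, then sigmoid, then the 0.5 threshold) can be sketched in a few lines of NumPy. The weights, bias and samples are hypothetical values chosen only for illustration:

```python
import numpy as np

def sigmoid(z):
    """Map any real-valued input to the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical weights, bias and two samples, for illustration only
w = np.array([0.8, -0.5])
b = 0.1
X = np.array([[2.0, 1.0],
              [-1.0, 2.0]])

z = X @ w + b                   # step 1: linear input w1*x1 + w2*x2 + b
p = sigmoid(z)                  # step 2: probability of class 1
labels = (p > 0.5).astype(int)  # step 3: apply the default 0.5 threshold
print(z, p, labels)
```

Using a strict `> 0.5` matches how sklearn's `predict` turns a positive decision score into class 1.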

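2) Note:

Logistic regression makes its final decision from the probability of one particular class: that class is labeled 1 (the positive class) and the other is labeled 0 (the negative class). By convention, the class with fewer samples is usually chosen as the positive class.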
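In sklearn, the column of `predict_proba` that counts as "positive" is fixed by the sorted labels in `classes_`, not by which class is rarer. A minimal sketch on a made-up one-feature dataset whose labels mimic the cancer data's coding (2 and 4):

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Toy one-feature dataset (made up for illustration); label 4 is the minority class
X = np.array([[0.0], [0.5], [1.0], [1.5], [2.0], [5.0], [6.0]])
y = np.array([2, 2, 2, 2, 2, 4, 4])

clf = LogisticRegression().fit(X, y)

# classes_ is sorted; predict_proba columns follow that order,
# so column 1 is P(y == classes_[1]), the class sklearn treats as positive
print(clf.classes_)
proba = clf.predict_proba([[5.5]])
print(proba)                  # [[P(class 2), P(class 4)]]
print(clf.predict([[5.5]]))
```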

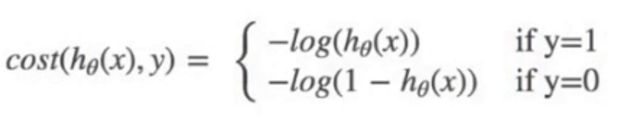

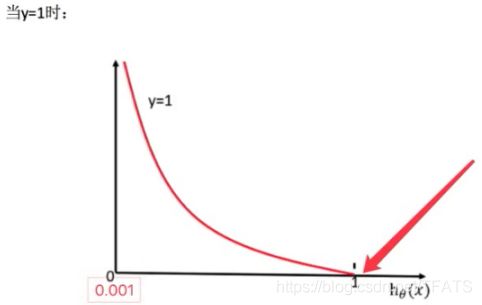

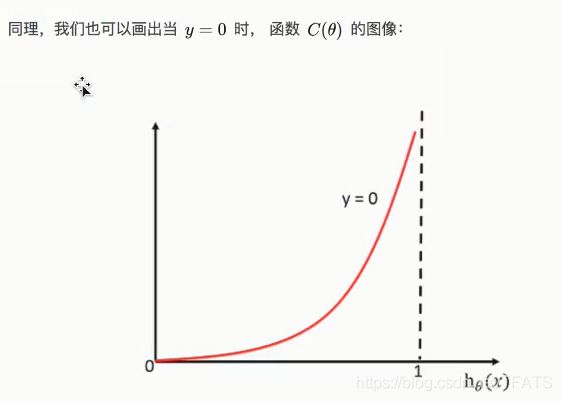

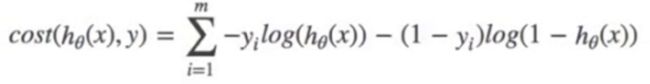

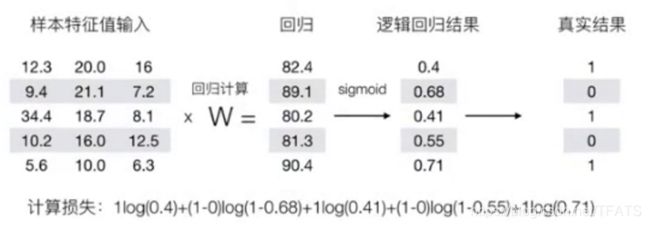

3. Loss function

1) Log-likelihood loss formula

2) Combining the two cases gives the complete loss function:
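Written out in standard notation (an assumption here: $p_i = \frac{1}{1 + e^{-z_i}}$ is the sigmoid output for sample $i$, $z_i$ its linear input, $y_i \in \{0, 1\}$ its true label, and $m$ the number of samples), the per-sample loss and the complete loss read:

```latex
\mathrm{cost}(p_i, y_i) =
\begin{cases}
  -\log\left(p_i\right)     & \text{if } y_i = 1 \\
  -\log\left(1 - p_i\right) & \text{if } y_i = 0
\end{cases}

J(w, b) = \sum_{i=1}^{m} \left[ -y_i \log\left(p_i\right) - (1 - y_i) \log\left(1 - p_i\right) \right]
```

Dividing $J$ by $m$ gives the average loss, which is what sklearn's `log_loss` reports.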

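3) An example to build intuition for the log-likelihood loss:

It follows that reducing the loss requires increasing the sigmoid output for positive samples (y = 1) and decreasing it for negative samples (y = 0).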
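A small numeric check of this reasoning, using made-up labels and two hypothetical sets of sigmoid outputs. The helper `total_log_loss` is illustrative (not a library function) and sums the per-sample loss; `sklearn.metrics.log_loss` returns the mean:

```python
import numpy as np
from sklearn.metrics import log_loss

def total_log_loss(y_true, p):
    """Summed log-likelihood loss: sum of -y*log(p) - (1-y)*log(1-p)."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.asarray(p, dtype=float)
    return np.sum(-y_true * np.log(p) - (1 - y_true) * np.log(1 - p))

# Made-up labels and two sets of sigmoid outputs for the same 4 samples
y = np.array([1, 0, 0, 1])
p_poor = np.array([0.4, 0.6, 0.5, 0.3])    # positives low, negatives high
p_better = np.array([0.9, 0.2, 0.1, 0.8])  # positives high, negatives low

loss_poor = total_log_loss(y, p_poor)
loss_better = total_log_loss(y, p_better)
print(loss_poor, loss_better)

# sklearn's log_loss is the mean of the same per-sample terms
print(np.isclose(log_loss(y, p_better), loss_better / len(y)))
```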

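4. Optimization

As with linear regression, gradient descent is used to reduce the value of the loss function. Each step updates the weights of the linear part of the model, raising the predicted probability for samples that truly belong to class 1 and lowering it for samples that truly belong to class 0.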
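A from-scratch sketch of batch gradient descent on the mean log loss, assuming made-up separable toy data, a learning rate of 0.1 and 2000 iterations. It is for intuition only; sklearn's `LogisticRegression` relies on its own solvers (see the API section):

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_logistic_gd(X, y, lr=0.1, n_iter=2000):
    """Batch gradient descent on the mean log-likelihood loss."""
    m, n = X.shape
    w = np.zeros(n)
    b = 0.0
    for _ in range(n_iter):
        p = sigmoid(X @ w + b)        # current probabilities of class 1
        error = p - y                 # derivative of the loss w.r.t. z
        w -= lr * (X.T @ error) / m   # step against the gradient
        b -= lr * error.mean()
    return w, b

# Made-up, linearly separable toy data: class 1 when x1 + x2 > 0
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = (X[:, 0] + X[:, 1] > 0).astype(float)

w, b = fit_logistic_gd(X, y)
acc = np.mean((sigmoid(X @ w + b) > 0.5) == y)
print(w, b, acc)
```

The update uses the fact that the gradient of the mean log loss with respect to $w$ is $\frac{1}{m}X^T(p - y)$.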

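III. The logistic regression API

sklearn.linear_model.LogisticRegression(solver='liblinear', penalty='l2', C=1.0)

- solver: the optimization method. 'liblinear' uses the open-source liblinear library, which iteratively optimizes the loss with coordinate descent (it was the default in older versions; since scikit-learn 0.22 the default is 'lbfgs')

- solver='sag': Stochastic Average Gradient descent, usually faster on large datasets

- penalty: the type of regularization ('l2' by default)

- C: the inverse of the regularization strength; smaller values mean stronger regularization

By convention, the class with fewer samples is treated as the positive class. sklearn itself does not look at class counts, however: in a binary problem it treats the larger label after sorting (classes_[1]) as the positive class.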
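A minimal sketch of the call with these parameters spelled out, on synthetic data from `make_classification` (an assumption standing in for a real dataset). The second model only illustrates that a smaller `C` shrinks the weights:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic binary data (an assumption; not the cancer dataset)
X, y = make_classification(n_samples=300, n_features=5, random_state=0)

# The parameters discussed above, passed explicitly
clf = LogisticRegression(solver="liblinear", penalty="l2", C=1.0)
clf.fit(X, y)
print(clf.coef_.shape, clf.intercept_.shape)  # one weight per feature, one bias
print(clf.score(X, y))                        # mean accuracy on the training data

# A much smaller C means much stronger regularization, which shrinks the weights
strong = LogisticRegression(solver="liblinear", penalty="l2", C=0.01).fit(X, y)
print(np.linalg.norm(strong.coef_), np.linalg.norm(clf.coef_))
```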

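IV. Case study: breast cancer classification

Data source: https://archive.ics.uci.edu/ml/machine-learning-databases/breast-cancer-wisconsin/ (file breast-cancer-wisconsin.data; missing values are marked with "?", and in the Class column 2 means benign and 4 means malignant)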

```python
import pandas as pd
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LogisticRegression


def logisticregression():
    '''Breast cancer prediction with logistic regression'''
    # Column names of the data file
    columns = ["Sample code number", "Clump Thickness", "Uniformity of Cell Size", "Uniformity of Cell Shape", "Marginal Adhesion", "Single Epithelial Cell Size", "Bare Nuclei", "Bland Chromatin", "Normal Nucleoli", "Mitoses", "Class"]
    data = pd.read_csv("breast-cancer-wisconsin.data", names=columns)

    # Drop rows with missing values (marked "?" in the raw file)
    data.replace(to_replace="?", value=np.nan, inplace=True)
    data.dropna(axis=0, inplace=True, how="any")

    # Target values: 2 = benign, 4 = malignant
    target = data["Class"]

    # Features: drop the ID column and the last column (Class);
    # "Bare Nuclei" was read as text because of the "?" markers, so cast to float
    data = data.drop(["Sample code number"], axis=1).iloc[:, :-1].astype(float)

    # Split into training and test sets
    x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.3)

    # Standardize: fit on the training set only, then reuse the same scaling for the test set
    std = StandardScaler()
    x_train = std.fit_transform(x_train)
    x_test = std.transform(x_test)

    # Train the logistic regression model
    lr = LogisticRegression()
    lr.fit(x_train, y_train)
    print("Logistic regression weights:", lr.coef_)
    print("Logistic regression bias:", lr.intercept_)

    # Predictions on the test set
    pre_result = lr.predict(x_test)
    print(pre_result)

    # Accuracy on the test set
    score = lr.score(x_test, y_test)
    print(score)


if __name__ == '__main__':
    logisticregression()
```

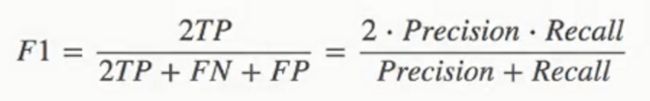

V. Evaluating binary classifiers: precision and recall

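1. Precision:

Among the samples predicted as positive, the proportion that are truly positive (how accurate the positive predictions are).

2. Recall:

Among the samples that are truly positive, the proportion predicted as positive (how complete the search is, i.e. the ability to find the positive samples).

3. F1-score

The harmonic mean of precision and recall, which balances the two.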
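Counting true positives (TP), false positives (FP) and false negatives (FN), precision = TP / (TP + FP), recall = TP / (TP + FN), and F1 = 2 · precision · recall / (precision + recall). A short sketch on made-up labels, cross-checked against sklearn's metric functions:

```python
import numpy as np
from sklearn.metrics import confusion_matrix, f1_score, precision_score, recall_score

# Made-up true and predicted labels (1 = positive, 0 = negative)
y_true = np.array([1, 1, 1, 1, 0, 0, 0, 0, 0, 0])
y_pred = np.array([1, 1, 1, 0, 1, 1, 0, 0, 0, 0])

# For labels [0, 1], confusion_matrix returns [[TN, FP], [FN, TP]]
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

precision = tp / (tp + fp)   # share of predicted positives that are real
recall = tp / (tp + fn)      # share of real positives that were found
f1 = 2 * precision * recall / (precision + recall)
print(precision, recall, f1)
print(precision_score(y_true, y_pred), recall_score(y_true, y_pred), f1_score(y_true, y_pred))
```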

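4. Model evaluation API

sklearn.metrics.classification_report(y_true, y_pred, target_names=None)

- y_true: the true target values

- y_pred: the target values predicted by the estimator

- target_names: display names of the target classes, in the order of the sorted labels

- return: a report with the precision, recall, F1-score and support of each class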
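A minimal usage sketch with made-up labels that follow the cancer data's coding (2 = benign, 4 = malignant); `output_dict=True` is used only to read individual numbers back:

```python
from sklearn.metrics import classification_report

# Made-up labels: 2 = benign, 4 = malignant
y_true = [2, 2, 2, 2, 2, 2, 4, 4, 4, 4]
y_pred = [2, 2, 2, 2, 2, 4, 4, 4, 4, 2]

# target_names are matched to the sorted labels, so 2 -> "benign", 4 -> "malignant"
print(classification_report(y_true, y_pred, target_names=["benign", "malignant"]))

# output_dict=True returns the same numbers as a nested dict
report = classification_report(y_true, y_pred, target_names=["benign", "malignant"], output_dict=True)
print(report["malignant"]["precision"], report["malignant"]["recall"])
```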

5. Code

```python
import pandas as pd
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import classification_report


def logisticregression():
    '''Breast cancer prediction with logistic regression'''
    # Column names of the data file
    columns = ["Sample code number", "Clump Thickness", "Uniformity of Cell Size", "Uniformity of Cell Shape", "Marginal Adhesion", "Single Epithelial Cell Size", "Bare Nuclei", "Bland Chromatin", "Normal Nucleoli", "Mitoses", "Class"]
    data = pd.read_csv("breast-cancer-wisconsin.data", names=columns)

    # Drop rows with missing values (marked "?" in the raw file)
    data.replace(to_replace="?", value=np.nan, inplace=True)
    data.dropna(axis=0, inplace=True, how="any")

    # Target values: 2 = benign, 4 = malignant
    target = data["Class"]

    # Features: drop the ID column and the last column (Class); cast to float
    data = data.drop(["Sample code number"], axis=1).iloc[:, :-1].astype(float)

    # Split into training and test sets
    x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.3)

    # Standardize: fit on the training set only, reuse the same scaling for the test set
    std = StandardScaler()
    x_train = std.fit_transform(x_train)
    x_test = std.transform(x_test)

    # Train the logistic regression model
    lr = LogisticRegression()
    lr.fit(x_train, y_train)

    # Inspect the learned parameters
    # print("Logistic regression weights:", lr.coef_)
    # print("Logistic regression bias:", lr.intercept_)

    # Predictions on the test set
    pre_result = lr.predict(x_test)
    # print(pre_result)

    # Accuracy on the test set
    score = lr.score(x_test, y_test)
    print(score)

    # Precision and recall per class (sorted labels: 2 -> benign, 4 -> malignant)
    report = classification_report(y_test, pre_result, target_names=["benign", "malignant"])
    print(report)


if __name__ == '__main__':
    logisticregression()
```

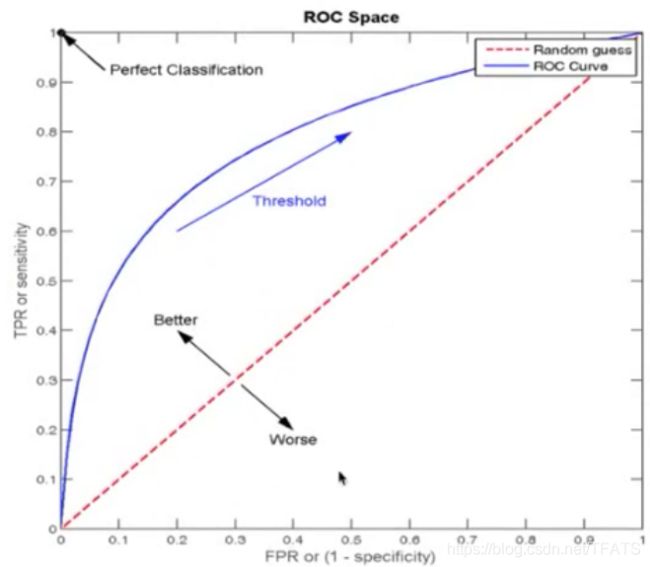

VI. ROC curve and the AUC metric

Question: how can a classifier be evaluated when the classes are imbalanced?

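1. TPR and FPR

- TPR = TP / (TP + FN): among all samples whose true class is 1, the proportion predicted as 1 (this is the recall)

- FPR = FP / (FP + TN): among all samples whose true class is 0, the proportion predicted as 1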
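Each decision threshold yields one (TPR, FPR) pair. A sketch with made-up labels and scores; the helper `tpr_fpr` is hypothetical, written only for this illustration:

```python
import numpy as np

def tpr_fpr(y_true, scores, threshold):
    """TPR and FPR when samples with score >= threshold are predicted as class 1."""
    y_true = np.asarray(y_true)
    y_pred = (np.asarray(scores) >= threshold).astype(int)
    tp = np.sum((y_pred == 1) & (y_true == 1))
    fn = np.sum((y_pred == 0) & (y_true == 1))
    fp = np.sum((y_pred == 1) & (y_true == 0))
    tn = np.sum((y_pred == 0) & (y_true == 0))
    return tp / (tp + fn), fp / (fp + tn)

# Made-up labels and predicted probabilities of class 1
y_true = [0, 0, 1, 1, 0, 1]
scores = [0.1, 0.4, 0.35, 0.8, 0.6, 0.9]

for t in (0.7, 0.5, 0.3):
    print(t, tpr_fpr(y_true, scores, t))
```

Lowering the threshold moves both rates up, which is how the ROC curve is traced out.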

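2. ROC curve

The horizontal axis of the ROC curve is FPR and the vertical axis is TPR. When the two are equal, the classifier predicts class 1 with the same probability whether the true class is 1 or 0; the curve is then the diagonal and the AUC is 0.5.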
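`sklearn.metrics.roc_curve` computes these points over the relevant thresholds, and `sklearn.metrics.auc` integrates them. A sketch on made-up labels and probability scores:

```python
import numpy as np
from sklearn.metrics import auc, roc_curve

# Made-up labels and predicted probabilities of class 1
y_true = np.array([0, 0, 1, 1, 0, 1])
scores = np.array([0.1, 0.4, 0.35, 0.8, 0.6, 0.9])

# One (FPR, TPR) point per threshold, in order of decreasing threshold;
# the first point (0, 0) corresponds to a threshold above every score
fpr, tpr, thresholds = roc_curve(y_true, scores)
for f, t, th in zip(fpr, tpr, thresholds):
    print(f"threshold={th:.2f}  FPR={f:.2f}  TPR={t:.2f}")

# Area under the curve via the trapezoidal rule
print(auc(fpr, tpr))
```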

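3. AUC

- Probabilistic meaning: pick one positive and one negative sample at random; AUC is the probability that the positive sample receives a higher score than the negative one.

- In principle AUC lies between 0 and 1; for a useful classifier it lies between 0.5 and 1, and higher is better.

- AUC = 1: a perfect classifier. With this model there is at least one threshold that yields perfect predictions. In most real prediction tasks, no perfect classifier exists.

- 0.5 < AUC < 1: better than random guessing; with a suitably chosen threshold, the model has predictive value.

- AUC = 0.5: the same as random guessing (e.g. tossing a coin); the model has no predictive value.

- AUC < 0.5: worse than random guessing; but simply inverting every prediction makes it better than random guessing, so in practice AUC < 0.5 does not need to be considered.

In the end, the effective range of AUC is [0.5, 1], and the closer to 1 the better.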
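The probabilistic definition can be checked directly by comparing every positive/negative pair. The helper `pairwise_auc` is hypothetical (ties counted as 1/2) and is compared with `roc_auc_score` on made-up data; the last lines show that inverting the scores turns an AUC of a into 1 - a:

```python
import itertools

import numpy as np
from sklearn.metrics import roc_auc_score

def pairwise_auc(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p, n in itertools.product(pos, neg))
    return wins / (len(pos) * len(neg))

# Made-up labels and scores
y_true = [0, 0, 1, 1, 0, 1]
scores = [0.1, 0.4, 0.35, 0.8, 0.6, 0.9]

print(pairwise_auc(y_true, scores), roc_auc_score(y_true, scores))

# Inverting the scores turns an AUC of a into 1 - a
inverted = [1 - s for s in scores]
print(roc_auc_score(y_true, inverted))
```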

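4. AUC computation API

from sklearn.metrics import roc_auc_score

- sklearn.metrics.roc_auc_score(y_true, y_score)

- computes the area under the ROC curve, i.e. the AUC value

- y_true: the true class of each sample, labeled 0 (negative) or 1 (positive); with other binary labels, the larger label is treated as positive

- y_score: the predicted score of each sample, e.g. the predicted probability of the positive class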
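Because AUC is computed from a ranking of scores, `y_score` should be a continuous score such as `predict_proba(...)[:, 1]`. Passing hard predicted labels still runs, but then the curve has a single operating point and the AUC reduces to the balanced accuracy, (TPR + 1 - FPR) / 2. A sketch on synthetic data (an assumption, standing in for the cancer data):

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import balanced_accuracy_score, roc_auc_score
from sklearn.model_selection import train_test_split

# Synthetic binary data (an assumption standing in for the cancer dataset)
X, y = make_classification(n_samples=500, n_features=5, random_state=42)
x_train, x_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

lr = LogisticRegression().fit(x_train, y_train)

# Preferred: the predicted probability of the positive class (classes_[1])
auc_proba = roc_auc_score(y_test, lr.predict_proba(x_test)[:, 1])

# Hard labels also run, but give a single operating point;
# the AUC then equals the balanced accuracy
y_pred = lr.predict(x_test)
auc_labels = roc_auc_score(y_test, y_pred)
bal_acc = balanced_accuracy_score(y_test, y_pred)
print(auc_proba, auc_labels, bal_acc)
```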

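```python
import pandas as pd
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import classification_report, roc_auc_score


def logisticregression():
    '''Breast cancer prediction with logistic regression'''
    # Column names of the data file
    columns = ["Sample code number", "Clump Thickness", "Uniformity of Cell Size", "Uniformity of Cell Shape", "Marginal Adhesion", "Single Epithelial Cell Size", "Bare Nuclei", "Bland Chromatin", "Normal Nucleoli", "Mitoses", "Class"]
    data = pd.read_csv("breast-cancer-wisconsin.data", names=columns)

    # Drop rows with missing values (marked "?" in the raw file)
    data.replace(to_replace="?", value=np.nan, inplace=True)
    data.dropna(axis=0, inplace=True, how="any")

    # Target values: 2 = benign, 4 = malignant
    target = data["Class"]

    # Features: drop the ID column and the last column (Class); cast to float
    data = data.drop(["Sample code number"], axis=1).iloc[:, :-1].astype(float)

    # Split into training and test sets
    x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.3)

    # Standardize: fit on the training set only, reuse the same scaling for the test set
    std = StandardScaler()
    x_train = std.fit_transform(x_train)
    x_test = std.transform(x_test)

    # Train the logistic regression model
    lr = LogisticRegression()
    lr.fit(x_train, y_train)

    # Inspect the learned parameters
    # print("Logistic regression weights:", lr.coef_)
    # print("Logistic regression bias:", lr.intercept_)

    # Predictions on the test set
    pre_result = lr.predict(x_test)
    # print(pre_result)

    # Accuracy on the test set
    score = lr.score(x_test, y_test)
    print(score)

    # Precision and recall per class (sorted labels: 2 -> benign, 4 -> malignant)
    report = classification_report(y_test, pre_result, target_names=["benign", "malignant"])
    print(report)

    # AUC: encode the labels as 0/1 (malignant 4 -> 1, benign 2 -> 0)
    y_test_binary = np.where(y_test > 2.5, 1, 0)
    print(y_test_binary)

    # AUC needs scores, not hard labels: use P(malignant) from predict_proba;
    # column 1 corresponds to lr.classes_[1], i.e. label 4
    y_score = lr.predict_proba(x_test)[:, 1]
    auc_score = roc_auc_score(y_test_binary, y_score)
    print(auc_score)


if __name__ == '__main__':
    logisticregression()
```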

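5. Summary

- AUC is a binary-classification metric (multi-class problems need one-vs-rest or one-vs-one averaging)

- AUC is well suited to evaluating classifiers on imbalanced data

- AUC is computed from the predicted probabilities (scores), not only from the predicted class labels

- As a rough rule of thumb, a classifier with AUC above 0.7 is generally considered fairly good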
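A made-up illustration of why AUC suits imbalanced data: a "model" that gives every sample the same low score reaches 95% accuracy on 95:5 data, yet its AUC is 0.5:

```python
import numpy as np
from sklearn.metrics import accuracy_score, roc_auc_score

# Made-up imbalanced labels: 95 negatives, 5 positives
y_true = np.array([0] * 95 + [1] * 5)

# A useless "model" that outputs the same low probability for everyone
constant_scores = np.full(100, 0.1)
constant_labels = (constant_scores > 0.5).astype(int)   # predicts 0 for everyone

print(accuracy_score(y_true, constant_labels))  # 0.95: looks good
print(roc_auc_score(y_true, constant_scores))   # 0.5: no better than chance
```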

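VII. Summary of algorithm implementations in scikit-learn

- scikit-learn puts gradient-descent solving into separate estimators, SGDClassifier and SGDRegressor. Their losses are the usual classification and regression losses: for classification, e.g. log loss and hinge loss (SVM); for regression, e.g. squared error. The other estimators use the same losses but are not solved with stochastic gradient descent, so large-scale data is generally handled with scikit-learn's SGD estimators.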