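Machine Learning: The Iris Dataset Processing Workflow

Most people meet the Iris dataset when they start learning machine learning. I am a beginner myself. I put together the following notes from my own understanding for your reference, so please point out any mistakes!

Goal

Using the samples in the Iris dataset, build a model that takes four features (sepal length, sepal width, petal length and petal width) and predicts the target: whether a flower is Iris setosa, Iris versicolor or Iris virginica.

A complete model training and prediction example can be divided into the following steps:

- Obtain the data
- Feature engineering
- Split the data into training and test sets
- Train the model
- Predict and evaluate
- Cross-validation
- Search for the optimal parameters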

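Obtaining the Data

About the dataset

The Iris dataset is a commonly used dataset for classification experiments, published by Fisher in 1936. Also known as the Iris flower dataset, it is a classic dataset for multivariate analysis.

Details of the dataset:

- Features: sepal length, sepal width, petal length, petal width
  ['sepal length (cm)', 'sepal width (cm)', 'petal length (cm)', 'petal width (cm)']
- Target: Iris setosa, Iris versicolor, Iris virginica
  ['setosa' 'versicolor' 'virginica']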

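How do we get it?

Use the scikit-learn dataset API, sklearn.datasets, to load popular datasets:

- `datasets.load_*()`: loads small datasets; the data ships with the datasets package itself
- `datasets.fetch_*(data_home=None)`: fetches large datasets, which have to be downloaded from the internet. The data_home parameter sets the directory the dataset is downloaded to; the default is ~/scikit_learn_data/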
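Here is a minimal sketch of a `load_*` call. Besides returning a Bunch, `load_iris` accepts `return_X_y=True`, which this article does not otherwise use (it is available in scikit-learn 0.18 and later). With that option it returns the feature and target arrays directly. `fetch_*` is not shown because it needs a network download.

```python
from sklearn.datasets import load_iris

# load_* reads data bundled with scikit-learn, so no download is needed.
# With return_X_y=True, a (features, targets) tuple is returned instead of a Bunch.
X, y = load_iris(return_X_y=True)

print(type(X).__name__, X.shape)  # ndarray (150, 4)
print(type(y).__name__, y.shape)  # ndarray (150,)
```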

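Return values of sklearn datasets

`load_*` and `fetch_*` return a Bunch, which is a dictionary-like object. Older scikit-learn versions exposed it as datasets.base.Bunch; current versions provide it as sklearn.utils.Bunch. Its fields are:

- data: feature array, a 2-D numpy.ndarray of shape [n_samples, n_features]
- target: label array, a 1-D numpy.ndarray of length n_samples
- DESCR: description of the dataset
- feature_names: feature names (some datasets, such as the news text data, the handwritten digits and regression datasets, may not provide this field)
- target_names: label names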
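The following sketch checks the field descriptions above. It prints the shapes of `data` and `target`, counts the samples per class with `np.bincount`, and uses `target_names` to turn integer labels into species names. The sample indices 0, 50 and 100 are chosen only because the bundled data is ordered by class with 50 samples each, which the printed targets further down also show.

```python
import numpy as np
from sklearn.datasets import load_iris

iris = load_iris()

# data: 2-D array, one row per sample and one column per feature
print(iris.data.shape)    # (150, 4)
# target: 1-D array of integer class labels, one per sample
print(iris.target.shape)  # (150,)
# Number of samples in each class
print(np.bincount(iris.target))  # [50 50 50]

# target_names maps the integer labels 0/1/2 to species names
first_of_each = iris.target[[0, 50, 100]]
print(iris.target_names[first_of_each])  # ['setosa' 'versicolor' 'virginica']
```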

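Example: loading the Iris dataset

```python
from sklearn.datasets import load_iris

# Load the Iris dataset
iris = load_iris()
print("Return value of the Iris dataset:\n", iris)
# The return value is a Bunch, which inherits from dict,
# so both iris["data"] and iris.data work
print("Iris features:\n", iris["data"])
print("Iris targets:\n", iris.target)
print("Iris feature names:\n", iris.feature_names)
print("Iris target names:\n", iris.target_names)
print("Iris description:\n", iris.DESCR)
```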

Return value of the Iris dataset:

{'data': array([[5.1, 3.5, 1.4, 0.2],

[4.9, 3. , 1.4, 0.2],

[4.7, 3.2, 1.3, 0.2],

[4.6, 3.1, 1.5, 0.2],

[5. , 3.6, 1.4, 0.2],

[5.4, 3.9, 1.7, 0.4],

[4.6, 3.4, 1.4, 0.3],

[5. , 3.4, 1.5, 0.2],

[4.4, 2.9, 1.4, 0.2],

[4.9, 3.1, 1.5, 0.1],

[5.4, 3.7, 1.5, 0.2],

[4.8, 3.4, 1.6, 0.2],

[4.8, 3. , 1.4, 0.1],

[4.3, 3. , 1.1, 0.1],

[5.8, 4. , 1.2, 0.2],

[5.7, 4.4, 1.5, 0.4],

[5.4, 3.9, 1.3, 0.4],

[5.1, 3.5, 1.4, 0.3],

[5.7, 3.8, 1.7, 0.3],

[5.1, 3.8, 1.5, 0.3],

[5.4, 3.4, 1.7, 0.2],

[5.1, 3.7, 1.5, 0.4],

[4.6, 3.6, 1. , 0.2],

[5.1, 3.3, 1.7, 0.5],

[4.8, 3.4, 1.9, 0.2],

[5. , 3. , 1.6, 0.2],

[5. , 3.4, 1.6, 0.4],

[5.2, 3.5, 1.5, 0.2],

[5.2, 3.4, 1.4, 0.2],

[4.7, 3.2, 1.6, 0.2],

[4.8, 3.1, 1.6, 0.2],

[5.4, 3.4, 1.5, 0.4],

[5.2, 4.1, 1.5, 0.1],

[5.5, 4.2, 1.4, 0.2],

[4.9, 3.1, 1.5, 0.2],

[5. , 3.2, 1.2, 0.2],

[5.5, 3.5, 1.3, 0.2],

[4.9, 3.6, 1.4, 0.1],

[4.4, 3. , 1.3, 0.2],

[5.1, 3.4, 1.5, 0.2],

[5. , 3.5, 1.3, 0.3],

[4.5, 2.3, 1.3, 0.3],

[4.4, 3.2, 1.3, 0.2],

[5. , 3.5, 1.6, 0.6],

[5.1, 3.8, 1.9, 0.4],

[4.8, 3. , 1.4, 0.3],

[5.1, 3.8, 1.6, 0.2],

[4.6, 3.2, 1.4, 0.2],

[5.3, 3.7, 1.5, 0.2],

[5. , 3.3, 1.4, 0.2],

[7. , 3.2, 4.7, 1.4],

[6.4, 3.2, 4.5, 1.5],

[6.9, 3.1, 4.9, 1.5],

[5.5, 2.3, 4. , 1.3],

[6.5, 2.8, 4.6, 1.5],

[5.7, 2.8, 4.5, 1.3],

[6.3, 3.3, 4.7, 1.6],

[4.9, 2.4, 3.3, 1. ],

[6.6, 2.9, 4.6, 1.3],

[5.2, 2.7, 3.9, 1.4],

[5. , 2. , 3.5, 1. ],

[5.9, 3. , 4.2, 1.5],

[6. , 2.2, 4. , 1. ],

[6.1, 2.9, 4.7, 1.4],

[5.6, 2.9, 3.6, 1.3],

[6.7, 3.1, 4.4, 1.4],

[5.6, 3. , 4.5, 1.5],

[5.8, 2.7, 4.1, 1. ],

[6.2, 2.2, 4.5, 1.5],

[5.6, 2.5, 3.9, 1.1],

[5.9, 3.2, 4.8, 1.8],

[6.1, 2.8, 4. , 1.3],

[6.3, 2.5, 4.9, 1.5],

[6.1, 2.8, 4.7, 1.2],

[6.4, 2.9, 4.3, 1.3],

[6.6, 3. , 4.4, 1.4],

[6.8, 2.8, 4.8, 1.4],

[6.7, 3. , 5. , 1.7],

[6. , 2.9, 4.5, 1.5],

[5.7, 2.6, 3.5, 1. ],

[5.5, 2.4, 3.8, 1.1],

[5.5, 2.4, 3.7, 1. ],

[5.8, 2.7, 3.9, 1.2],

[6. , 2.7, 5.1, 1.6],

[5.4, 3. , 4.5, 1.5],

[6. , 3.4, 4.5, 1.6],

[6.7, 3.1, 4.7, 1.5],

[6.3, 2.3, 4.4, 1.3],

[5.6, 3. , 4.1, 1.3],

[5.5, 2.5, 4. , 1.3],

[5.5, 2.6, 4.4, 1.2],

[6.1, 3. , 4.6, 1.4],

[5.8, 2.6, 4. , 1.2],

[5. , 2.3, 3.3, 1. ],

[5.6, 2.7, 4.2, 1.3],

[5.7, 3. , 4.2, 1.2],

[5.7, 2.9, 4.2, 1.3],

[6.2, 2.9, 4.3, 1.3],

[5.1, 2.5, 3. , 1.1],

[5.7, 2.8, 4.1, 1.3],

[6.3, 3.3, 6. , 2.5],

[5.8, 2.7, 5.1, 1.9],

[7.1, 3. , 5.9, 2.1],

[6.3, 2.9, 5.6, 1.8],

[6.5, 3. , 5.8, 2.2],

[7.6, 3. , 6.6, 2.1],

[4.9, 2.5, 4.5, 1.7],

[7.3, 2.9, 6.3, 1.8],

[6.7, 2.5, 5.8, 1.8],

[7.2, 3.6, 6.1, 2.5],

[6.5, 3.2, 5.1, 2. ],

[6.4, 2.7, 5.3, 1.9],

[6.8, 3. , 5.5, 2.1],

[5.7, 2.5, 5. , 2. ],

[5.8, 2.8, 5.1, 2.4],

[6.4, 3.2, 5.3, 2.3],

[6.5, 3. , 5.5, 1.8],

[7.7, 3.8, 6.7, 2.2],

[7.7, 2.6, 6.9, 2.3],

[6. , 2.2, 5. , 1.5],

[6.9, 3.2, 5.7, 2.3],

[5.6, 2.8, 4.9, 2. ],

[7.7, 2.8, 6.7, 2. ],

[6.3, 2.7, 4.9, 1.8],

[6.7, 3.3, 5.7, 2.1],

[7.2, 3.2, 6. , 1.8],

[6.2, 2.8, 4.8, 1.8],

[6.1, 3. , 4.9, 1.8],

[6.4, 2.8, 5.6, 2.1],

[7.2, 3. , 5.8, 1.6],

[7.4, 2.8, 6.1, 1.9],

[7.9, 3.8, 6.4, 2. ],

[6.4, 2.8, 5.6, 2.2],

[6.3, 2.8, 5.1, 1.5],

[6.1, 2.6, 5.6, 1.4],

[7.7, 3. , 6.1, 2.3],

[6.3, 3.4, 5.6, 2.4],

[6.4, 3.1, 5.5, 1.8],

[6. , 3. , 4.8, 1.8],

[6.9, 3.1, 5.4, 2.1],

[6.7, 3.1, 5.6, 2.4],

[6.9, 3.1, 5.1, 2.3],

[5.8, 2.7, 5.1, 1.9],

[6.8, 3.2, 5.9, 2.3],

[6.7, 3.3, 5.7, 2.5],

[6.7, 3. , 5.2, 2.3],

[6.3, 2.5, 5. , 1.9],

[6.5, 3. , 5.2, 2. ],

[6.2, 3.4, 5.4, 2.3],

[5.9, 3. , 5.1, 1.8]]), 'target': array([0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2,

2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2,

2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2]), 'target_names': array(['setosa', 'versicolor', 'virginica'], dtype='<U10'), ...}

Feature Engineering

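Definition of feature engineering

Feature engineering is the process of using transformation functions to convert features into data that better suits the algorithm or model.

There are many feature engineering APIs. Here we use sklearn.preprocessing.PolynomialFeatures.

PolynomialFeatures generates polynomial and interaction features. It produces a new feature matrix that consists of all polynomial combinations of the features with degree less than or equal to the specified degree.

For example, if an input sample is two-dimensional and of the form [a, b], its degree-2 polynomial features are [1, a, b, a^2, ab, b^2].

- Parameters
  - degree: degree of the polynomial features; default 2.
  - interaction_only: default False. If True, no feature is combined with itself, so a^2 and b^2 would be absent from the degree-2 example above.
  - include_bias: default True. If True, the constant term 1 in the example above is included.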
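This sketch applies the three parameters to a single two-feature sample. It assumes a = 2 and b = 3, values chosen only for illustration. The column order follows the [1, a, b, a^2, ab, b^2] pattern described above.

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

# One sample with two features: a = 2, b = 3
X = np.array([[2.0, 3.0]])

# Defaults: degree=2, include_bias=True -> [1, a, b, a^2, ab, b^2]
full = PolynomialFeatures(degree=2).fit_transform(X)
print(full)     # [[1. 2. 3. 4. 6. 9.]]

# interaction_only=True drops a^2 and b^2 -> [1, a, b, ab]
inter = PolynomialFeatures(degree=2, interaction_only=True).fit_transform(X)
print(inter)    # [[1. 2. 3. 6.]]

# include_bias=False drops the constant 1 -> [a, b, a^2, ab, b^2]
no_bias = PolynomialFeatures(degree=2, include_bias=False).fit_transform(X)
print(no_bias)  # [[2. 3. 4. 6. 9.]]
```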

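```python
# Feature engineering on the Iris dataset
from sklearn.preprocessing import PolynomialFeatures

ploy = PolynomialFeatures(3)  # degree-3 polynomial features
data_ploy = ploy.fit_transform(iris.data)
print("Features before expansion:\n", iris.data)
print("Features after expansion:\n", data_ploy)
```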

Features before expansion:

[[5.1 3.5 1.4 0.2]

[4.9 3. 1.4 0.2]

[4.7 3.2 1.3 0.2]

[4.6 3.1 1.5 0.2]

[5. 3.6 1.4 0.2]

[5.4 3.9 1.7 0.4]

[4.6 3.4 1.4 0.3]

[5. 3.4 1.5 0.2]

[4.4 2.9 1.4 0.2]

[4.9 3.1 1.5 0.1]

[5.4 3.7 1.5 0.2]

[4.8 3.4 1.6 0.2]

[4.8 3. 1.4 0.1]

[4.3 3. 1.1 0.1]

[5.8 4. 1.2 0.2]

[5.7 4.4 1.5 0.4]

[5.4 3.9 1.3 0.4]

[5.1 3.5 1.4 0.3]

[5.7 3.8 1.7 0.3]

[5.1 3.8 1.5 0.3]

[5.4 3.4 1.7 0.2]

[5.1 3.7 1.5 0.4]

[4.6 3.6 1. 0.2]

[5.1 3.3 1.7 0.5]

[4.8 3.4 1.9 0.2]

[5. 3. 1.6 0.2]

[5. 3.4 1.6 0.4]

[5.2 3.5 1.5 0.2]

[5.2 3.4 1.4 0.2]

[4.7 3.2 1.6 0.2]

[4.8 3.1 1.6 0.2]

[5.4 3.4 1.5 0.4]

[5.2 4.1 1.5 0.1]

[5.5 4.2 1.4 0.2]

[4.9 3.1 1.5 0.2]

[5. 3.2 1.2 0.2]

[5.5 3.5 1.3 0.2]

[4.9 3.6 1.4 0.1]

[4.4 3. 1.3 0.2]

[5.1 3.4 1.5 0.2]

[5. 3.5 1.3 0.3]

[4.5 2.3 1.3 0.3]

[4.4 3.2 1.3 0.2]

[5. 3.5 1.6 0.6]

[5.1 3.8 1.9 0.4]

[4.8 3. 1.4 0.3]

[5.1 3.8 1.6 0.2]

[4.6 3.2 1.4 0.2]

[5.3 3.7 1.5 0.2]

[5. 3.3 1.4 0.2]

[7. 3.2 4.7 1.4]

[6.4 3.2 4.5 1.5]

[6.9 3.1 4.9 1.5]

[5.5 2.3 4. 1.3]

[6.5 2.8 4.6 1.5]

[5.7 2.8 4.5 1.3]

[6.3 3.3 4.7 1.6]

[4.9 2.4 3.3 1. ]

[6.6 2.9 4.6 1.3]

[5.2 2.7 3.9 1.4]

[5. 2. 3.5 1. ]

[5.9 3. 4.2 1.5]

[6. 2.2 4. 1. ]

[6.1 2.9 4.7 1.4]

[5.6 2.9 3.6 1.3]

[6.7 3.1 4.4 1.4]

[5.6 3. 4.5 1.5]

[5.8 2.7 4.1 1. ]

[6.2 2.2 4.5 1.5]

[5.6 2.5 3.9 1.1]

[5.9 3.2 4.8 1.8]

[6.1 2.8 4. 1.3]

[6.3 2.5 4.9 1.5]

[6.1 2.8 4.7 1.2]

[6.4 2.9 4.3 1.3]

[6.6 3. 4.4 1.4]

[6.8 2.8 4.8 1.4]

[6.7 3. 5. 1.7]

[6. 2.9 4.5 1.5]

[5.7 2.6 3.5 1. ]

[5.5 2.4 3.8 1.1]

[5.5 2.4 3.7 1. ]

[5.8 2.7 3.9 1.2]

[6. 2.7 5.1 1.6]

[5.4 3. 4.5 1.5]

[6. 3.4 4.5 1.6]

[6.7 3.1 4.7 1.5]

[6.3 2.3 4.4 1.3]

[5.6 3. 4.1 1.3]

[5.5 2.5 4. 1.3]

[5.5 2.6 4.4 1.2]

[6.1 3. 4.6 1.4]

[5.8 2.6 4. 1.2]

[5. 2.3 3.3 1. ]

[5.6 2.7 4.2 1.3]

[5.7 3. 4.2 1.2]

[5.7 2.9 4.2 1.3]

[6.2 2.9 4.3 1.3]

[5.1 2.5 3. 1.1]

[5.7 2.8 4.1 1.3]

[6.3 3.3 6. 2.5]

[5.8 2.7 5.1 1.9]

[7.1 3. 5.9 2.1]

[6.3 2.9 5.6 1.8]

[6.5 3. 5.8 2.2]

[7.6 3. 6.6 2.1]

[4.9 2.5 4.5 1.7]

[7.3 2.9 6.3 1.8]

[6.7 2.5 5.8 1.8]

[7.2 3.6 6.1 2.5]

[6.5 3.2 5.1 2. ]

[6.4 2.7 5.3 1.9]

[6.8 3. 5.5 2.1]

[5.7 2.5 5. 2. ]

[5.8 2.8 5.1 2.4]

[6.4 3.2 5.3 2.3]

[6.5 3. 5.5 1.8]

[7.7 3.8 6.7 2.2]

[7.7 2.6 6.9 2.3]

[6. 2.2 5. 1.5]

[6.9 3.2 5.7 2.3]

[5.6 2.8 4.9 2. ]

[7.7 2.8 6.7 2. ]

[6.3 2.7 4.9 1.8]

[6.7 3.3 5.7 2.1]

[7.2 3.2 6. 1.8]

[6.2 2.8 4.8 1.8]

[6.1 3. 4.9 1.8]

[6.4 2.8 5.6 2.1]

[7.2 3. 5.8 1.6]

[7.4 2.8 6.1 1.9]

[7.9 3.8 6.4 2. ]

[6.4 2.8 5.6 2.2]

[6.3 2.8 5.1 1.5]

[6.1 2.6 5.6 1.4]

[7.7 3. 6.1 2.3]

[6.3 3.4 5.6 2.4]

[6.4 3.1 5.5 1.8]

[6. 3. 4.8 1.8]

[6.9 3.1 5.4 2.1]

[6.7 3.1 5.6 2.4]

[6.9 3.1 5.1 2.3]

[5.8 2.7 5.1 1.9]

[6.8 3.2 5.9 2.3]

[6.7 3.3 5.7 2.5]

[6.7 3. 5.2 2.3]

[6.3 2.5 5. 1.9]

[6.5 3. 5.2 2. ]

[6.2 3.4 5.4 2.3]

[5.9 3. 5.1 1.8]]

Features after expansion:

[[1.0000e+00 5.1000e+00 3.5000e+00 ... 3.9200e-01 5.6000e-02 8.0000e-03]

[1.0000e+00 4.9000e+00 3.0000e+00 ... 3.9200e-01 5.6000e-02 8.0000e-03]

[1.0000e+00 4.7000e+00 3.2000e+00 ... 3.3800e-01 5.2000e-02 8.0000e-03]

...

[1.0000e+00 6.5000e+00 3.0000e+00 ... 5.4080e+01 2.0800e+01 8.0000e+00]

[1.0000e+00 6.2000e+00 3.4000e+00 ... 6.7068e+01 2.8566e+01 1.2167e+01]

[1.0000e+00 5.9000e+00 3.0000e+00 ... 4.6818e+01 1.6524e+01 5.8320e+00]]
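The `...` in the output hides most of the new columns. As a worked check, with include_bias=True the number of output columns equals the number of monomials of total degree at most d in n variables, which is C(n + d, d). For the 4 Iris features and degree 3 this gives C(7, 3) = 35. The sketch assumes Python 3.8+ for `math.comb` and uses its own variable name `data_poly` for the result.

```python
from math import comb

from sklearn.datasets import load_iris
from sklearn.preprocessing import PolynomialFeatures

iris = load_iris()
n_features = iris.data.shape[1]  # 4
degree = 3

# One output column per monomial of total degree <= degree (bias included)
expected = comb(n_features + degree, degree)
print(expected)  # 35

data_poly = PolynomialFeatures(degree).fit_transform(iris.data)
print(data_poly.shape)  # (150, 35)
```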

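Data Splitting

In machine learning, a dataset is generally divided into two parts:

- Training data: used to train, that is, build, the model
- Test data: used when checking the model, to evaluate whether it is effective

Typical split ratios:

- Training set: 70% to 80%
- Test set: 20% to 30%

Data splitting API

`sklearn.model_selection.train_test_split(*arrays, **options)`

- Parameters
  - x: the features of the dataset
  - y: the labels (target values) of the dataset
  - test_size: size of the test set, usually a float (a fraction); if omitted, it defaults to 0.25
  - train_size: size of the training set, usually a float; if omitted, it is the complement of test_size (0.75 by default)
  - random_state: random seed. Different seeds produce different random samples, and the same seed always produces the same split.
- Returns
  - x_train, x_test, y_train, y_test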
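Here is a minimal sketch of how these parameters behave on the Iris data. The list above does not mention `stratify`. It is an additional `train_test_split` parameter that keeps the class proportions equal in both subsets. Using it is a judgment call and not part of the original workflow.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

iris = load_iris()

# test_size=0.2 on 150 samples -> 120 training and 30 test samples
x_train, x_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1)
print(len(x_train), len(x_test))  # 120 30

# The same random_state reproduces exactly the same split
x_train2, x_test2, _, _ = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1)
print(np.array_equal(x_test, x_test2))  # True

# stratify=y keeps the class proportions equal in both subsets
_, _, _, y_test_strat = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1,
    stratify=iris.target)
print(np.bincount(y_test_strat))  # [10 10 10]
```

In the unstratified split printed below (random_state=1), the 30 test targets contain 11, 13 and 6 samples of classes 0, 1 and 2. With stratify the counts become equal.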

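```python
from sklearn.model_selection import train_test_split

# Split the dataset
# First split: using the original features
x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, test_size=0.2, random_state=1)
print("Training set features:\n", x_train)
print("Training set targets:\n", y_train)
print("Test set features:\n", x_test)
print("Test set targets:\n", y_test)
print("--------------------------")

# Second split: using the feature-expanded data. This overwrites the variables
# above, so the model below is trained on the expanded features.
x_train, x_test, y_train, y_test = train_test_split(data_ploy, iris.target, test_size=0.2, random_state=1)
print("Training set features:\n", x_train)
print("Training set targets:\n", y_train)
print("Test set features:\n", x_test)
print("Test set targets:\n", y_test)
```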

Training set features:

[[6.1 3. 4.6 1.4]

[7.7 3. 6.1 2.3]

[5.6 2.5 3.9 1.1]

[6.4 2.8 5.6 2.1]

[5.8 2.8 5.1 2.4]

[5.3 3.7 1.5 0.2]

[5.5 2.3 4. 1.3]

[5.2 3.4 1.4 0.2]

[6.5 2.8 4.6 1.5]

[6.7 2.5 5.8 1.8]

[6.8 3. 5.5 2.1]

[5.1 3.5 1.4 0.3]

[6. 2.2 5. 1.5]

[6.3 2.9 5.6 1.8]

[6.6 2.9 4.6 1.3]

[7.7 2.6 6.9 2.3]

[5.7 3.8 1.7 0.3]

[5. 3.6 1.4 0.2]

[4.8 3. 1.4 0.3]

[5.2 2.7 3.9 1.4]

[5.1 3.4 1.5 0.2]

[5.5 3.5 1.3 0.2]

[7.7 3.8 6.7 2.2]

[6.9 3.1 5.4 2.1]

[7.3 2.9 6.3 1.8]

[6.4 2.8 5.6 2.2]

[6.2 2.8 4.8 1.8]

[6. 3.4 4.5 1.6]

[7.7 2.8 6.7 2. ]

[5.7 3. 4.2 1.2]

[4.8 3.4 1.6 0.2]

[5.7 2.5 5. 2. ]

[6.3 2.7 4.9 1.8]

[4.8 3. 1.4 0.1]

[4.7 3.2 1.3 0.2]

[6.5 3. 5.8 2.2]

[4.6 3.4 1.4 0.3]

[6.1 3. 4.9 1.8]

[6.5 3.2 5.1 2. ]

[6.7 3.1 4.4 1.4]

[5.7 2.8 4.5 1.3]

[6.7 3.3 5.7 2.5]

[6. 3. 4.8 1.8]

[5.1 3.8 1.6 0.2]

[6. 2.2 4. 1. ]

[6.4 2.9 4.3 1.3]

[6.5 3. 5.5 1.8]

[5. 2.3 3.3 1. ]

[6.3 3.3 6. 2.5]

[5.5 2.5 4. 1.3]

[5.4 3.7 1.5 0.2]

[4.9 3.1 1.5 0.2]

[5.2 4.1 1.5 0.1]

[6.7 3.3 5.7 2.1]

[4.4 3. 1.3 0.2]

[6. 2.7 5.1 1.6]

[6.4 2.7 5.3 1.9]

[5.9 3. 5.1 1.8]

[5.2 3.5 1.5 0.2]

[5.1 3.3 1.7 0.5]

[5.8 2.7 4.1 1. ]

[4.9 3.1 1.5 0.1]

[7.4 2.8 6.1 1.9]

[6.2 2.9 4.3 1.3]

[7.6 3. 6.6 2.1]

[6.7 3. 5.2 2.3]

[6.3 2.3 4.4 1.3]

[6.2 3.4 5.4 2.3]

[7.2 3.6 6.1 2.5]

[5.6 2.9 3.6 1.3]

[5.7 4.4 1.5 0.4]

[5.8 2.7 3.9 1.2]

[4.5 2.3 1.3 0.3]

[5.5 2.4 3.8 1.1]

[6.9 3.1 4.9 1.5]

[5. 3.4 1.6 0.4]

[6.8 2.8 4.8 1.4]

[5. 3.5 1.6 0.6]

[4.8 3.4 1.9 0.2]

[6.3 3.4 5.6 2.4]

[5.6 2.8 4.9 2. ]

[6.8 3.2 5.9 2.3]

[5. 3.3 1.4 0.2]

[5.1 3.7 1.5 0.4]

[5.9 3.2 4.8 1.8]

[4.6 3.1 1.5 0.2]

[5.8 2.7 5.1 1.9]

[4.8 3.1 1.6 0.2]

[6.5 3. 5.2 2. ]

[4.9 2.5 4.5 1.7]

[4.6 3.2 1.4 0.2]

[6.4 3.2 5.3 2.3]

[4.3 3. 1.1 0.1]

[5.6 3. 4.1 1.3]

[4.4 2.9 1.4 0.2]

[5.5 2.4 3.7 1. ]

[5. 2. 3.5 1. ]

[5.1 3.5 1.4 0.2]

[4.9 3. 1.4 0.2]

[4.9 2.4 3.3 1. ]

[4.6 3.6 1. 0.2]

[5.9 3. 4.2 1.5]

[6.1 2.9 4.7 1.4]

[5. 3.4 1.5 0.2]

[6.7 3.1 4.7 1.5]

[5.7 2.9 4.2 1.3]

[6.2 2.2 4.5 1.5]

[7. 3.2 4.7 1.4]

[5.8 2.7 5.1 1.9]

[5.4 3.4 1.7 0.2]

[5. 3. 1.6 0.2]

[6.1 2.6 5.6 1.4]

[6.1 2.8 4. 1.3]

[7.2 3. 5.8 1.6]

[5.7 2.6 3.5 1. ]

[6.3 2.8 5.1 1.5]

[6.4 3.1 5.5 1.8]

[6.3 2.5 4.9 1.5]

[6.7 3.1 5.6 2.4]

[4.9 3.6 1.4 0.1]]

Training set targets:

[1 2 1 2 2 0 1 0 1 2 2 0 2 2 1 2 0 0 0 1 0 0 2 2 2 2 2 1 2 1 0 2 2 0 0 2 0

2 2 1 1 2 2 0 1 1 2 1 2 1 0 0 0 2 0 1 2 2 0 0 1 0 2 1 2 2 1 2 2 1 0 1 0 1

1 0 1 0 0 2 2 2 0 0 1 0 2 0 2 2 0 2 0 1 0 1 1 0 0 1 0 1 1 0 1 1 1 1 2 0 0

2 1 2 1 2 2 1 2 0]

Test set features:

[[5.8 4. 1.2 0.2]

[5.1 2.5 3. 1.1]

[6.6 3. 4.4 1.4]

[5.4 3.9 1.3 0.4]

[7.9 3.8 6.4 2. ]

[6.3 3.3 4.7 1.6]

[6.9 3.1 5.1 2.3]

[5.1 3.8 1.9 0.4]

[4.7 3.2 1.6 0.2]

[6.9 3.2 5.7 2.3]

[5.6 2.7 4.2 1.3]

[5.4 3.9 1.7 0.4]

[7.1 3. 5.9 2.1]

[6.4 3.2 4.5 1.5]

[6. 2.9 4.5 1.5]

[4.4 3.2 1.3 0.2]

[5.8 2.6 4. 1.2]

[5.6 3. 4.5 1.5]

[5.4 3.4 1.5 0.4]

[5. 3.2 1.2 0.2]

[5.5 2.6 4.4 1.2]

[5.4 3. 4.5 1.5]

[6.7 3. 5. 1.7]

[5. 3.5 1.3 0.3]

[7.2 3.2 6. 1.8]

[5.7 2.8 4.1 1.3]

[5.5 4.2 1.4 0.2]

[5.1 3.8 1.5 0.3]

[6.1 2.8 4.7 1.2]

[6.3 2.5 5. 1.9]]

Test set targets:

[0 1 1 0 2 1 2 0 0 2 1 0 2 1 1 0 1 1 0 0 1 1 1 0 2 1 0 0 1 2]

--------------------------

Training set features:

[[1.0000e+00 6.1000e+00 3.0000e+00 ... 2.9624e+01 9.0160e+00 2.7440e+00]

[1.0000e+00 7.7000e+00 3.0000e+00 ... 8.5583e+01 3.2269e+01 1.2167e+01]

[1.0000e+00 5.6000e+00 2.5000e+00 ... 1.6731e+01 4.7190e+00 1.3310e+00]

...

[1.0000e+00 6.3000e+00 2.5000e+00 ... 3.6015e+01 1.1025e+01 3.3750e+00]

[1.0000e+00 6.7000e+00 3.1000e+00 ... 7.5264e+01 3.2256e+01 1.3824e+01]

[1.0000e+00 4.9000e+00 3.6000e+00 ... 1.9600e-01 1.4000e-02 1.0000e-03]]

Training set targets:

[1 2 1 2 2 0 1 0 1 2 2 0 2 2 1 2 0 0 0 1 0 0 2 2 2 2 2 1 2 1 0 2 2 0 0 2 0

2 2 1 1 2 2 0 1 1 2 1 2 1 0 0 0 2 0 1 2 2 0 0 1 0 2 1 2 2 1 2 2 1 0 1 0 1

1 0 1 0 0 2 2 2 0 0 1 0 2 0 2 2 0 2 0 1 0 1 1 0 0 1 0 1 1 0 1 1 1 1 2 0 0

2 1 2 1 2 2 1 2 0]

Test set features:

[[1.0000e+00 5.8000e+00 4.0000e+00 ... 2.8800e-01 4.8000e-02 8.0000e-03]

[1.0000e+00 5.1000e+00 2.5000e+00 ... 9.9000e+00 3.6300e+00 1.3310e+00]

[1.0000e+00 6.6000e+00 3.0000e+00 ... 2.7104e+01 8.6240e+00 2.7440e+00]

...

[1.0000e+00 5.1000e+00 3.8000e+00 ... 6.7500e-01 1.3500e-01 2.7000e-02]

[1.0000e+00 6.1000e+00 2.8000e+00 ... 2.6508e+01 6.7680e+00 1.7280e+00]

[1.0000e+00 6.3000e+00 2.5000e+00 ... 4.7500e+01 1.8050e+01 6.8590e+00]]

Test set targets:

[0 1 1 0 2 1 2 0 0 2 1 0 2 1 1 0 1 1 0 0 1 1 1 0 2 1 0 0 1 2]

Model Training

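For the Iris dataset, we train the model with the k-nearest neighbors (KNN) algorithm, which is well suited to classification.

KNN API

- `sklearn.neighbors.KNeighborsClassifier(n_neighbors=5, algorithm='auto')`
  - n_neighbors: int, optional (default 5). Number of neighbors to use for kneighbors queries.
  - algorithm: the algorithm used to compute the nearest neighbors, one of {'auto', 'ball_tree', 'kd_tree', 'brute'}; default 'auto'
    - auto: picks the most appropriate of the three algorithms below, based on the data passed to fit
    - brute: brute-force search (a linear scan); very time-consuming when the training set is large
    - kd_tree: builds a k-d tree to store the data for fast retrieval. A k-d tree is a binary tree built by splitting at the median. It is efficient when there are fewer than about 20 dimensions.
    - ball_tree: designed to overcome the loss of efficiency of k-d trees in high dimensions. It partitions the sample space by a centroid C and a radius r, so each node is a hypersphere.
  - For more parameters, see https://scikit-learn.org/stable/modules/generated/sklearn.neighbors.KNeighborsClassifier.html?highlight=kneighborsclassifier#sklearn.neighbors.KNeighborsClassifier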
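To make the brute option concrete, here is a from-scratch sketch of brute-force KNN classification in NumPy. It computes the Euclidean distance to every training sample, takes the k closest, and holds a majority vote. `knn_predict` is a hypothetical helper written for illustration only. It is not scikit-learn's implementation, and it uses the original 4 features rather than the expanded ones.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split


def knn_predict(x_train, y_train, x_query, k=5):
    """Hypothetical brute-force k-NN: majority vote among the k closest
    training samples under Euclidean distance."""
    preds = []
    for x in x_query:
        # Euclidean distance from this query point to every training sample
        dists = np.sqrt(((x_train - x) ** 2).sum(axis=1))
        # Indices of the k nearest training samples
        nearest = np.argsort(dists)[:k]
        # Majority vote; bincount + argmax breaks ties toward the smaller label
        votes = np.bincount(y_train[nearest])
        preds.append(np.argmax(votes))
    return np.array(preds)


iris = load_iris()
x_train, x_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1)

y_pred = knn_predict(x_train, y_train, x_test, k=5)
print("accuracy:", np.mean(y_pred == y_test))
```

Each query scans the whole training set, which is why brute force becomes slow on large training sets and tree-based structures can help.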

```python
from sklearn.neighbors import KNeighborsClassifier

# Train a model on the Iris dataset
knn = KNeighborsClassifier(n_neighbors=5)
knn.fit(x_train, y_train)
```

KNeighborsClassifier(algorithm='auto', leaf_size=30, metric='minkowski',

metric_params=None, n_jobs=None, n_neighbors=5, p=2,

weights='uniform')

Model Prediction and Evaluation

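```python
# Compare the predictions with the true values
y_predict = knn.predict(x_test)
print("Predictions:\n", y_predict)
print("Ground truth:\n", y_test)
print("Predictions compared with ground truth:\n", y_predict == y_test)

# Compute the accuracy
score = knn.score(x_test, y_test)
print("Accuracy of the trained model on the test set:\n", score)
```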

Predictions:

[0 1 1 0 2 1 2 0 0 2 1 0 2 1 1 0 1 1 0 0 1 1 1 0 2 1 0 0 1 2]

Ground truth:

[0 1 1 0 2 1 2 0 0 2 1 0 2 1 1 0 1 1 0 0 1 1 1 0 2 1 0 0 1 2]

Predictions compared with ground truth:

[ True True True True True True True True True True True True

True True True True True True True True True True True True

True True True True True True]

Accuracy of the trained model on the test set:

1.0
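For a classifier, `knn.score` returns the mean accuracy, which is the fraction of test samples predicted correctly. The sketch below reuses the original-feature split with random_state=1. It checks that `score` agrees with `sklearn.metrics.accuracy_score` and adds a confusion matrix, which the original does not use, to show which classes get confused whenever accuracy falls below 1.0.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.metrics import accuracy_score, confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

iris = load_iris()
x_train, x_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1)

knn = KNeighborsClassifier(n_neighbors=5).fit(x_train, y_train)
y_pred = knn.predict(x_test)

# For classifiers, score() is the fraction of correct predictions
print(knn.score(x_test, y_test))
print(accuracy_score(y_test, y_pred))
print(np.mean(y_pred == y_test))

# Rows: true classes, columns: predicted classes
# (0 = setosa, 1 = versicolor, 2 = virginica)
cm = confusion_matrix(y_test, y_pred)
print(cm)
```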

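Cross-Validation

The training data is further divided into a training part and a validation part.

Take cv=5 as an example. The training data is split into 5 equal folds. In each round, one fold serves as the validation set and the other 4 are used for training.

This is repeated 5 times with a different fold used for validation each time, which gives 5 scores. Their mean is taken as the final result.

Note that only the training data is divided into folds; the test set is not used.

The purpose of cross-validation is to make the evaluation of a model more accurate and reliable, not to make the model's predictions more accurate.

- `sklearn.model_selection.cross_val_score(estimator, X, y=None, *, groups=None, scoring=None, cv=None, n_jobs=None, verbose=0, fit_params=None, pre_dispatch='2*n_jobs', error_score=nan)`
  - estimator: the model to evaluate
  - X: feature data
  - y: target data
  - cv: number of cross-validation folds
  - scoring: evaluation metric, e.g. 'accuracy' for classification accuracy
  - For more parameters, see https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.cross_val_score.html?highlight=cross_val_score#sklearn.model_selection.cross_val_score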

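```python
from sklearn.model_selection import cross_val_score

# For simplicity, the full feature-expanded dataset is passed here. To keep the
# test set out of cross-validation as described above, pass x_train, y_train instead.
scores = cross_val_score(knn, data_ploy, iris.target, cv=5, scoring='accuracy')
# Print the score of each fold
print(scores)
# Mean of the cross-validation scores
print(scores.mean())
```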

[0.96666667 0.96666667 0.9 0.96666667 1. ]

0.9600000000000002

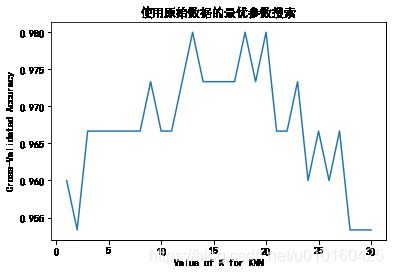

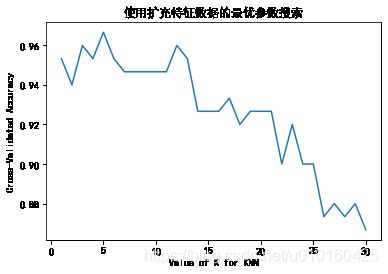
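The fold procedure described above can also be written out by hand. This sketch first holds out a test set. It then runs 5-fold cross-validation on the training part only, using an explicit `KFold` splitter, and checks that `cross_val_score` with the same splitter produces the same per-fold scores. The `shuffle` and `random_state` settings of `KFold` are assumptions for illustration. When `cv` is an integer and the estimator is a classifier, `cross_val_score` instead uses stratified folds without shuffling.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import KFold, cross_val_score, train_test_split
from sklearn.neighbors import KNeighborsClassifier

iris = load_iris()
# Hold out a test set first; cross-validation only sees the training part
x_train, x_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.2, random_state=1)

knn = KNeighborsClassifier(n_neighbors=5)
kf = KFold(n_splits=5, shuffle=True, random_state=0)

manual_scores = []
for train_idx, val_idx in kf.split(x_train):
    # Fit on 4 folds, then validate on the remaining fold
    knn.fit(x_train[train_idx], y_train[train_idx])
    manual_scores.append(knn.score(x_train[val_idx], y_train[val_idx]))
manual_scores = np.array(manual_scores)

# cross_val_score with the same splitter yields the same per-fold scores
auto_scores = cross_val_score(KNeighborsClassifier(n_neighbors=5),
                              x_train, y_train, cv=kf, scoring="accuracy")

print(manual_scores, manual_scores.mean())
print(auto_scores, auto_scores.mean())
```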

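Searching for the Optimal Parameters

The search works by iterating over candidate parameter values. For example, choose a range for KNN's n_neighbors parameter and run cross-validation for each value in that range. This gives a mean evaluation score for each value, and the value with the highest mean can be taken as the optimal parameter.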

```python
import matplotlib.pyplot as plt

def search_param(data_type):
    '''Search for the best n_neighbors. data_type == 1 uses the original data;
    any other value uses the feature-expanded data.'''
    k_range = range(1, 31)
    k_scores = []
    for k in k_range:
        knn = KNeighborsClassifier(n_neighbors=k)
        if data_type == 1:
            scores = cross_val_score(knn, iris.data, iris.target, cv=10, scoring='accuracy')
        else:
            scores = cross_val_score(knn, data_ploy, iris.target, cv=10, scoring='accuracy')
        k_scores.append(scores.mean())
    return k_range, k_scores

k_range, k_scores = search_param(1)
plt.plot(k_range, k_scores)
plt.title("Optimal parameter search on the original data")
plt.xlabel('Value of K for KNN')
plt.ylabel('Cross-Validated Accuracy')
plt.show()

k_range, k_scores = search_param(2)
plt.plot(k_range, k_scores)
plt.title("Optimal parameter search on the feature-expanded data")
plt.xlabel('Value of K for KNN')
plt.ylabel('Cross-Validated Accuracy')
plt.show()
```
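scikit-learn also packages this search loop as `GridSearchCV`. This sketch assumes the original 4 features and the same 10-fold accuracy scoring as `search_param(1)`. It repeats the manual loop only to show that both approaches arrive at the same best mean score.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

iris = load_iris()
k_range = list(range(1, 31))

# GridSearchCV runs cross-validation for every candidate in param_grid
grid = GridSearchCV(KNeighborsClassifier(),
                    param_grid={"n_neighbors": k_range},
                    cv=10, scoring="accuracy")
grid.fit(iris.data, iris.target)
print(grid.best_params_, grid.best_score_)

# Equivalent manual loop: mean 10-fold CV accuracy for each k
k_scores = [cross_val_score(KNeighborsClassifier(n_neighbors=k),
                            iris.data, iris.target, cv=10,
                            scoring="accuracy").mean()
            for k in k_range]
best_k = k_range[int(np.argmax(k_scores))]
print(best_k, max(k_scores))
```

When several k values tie for the best score, both approaches report the first (smallest) such k, so `best_params_` and `best_k` should normally coincide.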

Complete Code

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.preprocessing import PolynomialFeatures

# Load the Iris data
iris = load_iris()
x = iris.data
y = iris.target

# Expand the features
ploy = PolynomialFeatures(3)
x_ploy = ploy.fit_transform(x)
print('Feature-expanded data:\n', x_ploy)

# Split the dataset
# Split of the original data:
# x_train, x_test, y_train, y_test = train_test_split(x, y, random_state=4)
# Split of the feature-expanded data:
x_train, x_test, y_train, y_test = train_test_split(x_ploy, y, random_state=4)

# Train the model
knn = KNeighborsClassifier(n_neighbors=5)
knn.fit(x_train, y_train)
print("Model:\n", knn)

# Predict y_pred from x_test
y_pred = knn.predict(x_test)
print('Predicted targets:\n', y_pred)
print('True targets:\n', y_test)
print('Predicted vs. true targets:\n', y_test == y_pred)
print('Model score->', knn.score(x_test, y_test))

# Cross-validation on the original (non-expanded) features
scores = cross_val_score(knn, x, y, cv=5, scoring='accuracy')
print('Cross-validation scores', scores)
```

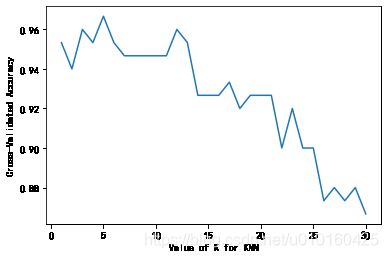

```python
import matplotlib.pyplot as plt

k_range = range(1, 31)
k_scores = []
for k in k_range:
    knn = KNeighborsClassifier(n_neighbors=k)
    # Cross-validation on the original, non-expanded data:
    # scores = cross_val_score(knn, x, y, cv=10, scoring='accuracy')
    # Cross-validation on the feature-expanded data:
    scores = cross_val_score(knn, x_ploy, y, cv=10, scoring='accuracy')
    k_scores.append(scores.mean())

plt.plot(k_range, k_scores)
plt.xlabel('Value of K for KNN')
plt.ylabel('Cross-Validated Accuracy')
plt.show()
```

Feature-expanded data:

[[1.0000e+00 5.1000e+00 3.5000e+00 ... 3.9200e-01 5.6000e-02 8.0000e-03]

[1.0000e+00 4.9000e+00 3.0000e+00 ... 3.9200e-01 5.6000e-02 8.0000e-03]

[1.0000e+00 4.7000e+00 3.2000e+00 ... 3.3800e-01 5.2000e-02 8.0000e-03]

...

[1.0000e+00 6.5000e+00 3.0000e+00 ... 5.4080e+01 2.0800e+01 8.0000e+00]

[1.0000e+00 6.2000e+00 3.4000e+00 ... 6.7068e+01 2.8566e+01 1.2167e+01]

[1.0000e+00 5.9000e+00 3.0000e+00 ... 4.6818e+01 1.6524e+01 5.8320e+00]]

Model:

KNeighborsClassifier(algorithm='auto', leaf_size=30, metric='minkowski',

metric_params=None, n_jobs=None, n_neighbors=5, p=2,

weights='uniform')

Predicted targets:

[2 0 2 2 2 1 2 0 0 2 0 0 0 2 2 0 1 0 0 2 0 2 1 0 0 0 0 0 0 2 1 0 2 0 1 2 2

1]

True targets:

[2 0 2 2 2 1 1 0 0 2 0 0 0 1 2 0 1 0 0 2 0 2 1 0 0 0 0 0 0 2 1 0 2 0 1 2 2

1]

Predicted vs. true targets:

[ True True True True True True False True True True True True

True False True True True True True True True True True True

True True True True True True True True True True True True

True True]

Model score-> 0.9473684210526315

Cross-validation scores [0.96666667 1. 0.93333333 0.96666667 1. ]