Lecture 8: Visual Odometry 2

8.3 Practice: LK Optical Flow

(1) Environment

opencv2

(2) Code walkthrough

CMakeLists.txt

cmake_minimum_required( VERSION 2.8 )

project( useLK )

set( CMAKE_BUILD_TYPE Release )

set( CMAKE_CXX_FLAGS "-std=c++11 -O3" )

find_package( OpenCV )

include_directories( ${OpenCV_INCLUDE_DIRS} )

add_executable( useLK useLK.cpp )

target_link_libraries( useLK ${OpenCV_LIBS} )

useLK.cpp

#include <iostream>

// ...

// cv::Ptr<cv::FastFeatureDetector> detector = cv::FastFeatureDetector::create(); // OpenCV 3 uses this

cv::Ptr<cv::FeatureDetector> detector = cv::FeatureDetector::create ("ORB"); // OpenCV 2 uses this

detector->detect(colorImage, kps);

for (auto kp : kps) {

keypoints.push_back(kp.pt);

}

last_colorImage = colorImage;

continue;

}

if ( colorImage.data==nullptr || depthImage.data==nullptr ){

continue;

}

// track the feature points in the remaining frames with LK

vector<cv::Point2f> next_keypoints;

vector<cv::Point2f> prev_keypoints;

for (auto kp : keypoints) {

prev_keypoints.push_back(kp);

}

vector<unsigned char> status;

vector<float> error;

// start time

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

cv::calcOpticalFlowPyrLK(last_colorImage, colorImage, prev_keypoints, next_keypoints, status, error);

// end time of the optical flow

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>( t2-t1 );

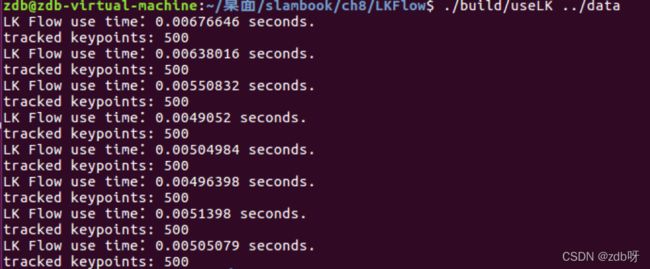

cout << "LK Flow use time:" << time_used.count() << " seconds." << endl;

// remove the points that were lost during tracking

int i=0;

for (auto iter=keypoints.begin(); iter!=keypoints.end(); i++) {

if (status[i] == 0){

iter = keypoints.erase(iter);

continue;

}

*iter = next_keypoints[i];

iter++;

}

cout << "tracked keypoints: " << keypoints.size() << endl;

// stop if every point has been lost

if (keypoints.size() == 0) {

cout << "all keypoints are lost." << endl;

break;

}

// draw the keypoints

cv::Mat img_show = colorImage.clone();

for (auto kp : keypoints) {

cv::circle(img_show, kp, 12, cv::Scalar(0, 240, 0), 1);

}

cv::imshow("corners", img_show);

cv::waitKey(0);

last_colorImage = colorImage;

}

return 0;

}
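cv::calcOpticalFlowPyrLK hides the actual Lucas-Kanade iteration. As a rough, dependency-free illustration, the sketch below tracks a single point on one image level with Gauss-Newton, minimising the sum of squared intensity differences over a small window. The Image struct, the trackPointLK name, the window half-size and the iteration count are hypothetical choices; OpenCV's routine additionally works coarse-to-fine on an image pyramid and uses its own termination criteria.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical minimal grayscale image, stored row-major as floats.
struct Image {
    int w, h;
    std::vector<float> data;
    float at(int x, int y) const { return data[y * w + x]; }
    // Bilinear lookup at a sub-pixel position.
    float sample(float x, float y) const {
        int x0 = (int)std::floor(x), y0 = (int)std::floor(y);
        assert(x0 >= 0 && y0 >= 0 && x0 + 1 < w && y0 + 1 < h);
        float ax = x - x0, ay = y - y0;
        return (1 - ax) * (1 - ay) * at(x0, y0) + ax * (1 - ay) * at(x0 + 1, y0) +
               (1 - ax) * ay * at(x0, y0 + 1) + ax * ay * at(x0 + 1, y0 + 1);
    }
};

// Single-level LK for one point: estimate (dx, dy) minimising
//   sum_window ( I2(x+u+dx, y+v+dy) - I1(x+u, y+v) )^2
// by Gauss-Newton. Returns false if the window leaves the image or is textureless.
bool trackPointLK(const Image& I1, const Image& I2, float x, float y,
                  float& dx, float& dy, int half = 4, int iters = 20) {
    dx = dy = 0;
    if (x - half < 0 || y - half < 0 || x + half > I1.w - 2 || y + half > I1.h - 2)
        return false;
    for (int it = 0; it < iters; ++it) {
        double H00 = 0, H01 = 0, H11 = 0, b0 = 0, b1 = 0;
        for (int v = -half; v <= half; ++v)
            for (int u = -half; u <= half; ++u) {
                float px = x + u + dx, py = y + v + dy;
                // leave room for the central differences below
                if (px < 1 || py < 1 || px > I2.w - 3 || py > I2.h - 3) return false;
                double err = I2.sample(px, py) - I1.sample(x + u, y + v);
                double gx = 0.5 * (I2.sample(px + 1, py) - I2.sample(px - 1, py));
                double gy = 0.5 * (I2.sample(px, py + 1) - I2.sample(px, py - 1));
                H00 += gx * gx; H01 += gx * gy; H11 += gy * gy;  // H = J^T J
                b0 -= gx * err; b1 -= gy * err;                  // b = -J^T e
            }
        double det = H00 * H11 - H01 * H01;
        if (det < 1e-9) return false;  // no texture in the window
        // Solve the 2x2 normal equations H * delta = b in closed form.
        double ddx = (H11 * b0 - H01 * b1) / det;
        double ddy = (H00 * b1 - H01 * b0) / det;
        dx += (float)ddx; dy += (float)ddy;
        if (ddx * ddx + ddy * ddy < 1e-8) break;  // converged
    }
    return true;
}
```

Given a second image that is the first shifted by a known sub-pixel offset, the returned (dx, dy) should come out close to that offset; a false return plays roughly the role of status[i] == 0 in the listing above.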

(3) Build

Remember to extract the data archive first.

mkdir build

cd build

cmake ..

make

cd ..

./build/useLK ../data

8.5 Practice: Direct Method on RGB-D Data

8.5.1 Sparse Direct Method

(1) Code walkthrough

CMakeLists.txt

cmake_minimum_required( VERSION 2.8 )

project( directMethod )

set( CMAKE_BUILD_TYPE Release )

set( CMAKE_CXX_FLAGS "-std=c++11 -O3" )

# add the cmake_modules directory to the CMake module search path

list( APPEND CMAKE_MODULE_PATH ${PROJECT_SOURCE_DIR}/cmake_modules )

find_package( OpenCV )

include_directories( ${OpenCV_INCLUDE_DIRS} )

find_package( G2O )

include_directories( ${G2O_INCLUDE_DIRS} )

include_directories( "/usr/include/eigen3" )

set( G2O_LIBS

g2o_core g2o_types_sba g2o_solver_csparse g2o_stuff g2o_csparse_extension

)

add_executable( direct_sparse direct_sparse.cpp )

target_link_libraries( direct_sparse ${OpenCV_LIBS} ${G2O_LIBS} )

#add_executable( direct_semidense direct_semidense.cpp )

#target_link_libraries( direct_semidense ${OpenCV_LIBS} ${G2O_LIBS} )

direct_sparse.cpp

#include <iostream>

// ...

// cv::Ptr<cv::FastFeatureDetector> detector = cv::FastFeatureDetector::create(); // OpenCV 3 uses this

cv::Ptr<cv::FeatureDetector> detector = cv::FeatureDetector::create ("ORB"); // OpenCV 2 uses this

detector->detect (color, keypoints);

for (auto kp : keypoints) {

// skip points close to the image border

if (kp.pt.x<20 || kp.pt.y<20 || (kp.pt.x+20)>color.cols || (kp.pt.y+20)>color.rows) continue;

ushort d = depth.ptr<ushort> (cvRound(kp.pt.y)) [cvRound (kp.pt.x)];

if (d == 0) continue;

Eigen::Vector3d p3d = project2Dto3D ( kp.pt.x, kp.pt.y, d, fx, fy, cx, cy, depth_scale );

float grayscale = float ( gray.ptr<uchar> ( cvRound ( kp.pt.y ) ) [ cvRound ( kp.pt.x ) ] );

measurements.push_back(Measurement(p3d, grayscale));

}

prev_color = color.clone();

continue;

}

// estimate the camera motion with the direct method

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

poseEstimationDirect (measurements, &gray, K, Tcw); // direct method

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>> (t2-t1);

cout<<"direct method costs time: "<<time_used.count() <<" seconds."<<endl;

cout << "Tcw=" << Tcw.matrix() << endl;

// plot the feature points

cv::Mat img_show (color.rows*2, color.cols, CV_8UC3);

prev_color.copyTo(img_show(cv::Rect (0, 0, color.cols, color.rows)));

color.copyTo(img_show(cv::Rect(0, color.rows, color.cols, color.rows)));

for (Measurement m : measurements) {

if ( rand() > RAND_MAX/5 )

continue;

Eigen::Vector3d p = m.pos_world;

Eigen::Vector2d pixel_prev = project3Dto2D (p(0,0), p(1,0), p(2,0), fx, fy, cx, cy);

Eigen::Vector3d p2 = Tcw * m.pos_world;

Eigen::Vector2d pixel_now = project3Dto2D (p2(0,0), p2(1,0), p2(2,0), fx, fy, cx, cy);

if (pixel_now(0,0)<0 || pixel_now(0,0)>=color.cols ||

pixel_now(1,0)<0 || pixel_now(1,0)>=color.rows) continue;

float b = 255 * float(rand()) / RAND_MAX;

float g = 255 * float(rand()) / RAND_MAX;

float r = 255 * float(rand()) / RAND_MAX;

cv::circle (img_show, cv::Point2d(pixel_prev(0,0), pixel_prev(1,0)), 8, cv::Scalar(b,g,r), 2);

cv::circle(img_show, cv::Point2d(pixel_now(0,0), pixel_now(1,0) +color.rows), 8, cv::Scalar(b,g,r), 2);

cv::line (img_show, cv::Point2d(pixel_prev(0,0), pixel_prev(1,0)), cv::Point2d(pixel_now(0,0), pixel_now(1,0)+color.rows), cv::Scalar (b,g,r), 1);

}

cv::imshow ( "result", img_show );

cv::waitKey ( 0 );

}

return 0;

}

// pose estimation with the direct method

bool poseEstimationDirect ( const vector< Measurement >& measurements, cv::Mat* gray, Eigen::Matrix3f& K, Eigen::Isometry3d& Tcw ) {

// set up g2o

typedef g2o::BlockSolver<g2o::BlockSolverTraits<6,1>> DirectBlock; // the pose being solved for is a 6x1 vector

DirectBlock::LinearSolverType* linearSolver = new g2o::LinearSolverDense< DirectBlock::PoseMatrixType > ();

DirectBlock* solver_ptr = new DirectBlock ( linearSolver );

// g2o::OptimizationAlgorithmGaussNewton* solver = new g2o::OptimizationAlgorithmGaussNewton( solver_ptr ); // G-N: Gauss-Newton

g2o::OptimizationAlgorithmLevenberg* solver = new g2o::OptimizationAlgorithmLevenberg ( solver_ptr ); // L-M

g2o::SparseOptimizer optimizer;

optimizer.setAlgorithm ( solver );

optimizer.setVerbose( true );

g2o::VertexSE3Expmap* pose = new g2o::VertexSE3Expmap();

pose->setEstimate ( g2o::SE3Quat ( Tcw.rotation(), Tcw.translation() ) );

pose->setId ( 0 );

optimizer.addVertex ( pose );

// 添加边

int id = 1;

for (Measurement m : measurements) {

EdgeSE3ProjectDirect* edge = new EdgeSE3ProjectDirect (

m.pos_world, K(0,0), K(1,1), K(0,2), K(1,2), gray);

edge->setVertex ( 0, pose );

edge->setMeasurement ( m.grayscale );

edge->setInformation ( Eigen::Matrix<double,1,1>::Identity() );

edge->setId ( id++ );

optimizer.addEdge ( edge );

}

cout << "edges in graph: " << optimizer.edges().size() << endl;

optimizer.initializeOptimization();

optimizer.optimize ( 30 );

Tcw = pose->estimate();

return true;

}
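main() calls project2Dto3D and project3Dto2D, whose definitions are not part of this excerpt. Assuming the usual pinhole model without lens distortion, and assuming that dividing the raw 16-bit depth value by depth_scale yields metres, the two helpers can be sketched as below; std::array stands in for the Eigen vector types so the snippet has no dependencies.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Vec2 = std::array<double, 2>;

// Back-project pixel (x, y) with raw depth d into the camera frame.
// Assumption: raw depth / depth_scale = depth in metres.
inline Vec3 project2Dto3D(double x, double y, int d, double fx, double fy,
                          double cx, double cy, double depth_scale) {
    double zz = double(d) / depth_scale;
    return {{zz * (x - cx) / fx, zz * (y - cy) / fy, zz}};
}

// Project a camera-frame point onto the image plane (pinhole, no distortion).
inline Vec2 project3Dto2D(double x, double y, double z, double fx, double fy,
                          double cx, double cy) {
    assert(z > 0);  // the point must lie in front of the camera
    return {{fx * x / z + cx, fy * y / z + cy}};
}
```

Projecting a back-projected pixel with the same intrinsics should return the original pixel, which is exactly what the drawing code relies on when it maps m.pos_world back into the first frame.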

(2) Build

mkdir build

cd build

cmake ..

make

cd ..

./build/direct_sparse ../data
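In poseEstimationDirect every measurement becomes a unary edge carrying a 1-dimensional photometric error on a single 6-DOF pose vertex. The EdgeSE3ProjectDirect class is not shown in this excerpt; the sketch below only illustrates the chain rule such an edge needs for its 1x6 Jacobian, assuming an error of the form e = I2(u(q)) - I1(p), where q is the point transformed into the current camera frame: the image gradient at u times the 2x6 derivative of the pinhole projection with respect to a left pose perturbation. The function names, the error sign and the translation-first ordering of the perturbation are assumptions of this sketch; the column order in particular has to match the update convention of whatever pose vertex it is used with.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat26 = std::array<std::array<double, 6>, 2>;

// Pinhole projection of a camera-frame point q = (X, Y, Z).
inline void project(const double q[3], double fx, double fy, double cx, double cy,
                    double& u, double& v) {
    u = fx * q[0] / q[2] + cx;
    v = fy * q[1] / q[2] + cy;
}

// d(u, v)/d(delta) for a left perturbation delta = (rho, phi), translation first:
// d(u, v)/dq * [ I | -q^ ], evaluated at the transformed point q.
inline Mat26 projectionJacobian(const double q[3], double fx, double fy) {
    double X = q[0], Y = q[1], iz = 1.0 / q[2], iz2 = iz * iz;
    Mat26 J{};
    J[0] = {{fx * iz, 0.0, -fx * X * iz2, -fx * X * Y * iz2, fx + fx * X * X * iz2, -fx * Y * iz}};
    J[1] = {{0.0, fy * iz, -fy * Y * iz2, -fy - fy * Y * Y * iz2, fy * X * Y * iz2, fy * X * iz}};
    return J;
}

// 1x6 Jacobian of e = I2(u(q)) - I1(p): image gradient (gx, gy) of I2 at (u, v)
// multiplied by the projection Jacobian.
inline std::array<double, 6> photometricJacobian(const double q[3], double fx, double fy,
                                                 double gx, double gy) {
    Mat26 Juv = projectionJacobian(q, fx, fy);
    std::array<double, 6> J{};
    for (int k = 0; k < 6; ++k) J[k] = gx * Juv[0][k] + gy * Juv[1][k];
    return J;
}
```

Comparing such a hand-written Jacobian against finite differences of a small perturbation of q is a cheap way to validate it before handing it to the optimizer.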

8.5.4 Semi-Dense Direct Method

(1) Code walkthrough

CMakeLists.txt

cmake_minimum_required( VERSION 2.8 )

project( directMethod )

set( CMAKE_BUILD_TYPE Release )

set( CMAKE_CXX_FLAGS "-std=c++11 -O3" )

# add the cmake_modules directory to the CMake module search path

list( APPEND CMAKE_MODULE_PATH ${PROJECT_SOURCE_DIR}/cmake_modules )

find_package( OpenCV )

include_directories( ${OpenCV_INCLUDE_DIRS} )

find_package( G2O )

include_directories( ${G2O_INCLUDE_DIRS} )

include_directories( "/usr/include/eigen3" )

set( G2O_LIBS

g2o_core g2o_types_sba g2o_solver_csparse g2o_stuff g2o_csparse_extension

)

#add_executable( direct_sparse direct_sparse.cpp )

#target_link_libraries( direct_sparse ${OpenCV_LIBS} ${G2O_LIBS} )

add_executable( direct_semidense direct_semidense.cpp )

target_link_libraries( direct_semidense ${OpenCV_LIBS} ${G2O_LIBS} )

direct_semidense.cpp

#include <iostream>

// ...

(2) Build

mkdir build

cd build

cmake ..

make

cd ..

./build/direct_semidense ../data
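The semi-dense variant differs from the sparse one mainly in which pixels become measurements: instead of detected keypoints, it keeps pixels with a clearly visible image gradient (pixels in flat regions contribute almost nothing to the photometric Jacobian). The code for this step is not part of this excerpt; a sketch on plain row-major buffers is given below, where the function name, the unnormalised central difference, the border width and the threshold are all assumptions.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Hypothetical helper: return (x, y) of pixels whose central-difference
// gradient magnitude (not halved) reaches `threshold` and whose raw depth
// is non-zero. `border` keeps the selection away from the image edge.
std::vector<std::pair<int, int>> selectHighGradientPixels(
    const std::vector<std::uint8_t>& gray, const std::vector<std::uint16_t>& depth,
    int w, int h, double threshold, int border = 10) {
    assert(border >= 1);
    assert(gray.size() == std::size_t(w) * std::size_t(h) && depth.size() == gray.size());
    std::vector<std::pair<int, int>> selected;
    for (int y = border; y < h - border; ++y)
        for (int x = border; x < w - border; ++x) {
            double gx = double(gray[y * w + x + 1]) - double(gray[y * w + x - 1]);
            double gy = double(gray[(y + 1) * w + x]) - double(gray[(y - 1) * w + x]);
            if (std::sqrt(gx * gx + gy * gy) < threshold) continue;  // flat area
            if (depth[y * w + x] == 0) continue;                     // no depth reading
            selected.emplace_back(x, y);
        }
    return selected;
}
```

Each selected pixel would then be back-projected and stored as a Measurement exactly like a keypoint in the sparse version, so the optimization code can stay unchanged; only the number of edges grows.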