Docker Networking (Native and User-Defined Networks)

This article covers network communication between multiple containers on a single host, and between containers on different hosts.

Docker native networks

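Remove unused networks. prune is available for most Docker object types (containers, images, volumes, networks), as well as docker system prune.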

[root@docker1 harbor]# docker network prune

Are you sure you want to continue? [y/N] y

Docker ships with three native networks: bridge, host, and none (driver null). Containers use bridge by default.

[root@docker1 harbor]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8db80f123dd8 bridge bridge local

b51b022f8430 host host local

c386dbae12f0 none null local

How containers communicate

Bridge mode

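docker0 is the gateway for every container on this host's default bridge. By default, addresses are handed out in increasing order: the first container gets 172.17.0.2, the second 172.17.0.3, and so on.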

When a container stops, its IP is released and can be assigned to another container, so a container's IP is dynamic and may change.

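First install bridge-utils, a tool for managing bridge interfaces. If an interface drops off the bridge, it can be re-attached with this tool.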

[root@docker1 harbor]# yum install bridge-utils.x86_64 -y

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242b9a15b22 no

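veth7c468c6 is the host end of a container's virtual NIC pair (veth). Once the container is bridged, one end sits in the container's network namespace and the other is attached to docker0.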

[root@docker1 harbor]# docker run -d --name nginx nginx

[root@docker1 harbor]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

f28a0d105547 nginx "/docker-entrypoint.…" 3 seconds ago Up 2 seconds 80/tcp nginx

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242b9a15b22 no veth7c468c6

In veth7c468c6@if39, the host-side veth has interface index 40 and its peer inside the container has index 39: two ends of one virtual cable.

[root@docker1 harbor]# ip addr show

40: veth7c468c6@if39: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether aa:23:2b:fe:e9:94 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::a823:2bff:fefe:e994/64 scope link

valid_lft forever preferred_lft forever

[root@docker1 harbor]# docker run -d --name nginx2 nginx

f640de1d58fe3628540618fc03aa8f4fe4259c55fec6d22eb41b741b94b6ae24

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242b9a15b22 no veth5af9c0d

veth7c468c6

IP addresses are assigned in increasing order.

[root@docker1 harbor]# ip addr show docker0

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

So the two containers answer on 172.17.0.2 and 172.17.0.3:

[root@docker1 harbor]# curl 172.17.0.2

<title>Welcome to nginx!</title>

[root@docker1 harbor]# curl 172.17.0.3

<h1>Welcome to nginx!</h1>
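Since the addresses are dynamic, it may be more robust to look them up than to guess them. The following is a minimal sketch, assuming the container names used above; `container_ip` is a hypothetical helper name, not part of Docker.

```bash
# Print the IP address of a container on each network it is attached to,
# one address per line.
# Usage: container_ip <container-name>
container_ip() {
  docker inspect -f '{{range .NetworkSettings.Networks}}{{println .IPAddress}}{{end}}' "$1"
}

# Example (requires a running Docker daemon and the nginx container from above):
#   curl "$(container_ip nginx)"
```

For a container attached to a single network, the command substitution yields exactly one address.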

Containers reach the outside world through NAT: address masquerading (MASQUERADE) is enabled.

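Containers attach to docker0 through veth pairs, and docker0 sends outbound traffic via eth0. This requires kernel IP forwarding to be enabled (net.ipv4.ip_forward = 1).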

[root@docker1 harbor]# iptables -t nat -nL

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 172.17.0.0/16 0.0.0.0/0
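The forwarding prerequisite mentioned above can be checked from the shell. This is a small sketch; `ip_forward_enabled` is a hypothetical helper, and its optional path argument exists only so the function can be exercised without touching the real kernel setting.

```bash
# Succeeds (exit 0) when IPv4 forwarding is enabled.
# By default it reads the kernel's live setting from /proc.
ip_forward_enabled() {
  local file=${1:-/proc/sys/net/ipv4/ip_forward}
  [ "$(cat "$file")" = "1" ]
}

# Example:
#   ip_forward_enabled && echo "forwarding on" || echo "forwarding off"
#   sysctl -w net.ipv4.ip_forward=1   # enable it (as root) if it is off
```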

Host mode: sharing the host's network stack

Bridge is the default, so host mode has to be requested with the --network option.

[root@docker1 harbor]# docker run -d --name demo --network host nginx

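brctl still shows only one veth (the one belonging to the nginx container), because host mode creates no virtual NIC. The container uses the host's network stack directly, so it is reachable from outside on the host's own IP. The catch is port conflicts, both between host-mode containers and between a container and the host.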

[root@docker1 harbor]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

1b9e64aaa49a nginx "/docker-entrypoint.…" About a minute ago Up About a minute demo

f28a0d105547 nginx "/docker-entrypoint.…" 14 minutes ago Up 14 minutes 80/tcp nginx

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242b9a15b22 no veth7c468c6

In host mode, nginx answers on the host's address:

[root@docker1 harbor]# curl 172.25.254.1

<title>Welcome to nginx!</title>

A second host-mode nginx fails to start because of the port conflict:

[root@docker1 harbor]# docker run -d --name demo2 --network host nginx

[root@docker1 harbor]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

1b9e64aaa49a nginx "/docker-entrypoint.…" 9 minutes ago Up 9 minutes demo

f28a0d105547 nginx "/docker-entrypoint.…" 21 minutes ago Up 21 minutes 80/tcp nginx

[root@docker1 harbor]# docker logs demo2

nginx: [emerg] bind() to [::]:80 failed (98: Address already in use)

Host mode connects directly and needs no NAT, but ports must not conflict.

none mode

Networking is disabled: the container gets no IP address, only the loopback interface.

[root@docker1 harbor]# docker run -d --name demo2 --network none nginx

[root@docker1 harbor]# docker run -it --rm --network none busybox

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

Docker user-defined networks

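Besides bridge, the drivers for user-defined networks include overlay and macvlan. overlay is common at internet companies; macvlan, which solves cross-host communication at the underlying hardware level, is common at the three major telecom carriers.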

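A user-defined bridge lets you pin a container to a fixed IP. On the default bridge an IP is assigned automatically, and after a stop and restart the container may come back with a different one.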

A user-defined bridge also lets you choose the subnet and the gateway address.

Create a user-defined bridge. The default driver for a user-defined network is bridge.

[root@docker1 harbor]# docker network --help

[root@docker1 harbor]# docker network create my_net1

[root@docker1 harbor]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8db80f123dd8 bridge bridge local

b51b022f8430 host host local

18fb73b3c2f5 my_net1 bridge local

c386dbae12f0 none null local

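Harbor also runs on a network it creates for itself. A user-defined bridge automatically resolves container names to IPs through DNS, so when containers use bridge networking, a user-defined bridge is recommended.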

Without a network option, a container is attached to the native bridge.

[root@docker1 harbor]# docker run -d --name web1 nginx

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

br-18fb73b3c2f5 8000.024231c9d512 no

docker0 8000.0242b9a15b22 no veth2e47b03

[root@docker1 harbor]# docker run -d --name web2 --network my_net1 nginx

[root@docker1 harbor]# brctl show

bridge name bridge id STP enabled interfaces

br-18fb73b3c2f5 8000.024231c9d512 no veth231ba38

docker0 8000.0242b9a15b22 no veth2e47b03

This container lands on the native bridge by default, which provides no name resolution.

[root@docker1 harbor]# docker run -it --rm busybox

/ # ping web1

ping: bad address 'web1'

/ # ping 172.17.0.2

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.059 ms

/ # ping web2

ping: bad address 'web2'

What a user-defined bridge adds

Containers communicate by name through DNS resolution.

[root@docker1 harbor]# docker run -it --rm --network my_net1 busybox

/ # ping web2

64 bytes from 172.18.0.2: seq=0 ttl=64 time=0.120 ms

/ # ping web1

ping: bad address 'web1'

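web1 cannot be pinged: it is on docker0, whose subnet is different from the user-defined network's, so its name does not resolve from my_net1.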

[root@docker1 harbor]# ip addr show

43: br-18fb73b3c2f5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:31:c9:d5:12 brd ff:ff:ff:ff:ff:ff

inet 172.18.0.1/16 brd 172.18.255.255 scope global br-18fb73b3c2f5

valid_lft forever preferred_lft forever

inet6 fe80::42:31ff:fec9:d512/64 scope link

valid_lft forever preferred_lft forever

5: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

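Container IPs are dynamic, so communicating by IP is unreliable. Use container names instead. In the run below, web2 is stopped, web3 takes its old address, and web2 comes back on 172.18.0.3, yet the name still resolves.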

[root@docker1 harbor]# docker stop web2

[root@docker1 harbor]# docker run -d --name web3 --network my_net1 nginx

[root@docker1 harbor]# docker start web2

[root@docker1 harbor]# docker run -it --rm --network my_net1 busybox

/ # ping web2

64 bytes from 172.18.0.3: seq=0 ttl=64 time=0.051 ms

[root@docker1 harbor]# docker network create --help

[root@docker1 harbor]# docker network create --subnet 172.20.0.0/24 --gateway 172.20.0.1 my_net2

3043c2a41e35d3a32a7a649fcaa3b4b0b2d3700121751b9a63415b6d31308bae

[root@docker1 harbor]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8db80f123dd8 bridge bridge local

b51b022f8430 host host local

18fb73b3c2f5 my_net1 bridge local

3043c2a41e35 my_net2 bridge local

c386dbae12f0 none null local

[root@docker1 harbor]# ip addr show    # a bridge interface has been created

58: br-3043c2a41e35: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:4f:90:95:90 brd ff:ff:ff:ff:ff:ff

inet 172.20.0.1/24 brd 172.20.0.255 scope global br-3043c2a41e35

valid_lft forever preferred_lft forever

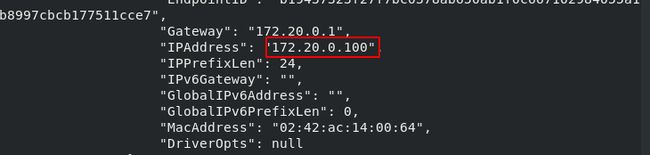

[root@docker1 harbor]# docker run -d --name web4 --network my_net2 --ip 172.20.0.100 nginx

[root@docker1 harbor]# docker inspect web4

[root@docker1 harbor]# docker stop web4

[root@docker1 harbor]# docker start web4

[root@docker1 harbor]# docker inspect web4

--ip can only be used on a network whose subnet was specified by the user.

my_net1 was created without a user-specified subnet, so --ip is rejected:

[root@docker1 harbor]# docker run -d --name web5 --network my_net1 --ip 172.18.0.100 nginx

docker: Error response from daemon: user specified IP address is supported only when connecting to networks with user configured subnets.
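Before passing an address to --ip, it can help to confirm that it falls inside the subnet given to docker network create. The sketch below does that check in plain bash arithmetic; `ip_to_int` and `ip_in_subnet` are hypothetical helper names, and only IPv4 in dotted-quad form is handled.

```bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# Succeeds (exit 0) when address $1 lies inside CIDR block $2.
ip_in_subnet() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Example, using the my_net2 subnet from above:
#   ip_in_subnet 172.20.0.100 172.20.0.0/24 && echo "address fits my_net2"
```

This only checks the arithmetic; Docker itself still decides whether the address is free and whether the network accepts a static IP.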

For security, Docker isolates different networks from one another. The rules in its isolation chain are all DROP.

To let containers on different bridges communicate, do not edit these rules, since that would break the isolation.

[root@docker1 ~]# iptables -L

Chain DOCKER-ISOLATION-STAGE-2 (3 references)

target prot opt source destination

DROP all -- anywhere anywhere

DROP all -- anywhere anywhere

DROP all -- anywhere anywhere

RETURN all -- anywhere anywhere

web3 is on my_net1 and web4 is on my_net2. To let them communicate:

Attach web4 to my_net1, which gives web4 a second NIC on that network.

[root@docker1 ~]# docker network connect my_net1 web4

[root@docker1 ~]# docker inspect web3

Docker container communication

Joined containers:

A joined container shares another container's network stack, so the two containers can talk to each other quickly over localhost.

For example, in an LNMP stack, nginx, MySQL, and PHP can run in separate containers and still reach each other on localhost, each on its own port: MySQL on 3306, PHP on 9000, nginx on 80.

web3 is on my_net1:

[root@docker1 ~]# docker inspect web3

"Gateway": "172.18.0.1",

Share web3's network stack, and with it web3's IP address:

[root@docker1 ~]# docker pull radial/busyboxplus

[root@docker1 ~]# docker tag radial/busyboxplus:latest busyboxplus:latest

[root@docker1 ~]# docker run -it --rm --network container:web3 busyboxplus

/ # ip addr

inet 172.18.0.3/16 brd 172.18.255.255 scope global eth0

/ # curl localhost

<title>Welcome to nginx!</title>

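--link: the default network has no name resolution, and --link adds it. When the linked container restarts and its IP changes, the resolution is updated automatically.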

The format is container-name:alias. Both the container name and the alias can be pinged.

This is inferior to simply using the DNS built into a user-defined bridge.

[root@docker1 ~]# docker run -it --rm --link web3:nginx --network my_net1 busyboxplus

/ # ip addr

inet 172.18.0.4/16 brd 172.18.255.255 scope global eth0

/ # ping web3

64 bytes from 172.18.0.3: seq=0 ttl=64 time=0.057 ms

/ # env

HOSTNAME=ca85afe9651d

SHLVL=1

HOME=/

TERM=xterm

PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin

PWD=/

web1 is attached to docker0. With --link on the default network, both environment variables and a hosts entry are added:

[root@docker1 ~]# docker inspect web1

"IPAddress": "172.17.0.2",

[root@docker1 ~]# docker run -it --rm --link web1:nginx busyboxplus

/ # ping web1

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.060 ms

/ # env

HOSTNAME=01ba3b877905

SHLVL=1

HOME=/

NGINX_ENV_PKG_RELEASE=1~bullseye

NGINX_PORT_80_TCP=tcp://172.17.0.2:80

NGINX_ENV_NGINX_VERSION=1.21.5

NGINX_ENV_NJS_VERSION=0.7.1

TERM=xterm

PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin

NGINX_PORT=tcp://172.17.0.2:80

NGINX_NAME=/sad_benz/nginx

PWD=/

NGINX_PORT_80_TCP_ADDR=172.17.0.2

NGINX_PORT_80_TCP_PORT=80

NGINX_PORT_80_TCP_PROTO=tcp

/ # ping nginx

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.052 ms

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 nginx 2fc55fe8c1fd web1

172.17.0.3 01ba3b877905

The hosts entry has been added.

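So --link is only really useful on the default network. On a user-defined network it injects no environment variables (compare the env output on my_net1 above), and the built-in DNS already resolves names.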

Without --link, the default network has no name resolution:

/ # ping web1

ping: bad address 'web1'

The hosts entry follows the container's IP when it changes, but the environment variables written at start time do not.

Stop and start web1 so that its IP changes, and the hosts entry changes with it:

/ # cat /etc/hosts

172.17.0.2 nginx 2fc55fe8c1fd web1

/ # cat /etc/hosts

172.17.0.4 nginx 2fc55fe8c1fd web1

[root@docker1 ~]# iptables -t nat -nL

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 172.17.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.18.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.20.0.0/24 0.0.0.0/0

Packets between docker0 and the physical NIC are routed by the Linux kernel.

External access to a container is doubly redundant: one path is iptables forwarding (DNAT), the other is a proxy process (docker-proxy). Either one alone is enough.

docker-proxy cannot fully replace iptables DNAT, because forwarding still relies on iptables.

Remove all containers

[root@docker1 ~]# docker ps -aq

81cd079f1e08

f7d480524524

e305ecfdc237

7b5f010de330

ddcac54b82da

2fc55fe8c1fd

[root@docker1 ~]# docker rm -f `docker ps -aq`

With -p 80:80, requests to port 80 on the host are redirected to port 80 in the container:

[root@docker1 ~]# docker run -d --name demo -p 80:80 nginx

[root@docker1 ~]# iptables -t nat -nL

Chain DOCKER (2 references)

DNAT tcp -- 0.0.0.0/0 0.0.0.0/0 tcp dpt:80 to:172.17.0.2:80

[root@docker1 ~]# curl 172.25.254.1

<title>Welcome to nginx!</title>

A dedicated process, docker-proxy, also listens on port 80. This is the second, redundant path:

[root@docker1 ~]# netstat -antlp

tcp6 0 0 :::80 :::* LISTEN 7569/docker-proxy

Demonstrating that external access is doubly redundant

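The host reaches containers directly through the docker0 gateway, so ping from the host works either way. First flush the nat table: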

[root@docker1 ~]# iptables -t nat -F

[root@docker1 ~]# iptables -t nat -nL

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain INPUT (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

Chain DOCKER (0 references)

target prot opt source destination

Containers on the same bridge need neither the firewall rules nor the proxy process to reach each other:

/ # ping 172.17.0.2

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.079 ms

[root@docker1 ~]# ps ax

7569 ? Sl 0:00 /usr/bin/docker-proxy -proto tcp -host-ip 0.0.0.0 -host-port 80 -contai

[root@docker1 ~]# kill 7569

/ # ping 172.17.0.2

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.065 ms

With the firewall rules flushed and the proxy killed, the container can no longer be reached from outside:

[kiosk@foundation38 Desktop]$ curl 172.25.254.1

curl: (7) Failed to connect to 172.25.254.1 port 80: Connection refused

With only the firewall rules flushed, external traffic still reaches the container through docker-proxy:

[root@docker1 ~]# systemctl restart docker

[root@docker1 ~]# iptables -t nat -nL

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 172.17.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.18.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.20.0.0/24 0.0.0.0/0

[root@docker1 ~]# docker start demo

[root@docker1 ~]# iptables -t nat -nL

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE all -- 172.17.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.18.0.0/16 0.0.0.0/0

MASQUERADE all -- 172.20.0.0/24 0.0.0.0/0

MASQUERADE tcp -- 172.17.0.2 172.17.0.2 tcp dpt:80

DNAT tcp -- 0.0.0.0/0 0.0.0.0/0 tcp dpt:80 to:172.17.0.2:80

[kiosk@foundation38 Desktop]$ curl 172.25.254.1

<title>Welcome to nginx!</title>

[root@docker1 ~]# ps ax | grep proxy

8496 ? Sl 0:00 /usr/bin/docker-proxy -proto tcp -host-ip 0.0.0.0 -host-port 80 -container-ip 172.17.0.2 -container-port 80

8572 pts/1 S+ 0:00 grep --color=auto proxy

[root@docker1 ~]# iptables -t nat -F

[root@docker1 ~]# iptables -t nat -nL

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain INPUT (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

Chain DOCKER (0 references)

target prot opt source destination

[kiosk@foundation38 Desktop]$ curl 172.25.254.1

<title>Welcome to nginx!</title>

Now kill the proxy process and keep the firewall rules:

[root@docker1 ~]# systemctl restart docker

[root@docker1 ~]# docker start demo

[root@docker1 ~]# ps ax | grep proxy

8896 ? Sl 0:00 /usr/bin/docker-proxy -proto tcp -host-ip 0.0.0.0 -host-port 80 -container-ip 172.17.0.2 -container-port 80

8971 pts/1 R+ 0:00 grep --color=auto proxy

[root@docker1 ~]# kill 8896

[kiosk@foundation38 Desktop]$ curl 172.25.254.1

<title>Welcome to nginx!</title>

So external access to a container is served by two redundant mechanisms.
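To see at a glance which of the two paths is currently in place, the DNAT rules can be summarised with a small filter. This is a sketch only: `dnat_targets` is a hypothetical helper that parses the text format of the iptables listings shown above.

```bash
# Read `iptables -t nat -nL DOCKER` output on stdin and print one line per
# DNAT rule in the form "<host port> -> <container ip:port>".
dnat_targets() {
  awk '$1 == "DNAT" {
         port = ""; target = ""
         for (i = 1; i <= NF; i++) {
           if ($i ~ /^dpt:/) port   = substr($i, 5)   # text after "dpt:"
           if ($i ~ /^to:/)  target = substr($i, 4)   # text after "to:"
         }
         print port " -> " target
       }'
}

# Example (as root, with Docker running):
#   iptables -t nat -nL DOCKER | dnat_targets   # path 1: firewall DNAT
#   pgrep -a docker-proxy                       # path 2: proxy processes
```

If the first command prints nothing but the second lists a process, only the proxy path is active, and vice versa.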

Cross-host container networking

Clean up the environment

[root@docker1 ~]# docker network prune

WARNING! This will remove all custom networks not used by at least one container.

Are you sure you want to continue? [y/N] y

Deleted Networks:

my_net1

my_net2

[root@docker1 ~]# docker rm -f demo

[root@docker1 ~]# docker volume prune

Are you sure you want to continue? [y/N] y

[root@docker1 ~]# docker image prune

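docker image prune removes dangling images, the ones listed as <none>. They cannot be used directly and are essentially leftover image cache.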

Lab environment

[root@docker1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

3811ae4f8bf9 bridge bridge local

b51b022f8430 host host local

c386dbae12f0 none null local

[root@docker2 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

568aa16db709 bridge bridge local

20d505b4b669 host host local

09ed8d8227c2 none null local

overlay is used by Docker Swarm. This article starts with macvlan.

Third-party network solutions are what Kubernetes uses.

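Docker provides the network library and model (libnetwork and the Container Network Model), so you can develop the kind of network you need yourself.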

In this model, an Endpoint corresponds to a virtual NIC.

macvlan solves the problem at the lower layer, in hardware.

Add one more NIC, bridged to eth0, to each of docker1 and docker2.

Enable promiscuous mode on the newly added NIC:

[root@docker1 ~]# ip addr

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

46: enp7s0: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

[root@docker1 ~]# ip link set enp7s0 promisc on

[root@docker1 ~]# ip addr

46: enp7s0: <BROADCAST,MULTICAST,PROMISC> mtu 1500 qdisc noop state DOWN group default qlen 1000

[root@docker1 ~]# ip link set up enp7s0

[root@docker1 ~]# ip addr

46: enp7s0: <BROADCAST,MULTICAST,PROMISC,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

[root@docker2 ~]# ip link set enp7s0 promisc on

[root@docker2 ~]# ip link set up enp7s0

[root@docker2 ~]# ip addr

4: enp7s0: <NO-CARRIER,BROADCAST,MULTICAST,PROMISC,UP> mtu 1500 qdisc pfifo_fast state DOWN group default qlen 1000
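The flag check done by eye in the ip addr output above can also be scripted, for instance before creating the macvlan network on each host. A minimal sketch, assuming the interface name enp7s0 from this lab; `is_promisc` is a hypothetical helper.

```bash
# Succeeds (exit 0) when the given interface has the PROMISC flag set.
is_promisc() {
  ip -o link show dev "$1" | grep -q '<[^>]*PROMISC'
}

# Example (run on each host):
#   is_promisc enp7s0 || ip link set enp7s0 promisc on
```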

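Container IPs must not collide across the two hosts. Both hosts can declare the same subnet and the same gateway (10.0.0.1), but because everything sits in one VLAN, each container needs a unique address, which is why --ip is given explicitly.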

[root@docker1 ~]# docker network create -d macvlan --subnet 10.0.0.0/24 --gateway 10.0.0.1 -o parent=enp7s0 mynet1

[root@docker1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

3811ae4f8bf9 bridge bridge local

b51b022f8430 host host local

9e1d71bae46c mynet1 macvlan local

c386dbae12f0 none null local

[root@docker1 ~]# docker run -it --rm --network mynet1 --ip 10.0.0.10 busyboxplus

/ # ip addr

inet 10.0.0.10/24 brd 10.0.0.255 scope global eth0

[root@docker2 ~]# docker network create -d macvlan --subnet 10.0.0.0/24 --gateway 10.0.0.1 -o parent=enp7s0 mynet1

[root@docker2 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

568aa16db709 bridge bridge local

20d505b4b669 host host local

dd46171171cb mynet1 macvlan local

09ed8d8227c2 none null local

[root@docker2 ~]# docker run -it --rm --network mynet1 --ip 10.0.0.11 busybox

/ # ip addr

inet 10.0.0.11/24 brd 10.0.0.255 scope global eth0

/ # ping 10.0.0.10

64 bytes from 10.0.0.10: seq=0 ttl=64 time=0.814 ms

/ # ping 10.0.0.11

64 bytes from 10.0.0.11: seq=0 ttl=64 time=0.284 ms

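macvlan solves cross-host communication with the underlying hardware. With thousands of containers, though, you may need many subnets and therefore many NICs, and how many NICs can one machine hold?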

No new Linux bridge and no veth pair is created:

[root@docker1 ~]# brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242c27a3cac no

[root@docker1 ~]# ip addr

46: enp7s0: <BROADCAST,MULTICAST,PROMISC,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

[root@docker1 ~]# ip link set enp7s0 down

/ # ping 10.0.0.10

No reply while the parent interface is down.

[root@docker1 ~]# ip link set enp7s0 up

64 bytes from 10.0.0.10: seq=29 ttl=64 time=1002.343 ms

No NAT or port mapping is needed. With macvlan, once the underlying physical link works, nothing else needs managing; Docker stays out of the way.

[root@docker2 ~]# docker run -d --name demo --network mynet1 --ip 10.0.0.11 webserver:v3

/ # curl 10.0.0.11

<title>Welcome to nginx!</title>

[root@docker2 ~]# netstat -antlp

[root@docker2 ~]# iptables -nL -t nat

There is no DNAT rule and no port mapping.

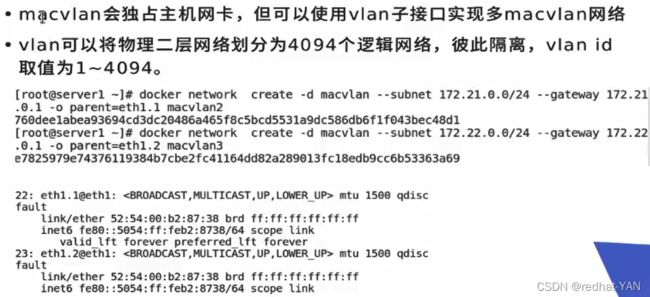

Since a host cannot take an unlimited number of NICs, sub-interfaces can be created instead (enp7s0.1, enp7s0.2, enp7s0.3, enp7s0.4, and so on).

Layer 2

[root@docker2 ~]# docker network create -d macvlan --subnet 20.0.0.0/24 --gateway 20.0.0.1 -o parent=enp7s0.1 mynet2

[root@docker1 ~]# docker network create -d macvlan --subnet 20.0.0.0/24 --gateway 20.0.0.1 -o parent=enp7s0.1 mynet2

[root@docker1 ~]# docker run -d --network mynet2 nginx

[root@docker1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e91e508fdd2b nginx "/docker-entrypoint.…" 28 seconds ago Up 26 seconds practical_chandrasekhar

[root@docker1 ~]# docker inspect e91e508fdd2b

"IPAddress": "20.0.0.2",

[root@docker1 ~]# docker network create -d macvlan --subnet 30.0.0.0/24 --gateway 30.0.0.1 -o parent=enp7s0.2 mynet3

Different macvlan networks are isolated at layer 2. To let them communicate, set up a routing gateway at layer 3 to interconnect them.

inspect shows detailed information and works the same way for networks, volumes, containers, and images.

[root@docker1 ~]# docker network inspect mynet2

[root@docker1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

3811ae4f8bf9 bridge bridge local

b51b022f8430 host host local

9e1d71bae46c mynet1 macvlan local

7450c8721d84 mynet2 macvlan local

2642e999f12a mynet3 macvlan local

c386dbae12f0 none null local