resnet50代码前向对齐

主要思路:先导入预训练模型,然后导出权重参数和npy输出,然后再将torch的代码对应的改写成paddle的代码,导入权重参数,输出npy文件,最后对比着两个npy文件即可

完整项目链接

1.安装pycharm

破解版的,直接安装就好

2.安装anconda

安装链接

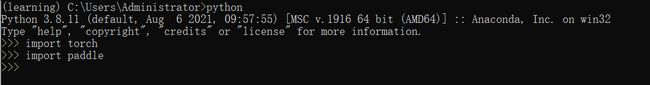

3.安装paddlepaddle和torch

conda create -n resnet50 python=3.7

conda activate resnet50

conda install paddlepaddle --channel https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/Paddle/

pip install torch torchvision -i https://pypi.tuna.tsinghua.edu.cn/simple

pip install torchvision

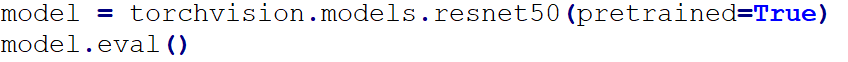

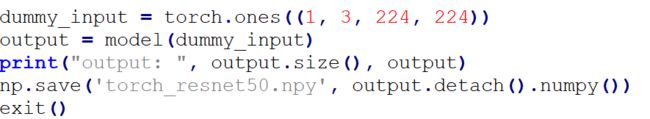

4.resnet50_torch.py代码讲解

先导入预训练模型,然后开启预测模式(为了后面导出权重做准备)

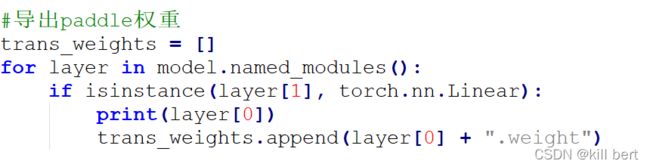

先定义了一个空列表,然后通过named_modules函数(获取网络结构)遍历所有的层,如果是线性层的话就会添加到这个列表中(在resnet中只有最后一层fc层是线性层,所以这段代码的意思就是先把resnet网络中的最后一层提取出来)

named_modules()函数和named_children( ):

从定义上讲:

named_children( ):返回包含子模块的迭代器,同时产生模块的名称以及模块本身。

named_modules( ):返回网络中所有模块的迭代器,同时产生模块的名称以及模块本身。

测试一下:

import torch

import torch.nn as nn

class TestModule(nn.Module):

def __init__(self):

super(TestModule,self).__init__()

self.layer1 = nn.Sequential(

nn.Conv2d(16,32,3,1),

nn.ReLU(inplace=True)

)

self.layer2 = nn.Sequential(

nn.Linear(32,10)

)

def forward(self,x):

x = self.layer1(x)

x = self.layer2(x)

model = TestModule()

for name, module in model.named_children():

print('children module:', name)

for name, module in model.named_modules():

print('modules:', name)

>>out:

children module: layer1

children module: layer2

modules:

modules: layer1

modules: layer1.0

modules: layer1.1

modules: layer2

modules: layer2.0

可以看到named_children只输出了layer1和layer2两个子module,而named_modules输出了包括layer1和layer2下面所有的modolue。

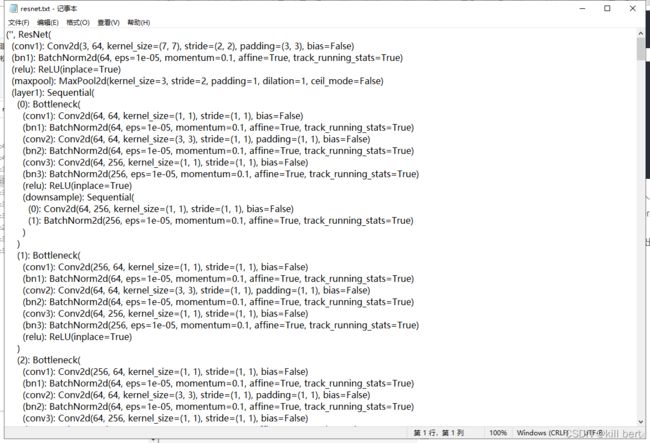

所以可以利用named_modules()函数将网络结构打印出来,博主将结构打印到txt文件中以便查阅。

函数isinstance()可以判断一个变量的类型,既可以用在Python内置的数据类型如str、list、dict,也可以用在我们自定义的类,它们本质上都是数据类型。

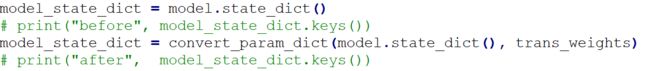

model.state_dict():

这个函数是可以去获得模型的状态字典,这个字典是在定义后模型后自动生成的。

convert_param_dict(model_dict, trans_weights):

该函数是将torch中的状态字典转为paddle中的状态字典。

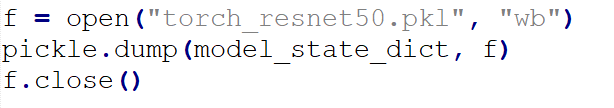

保存参数字典

保存输出

最后得到torch_resnet50.pkl和torch_resnet50.npy两个文件

至此就得到torch环境下的运行结果和paddle环境下的权重文件了,接下来就是要把torch的代码对应成paddle代码。

5.代码对齐

打开这个网站,使用浏览器的查找功能,对torch的API进行逐个比对

效果展示:

import paddle

import paddle.nn as nn

import pickle

import numpy as np

def conv3x3(in_planes, out_planes, stride = 1, groups = 1, dilation = 1):

"""3x3 convolution with padding"""

return nn.Conv2D(in_planes, out_planes, kernel_size=3, stride=stride,

padding=dilation, groups=groups, dilation=dilation)

def conv1x1(in_planes, out_planes, stride = 1):

"""1x1 convolution"""

return nn.Conv2D(in_planes, out_planes, kernel_size=1, stride=stride)

class BasicBlock(nn.Layer):

expansion = 1

def __init__(self,

inplanes,

planes,

stride=1,

downsample=None,

groups=1,

base_width=64,

dilation=1,

norm_layer=None):

super(BasicBlock, self).__init__()

if norm_layer is None:

norm_layer = nn.BatchNorm2D

if groups != 1 or base_width != 64:

raise ValueError('BasicBlock only supports groups=1 and base_width=64')

if dilation > 1:

raise NotImplementedError(

"Dilation > 1 not supported in BasicBlock")

self.conv1 = nn.Conv2D(

inplanes, planes, 3, padding=1, stride=stride, bias_attr=False)

self.bn1 = norm_layer(planes)

self.relu = nn.ReLU()

self.conv2 = nn.Conv2D(planes, planes, 3, padding=1, bias_attr=False)

self.bn2 = norm_layer(planes)

self.downsample = downsample

self.stride = stride

def forward(self, x):

identity = x

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

if self.downsample is not None:

identity = self.downsample(x)

out += identity

out = self.relu(out)

return out

class BottleneckBlock(nn.Layer):

expansion = 4

def __init__(self,

inplanes,

planes,

stride=1,

downsample=None,

groups=1,

base_width=64,

dilation=1,

norm_layer=None):

super(BottleneckBlock, self).__init__()

if norm_layer is None:

norm_layer = nn.BatchNorm2D

width = int(planes * (base_width / 64.)) * groups

self.conv1 = conv1x1(inplanes, width)

self.bn1 = norm_layer(width)

self.conv2 = conv3x3(width, width, stride, groups, dilation)

self.bn2 = norm_layer(width)

self.conv3 = conv1x1(width, planes * self.expansion)

self.bn3 = norm_layer(planes * self.expansion)

self.relu = nn.ReLU()

self.downsample = downsample

self.stride = stride

def forward(self, x):

identity = x

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out = self.relu(out)

out = self.conv3(out)

out = self.bn3(out)

if self.downsample is not None:

identity = self.downsample(x)

out += identity

out = self.relu(out)

return out

class ResNet(nn.Layer):

def __init__(

self,

block,

layers,

num_classes = 1000,

zero_init_residual = False,

groups = 1,

width_per_group = 64,

replace_stride_with_dilation = None,

norm_layer = None

):

super(ResNet, self).__init__()

if norm_layer is None:

norm_layer = nn.BatchNorm2D

self._norm_layer = norm_layer

self.inplanes = 64

self.dilation = 1

if replace_stride_with_dilation is None:

# each element in the tuple indicates if we should replace

# the 2x2 stride with a dilated convolution instead

replace_stride_with_dilation = [False, False, False]

if len(replace_stride_with_dilation) != 3:

raise ValueError("replace_stride_with_dilation should be None "

"or a 3-element tuple, got {}".format(replace_stride_with_dilation))

self.groups = groups

self.base_width = width_per_group

self.conv1 = nn.Conv2D(3, self.inplanes, kernel_size=7, stride=2, padding=3)

self.bn1 = norm_layer(self.inplanes)

self.relu = nn.ReLU()

self.maxpool = nn.MaxPool2D(kernel_size=3, stride=2, padding=1)

self.layer1 = self._make_layer(block, 64, layers[0])

self.layer2 = self._make_layer(block, 128, layers[1], stride=2,

dilate=replace_stride_with_dilation[0])

self.layer3 = self._make_layer(block, 256, layers[2], stride=2,

dilate=replace_stride_with_dilation[1])

self.layer4 = self._make_layer(block, 512, layers[3], stride=2,

dilate=replace_stride_with_dilation[2])

self.avgpool = nn.AdaptiveAvgPool2D((1, 1))

self.fc = nn.Linear(512 * block.expansion, num_classes)

def _make_layer(self, block, planes, blocks,

stride = 1, dilate = False):

norm_layer = self._norm_layer

downsample = None

previous_dilation = self.dilation

if dilate:

self.dilation *= stride

stride = 1

if stride != 1 or self.inplanes != planes * block.expansion:

downsample = nn.Sequential(

conv1x1(self.inplanes, planes * block.expansion, stride),

norm_layer(planes * block.expansion),

)

layers = []

layers.append(block(self.inplanes, planes, stride, downsample, self.groups,

self.base_width, previous_dilation, norm_layer))

self.inplanes = planes * block.expansion

for _ in range(1, blocks):

layers.append(block(self.inplanes, planes, groups=self.groups,

base_width=self.base_width, dilation=self.dilation,

norm_layer=norm_layer))

return nn.Sequential(*layers)

def _forward_impl(self, x):

# See note [TorchScript super()]

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = self.avgpool(x)

x = paddle.flatten(x, 1)

x = self.fc(x)

return x

def forward(self, x):

return self._forward_impl(x)

def _resnet(arch, block, layers,**kwargs):

model = ResNet(block, layers,**kwargs)

return model

def resnet50(**kwargs):

return _resnet('resnet50', BottleneckBlock, [3, 4, 6, 3])

if __name__ == "__main__":

dummy_input = [paddle.ones(shape=[3, 224, 224])]

model = resnet50()

with open('torch_resnet50.pkl', 'rb') as f:

param2 = pickle.load(f)

model.set_state_dict(param2)

model.eval()

output = model(paddle.to_tensor(dummy_input))

np.save('paddle_resnet50.npy', output.numpy())

最后运行返回Ture即为成功

import numpy as np

paddle_output=np.load('paddle_resnet50.npy')

torch_output=np.load('torch_resnet50.npy')

print(np.allclose(paddle_output, torch_output, atol=1e-5))