Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Advanced Series (1)

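❤️About the author: Top 5 in the Cloud Native and Cloud Computing track of the 2022 Rising Star Program (season 3), Huawei Cloud Expert, rising talent in the cloud-native field

Blog home: personal page on CSDN

Author's goal: please point out any mistakes. I will keep improving these notes to help more Java enthusiasts get started, so that we can make progress together!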

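Contents

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Advanced Series (1)
  - What is cloud native
  - What is Docker
  - MySQL master-slave replication (1 master, 1 slave)
    - Master node configuration
    - Slave node configuration
    - Testing replication
    - Replication failure (Slave_SQL_Running: No)
      - Fixing Slave_SQL_Running: No
  - Redis cluster (3 masters, 6 slaves)
    - How to cache 1 billion records
      - Hash modulo (used by small companies)
      - Consistent hashing (used by mid-size and large companies)
      - Hash slots (used by large companies, and by Redis Cluster itself)
    - Quickly building a Redis Cluster (3 masters, 6 slaves)
      - Quickly creating 9 container instances
      - Checking that all containers are running
      - Configuring masters and slaves
      - Connecting to the Redis client in cluster mode
      - Running set commands
      - Viewing cluster node information
      - Checking cluster status
      - Master-slave failover
      - Scaling out
      - Scaling in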


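This series consists of 6 posts:

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Beginner Series (1)

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Beginner Series (2)

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Beginner Series (3)

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Beginner Series (4), split into its own post because the previous one grew too long

Read the advanced series once you have a reasonable grasp of Docker; starting with it is not recommended.

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Advanced Series (1)

- Exploring the Cornerstone of Cloud-Native Technology: Docker Containers, Advanced Series (2)

Spoiler: a follow-up on the cloud-native technology Kubernetes (k8s) is planned. With it you can manage Docker containers centrally, scale them dynamically, and more.

After reading this series you should understand Docker reasonably well and be able to use it fluently for containerized development, deploying microservices, Docker networking, and so on. Let's get started!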

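What is cloud native

Matt Stine of Pivotal first proposed the concept of cloud native (Cloud-Native) in 2013. In 2015, when cloud native was just starting to spread, he listed several characteristics of a cloud-native architecture in the book Migrating to Cloud-Native Application Architectures: twelve-factor apps, microservices, self-service agile infrastructure, API-based collaboration, and anti-fragility. In a 2017 interview with InfoQ he revised this and summarized cloud-native architecture as six qualities: modular, observable, deployable, testable, replaceable, and disposable. Pivotal's latest website condenses cloud native into four points: DevOps + continuous delivery + microservices + containers.

In short, a cloud-native application should be containerized on an open-source stack (K8S + Docker), use a microservice architecture for flexibility and maintainability, rely on agile methods and DevOps for continuous iteration and automated operations, and use cloud platform facilities for elastic scaling, dynamic scheduling, and better resource utilization.

(Excerpted from the official Huawei Cloud account on Zhihu)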


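What is Docker

- Docker is an open-source application container engine written in Go.

- Docker lets developers package an application together with its dependencies into a lightweight, portable container and ship it to any popular Linux machine; it can also be used for virtualization.

- Containers run in a sandbox (container instances are isolated from one another) and add very little performance overhead.

In short:

Docker is a high-performance container engine;

it packages local source code, configuration files, dependencies, and the runtime environment into a container that runs anywhere;

installing software with Docker is convenient, and the resulting installations are minimal and easy to extend.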

MySQL master-slave replication (1 master, 1 slave)

Master node configuration

- Run a MySQL container instance to act as the Master node

docker run -p 3307:3306 \
-v /my-sql/mysql-master/log:/var/log/mysql \
-v /my-sql/mysql-master/data:/var/lib/mysql \
-v /my-sql/mysql-master/conf:/etc/mysql \
-e MYSQL_ROOT_PASSWORD=123456 \
--name mysql-master \
-d mysql:5.7

- Create the my.cnf file (the MySQL configuration file)

vim /my-sql/mysql-master/conf/my.cnf

- Paste the following into my.cnf

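[client]
# Use utf8; with MySQL's default character set, Chinese text can come out garbled
default_character_set=utf8
[mysqld]
collation_server=utf8_general_ci
character_set_server=utf8
# Server id; must be unique among all servers in the replication setup
server_id=200
# Exclude the mysql system database from the binary log
binlog-ignore-db=mysql
# Enable the binary log and set its base file name
log-bin=order-mysql-bin
# Size of the per-session cache that buffers binlog changes during a transaction
binlog_cache_size=1M
# Binary log format (mixed here)
binlog_format=mixed
# How long binlog files are kept (in days)
expire_logs_days=7
# Skip error 1062 (duplicate key) instead of stopping replication
slave_skip_errors=1062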

- Restart the MySQL container

docker restart mysql-master

- Enter the container and log in to MySQL

$ docker exec -it mysql-master /bin/bash

$ mysql -uroot -p

- On the Master, create a user and grant it privileges

This account on the master database is used only for replicating data.

create user 'slave'@'%' identified by '123456';

grant replication slave, replication client on *.* to 'slave'@'%';

Slave node configuration

- Run a MySQL container instance to act as the slave node

docker run -p 3308:3306 \
-v /my-sql/mysql-slave/log:/var/log/mysql \
-v /my-sql/mysql-slave/data:/var/lib/mysql \
-v /my-sql/mysql-slave/conf:/etc/mysql \
-e MYSQL_ROOT_PASSWORD=123456 \
--name mysql-slave \
-d mysql:5.7

- Create the my.cnf file (the MySQL configuration file)

vim /my-sql/mysql-slave/conf/my.cnf

- Paste the following into my.cnf

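[client]
# Use utf8; with MySQL's default character set, Chinese text can come out garbled
default_character_set=utf8
[mysqld]
collation_server=utf8_general_ci
character_set_server=utf8
# Server id; must be unique among all servers in the replication setup
server_id=201
# Exclude the mysql system database from the binary log
binlog-ignore-db=mysql
# Enable the binary log and set its base file name
log-bin=order-mysql-bin
# Size of the per-session cache that buffers binlog changes during a transaction
binlog_cache_size=1M
# Binary log format (mixed here)
binlog_format=mixed
# How long binlog files are kept (in days)
expire_logs_days=7
# Skip error 1062 (duplicate key) instead of stopping replication
slave_skip_errors=1062
# The slave writes replicated events to its own binary log
log_slave_updates=1
# The slave is read-only
read_only=1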

- Restart the MySQL container

docker restart mysql-slave

- Check that both containers are up

[root@aubin ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2c4136668536 mysql:5.7 "docker-entrypoint.s…" 3 minutes ago Up 9 seconds 33060/tcp, 0.0.0.0:3308->3306/tcp, :::3308->3306/tcp mysql-slave

20fc7174d1a7 mysql:5.7 "docker-entrypoint.s…" 15 hours ago Up 8 minutes 33060/tcp, 0.0.0.0:3307->3306/tcp, :::3307->3306/tcp mysql-master

- On the Master, check the binlog status (the File and Position values are needed on the slave later)

mysql> show master status;

+------------------------+----------+--------------+------------------+-------------------+

| File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |

+------------------------+----------+--------------+------------------+-------------------+

| order-mysql-bin.000001 | 617 | | mysql | |

+------------------------+----------+--------------+------------------+-------------------+

1 row in set (0.00 sec)

- Log in to MySQL on the slave

$ docker exec -it mysql-slave /bin/bash

$ mysql -uroot -p

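- Configure replication on the slave database (the key step)

On the Master's host, find the ip address of ens33 and use it as master_host below (remember, this is the ip of the server running the Master database, not the slave's):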

[root@aubin ~]# ifconfig

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.184.132 netmask 255.255.255.0 broadcast 192.168.184.255

inet6 fe80::5c87:5037:8d1d:7650 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:23:28:59 txqueuelen 1000 (Ethernet)

RX packets 4186 bytes 443992 (433.5 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3146 bytes 428816 (418.7 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

Remember to run the following command on the slave database:

change master to master_host='192.168.184.132',master_user='slave',master_password='123456',master_port=3307,master_log_file='order-mysql-bin.000001',master_log_pos=617,master_connect_retry=30;

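Parameter reference

- master_host: ip address of the Master database

- master_user: the replication account created on the Master

- master_password: password of that replication account

- master_port: MySQL port of the Master as published on the host, 3307 here

- master_log_file: the binlog file to start reading from (the File value from show master status)

- master_log_pos: the position in that binlog to start from (the Position value from show master status)

- master_connect_retry: seconds to wait between reconnection attempts, 30 here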
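Since master_log_file and master_log_pos must match what show master status printed on the Master, it can help to build the statement from those values rather than typing it by hand. The sketch below is only an illustration: the function name change_master_sql is made up for this post, and it uses plain string formatting, so it should only ever be fed values you typed yourself.

```python
def change_master_sql(host: str, port: int, user: str, password: str,
                      log_file: str, log_pos: int, connect_retry: int = 30) -> str:
    """Build the `change master to` statement for the slave (hypothetical helper).

    log_file and log_pos are the File and Position columns of
    `show master status` on the Master.
    """
    return (
        f"change master to master_host='{host}',master_user='{user}',"
        f"master_password='{password}',master_port={port},"
        f"master_log_file='{log_file}',master_log_pos={log_pos},"
        f"master_connect_retry={connect_retry};"
    )


# Values taken from this walkthrough.
statement = change_master_sql(
    host="192.168.184.132", port=3307, user="slave", password="123456",
    log_file="order-mysql-bin.000001", log_pos=617,
)
print(statement)
```

The printed statement can then be pasted into the mysql client on the slave.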

- Check the replication status on the slave database:

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State:

Master_Host: 192.168.184.132

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: order-mysql-bin.000001

Read_Master_Log_Pos: 617

Relay_Log_File: 2c4136668536-relay-bin.000001

Relay_Log_Pos: 4

Relay_Master_Log_File: order-mysql-bin.000001

Slave_IO_Running: No

Slave_SQL_Running: No

Replicate_Do_DB:

Replicate_Ignore_DB:

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 0

Last_Error:

Skip_Counter: 0

Exec_Master_Log_Pos: 617

Relay_Log_Space: 154

Until_Condition: None

Until_Log_File:

Until_Log_Pos: 0

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: NULL

Master_SSL_Verify_Server_Cert: No

Last_IO_Errno: 0

Last_IO_Error:

Last_SQL_Errno: 0

Last_SQL_Error:

Replicate_Ignore_Server_Ids:

Master_Server_Id: 0

Master_UUID:

Master_Info_File: /var/lib/mysql/master.info

SQL_Delay: 0

SQL_Remaining_Delay: NULL

Slave_SQL_Running_State:

Master_Retry_Count: 86400

Master_Bind:

Last_IO_Error_Timestamp:

Last_SQL_Error_Timestamp:

Master_SSL_Crl:

Master_SSL_Crlpath:

Retrieved_Gtid_Set:

Executed_Gtid_Set:

Auto_Position: 0

Replicate_Rewrite_DB:

Channel_Name:

Master_TLS_Version:

1 row in set (0.00 sec)

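Find Slave_IO_Running: No and Slave_SQL_Running: No in the output. Both are No, which shows that replication has not started yet.

- Start replication on the slave database

start slave;

- Check the replication status on the slave again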

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.184.132

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: order-mysql-bin.000001

Read_Master_Log_Pos: 617

Relay_Log_File: 2c4136668536-relay-bin.000002

Relay_Log_Pos: 326

Relay_Master_Log_File: order-mysql-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

.......

Both values are now Yes, so replication is running.
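If you would rather not eyeball the two fields every time, the check can be scripted. The following is a minimal sketch, assuming the output of show slave status has been captured as text; parse_status and replication_healthy are names invented for this example, and the sample is a shortened copy of the output above.

```python
def parse_status(text: str) -> dict[str, str]:
    """Turn the vertical output of show slave status into a field -> value dict."""
    fields = {}
    for line in text.splitlines():
        # Field lines look like "Slave_IO_Running: Yes"; the row separator
        # line contains no colon and is skipped.
        name, sep, value = line.strip().partition(":")
        if sep:
            fields[name.strip()] = value.strip()
    return fields


def replication_healthy(text: str) -> bool:
    """True only when both the I/O thread and the SQL thread report Yes."""
    fields = parse_status(text)
    return (fields.get("Slave_IO_Running") == "Yes"
            and fields.get("Slave_SQL_Running") == "Yes")


SAMPLE = """\
*************************** 1. row ***************************
Slave_IO_State: Waiting for master to send event
Master_Host: 192.168.184.132
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
"""

print(replication_healthy(SAMPLE))
```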

Testing replication

Run on the Master database:

- Create a database

create database mall;

- Switch to it

use mall;

- Create a table (taken from one of my earlier projects)

CREATE TABLE `order` (

`id` bigint(20) NOT NULL,

`imgUrl` varchar(255) NOT NULL,

`goodsInfo` varchar(255) NOT NULL,

`goodsParams` varchar(255) DEFAULT NULL,

`goodsCount` int(20) NOT NULL,

`singleGoodsMoney` decimal(10,2) NOT NULL,

`realname` varchar(255) NOT NULL,

`phone` varchar(255) NOT NULL,

`address` text NOT NULL,

`created` datetime DEFAULT NULL COMMENT '创建时间',

`userid` bigint(20) NOT NULL,

`productid` bigint(20) NOT NULL,

`statusid` bigint(20) NOT NULL COMMENT '订单状态',

PRIMARY KEY (`id`)

) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4;

- Insert data

INSERT INTO `order` VALUES (3655575498130437, 'http://localhost/static/img/nav/max.jpg', '小米Max', '全网通 4GB内存+64GB容量 指纹识别 黑色 每月20G流量套餐', 2, 2998.00, '张三', '123123666', '广东省河源市源城区', '2021-11-26 16:40:57', 1, 1, 2);

INSERT INTO `order` VALUES (3655575610852357, 'http://localhost/static/img/nav/max.jpg', '小米Max', '全网通 4GB内存+64GB容量 指纹识别 黑色 每月20G流量套餐', 2, 2998.00, '张三', '123123666', '广东省河源市源城区', '2021-11-26 16:40:59', 1, 1, 6);

INSERT INTO `order` VALUES (3672761237177349, 'http://localhost/static/img/nav/max.jpg', '小米Max', '全网通 4GB内存+64GB容量 指纹识别 黑色 每月20G流量套餐', 2, 2998.00, '张三', '123123666', '广东省河源市源城区', '2021-11-29 17:31:31', 1, 1, 6);

INSERT INTO `order` VALUES (3678103231529989, 'http://localhost/static/img/nav/max.jpg', '小米Max', '全网通 4GB内存+64GB容量 指纹识别 黑色 每月20G流量套餐', 1, 1499.00, '张三', '123123666', '广东省河源市源城区', '2021-11-30 16:10:03', 1, 1, 6);

INSERT INTO `order` VALUES (3678347308893189, 'http://localhost/static/img/nav/mi5.jpg', '小米手机5', '标准版', 1, 1999.00, '张三', '123123666', '广东省河源市源城区', '2021-11-30 17:12:07', 1, 2, 4);

- Query the table (run on the Master database)

mysql> select * from `order`;

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

| id | imgUrl | goodsInfo | goodsParams | goodsCount | singleGoodsMoney | realname | phone | address | created | userid | productid | statusid |

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

| 3655575498130437 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-26 16:40:57 | 1 | 1 | 2 |

| 3655575610852357 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-26 16:40:59 | 1 | 1 | 6 |

| 3672761237177349 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-29 17:31:31 | 1 | 1 | 6 |

| 3678103231529989 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 1 | 1499.00 | | 123123666 | | 2021-11-30 16:10:03 | 1 | 1 | 6 |

| 3678347308893189 | http://localhost/static/img/nav/mi5.jpg | 5 | | 1 | 1999.00 | | 123123666 | | 2021-11-30 17:12:07 | 1 | 2 | 4 |

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

5 rows in set (0.00 sec)

- Switch to the slave machine and select the database (run on the slave database)

mysql> use mall;

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Database changed

- Query the table (run on the slave database)

mysql> select * from `order`;

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

| id | imgUrl | goodsInfo | goodsParams | goodsCount | singleGoodsMoney | realname | phone | address | created | userid | productid | statusid |

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

| 3655575498130437 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-26 16:40:57 | 1 | 1 | 2 |

| 3655575610852357 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-26 16:40:59 | 1 | 1 | 6 |

| 3672761237177349 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 2 | 2998.00 | | 123123666 | | 2021-11-29 17:31:31 | 1 | 1 | 6 |

| 3678103231529989 | http://localhost/static/img/nav/max.jpg | Max | 4GB+64GB 20G | 1 | 1499.00 | | 123123666 | | 2021-11-30 16:10:03 | 1 | 1 | 6 |

| 3678347308893189 | http://localhost/static/img/nav/mi5.jpg | 5 | | 1 | 1999.00 | | 123123666 | | 2021-11-30 17:12:07 | 1 | 2 | 4 |

+------------------+-----------------------------------------+-----------+-----------------+------------+------------------+----------+-----------+---------+---------------------+--------+-----------+----------+

5 rows in set (0.00 sec)

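- Docker + MySQL master-slave replication is done!

You can also connect to both databases with Navicat. If Navicat cannot connect to MySQL running in Docker, you can:

Option 1: stop the firewall (for test environments only; do not do this in production)

sudo systemctl stop firewalld

Option 2: open the relevant ports in the firewall, e.g. 3307 for the master database and 3308 for the slave (use this in production). With firewalld that is firewall-cmd --permanent --add-port=3307/tcp, the same for 3308, then firewall-cmd --reload.

Replication failure (Slave_SQL_Running: No)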

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.184.132

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: order-mysql-bin.000001

Read_Master_Log_Pos: 3752

Relay_Log_File: 2c4136668536-relay-bin.000002

Relay_Log_Pos: 326

Relay_Master_Log_File: order-mysql-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: No

Replicate_Do_DB:

Replicate_Ignore_DB:

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 1007

Last_Error: Error 'Can't create database 'mall'; database exists' on query. Default database: 'mall'. Query: 'create database mall'

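- Slave_SQL_Running is No. Why?

- The Last_Error field explains it: Error 'Can't create database 'mall'; database exists' on query. Default database: 'mall'. Query: 'create database mall'

- The cause is that the master and slave databases had diverged. I had already run commands directly on the slave, so when the slave replayed the master's create database statement it failed, and replication stopped.

Fixing Slave_SQL_Running: No

1: Make the master and slave databases identical again, i.e. delete the extra databases and tables so that both are back to the default MySQL state. (In production, always back up your data first!)

2: Stop replication:

stop slave;

3: Start replication again:

start slave;

4: Done!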

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.184.132

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: order-mysql-bin.000001

Read_Master_Log_Pos: 3752

Relay_Log_File: 2c4136668536-relay-bin.000003

Relay_Log_Pos: 326

Relay_Master_Log_File: order-mysql-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

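Redis cluster (3 masters, 6 slaves)

How to cache 1 billion records

- That much data clearly cannot live in a single Redis instance, so we need a distributed Redis cluster to store it.

- How do we decide which Redis instance each record goes to? There are three approaches.

Hash modulo (used by small companies)

Process

- 1: Suppose there are 5 Redis servers numbered 0 1 2 3 4, so the server count is count=5

- 2: Hash the key to get a hash value val; suppose val is 22

- 3: Apply the hash modulo formula val%count, i.e. 22%5=2

- 4: This selects the server numbered 2, which is the third machine (number 0 is the first), so the data is written to that Redis server

Drawbacks:

- 1: Scaling out is painful. Going from 5 to 10 machines changes count in the formula, so most keys now map to a different server and the existing data has to be moved.

- 2: Scaling in is just as painful. If one server goes down and the number of online machines drops from 5 to 4, the formula changes in the same way.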
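To get a feel for how bad the second drawback is, here is a small simulation of the scheme above. It is a sketch under assumptions: md5 stands in for whatever hash function a real client would use, and stable_hash and node_for are illustrative names.

```python
import hashlib


def stable_hash(key: str) -> int:
    """Hash a key to a non-negative integer that is the same on every run."""
    return int(hashlib.md5(key.encode("utf-8")).hexdigest(), 16)


def node_for(key: str, count: int) -> int:
    """Pick a server index in [0, count) with the hash-modulo rule."""
    return stable_hash(key) % count


# The worked example from the steps above: val = 22, count = 5.
print(22 % 5)

# Shrink the pool from 5 to 4 servers and count how many keys land elsewhere.
keys = [f"key-{i}" for i in range(10_000)]
moved = sum(node_for(k, 5) != node_for(k, 4) for k in keys)
print(f"{moved / len(keys):.0%} of keys map to a different server")
```

A key keeps its server only when its hash gives the same remainder modulo 5 and modulo 4, which for evenly spread hashes is about one key in five. The rest would miss the cache after the change.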

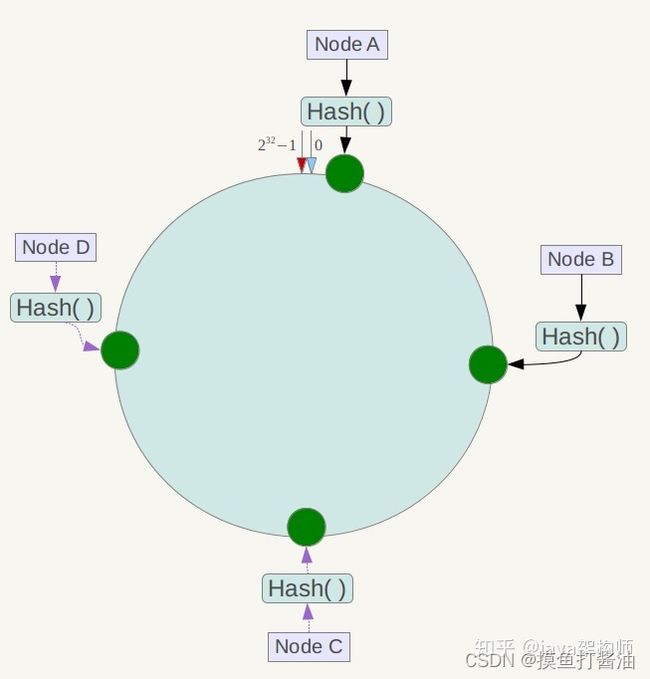

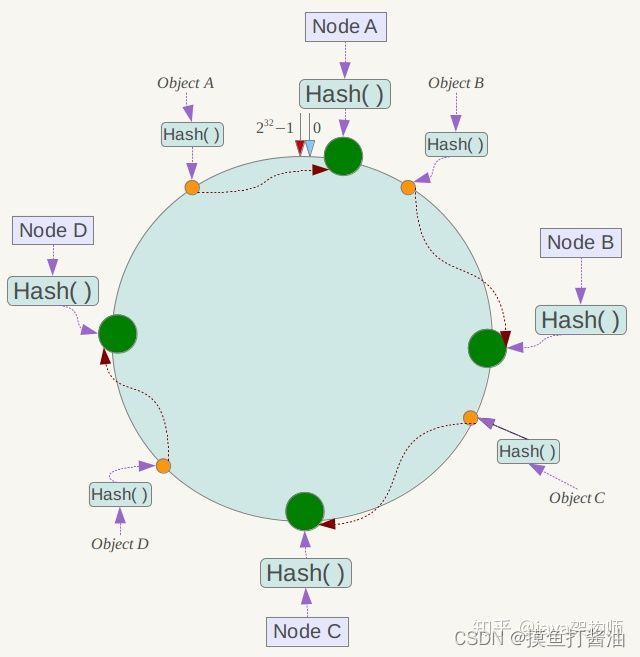

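Consistent hashing (used by mid-size and large companies)

Illustration:

First picture a virtual hash ring, and place each machine on it at the position given by the hash of its ip:

Hash the object's key and walk clockwise to find a machine; if that machine is online, store the data there:

Process

- 1: Picture a virtual hash ring (like an array arr of size 2^32), i.e. the space 0 ~ (2^32)-1.

- 2: Hash the server IP or hostname of every Redis node and record the resulting hash values.

- 3: Hash the key to get a hash value val

- 4: Treat val as an array index and walk forward from it (i.e. clockwise around the ring). The first Redis node hash encountered receives the data. If that node is down, the write fails, so we skip it and keep searching clockwise for a usable node, until the write succeeds or the whole ring has been walked (the worst case).

- 5: A newly added node is handled as in step 2

- 6: If a Redis node is removed or goes down, simply remove its recorded position.

Advantages:

- Scaling out and in is easy; unlike hash modulo, the formula does not change, so it scales well.

- This works because the ring has 2^32 positions, which covers practically every possible hash value.

Drawbacks:

- The drawback is just as obvious: with too few Redis nodes, data becomes skewed toward some nodes, which can be a serious problem.

- So this algorithm is a poor fit for small companies; mid-size and large companies with many nodes get better results from it.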
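The ring described in the steps above can be sketched in a few lines. This is an illustration, not how any particular Redis client implements it: HashRing and ring_hash are made-up names, md5 is an arbitrary choice of hash, and node health checks are left out (a node that is down is simply removed).

```python
import bisect
import hashlib

RING_SIZE = 2 ** 32


def ring_hash(value: str) -> int:
    """Map a string to a position on the ring, 0 .. 2^32 - 1."""
    return int(hashlib.md5(value.encode("utf-8")).hexdigest(), 16) % RING_SIZE


class HashRing:
    def __init__(self, nodes):
        self._points = []  # sorted ring positions of the nodes
        self._owner = {}   # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node: str) -> None:
        point = ring_hash(node)
        bisect.insort(self._points, point)
        self._owner[point] = node

    def remove(self, node: str) -> None:
        point = ring_hash(node)
        self._points.remove(point)
        del self._owner[point]

    def node_for(self, key: str) -> str:
        # First node position clockwise from the key, wrapping past the end.
        idx = bisect.bisect_left(self._points, ring_hash(key)) % len(self._points)
        return self._owner[self._points[idx]]


nodes = [f"192.168.184.132:{port}" for port in range(6381, 6386)]
ring = HashRing(nodes)
keys = [f"key-{i}" for i in range(10_000)]
before = {k: ring.node_for(k) for k in keys}

removed = nodes[2]
ring.remove(removed)
after = {k: ring.node_for(k) for k in keys}

moved = [k for k in keys if before[k] != after[k]]
print(f"{len(moved)} of {len(keys)} keys moved after removing {removed}")
```

Only the keys that lived on the removed node move; every other key stays where it was. Counting keys per node in before also shows the skew mentioned above, which real deployments usually soften by giving each node several virtual positions on the ring.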

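Hash slots (used by large companies, and by Redis Cluster itself)

- 1: Consistent hashing can lead to data skew; hash slots avoid that problem.

- 2: Redis Cluster has a fixed number of hash slots: 16384.

- 3: The hash slots are spread evenly across the Master nodes (note: slave nodes are not assigned slots)

- 4: With three Redis nodes, the split is 5461:5462:5461

- Redis node 1: slots 0-5460

- Redis node 2: slots 5461-10922

- Redis node 3: slots 10923-16383

- 5: The key is hashed and mapped to a slot; Redis Cluster uses CRC16 of the key modulo 16384.

- 6: Scaling out or in only requires reassigning slots.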
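Step 5 is easy to try out locally. The sketch below relies on Python's binascii.crc_hqx, which with an initial value of 0 computes the CRC16 variant that Redis Cluster uses; the slot ranges are the three-node split listed above, and the names key_slot, node_for_key and node-1..node-3 are illustrative.

```python
import binascii

SLOT_COUNT = 16384

# The three-master split listed above (range ends are exclusive).
SLOT_RANGES = {
    "node-1": range(0, 5461),       # slots 0-5460
    "node-2": range(5461, 10923),   # slots 5461-10922
    "node-3": range(10923, 16384),  # slots 10923-16383
}


def key_slot(key: str) -> int:
    """Return the hash slot for a key: CRC16(key) mod 16384."""
    data = key.encode("utf-8")
    # Hash tags: if the key contains a non-empty {...} section,
    # only the part between the first { and the next } is hashed.
    start = data.find(b"{")
    if start != -1:
        end = data.find(b"}", start + 1)
        if end != -1 and end != start + 1:
            data = data[start + 1:end]
    return binascii.crc_hqx(data, 0) % SLOT_COUNT


def node_for_key(key: str) -> str:
    """Look up which master owns the key's slot."""
    slot = key_slot(key)
    for node, slots in SLOT_RANGES.items():
        if slot in slots:
            return node
    raise ValueError(f"slot {slot} is not covered")


for key in ("k1", "k2", "k3"):
    print(key, key_slot(key), node_for_key(key))
```

The slots printed for k1 and k2 can be compared with the redirect messages redis-cli shows in the set example later in this post.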

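Quickly building a Redis Cluster (3 masters, 6 slaves)

- The cluster has 3 masters and 6 slaves.

- Each Master node has 2 slave nodes.

Quickly creating 9 container instances

- A 3-master, 6-slave cluster needs 9 Redis containers. The commands use the latest Redis image; to pin a version, replace the tag below with the one you need.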

docker run -d \
--name redis-node-1 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-1:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6381

docker run -d \
--name redis-node-2 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-2:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6382

docker run -d \
--name redis-node-3 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-3:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6383

docker run -d \
--name redis-node-4 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-4:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6384

docker run -d \
--name redis-node-5 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-5:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6385

docker run -d \
--name redis-node-6 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-6:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6386

docker run -d \
--name redis-node-7 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-7:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6387

docker run -d \
--name redis-node-8 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-8:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6388

docker run -d \
--name redis-node-9 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-9:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6389
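The nine commands above differ only in the node number and the port, so they can also be generated in a loop. A minimal sketch follows; redis_node_command is a hypothetical helper, and the script only prints the commands rather than running them.

```python
def redis_node_command(index: int, base_port: int = 6380) -> list[str]:
    """Build the docker run argument list for redis-node-<index>."""
    name = f"redis-node-{index}"
    return [
        "docker", "run", "-d",
        "--name", name,
        "--net", "host",
        "--privileged=true",
        "-v", f"/data/redis/share/{name}:/data",
        "redis:latest",
        "--cluster-enabled", "yes",
        "--appendonly", "yes",
        "--port", str(base_port + index),
    ]


# Nodes 1..9 listen on ports 6381..6389, as in the commands above.
commands = [redis_node_command(i) for i in range(1, 10)]
for cmd in commands:
    print(" ".join(cmd))
```

To actually start the containers from Python, each list could be passed to subprocess.run(cmd, check=True) on a host where Docker is installed.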

Checking that all containers are running

[root@aubin ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

73caff0968f1 redis:latest "docker-entrypoint.s…" 4 seconds ago Up 4 seconds redis-node-9

c8c5bd1034b4 redis:latest "docker-entrypoint.s…" 5 seconds ago Up 5 seconds redis-node-8

c7cf3d764f65 redis:latest "docker-entrypoint.s…" 6 seconds ago Up 5 seconds redis-node-7

98dcf5fd80d7 redis:latest "docker-entrypoint.s…" 6 seconds ago Up 5 seconds redis-node-6

7035e4a73a83 redis:latest "docker-entrypoint.s…" 6 seconds ago Up 5 seconds redis-node-5

7ae839c95a0c redis:latest "docker-entrypoint.s…" 6 seconds ago Up 5 seconds redis-node-4

87f841d136b9 redis:latest "docker-entrypoint.s…" 6 seconds ago Up 6 seconds redis-node-3

98e605469e0e redis:latest "docker-entrypoint.s…" 6 seconds ago Up 6 seconds redis-node-2

69eec71b0835 redis:latest "docker-entrypoint.s…" 7 seconds ago Up 6 seconds redis-node-1

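Configuring masters and slaves

- Enter any one of the Redis containers (redis-node-2 here; any of them works) and run the command below

- Change the ip to that of the server running your Redis containers.

- When prompted for input, type: yes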

docker exec -it redis-node-2 /bin/bash

redis-cli --cluster create 192.168.184.132:6381 192.168.184.132:6382 192.168.184.132:6383 192.168.184.132:6384 192.168.184.132:6385 192.168.184.132:6386 192.168.184.132:6387 192.168.184.132:6388 192.168.184.132:6389 --cluster-replicas 2

>>> Performing hash slots allocation on 9 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 192.168.184.132:6385 to 192.168.184.132:6381

Adding replica 192.168.184.132:6386 to 192.168.184.132:6381

Adding replica 192.168.184.132:6387 to 192.168.184.132:6382

Adding replica 192.168.184.132:6388 to 192.168.184.132:6382

Adding replica 192.168.184.132:6389 to 192.168.184.132:6383

Adding replica 192.168.184.132:6384 to 192.168.184.132:6383

>>> Trying to optimize slaves allocation for anti-affinity

[WARNING] Some slaves are in the same host as their master

M: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots:[0-5460] (5461 slots) master

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[5461-10922] (5462 slots) master

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[10923-16383] (5461 slots) master

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

.

>>> Performing Cluster Check (using node 192.168.184.132:6381)

M: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots:[0-5460] (5461 slots) master

2 additional replica(s)

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[5461-10922] (5462 slots) master

2 additional replica(s)

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[10923-16383] (5461 slots) master

2 additional replica(s)

S: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots: (0 slots) slave

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

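- --cluster-replicas 2: masters and slaves are created at a ratio of 1 to 2.

- A Redis Cluster needs at least 3 Master nodes; with the 9 nodes here, that gives 3 Masters with 2 slave nodes each.

- With --cluster-replicas 1, each Master node gets 1 slave node.

Connecting to the Redis client in cluster mode

- Adding -c is all it takes to start the client in cluster mode.

- Don't forget to specify the port, because Redis defaults to port 6379!

redis-cli -p 6381 -c

- What happens if you build a Redis cluster but connect without cluster mode?

- As described above, a Redis cluster stores key-value pairs by hash slot. If we connect to node 1 and set a value whose key happens to hash to a slot owned by node 1, the write succeeds.

- If the key hashes to a slot owned by another node, such as node 2 or 3, the write fails: the server replies with a MOVED error naming the owning node, and a client started without -c does not follow that redirect.

- Only a client in cluster mode can write any key freely; otherwise whether a write succeeds is down to luck!

Running set commands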

192.168.184.132:6381> set k1 v1

-> Redirected to slot [12706] located at 192.168.184.132:6383

OK

192.168.184.132:6383> set k2 v2

-> Redirected to slot [449] located at 192.168.184.132:6381

OK

192.168.184.132:6381> set k3 v3

OK

192.168.184.132:6381> get k1

-> Redirected to slot [12706] located at 192.168.184.132:6383

"v1"

192.168.184.132:6383> get k2

-> Redirected to slot [449] located at 192.168.184.132:6381

"v2"

192.168.184.132:6381> get k3

"v3"

- As the redirects show, Redis Cluster partitions keys by hash slot under the hood.

Viewing cluster node information

redis-cli -p 6381 -c

192.168.184.132:6381> cluster nodes

9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389@16389 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651761707000 1 connected

888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381@16381 myself,master - 0 1651761703000 1 connected 0-5460

92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388@16388 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651761706000 3 connected

c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385@16385 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651761708298 3 connected

1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382@16382 master - 0 1651761704000 2 connected 5461-10922

a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384@16384 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651761708000 2 connected

af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383@16383 master - 0 1651761708000 3 connected 10923-16383

29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386@16386 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651761710336 1 connected

5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387@16387 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651761709314 2 connected
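The cluster nodes output is dense, so a short script can help when you only want to know which slaves belong to which Master. The sketch below assumes the line layout shown above (node id, address, flags, master id, ...); replicas_by_master is an illustrative name, and the sample holds three lines copied from the output.

```python
from collections import defaultdict


def replicas_by_master(cluster_nodes_output: str) -> dict[str, list[str]]:
    """Group slave addresses under the address of the master they replicate."""
    addr_by_id = {}
    master_id_of = {}
    for line in cluster_nodes_output.strip().splitlines():
        node_id, addr, flags, master_id = line.split()[:4]
        host_port = addr.split("@")[0]  # drop the cluster bus port
        addr_by_id[node_id] = host_port
        if "slave" in flags.split(","):
            master_id_of[host_port] = master_id
    grouped = defaultdict(list)
    for replica, master_id in master_id_of.items():
        grouped[addr_by_id[master_id]].append(replica)
    return {master: sorted(replicas) for master, replicas in grouped.items()}


SAMPLE = """\
9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389@16389 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651761707000 1 connected
888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381@16381 myself,master - 0 1651761703000 1 connected 0-5460
29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386@16386 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651761710336 1 connected
"""

print(replicas_by_master(SAMPLE))
```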

Checking cluster status

root@aubin:/data# redis-cli --cluster check 192.168.184.132:6381

192.168.184.132:6381 (888158ad...) -> 2 keys | 5461 slots | 2 slaves.

192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 5462 slots | 2 slaves.

192.168.184.132:6383 (af282884...) -> 1 keys | 5461 slots | 2 slaves.

[OK] 3 keys in 3 masters.

0.00 keys per slot on average.

>>> Performing Cluster Check (using node 192.168.184.132:6381)

M: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots:[0-5460] (5461 slots) master

2 additional replica(s)

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[5461-10922] (5462 slots) master

2 additional replica(s)

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[10923-16383] (5461 slots) master

2 additional replica(s)

S: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots: (0 slots) slave

replicates 888158ad30d9a173175b115974b9d1cc5b39736e

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

Master-slave failover

- We can see that 6381, 6382 and 6383 are the Master nodes.

127.0.0.1:6382> CLUSTER NODES

29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386@16386 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651766520602 1 connected

a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384@16384 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651766520000 2 connected

c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385@16385 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651766521621 3 connected

9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389@16389 slave 888158ad30d9a173175b115974b9d1cc5b39736e 0 1651766516521 1 connected

92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388@16388 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651766522638 3 connected

5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387@16387 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651766519000 2 connected

af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383@16383 master - 0 1651766521000 3 connected 10923-16383

1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382@16382 myself,master - 0 1651766519000 2 connected 5461-10922

888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381@16381 master - 0 1651766518571 1 connected 0-5460

- We can see that the slave nodes of 6381 are 6389 and 6386.

- Stop the container running 6381.

docker stop redis-node-1

- Wait a moment, then check the cluster nodes again

192.168.184.132:6383> CLUSTER NODES

c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385@16385 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651767445299 3 connected

9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389@16389 slave 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 0 1651767443000 10 connected

888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381@16381 master,fail - 1651767320800 1651767314000 1 disconnected

1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382@16382 master - 0 1651767444000 2 connected 5461-10922

29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386@16386 master - 0 1651767444000 10 connected 0-5460

92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388@16388 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651767443000 3 connected

af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383@16383 myself,master - 0 1651767443000 3 connected 10923-16383

5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387@16387 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651767444284 2 connected

a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384@16384 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651767446319 2 connected

We can see that 6386, previously a slave of 6381, has become a Master node, so a failover has taken place.

- Now restart the 6381 container

docker start redis-node-1

192.168.184.132:6383> CLUSTER NODES

c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385@16385 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651767586000 3 connected

9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389@16389 slave 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 0 1651767586183 10 connected

888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381@16381 slave 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 0 1651767582247 10 connected

1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382@16382 master - 0 1651767586000 2 connected 5461-10922

29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386@16386 master - 0 1651767588204 10 connected 0-5460

92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388@16388 slave af28288480e3398b18402e4475c9ae52c39ea776 0 1651767586000 3 connected

af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383@16383 myself,master - 0 1651767587000 3 connected 10923-16383

5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387@16387 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651767585000 2 connected

a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384@16384 slave 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 0 1651767587192 2 connected

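We can see that 6381 is now a slave node and its Master is 6386.

Scaling out

- Start three more Redis container instances on ports 6390, 6391 and 6392

- The goal is to add 6390 to the cluster as a Master node, with 6391 and 6392 as its Slave nodes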

docker run -d \
--name redis-node-10 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-10:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6390

docker run -d \
--name redis-node-11 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-11:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6391

docker run -d \
--name redis-node-12 \
--net host \
--privileged=true \
-v /data/redis/share/redis-node-12:/data \
redis:latest \
--cluster-enabled yes \
--appendonly yes \
--port 6392

- Check that all the docker instances are running

[root@aubin ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2d51b83798fc redis:latest "docker-entrypoint.s…" 7 seconds ago Up 6 seconds redis-node-12

7155d53bda8f redis:latest "docker-entrypoint.s…" 8 seconds ago Up 7 seconds redis-node-11

80f3006ff904 redis:latest "docker-entrypoint.s…" 8 seconds ago Up 7 seconds redis-node-10

79af920ed704 redis:latest "docker-entrypoint.s…" 13 seconds ago Up 12 seconds redis-node-9

8476f6db25f1 redis:latest "docker-entrypoint.s…" 14 seconds ago Up 13 seconds redis-node-8

dbcee539c3d4 redis:latest "docker-entrypoint.s…" 14 seconds ago Up 13 seconds redis-node-7

3883016c4225 redis:latest "docker-entrypoint.s…" 14 seconds ago Up 13 seconds redis-node-6

073b551c8366 redis:latest "docker-entrypoint.s…" 14 seconds ago Up 14 seconds redis-node-5

0114f11f42ae redis:latest "docker-entrypoint.s…" 14 seconds ago Up 14 seconds redis-node-4

bd9b8fd9aa8b redis:latest "docker-entrypoint.s…" 15 seconds ago Up 14 seconds redis-node-3

f1e90ded9cb3 redis:latest "docker-entrypoint.s…" 15 seconds ago Up 14 seconds redis-node-2

f9c61333faae redis:latest "docker-entrypoint.s…" 15 seconds ago Up 15 seconds redis-node-1

- Enter the redis-node-10 container:

docker exec -it redis-node-10 /bin/bash

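- First, add node 6390 to the cluster as a Master.

- 192.168.184.132:6390: the IP and port of the Redis node you want to add.

- 192.168.184.132:6383: the IP and port of a node that is already in the cluster. It serves as the entry point to the whole cluster; a Master node is used here.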

redis-cli --cluster add-node 192.168.184.132:6390 192.168.184.132:6383

- Check the cluster.

root@aubin:/data# redis-cli --cluster check 192.168.184.132:6383

192.168.184.132:6383 (af282884...) -> 2 keys | 5461 slots | 2 slaves.

192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 5462 slots | 2 slaves.

192.168.184.132:6386 (29a79c9f...) -> 3 keys | 5461 slots | 2 slaves.

192.168.184.132:6390 (a25da2e5...) -> 0 keys | 0 slots | 0 slaves.

[OK] 5 keys in 4 masters.

0.00 keys per slot on average.

>>> Performing Cluster Check (using node 192.168.184.132:6383)

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[10923-16383] (5461 slots) master

2 additional replica(s)

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[5461-10922] (5462 slots) master

2 additional replica(s)

M: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots:[0-5460] (5461 slots) master

2 additional replica(s)

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

M: a25da2e5be0487b3109b23e807b2d4c9c23e5a1a 192.168.184.132:6390

slots: (0 slots) master

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

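6390 has joined the cluster as a Master node, but it has not been assigned any slots yet (0 slots). It cannot hold any keys until slots are assigned to it.

- Reshard the slots. The 6386 below is also a Master node; any Master node in the cluster can be used as the entry point.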

redis-cli --cluster reshard 192.168.184.132:6386

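Prompt: How many slots do you want to move (from 1 to 16384)?

- There are now 4 Master nodes, so each one should hold 16384/4=4096 slots. Enter: 4096

- Next, enter the node ID of the newly added Master 6390, which receives the slots (this is the Redis node ID, not the Docker container ID). Enter: a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

- Then enter: all (use every existing Master as a source of slots), and press Enter.

- Enter: yes

- Check the cluster: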

root@aubin:/data# redis-cli --cluster check 192.168.184.132:6390

192.168.184.132:6390 (a25da2e5...) -> 1 keys | 4096 slots | 1 slaves.

192.168.184.132:6386 (29a79c9f...) -> 2 keys | 4096 slots | 2 slaves.

192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 4096 slots | 1 slaves.

192.168.184.132:6383 (af282884...) -> 2 keys | 4096 slots | 2 slaves.

[OK] 5 keys in 4 masters.

0.00 keys per slot on average.

>>> Performing Cluster Check (using node 192.168.184.132:6390)

M: a25da2e5be0487b3109b23e807b2d4c9c23e5a1a 192.168.184.132:6390

slots:[0-1364],[5461-6826],[10923-12287] (4096 slots) master

1 additional replica(s)

M: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots:[1365-5460] (4096 slots) master

2 additional replica(s)

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[6827-10922] (4096 slots) master

1 additional replica(s)

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[12288-16383] (4096 slots) master

2 additional replica(s)

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

S: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

- Done. The scale-out succeeded.
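The slot ranges reported for 6390 in the check output above can be verified by hand: with `all` as the source, the reshard took roughly a third of the 4096 slots from each of the three existing Masters. A small sketch that adds up inclusive ranges (the helper name `count_slots` is made up for illustration):

```shell
#!/usr/bin/env bash
# Sum the sizes of inclusive slot ranges given as "start-end" arguments.
# count_slots is a hypothetical helper for checking the numbers above.
count_slots() {
  local total=0 range start end
  for range in "$@"; do
    start="${range%-*}"   # text before the dash
    end="${range#*-}"     # text after the dash
    total=$((total + end - start + 1))
  done
  echo "$total"
}

# Target number of slots per Master once there are 4 Masters.
echo "$((16384 / 4))"

# Ranges now owned by 6390, copied from the check output above.
count_slots 0-1364 5461-6826 10923-12287
```

Both commands print 4096: the three ranges contribute 1365, 1366, and 1365 slots.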

- Add the Slave nodes of 6390 (6391 and 6392).

- Replace xxxxx1 and xxxxx2 with the node ID of 6390 (the Master that the Slave nodes should join).

redis-cli --cluster add-node 192.168.184.132:6391 192.168.184.132:6390 --cluster-slave --cluster-master-id xxxxx1

# For example, adding 6391

redis-cli --cluster add-node 192.168.184.132:6391 192.168.184.132:6390 --cluster-slave --cluster-master-id a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

-----------------------------

redis-cli --cluster add-node 192.168.184.132:6392 192.168.184.132:6390 --cluster-slave --cluster-master-id xxxxx2

# For example, adding 6392

redis-cli --cluster add-node 192.168.184.132:6392 192.168.184.132:6390 --cluster-slave --cluster-master-id a25da2e5be0487b3109b23e807b2d4c9c23e5a1a
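The node ID passed to `--cluster-master-id` does not have to be copied by hand, since it is the first column of the `cluster nodes` output. The following is a sketch, assuming the line format shown earlier in this article (`<id> <ip>:<port>@<bus-port> <flags> ...`); `node_id_for_port` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Read `cluster nodes` output on stdin and print the ID of the node
# listening on the given port. node_id_for_port is a hypothetical helper.
node_id_for_port() {
  awk -v port="$1" '{
    split($2, addr, "@")            # "ip:port@busport" -> "ip:port"
    n = split(addr[1], parts, ":")  # the last part is the client port
    if (parts[n] == port) print $1
  }'
}

# Sample line copied from the `cluster nodes` output earlier in this section.
sample='af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383@16383 myself,master - 0 1651767587000 3 connected 10923-16383'
printf '%s\n' "$sample" | node_id_for_port 6383
```

Against a live cluster, the input would come from something like `redis-cli -p 6390 cluster nodes | node_id_for_port 6390`.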

- Check the cluster again.

root@aubin:/data# redis-cli --cluster check 192.168.184.132:6390

192.168.184.132:6390 (a25da2e5...) -> 1 keys | 4096 slots | 3 slaves.

192.168.184.132:6386 (29a79c9f...) -> 2 keys | 4096 slots | 2 slaves.

192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 4096 slots | 1 slaves.

192.168.184.132:6383 (af282884...) -> 2 keys | 4096 slots | 2 slaves.

[OK] 5 keys in 4 masters.

0.00 keys per slot on average.

>>> Performing Cluster Check (using node 192.168.184.132:6390)

M: a25da2e5be0487b3109b23e807b2d4c9c23e5a1a 192.168.184.132:6390

slots:[0-1364],[5461-6826],[10923-12287] (4096 slots) master

3 additional replica(s)

S: 5f34c1cd5a35e1195522695143941d044f1cdb96 192.168.184.132:6392

slots: (0 slots) slave

replicates a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

M: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots:[1365-5460] (4096 slots) master

2 additional replica(s)

S: a86979e7ff21ca39bad8da1ce63c551f2598c779 192.168.184.132:6391

slots: (0 slots) slave

replicates a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[6827-10922] (4096 slots) master

1 additional replica(s)

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[12288-16383] (4096 slots) master

2 additional replica(s)

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

S: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

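Scaling In Masters and Slaves

- The goal is to remove 6390 (Master) and 6391, 6392 (Slaves).

- Remove Slave node 6391. Replace xxx1 with the node ID of 6391 (the Redis node ID, not the Docker container ID).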

redis-cli --cluster del-node 192.168.184.132:6391 xxx1

# For example, removing Slave node 6391

root@aubin:/data# redis-cli --cluster del-node 192.168.184.132:6391 a86979e7ff21ca39bad8da1ce63c551f2598c779

>>> Removing node a86979e7ff21ca39bad8da1ce63c551f2598c779 from cluster 192.168.184.132:6391

>>> Sending CLUSTER FORGET messages to the cluster...

>>> Sending CLUSTER RESET SOFT to the deleted node.

- Remove Slave node 6392. Replace xxx2 with the node ID of 6392.

redis-cli --cluster del-node 192.168.184.132:6392 xxx2

# For example, removing Slave node 6392

root@aubin:/data# redis-cli --cluster del-node 192.168.184.132:6392 5f34c1cd5a35e1195522695143941d044f1cdb96

>>> Removing node 5f34c1cd5a35e1195522695143941d044f1cdb96 from cluster 192.168.184.132:6392

>>> Sending CLUSTER FORGET messages to the cluster...

>>> Sending CLUSTER RESET SOFT to the deleted node.

- Before removing Master node 6390, you must first move its slots to other Master nodes.

- xxxxxx1: the IP and port of any Master node; 6386 is used here.

redis-cli --cluster reshard xxxxxx1

# For example

redis-cli --cluster reshard 192.168.184.132:6386

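- Enter the number of slots to move. 6390 holds 4096 slots, so enter: 4096

- Next, enter the node ID of the Master that receives these slots. Here all 4096 slots go to 6383, so enter the node ID of 6383: af28288480e3398b18402e4475c9ae52c39ea776 (any of the remaining Master nodes would work)

- Then enter the node ID of the source node, that is, the Master being removed (6390): a25da2e5be0487b3109b23e807b2d4c9c23e5a1a

- Then enter: done

- Then enter: yes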

At this point all of 6390's slots have been moved to 6383, so node 6390 can now be removed. Here xxx1 is the node ID of 6390.

redis-cli --cluster del-node 192.168.184.132:6390 xxx1

# For example

redis-cli --cluster del-node 192.168.184.132:6390 a25da2e5be0487b3109b23e807b2d4c9c23e5a1a
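The answers typed at the reshard prompts before removing 6390 can also be passed as options, which is convenient for scripting. This is a sketch only, assuming a redis-cli version whose `reshard` subcommand supports `--cluster-from`, `--cluster-to`, `--cluster-slots`, and `--cluster-yes` (check `redis-cli --cluster help` on your version). The function name `reshard_cmd` is made up, and it only prints the command rather than running it.

```shell
#!/usr/bin/env bash
# Print a non-interactive reshard command.
# $1 = entry node (ip:port), $2 = source node ID,
# $3 = destination node ID, $4 = number of slots to move.
# reshard_cmd is a hypothetical helper name, not part of redis-cli.
reshard_cmd() {
  local entry="$1" from="$2" to="$3" slots="$4"
  printf 'redis-cli --cluster reshard %s --cluster-from %s --cluster-to %s --cluster-slots %s --cluster-yes\n' \
    "$entry" "$from" "$to" "$slots"
}

# Move all 4096 slots from 6390 to 6383, using 6386 as the entry point.
reshard_cmd 192.168.184.132:6386 \
  a25da2e5be0487b3109b23e807b2d4c9c23e5a1a \
  af28288480e3398b18402e4475c9ae52c39ea776 \
  4096
```

Printing first makes it easy to double-check the source and destination IDs, since swapping them would move slots in the wrong direction.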

- Check the cluster once more.

root@aubin:/data# redis-cli --cluster check 192.168.184.132:6383

192.168.184.132:6383 (af282884...) -> 3 keys | 8192 slots | 3 slaves.

192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 4096 slots | 1 slaves.

192.168.184.132:6386 (29a79c9f...) -> 2 keys | 4096 slots | 2 slaves.

[OK] 5 keys in 3 masters.

0.00 keys per slot on average.

>>> Performing Cluster Check (using node 192.168.184.132:6383)

M: af28288480e3398b18402e4475c9ae52c39ea776 192.168.184.132:6383

slots:[0-1364],[5461-6826],[10923-16383] (8192 slots) master

3 additional replica(s)

S: a11993ddc7a726e3ad476858fb53bdc97d4834b8 192.168.184.132:6384

slots: (0 slots) slave

replicates 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e

S: 888158ad30d9a173175b115974b9d1cc5b39736e 192.168.184.132:6381

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

S: 5b20d04646f7f38eb57266b81749e565825ab6e5 192.168.184.132:6387

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: c9d7f4ed642fa2fde013f2b060476b542b9af76e 192.168.184.132:6385

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

S: 92d06bfb428a1358149c209c336152cae3304c66 192.168.184.132:6388

slots: (0 slots) slave

replicates af28288480e3398b18402e4475c9ae52c39ea776

M: 1ff5a2a15526e3d7984b231cf7e47f8f442e4a9e 192.168.184.132:6382

slots:[6827-10922] (4096 slots) master

1 additional replica(s)

M: 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2 192.168.184.132:6386

slots:[1365-5460] (4096 slots) master

2 additional replica(s)

S: 9ef7a5bb156de64a969688eade8535399983dd00 192.168.184.132:6389

slots: (0 slots) slave

replicates 29a79c9f9a007bf80fdfc2ffaaf1cf27d29396c2

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

- The scale-in is complete.
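As a final sanity check, the per-Master slot counts in the summary lines should add up to 16384. The sketch below totals them from `redis-cli --cluster check` output, assuming the summary-line format shown above (`... | N slots | ...`); `total_slots` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Read `redis-cli --cluster check` output on stdin and print the sum of
# the slot counts found in the per-Master summary lines.
# total_slots is a hypothetical helper name.
total_slots() {
  awk -F'|' '/ slots [|]/ {
    split($2, f, " ")   # $2 looks like " 8192 slots "
    total += f[1]
  } END { print total + 0 }'
}

# Summary lines copied from the final check output above.
cat <<'EOF' | total_slots
192.168.184.132:6383 (af282884...) -> 3 keys | 8192 slots | 3 slaves.
192.168.184.132:6382 (1ff5a2a1...) -> 0 keys | 4096 slots | 1 slaves.
192.168.184.132:6386 (29a79c9f...) -> 2 keys | 4096 slots | 2 slaves.
EOF
```

For the three lines above this prints 16384 (8192 + 4096 + 4096), which matches the `[OK] All 16384 slots covered.` message.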

❤️ That's the end of this chapter. See you in the next one! ❤️