PyTorch in practice: MNIST handwritten digit recognition

Code implementation

Utility code

# File name: utils01

import torch
from matplotlib import pyplot as plt

# Plot every series in the list on one figure
def plot_curve(ls):
    fig = plt.figure()
    palette = plt.get_cmap('Set1')
    # Input format: ls = [{'key01': list01}, {'key02': list02}, ...]
    for idx, data in enumerate(ls):
        for key, value in data.items():
            plt.plot(range(len(value)), value, color=palette(idx), label=key)
    plt.legend()
    plt.xlabel('step')
    plt.ylabel('value')
    plt.show()

# Show the first six MNIST images of a batch
def plot_image(img, label, name):
    fig = plt.figure()
    for i in range(6):
        plt.subplot(2, 3, i+1)
        plt.tight_layout()
        # Undo the Normalize((0.1307,), (0.3081,)) transform before displaying
        plt.imshow(img[i][0]*0.3081+0.1307, cmap='gray', interpolation='none')
        plt.title("{}: {}".format(name, label[i].item()))
        plt.xticks([])
        plt.yticks([])
    plt.show()

# One-hot encoding
def one_hot(label, depth=10):
    out = torch.zeros(label.size(0), depth)
    # scatter_ needs an int64 index of shape [b, 1]
    idx = label.long().view(-1, 1)
    # Write a 1 into column label[i] of row i
    out.scatter_(dim=1, index=idx, value=1)
    return out
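To make the behaviour of the one-hot helper concrete, here is a minimal sketch that runs it on three labels and compares the result with PyTorch's built-in `F.one_hot`. The sample labels are made up for illustration, and the helper is repeated so that the snippet runs on its own.

```python
import torch
from torch.nn import functional as F


def one_hot(label, depth=10):
    # Same idea as the utility above: write a 1 into column label[i] of row i.
    out = torch.zeros(label.size(0), depth)
    idx = label.long().view(-1, 1)
    out.scatter_(dim=1, index=idx, value=1)
    return out


labels = torch.tensor([3, 0, 9])  # illustrative labels, not taken from MNIST
encoded = one_hot(labels)

# F.one_hot returns an integer tensor, so cast it to float before comparing.
builtin = F.one_hot(labels, num_classes=10).float()
same = torch.equal(encoded, builtin)

print(encoded.shape, same)
```

Each row of `encoded` contains exactly one 1, located at the index given by the corresponding label.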

MNIST test code

# File name: mnist_test

import numpy as np
import torch
from torch import nn
from torch.nn import functional as F
from torch import optim
import torchvision
from utils01 import plot_image, plot_curve, one_hot

batch_size = 512  # number of samples per training batch
total_correct = 0  # total number of correct predictions
loss_list = []  # loss values per optimizer, stored as dicts: {optimizer name: losses}

# Network model
class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # Fully connected layers (wx + b); there are 10 digit classes,
        # so the output dimension of fc3 is 10
        self.fc1 = nn.Linear(28 * 28, 256)
        self.fc2 = nn.Linear(256, 64)
        self.fc3 = nn.Linear(64, 10)

    def forward(self, x):
        x = F.relu(self.fc1(x))  # h1 = relu(x w1 + b1)
        x = F.relu(self.fc2(x))  # h2 = relu(h1 w2 + b2)
        x = self.fc3(x)  # h3 = h2 w3 + b3 (raw logits, no activation)
        return x

# Load the MNIST dataset; flag=True returns the (shuffled) training loader,
# flag=False returns the test loader
def get_loader(flag):
    return torch.utils.data.DataLoader(
        torchvision.datasets.MNIST(
            'mnist_data', train=flag, download=True,
            transform=torchvision.transforms.Compose([
                torchvision.transforms.ToTensor(),
                torchvision.transforms.Normalize((0.1307,), (0.3081,))
            ])),
        batch_size=batch_size, shuffle=flag)

# Training: loader is the training loader, epoch is the number of epochs,
# device is the GPU or CPU, and GD is the name of the optimizer.
# net and optimizer are the module-level objects created in the main block.
def train(loader, epoch, device, GD):
    train_loss = []  # loss values recorded during this run
    for i in range(epoch):
        for batch_idx, (x, y) in enumerate(loader):
            # Flatten x: [b, 1, 28, 28] => [b, 784]
            # size(0) is the batch dimension; to(device) moves the data to the GPU or CPU
            x = x.view(x.size(0), 28 * 28).to(device)
            out = net(x)
            # For an MSE loss, use one-hot targets instead:
            # y_onehot = one_hot(y).to(device)
            # loss = F.mse_loss(out, y_onehot)
            # cross_entropy takes the raw logits and integer class labels
            loss = F.cross_entropy(out, y.to(device))
            optimizer.zero_grad()  # clear the old gradients
            loss.backward()  # backpropagation
            optimizer.step()  # update the parameters
            # loss.item() converts the scalar tensor to a Python float
            train_loss.append(loss.item())
            # Log progress
            if batch_idx % 10 == 0:
                print('{} train epoch {}: [{} / {}]({:.2f}%) loss => {}'.format(
                    GD, i + 1, batch_idx * batch_size, len(loader.dataset),
                    (batch_idx * batch_size) / len(loader.dataset) * 100, loss.item()))
    loss_list.append({GD: train_loss})  # collect the losses of this optimizer
    return train_loss

# Testing
def test(loader, total_correct, device, GD):
    with torch.no_grad():  # gradients are not needed for evaluation
        for x, y in loader:
            x = x.view(x.size(0), 28 * 28).to(device)
            out = net(x)
            pred = out.argmax(dim=1)  # predicted class = index of the largest logit
            correct = pred.eq(y.to(device)).sum().float().item()
            total_correct += correct
    total_num = len(loader.dataset)
    acc = total_correct / total_num
    print('{} test acc: {:.2f}%'.format(GD, acc * 100))

# visdom test; install the library with: pip install visdom
def visdom_test(loss):
    from visdom import Visdom
    # Start the server in a terminal first: python -m visdom.server
    viz = Visdom()
    # Plot the training loss. This example plots the Adam losses; the losses of
    # the other optimizers can be read from loss in the same way.
    viz.line([0.], [0.], win='train_loss', opts=dict(title='train loss'))
    viz.line(np.array(loss[0]['Adam']), np.arange(len(loss[0]['Adam'])),
             win='train_loss', update='append')

if __name__ == '__main__':
    # Use CUDA if it is available
    if torch.cuda.is_available():
        device = torch.device('cuda:0')
    else:
        device = torch.device('cpu')

    # Compare 3 optimizers; more can be added
    for GD in ['Adam', 'SGD', 'Adagrad']:
        train_loader = get_loader(True)
        net = Net().to(device)  # a fresh model for each optimizer
        if GD == 'Adam':
            optimizer = optim.Adam(net.parameters(), lr=0.01)
        elif GD == 'SGD':
            optimizer = optim.SGD(net.parameters(), lr=0.01, momentum=0.9)
        elif GD == 'Adagrad':
            optimizer = optim.Adagrad(net.parameters(), lr=0.01)

        # To inspect train_loader, display some images with their labels:
        # x, y = next(iter(train_loader))
        # plot_image(x, y, 'image sample')

        # Train for 3 epochs on the training data, on the GPU or CPU given by
        # device, with the optimizer named by GD
        train(train_loader, 3, device, GD)
        test_loader = get_loader(False)
        test(test_loader, total_correct, device, GD)
        print('=' * 50)

    plot_curve(loss_list)

    # Uncomment the next line to test visdom
    # visdom_test(loss_list)
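The training loop applies a cross-entropy loss to the raw outputs of fc3. As a rough sketch of what that loss computes, the snippet below compares `F.cross_entropy` on integer labels with a manual calculation that uses one-hot targets and `log_softmax`. The random logits and the four labels are placeholders for illustration, not values from the MNIST run.

```python
import torch
from torch.nn import functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)        # stand-in for the network output, shape [b, 10]
y = torch.tensor([1, 7, 3, 9])     # integer class labels, shape [b]

# Built-in loss: takes raw logits and integer class indices.
loss_builtin = F.cross_entropy(logits, y)

# Manual version: one-hot targets times log-probabilities, averaged over the batch.
y_onehot = F.one_hot(y, num_classes=10).float()
log_probs = F.log_softmax(logits, dim=1)
loss_manual = -(y_onehot * log_probs).sum(dim=1).mean()

print(torch.allclose(loss_builtin, loss_manual))
```

Because `F.cross_entropy` applies `log_softmax` internally, the network's last layer should output logits without an extra softmax, which matches the forward pass above.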

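Note: a tensor stored on the CPU and a tensor stored on the GPU have different types (for example, torch.FloatTensor versus torch.cuda.FloatTensor), so the model, the inputs, and the labels must all be moved to the same device before they are used together.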
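The difference between CPU and GPU tensors can be checked directly. The following is a small sketch that falls back to the CPU when CUDA is not available, in which case both tensors report the same type.

```python
import torch

# Pick the GPU when available, otherwise stay on the CPU.
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

x = torch.zeros(2, 3)      # tensors are created on the CPU by default
x_dev = x.to(device)       # copy on the selected device

# type() reports 'torch.FloatTensor' for a CPU tensor and
# 'torch.cuda.FloatTensor' for a tensor on the GPU.
print(x.type(), x_dev.type())

# Operands must live on the same device, so create y on that device too.
y = torch.ones(2, 3, device=device)
total = (x_dev + y).sum().item()
print(total)
```

Mixing a CPU tensor with a GPU tensor in one operation raises a runtime error, which is why the listing calls `to(device)` on the model, the inputs, and the labels.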

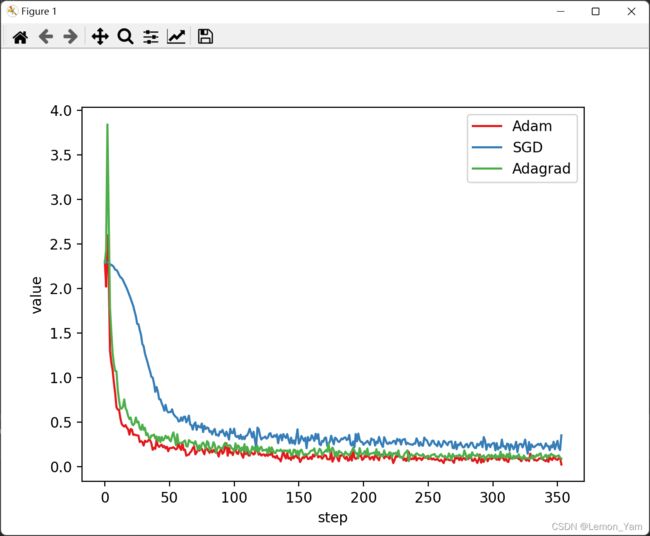

Test results

- Loss values after 3 epochs of training

- Adam loss values after 1 epoch of training, viewed in visdom