Softmax Loss and SoftTriple Loss

Contents

19-BMVC-Classification is a Strong Baseline for Deep Metric Learning

Softmax Loss

Layer Normalization

Class-balanced sampling

binary embeddings

19-ICCV-SoftTriple Loss: Deep Metric Learning Without Triplet Sampling

SoftTriple Loss

19-BMVC-Classification is a Strong Baseline for Deep Metric Learning

1) we establish that classification is a strong baseline for deep metric learning across different datasets, base feature networks and embedding dimensions,

2) we provide insights into the performance effects of binarization and subsampling classes for scalable extreme classification-based training,

3) we propose a classification-based approach to learn high-dimensional binary embeddings.

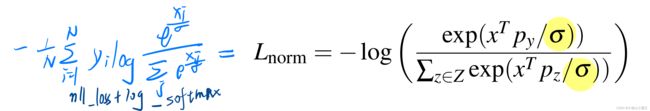

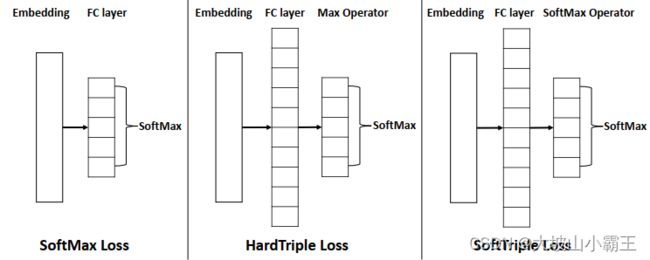

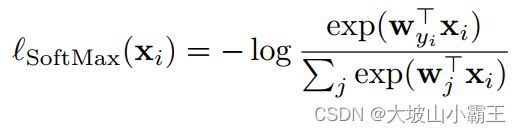

Softmax Loss

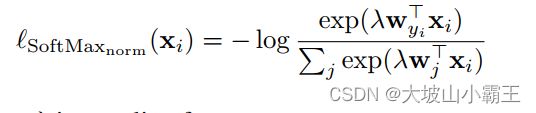

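When the class weights are treated as proxies and cosine distance is used as the metric, the normalized softmax loss fits the proxy paradigm:

- Remove the bias term from the last linear layer.

- L2-normalize both the input x and the weights p (because the score is a cosine similarity).

- Temperature scaling: a classic technique from probability calibration. Dividing the logits by a small temperature amplifies the differences between classes and improves accuracy.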
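
The three points above can be illustrated with a small pure-Python sketch. It is only a toy illustration, not the paper's implementation: the helper names (`l2_normalize`, `proxy_probabilities`) and the 2-D example vectors are made up for this note.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def softmax(logits):
    """Softmax with the usual max-subtraction for stability."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def proxy_probabilities(x, proxies, temperature):
    """Cosine similarity to each class proxy (no bias), then temperature-scaled softmax."""
    x = l2_normalize(x)
    sims = [sum(a * b for a, b in zip(x, l2_normalize(p))) for p in proxies]
    return softmax([s / temperature for s in sims])

# Hypothetical embedding and three hypothetical class proxies
x = [2.0, 1.0]
proxies = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

probs_t1 = proxy_probabilities(x, proxies, temperature=1.0)
probs_t005 = proxy_probabilities(x, proxies, temperature=0.05)
print(probs_t1)    # fairly flat: cosine similarities only span [-1, 1]
print(probs_t005)  # sharply peaked on the closest proxy
```

Because cosine similarities are confined to [-1, 1], the softmax without a temperature stays fairly flat; a small temperature such as 0.05 stretches the logits so the nearest proxy dominates.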

    import math

    import torch
    import torch.nn as nn
    from torch.nn import Parameter


    class NormSoftmaxLoss(nn.Module):
        """
        L2 normalize weights and apply temperature scaling on logits.
        """

        def __init__(self, dim, num_instances, temperature=0.05):
            super(NormSoftmaxLoss, self).__init__()
            # No bias term: the layer keeps only a weight matrix (one proxy per class)
            self.weight = Parameter(torch.Tensor(num_instances, dim))
            # Initialization from nn.Linear (https://github.com/pytorch/pytorch/blob/v1.0.0/torch/nn/modules/linear.py#L129)
            stdv = 1. / math.sqrt(self.weight.size(1))
            self.weight.data.uniform_(-stdv, stdv)
            self.temperature = temperature
            self.loss_fn = nn.CrossEntropyLoss()

        def forward(self, embeddings, instance_targets):
            # L2-normalize the weights; the embeddings are assumed to be L2-normalized by the caller
            norm_weight = nn.functional.normalize(self.weight, dim=1)
            # Cosine similarity between each embedding and each class proxy
            prediction_logits = nn.functional.linear(embeddings, norm_weight)
            # Temperature scaling before the cross-entropy loss
            loss = self.loss_fn(prediction_logits / self.temperature, instance_targets)
            return loss

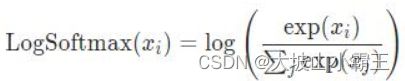

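CrossEntropyLoss = LogSoftmax + NLLLoss. Computing softmax requires exponentials, which can overflow and produce NaN. Cross-entropy takes the log of the softmax output anyway, so fusing the log and the softmax into a single operation avoids this problem.

NLLLoss: multiply the log-probabilities by the (one-hot) labels, negate, and take the mean.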
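
The decomposition above can be sketched in pure Python. This is an illustrative sketch of the log-sum-exp trick, not PyTorch's actual implementation, and all function names are made up for this note. In plain Python `math.exp` raises `OverflowError` on large inputs, whereas tensor libraries would typically return `inf` and then `nan`.

```python
import math

def naive_log_softmax(logits):
    """Softmax first, log afterwards: exp() overflows for large logits."""
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    return [math.log(e / total) for e in exps]

def log_softmax(logits):
    """Fused version: subtract the max before exponentiating (log-sum-exp trick)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum_exp for z in logits]

def nll_loss(log_probs_batch, targets):
    """Pick the log-probability of the target class, negate, and average."""
    picked = [row[t] for row, t in zip(log_probs_batch, targets)]
    return -sum(picked) / len(picked)

def cross_entropy(logits_batch, targets):
    """CrossEntropy = LogSoftmax followed by NLLLoss."""
    return nll_loss([log_softmax(row) for row in logits_batch], targets)

logits_batch = [[1000.0, 0.0, -1000.0], [0.0, 0.0, 0.0]]
targets = [0, 1]

try:
    naive_log_softmax(logits_batch[0])
    naive_failed = False
except OverflowError:
    naive_failed = True

loss = cross_entropy(logits_batch, targets)
print(naive_failed, loss)
```

The fused version never exponentiates a positive number, so it stays finite even for logits as large as 1000.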

The paper also mentions the Large Margin Cosine Loss (LMCL): "CosFace: Large Margin Cosine Loss for Deep Face Recognition", 2018, Hao Wang et al., Tencent AI Lab.

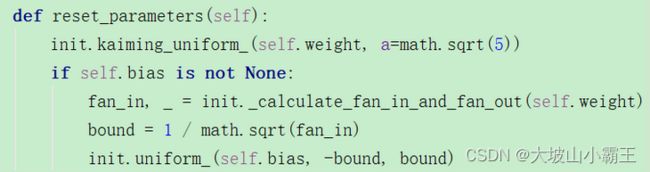

Layer Normalization

With layer normalization, the embedding values follow a distribution centered at zero. This:

- easily binarize embeddings via thresholding at zero.

- helps the network better initialize new parameters and reach better optima.

BatchNorm: normalizes each feature across the samples in a batch.

LayerNorm: works per sample, normalizing across all features of that sample. Reference: "The difference between BatchNorm and LayerNorm", DataAlgo's blog on CSDN.

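    # nn.LayerNorm takes the feature size as its first argument (normalized_shape);
    # elementwise_affine=False disables the learnable scale and shift
    self.standardize = nn.LayerNorm(2048, elementwise_affine=False)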
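
The difference between the two normalization axes, and why zero-centered features make thresholding at zero natural, can be seen in a toy pure-Python sketch. It ignores learnable affine parameters and running statistics; the function names and the tiny 2x4 batch are made up for this note.

```python
import math

def standardize(values, eps=1e-5):
    """Zero-mean, unit-variance scaling (biased variance, as normalization layers use)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]

def layer_norm(batch):
    """LayerNorm: each row (one sample) is normalized across its features."""
    return [standardize(row) for row in batch]

def batch_norm(batch):
    """BatchNorm: each column (one feature) is normalized across the samples."""
    columns = [standardize(list(col)) for col in zip(*batch)]
    return [list(row) for row in zip(*columns)]

# Hypothetical batch: 2 samples, 4 features
batch = [[1.0, 2.0, 3.0, 10.0],
         [4.0, 0.0, -2.0, 6.0]]

ln = layer_norm(batch)
bn = batch_norm(batch)

# After LayerNorm each sample is centered at zero, so thresholding at zero
# splits its features around the sample mean.
binary = [[1 if v > 0 else 0 for v in row] for row in ln]
print(binary)
```

After `layer_norm` every row has zero mean; after `batch_norm` every column does.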

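Class-balanced sampling

Each batch samples c classes, and s samples from each of those classes.

This mitigates the problem that the loss is bounded by the worst-approximated examples within a class (17-ICCV-No Fuss Distance Metric Learning using Proxies).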
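
A minimal sketch of the c-classes-by-s-samples scheme is shown below. It is not the sampler from the paper's repository; the function name, its parameters, and the rule that only classes with at least s samples are eligible are assumptions made for illustration.

```python
import random
from collections import defaultdict

def class_balanced_batch(labels, num_classes, samples_per_class, rng=random):
    """Return dataset indices for one batch: c classes, s samples from each."""
    indices_by_class = defaultdict(list)
    for index, label in enumerate(labels):
        indices_by_class[label].append(index)
    # Assumption: classes with fewer than s samples are skipped
    eligible = [c for c, idx in indices_by_class.items()
                if len(idx) >= samples_per_class]
    chosen_classes = rng.sample(eligible, num_classes)
    batch = []
    for c in chosen_classes:
        # Sample s distinct examples of this class, without replacement
        batch.extend(rng.sample(indices_by_class[c], samples_per_class))
    return batch

# Hypothetical dataset: 4 classes with 3 samples each
labels = [0, 0, 0, 1, 1, 1, 2, 2, 2, 3, 3, 3]
batch = class_balanced_batch(labels, num_classes=2, samples_per_class=3,
                             rng=random.Random(0))
print([labels[i] for i in batch])
```

Every batch then contains exactly c × s indices, with each chosen class represented s times.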

Subsampling: train on a sampled subset of the classes instead of all of them.

binary embeddings

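Binarized embeddings.

Hamming distance: no inner products need to be computed, which lowers the computational cost.

It is the number of 1 bits left after XOR-ing two binary codes. For example, the Hamming distance between 1011101 and 1001001 (both base 2) is 2. For binary vectors it equals the squared Euclidean distance, so both measures produce the same nearest-neighbor ranking.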
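
The example above can be checked with a few lines of Python. The helper names are made up for this note; the two codes are the ones from the example.

```python
def hamming_distance(a, b):
    """Number of differing bits: XOR, then count the 1 bits."""
    return bin(a ^ b).count("1")

def to_bits(value, width):
    """Integer code -> list of 0/1 values, most significant bit first."""
    return [(value >> i) & 1 for i in reversed(range(width))]

def squared_euclidean(u, v):
    """Sum of squared coordinate differences."""
    return sum((x - y) ** 2 for x, y in zip(u, v))

a, b = 0b1011101, 0b1001001
d_hamming = hamming_distance(a, b)
d_squared = squared_euclidean(to_bits(a, 7), to_bits(b, 7))
print(d_hamming, d_squared)
```

Each differing bit contributes exactly 1 to both measures, which is why a Euclidean nearest-neighbor search over 0/1 vectors returns the same ranking as a Hamming search.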

    import numpy as np

    # Excerpt: query_embeddings, db_embeddings, k, gpu_id and
    # _retrieve_knn_faiss_gpu_euclidean come from the repository linked below.
    # Binarize by thresholding at zero
    binary_query_embeddings = np.require(query_embeddings > 0, dtype='float32')
    binary_db_embeddings = np.require(db_embeddings > 0, dtype='float32')

    # knn retrieval from embeddings (binary embeddings + euclidean = hamming distance)
    dists, retrieved_result_indices = _retrieve_knn_faiss_gpu_euclidean(
        binary_query_embeddings, binary_db_embeddings, k, gpu_id=gpu_id)

Code: GitHub - azgo14/classification_metric_learning

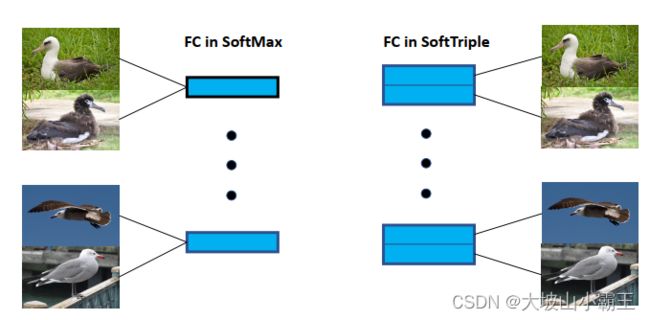

19-ICCV-SoftTriple Loss: Deep Metric Learning Without Triplet Sampling

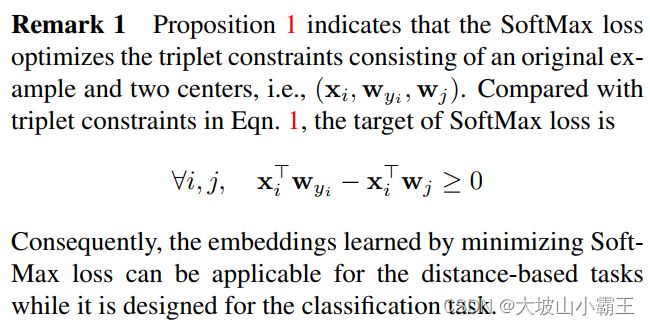

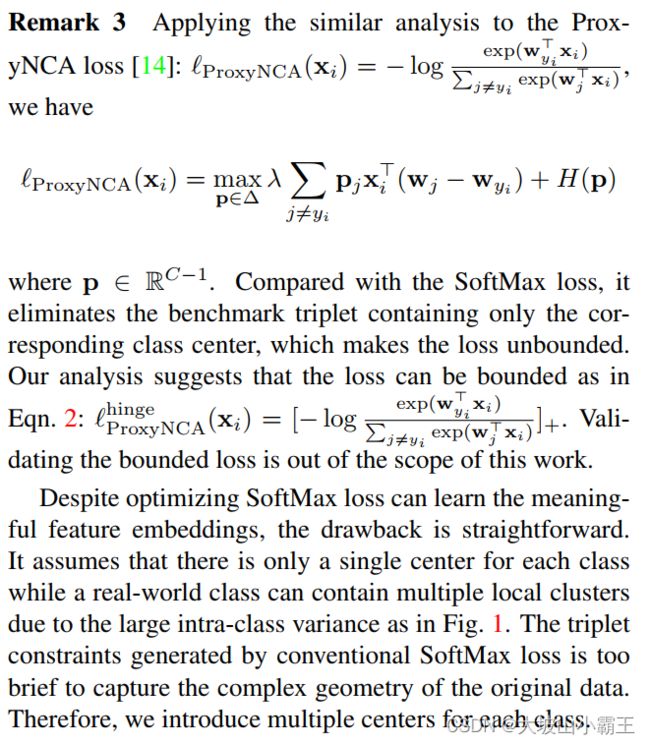

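1) SoftMax loss is equivalent to a smoothed triplet loss where each class has a single center.

In practice a class has more than one center; for example, birds appear in many poses (a fine-grained perspective). The SoftMax loss is therefore extended so that each class has multiple centers.

2) Learn the embeddings without the sampling phase by mildly increasing the size of the last fully connected layer. No triplet sampling is needed.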

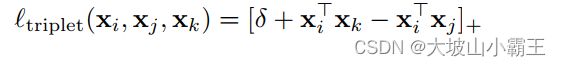

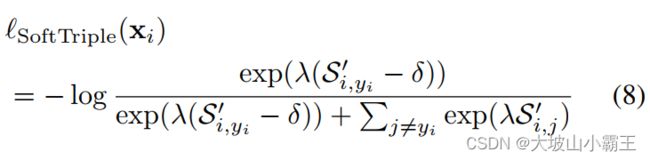

SoftTriple Loss

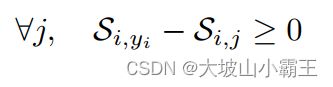

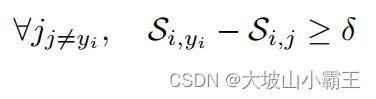

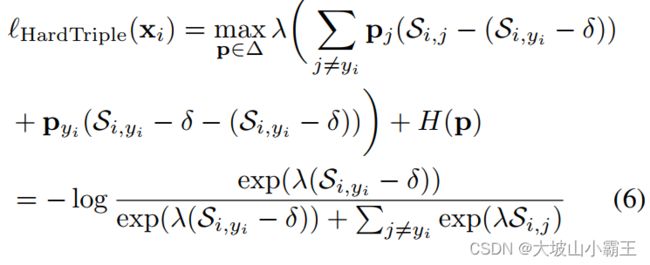

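Minimizing the normalized SoftMax loss with the smoothing term λ is equivalent to optimizing a smoothed triplet loss.

(Open question: what exactly do the proofs and derivations that follow establish?)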

Multiple Centers: each class c has K centers.

Inspired by the SoftMax loss, improve the robustness by smoothing the max operator.

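Originally, the maximum is selected directly.

Now all values are combined in a weighted sum. To keep that sum as large as possible, a larger value must receive a larger weight q.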
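
The relaxation from a hard max to a weighted sum can be sketched in pure Python. It follows the same pattern as the `prob` and `simClass` lines of the SoftTriple code in this post (softmax over a class's centers, then a weighted sum), but the function names and the example similarities are made up for this note.

```python
import math

def softmax(values):
    """Softmax with max-subtraction for stability."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def smoothed_max(similarities, gamma):
    """Weighted sum of the similarities, with weights q = softmax(similarities / gamma)."""
    weights = softmax([s / gamma for s in similarities])
    return sum(q * s for q, s in zip(weights, similarities)), weights

# Hypothetical similarities between one sample and the K = 3 centers of one class
sims = [0.9, 0.7, 0.1]
relaxed, q = smoothed_max(sims, gamma=0.1)
sharper, _ = smoothed_max(sims, gamma=0.01)
print(relaxed, q)   # below max(sims); the largest similarity gets the largest weight
print(sharper)      # approaches max(sims) as gamma shrinks
```

A smaller `gamma` pushes the weights toward a one-hot choice of the closest center, recovering the hard max in the limit.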

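The more centers a class has, the smaller the intra-class variance. When the number of centers equals the number of samples, the intra-class variance is 0.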

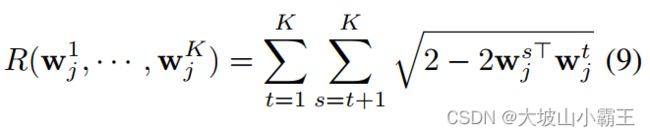

Adaptive Number of Centers: based on the distance from each center to the other centers of the same class.

With N samples in total, the distances between centers are minimized; when a distance reaches 0, the two centers merge.
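
A pure-Python sketch of this center regularizer is given below. It mirrors the pair selection and the normalization constant of the `reg` term in the SoftTriple code in this post, but it is only an illustration: the function names and example centers are made up, and it clamps at zero instead of adding the small 1e-5 constant that the code places inside the square root.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def center_regularizer(centers_by_class):
    """centers_by_class[c][k] is the k-th center of class c (all classes have K centers)."""
    num_classes = len(centers_by_class)
    num_centers = len(centers_by_class[0])
    total = 0.0
    for centers in centers_by_class:
        unit = [l2_normalize(w) for w in centers]
        # Each unordered pair of centers within the same class is counted once
        for s in range(num_centers):
            for t in range(s + 1, num_centers):
                cosine = sum(a * b for a, b in zip(unit[s], unit[t]))
                # For unit vectors, ||w_s - w_t|| = sqrt(2 - 2 * cosine)
                total += math.sqrt(max(0.0, 2.0 - 2.0 * cosine))
    return total / (num_classes * num_centers * (num_centers - 1))

# One class with K = 2 centers: identical centers versus orthogonal centers
merged = [[[1.0, 0.0], [1.0, 0.0]]]
spread = [[[1.0, 0.0], [0.0, 1.0]]]
reg_merged = center_regularizer(merged)
reg_spread = center_regularizer(spread)
print(reg_merged, reg_spread)
```

Identical centers contribute nothing to the penalty, so minimizing it pulls redundant centers of a class together until they effectively merge.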

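    import math

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    from torch.nn import init
    from torch.nn.parameter import Parameter


    class SoftTriple(nn.Module):
        def __init__(self, la, gamma, tau, margin, dim, cN, K):
            super(SoftTriple, self).__init__()
            self.la = la
            self.gamma = 1./gamma
            self.tau = tau
            self.margin = margin
            self.cN = cN  # number of classes
            self.K = K  # number of centers per class
            self.fc = Parameter(torch.Tensor(dim, cN*K))
            # Boolean mask selecting each pair of centers within the same class once
            self.weight = torch.zeros(cN*K, cN*K, dtype=torch.bool).cuda()
            for i in range(0, cN):
                for j in range(0, K):
                    self.weight[i*K+j, i*K+j+1:(i+1)*K] = 1
            init.kaiming_uniform_(self.fc, a=math.sqrt(5))
            return

        def forward(self, input, target):
            centers = F.normalize(self.fc, p=2, dim=0)
            simInd = input.matmul(centers)
            simStruc = simInd.reshape(-1, self.cN, self.K)
            # Softmax over the K centers of each class, then a weighted sum (smoothed max)
            prob = F.softmax(simStruc*self.gamma, dim=2)
            simClass = torch.sum(prob*simStruc, dim=2)
            # The margin is nonzero only at each sample's target class
            marginM = torch.zeros(simClass.shape).cuda()
            marginM[torch.arange(0, marginM.shape[0]), target] = self.margin
            lossClassify = F.cross_entropy(self.la*(simClass-marginM), target)
            if self.tau > 0 and self.K > 1:
                # Regularizer: distances between centers of the same class
                simCenter = centers.t().matmul(centers)
                reg = torch.sum(torch.sqrt(2.0+1e-5-2.*simCenter[self.weight]))/(self.cN*self.K*(self.K-1.))
                return lossClassify+self.tau*reg
            else:
                return lossClassify

Question: does the code also subtract marginM from S_ij? Note that marginM is nonzero only in each sample's target-class column, so the margin is subtracted only from the target-class similarity.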

Code: GitHub - idstcv/SoftTriple: PyTorch Implementation for SoftTriple Loss