Introduction to edata-base, a secondary-development project built on Spark

Contents

- Introduction
- Project overview
  - The POM in edata-base
  - Using edata-base-component in a custom project
  - Module layout of edata-base-component
- Using the project

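Introduction

edata-base is a Spark-based library for secondary development of big data applications. It wraps the usage of Spark together with other common middleware, which makes Spark-based development simpler.

Source repository: https://gitee.com/alan-sword/edata-base

Project overview

The POM in edata-base

edata-base pins the versions of the Spark API and of the other middleware APIs. A custom Spark project can reference it as needed, and edata-base-component uses it as its parent project.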

<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.edata.bigdata</groupId>
  <artifactId>edata-base</artifactId>
  <packaging>pom</packaging>
  <version>1.0-SNAPSHOT</version>
  <properties>
    <java.version>1.8</java.version>
    <scala.version>2.11</scala.version>
    <scala.binary.version>2.11</scala.binary.version>
    <spark.version>2.4.3</spark.version>
    <hadoop.version>3.1.2</hadoop.version>
    <posgresql.version>42.1.1</posgresql.version>
    <nebula.version>2.6.1</nebula.version>
    <flink.version>1.14.3</flink.version>
    <zookeeper.version>3.6.1</zookeeper.version>
  </properties>
  <modules>
    <module>edata-base-component</module>
    <module>edata-bigdata-test</module>
  </modules>
  <dependencies>
    <dependency>
      <groupId>org.postgresql</groupId>
      <artifactId>postgresql</artifactId>
      <version>42.3.1</version>
    </dependency>
    <dependency>
      <groupId>org.mongodb.spark</groupId>
      <artifactId>mongo-spark-connector_${scala.version}</artifactId>
      <version>2.4.3</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-core_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming-kafka-0-10_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-sql_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-hive_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-mllib_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.zookeeper</groupId>
      <artifactId>zookeeper</artifactId>
      <version>${zookeeper.version}</version>
    </dependency>
  </dependencies>
  <build>
    <plugins>
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-compiler-plugin</artifactId>
        <configuration>
          <source>${java.version}</source>
          <target>${java.version}</target>
          <encoding>UTF-8</encoding>
          <showWarnings>true</showWarnings>
        </configuration>
      </plugin>
      <plugin>
        <groupId>org.scala-tools</groupId>
        <artifactId>maven-scala-plugin</artifactId>
        <version>2.15.2</version>
        <executions>
          <execution>
            <goals>
              <goal>compile</goal>
              <goal>testCompile</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
    </plugins>
  </build>
</project>

Using edata-base-component in a custom project

Let us first take a rough look at how the custom project edata-bigdata-test uses edata-base-component, starting with its POM file:

<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <parent>
    <artifactId>edata-base</artifactId>
    <groupId>com.edata.bigdata</groupId>
    <version>1.0-SNAPSHOT</version>
  </parent>
  <artifactId>edata-bigdata-test</artifactId>
  <modelVersion>4.0.0</modelVersion>
  <dependencies>
    <dependency>
      <groupId>com.edata.bigdata</groupId>
      <artifactId>edata-base-component</artifactId>
      <version>1.0-SNAPSHOT</version>
    </dependency>
  </dependencies>
</project>

As shown above, edata-bigdata-test declares a dependency on the edata-base-component package. The library can then be used as in the following code.

package com.edata.bigdata.viewmain

import com.edata.bigdata.bean.MyClass
import com.edata.bigdata.mongo.{SparkMongoConnector, SparkMongoImpl}

object testing {
  def main(args: Array[String]): Unit = {
    // Create the connector
    val connector: SparkMongoConnector = new SparkMongoConnector()
    connector.appname = "SparkMongoTesting"
    connector.master = "local[*]"
    connector.ipport = "192.168.36.141:27017"
    connector.database = "spark"
    connector.collection = "collection"
    connector.username = "admin"
    connector.password = "123456"
    // Alternatively, set the full URI directly:
    // connector.uri = "mongodb://admin:123456@192.168.36.141:27017/spark.collection?authSource=admin"

    // Create the Spark-MongoDB data access instance and assign the connector to it
    val smi = new SparkMongoImpl[MyClass]
    smi.connector = connector

    // Read data through the connector, inspect the first record, then save the data
    val data = smi.find()
    data.first()
    smi.save(data)
  }
}

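A custom project that uses edata-base-component only needs to do two things:

(1) Create a connector instance between Spark and the target middleware.

(2) Create an interface instance between Spark and that middleware, passing in a user-defined case class as the type parameter (MyClass in the code above), and assign the connector instance to the interface instance.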
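The two steps can be sketched without Spark as follows. This is only an illustration of the pattern, not the library's implementation: `DemoMongoConnector`, `DemoMongoImpl`, and `describe` are hypothetical stand-ins, and the assumption that a connector assembles its URI from the individual fields (with `authSource=admin`, as in the commented-out line of the example) is a guess based on the general MongoDB connection-string format.

```scala
import java.net.URLEncoder

// Hypothetical stand-in for a connector: plain mutable fields, as in the example above.
class DemoMongoConnector {
  var ipport: String = "localhost:27017"
  var database: String = ""
  var collection: String = ""
  var username: String = ""
  var password: String = ""

  // Percent-encode credentials so characters such as '@' or ':' cannot break the URI.
  private def enc(s: String): String =
    URLEncoder.encode(s, "UTF-8").replace("+", "%20")

  // Assemble mongodb://user:password@host:port/database.collection?authSource=admin.
  // Credentials and the authSource option are omitted when no username is set.
  def uri: String = {
    val credentials = if (username.isEmpty) "" else s"${enc(username)}:${enc(password)}@"
    val options = if (username.isEmpty) "" else "?authSource=admin"
    s"mongodb://$credentials$ipport/$database.$collection$options"
  }
}

// Hypothetical stand-in for an interface instance: it only holds the connector
// and reports which URI it would use.
class DemoMongoImpl[T] {
  var connector: DemoMongoConnector = _
  def describe(): String = s"reading from ${connector.uri}"
}

object ConnectorSketch {
  def main(args: Array[String]): Unit = {
    // Step 1: create and configure the connector.
    val connector = new DemoMongoConnector
    connector.ipport = "192.168.36.141:27017"
    connector.database = "spark"
    connector.collection = "collection"
    connector.username = "admin"
    connector.password = "123456"

    // Step 2: create the interface instance and hand it the connector.
    val impl = new DemoMongoImpl[String]
    impl.connector = connector
    println(impl.describe())
  }
}
```

Keeping all connection details in the connector means the interface instance stays free of configuration, so the same connector settings could in principle be reused across several interface instances.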

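Module layout of edata-base-component

As shown in the figure below:

edata-base-component is divided into modules according to how Spark interacts with each mainstream middleware, for example hdfs, mongodb, and postgresql. It also contains utility classes and reflection-based conversion for user-defined case classes, so that Spark programs built on edata-base-component can automatically convert a user-defined case class into the schema of a DataFrame.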
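To give a feel for what reflection-based conversion of a case class involves, here is a minimal Spark-free sketch that lists the field names and JVM types of a case class. `Person`, `SchemaSketch`, and `describe` are hypothetical names, and this is not the code used by edata-base-component. Spark itself offers a comparable derivation through `Encoders.product[T].schema`; whether the library relies on it is not stated in the source.

```scala
import java.lang.reflect.Modifier

// Hypothetical user-defined case class, standing in for MyClass.
case class Person(id: Long, name: String, score: Double)

object SchemaSketch {
  // Derive (fieldName, typeName) pairs from a class via Java reflection.
  // Synthetic and static fields are skipped so only constructor fields remain.
  // Note: the JVM does not formally guarantee the order of getDeclaredFields.
  def describe(cls: Class[_]): Seq[(String, String)] =
    cls.getDeclaredFields.toSeq
      .filterNot(f => f.isSynthetic || Modifier.isStatic(f.getModifiers))
      .map(f => (f.getName, f.getType.getSimpleName))

  def main(args: Array[String]): Unit =
    describe(classOf[Person]).foreach { case (name, tpe) => println(s"$name: $tpe") }
}
```

A real converter would additionally map each JVM type to a Spark SQL data type and handle nested or optional fields, which this sketch leaves out.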

Using the project

Package the edata-base-component project as a JAR and reference it from your own custom project. The POM file is as follows:

<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <parent>
    <artifactId>edata-base</artifactId>
    <groupId>com.edata.bigdata</groupId>
    <version>1.0-SNAPSHOT</version>
  </parent>
  <artifactId>edata-bigdata-test</artifactId>
  <modelVersion>4.0.0</modelVersion>
  <dependencies>
    <dependency>
      <groupId>com.edata.bigdata</groupId>
      <artifactId>edata-base-component</artifactId>
      <version>1.0-SNAPSHOT</version>
    </dependency>
  </dependencies>
</project>

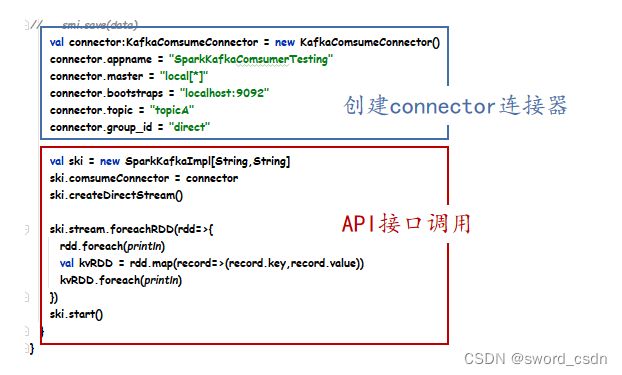

Overall, code built on edata-base-component falls into two parts: creating a connector, and calling the API.

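// Create and configure the Kafka consumer connector
val connector: KafkaComsumeConnector = new KafkaComsumeConnector()
connector.appname = "SparkKafkaComsumerTesting"
connector.master = "local[*]"
connector.bootstraps = "localhost:9092"
connector.topic = "topicA"
connector.group_id = "direct"

// Create the Spark-Kafka interface instance and assign the connector to it
val ski = new SparkKafkaImpl[String, String]
ski.comsumeConnector = connector

// Create the direct stream and process each micro-batch
ski.createDirectStream()
ski.stream.foreachRDD(rdd => {
  // Print the raw records
  rdd.foreach(println)
  // Extract (key, value) pairs from the records and print them
  val kvRDD = rdd.map(record => (record.key, record.value))
  kvRDD.foreach(println)
})

// Start the streaming job
ski.start()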
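For readers wondering how the connector fields might relate to Kafka itself, the sketch below maps them to standard Kafka consumer configuration keys. `KafkaParamsSketch` and `consumerParams` are hypothetical names, and the offset-reset and auto-commit values are illustrative choices. In plain Spark Streaming a map like this is what gets passed to `KafkaUtils.createDirectStream`; that `SparkKafkaImpl` does the same internally is an assumption.

```scala
object KafkaParamsSketch {
  // Kafka accepts deserializers as fully qualified class names.
  private val StringDeserializer =
    "org.apache.kafka.common.serialization.StringDeserializer"

  // Map connector-style fields to standard Kafka consumer configuration keys.
  def consumerParams(bootstraps: String, groupId: String): Map[String, Object] =
    Map[String, Object](
      "bootstrap.servers" -> bootstraps,
      "group.id" -> groupId,
      "key.deserializer" -> StringDeserializer,
      "value.deserializer" -> StringDeserializer,
      // Illustrative choices, not taken from the source:
      "auto.offset.reset" -> "latest",
      "enable.auto.commit" -> java.lang.Boolean.FALSE
    )

  def main(args: Array[String]): Unit = {
    // Same values as the connector in the example above.
    val params = consumerParams("localhost:9092", "direct")
    params.foreach { case (key, value) => println(s"$key = $value") }
  }
}
```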