- How to use some uni-app methods in plain HTML

某公司摸鱼前端

html uni-app frontend

Sample code: `console.log(window);` Result: now you can call the relevant methods through `uni.`

- python os.environ

江湖偌大

python deep-learning

os.environ['TF_CPP_MIN_LOG_LEVEL']='0' # default, print all messages

os.environ['TF_CPP_MIN_LOG_LEVEL']='1' # hide INFO messages

os.environ['TF_CPP_MIN_LOG_LEVEL']='2' # hide INFO and WARNING messages

os.environ['TF_CPP_MIN_LOG_LEVEL']='
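The three levels listed above can be wrapped in a small helper. This is only a sketch: `set_tf_log_level` and `LOG_LEVELS` are hypothetical names introduced for illustration, and the TensorFlow import is left commented out so the block runs without it. The variable generally needs to be set before TensorFlow is imported, and the value must be a string.

```python
import os

# Levels as described in the snippet above (values are strings, not ints).
LOG_LEVELS = {
    "0": "show all messages (default)",
    "1": "hide INFO",
    "2": "hide INFO and WARNING",
}


def set_tf_log_level(level: str) -> None:
    """Set TF_CPP_MIN_LOG_LEVEL; call this before importing TensorFlow."""
    if level not in LOG_LEVELS:
        raise ValueError(f"unsupported level: {level!r}")
    os.environ["TF_CPP_MIN_LOG_LEVEL"] = level


set_tf_log_level("2")
# import tensorflow as tf  # import only after the variable has been set
```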

- python os.environ: reading and setting environment variables

weixin_39605414

python os.environ

>>> import os

>>> os.environ.keys()

['LC_NUMERIC', 'GOPATH', 'GOROOT', 'GOBIN', 'LESSOPEN', 'SSH_CLIENT', 'LOGNAME', 'USER', 'HOME', 'LC_PAPER', 'PATH', 'DISPLAY', 'LANG', 'TERM', 'SHELL', 'J2REDIR', 'LC_MONETARY', 'QT_QPA
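A minimal sketch of reading and setting variables through `os.environ` follows. `DEMO_APP_MODE` and `DEMO_NOT_SET` are made-up variable names used only for this example. Note that the list output shown above is Python 2 behavior; on Python 3, `keys()` returns a view object rather than a list.

```python
import os

# Set a variable (values must be strings).
os.environ["DEMO_APP_MODE"] = "debug"

# Read it back; .get() returns None instead of raising KeyError when missing.
mode = os.environ.get("DEMO_APP_MODE")

# Read with a fallback for a variable that is assumed not to exist.
missing = os.environ.get("DEMO_NOT_SET", "fallback")

# List variable names; wrap in sorted()/list() to get a real list on Python 3.
names = sorted(os.environ.keys())

# Remove the variable again so the example leaves no trace.
removed = os.environ.pop("DEMO_APP_MODE", None)
```

Changes made this way affect the current process and any child processes it starts afterwards, not the parent shell.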

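- log4j configuration

yy爱yy

# log4j.rootLogger controls the output of log messages at or above the configured level # Usage of log4j.rootLogger (note: there may be one or more appenderNames) # log4j.rootLogger=level,appenderName1,appenderName2,.... # log4j.appender.appenderName2 defines how the log is output. There are two ways: one is command-line output, also called console output, the other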

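- MySQL Interview Series 04

king01299

interview mysql interview

MySQL Interview Series 04. 17. How are the redo log and binlog flushed to disk? What do the redo log and undo log do? What is GTID for? What do the innodb_flush_log_at_trx_commit and sync_binlog parameters mean ("double 1")? 17.1 innodb_flush_log_at_trx_commit: this variable defines how InnoDB handles redo log data that has not yet been flushed each time a transaction commits. It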

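- MongoDB Oplog Window

喝醉酒的小白

MongoDB operations

In MongoDB, the oplog (operations log) is a special log that records every write operation against the database. It lets replica set members (usually secondaries) apply the operations already executed on the primary and so keep their data consistent. It is the foundation of data replication in MongoDB replica sets. MongoDB oplog window: the oplog window is the time range within which a secondary in a replica set can still sync data. The window is usually determined by the following factors: Oplog size: the size of the oplog is limit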
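As a rough illustration of how oplog size relates to the window, the estimate below divides the oplog size by an average write rate. This is a back-of-the-envelope sketch, not how MongoDB itself reports the window; the function name and the sample numbers are assumptions made for this example.

```python
def estimate_oplog_window_hours(oplog_size_bytes: int,
                                write_bytes_per_hour: float) -> float:
    """Approximate how many hours of writes fit into the oplog."""
    if write_bytes_per_hour <= 0:
        raise ValueError("write_bytes_per_hour must be positive")
    return oplog_size_bytes / write_bytes_per_hour


# Hypothetical numbers: a 10 GiB oplog with 512 MiB of oplog entries per hour.
window = estimate_oplog_window_hours(10 * 1024**3, 512 * 1024**2)
print(f"estimated oplog window: {window:.1f} hours")
```

A larger oplog or a lower write rate widens the window, which gives a lagging secondary more time to catch up before it needs a full resync.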

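- ARM driver study: basic background knowledge

JT灬新一

ARM embedded arm-development learning

ARM driver study: basic background knowledge • What a schematic (sch) engineer does – solution design – component selection – procurement (availability, price) – schematics (affects stability) • Layout (board) engineer – layout (footprints, placement, routing, log) (affects stability) – part of the soldering work (soldering boards during debugging) • Driver engineer – sits at the intersection of drivers, schematics, and layout, where conflicts tend to arise • Overview of the PCB development flow – solution design, schematic (netlist) – layout engineer (Gerber files) – PCB board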

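- [Untitled] 达瓦达瓦

JhonKI

postgraduate-exam

Blog home: https://blog.csdn.net/2301_779549673 Likes, bookmarks ⭐, and comments are welcome. Please point out any errors! This article is original work by JohnKi, first published on CSDN. The future is long and worth giving our all for a better life ✨ Contents: Preface, Summary. Preface: just farming traffic coupons, hehe. Summary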

- [Huawei OD technical interview questions - technical round] - Python standard interview question bank (1)

算法大师

Huawei-OD interview python

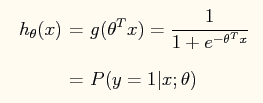

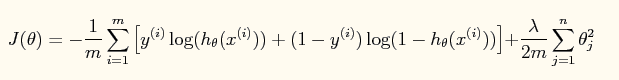

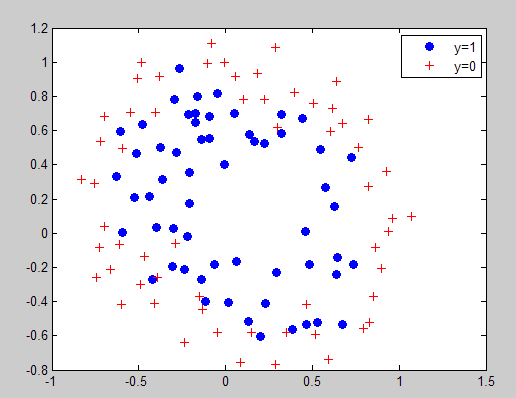

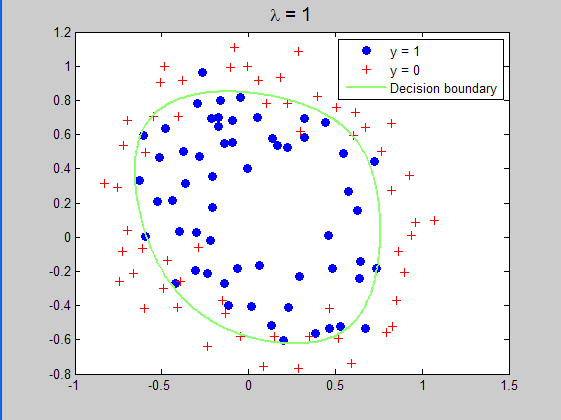

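Huawei OD Selected Interview Questions column: Huawei OD Selected Interview Questions. Index: 2024 Huawei OD live-coding interview questions and standard knowledge questions. Contents: Huawei OD Selected Interview Questions 1. Data preprocessing workflow: main steps of data preprocessing; tools and libraries 2. Introduce the linear regression and logistic regression models: Linear Regression: model form, key points; Logistic Regression: model form, key points; parameter estimation and evaluation 3. Python shallow copy and deep copy: shallow copy (Shal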

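- The difference between deep copy and shallow copy in Python

yuxiaoyu.

Reposted from: http://blog.csdn.net/u014745194/article/details/70271868 Definition: in Python, assigning an object really just creates a reference to it. When you create an object and assign it to another variable, Python does not copy the object, it only copies the reference. Shallow copy: copies the outermost object itself, while the elements inside are copied only as references. In other words, the object is duplicated, but the other objects it refers to are not dupl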
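The difference between assignment, shallow copy, and deep copy can be seen with the standard `copy` module. The variable names below are chosen for this example only.

```python
import copy

original = [1, [2, 3]]

alias = original                  # assignment: a second name for the same object
shallow = copy.copy(original)     # new outer list, inner list is shared
deep = copy.deepcopy(original)    # new outer list and new inner list

original[1].append(4)   # mutate the shared inner list
original.append(5)      # mutate only the outer list

print(alias)    # follows every change, because it is the same object
print(shallow)  # sees the inner change, but not the appended 5
print(deep)     # sees neither change
```

The shallow copy picks up the change to the nested list because only the reference to that list was copied.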

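- ExpRe[25] Shells other than bash: zsh and fish

tritone

ExpRe bash linux ubuntu shell

Contents: zsh: basic configuration, useful features, plugins, `autojump`, syntax highlighting, auto-completion; fish: pros, cons. Timeliness: this post was written on 2021.12.15. Because computing technology changes quickly, everything in this blog is bound to a time and a version: specific steps may no longer work, links may have changed or died, and the usage of the software may have changed. The ideas behind them, however, are often still worth learning. Prerequisite for this post: ExpRe[10] Ubuntu[2] Preparing mysterious software, backup and restore software https://www.cnblogs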

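- (179) Timing Closure ---> (29) Timing Closure 29

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 29 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)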

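- (180) Timing Closure ---> (30) Timing Closure 30

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 30 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)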

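- (158) Timing Closure ---> (08) Timing Closure 8

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 8 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)F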

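- (159) Timing Closure ---> (09) Timing Closure 9

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 9 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)F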

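- (160) Timing Closure ---> (10) Timing Closure 10

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 10 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)F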

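- (153) Timing Closure ---> (03) Timing Closure 3

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 3 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)F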

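- (121) DAC Interface ---> (006) Implementing the DAC8811 interface on an FPGA

FPGA系统设计指南针

FPGA interface development (hands-on projects) fpga-development FPGA IC

1 Contents (a) FPGA overview (b) IC overview (c) Verilog overview (d) Implementing the DAC8811 interface on an FPGA (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate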

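- FPGA Reset Series --- (3) Power-on reset?

FPGA系统设计指南针

FPGA system design (internal training) fpga-development

(3) Power-on reset? 1 Contents (a) FPGA overview (b) Verilog overview (c) Reset overview (d) Power-on reset? (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count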

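- How does Spring integrate the Druid connection pool?

惜.己

spring spring junit database java idea backend xml

Contents: Integrating the Druid connection pool with Spring 1. Create a Maven project 2. Create a Maven module 3. Import the dependencies 4. Configure log4j2.xml 5. Configure druid.xml 1) How to import properties in XML 2) The configuration file 6. Prepare jdbc.properties; JDBC configuration options explained 7. Configure Druid 8. Test. Integrating the Druid connection pool with Spring 1. Create a Maven project: open your IDE (for example IntelliJ IDEA, Ecl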

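- (182) Timing Closure ---> (32) Timing Closure 32

FPGA系统设计指南针

FPGA system design (internal training) fpga-development timing-closure

1 Contents (a) FPGA overview (b) Verilog overview (c) Clock overview (d) Timing Closure 32 (e) End. 1 FPGA overview (a) An FPGA (Field Programmable Gate Array) is a further development of programmable devices such as PAL (Programmable Array Logic) and GAL (Generic Array Logic). It emerged as a semi-custom circuit in the application-specific integrated circuit (ASIC) field, addressing the shortcomings of fully custom circuits while overcoming the limited gate count of earlier programmable devices. (b)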

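- Viewing server logs on Linux

TPBoreas

operations linux operations

1. tail: this is the way I view logs most often. Usage: `tail -n 10 test.log` shows the last 10 lines of the log; `tail -n +10 test.log` shows everything from line 10 onward; `tail -fn 10 test.log` shows the last 10 lines and keeps following the file in real time (the most common usage). It is usually combined with grep for live filtering, for example: `tail -fn 1000 test.log | grep 'keyword'` (dynamic filtering) tail -fn 1000 test.log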
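To make the difference between `tail -n N` and `tail -n +N` concrete, here is a small Python sketch that mimics both on a list of lines. The helper names are invented for this example, and it does not implement the follow mode (`-f`).

```python
from collections import deque
from itertools import islice


def tail_last(lines, n):
    """Like `tail -n N`: keep only the last n lines."""
    return list(deque(lines, maxlen=n))


def tail_from(lines, start):
    """Like `tail -n +START`: keep everything from 1-based line START onward."""
    return list(islice(lines, max(start - 1, 0), None))


def grep(lines, keyword):
    """Like `grep 'keyword'`: keep the lines that contain the keyword."""
    return [line for line in lines if keyword in line]


# Made-up log content for the demonstration.
log = [f"line {i}" for i in range(1, 13)]
print(tail_last(log, 3))
print(tail_from(log, 10))
print(grep(tail_last(["INFO a", "ERROR b", "INFO c", "ERROR d"], 3), "ERROR"))
```

With a 12-line input, `tail_last(log, 3)` and `tail_from(log, 10)` happen to return the same three lines, which is an easy way to check the off-by-one behavior of `+N`.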

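- SpringCloudAlibaba: Sentinel (rate limiting)

菜鸟爪哇

Preface: these are notes from my own learning, drawing on other people's articles and recording the steps I took myself. Reference article: https://blog.csdn.net/u014494148/article/details/105484410 Introduction to Sentinel: Sentinel originated at Alibaba, and its main goals are flow control and circuit breaking. Sentinel reduces the impact of unstable resources by limiting the number of concurrent threads (semaphore isolation) rather than by using thread pools, which avoids the performance cost of thread switching. When a resource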

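- Parsing the optical disc file system (iso9660) format

穷人小水滴

optical-disc file-system iso9660 deno GNU/Linux javascript

The simpler a system is, the more reliable it is and the less likely it is to fail. The optical disc file system (iso9660) is very simple: fewer than 200 lines of code are enough to locate and read the files on a disc. Reference: https://wiki.osdev.org/ISO_9660 Related articles: "Are optical discs waterproof? A water-immersion experiment with burned DVD+R discs" https://blog.csdn.net/secext2022/article/details/140583910 "The internal structure and everyday use of optical drives" ht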

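- springboot+vue hands-on project, part 1: creating a simple SpringBoot project

苹果酱0567

interview-questions springboot backend java middleware programming-languages

Lately I have been using my spare time to build a personal blog for my girlfriend, and I thought I would record the process as notes. Copying zjblog would get a site running in an hour, and a CMS would give a site with both a front end and an admin panel in three or four hours, looking however I like. But I want to build it from scratch: making it myself means something different, and besides, this is what I do for a living, hehe. To build a site from zero, the first step is choosing the technology. After some thought I decided on SpringBoot for the back end and Vue for the front end to build a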

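- Sci-fi game "Delivery Rider Simulator": main geographic setting (1)

穷人小水滴

game sci-fi design

Game title: "Delivery Rider Simulator" (English name: waimai_se) Author: 穷人小水滴. This story is purely fictional, and any resemblance is coincidental. It takes place on Earth in an (alternate) parallel universe, in the 21st century (ultra-low-altitude sci-fi genre). Related article: https://blog.csdn.net/secext2022/article/details/141790630 Contents: 1 Overall geography of the planet 2 Main setting of the Giant Snake Nation 3 Main setting of Sea Snake City 3.1 Major landmark buildings 3.2 Transport 3.3 Energy (electricity)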

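- Using C++ lambda closures to eliminate class member variables

barbyQAQ

c++ c++ java algorithms

Original link: https://blog.csdn.net/qq_51470638/article/details/142151502 1. Background: in object-oriented programming we often add member variables to classes. Once there are many of them, though, they become a distraction. You pick up a class, see dozens of member variables, and wouldn't that scare you? 2. Approach: borrow ideas from functional programming to eliminate unnecessary member variables. 3. Example: for instance: class ClassA { public: ... int fu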

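- Batch converting TIFF to PNG

诺有缸的高飞鸟

opencv image-processing python opencv image-processing

Contents: Preface, Code, End. Preface: 1. What this post covers: batch converting TIFF to PNG 2. Platform/environment: opencv, python 3. Please credit the source when reposting: https://blog.csdn.net/qq_41102371/article/details/132975023 Code: import numpy as np import cv2 import os def findAllFile(base): file_list = [] for root, ds, fs in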

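- Developing the WeChat developer verification endpoint

362217990

WeChat developer token verification

Verifying the WeChat developer endpoint.

Token: define any value you like, as long as it matches the one entered on the WeChat side.

According to the WeChat access guide http://mp.weixin.qq.com/wiki/17/2d4265491f12608cd170a95559800f2d.html

Step 1: fill in the server configuration

Step 2: verify that the server address is valid

Step 3: implement the business logic according to the API documentation

This post mainly covers step 2, verifying the server.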
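For reference, the check in step 2 is commonly described as: sort the token, timestamp, and nonce lexicographically, concatenate them, take the SHA-1 hash, and compare it with the `signature` parameter WeChat sends. The sketch below follows that description. It is not the original author's code, and `check_signature` and the sample values are hypothetical.

```python
import hashlib
import hmac


def check_signature(token: str, signature: str, timestamp: str, nonce: str) -> bool:
    """Return True when the request signature matches the locally computed one."""
    # Sort the three strings lexicographically and join them.
    joined = "".join(sorted([token, timestamp, nonce]))
    # SHA-1 of the joined string, as lowercase hex.
    digest = hashlib.sha1(joined.encode("utf-8")).hexdigest()
    # Constant-time comparison to avoid leaking timing information.
    return hmac.compare_digest(digest, signature)


# Made-up values for a local check; a real request carries these as query parameters.
demo_token, demo_timestamp, demo_nonce = "mytoken", "1400000000", "abc123"
demo_signature = hashlib.sha1(
    "".join(sorted([demo_token, demo_timestamp, demo_nonce])).encode("utf-8")
).hexdigest()
print(check_signature(demo_token, demo_signature, demo_timestamp, demo_nonce))
```

When the check passes, the handler is expected to echo back the `echostr` parameter from the request unchanged.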

Create a

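- A small programming puzzle, similar to the Josephus problem

BrokenDreams

programming

Someone in my chat group posted a puzzle today:

Given a sequence, delete the first element, then move the second element to the end of the sequence, and keep repeating this until every number has been deleted. Print the deleted numbers in the order in which they are removed.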
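One straightforward way to simulate the process is with a double-ended queue. This is a sketch of one possible solution, not necessarily the one from the original post, and `deletion_order` is a name chosen for this example.

```python
from collections import deque


def deletion_order(sequence):
    """Simulate 'delete the first, move the next to the end' and return the deleted items in order."""
    queue = deque(sequence)
    deleted = []
    while queue:
        deleted.append(queue.popleft())     # delete the first element
        if queue:
            queue.append(queue.popleft())   # move the new first element to the end
    return deleted


print(deletion_order([1, 2, 3, 4, 5]))  # → [1, 3, 5, 4, 2]
```

Each element is removed or moved in constant time, so the whole simulation runs in O(n log n) queue operations at worst and in practice close to linear time for typical inputs.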


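- Linux review notes: bash shell (5), what the minus sign - does

eksliang

linux meaning of the minus sign "-", linux uses of the minus sign "-", linux meaning of "-", linux meaning of the minus sign

Please credit the source when reposting:

http://eksliang.iteye.com/blog/2105677

Pipelines are very important in bash's chained processing, especially when the stdout (standard output) of the previous command is used as the stdin (standard input) of the next one. Some commands need a file name, for example the split command from the previous post, and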

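- Unix(3)

18289753290

unix ksh

1) To append to a variable's content, use "$name" or ${name}; for the variable to be usable in child processes, it must be exported.

What is a child process? If I open a new shell from my current shell, the new shell is a child process. Normally a parent process's own (non-exported) variables cannot be used in a child process, but once export turns a variable into an environment variable, the child process can use it.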
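The same parent/child rule can be observed from Python: a child process only sees what is in the environment passed to it. In this sketch, an entry in the `env` dictionary plays the role of an exported variable; `DEMO_VAR` and `child_sees` are names made up for the example.

```python
import os
import subprocess
import sys


def child_sees(name: str, env: dict) -> str:
    """Start a child Python process and return what it sees for the variable `name`."""
    code = "import os, sys; sys.stdout.write(os.environ.get(sys.argv[1], '<unset>'))"
    result = subprocess.run(
        [sys.executable, "-c", code, name],
        env=env, capture_output=True, text=True, check=True,
    )
    return result.stdout


# Environment without the variable: comparable to a shell variable that was never exported.
base_env = {k: v for k, v in os.environ.items() if k != "DEMO_VAR"}
# Environment with the variable: comparable to running `export DEMO_VAR=hello` first.
exported_env = dict(base_env, DEMO_VAR="hello")

print(child_sees("DEMO_VAR", base_env))
print(child_sees("DEMO_VAR", exported_env))
```

A plain Python variable in the parent, like a plain shell variable, never reaches the child; only the environment does.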

2) Conditionals: && means and, || means or

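- Image loading problems when optimizing ListView performance

酷的飞上天空

ListView

There is plenty of material online about optimizing ListView performance, but problems appear as soon as asynchronous image loading is involved.

For details, see the previous post http://314858770.iteye.com/admin/blogs/1217594

Inflating a new View every time causes a serious performance loss: the ListView may stutter while scrolling, and memory may even run out.

The approach I have come up with is to attach a tag each time and then set the ima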

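- German Chancellor Merkel: a "shock education" lesson for the Chinese people

永夜-极光

education

http://bbs.voc.com.cn/topic-2443617-1-1.html German Chancellor Merkel: a "shock education" lesson for the Chinese people

Angela Merkel, an East German who lived through socialism, used her blog to publish remarks before her visit to China. Everything that needed saying is said there, and the whole world wants to read and share it, so go and read Chancellor Merkel's blog!

German Chancellor Merkel left a deep impression on the Chinese people with her low-key, plain, modest, and approachable character. Through her actions she gave the Chinese people a lesson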

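- A small question about Java inheritance

随便小屋

java

While reading Thinking in Java today I ran into a problem: the result of running the code was completely different from what I expected. Here is the code first!

// CanFight interface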

interface Canfight {

void fight();

}

// ActionCharacter class

class ActionCharacter {

public void fight() {

System.out.pr

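- The 23 basic design patterns

aijuans

design-patterns

Abstract Factory: provide an interface for creating families of related or dependent objects without specifying their concrete classes. Adapter: convert the interface of a class into another interface that clients expect. The Adapter pattern lets classes work together that otherwise could not because of incompatible interfaces. Bridge: decouple an abstraction from its implementation so that the two can vary independently. Builder: separate the construction of a complex object from its representation, so that the same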
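As a small illustration of the Adapter description above, the sketch below wraps a class that exposes one interface so that a client expecting another can use it. The sensor classes are invented for this example.

```python
class CelsiusSensor:
    """Existing class whose interface the client cannot use directly."""

    def read_celsius(self) -> float:
        return 25.0


class FahrenheitAdapter:
    """Adapter: exposes the read_fahrenheit() interface the client expects."""

    def __init__(self, sensor: CelsiusSensor) -> None:
        self._sensor = sensor

    def read_fahrenheit(self) -> float:
        # Translate the call and convert the result to the expected unit.
        return self._sensor.read_celsius() * 9 / 5 + 32


def report(sensor) -> str:
    """Client code that only knows about read_fahrenheit()."""
    return f"{sensor.read_fahrenheit():.1f} F"


print(report(FahrenheitAdapter(CelsiusSensor())))  # → 77.0 F
```

The client and the adapted class stay unchanged; only the adapter knows about both interfaces.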

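- Reading notes on "Zhou Hongyi in His Own Words: My Internet Methodology"

aoyouzi

reading-notes

From the user's point of view, a product that solves a problem is a good product, and a product that solves it conveniently and quickly is a first-rate product.

A business model is not the same as a way of making money

Once a free product has won a huge user base, its marginal cost approaches zero. Making money afterwards through advertising or value-added services in effect creates a new value chain.

The foundation of a business model is users: without users, any business model is just hot air. The core of a business model is the product, and its essence is creating value for users through the product.

A business model also includes finding demand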

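- Changing and accessing styles dynamically with JavaScript

百合不是茶

JavaScript style-property className-property

1. The style property

Format:

HTMLElement.style.styleProperty = "value";

Creating a menu: create it in the html tags, or create it with an array in the head tag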

<html>

<head>

<title>Changing styles with style</title>

</head>


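- The jQuery deferred object explained

bijian1013

jquery deferred-object

jQuery develops quickly, with a major version roughly every six months and a minor version every two months.

Each version introduces new features. One of them, introduced in jQuery 1.5.0, is the deferred object.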


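- Taobao Open Platform (TOP)

Bill_chen

C++ c logistics C#

Taobao Open Platform home page: http://open.taobao.com/

The Taobao Open Platform is a product of Taobao's TOP team. TOP stands for TaoBao Open Platform,

a platform on which Taobao partners develop, publish, and trade their services.

TOP rests on three main lines:

1. Opening up data and business processes

* Opening up product, transaction, logistics, and other business data through APIs;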


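- [Large-Scale Website Architecture 1] Overview of large-scale website architecture

bit1129

website-architecture

Characteristics of large internet systems

Massive numbers of users and massive amounts of data

Key metrics for large internet architectures

High concurrency

High performance

High availability

High extensibility

Linear scalability

Security

Key technologies for large internet systems

Front-end optimization

CDN caching

Reverse proxies

Key-value caches

Messaging systems

Distributed storage

NoSQL databases

Search

Monitoring

Security

Questions that came to mind:

1. For a transactional system such as an order system, how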

- Installing the Hibernate Tools plugin for Eclipse

白糖_

Hibernate

Eclipse Helios (3.6)

1. Start Eclipse 2. Select Help > Install New Software...> 3. Add the following address:

http://download.jboss.org/jbosstools/updates/stable/helios/ 4. Choose what to install: hibernate tools under All Jboss tool

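- Things to watch for when submitting forms with jQuery EasyUI

bozch

jquery easyui

jQuery EasyUI wraps form submission and submits via ajax. Keep the following in mind during development:

1. When defining the form tag, set the method attribute to post or get. In particular, when submitting large text fields, set method to post; otherwise the page throws errors such as cross-domain access exceptions. So this needs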

- A Java implementation of the trie (dictionary tree) and an application: counting the words that start with a given prefix

bylijinnan

java implementation

import java.util.LinkedList;

public class CaseInsensitiveTrie {

/**

A Java implementation of a trie. Supports insertion, lookup, and depth-first traversal.

Trie tree's java implementation.(Insert,Search,DFS)

Problem Description

Igna
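The prefix-counting idea from the title can be sketched in a few lines of Python. This is an independent illustration rather than a port of the truncated Java class above: each node keeps a counter of how many inserted words pass through it, and words are lowercased to mirror the case-insensitive class name. `PrefixCountTrie` and the sample words are assumptions made for the example.

```python
class TrieNode:
    def __init__(self):
        self.children = {}      # maps a character to the child node
        self.prefix_count = 0   # number of inserted words passing through this node


class PrefixCountTrie:
    def __init__(self):
        self.root = TrieNode()

    def insert(self, word: str) -> None:
        node = self.root
        node.prefix_count += 1  # every word has the empty prefix
        for ch in word.lower():
            node = node.children.setdefault(ch, TrieNode())
            node.prefix_count += 1

    def count_prefix(self, prefix: str) -> int:
        """Return how many inserted words start with `prefix` (case-insensitive)."""
        node = self.root
        for ch in prefix.lower():
            node = node.children.get(ch)
            if node is None:
                return 0
        return node.prefix_count


trie = PrefixCountTrie()
for word in ["banana", "band", "Bee", "absolute", "acm"]:
    trie.insert(word)
print(trie.count_prefix("ba"), trie.count_prefix("b"), trie.count_prefix("abc"))
```

Both insertion and lookup take time proportional to the length of the word or prefix, independent of how many words are stored.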

- A summary of mouse cursor styles in HTML and CSS

chenbowen00

html css

hand versus pointer for the CSS hand cursor

Example: CSS hand cursor effect <a href="#" style="cursor:hand">CSS hand cursor effect</a><br/>

Example:CSS鼠标手型效果 <a href="#" style=&qu

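- [IT and investment] A few principles of IT investment

comsci

it

Whether you want to invest in e-commerce, software, hardware, or the internet, you need a great deal of capital. Governments everywhere promise in the media both to keep market liquidity loose and to keep the economy growing fast.... In reality, though, the whole market and society are hungry for real capital investment. In other words, the market looks lively on the surface, but the capital actually invested is not really sufficient......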

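- The Oracle WITH clause explained

daizj

oracle with with-as

The Oracle WITH clause explained (reposted)

In Oracle, a select query can use with, which is a named subquery. Oracle puts the result of the subquery into a temporary table so that it can be used repeatedly

Example: note that this is a SQL statement, not a PL/SQL statement, so it can be executed directly through JDBC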
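The reuse that WITH allows can be tried out without an Oracle instance. The sketch below runs a WITH query against an in-memory SQLite database from Python, so it only illustrates the standard SQL syntax; it says nothing about how Oracle materializes the subquery, and the `orders` table and its rows are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("ann", 10), ("ann", 30), ("bob", 5)],
)

# `totals` is defined once and referenced twice in the main query.
query = """
WITH totals AS (
    SELECT customer, SUM(amount) AS total
    FROM orders
    GROUP BY customer
)
SELECT customer, total
FROM totals
WHERE total > (SELECT AVG(total) FROM totals)
ORDER BY customer
"""
rows = conn.execute(query).fetchall()
print(rows)  # customers whose total is above the average total
```

Without WITH, the grouping subquery would have to be written out twice, once in the FROM clause and once inside the comparison.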

----------------------------------------------------------------

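- Basic HBase operations

deng520159

database hbase

Our company recently started storing logs in HBase for later analysis, so I collected the commands that come up most often in HBase development.

After logging in over ssh to the Linux machine where HBase is installed,

run hbase shell to enter the HBase command console.

Table management

1) List the existing tables

hbase(main)> list

2) Create a table

# Syntax: create <table>, {NAME => <family&g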

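- C: more on scanf, arithmetic operators, and logical operators

dcj3sjt126com

c

/*

March 11, 2013, 20:37:32

Location: Panjiayuan, Beijing

Function: read several formatted values from the user

Purpose: learn how to use the scanf function

*/

# include <stdio.h>

int main(void)

{

int i, j, k;

printf("please input three numbers:\n"); // prompt the user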

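- 2015: Better and Better

dcj3sjt126com

song

Better and Better

Houses are bigger, phones are smaller, it all feels better and better

More holidays, higher income, work is better and better

Goods are finer, prices are flexible, the mood is better and better

The sky is bluer, the water is clearer, the environment is better and better

With something to strive for, people rise step by step

What you want to achieve, you must work to achieve

A happy smile on your face every day, better and better

In-laws get along, the family is warm, life is better and better

The children are taller and more sensible, their studies are better and better

More friends, hearts in tune, everyone is better and better

Roads are wider, spirits are lighter, the days are better and better

Live with spirit and you will not look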

- java.sql.SQLException: Value '0000-00-00' can not be represented as java.sql.Tim

feiteyizu

mysql

A record in the table has a time column (of type timestamp) whose value is "0000-00-00 00:00:00"

When the program reads it with a select statement, the following exceptions occur:

java.sql.SQLException:Value '0000-00-00' can not be represented as java.sql.Date

java.sql.SQLException: Valu

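- Ehcache(07): Ehcache's support for concurrency

234390216

concurrency ehcache lock ReadLock WriteLock

Ehcache's support for concurrency

Under high concurrency, concurrent reads and writes against an Ehcache cache mean that the data we read may be wrong and the data we write may be overwritten unexpectedly. Fortunately, Ehcache provides Read and Write locks on the Key of a cache element. Once a thread has acquired the Read lock for a Key, other threads that acquire, for the same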

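- Composite (hash) indexes for blob and text columns in MySQL

jackyrong

mysql

MySQL has a technique called a composite index that can improve the performance of blob and text columns.

It only works for exact-match queries. The core idea is to add a column that stores a hash of the content, for example md5, and to search by the hash value,

which is fast.

For example:

create table abc(id varchar(10),context blob,hash_value varchar(40));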

insert into abc(1,rep
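The hash-column technique can be tried out locally. The sketch below uses an in-memory SQLite database from Python instead of MySQL, which is an assumption made so the example is self-contained; the table mirrors the `abc` table above, and the helper names are invented. The large column is never indexed directly: the index covers only the short MD5 hex digest.

```python
import hashlib
import sqlite3


def md5_hex(text: str) -> str:
    """Return the 32-character MD5 hex digest used as the lookup key."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE abc (id TEXT, context TEXT, hash_value TEXT)")
conn.execute("CREATE INDEX idx_abc_hash ON abc (hash_value)")


def insert_row(row_id: str, context: str) -> None:
    conn.execute(
        "INSERT INTO abc (id, context, hash_value) VALUES (?, ?, ?)",
        (row_id, context, md5_hex(context)),
    )


def find_exact(context: str) -> list:
    """Exact-match lookup: the index narrows by hash, the second condition guards against collisions."""
    rows = conn.execute(
        "SELECT id FROM abc WHERE hash_value = ? AND context = ?",
        (md5_hex(context), context),
    ).fetchall()
    return [row[0] for row in rows]


insert_row("1", "beautiful " * 100)
insert_row("2", "another long text " * 100)
print(find_exact("beautiful " * 100))
```

As the post notes, this only helps exact-match queries: a hash says nothing about prefixes or substrings of the original text.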

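- Logical operations and shift operations

latty

bit-operations logical-operations

Sign-magnitude form: the two's complement of a positive number is the same as its sign-magnitude form. Example: +7, sign-magnitude: 00000111, two's complement: 00000111 (using 8 binary digits to represent a number)

Two's complement of a negative number:

The sign bit is 1; the remaining bits are the sign-magnitude bits of the absolute value inverted bit by bit, and then 1 is added to the whole number. -7 in sign-magnitude form: 10000111. Its absolute value is 00000111; inverting and adding one gives 11111001, the two's complement of -7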
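The -7 example can be checked with a short Python sketch. Masking with `2**bits - 1` yields the same bit pattern as the invert-and-add-one rule described above; the function names are chosen for this example.

```python
def to_twos_complement(value: int, bits: int = 8) -> str:
    """Return the two's-complement bit string of `value` using `bits` bits."""
    if not -(1 << (bits - 1)) <= value < (1 << (bits - 1)):
        raise ValueError(f"{value} does not fit in {bits} bits")
    # Masking a negative int keeps its low `bits` bits in two's-complement form.
    return format(value & ((1 << bits) - 1), f"0{bits}b")


def from_twos_complement(bit_string: str) -> int:
    """Interpret a bit string as a signed two's-complement integer."""
    raw = int(bit_string, 2)
    # A leading 1 means the value is negative: subtract 2**bits.
    return raw - (1 << len(bit_string)) if bit_string[0] == "1" else raw


print(to_twos_complement(7))             # → 00000111
print(to_twos_complement(-7))            # → 11111001
print(from_twos_complement("11111001"))  # → -7
```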

Recovering the sign-magnitude form from a number's two's complement falls into two cases:

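- Validating XML files with XSD

newerdragon

java xml xsd

XSD files: the XML Schema language is also known as XML Schema Definition (XSD). For usage and definitions, see:

http://www.w3school.com.cn/schema/index.asp

Since JDK 1.5, Java has included the SchemaFactory class, which supports validation against an XSD and is easy to use.

The following code can be used in J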

- Setting up a CentOS 6 server (12) - Samba

rensanning

centos

(1) Installation

# yum -y install samba

Installed:

samba.i686 0:3.6.9-169.el6_5

# pdbedit -a rensn

new password:123456

retype new password:123456

……

(2) Home folder

# mkdir /etc

- Learn Nodejs 01

toknowme

nodejs

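(1) Download nodejs

https://nodejs.org/download/ Choose the appropriate version and download it (2) Install nodejs: there are many ways to install it, so please search Baidu for them

I downloaded the "node-v0.12.7-linux-x64.tar.gz" version (1) Upload it to the server (2) Extract it: tar -zxvf node-v0.12.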

- Example code for controlling automatic refresh with jQuery

xp9802

jquery

1. The HTML part. Code example: <div id='log_reload'>

<select name="id_s" size="1">

<option value='2'>-2s-</option>

<option value='3'>-3s-</option