Setting up a Kafka cluster with docker-compose

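Kafka depends on ZooKeeper, so ZooKeeper has to be configured before the Kafka brokers. The cluster built here consists of one ZooKeeper node and three brokers (the compose file further down uses the host IP 192.168.10.219 in place of 127.0.0.1):

zookeeper: 127.0.0.1:2181

kafka1: 127.0.0.1:9092

kafka2: 127.0.0.1:9093

kafka3: 127.0.0.1:9094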

1. Install docker-compose

curl -L http://mirror.azure.cn/docker-toolbox/linux/compose/1.25.4/docker-compose-Linux-x86_64 -o /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

2. Create the docker-compose.yml file

version: '3.3'

services:
  zookeeper:
    image: wurstmeister/zookeeper
    container_name: zookeeper
    ports:
      - 2181:2181
    volumes:
      - /data/zookeeper/data:/data
      - /data/zookeeper/datalog:/datalog
      - /data/zookeeper/logs:/logs
    restart: always

  kafka1:
    image: wurstmeister/kafka
    depends_on:
      - zookeeper
    container_name: kafka1
    ports:
      - 9092:9092
    environment:
      KAFKA_BROKER_ID: 1
      KAFKA_ZOOKEEPER_CONNECT: 192.168.10.219:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.10.219:9092
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      KAFKA_LOG_DIRS: /data/kafka-data
      KAFKA_LOG_RETENTION_HOURS: 24
    volumes:
      - /data/kafka1/data:/data/kafka-data
    restart: unless-stopped

  kafka2:
    image: wurstmeister/kafka
    depends_on:
      - zookeeper
    container_name: kafka2
    ports:
      - 9093:9093
    environment:
      KAFKA_BROKER_ID: 2
      KAFKA_ZOOKEEPER_CONNECT: 192.168.10.219:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.10.219:9093
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9093
      KAFKA_LOG_DIRS: /data/kafka-data
      KAFKA_LOG_RETENTION_HOURS: 24
    volumes:
      - /data/kafka2/data:/data/kafka-data
    restart: unless-stopped

  kafka3:
    image: wurstmeister/kafka
    depends_on:
      - zookeeper
    container_name: kafka3
    ports:
      - 9094:9094
    environment:
      KAFKA_BROKER_ID: 3
      KAFKA_ZOOKEEPER_CONNECT: 192.168.10.219:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.10.219:9094
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9094
      KAFKA_LOG_DIRS: /data/kafka-data
      KAFKA_LOG_RETENTION_HOURS: 24
    volumes:
      - /data/kafka3/data:/data/kafka-data
    restart: unless-stopped

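Parameters:

- KAFKA_ZOOKEEPER_CONNECT: address of the ZooKeeper service

- KAFKA_ADVERTISED_LISTENERS: address the broker advertises to clients, so it must be reachable from them

- KAFKA_BROKER_ID: ID of the Kafka node; must be unique within the cluster

- KAFKA_LOG_DIRS: directory inside the container where Kafka stores its data files (optional; the volumes entry mounts this directory onto the host)

- KAFKA_LOG_RETENTION_HOURS: how long data files are retained (optional; defaults to 168 hours)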
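
The environment variable names above follow a naming rule: to my understanding of the wurstmeister/kafka image, a server.properties key is upper-cased, its dots become underscores, and KAFKA_ is prefixed. A minimal sketch of that rule (the function name to_env_name is made up for illustration and is not part of the image):

```shell
#!/bin/sh
# Hypothetical helper: turn a server.properties key into the
# environment variable name expected under `environment:`.
to_env_name() {
  # upper-case the letters and replace '.' with '_', then add the prefix
  printf 'KAFKA_%s\n' "$(printf '%s' "$1" | tr 'a-z.' 'A-Z_')"
}

to_env_name broker.id
to_env_name log.retention.hours
```

This makes it easy to work out the variable name for any other broker setting that has to be overridden in the compose file.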

3. Start the cluster

docker-compose up -d

Testing

1. Log in to the kafka1 container

docker-compose exec kafka1 bash

Change to the /opt/kafka/bin directory

cd /opt/kafka/bin/

Create a topic named first, with 3 partitions and 2 replicas

./kafka-topics.sh --create --topic first --zookeeper 192.168.10.219:2181 --partitions 3 --replication-factor 2

For the role ZooKeeper plays in Kafka, see https://www.jianshu.com/p/a036405f989c

Note: the replication factor cannot exceed the number of brokers (the partition count can); otherwise topic creation fails.

2. List the topics

./kafka-topics.sh --list --zookeeper 192.168.10.219:2181

3. Show the details of the topic

./kafka-topics.sh --describe --topic first --zookeeper 192.168.10.219:2181

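4. On the host, change to /data/kafka1/data to see the topic's data

Notes:

- Each data directory is named after the topic and the partition number: topic-partition (for example first-0)

- With 3 partitions and 2 replicas, 6 data directories are created in total, spread over the 3 brokers so that no broker holds two replicas of the same partition (check the kafka2 and kafka3 directories to verify)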

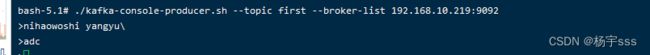
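
As a quick check of the arithmetic in the notes above, the following sketch prints the directory names to expect and the total number of replica directories. It assumes the topic-partition naming scheme; the function name partition_dirs is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print one directory name per partition of a topic.
partition_dirs() {
  topic=$1
  partitions=$2
  p=0
  while [ "$p" -lt "$partitions" ]; do
    echo "$topic-$p"
    p=$(( p + 1 ))
  done
}

PARTITIONS=3
REPLICAS=2

partition_dirs first "$PARTITIONS"
# Every partition is stored once per replica, each copy on a different broker.
echo "replica directories across all brokers: $(( PARTITIONS * REPLICAS ))"
```

Which broker ends up with which directory is decided by Kafka at creation time, so the listing under /data/kafka1/data will usually contain only some of these names.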

5. Create a producer and send messages to the topic

6. Log in to the kafka2 or kafka3 container (see step 1), then create a consumer to receive the messages from the topic

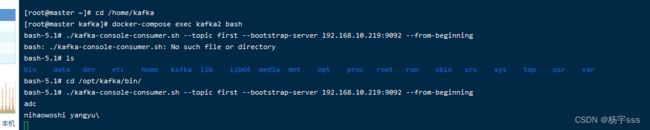
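
The commands for steps 5 and 6 only survive as screenshots, so here is a hedged sketch using the console tools shipped in /opt/kafka/bin. The broker address and topic name are taken from the earlier steps; the wrapper functions produce and consume are made up for illustration, and --broker-list is the producer option used by the Kafka versions this walkthrough targets.

```shell
#!/bin/sh
# Run from /opt/kafka/bin inside a broker container.
BROKER=192.168.10.219:9092   # assumed: kafka1's advertised listener from the compose file
TOPIC=first                  # the topic created in step 1

# Step 5: interactive producer; each line typed on stdin is sent as one message.
produce() {
  ./kafka-console-producer.sh --broker-list "$BROKER" --topic "$TOPIC"
}

# Step 6: consumer that reads the topic starting from the earliest offset.
consume() {
  ./kafka-console-consumer.sh --bootstrap-server "$BROKER" --topic "$TOPIC" --from-beginning
}
```

Calling produce in the kafka1 container and consume in kafka2 or kafka3 should show the typed lines arriving on the consumer side.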

1. Delete a topic

./kafka-topics.sh --delete --topic first --zookeeper 192.168.10.219:2181

Deleting a topic does not remove it immediately; the topic is first marked for deletion. The marked topic can be seen under /data/kafka1/data.

2. Check the number of messages in a topic

./kafka-run-class.sh kafka.tools.GetOffsetShell --topic second --time -1 --broker-list 192.168.10.219:9092
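
With --time -1, GetOffsetShell prints the latest offset of each partition, one topic:partition:offset line per partition. Summing the third field gives the message count, as long as no messages have been removed by retention yet. A small sketch of that sum; the sample offsets below are made up, and sum_offsets is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: add up the offset column of GetOffsetShell output
# read from stdin (format: topic:partition:offset).
sum_offsets() {
  awk -F: '{ total += $3 } END { print total + 0 }'
}

# Made-up sample output for a topic with three partitions.
printf 'second:0:5\nsecond:1:7\nsecond:2:3\n' | sum_offsets
```

Against the real cluster, the output of the GetOffsetShell command above can be piped straight into sum_offsets.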

3. List all consumer groups

./kafka-consumer-groups.sh --bootstrap-server 192.168.10.219:9092 --list

4. Inspect the contents of a log file (the messages)

./kafka-dump-log.sh --files /data/kafka-data/my-topic-0/00000000000000000000.log --print-data-log

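This dumps the messages of the topic my-topic from the log file 00000000000000000000.log. The --print-data-log option prints the message payloads; without it they are not shown.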

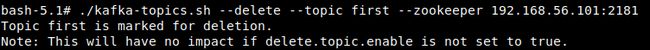

5. Change the number of partitions of a topic (it can only be increased, never decreased)

./kafka-topics.sh --alter --topic al-test --partitions 2 --zookeeper 192.168.10.219:2181

6. Change how long a topic's data is retained (the broker-wide default is the log.retention.hours setting in server.properties, 168 hours unless overridden)

./kafka-topics.sh --alter --zookeeper 192.168.10.219:2181 --topic al-test --config retention.ms=86400000

This sets the retention to 24 hours: 24 * 3600 * 1000 = 86400000 ms.
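
Since retention.ms is given in milliseconds, it is easy to slip by a factor of 1000. A tiny sketch of the conversion (hours_to_ms is a made-up helper name):

```shell
#!/bin/sh
# Hypothetical helper: convert hours to the millisecond value used by retention.ms.
hours_to_ms() {
  echo $(( $1 * 3600 * 1000 ))
}

hours_to_ms 24    # value used in the command above
hours_to_ms 168   # the 168-hour default, expressed in milliseconds
```

The result can be substituted directly, for example --config retention.ms=$(hours_to_ms 24).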

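Summary

- 1. Connection settings

  - producer --> broker-list

  - Kafka cluster --> zookeeper

  - consumer --> bootstrap-server or zookeeper

  - Before version 0.9, consumers connected to ZooKeeper; from 0.9 onward they connect to bootstrap-server by default

- 2. In docker-compose.yml, the entries under each Kafka service's environment correspond to settings in server.properties:

  - broker.id --> KAFKA_BROKER_ID

  - log.dirs --> KAFKA_LOG_DIRS

- 3. Consumers and producers

  - Within one consumer group a partition is consumed by only one consumer, but the same partition can be consumed by several consumer groups

  - Messages within a single partition are ordered; there is no ordering across partitions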

- Pitfalls

  - 1. After docker-compose.yml was modified, up failed to start the brokers. The docker-compose logs command showed this error:

    The broker is trying to join the wrong cluster. Configured zookeeper.connect may be wrong.

  - Fix: delete the Kafka data files mounted on the host (under /data), run docker-compose down, then run up again
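
To illustrate the consumer-group rule from the summary (one partition goes to exactly one consumer within a group), here is a simplified round-robin assignment. It is only an illustration: Kafka's actual assignors differ in detail, and assign_partitions is a made-up name.

```shell
#!/bin/sh
# Simplified sketch: hand out the partitions of one topic to the
# consumers of ONE group, round-robin. Not Kafka's real algorithm.
assign_partitions() {
  partitions=$1   # number of partitions in the topic
  consumers=$2    # number of consumers in the group
  p=0
  while [ "$p" -lt "$partitions" ]; do
    echo "partition $p -> consumer $(( p % consumers ))"
    p=$(( p + 1 ))
  done
}

# 3 partitions shared by a group of 2 consumers
assign_partitions 3 2
```

Every partition appears exactly once per group. A second consumer group would get its own independent assignment over the same partitions, and a group with more consumers than partitions leaves the extra consumers idle.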

Reference: docker-compose 搭建 kafka 集群 - 仅此而已-远方 - 博客园 (cnblogs)