[Deep Learning 21-Day Learning Challenge] 8. Convolutional Neural Networks (Getting to Know the Xception Model): Animal Recognition

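Event page: CSDN 21-Day Learning Challenge

- This post is a study log from the 365-Day Deep Learning Training Camp.

- Reference article: Deep Learning 100 Examples | Day 24 - Convolutional Neural Network (Xception): Animal Recognition

- Author: K同学啊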

1. Main idea of the Xception model

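A standard convolution maps the cross-channel correlations and the spatial correlations of the input feature map jointly, with a single kernel.

The Inception family of architectures factorizes this process, partially decoupling cross-channel correlations from spatial correlations.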

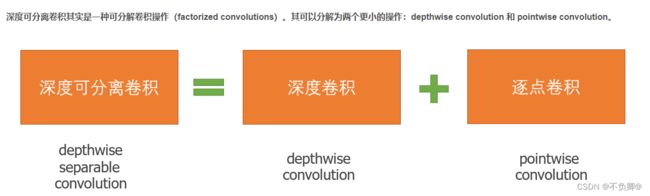

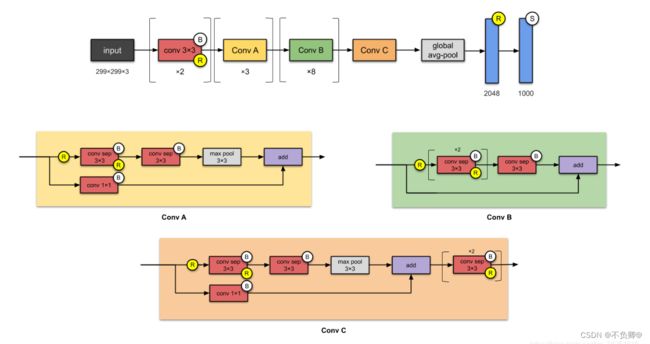

Xception improves on Inception by replacing the Inception modules with depthwise separable convolutions, which decouple cross-channel and spatial correlations completely. The paper also adds residual connections, and the result is the Xception architecture.

Proposed by Google as a successor to Inception V3, Xception has roughly the same number of parameters as Inception V3 (about 23M).

2. Depthwise separable convolution

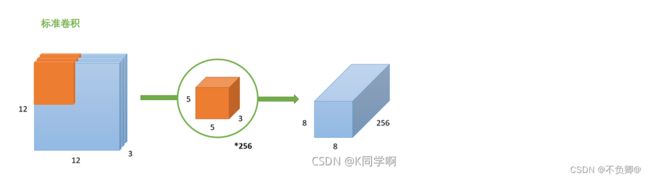

2.1 Standard convolution

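Take a 12×12×3 input feature map. Convolving it with one 5×5×3 kernel (stride 1, no padding) gives an 8×8×1 output feature map. With 256 such kernels, the output feature map is 8×8×256.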
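The standard convolution above can be sketched in NumPy as follows. This is an illustration rather than code from the original post: the input and kernels are random placeholders, and `sliding_window_view` (NumPy 1.20 or newer) extracts every 5×5 window at stride 1 with no padding.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

rng = np.random.default_rng(0)
x = rng.standard_normal((12, 12, 3))      # input feature map (H, W, C), placeholder data
w = rng.standard_normal((5, 5, 3, 256))   # 256 kernels of size 5x5x3

# All 5x5 windows at stride 1, no padding: shape (8, 8, 3, 5, 5),
# where win[y, x, c, i, j] == x[y + i, x + j, c]
win = sliding_window_view(x, (5, 5), axis=(0, 1))

# Each output value sums over the 5x5 window AND all 3 input channels at once
out = np.einsum('yxcij,ijco->yxo', win, w)

print(out.shape)  # (8, 8, 256)
print(w.size)     # 19200 weights
```

Every one of the 256 kernels looks at space and channels together, which is exactly the joint mapping that Xception sets out to factorize.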

2.2 Depthwise convolution

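Unlike standard convolution, depthwise convolution splits the kernel into single-channel kernels and convolves each input channel separately, without changing the depth of the input. The output feature map therefore has the same number of channels as the input. In the figure above, the 12×12×3 input passes through a 5×5×1×3 depthwise convolution (three 5×5×1 kernels, one per channel) and yields an 8×8×3 output. The depth stays at 3 from input to output, which raises a question: with so few channels, can the feature map capture enough useful information?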
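A matching NumPy sketch of the depthwise step, under the same assumptions (random placeholder data, stride 1, no padding). The hypothetical helper `depthwise` sums only over each 5×5 window, so each output channel depends on exactly one input channel; the last check confirms that channels never mix.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

rng = np.random.default_rng(0)
x = rng.standard_normal((12, 12, 3))  # input feature map (H, W, C), placeholder data
d = rng.standard_normal((5, 5, 3))    # one 5x5 kernel per channel (the "5x5x1x3" kernel)

def depthwise(x, d):
    """Hypothetical helper: depthwise conv, stride 1, no padding."""
    win = sliding_window_view(x, d.shape[:2], axis=(0, 1))  # (8, 8, 3, 5, 5)
    # Sum over the window only: channel c is filtered by d[:, :, c] alone
    return np.einsum('yxcij,ijc->yxc', win, d)

out = depthwise(x, d)
print(out.shape)  # (8, 8, 3)

# Changing input channel 1 leaves output channel 0 untouched
x2 = x.copy()
x2[:, :, 1] += 1.0
print(np.allclose(depthwise(x2, d)[:, :, 0], out[:, :, 0]))  # True
```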

2.3 Pointwise convolution

Pointwise convolution is simply a 1×1 convolution. Its main job is to increase or reduce the number of channels of a feature map.

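The depthwise step produced an 8×8×3 feature map. Convolving it with 256 kernels of size 1×1×3 gives an 8×8×256 output, the same shape as the standard convolution.

Depthwise separable convolution therefore produces the same 8×8×256 output with far fewer parameters and operations: 5×5×3 + 1×1×3×256 = 843 weights instead of 5×5×3×256 = 19,200 (biases ignored), roughly 23 times fewer.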
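Putting the two steps together, here is a NumPy sketch of the pointwise convolution applied to a depthwise output. It also checks the decoupling idea from Section 1: assuming no nonlinearity between the two steps (Keras's `SeparableConv2D` applies none there), depthwise followed by pointwise is exactly a standard convolution whose 5×5×3×256 kernel factors as W[i, j, c, o] = D[i, j, c] · P[c, o], a purely spatial part times a purely cross-channel part. All data are random placeholders.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

rng = np.random.default_rng(0)
x = rng.standard_normal((12, 12, 3))  # input feature map, placeholder data
d = rng.standard_normal((5, 5, 3))    # depthwise kernels (spatial part)
p = rng.standard_normal((3, 256))     # 256 pointwise 1x1x3 kernels (cross-channel part)

win = sliding_window_view(x, (5, 5), axis=(0, 1))  # (8, 8, 3, 5, 5)
dw = np.einsum('yxcij,ijc->yxc', win, d)            # depthwise step: (8, 8, 3)
pw = dw @ p                                         # 1x1 conv = per-pixel matmul: (8, 8, 256)
print(pw.shape)  # (8, 8, 256)

# Same result as a standard conv with the factored kernel W = D * P
w = d[:, :, :, None] * p[None, None, :, :]          # (5, 5, 3, 256)
std = np.einsum('yxcij,ijco->yxo', win, w)
print(np.allclose(pw, std))  # True
```

The factored kernel has only 843 free numbers even though it has 19,200 entries, which is where the savings come from.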
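The savings can be worked out for any layer size. The sketch below uses a hypothetical helper `conv_costs` that counts weights and multiplications with biases ignored. In general the separable-to-standard cost ratio is 1/C_out + 1/k², which here is 1/256 + 1/25.

```python
def conv_costs(h_out, w_out, k, c_in, c_out):
    """Hypothetical helper: weights and multiplications for one conv layer, biases ignored."""
    standard_params = k * k * c_in * c_out
    separable_params = k * k * c_in + c_in * c_out   # depthwise + pointwise
    # Every weight is used exactly once per output pixel
    standard_mults = h_out * w_out * standard_params
    separable_mults = h_out * w_out * separable_params
    return standard_params, separable_params, standard_mults, separable_mults

sp, dp, sm, dm = conv_costs(h_out=8, w_out=8, k=5, c_in=3, c_out=256)
print(sp, dp)             # 19200 843
print(sm, dm)             # 1228800 53952
print(round(sm / dm, 1))  # 22.8
```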

3. Building the Xception model

Data loading and preprocessing are omitted.

```python
#====================================#
# Xception network definition
#====================================#
from tensorflow.keras.models import Model
from tensorflow.keras import layers
from tensorflow.keras.layers import (Dense, Input, BatchNormalization, Activation, Conv2D,
                                     SeparableConv2D, MaxPooling2D, GlobalAveragePooling2D)


def Xception(input_shape=(299, 299, 3), classes=1000):
    img_input = Input(shape=input_shape)

    #=================#
    # Entry flow
    #=================#
    # block1
    # 299,299,3 -> 149,149,32 -> 147,147,64 (unpadded 3x3 convs, the first with stride 2)
    x = Conv2D(32, (3, 3), strides=(2, 2), use_bias=False, name='block1_conv1')(img_input)
    x = BatchNormalization(name='block1_conv1_bn')(x)
    x = Activation('relu', name='block1_conv1_act')(x)
    x = Conv2D(64, (3, 3), use_bias=False, name='block1_conv2')(x)
    x = BatchNormalization(name='block1_conv2_bn')(x)
    x = Activation('relu', name='block1_conv2_act')(x)

    # block2
    # 147,147,64 -> 74,74,128
    # Shortcut: strided 1x1 conv so its shape matches the pooled main branch
    residual = Conv2D(128, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv1')(x)
    x = BatchNormalization(name='block2_sepconv1_bn')(x)
    x = Activation('relu', name='block2_sepconv2_act')(x)
    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv2')(x)
    x = BatchNormalization(name='block2_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block2_pool')(x)
    x = layers.add([x, residual])

    # block3
    # 74,74,128 -> 37,37,256
    residual = Conv2D(256, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block3_sepconv1_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv1')(x)
    x = BatchNormalization(name='block3_sepconv1_bn')(x)
    x = Activation('relu', name='block3_sepconv2_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv2')(x)
    x = BatchNormalization(name='block3_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block3_pool')(x)
    x = layers.add([x, residual])

    # block4
    # 37,37,256 -> 19,19,728
    residual = Conv2D(728, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block4_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv1')(x)
    x = BatchNormalization(name='block4_sepconv1_bn')(x)
    x = Activation('relu', name='block4_sepconv2_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv2')(x)
    x = BatchNormalization(name='block4_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block4_pool')(x)
    x = layers.add([x, residual])

    #=================#
    # Middle flow
    #=================#
    # block5--block12: eight identical blocks with identity shortcuts
    # 19,19,728 -> 19,19,728
    for i in range(8):
        residual = x
        prefix = 'block' + str(i + 5)

        x = Activation('relu', name=prefix + '_sepconv1_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv1')(x)
        x = BatchNormalization(name=prefix + '_sepconv1_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv2_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv2')(x)
        x = BatchNormalization(name=prefix + '_sepconv2_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv3_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv3')(x)
        x = BatchNormalization(name=prefix + '_sepconv3_bn')(x)

        x = layers.add([x, residual])

    #=================#
    # Exit flow
    #=================#
    # block13
    # 19,19,728 -> 10,10,1024
    residual = Conv2D(1024, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block13_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block13_sepconv1')(x)
    x = BatchNormalization(name='block13_sepconv1_bn')(x)
    x = Activation('relu', name='block13_sepconv2_act')(x)
    x = SeparableConv2D(1024, (3, 3), padding='same', use_bias=False, name='block13_sepconv2')(x)
    x = BatchNormalization(name='block13_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block13_pool')(x)
    x = layers.add([x, residual])

    # block14
    # 10,10,1024 -> 10,10,2048
    x = SeparableConv2D(1536, (3, 3), padding='same', use_bias=False, name='block14_sepconv1')(x)
    x = BatchNormalization(name='block14_sepconv1_bn')(x)
    x = Activation('relu', name='block14_sepconv1_act')(x)
    x = SeparableConv2D(2048, (3, 3), padding='same', use_bias=False, name='block14_sepconv2')(x)
    x = BatchNormalization(name='block14_sepconv2_bn')(x)
    x = Activation('relu', name='block14_sepconv2_act')(x)

    # Classification head
    x = GlobalAveragePooling2D(name='avg_pool')(x)
    x = Dense(classes, activation='softmax', name='predictions')(x)

    inputs = img_input
    model = Model(inputs, x, name='xception')
    return model


model = Xception()
model.summary()
```
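The feature-map sizes through the entry and exit flows can be checked by hand with Keras's output-size rules: floor((n - k)/s) + 1 for `padding='valid'` (the default) and ceil(n/s) for `padding='same'`. A small sketch in plain Python; the helper names are hypothetical:

```python
import math

def valid_out(n, k, s=1):
    """Spatial size after an unpadded ('valid') conv or pool."""
    return (n - k) // s + 1

def same_out(n, s):
    """Spatial size after a 'same'-padded conv or pool (kernel size does not matter)."""
    return math.ceil(n / s)

sizes = {'input': 299}
n = valid_out(299, 3, s=2)
sizes['block1_conv1'] = n  # 3x3 conv, stride 2
n = valid_out(n, 3)
sizes['block1_conv2'] = n  # 3x3 conv, stride 1
# Blocks 2-4 and 13 end in MaxPooling2D(strides=2, padding='same'); their 1x1
# stride-2 'same' shortcut conv yields the same size, so layers.add() lines up.
for block in ('block2', 'block3', 'block4', 'block13'):
    n = same_out(n, 2)
    sizes[block] = n
print(sizes)
# {'input': 299, 'block1_conv1': 149, 'block1_conv2': 147, 'block2': 74, 'block3': 37, 'block4': 19, 'block13': 10}
```

The middle flow stays at 19×19 and block14 stays at 10×10, because every convolution there uses `padding='same'` with stride 1.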

Printing the model gives:

```
Model: "xception"
__________________________________________________________________________________________________
Layer (type)                    Output Shape         Param #     Connected to
==================================================================================================
input_1 (InputLayer)            [(None, 299, 299, 3) 0
__________________________________________________________________________________________________
block1_conv1 (Conv2D)           (None, 149, 149, 32) 864         input_1[0][0]
__________________________________________________________________________________________________
block1_conv1_bn (BatchNormaliza (None, 149, 149, 32) 128         block1_conv1[0][0]
__________________________________________________________________________________________________
......
__________________________________________________________________________________________________
block14_sepconv2 (SeparableConv (None, 10, 10, 2048) 3159552     block14_sepconv1_act[0][0]
__________________________________________________________________________________________________
block14_sepconv2_bn (BatchNorma (None, 10, 10, 2048) 8192        block14_sepconv2[0][0]
__________________________________________________________________________________________________
block14_sepconv2_act (Activatio (None, 10, 10, 2048) 0           block14_sepconv2_bn[0][0]
__________________________________________________________________________________________________
avg_pool (GlobalAveragePooling2 (None, 2048)         0           block14_sepconv2_act[0][0]
__________________________________________________________________________________________________
predictions (Dense)             (None, 1000)         2049000     avg_pool[0][0]
==================================================================================================
Total params: 22,910,480
Trainable params: 22,855,952
Non-trainable params: 54,528
__________________________________________________________________________________________________
```
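As a sanity check, the totals in the summary can be reproduced by hand. The sketch below is not from the original post; it assumes Keras's counting rules: a bias-free `Conv2D` has k·k·C_in·C_out weights, a bias-free `SeparableConv2D` has k·k·C_in (depthwise) + C_in·C_out (pointwise), and each `BatchNormalization` layer has 4·C parameters, of which the moving mean and moving variance (2·C) are non-trainable. The helpers `conv` and `sepconv` are hypothetical.

```python
def conv(c_in, c_out, k):
    """Bias-free Conv2D weight count."""
    return k * k * c_in * c_out

def sepconv(c_in, c_out, k=3):
    """Bias-free SeparableConv2D weight count: depthwise + pointwise."""
    return k * k * c_in + c_in * c_out

# (conv weights, channels of the BatchNormalization that follows), in network order
layers_spec = [
    (conv(3, 32, 3), 32), (conv(32, 64, 3), 64),                                    # block1
    (conv(64, 128, 1), 128), (sepconv(64, 128), 128), (sepconv(128, 128), 128),     # block2
    (conv(128, 256, 1), 256), (sepconv(128, 256), 256), (sepconv(256, 256), 256),   # block3
    (conv(256, 728, 1), 728), (sepconv(256, 728), 728), (sepconv(728, 728), 728),   # block4
]
layers_spec += [(sepconv(728, 728), 728)] * 24                                      # block5-12, 3 each
layers_spec += [
    (conv(728, 1024, 1), 1024), (sepconv(728, 728), 728), (sepconv(728, 1024), 1024),  # block13
    (sepconv(1024, 1536), 1536), (sepconv(1536, 2048), 2048),                        # block14
]

dense = 2048 * 1000 + 1000                     # predictions: weights + biases
conv_params = sum(p for p, _ in layers_spec) + dense
bn_channels = sum(c for _, c in layers_spec)
bn_trainable = 2 * bn_channels                 # gamma, beta
bn_non_trainable = 2 * bn_channels             # moving mean, moving variance

total = conv_params + bn_trainable + bn_non_trainable
print(sepconv(1536, 2048), dense)                        # 3159552 2049000
print(total, total - bn_non_trainable, bn_non_trainable) # 22910480 22855952 54528
```

All of the non-trainable parameters come from BatchNormalization statistics, which is why they make up such a small share of the total.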

Learning-rate setup, compilation, and training are omitted.

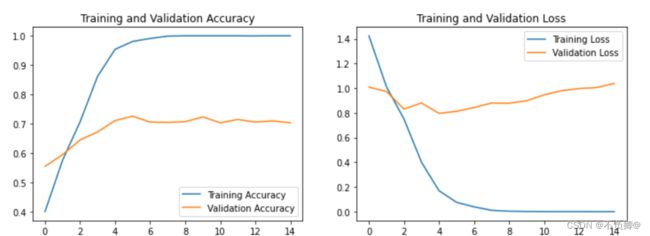

4. Evaluation

5. Takeaways

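As an improvement on Inception V3, Xception's main change is to replace the Inception modules with depthwise separable convolutions. This improves the model's accuracy without substantially increasing network complexity: its parameter count stays about the same as Inception V3's.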