Deep Learning in Practice 07: Animal Recognition with a Convolutional Neural Network (Xception)

- This post is a study log from the 365-Day Deep Learning Training Camp.

Reference article:

https://blog.csdn.net/qq_38389717/article/details/108904221

I. Preparation

1. Set up the GPU

If you are running on a CPU, you can drop this block.

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices(gpus[0], "GPU")

# Print the device list to confirm that a GPU is available
print(gpus)

2. Import the data

import os
import pathlib

import matplotlib.pyplot as plt
import numpy as np
import PIL
import tensorflow as tf

# Font settings so that Chinese labels and minus signs render correctly in plots
plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['axes.unicode_minus'] = False

# Fix the random seeds so that results are as reproducible as possible
np.random.seed(1)
tf.random.set_seed(1)

data_dir = pathlib.Path("./datasets/data/")

3. Inspect the data

image_count = len(list(data_dir.glob('*/*')))
print("Total number of images:", image_count)
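The glob above counts every entry two levels below the data directory, which includes anything sitting in hidden folders such as .ipynb_checkpoints. As a rough sketch of a per-class count (the helper name count_images_per_class and the list of extensions are assumptions for illustration, not part of the original post):

```python
import pathlib


def count_images_per_class(data_dir, extensions=(".jpg", ".jpeg", ".png", ".bmp", ".gif")):
    """Return {class_name: image_count} for each visible subdirectory of data_dir."""
    counts = {}
    for class_dir in sorted(pathlib.Path(data_dir).iterdir()):
        # Skip plain files and hidden folders such as .ipynb_checkpoints
        if not class_dir.is_dir() or class_dir.name.startswith("."):
            continue
        counts[class_dir.name] = sum(
            1 for f in class_dir.iterdir()
            if f.is_file() and f.suffix.lower() in extensions
        )
    return counts
```

Calling count_images_per_class("./datasets/data/") would then show at a glance whether the classes are balanced and whether any unexpected folder is present.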

II. Data preprocessing

1. Load the data

Use the image_dataset_from_directory method to load the images on disk into a tf.data.Dataset.

batch_size = 10
img_height = 299
img_width = 299

# For a detailed introduction to image_dataset_from_directory(), see:
# https://mtyjkh.blog.csdn.net/article/details/117018789
train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

# Same directory, split ratio, and seed as above, so the two subsets do not overlap
val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

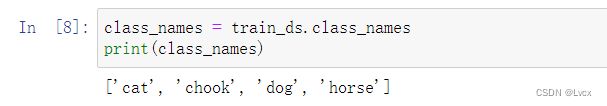

The class_names attribute returns the dataset's labels. They are the subdirectory names, in alphanumeric order.

class_names = train_ds.class_names

print(class_names)
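Because the labels follow the sorted directory names, the integer label of each class is simply its position in that sorted list. A minimal sketch, using made-up folder names in place of the real dataset:

```python
# Hypothetical subdirectory names; the real ones come from ./datasets/data/
folder_names = ["dog", "cat", "bird"]

# Labels are assigned by position in the sorted list of names
class_names = sorted(folder_names)
class_to_index = {name: index for index, name in enumerate(class_names)}

print(class_names)
print(class_to_index)
```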

Side note: how to get rid of a stray .ipynb_checkpoints class:

# %autosave 0

# os.removedirs("./datasets/data/" + "/.ipynb_checkpoints")
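os.removedirs only removes empty directories, so the commented-out call above fails if the checkpoint folder still contains files. A sketch that uses shutil.rmtree instead (the helper name remove_checkpoint_dirs is an assumption for illustration):

```python
import pathlib
import shutil


def remove_checkpoint_dirs(data_dir):
    """Delete every .ipynb_checkpoints folder under data_dir and return the removed paths."""
    removed = []
    # Materialize the matches first so the tree is not modified while it is being walked
    for path in list(pathlib.Path(data_dir).rglob(".ipynb_checkpoints")):
        if path.is_dir():
            shutil.rmtree(path)
            removed.append(str(path))
    return removed
```

Running it once before image_dataset_from_directory keeps the checkpoint folder from being picked up as an extra class.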

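3. Check the data again

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

- image_batch is a tensor of shape (10, 299, 299, 3): a batch of 10 images (batch_size = 10), each of shape 299 × 299 × 3 (the last dimension is the RGB color channels).

- labels_batch is a tensor of shape (10,); these are the labels for the 10 images.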

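4. Configure the dataset

- shuffle(): shuffles the data. For a detailed introduction to this function, see: https://zhuanlan.zhihu.com/p/42417456

- prefetch(): prefetches data to speed up execution.

- cache(): caches the dataset in memory to speed up execution.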

# AUTOTUNE = tf.data.AUTOTUNE
# Error: AttributeError: module 'tensorflow._api.v2.data' has no attribute 'AUTOTUNE'
# Fix: on TensorFlow versions that raise this error, use the experimental namespace instead
AUTOTUNE = tf.data.experimental.AUTOTUNE

train_ds = (
    train_ds.cache()
    .shuffle(1000)
    # .map(train_preprocessing)    # a preprocessing function can be set here
    # .batch(batch_size)           # batch_size was already set in image_dataset_from_directory
    .prefetch(buffer_size=AUTOTUNE)
)

val_ds = (
    val_ds.cache()
    .shuffle(1000)
    # .map(val_preprocessing)      # a preprocessing function can be set here
    # .batch(batch_size)           # batch_size was already set in image_dataset_from_directory
    .prefetch(buffer_size=AUTOTUNE)
)

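III. Build the model

Xception is an improved version of InceptionV3 that Google proposed after the Inception family, in which the Inception modules are replaced with depthwise separable convolutions. It has roughly the same number of parameters as Inception-v3 (about 23M).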

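1. Depthwise separable convolution

A depthwise separable convolution is a kind of factorized convolution. It splits into two smaller operations: a depthwise convolution and a pointwise convolution.

(1) Standard convolution

Take a 12 × 12 × 3 input feature map. Convolving it with one 5 × 5 × 3 kernel (stride 1, no padding) gives an 8 × 8 × 1 output feature map. With 256 such kernels, we get an 8 × 8 × 256 output feature map.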
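The shrink from 12 to 8 follows from the usual output-size rule for a convolution without padding: (input - kernel) / stride + 1. A small sketch, assuming stride 1 and no padding as in the example:

```python
def conv_output_size(input_size, kernel_size, stride=1):
    """Spatial output size of a convolution with no padding ('valid')."""
    return (input_size - kernel_size) // stride + 1


height = conv_output_size(12, 5)
width = conv_output_size(12, 5)
num_kernels = 256

print((height, width, 1))            # one 5x5x3 kernel    -> (8, 8, 1)
print((height, width, num_kernels))  # 256 5x5x3 kernels   -> (8, 8, 256)
```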

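That is what a standard convolution does. What about the depthwise and pointwise convolutions?

(2) Depthwise convolution

Unlike a standard convolution, the kernel here is split into single-channel kernels. Each input channel is convolved on its own, without changing the depth of the input, so the output has the same number of channels as the input. For the 12 × 12 × 3 input, a depthwise convolution with three 5 × 5 × 1 kernels produces an 8 × 8 × 3 output feature map. The channel count stays at 3, which raises a question: with so few channels, can the feature map carry enough useful information?

(3) Pointwise convolution

A pointwise convolution is a 1 × 1 convolution. Its main job is to increase or reduce the number of channels of a feature map.

The depthwise step produced an 8 × 8 × 3 feature map. Convolving it with 256 kernels of size 1 × 1 × 3 gives an 8 × 8 × 256 output, the same shape as the standard convolution.

(4) Why use depthwise separable convolution?

A depthwise separable convolution reaches the same output shape with fewer parameters and less computation.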
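To put numbers on that claim, the sketch below counts weights and multiplications for the running example (12 × 12 × 3 input, 5 × 5 kernels, 8 × 8 × 256 output), ignoring bias terms. The function names are chosen here for illustration only.

```python
def standard_conv_cost(k, c_in, c_out, out_h, out_w):
    """Weights and multiplications of a standard k x k convolution (no bias)."""
    params = k * k * c_in * c_out
    return params, params * out_h * out_w


def separable_conv_cost(k, c_in, c_out, out_h, out_w):
    """Weights and multiplications of a depthwise (k x k) plus pointwise (1 x 1) convolution (no bias)."""
    depthwise = k * k * c_in      # one k x k kernel per input channel
    pointwise = c_in * c_out      # c_out kernels of size 1 x 1 x c_in
    params = depthwise + pointwise
    return params, params * out_h * out_w


std_params, std_mults = standard_conv_cost(5, 3, 256, 8, 8)
sep_params, sep_mults = separable_conv_cost(5, 3, 256, 8, 8)
print(std_params, std_mults)  # 19200 1228800
print(sep_params, sep_mults)  # 843 53952
```

For this example the separable version needs about 23 times fewer weights and multiplications than the standard convolution.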

2. Build the Xception model

#====================================#
# The Xception network
#====================================#

from tensorflow.keras.preprocessing import image

from tensorflow.keras.models import Model

from tensorflow.keras import layers

from tensorflow.keras.layers import Dense,Input,BatchNormalization,Activation,Conv2D,SeparableConv2D,MaxPooling2D

from tensorflow.keras.layers import GlobalAveragePooling2D,GlobalMaxPooling2D

from tensorflow.keras import backend as K

from tensorflow.keras.applications.imagenet_utils import decode_predictions

def Xception(input_shape=(299, 299, 3), classes=1000):
    img_input = Input(shape=input_shape)

    #=================#
    # Entry flow
    #=================#
    # block1
    # 299,299,3 -> 147,147,64
    x = Conv2D(32, (3, 3), strides=(2, 2), use_bias=False, name='block1_conv1')(img_input)
    x = BatchNormalization(name='block1_conv1_bn')(x)
    x = Activation('relu', name='block1_conv1_act')(x)
    x = Conv2D(64, (3, 3), use_bias=False, name='block1_conv2')(x)
    x = BatchNormalization(name='block1_conv2_bn')(x)
    x = Activation('relu', name='block1_conv2_act')(x)

    # block2
    # 147,147,64 -> 74,74,128
    # 1x1 strided convolution on the shortcut so it matches the main branch's shape
    residual = Conv2D(128, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv1')(x)
    x = BatchNormalization(name='block2_sepconv1_bn')(x)
    x = Activation('relu', name='block2_sepconv2_act')(x)
    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv2')(x)
    x = BatchNormalization(name='block2_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block2_pool')(x)
    x = layers.add([x, residual])

    # block3
    # 74,74,128 -> 37,37,256
    residual = Conv2D(256, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block3_sepconv1_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv1')(x)
    x = BatchNormalization(name='block3_sepconv1_bn')(x)
    x = Activation('relu', name='block3_sepconv2_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv2')(x)
    x = BatchNormalization(name='block3_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block3_pool')(x)
    x = layers.add([x, residual])

    # block4
    # 37,37,256 -> 19,19,728
    residual = Conv2D(728, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block4_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv1')(x)
    x = BatchNormalization(name='block4_sepconv1_bn')(x)
    x = Activation('relu', name='block4_sepconv2_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv2')(x)
    x = BatchNormalization(name='block4_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block4_pool')(x)
    x = layers.add([x, residual])

    #=================#
    # Middle flow
    #=================#
    # block5--block12
    # 19,19,728 -> 19,19,728
    for i in range(8):
        residual = x
        prefix = 'block' + str(i + 5)

        x = Activation('relu', name=prefix + '_sepconv1_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv1')(x)
        x = BatchNormalization(name=prefix + '_sepconv1_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv2_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv2')(x)
        x = BatchNormalization(name=prefix + '_sepconv2_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv3_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv3')(x)
        x = BatchNormalization(name=prefix + '_sepconv3_bn')(x)

        x = layers.add([x, residual])

    #=================#
    # Exit flow
    #=================#
    # block13
    # 19,19,728 -> 10,10,1024
    residual = Conv2D(1024, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block13_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block13_sepconv1')(x)
    x = BatchNormalization(name='block13_sepconv1_bn')(x)
    x = Activation('relu', name='block13_sepconv2_act')(x)
    x = SeparableConv2D(1024, (3, 3), padding='same', use_bias=False, name='block13_sepconv2')(x)
    x = BatchNormalization(name='block13_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block13_pool')(x)
    x = layers.add([x, residual])

    # block14
    # 10,10,1024 -> 10,10,2048
    x = SeparableConv2D(1536, (3, 3), padding='same', use_bias=False, name='block14_sepconv1')(x)
    x = BatchNormalization(name='block14_sepconv1_bn')(x)
    x = Activation('relu', name='block14_sepconv1_act')(x)

    x = SeparableConv2D(2048, (3, 3), padding='same', use_bias=False, name='block14_sepconv2')(x)
    x = BatchNormalization(name='block14_sepconv2_bn')(x)
    x = Activation('relu', name='block14_sepconv2_act')(x)

    # Classification head: global average pooling followed by a softmax layer
    x = GlobalAveragePooling2D(name='avg_pool')(x)
    x = Dense(classes, activation='softmax', name='predictions')(x)

    inputs = img_input
    model = Model(inputs, x, name='xception')

    return model

model = Xception()

# Print the model summary

model.summary()

Model: "xception"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) [(None, 299, 299, 3) 0

__________________________________________________________________________________________________

block1_conv1 (Conv2D) (None, 149, 149, 32) 864 input_1[0][0]

__________________________________________________________________________________________________

block1_conv1_bn (BatchNormaliza (None, 149, 149, 32) 128 block1_conv1[0][0]

__________________________________________________________________________________________________

block1_conv1_act (Activation) (None, 149, 149, 32) 0 block1_conv1_bn[0][0]

__________________________________________________________________________________________________

block1_conv2 (Conv2D) (None, 147, 147, 64) 18432 block1_conv1_act[0][0]

__________________________________________________________________________________________________

block1_conv2_bn (BatchNormaliza (None, 147, 147, 64) 256 block1_conv2[0][0]

__________________________________________________________________________________________________

block1_conv2_act (Activation) (None, 147, 147, 64) 0 block1_conv2_bn[0][0]

__________________________________________________________________________________________________

block2_sepconv1 (SeparableConv2 (None, 147, 147, 128 8768 block1_conv2_act[0][0]

__________________________________________________________________________________________________

block2_sepconv1_bn (BatchNormal (None, 147, 147, 128 512 block2_sepconv1[0][0]

__________________________________________________________________________________________________

block2_sepconv2_act (Activation (None, 147, 147, 128 0 block2_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block2_sepconv2 (SeparableConv2 (None, 147, 147, 128 17536 block2_sepconv2_act[0][0]

__________________________________________________________________________________________________

block2_sepconv2_bn (BatchNormal (None, 147, 147, 128 512 block2_sepconv2[0][0]

__________________________________________________________________________________________________

conv2d (Conv2D) (None, 74, 74, 128) 8192 block1_conv2_act[0][0]

__________________________________________________________________________________________________

block2_pool (MaxPooling2D) (None, 74, 74, 128) 0 block2_sepconv2_bn[0][0]

__________________________________________________________________________________________________

batch_normalization (BatchNorma (None, 74, 74, 128) 512 conv2d[0][0]

__________________________________________________________________________________________________

add (Add) (None, 74, 74, 128) 0 block2_pool[0][0]

batch_normalization[0][0]

__________________________________________________________________________________________________

block3_sepconv1_act (Activation (None, 74, 74, 128) 0 add[0][0]

__________________________________________________________________________________________________

block3_sepconv1 (SeparableConv2 (None, 74, 74, 256) 33920 block3_sepconv1_act[0][0]

__________________________________________________________________________________________________

block3_sepconv1_bn (BatchNormal (None, 74, 74, 256) 1024 block3_sepconv1[0][0]

__________________________________________________________________________________________________

block3_sepconv2_act (Activation (None, 74, 74, 256) 0 block3_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block3_sepconv2 (SeparableConv2 (None, 74, 74, 256) 67840 block3_sepconv2_act[0][0]

__________________________________________________________________________________________________

block3_sepconv2_bn (BatchNormal (None, 74, 74, 256) 1024 block3_sepconv2[0][0]

__________________________________________________________________________________________________

conv2d_1 (Conv2D) (None, 37, 37, 256) 32768 add[0][0]

__________________________________________________________________________________________________

block3_pool (MaxPooling2D) (None, 37, 37, 256) 0 block3_sepconv2_bn[0][0]

__________________________________________________________________________________________________

batch_normalization_1 (BatchNor (None, 37, 37, 256) 1024 conv2d_1[0][0]

__________________________________________________________________________________________________

add_1 (Add) (None, 37, 37, 256) 0 block3_pool[0][0]

batch_normalization_1[0][0]

__________________________________________________________________________________________________

block4_sepconv1_act (Activation (None, 37, 37, 256) 0 add_1[0][0]

__________________________________________________________________________________________________

block4_sepconv1 (SeparableConv2 (None, 37, 37, 728) 188672 block4_sepconv1_act[0][0]

__________________________________________________________________________________________________

block4_sepconv1_bn (BatchNormal (None, 37, 37, 728) 2912 block4_sepconv1[0][0]

__________________________________________________________________________________________________

block4_sepconv2_act (Activation (None, 37, 37, 728) 0 block4_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block4_sepconv2 (SeparableConv2 (None, 37, 37, 728) 536536 block4_sepconv2_act[0][0]

__________________________________________________________________________________________________

block4_sepconv2_bn (BatchNormal (None, 37, 37, 728) 2912 block4_sepconv2[0][0]

__________________________________________________________________________________________________

conv2d_2 (Conv2D) (None, 19, 19, 728) 186368 add_1[0][0]

__________________________________________________________________________________________________

block4_pool (MaxPooling2D) (None, 19, 19, 728) 0 block4_sepconv2_bn[0][0]

__________________________________________________________________________________________________

batch_normalization_2 (BatchNor (None, 19, 19, 728) 2912 conv2d_2[0][0]

__________________________________________________________________________________________________

add_2 (Add) (None, 19, 19, 728) 0 block4_pool[0][0]

batch_normalization_2[0][0]

__________________________________________________________________________________________________

block5_sepconv1_act (Activation (None, 19, 19, 728) 0 add_2[0][0]

__________________________________________________________________________________________________

block5_sepconv1 (SeparableConv2 (None, 19, 19, 728) 536536 block5_sepconv1_act[0][0]

__________________________________________________________________________________________________

block5_sepconv1_bn (BatchNormal (None, 19, 19, 728) 2912 block5_sepconv1[0][0]

__________________________________________________________________________________________________

block5_sepconv2_act (Activation (None, 19, 19, 728) 0 block5_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block5_sepconv2 (SeparableConv2 (None, 19, 19, 728) 536536 block5_sepconv2_act[0][0]

__________________________________________________________________________________________________

block5_sepconv2_bn (BatchNormal (None, 19, 19, 728) 2912 block5_sepconv2[0][0]

__________________________________________________________________________________________________

block5_sepconv3_act (Activation (None, 19, 19, 728) 0 block5_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block5_sepconv3 (SeparableConv2 (None, 19, 19, 728) 536536 block5_sepconv3_act[0][0]

__________________________________________________________________________________________________

block5_sepconv3_bn (BatchNormal (None, 19, 19, 728) 2912 block5_sepconv3[0][0]

__________________________________________________________________________________________________

add_3 (Add) (None, 19, 19, 728) 0 block5_sepconv3_bn[0][0]

add_2[0][0]

__________________________________________________________________________________________________

block6_sepconv1_act (Activation (None, 19, 19, 728) 0 add_3[0][0]

__________________________________________________________________________________________________

block6_sepconv1 (SeparableConv2 (None, 19, 19, 728) 536536 block6_sepconv1_act[0][0]

__________________________________________________________________________________________________

block6_sepconv1_bn (BatchNormal (None, 19, 19, 728) 2912 block6_sepconv1[0][0]

__________________________________________________________________________________________________

block6_sepconv2_act (Activation (None, 19, 19, 728) 0 block6_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block6_sepconv2 (SeparableConv2 (None, 19, 19, 728) 536536 block6_sepconv2_act[0][0]

__________________________________________________________________________________________________

block6_sepconv2_bn (BatchNormal (None, 19, 19, 728) 2912 block6_sepconv2[0][0]

__________________________________________________________________________________________________

block6_sepconv3_act (Activation (None, 19, 19, 728) 0 block6_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block6_sepconv3 (SeparableConv2 (None, 19, 19, 728) 536536 block6_sepconv3_act[0][0]

__________________________________________________________________________________________________

block6_sepconv3_bn (BatchNormal (None, 19, 19, 728) 2912 block6_sepconv3[0][0]

__________________________________________________________________________________________________

add_4 (Add) (None, 19, 19, 728) 0 block6_sepconv3_bn[0][0]

add_3[0][0]

__________________________________________________________________________________________________

block7_sepconv1_act (Activation (None, 19, 19, 728) 0 add_4[0][0]

__________________________________________________________________________________________________

block7_sepconv1 (SeparableConv2 (None, 19, 19, 728) 536536 block7_sepconv1_act[0][0]

__________________________________________________________________________________________________

block7_sepconv1_bn (BatchNormal (None, 19, 19, 728) 2912 block7_sepconv1[0][0]

__________________________________________________________________________________________________

block7_sepconv2_act (Activation (None, 19, 19, 728) 0 block7_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block7_sepconv2 (SeparableConv2 (None, 19, 19, 728) 536536 block7_sepconv2_act[0][0]

__________________________________________________________________________________________________

block7_sepconv2_bn (BatchNormal (None, 19, 19, 728) 2912 block7_sepconv2[0][0]

__________________________________________________________________________________________________

block7_sepconv3_act (Activation (None, 19, 19, 728) 0 block7_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block7_sepconv3 (SeparableConv2 (None, 19, 19, 728) 536536 block7_sepconv3_act[0][0]

__________________________________________________________________________________________________

block7_sepconv3_bn (BatchNormal (None, 19, 19, 728) 2912 block7_sepconv3[0][0]

__________________________________________________________________________________________________

add_5 (Add) (None, 19, 19, 728) 0 block7_sepconv3_bn[0][0]

add_4[0][0]

__________________________________________________________________________________________________

block8_sepconv1_act (Activation (None, 19, 19, 728) 0 add_5[0][0]

__________________________________________________________________________________________________

block8_sepconv1 (SeparableConv2 (None, 19, 19, 728) 536536 block8_sepconv1_act[0][0]

__________________________________________________________________________________________________

block8_sepconv1_bn (BatchNormal (None, 19, 19, 728) 2912 block8_sepconv1[0][0]

__________________________________________________________________________________________________

block8_sepconv2_act (Activation (None, 19, 19, 728) 0 block8_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block8_sepconv2 (SeparableConv2 (None, 19, 19, 728) 536536 block8_sepconv2_act[0][0]

__________________________________________________________________________________________________

block8_sepconv2_bn (BatchNormal (None, 19, 19, 728) 2912 block8_sepconv2[0][0]

__________________________________________________________________________________________________

block8_sepconv3_act (Activation (None, 19, 19, 728) 0 block8_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block8_sepconv3 (SeparableConv2 (None, 19, 19, 728) 536536 block8_sepconv3_act[0][0]

__________________________________________________________________________________________________

block8_sepconv3_bn (BatchNormal (None, 19, 19, 728) 2912 block8_sepconv3[0][0]

__________________________________________________________________________________________________

add_6 (Add) (None, 19, 19, 728) 0 block8_sepconv3_bn[0][0]

add_5[0][0]

__________________________________________________________________________________________________

block9_sepconv1_act (Activation (None, 19, 19, 728) 0 add_6[0][0]

__________________________________________________________________________________________________

block9_sepconv1 (SeparableConv2 (None, 19, 19, 728) 536536 block9_sepconv1_act[0][0]

__________________________________________________________________________________________________

block9_sepconv1_bn (BatchNormal (None, 19, 19, 728) 2912 block9_sepconv1[0][0]

__________________________________________________________________________________________________

block9_sepconv2_act (Activation (None, 19, 19, 728) 0 block9_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block9_sepconv2 (SeparableConv2 (None, 19, 19, 728) 536536 block9_sepconv2_act[0][0]

__________________________________________________________________________________________________

block9_sepconv2_bn (BatchNormal (None, 19, 19, 728) 2912 block9_sepconv2[0][0]

__________________________________________________________________________________________________

block9_sepconv3_act (Activation (None, 19, 19, 728) 0 block9_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block9_sepconv3 (SeparableConv2 (None, 19, 19, 728) 536536 block9_sepconv3_act[0][0]

__________________________________________________________________________________________________

block9_sepconv3_bn (BatchNormal (None, 19, 19, 728) 2912 block9_sepconv3[0][0]

__________________________________________________________________________________________________

add_7 (Add) (None, 19, 19, 728) 0 block9_sepconv3_bn[0][0]

add_6[0][0]

__________________________________________________________________________________________________

block10_sepconv1_act (Activatio (None, 19, 19, 728) 0 add_7[0][0]

__________________________________________________________________________________________________

block10_sepconv1 (SeparableConv (None, 19, 19, 728) 536536 block10_sepconv1_act[0][0]

__________________________________________________________________________________________________

block10_sepconv1_bn (BatchNorma (None, 19, 19, 728) 2912 block10_sepconv1[0][0]

__________________________________________________________________________________________________

block10_sepconv2_act (Activatio (None, 19, 19, 728) 0 block10_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block10_sepconv2 (SeparableConv (None, 19, 19, 728) 536536 block10_sepconv2_act[0][0]

__________________________________________________________________________________________________

block10_sepconv2_bn (BatchNorma (None, 19, 19, 728) 2912 block10_sepconv2[0][0]

__________________________________________________________________________________________________

block10_sepconv3_act (Activatio (None, 19, 19, 728) 0 block10_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block10_sepconv3 (SeparableConv (None, 19, 19, 728) 536536 block10_sepconv3_act[0][0]

__________________________________________________________________________________________________

block10_sepconv3_bn (BatchNorma (None, 19, 19, 728) 2912 block10_sepconv3[0][0]

__________________________________________________________________________________________________

add_8 (Add) (None, 19, 19, 728) 0 block10_sepconv3_bn[0][0]

add_7[0][0]

__________________________________________________________________________________________________

block11_sepconv1_act (Activatio (None, 19, 19, 728) 0 add_8[0][0]

__________________________________________________________________________________________________

block11_sepconv1 (SeparableConv (None, 19, 19, 728) 536536 block11_sepconv1_act[0][0]

__________________________________________________________________________________________________

block11_sepconv1_bn (BatchNorma (None, 19, 19, 728) 2912 block11_sepconv1[0][0]

__________________________________________________________________________________________________

block11_sepconv2_act (Activatio (None, 19, 19, 728) 0 block11_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block11_sepconv2 (SeparableConv (None, 19, 19, 728) 536536 block11_sepconv2_act[0][0]

__________________________________________________________________________________________________

block11_sepconv2_bn (BatchNorma (None, 19, 19, 728) 2912 block11_sepconv2[0][0]

__________________________________________________________________________________________________

block11_sepconv3_act (Activatio (None, 19, 19, 728) 0 block11_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block11_sepconv3 (SeparableConv (None, 19, 19, 728) 536536 block11_sepconv3_act[0][0]

__________________________________________________________________________________________________

block11_sepconv3_bn (BatchNorma (None, 19, 19, 728) 2912 block11_sepconv3[0][0]

__________________________________________________________________________________________________

add_9 (Add) (None, 19, 19, 728) 0 block11_sepconv3_bn[0][0]

add_8[0][0]

__________________________________________________________________________________________________

block12_sepconv1_act (Activatio (None, 19, 19, 728) 0 add_9[0][0]

__________________________________________________________________________________________________

block12_sepconv1 (SeparableConv (None, 19, 19, 728) 536536 block12_sepconv1_act[0][0]

__________________________________________________________________________________________________

block12_sepconv1_bn (BatchNorma (None, 19, 19, 728) 2912 block12_sepconv1[0][0]

__________________________________________________________________________________________________

block12_sepconv2_act (Activatio (None, 19, 19, 728) 0 block12_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block12_sepconv2 (SeparableConv (None, 19, 19, 728) 536536 block12_sepconv2_act[0][0]

__________________________________________________________________________________________________

block12_sepconv2_bn (BatchNorma (None, 19, 19, 728) 2912 block12_sepconv2[0][0]

__________________________________________________________________________________________________

block12_sepconv3_act (Activatio (None, 19, 19, 728) 0 block12_sepconv2_bn[0][0]

__________________________________________________________________________________________________

block12_sepconv3 (SeparableConv (None, 19, 19, 728) 536536 block12_sepconv3_act[0][0]

__________________________________________________________________________________________________

block12_sepconv3_bn (BatchNorma (None, 19, 19, 728) 2912 block12_sepconv3[0][0]

__________________________________________________________________________________________________

add_10 (Add) (None, 19, 19, 728) 0 block12_sepconv3_bn[0][0]

add_9[0][0]

__________________________________________________________________________________________________

block13_sepconv1_act (Activatio (None, 19, 19, 728) 0 add_10[0][0]

__________________________________________________________________________________________________

block13_sepconv1 (SeparableConv (None, 19, 19, 728) 536536 block13_sepconv1_act[0][0]

__________________________________________________________________________________________________

block13_sepconv1_bn (BatchNorma (None, 19, 19, 728) 2912 block13_sepconv1[0][0]

__________________________________________________________________________________________________

block13_sepconv2_act (Activatio (None, 19, 19, 728) 0 block13_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block13_sepconv2 (SeparableConv (None, 19, 19, 1024) 752024 block13_sepconv2_act[0][0]

__________________________________________________________________________________________________

block13_sepconv2_bn (BatchNorma (None, 19, 19, 1024) 4096 block13_sepconv2[0][0]

__________________________________________________________________________________________________

conv2d_3 (Conv2D) (None, 10, 10, 1024) 745472 add_10[0][0]

__________________________________________________________________________________________________

block13_pool (MaxPooling2D) (None, 10, 10, 1024) 0 block13_sepconv2_bn[0][0]

__________________________________________________________________________________________________

batch_normalization_3 (BatchNor (None, 10, 10, 1024) 4096 conv2d_3[0][0]

__________________________________________________________________________________________________

add_11 (Add) (None, 10, 10, 1024) 0 block13_pool[0][0]

batch_normalization_3[0][0]

__________________________________________________________________________________________________

block14_sepconv1 (SeparableConv (None, 10, 10, 1536) 1582080 add_11[0][0]

__________________________________________________________________________________________________

block14_sepconv1_bn (BatchNorma (None, 10, 10, 1536) 6144 block14_sepconv1[0][0]

__________________________________________________________________________________________________

block14_sepconv1_act (Activatio (None, 10, 10, 1536) 0 block14_sepconv1_bn[0][0]

__________________________________________________________________________________________________

block14_sepconv2 (SeparableConv (None, 10, 10, 2048) 3159552 block14_sepconv1_act[0][0]

__________________________________________________________________________________________________

block14_sepconv2_bn (BatchNorma (None, 10, 10, 2048) 8192 block14_sepconv2[0][0]

__________________________________________________________________________________________________

block14_sepconv2_act (Activatio (None, 10, 10, 2048) 0 block14_sepconv2_bn[0][0]

__________________________________________________________________________________________________

avg_pool (GlobalAveragePooling2 (None, 2048) 0 block14_sepconv2_act[0][0]

__________________________________________________________________________________________________

predictions (Dense) (None, 1000) 2049000 avg_pool[0][0]

==================================================================================================

Total params: 22,910,480

Trainable params: 22,855,952

Non-trainable params: 54,528

__________________________________________________________________________________________________

IV. Setting a Dynamic Learning Rate

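First, the pros and cons of large and small learning rates:

- Large learning rate

  - Pros

    - Speeds up learning.

    - Helps escape local optima.

  - Cons

    - Can prevent training from converging.

    - Used alone, a large learning rate tends to yield an imprecise model.

- Small learning rate

  - Pros

    - Helps the model converge and refine its weights.

    - Improves model accuracy.

  - Cons

    - Makes it hard to escape local optima.

    - Converges slowly.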

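Note: the dynamic learning rate used here is exponential decay (ExponentialDecay). The schedule is evaluated on the optimizer's cumulative step count (one step per batch), so the learning rate (learning_rate) keeps decaying across epochs rather than being reset to the initial learning rate (initial_learning_rate) at the start of each epoch. It is computed as follows: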

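learning_rate = initial_learning_rate * decay_rate^(step / decay_steps)

With staircase=True, as in the code below, step / decay_steps is an integer division, so the learning rate drops in discrete jumps every decay_steps steps.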

# Set the initial learning rate

initial_learning_rate = 1e-4

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(

    initial_learning_rate,

    decay_steps=300,  # Important: this counts steps (batches), not epochs

    decay_rate=0.96,  # After each decay, lr becomes decay_rate * lr

    staircase=True)

# Pass the exponential-decay schedule to the optimizer

optimizer = tf.keras.optimizers.Adam(learning_rate=lr_schedule)
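To make the formula concrete, here is a minimal pure-Python sketch that restates it with the same values as above (1e-4, 300, 0.96) and staircase behaviour. The function name `staircase_exponential_decay` is hypothetical and exists only for illustration. It is not part of TensorFlow; it simply mirrors the formula as written in this post.

```python
def staircase_exponential_decay(step, initial_lr=1e-4, decay_steps=300, decay_rate=0.96):
    """Illustrative restatement of the decay formula with staircase=True."""
    # Integer division keeps the rate constant within each block of
    # `decay_steps` steps, then multiplies it by `decay_rate` once per block.
    return initial_lr * decay_rate ** (step // decay_steps)


# A step is one batch update, so step 300 is reached after 300 batches.
for step in (0, 299, 300, 600):
    print(step, staircase_exponential_decay(step))
```

With batch_size = 10, 300 steps correspond to 3,000 training images, so how many epochs pass between two decays depends on the size of the training set.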

V. Compiling the Model

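Before the model can be trained, it needs a few more settings. These are added in the model's compile step:

- Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

- Loss function (loss): measures how far the predictions are from the true values.

- Metrics (metrics): used to monitor the training and testing steps. The example below uses accuracy, the fraction of images that are classified correctly.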

model.compile(optimizer=optimizer,

              loss='sparse_categorical_crossentropy',

              metrics=['accuracy'])
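As a rough illustration of what the loss and metric chosen above measure, the following pure-Python sketch computes sparse categorical cross-entropy and accuracy for a tiny batch. The labels and probabilities are made up for the example, and the helper functions are hypothetical stand-ins rather than the Keras implementations (which, among other things, add numerical safeguards that are omitted here).

```python
import math


def sparse_categorical_crossentropy(labels, probabilities):
    """Mean of -log(p[true_class]); labels are integer class indices."""
    losses = [-math.log(p[y]) for y, p in zip(labels, probabilities)]
    return sum(losses) / len(losses)


def accuracy(labels, probabilities):
    """Fraction of samples whose highest-probability class equals the label."""
    hits = sum(1 for y, p in zip(labels, probabilities) if p.index(max(p)) == y)
    return hits / len(labels)


# Hypothetical batch: two images, three classes.
labels = [0, 2]
probabilities = [[0.7, 0.2, 0.1],
                 [0.5, 0.3, 0.2]]

print(sparse_categorical_crossentropy(labels, probabilities))
print(accuracy(labels, probabilities))
```

The "sparse" variant fits this pipeline because image_dataset_from_directory yields integer labels by default, not one-hot vectors.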

VI. Training the Model

epochs = 5

history = model.fit(

train_ds,

validation_data=val_ds,

epochs=epochs

)

VII. Model Evaluation

1. Accuracy and Loss curves

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

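2. Confusion matrix

Seaborn is a plotting library that wraps the Matplotlib core library in a higher-level API, making it easy to draw more attractive figures. Its appeal lies mainly in more pleasing color palettes and more refined styling of plot elements.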

from sklearn.metrics import confusion_matrix

import seaborn as sns

import pandas as pd

# Define a function that plots the confusion matrix

def plot_cm(labels, predictions):

    # Build the confusion matrix (rows: true classes, columns: predicted classes)

    conf_numpy = confusion_matrix(labels, predictions)

    # Convert the matrix to a DataFrame

    conf_df = pd.DataFrame(conf_numpy, index=class_names, columns=class_names)

    plt.figure(figsize=(8, 7))

    sns.heatmap(conf_df, annot=True, fmt="d", cmap="BuPu")

    plt.title('Confusion Matrix', fontsize=15)

    plt.ylabel('True label', fontsize=14)

    plt.xlabel('Predicted label', fontsize=14)

val_pre = []

val_label = []

for images, labels in val_ds:  # Optionally use only part of the validation data (e.g. val_ds.take(1))

    for image, label in zip(images, labels):

        # Add a batch dimension to the image

        img_array = tf.expand_dims(image, 0)

        # Use the model to predict the animal in the image

        prediction = model.predict(img_array)

        val_pre.append(class_names[np.argmax(prediction)])

        val_label.append(class_names[label])

plot_cm(val_label, val_pre)
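To show what the heatmap encodes, here is a small pure-Python sketch that builds the same kind of matrix by hand (rows are true classes, columns are predicted classes, matching scikit-learn's convention) and derives per-class recall from it. The class names and label lists are hypothetical and are not results from the model above. The helpers `confusion_counts` and `per_class_recall` are illustrative names, not library functions.

```python
def confusion_counts(true_labels, predicted_labels, class_names):
    """Return a matrix with rows = true classes and columns = predicted classes."""
    index = {name: i for i, name in enumerate(class_names)}
    matrix = [[0] * len(class_names) for _ in class_names]
    for truth, guess in zip(true_labels, predicted_labels):
        matrix[index[truth]][index[guess]] += 1
    return matrix


def per_class_recall(matrix):
    """Diagonal count divided by the row total (0.0 for a class with no samples)."""
    return [row[i] / sum(row) if sum(row) else 0.0 for i, row in enumerate(matrix)]


# Hypothetical labels for illustration only.
names = ["cat", "dog"]
truth = ["cat", "cat", "dog", "dog"]
preds = ["cat", "dog", "dog", "dog"]

matrix = confusion_counts(truth, preds, names)
print(matrix)
print(per_class_recall(matrix))
```

Off-diagonal cells show which classes get confused with each other, which a single accuracy figure cannot reveal.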

VIII. Saving and Loading the Model

# Save the model

model.save('model/24_model.h5')

# Load the model

new_model = tf.keras.models.load_model('model/24_model.h5')