RNN&GNU&LSTM与PyTorch

文章目录

- RNN

- GRU

- LSTM

- 辅助函数

-

- 问题

- pad_sequence & unpad_sequence

- pack_padded_sequence & pad_packed_sequence

- pack_sequence & unpack_sequence

- 语言翻译例

官方API

RNN: 循环神经网络 short-term memory 只能记住比较短的时间序列的信息,时间长了会遗忘

GRU: 折中方案,相对于LSTM更加简单,计算成本更低

LSTM: 长短期记忆神经网络 long short-term memory 能够记住比较长的时间序列信息

RNN

$$ h'=tanh(W_{ih}x+b_{ih}+W_{hh}h+b_{hh})\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ W_{ih}:[input\_size,hidden\_size]\\ W_{hh}:[hidden\_size,hidden\_size]\\ b_{ih}\:b_{hh}:[hidden\_size] $$

$$ h'=tanh(W_{ih}x+b_{ih}+W_{hh}h+b_{hh})\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ W_{ih}:[input\_size,hidden\_size]\\ W_{hh}:[hidden\_size,hidden\_size]\\ b_{ih}\:b_{hh}:[hidden\_size] $$

CLASS torch.nn.RNNCell(input_size, hidden_size, bias=True, nonlinearity='tanh', device=None, dtype=None)

# nonlinearity: The non-linearity to use. Can be either 'tanh' or 'relu'. Default: 'tanh'

# 输入:

# input [batch_size, input_size]

# hidden [batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# h' [batch_size, hidden_size]

CLASS torch.nn.RNN(input_size, hidden_size, num_layers=1, nonlinearity='tanh', bias=True, batch_first=False, dropout=0, bidirectional=False, device=None, dtype=None)

# num_layers: Number of recurrent layers. E.g., setting num_layers=2 would mean stacking two RNNs together to form a stacked RNN, with the second RNN taking in outputs of the first RNN and computing the final results. Default: 1

# dropout: If non-zero, introduces a Dropout layer on the outputs of each RNN layer except the last layer, with dropout probability equal to dropout. Default: 0

# bidirectional: If True, becomes a bidirectional RNN. Default: False

# 注:bidirectional=False,则实际num_layers层;bidirectional=True,则实际2 * num_layers层

# D=2 if bidirectional=True otherwise 1

# 输入:

# input [seq_len, batch_size, input_size] 或 PackedSequence

# h_0 [D*num_layers, batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# output(单向最上面一层的所有输出,双向最上面两层的所有输出) [seq_len, batch_size, D*hidden_size]

# h_n(所有层的最后隐状态hidden state) [D*num_layers, batch_size, hidden_size]

GRU

$$ r=\sigma(W_{ir}x+b_{ir}+W_{hr}h+b_{hr})\\ z=\sigma(W_{iz}x+b_{iz}+W_{hz}h+b_{hz})\\ n=tanh(W_{in}x+b_{in}+r*(W_{hn}h+b_{hn}))\\ h'=(1-z)*h+z*n\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ W_{i?}:[input\_size,hidden\_size]\\ W_{h?}:[hidden\_size,hidden\_size]\\ b:[hidden\_size] $$

$$ r=\sigma(W_{ir}x+b_{ir}+W_{hr}h+b_{hr})\\ z=\sigma(W_{iz}x+b_{iz}+W_{hz}h+b_{hz})\\ n=tanh(W_{in}x+b_{in}+r*(W_{hn}h+b_{hn}))\\ h'=(1-z)*h+z*n\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ W_{i?}:[input\_size,hidden\_size]\\ W_{h?}:[hidden\_size,hidden\_size]\\ b:[hidden\_size] $$

CLASS torch.nn.GRUCell(input_size, hidden_size, bias=True, device=None, dtype=None)

# 输入:

# input [batch_size, input_size]

# hidden [batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# h' [batch_size, hidden_size]

CLASStorch.nn.GRU(input_size, hidden_size, num_layers=1, bias=True, batch_first=False, dropout=0, bidirectional=False, device=None, dtype=None)

# D=2 if bidirectional=True otherwise 1

# 输入:

# input [seq_len, batch_size, input_size] 或 PackedSequence

# h_0 [D*num_layers, batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# output(单向最上面一层的所有输出,双向最上面两层的所有输出) [seq_len, batch_size, D*hidden_size]

# h_n(所有层的最后隐状态hidden state) [D*num_layers, batch_size, hidden_size]

LSTM

$$ f=\sigma(W_{if}x+b_{if}+W_{hf}h+b_{hf})\\ i=\sigma(W_{ii}x+b_{ii}+W_{hi}h+b_{hi})\\ g=tanh(W_{ig}x+b_{ig}+W_{hg}h+b_{hg})\\ o=\sigma(W_{io}x+b_{io}+W_{ho}h+b_{ho})\\ c'=f*c+i*g\\ h'=o*tanh(c')\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ c\:c':[batch\_size,hidden\_size]\\ W_{i?}:[input\_size,hidden\_size]\\ W_{h?}:[hidden\_size,hidden\_size]\\ b:[hidden\_size] $$

$$ f=\sigma(W_{if}x+b_{if}+W_{hf}h+b_{hf})\\ i=\sigma(W_{ii}x+b_{ii}+W_{hi}h+b_{hi})\\ g=tanh(W_{ig}x+b_{ig}+W_{hg}h+b_{hg})\\ o=\sigma(W_{io}x+b_{io}+W_{ho}h+b_{ho})\\ c'=f*c+i*g\\ h'=o*tanh(c')\\ \\ x:[batch\_size,input\_size]\\ h\:h':[batch\_size,hidden\_size]\\ c\:c':[batch\_size,hidden\_size]\\ W_{i?}:[input\_size,hidden\_size]\\ W_{h?}:[hidden\_size,hidden\_size]\\ b:[hidden\_size] $$

CLASS torch.nn.LSTMCell(input_size, hidden_size, bias=True, device=None, dtype=None)

# 输入:

# input [batch_size, input_size] 或 PackedSequence

# h_0 [batch_size, hidden_size] (Defaults to zero if not provided.)

# c_0 [batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# h_1 [batch_size, hidden_size]

# c_1 [batch_size, hidden_size]

CLASStorch.nn.LSTM(input_size, hidden_size, num_layers=1, bias=True, batch_first=False, dropout=0, bidirectional=False, proj_size=0, device=None, dtype=None)

# proj_size – If > 0, will use LSTM with projections of corresponding size. Default: 0

# D=2 if bidirectional=True otherwise 1

# 输入:

# input [seq_len, batch_size, input_size]

# h_0 [D*num_layers, batch_size, hidden_size或proj_size](proj_size不为0时[D*num_layers, batch_size, proj_size]否则[D*num_layers, batch_size, hidden_size]) (Defaults to zero if not provided.)

# c_0 [D*num_layers, batch_size, hidden_size] (Defaults to zero if not provided.)

# 输出:

# output(单向最上面一层的所有输出,双向最上面两层的所有输出) [seq_len, batch_size, D*hidden_size或D*prog_size]

# h_n(所有层的最后隐状态hidden state) [D*num_layers, batch_size, hidden_size或prog_size]

# c_n(所有层的最后单元状态cell state) [D*num_layers, batch_size, hidden_size]

辅助函数

问题

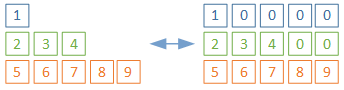

一个batch中的3个样本,最长长度为5,用0填充,如下图1所示。将3个样本的数据按照时间步不断输入一个RNN、GRU、LSTM单元时,样本1和样本2有多次输入了padding的数据0。为了减少padding的影响,我们希望样本1输入1后即得到最后的hidden state(或加cell state)、样本2输入2、3、4后即得到最后的hidden state(或加cell state),如下图2所示。可以使用后面的PackedSequence实现。

在seq2seq应用中,编码器推荐用此方法,因为h_5是对待翻译句子的记忆,而解码器不必要

pad_sequence & unpad_sequence

torch.nn.utils.rnn.pad_sequence(sequences, batch_first=False, padding_value=0.0)

参数:

sequences (list[Tensor]): list of variable length sequences

返回:

[max_seq_len, batch_size, *] (*表示剩余的多个维度)

# 注意:该函数将对padded_sequences进行原址变换。在batch_first=False下padded_sequences由[max_seq_len, batch_size] -> [batch_size, max_seq_len];在batch_first=True下padded_sequences将保持[batch_size, max_seq_len]。推测该形态可能是unpad_sequence过程中的中间状态bug。

torch.nn.utils.rnn.unpad_sequence(padded_sequences, lengths, batch_first=False)

参数:

padded_sequences (Tensor): [max_seq_len, batch_size, *] (*表示剩余的多个维度)

lengths (Tensor): length of original (unpadded) sequences.

返回:

list of variable length sequences

import torch

from torch.nn.utils.rnn import pad_sequence, unpad_sequence

a = torch.tensor([1])

b = torch.tensor([2, 3, 4])

c = torch.tensor([5, 6, 7, 8, 9])

test_data = [a, b, c]

lengths = torch.as_tensor([v.size(0) for v in test_data])

padded_sequences = pad_sequence(test_data)

print(padded_sequences)

sequences = unpad_sequence(padded_sequences, lengths)

print(sequences)

# tensor([[1, 2, 5],

# [0, 3, 6],

# [0, 4, 7],

# [0, 0, 8],

# [0, 0, 9]])

# [tensor([1]), tensor([2, 3, 4]), tensor([5, 6, 7, 8, 9])]

import torch

from torch.nn.utils.rnn import pad_sequence, unpad_sequence

a = torch.tensor([1])

b = torch.tensor([2, 3, 4])

c = torch.tensor([5, 6, 7, 8, 9])

test_data = [a, b, c]

lengths = torch.as_tensor([v.size(0) for v in test_data])

padded_sequences = pad_sequence(test_data)

print(padded_sequences)

sequences = unpad_sequence(padded_sequences, lengths)

print(sequences)

# tensor([[1, 2, 5],

# [0, 3, 6],

# [0, 4, 7],

# [0, 0, 8],

# [0, 0, 9]])

# [tensor([1]), tensor([2, 3, 4]), tensor([5, 6, 7, 8, 9])]

padded_sequences = pad_sequence(test_data)

print(padded_sequences)

sequences = unpad_sequence(padded_sequences, lengths)

print(padded_sequences)

# tensor([[1, 2, 5],

# [0, 3, 6],

# [0, 4, 7],

# [0, 0, 8],

# [0, 0, 9]])

# tensor([[1, 0, 0, 0, 0],

# [2, 3, 4, 0, 0],

# [5, 6, 7, 8, 9]])

padded_sequences = pad_sequence(test_data, batch_first=True)

print(padded_sequences)

sequences = unpad_sequence(padded_sequences, lengths, batch_first=True)

print(padded_sequences)

# tensor([[1, 0, 0, 0, 0],

# [2, 3, 4, 0, 0],

# [5, 6, 7, 8, 9]])

# tensor([[1, 0, 0, 0, 0],

# [2, 3, 4, 0, 0],

# [5, 6, 7, 8, 9]])

pack_padded_sequence & pad_packed_sequence

# (重要)注:input是长度降序(enforce_sorted随意) 或 input不是长度降序但指定enforce_sorted=False,否则抛出异常

torch.nn.utils.rnn.pack_padded_sequence(input, lengths, batch_first=False, enforce_sorted=True)

参数:

input (Tensor): padded batch of variable length sequences.

lengths (Tensor or list(int)): list of sequence lengths of each batch element. (must be on the CPU if provided as a tensor).

enforce_sorted: if True, the input is expected to contain sequences sorted by length in a decreasing order. If False, the input will get sorted unconditionally. Default: True.

返回:

a PackedSequence object

# (重要)注:如果pack_padded_sequence的 input不是长度降序但指定enforce_sorted=False,那么pad_packed_sequence返回值也不是长度降序而是本来的顺序。

torch.nn.utils.rnn.pad_packed_sequence(sequence, batch_first=False, padding_value=0.0, total_length=None)

参数:

sequence (PackedSequence): batch to pad

total_length (int, optional): if not None, the output will be padded to have length total_length. This method will throw ValueError if total_length is less than the max sequence length in sequence.

返回:

Tuple of Tensor containing the padded sequence, and a Tensor containing the list of lengths of each sequence in the batch. Batch elements will be re-ordered as they were ordered originally when the batch was passed to pack_padded_sequence or pack_sequence.

# PackedSequence中sorted_indices为sorted_input在input上的顺序,unsorted_indices为input在unsorted_input上的顺序

# 若传入的input是长度降序,则sorted_input和unsorted_indices均为tensor([0, 1, ..., batch_size - 1])

import torch

from torch.nn.utils.rnn import pad_sequence, pack_padded_sequence, pad_packed_sequence

a = torch.tensor([1])

b = torch.tensor([2, 3, 4])

c = torch.tensor([5, 6, 7, 8, 9])

test_data = [a, b, c]

lengths = torch.as_tensor([v.size(0) for v in test_data])

padded_sequences = pad_sequence(test_data)

print(padded_sequences)

# tensor([[1, 2, 5],

# [0, 3, 6],

# [0, 4, 7],

# [0, 0, 8],

# [0, 0, 9]])

pack_padded = pack_padded_sequence(padded_sequences, lengths, enforce_sorted=False)

print(pack_padded)

# PackedSequence(data=tensor([5, 2, 1, 6, 3, 7, 4, 8, 9]),

# batch_sizes=tensor([3, 2, 2, 1, 1]),

# sorted_indices=tensor([2, 1, 0]),

# unsorted_indices=tensor([2, 1, 0]))

pad_packed = pad_packed_sequence(pack_padded)

print(padded_sequences)

# tensor([[1, 2, 5],

# [0, 3, 6],

# [0, 4, 7],

# [0, 0, 8],

# [0, 0, 9]])

pack_sequence & unpack_sequence

# pad_sequence & pack_padded_sequence的合体

torch.nn.utils.rnn.pack_sequence(sequences, enforce_sorted=True)

参数:

sequences (list[Tensor]): A list of sequences of decreasing length.

enforce_sorted (bool, optional): if True, checks that the input contains sequences sorted by length in a decreasing order. If False, this condition is not checked. Default: True.

返回:

a PackedSequence object

# pad_packed_sequence & unpad_sequence的合体

torch.nn.utils.rnn.unpack_sequence(packed_sequences)

参数:

packed_sequences (PackedSequence): A PackedSequence object.

返回:

a list of :class:`Tensor` objects

import torch

from torch.nn.utils.rnn import pack_sequence, unpack_sequence

a = torch.tensor([1])

b = torch.tensor([2, 3, 4])

c = torch.tensor([5, 6, 7, 8, 9])

pack_seq = pack_sequence([a, b, c], enforce_sorted=False)

print(pack_seq)

# PackedSequence(data=tensor([5, 2, 1, 6, 3, 7, 4, 8, 9]),

# batch_sizes=tensor([3, 2, 2, 1, 1]),

# sorted_indices=tensor([2, 1, 0]),

# unsorted_indices=tensor([2, 1, 0]))

unpack_seq = unpack_sequence(pack_seq)

print(unpack_seq)

# [tensor([1]), tensor([2, 3, 4]), tensor([5, 6, 7, 8, 9])]

语言翻译例

import torch

import torch.optim as optim

import torch.utils.data as Data

from torch import nn

from torch.nn import RNN

from torch.nn.utils.rnn import pad_sequence, pack_padded_sequence

# ====================================================================================================

# 参数配置

if torch.cuda.is_available():

device = 'cuda'

else:

device = 'cpu'

epochs = 1000

input_size = 512

hidden_size = 512

# ====================================================================================================

# 数据处理

sentences = [

# 中文和英语的单词个数不要求相同

# enc_input dec_input dec_output

['我 有 一 个 好 朋 友', 'S i have a good friend .', 'i have a good friend . E'],

['我 有 零 个 女 朋 友', 'S i have zero girl friend .', 'i have zero girl friend . E']

]

# 中文词库

src_vocab = {'P': 0, '我': 1, '有': 2, '一': 3, '个': 4, '好': 5, '朋': 6, '友': 7, '零': 8, '女': 9}

src_idx2word = {w: i for i, w in src_vocab.items()}

src_vocab_size = len(src_vocab)

# 英文词库

tgt_vocab = {'P': 0, 'i': 1, 'have': 2, 'a': 3, 'good': 4, 'friend': 5, 'zero': 6, 'girl': 7, 'S': 8, 'E': 9, '.': 10}

tgt_idx2word = {w: i for i, w in tgt_vocab.items()}

tgt_vocab_size = len(tgt_vocab)

class MyDataSet(Data.Dataset):

"""

自定义DataLoader,返回:

enc_input: [batch_size, len_src]

dec_input: [batch_size, len_tgt]

dec_output: [bath_size, len_tgt]

enc_input_len: [batch_size]

"""

def __init__(self, sentences, src_vocab, tgt_vocab):

super(MyDataSet, self).__init__()

self.len = len(sentences)

self.enc_input = []

self.dec_input = []

self.dec_output = []

self.enc_input_len = []

for i in range(self.len):

self.enc_input.append(torch.tensor([src_vocab[n] for n in sentences[i][0].split()]))

self.dec_input.append(torch.tensor([tgt_vocab[n] for n in sentences[i][1].split()]))

self.dec_output.append(torch.tensor([tgt_vocab[n] for n in sentences[i][2].split()]))

self.enc_input_len.append(len(self.enc_input[-1]))

# padding之后的长度即为len_src

self.enc_input = pad_sequence(self.enc_input, batch_first=True)

# padding之后的长度即为len_tgt

dec = pad_sequence(self.dec_input + self.dec_output, batch_first=True)

self.dec_input = dec[:self.len]

self.dec_output = dec[self.len:]

def __len__(self):

return self.len

def __getitem__(self, idx):

return self.enc_input[idx], self.dec_input[idx], self.dec_output[idx], self.enc_input_len[idx]

loader = Data.DataLoader(MyDataSet(sentences, src_vocab, tgt_vocab), 2, True)

# ====================================================================================================

# RNN模型

class Model(nn.Module):

def __init__(self, input_size, hidden_size, src_vocab_size , tgt_vocab_size):

super(Model, self).__init__()

self.src_emb = nn.Embedding(src_vocab_size, input_size)

self.tgt_emb = nn.Embedding(tgt_vocab_size, input_size)

self.rnn = RNN(input_size, hidden_size)

self.projection = nn.Linear(hidden_size, tgt_vocab_size)

def forward(self, enc_input, dec_input, enc_input_len):

"""

:param enc_input: [batch_size, len_src]

:param dec_input: [batch_size, len_tgt]

:param enc_input_len: [batch_size]

:return:

"""

# [len_src/len_tgt, batch_size, input_size]

enc_input = self.src_emb(enc_input.t())

dec_input = self.tgt_emb(dec_input.t())

# [1, batch_size, hidden_size]

# 注意,虽然enforce_sorted=False使得rnn会将enc_input排序为长度降序,但返回的hidden_state的batch顺序和原始输入enc_input相同

_, hidden_state = self.rnn(pack_padded_sequence(enc_input, enc_input_len, enforce_sorted=False))

# [len_tgt, batch_size, hidden_size]

output, _ = self.rnn(dec_input, hidden_state)

# [len_tgt, batch_size, hidden_size]

# -> [len_tgt, batch_size, tgt_vocab_size]

# -> [len_tgt * batch_size, tgt_vocab_size]

dec_logits = self.projection(output)

dec_logits = dec_logits.view(-1, dec_logits.size(-1))

return dec_logits

# ====================================================================================================

# 训练

model = Model(input_size, hidden_size, src_vocab_size, tgt_vocab_size)

model = model.to(device)

criterion = nn.CrossEntropyLoss(ignore_index=tgt_vocab['P'])

optimizer = optim.SGD(model.parameters(), lr=1e-3, momentum=0.99) # 用adam的话效果不好

for epoch in range(epochs):

for enc_input, dec_input, dec_output, enc_input_len in loader:

"""

enc_input: [batch_size, len_src]

dec_input: [batch_size, len_tgt]

dec_output: [batch_size, len_tgt]

enc_input_len: [batch_size]

"""

enc_input, dec_input, dec_output = enc_input.to(device), dec_input.to(device), dec_output.to(device)

output = model(enc_input, dec_input, enc_input_len)

loss = criterion(output, dec_output.t().flatten())

print('Epoch:', '%04d' % (epoch + 1), 'loss =', '{:.6f}'.format(loss))

optimizer.zero_grad()

loss.backward()

optimizer.step()

# ==========================================================================================

# 预测

def greedy_decoder(model, enc_input, src_vocab, tgt_vocab, device):

"""

:param model: 模型

:param enc_input: str sequence

:param src_vocab: 源语言字典

:param tgt_vocab: 目标语言字典

:param device: 设备

:return:

"""

# str seq -> tensor int seq

# [1, ?] ?和len_src可以不等

seq = torch.tensor([[src_vocab[n] for n in enc_input.split()]], device=device)

# tensor([], size=(1, 0), dtype=torch.int64)

dec_input = torch.zeros(1, 0).to(device=device, dtype=torch.int64)

# 首先存入开始符号S

next_symbol = tgt_vocab['S']

terminal = False

while not terminal:

# [1, dec_input_len_cur]

dec_input = torch.cat([dec_input, torch.tensor([[next_symbol]]).to(device)], -1)

# [dec_input_len_cur, tgt_vocab_size]

dec_logits = model(seq, dec_input, [seq.shape[1]])

# [1]

next_symbol = dec_logits[-1].argmax()

print(tgt_idx2word[next_symbol.item()])

if next_symbol == tgt_vocab["E"]:

terminal = True

greedy_decoder(model, '我 有 零 个 女 朋 友', src_vocab, tgt_vocab, device)