TensorFlow Neural Networks (8): Convolutional Neural Networks, LeNet-5

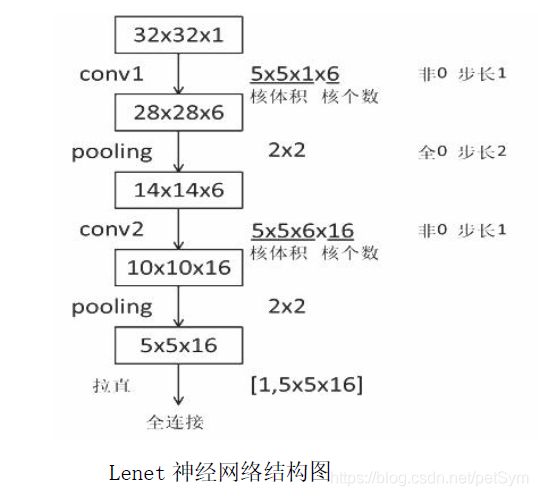

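I. Basic structure of the LeNet network

[Note] This material comes from Lecture 7, Lesson 2 of the MOOC course "Artificial Intelligence Practice: TensorFlow Notes".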

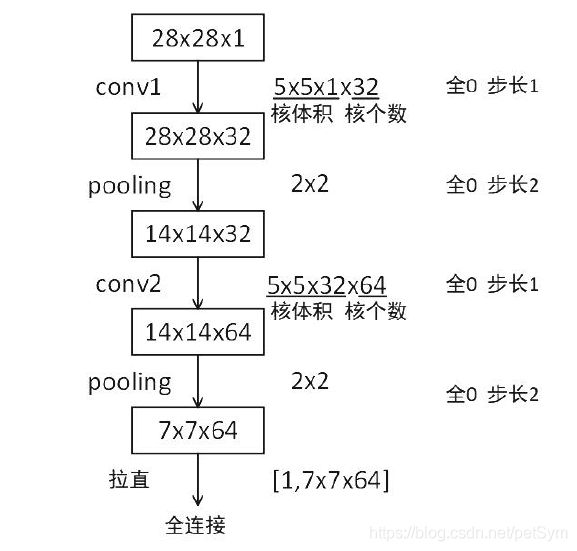

II. Implementing LeNet on the MNIST dataset

1. Forward propagation: mnist_lenet5_forward.py

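# mnist_lenet5_forward.py
# coding: utf-8
import tensorflow as tf

IMAGE_SIZE = 28          # MNIST images are 28x28 pixels
NUM_CHANNELS = 1         # grayscale input
CONV1_SIZE = 5           # first conv layer uses 5x5 kernels
CONV1_KERNEL_NUM = 32    # number of kernels in the first conv layer
CONV2_SIZE = 5           # second conv layer uses 5x5 kernels
CONV2_KERNEL_NUM = 64    # number of kernels in the second conv layer
FC_SIZE = 512            # width of the first fully connected layer
OUTPUT_NODE = 10         # ten digit classes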

# Initialize w and add its L2 regularization loss to the 'losses' collection
def get_weight(shape, regularizer):
    w = tf.Variable(tf.truncated_normal(shape, stddev=0.1))
    if regularizer is not None:
        tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w

# Initialize b to zeros
def get_bias(shape):
    b = tf.Variable(tf.zeros(shape))
    return b

# Convolution: stride 1 with 'SAME' padding keeps the height and width unchanged
def conv2d(x, w):
    return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')

# 2x2 max pooling: stride 2 with 'SAME' padding halves the height and width
def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Forward pass
def forward(x, train, regularizer):
    # First convolution layer: conv -> bias -> ReLU -> max pool
    conv1_w = get_weight([CONV1_SIZE, CONV1_SIZE, NUM_CHANNELS, CONV1_KERNEL_NUM], regularizer)
    conv1_b = get_bias([CONV1_KERNEL_NUM])
    conv1 = conv2d(x, conv1_w)
    relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_b))
    pool1 = max_pool_2x2(relu1)

    # Second convolution layer; its input depth is the first layer's kernel count
    conv2_w = get_weight([CONV2_SIZE, CONV2_SIZE, CONV1_KERNEL_NUM, CONV2_KERNEL_NUM], regularizer)
    conv2_b = get_bias([CONV2_KERNEL_NUM])
    conv2 = conv2d(pool1, conv2_w)
    relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_b))
    pool2 = max_pool_2x2(relu2)

    # Get the shape of the pool2 output as a list.
    # The four values are the batch size and the height, width, and depth of the feature maps.
    pool_shape = pool2.get_shape().as_list()
    # Total number of features per image
    nodes = pool_shape[1] * pool_shape[2] * pool_shape[3]
    # Flatten to 2-D; pool_shape[0] must be known, so x needs a fixed batch dimension
    reshaped = tf.reshape(pool2, [pool_shape[0], nodes])

    # Fully connected layers
    fc1_w = get_weight([nodes, FC_SIZE], regularizer)
    fc1_b = get_bias([FC_SIZE])
    fc1 = tf.nn.relu(tf.matmul(reshaped, fc1_w) + fc1_b)
    # During training, apply 50% dropout to this layer's output
    if train:
        fc1 = tf.nn.dropout(fc1, 0.5)

    fc2_w = get_weight([FC_SIZE, OUTPUT_NODE], regularizer)
    fc2_b = get_bias([OUTPUT_NODE])
    y = tf.matmul(fc1, fc2_w) + fc2_b
    return y

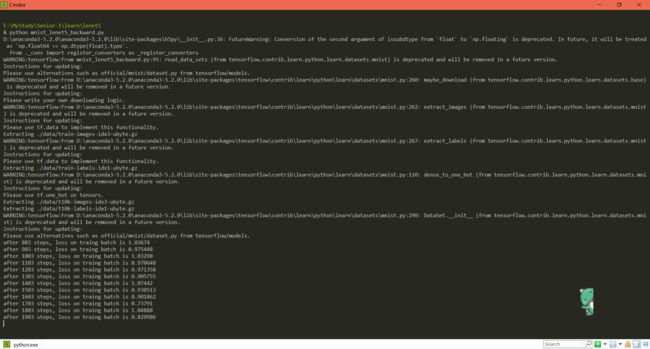
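To see where the value of `nodes` in `forward` comes from, the layer shapes can be traced by hand. The following is a minimal plain-Python sketch (no TensorFlow needed). The helper names `same_out` and `trace_nodes` are introduced here for illustration only, and the sketch assumes the `'SAME'` padding rule, where the output size is ceil(input size / stride).

```python
import math

def same_out(size, stride):
    """Spatial output size of a 'SAME'-padded conv or pool: ceil(size / stride)."""
    return math.ceil(size / stride)

def trace_nodes(image_size=28, conv2_kernel_num=64):
    """Follow one spatial dimension through conv1, pool1, conv2, pool2."""
    size = same_out(image_size, 1)  # conv1, stride 1: size unchanged
    size = same_out(size, 2)        # pool1, stride 2: size halved
    size = same_out(size, 1)        # conv2, stride 1: size unchanged
    size = same_out(size, 2)        # pool2, stride 2: size halved again
    # Flattened feature count per image: height * width * depth
    return size, size * size * conv2_kernel_num

final_size, nodes = trace_nodes()
print(final_size, nodes)
```

With the constants used in this post, each image leaves pool2 as a 7x7x64 block, so the first fully connected weight matrix has 3136 rows.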

2. Backpropagation: mnist_lenet5_backward.py

# mnist_lenet5_backward.py
# coding: utf-8
import tensorflow as tf
# Import the input_data module
from tensorflow.examples.tutorials.mnist import input_data
import mnist_lenet5_forward
import numpy as np
import os
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # hide warnings

# Hyperparameters
BATCH_SIZE = 100
LEARNING_RATE_BASE = 0.005    # initial learning rate
LEARNING_RATE_DECAY = 0.99    # learning rate decay rate
REGULARIZER = 0.0001          # regularization coefficient
STEPS = 50000                 # number of training steps
MOVING_AVERAGE_DECAY = 0.99   # decay rate for the moving average
MODEL_SAVE_PATH = "./model/"
MODEL_NAME = "mnist_model"

def backward(mnist):
    # Placeholders; the batch dimension of x is fixed because forward() reshapes with it
    x = tf.placeholder(tf.float32, [
        BATCH_SIZE,
        mnist_lenet5_forward.IMAGE_SIZE,
        mnist_lenet5_forward.IMAGE_SIZE,
        mnist_lenet5_forward.NUM_CHANNELS])
    y_ = tf.placeholder(tf.float32, shape=(None, mnist_lenet5_forward.OUTPUT_NODE))
    # Forward pass to predict y; True enables dropout while training
    y = mnist_lenet5_forward.forward(x, True, REGULARIZER)
    # Step counter, marked as non-trainable
    global_step = tf.Variable(0, trainable=False)

    # Loss function with regularization
    # Cross entropy; the labels are one-hot, so argmax recovers the class index
    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    # Total loss = mean cross entropy + L2 losses collected by get_weight
    loss = cem + tf.add_n(tf.get_collection('losses'))

    # Exponentially decaying learning rate, decayed once per epoch
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        mnist.train.num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True)

    # Backpropagation on the regularized loss
    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

    # Moving average over all trainable variables
    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    ema_op = ema.apply(tf.trainable_variables())
    # Bundle the training step and the moving-average update into one op
    with tf.control_dependencies([train_step, ema_op]):
        train_op = tf.no_op(name='train')

    # Instantiate the saver
    saver = tf.train.Saver()

    # Training loop
    with tf.Session() as sess:
        # Initialize all variables
        init_op = tf.global_variables_initializer()
        sess.run(init_op)

        # Resume from a checkpoint if one exists
        ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
        if ckpt and ckpt.model_checkpoint_path:
            # Restore the session, loading the saved values of w and b
            saver.restore(sess, ckpt.model_checkpoint_path)

        for i in range(STEPS):
            # Fetch one batch of images and labels from the training set
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            # Reshape the flat images to [batch, height, width, channels]
            reshaped_xs = np.reshape(xs, (
                BATCH_SIZE,
                mnist_lenet5_forward.IMAGE_SIZE,
                mnist_lenet5_forward.IMAGE_SIZE,
                mnist_lenet5_forward.NUM_CHANNELS))
            # Feed the batch and run one training step
            _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: reshaped_xs, y_: ys})
            if i % 100 == 0:
                # Print progress
                print("after %d steps, loss on training batch is %g" % (step, loss_value))
                # Saved as MODEL_SAVE_PATH/MODEL_NAME-<global_step>
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

def main():
    mnist = input_data.read_data_sets('./data/', one_hot=True)
    # Call the training function defined above
    backward(mnist)

# Run main() only when this file is executed as the main script
if __name__ == '__main__':
    main()
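The learning-rate schedule used in `backward` can be checked with a few lines of plain Python. This is a sketch of the formula that `tf.train.exponential_decay` documents, `base * decay_rate ** (global_step / decay_steps)`, with integer division when `staircase=True`. The function name `decayed_lr` is made up for this illustration, and the value 550 assumes the default MNIST split of 55000 training images with `BATCH_SIZE = 100`.

```python
def decayed_lr(base, decay_rate, global_step, decay_steps, staircase=True):
    """Learning rate after global_step updates under exponential decay."""
    if staircase:
        # Staircase mode: the exponent only changes once every decay_steps updates
        exponent = global_step // decay_steps
    else:
        exponent = global_step / decay_steps
    return base * decay_rate ** exponent

DECAY_STEPS = 550  # assumed: 55000 training images / batch size of 100

for step in (0, 549, 550, 5500):
    print(step, decayed_lr(0.005, 0.99, step, DECAY_STEPS))
```

In staircase mode the rate stays at 0.005 for the whole first epoch and drops by 1% at each epoch boundary, rather than shrinking a little at every step.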

3. Testing: mnist_lenet5_test.py

# mnist_lenet5_test.py
# coding: utf-8
import time
import tensorflow as tf
# Import the input_data module
from tensorflow.examples.tutorials.mnist import input_data
import mnist_lenet5_forward
import mnist_lenet5_backward
import numpy as np
import os
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # hide warnings

# Wait 5 seconds between evaluation rounds
TEST_INTERVAL_SECS = 5

def test(mnist):
    # Rebuild the network in a fresh graph; nodes defined inside belong to graph g
    with tf.Graph().as_default() as g:
        # Placeholders; x holds the entire test set in a single batch
        x = tf.placeholder(tf.float32, [
            mnist.test.num_examples,
            mnist_lenet5_forward.IMAGE_SIZE,
            mnist_lenet5_forward.IMAGE_SIZE,
            mnist_lenet5_forward.NUM_CHANNELS])
        y_ = tf.placeholder(tf.float32, shape=(None, mnist_lenet5_forward.OUTPUT_NODE))
        # Forward pass to predict y; False disables dropout, None disables regularization
        y = mnist_lenet5_forward.forward(x, False, None)

        # Create a saver that maps each variable to its moving average,
        # so restoring a checkpoint loads the averaged values into the parameters
        ema = tf.train.ExponentialMovingAverage(mnist_lenet5_backward.MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)

        # Accuracy
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        while True:
            with tf.Session() as sess:
                # Load the trained model, i.e. assign the moving averages to the parameters
                ckpt = tf.train.get_checkpoint_state(mnist_lenet5_backward.MODEL_SAVE_PATH)
                # If a checkpoint and its model file exist at the given path
                if ckpt and ckpt.model_checkpoint_path:
                    # Restore the session
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    # Recover the step count from the checkpoint file name
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    # Reshape the flat test images to [batch, height, width, channels]
                    reshaped_x = np.reshape(mnist.test.images, (
                        mnist.test.num_examples,
                        mnist_lenet5_forward.IMAGE_SIZE,
                        mnist_lenet5_forward.IMAGE_SIZE,
                        mnist_lenet5_forward.NUM_CHANNELS))
                    # Compute the accuracy
                    accuracy_score = sess.run(accuracy, feed_dict={x: reshaped_x, y_: mnist.test.labels})
                    # Print the result
                    print("after %s training steps, test accuracy = %g" % (global_step, accuracy_score))
                # No model found
                else:
                    print("no checkpoint file found")
                    return
            time.sleep(TEST_INTERVAL_SECS)

def main():
    mnist = input_data.read_data_sets('./data/', one_hot=True)
    # Call the test function defined above
    test(mnist)

if __name__ == '__main__':
    main()
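The test script restores the moving-average ("shadow") values instead of the raw weights, so it may help to see what one update of that average looks like. The sketch below follows the update rule documented for `tf.train.ExponentialMovingAverage`: `shadow = decay * shadow + (1 - decay) * value`, where passing a step counter caps the decay at `(1 + num_updates) / (10 + num_updates)`. The function name `ema_update` and the sample numbers are illustrative, not part of the course code.

```python
def ema_update(shadow, value, decay, num_updates=None):
    """One moving-average update of a shadow value toward the current value."""
    if num_updates is not None:
        # Early in training the effective decay is smaller, so the
        # shadow value follows the variable more closely
        decay = min(decay, (1 + num_updates) / (10 + num_updates))
    return decay * shadow + (1 - decay) * value

# Shadow starts at 0.0 and the variable jumps to 1.0
print(ema_update(0.0, 1.0, 0.99))                 # fixed decay of 0.99
print(ema_update(0.0, 1.0, 0.99, num_updates=0))  # effective decay of 0.1 at step 0
```

Because `backward` passes `global_step` to the moving average, the shadow values track the weights quickly at first and become smoother as training goes on.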

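Unfortunately, my computer freezes every time I run the test program, and I don't know why, which is rather awkward. It still freezes even with the instructor's code that I downloaded.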
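One possible explanation, which is an assumption and has not been verified on the machine in question, is memory. The test script pushes all 10000 test images through the convolution layers in a single `sess.run`, and the first convolution output alone is 10000 x 28 x 28 x 32 float32 values, roughly 1 GB. A common workaround is to evaluate in smaller batches and combine the results. The sketch below shows only the batching logic in plain Python; `batched_accuracy` and `predict_fn` are hypothetical names, and in the real script `predict_fn` would wrap a `sess.run` of `tf.argmax(y, 1)` with the `x` placeholder sized to the batch.

```python
def batched_accuracy(predict_fn, images, labels, batch_size):
    """Accuracy over a dataset, evaluated one batch at a time.

    predict_fn is a hypothetical callable that takes a list of images
    and returns a list of predicted class indices of the same length.
    """
    correct = 0
    for start in range(0, len(images), batch_size):
        batch_x = images[start:start + batch_size]
        batch_y = labels[start:start + batch_size]
        preds = predict_fn(batch_x)
        # Count matches in this batch; only one batch is in flight at a time
        correct += sum(int(p == t) for p, t in zip(preds, batch_y))
    return correct / len(images)

# Toy stand-ins for the model and data, just to exercise the loop
toy_images = list(range(10))
toy_labels = [i % 2 for i in toy_images]
always_zero = lambda batch: [0 for _ in batch]
print(batched_accuracy(always_zero, toy_images, toy_labels, batch_size=3))
```

Since `forward` reshapes with a fixed batch dimension, the batch size chosen for the real script should divide the test set size evenly (for example 100), so every batch matches the placeholder shape.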