Deep Learning Notes (2): MNIST Classification in PyTorch (FNN, CNN, RNN, LSTM, GRU)

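Table of contents

- 0 Preface

- 1 Data preprocessing

- 2 FNN (feedforward neural network)

- 3 CNN (convolutional neural network)

- 4 RNN (recurrent neural network)

- 5 LSTM (long short-term memory network)

- 6 GRU (gated recurrent unit)

- 7 Complete code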

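0 Preface

With the new semester about to start, I spent an evening reviewing basic deep learning code. I went back to the most basic task, classifying the MNIST handwritten digit dataset, and implemented it with an FNN, a CNN, an RNN, an LSTM, and a GRU.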

1 Data preprocessing

import matplotlib.pyplot as plt
import torch
import time
import torch.nn.functional as F
from torch import nn, optim
from torchvision.datasets import MNIST
from torchvision.transforms import Compose, ToTensor, Normalize, Resize
from torch.utils.data import DataLoader
from sklearn.metrics import accuracy_score

# Hyperparameters
BATCH_SIZE = 64  # batch size
EPOCHS = 5  # number of training epochs

# Device
DEVICE = 'cuda' if torch.cuda.is_available() else 'cpu'

# Data transforms: convert to a tensor in [0, 1], then standardize with the MNIST mean and std
transformers = Compose(transforms=[ToTensor(), Normalize(mean=(0.1307,), std=(0.3081,))])

# Data loading
# download=False assumes MNIST is already stored under ./data; pass download=True on the first run
dataset_train = MNIST(root=r'./data', train=True, download=False, transform=transformers)
dataset_test = MNIST(root=r'./data', train=False, download=False, transform=transformers)
dataloader_train = DataLoader(dataset=dataset_train, batch_size=BATCH_SIZE, shuffle=True)
dataloader_test = DataLoader(dataset=dataset_test, batch_size=BATCH_SIZE, shuffle=True)
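
To make the normalization step concrete: ToTensor() scales pixels to [0, 1], and Normalize(mean, std) then computes (x - mean) / std per channel. The sketch below redoes that arithmetic in plain Python for the two extreme pixel values. The helper name `normalize_pixel` is made up for this illustration and is not part of torchvision.

```python
# Plain-Python sketch of the arithmetic behind Normalize(mean=(0.1307,), std=(0.3081,)).
# normalize_pixel is a hypothetical helper, not a torchvision function.
MNIST_MEAN = 0.1307
MNIST_STD = 0.3081


def normalize_pixel(value, mean=MNIST_MEAN, std=MNIST_STD):
    """Standardize one pixel that ToTensor() has already scaled to [0, 1]."""
    return (value - mean) / std


black = normalize_pixel(0.0)  # background pixel
white = normalize_pixel(1.0)  # brightest stroke pixel
print(f"black -> {black:.4f}, white -> {white:.4f}")
```

After normalization the background sits at roughly -0.42 and the brightest strokes at roughly 2.82, so the inputs are no longer confined to [0, 1].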

2 FNN (feedforward neural network)

# FNN
class FNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(FNN, self).__init__()
        self.layer1 = nn.Linear(28 * 28, 28)  # hidden layer
        self.out = nn.Linear(28, 10)  # output layer

    # Forward pass
    def forward(self, x):
        # Input shape: [batch_size, 1, 28, 28]
        x = x.view(-1, 28 * 28)
        x = torch.relu(self.layer1(x))  # ReLU activation
        x = self.out(x)  # output layer
        return x
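
As a rough sense of how small this network is, the parameter count can be worked out by hand. This is only a sketch: `linear_params` is a hypothetical helper, and with PyTorch installed the same number should come out of `sum(p.numel() for p in FNN().parameters())`.

```python
# Parameter count of the FNN above, computed by hand.
# linear_params is a hypothetical helper used only for this illustration.


def linear_params(in_features, out_features):
    """Weights plus biases of one fully connected layer."""
    return in_features * out_features + out_features


hidden = linear_params(28 * 28, 28)  # layer1: 784 -> 28
output = linear_params(28, 10)       # out: 28 -> 10
total = hidden + output
print(hidden, output, total)
```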

3 CNN (convolutional neural network)

# CNN
class CNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(CNN, self).__init__()
        # Two convolution layers that share one max-pooling layer
        self.conv1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=(3, 3), stride=(1, 1), padding=1)
        self.conv2 = nn.Conv2d(in_channels=32, out_channels=64, kernel_size=(3, 3), stride=(1, 1), padding=1)
        self.pool = nn.MaxPool2d(2, 2)
        # Dropout
        self.dropout = nn.Dropout(p=0.25)
        # Fully connected layers
        self.fc1 = nn.Linear(64 * 7 * 7, 512)
        self.fc2 = nn.Linear(512, 64)
        self.fc3 = nn.Linear(64, 10)

    # Forward pass
    def forward(self, x):
        # Input shape: [batch_size, 1, 28, 28]
        x = self.pool(F.relu(self.conv1(x)))
        x = self.dropout(x)
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 64 * 7 * 7)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x
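
The `64 * 7 * 7` in `fc1` follows from the layer settings above. A small sketch of that arithmetic, using the standard output-size formula floor((n + 2p - k) / s) + 1; the helpers `conv_out` and `pool_out` are hypothetical names for this illustration only.

```python
# Trace the spatial size of a 28x28 MNIST image through the CNN above.
# conv_out and pool_out are hypothetical helpers for this illustration.


def conv_out(size, kernel=3, stride=1, padding=1):
    """Output width/height of a convolution: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    """Output width/height of max pooling without padding."""
    return (size - kernel) // stride + 1


size = 28
size = pool_out(conv_out(size))  # conv1 keeps 28, pooling halves it to 14
size = pool_out(conv_out(size))  # conv2 keeps 14, pooling halves it to 7
flattened = 64 * size * size     # conv2 outputs 64 channels
print(size, flattened)
```

If the kernel size, padding, or number of pooling steps changes, the input size of `fc1` has to be recomputed the same way.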

4 RNN (recurrent neural network)

# RNN
class RNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(RNN, self).__init__()
        self.rnn = nn.RNN(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # RNN
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        # Treat each image as a sequence of 28 rows with 28 features per row
        x = x.view(-1, 28, 28)
        x = self.dropout(x)
        r_out, _ = self.rnn(x, None)
        # Classify from the hidden state at the last time step
        x = self.out(r_out[:, -1, :])
        return x
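
The reshaping in `forward` is easier to follow with concrete shapes. The sketch below pushes a random batch through an `nn.RNN` configured like the one above; the batch of 4 random images is an assumption made only to keep the snippet self-contained.

```python
import torch
from torch import nn

# Hypothetical batch of 4 normalized MNIST images: [batch, channel, height, width].
images = torch.randn(4, 1, 28, 28)

# Same layer configuration as the RNN class above.
rnn = nn.RNN(input_size=28, hidden_size=64, num_layers=1, batch_first=True)

sequence = images.view(-1, 28, 28)  # [4, 28, 28]: 28 time steps of 28 features
r_out, h_n = rnn(sequence)          # omitting h_0 is the same as passing None

print(r_out.shape)  # hidden state at every time step
print(h_n.shape)    # final hidden state per layer

# With one unidirectional layer, the last time step of r_out is the final hidden state.
last_step = r_out[:, -1, :]
print(torch.allclose(last_step, h_n[-1]))
```

So `r_out[:, -1, :]` is a `[batch_size, 64]` summary of the whole image after all 28 rows have been read, which is what the linear layer classifies.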

5 LSTM (long short-term memory network)

# LSTM
class LSTM(nn.Module):
    # Define the network structure
    def __init__(self):
        super(LSTM, self).__init__()
        self.lstm = nn.LSTM(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # LSTM
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        x = x.view(-1, 28, 28)  # [batch_size, 28, 28]
        x = self.dropout(x)
        r_out, _ = self.lstm(x, None)
        x = self.out(r_out[:, -1, :])  # [batch_size, 10]
        return x
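
One difference from `nn.RNN` that the `_` in `forward` hides: `nn.LSTM` returns its state as a tuple of a hidden state and a cell state. A minimal sketch with a random batch (the batch of 4 is an assumption for illustration):

```python
import torch
from torch import nn

lstm = nn.LSTM(input_size=28, hidden_size=64, num_layers=1, batch_first=True)
rows = torch.randn(4, 28, 28)   # hypothetical batch: 4 images as 28-step sequences

r_out, (h_n, c_n) = lstm(rows)  # the state is a (hidden, cell) tuple
print(r_out.shape, h_n.shape, c_n.shape)

last_step = r_out[:, -1, :]     # what the LSTM class feeds to self.out
```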

6 GRU (gated recurrent unit)

# GRU
class GRU(nn.Module):
    # Define the network structure
    def __init__(self):
        super(GRU, self).__init__()
        self.gru = nn.GRU(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # GRU
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        x = x.view(-1, 28, 28)
        x = self.dropout(x)
        r_out, _ = self.gru(x, None)
        x = self.out(r_out[:, -1, :])
        return x
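
The three recurrent models differ only in the recurrent layer, so their sizes can be compared by hand. This sketch assumes the PyTorch parameter layout, in which every weight block has input weights, hidden weights, and two bias vectors; `recurrent_params` is a hypothetical helper.

```python
# Parameter counts of the three recurrent layers used above (input 28, hidden 64).
# recurrent_params is a hypothetical helper for this illustration.


def recurrent_params(input_size, hidden_size, blocks):
    """blocks = 1 for a plain RNN, 3 for a GRU, 4 for an LSTM."""
    per_block = input_size * hidden_size + hidden_size * hidden_size + 2 * hidden_size
    return blocks * per_block


counts = {
    "RNN": recurrent_params(28, 64, blocks=1),
    "GRU": recurrent_params(28, 64, blocks=3),
    "LSTM": recurrent_params(28, 64, blocks=4),
}
for name, count in counts.items():
    print(f"{name}: {count}")
```

The GRU and LSTM layers are three and four times the size of the plain RNN layer, while the final linear layer is identical in all three models.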

7 Complete code

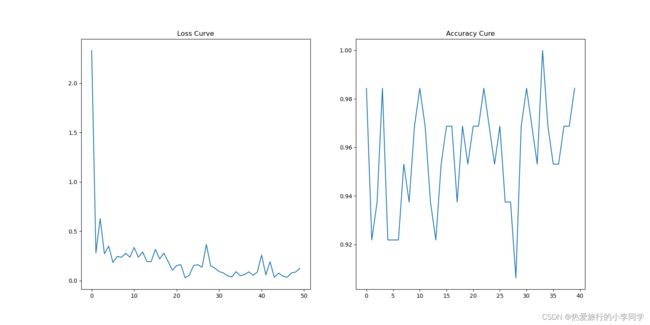

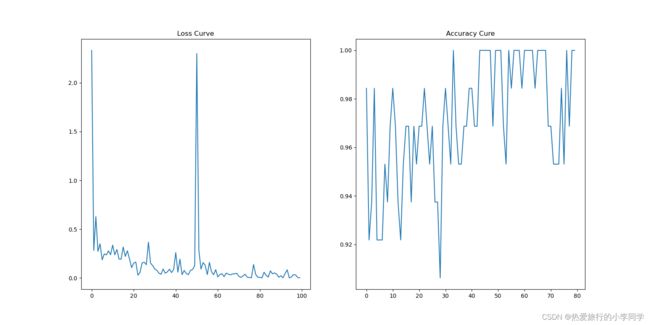

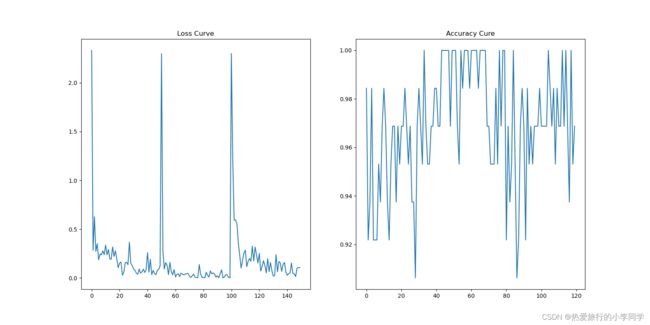

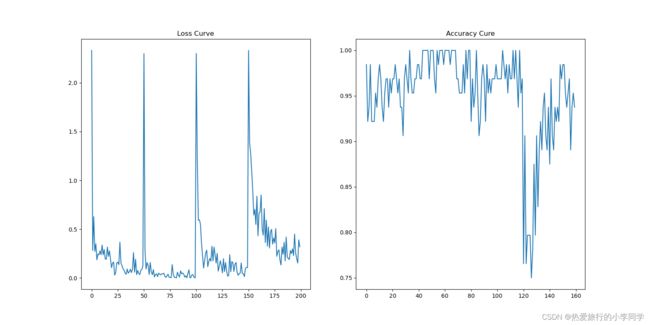

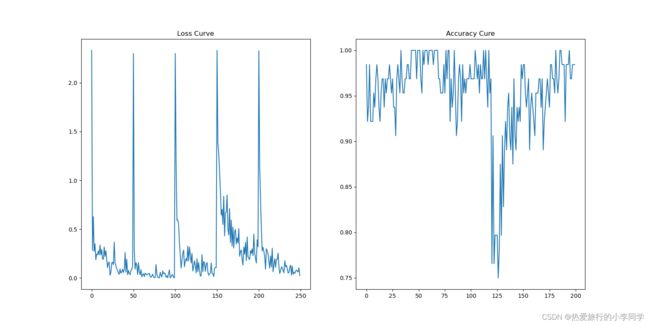

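Overall: all five models reach over 96% accuracy. The CNN performs best, followed by the FNN; the RNN, LSTM, and GRU fluctuate more.

The code wraps a few helper functions (reinventing the wheel): get_accuracy(), train(), test(), run(), and initialize().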
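
A note on how the accuracy is measured: test() in the listing below reports accuracy on a single batch every 20 steps, which is one likely reason the curves look noisy. A minimal sketch of an alternative that counts correct predictions over every batch follows. `evaluate` is a hypothetical helper that is not part of the original code, and the toy model and random data exist only so the snippet runs without MNIST.

```python
import torch
from torch import nn


def evaluate(model, loader, device="cpu"):
    """Return accuracy over every batch in loader (hypothetical helper)."""
    model.eval()
    correct, total = 0, 0
    with torch.no_grad():
        for datas, labels in loader:
            datas, labels = datas.to(device), labels.to(device)
            predictions = model(datas).argmax(dim=1)  # softmax is not needed for argmax
            correct += (predictions == labels).sum().item()
            total += labels.size(0)
    return correct / total


# Toy stand-ins so the sketch runs without downloading MNIST.
toy_model = nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 10))
toy_loader = [(torch.randn(8, 1, 28, 28), torch.randint(0, 10, (8,))) for _ in range(3)]
accuracy = evaluate(toy_model, toy_loader)
print(f"accuracy over {3 * 8} samples: {accuracy:.4f}")
```

With the real code, a call such as `evaluate(model, dataloader_test, DEVICE)` once per epoch would give a single number over all 10,000 test images.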

"""

MNIST dataset classification

Trying FNN, CNN, RNN, LSTM, and GRU

"""

import matplotlib.pyplot as plt
import torch
import time
import torch.nn.functional as F
from torch import nn, optim
from torchvision.datasets import MNIST
from torchvision.transforms import Compose, ToTensor, Normalize, Resize
from torch.utils.data import DataLoader
from sklearn.metrics import accuracy_score

# Hyperparameters
BATCH_SIZE = 64  # batch size
EPOCHS = 5  # number of training epochs

# Device
DEVICE = 'cuda' if torch.cuda.is_available() else 'cpu'

# Data transforms: convert to a tensor in [0, 1], then standardize with the MNIST mean and std
transformers = Compose(transforms=[ToTensor(), Normalize(mean=(0.1307,), std=(0.3081,))])

# Data loading
# download=False assumes MNIST is already stored under ./data; pass download=True on the first run
dataset_train = MNIST(root=r'./data', train=True, download=False, transform=transformers)
dataset_test = MNIST(root=r'./data', train=False, download=False, transform=transformers)
dataloader_train = DataLoader(dataset=dataset_train, batch_size=BATCH_SIZE, shuffle=True)
dataloader_test = DataLoader(dataset=dataset_test, batch_size=BATCH_SIZE, shuffle=True)

# FNN
class FNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(FNN, self).__init__()
        self.layer1 = nn.Linear(28 * 28, 28)  # hidden layer
        self.out = nn.Linear(28, 10)  # output layer

    # Forward pass
    def forward(self, x):
        # Input shape: [batch_size, 1, 28, 28]
        x = x.view(-1, 28 * 28)
        x = torch.relu(self.layer1(x))  # ReLU activation
        x = self.out(x)  # output layer
        return x

# CNN
class CNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(CNN, self).__init__()
        # Two convolution layers that share one max-pooling layer
        self.conv1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=(3, 3), stride=(1, 1), padding=1)
        self.conv2 = nn.Conv2d(in_channels=32, out_channels=64, kernel_size=(3, 3), stride=(1, 1), padding=1)
        self.pool = nn.MaxPool2d(2, 2)
        # Dropout
        self.dropout = nn.Dropout(p=0.25)
        # Fully connected layers
        self.fc1 = nn.Linear(64 * 7 * 7, 512)
        self.fc2 = nn.Linear(512, 64)
        self.fc3 = nn.Linear(64, 10)

    # Forward pass
    def forward(self, x):
        # Input shape: [batch_size, 1, 28, 28]
        x = self.pool(F.relu(self.conv1(x)))
        x = self.dropout(x)
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 64 * 7 * 7)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x

# RNN
class RNN(nn.Module):
    # Define the network structure
    def __init__(self):
        super(RNN, self).__init__()
        self.rnn = nn.RNN(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # RNN
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        # Treat each image as a sequence of 28 rows with 28 features per row
        x = x.view(-1, 28, 28)
        x = self.dropout(x)
        r_out, _ = self.rnn(x, None)
        # Classify from the hidden state at the last time step
        x = self.out(r_out[:, -1, :])
        return x

# LSTM
class LSTM(nn.Module):
    # Define the network structure
    def __init__(self):
        super(LSTM, self).__init__()
        self.lstm = nn.LSTM(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # LSTM
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        x = x.view(-1, 28, 28)  # [batch_size, 28, 28]
        x = self.dropout(x)
        r_out, _ = self.lstm(x, None)
        x = self.out(r_out[:, -1, :])  # [batch_size, 10]
        return x

# GRU
class GRU(nn.Module):
    # Define the network structure
    def __init__(self):
        super(GRU, self).__init__()
        self.gru = nn.GRU(input_size=28, hidden_size=64, num_layers=1, batch_first=True)  # GRU
        self.dropout = nn.Dropout(p=0.25)
        self.out = nn.Linear(64, 10)  # fully connected layer

    # Forward pass
    def forward(self, x):
        x = x.view(-1, 28, 28)
        x = self.dropout(x)
        r_out, _ = self.gru(x, None)
        x = self.out(r_out[:, -1, :])
        return x

loss_func = nn.CrossEntropyLoss()  # cross-entropy loss

# History of loss values and accuracies
loss_list, accuracy_list = [], []

# Compute the accuracy on one batch
def get_accuracy(model, datas, labels):
    out = torch.softmax(model(datas), dim=1, dtype=torch.float32)
    predictions = torch.max(input=out, dim=1)[1]  # index of the maximum
    y_predict = predictions.to('cpu').data.numpy()
    y_true = labels.to('cpu').data.numpy()
    # accuracy = float(np.sum(y_predict == y_true)) / float(y_true.size)  # accuracy
    accuracy = accuracy_score(y_true, y_predict)  # accuracy
    return accuracy

# Training
def train(model, optimizer, epoch):
    model.train()  # switch to training mode
    for i, (datas, labels) in enumerate(dataloader_train):
        # Move the batch to the device
        datas = datas.to(DEVICE)
        labels = labels.to(DEVICE)
        # Forward pass
        out = model(datas)
        # Compute the loss
        loss = loss_func(out, labels)
        # Zero the gradients
        optimizer.zero_grad()
        # Backpropagation
        loss.backward()
        # Update the parameters
        optimizer.step()
        # Print and record the loss every 100 batches
        if i % 100 == 0:
            print('Train Epoch:%d Loss:%0.6f' % (epoch, loss.item()))
            loss_list.append(loss.item())

# Testing
def test(model, epoch):
    model.eval()  # switch to evaluation mode (disables dropout)
    with torch.no_grad():
        for i, (datas, labels) in enumerate(dataloader_test):
            # Move the batch to the device
            datas = datas.to(DEVICE)
            labels = labels.to(DEVICE)
            # Print and record the accuracy of every 20th batch
            if i % 20 == 0:
                accuracy = get_accuracy(model, datas, labels)
                print('Test Epoch:%d Accuracy:%0.6f' % (epoch, accuracy))
                accuracy_list.append(accuracy)

# Run training and testing, then plot the curves
def run(model, optimizer, model_name):
    t1 = time.time()
    for epoch in range(EPOCHS):
        train(model, optimizer, epoch)
        test(model, epoch)
    t2 = time.time()
    print(f'Total time: {t2 - t1} s')

    # Plot the loss and accuracy curves
    plt.rcParams['figure.figsize'] = (16, 8)
    plt.subplots(1, 2)
    plt.subplot(1, 2, 1)
    plt.plot(range(len(loss_list)), loss_list)
    plt.title('Loss Curve')
    plt.subplot(1, 2, 2)
    plt.plot(range(len(accuracy_list)), accuracy_list)
    plt.title('Accuracy Curve')
    # plt.show()
    # The ./figure directory must already exist
    plt.savefig(f'./figure/mnist_{model_name}.png')

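def initialize(model, model_name):
    print(f'Start {model_name}')
    # Reset the shared history so each model's figure shows only its own curves
    loss_list.clear()
    accuracy_list.clear()
    # Show the allocated GPU memory
    print('GPU_Allocated:%d' % torch.cuda.memory_allocated())
    # Optimizer
    optimizer = optim.Adam(params=model.parameters(), lr=0.001)
    run(model, optimizer, model_name)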

if __name__ == '__main__':
    # Keep the models in the same order as model_names so each one is logged and saved under its own name
    models = [FNN().to(DEVICE),
              CNN().to(DEVICE),
              RNN().to(DEVICE),
              LSTM().to(DEVICE),
              GRU().to(DEVICE)]
    model_names = ['FNN', 'CNN', 'RNN', 'LSTM', 'GRU']
    for model, model_name in zip(models, model_names):
        initialize(model, model_name)
        # Save the model weights (the ./model directory must already exist)
        torch.save(model.state_dict(), f'./model/mnist_{model_name}.pkl')
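
The listing saves only each model's `state_dict`, so loading it later means rebuilding the same architecture first. A minimal round-trip sketch, assuming a stand-in `nn.Linear` model and an in-memory buffer in place of the `./model/mnist_*.pkl` files so that it runs on its own:

```python
import io

import torch
from torch import nn

# Stand-in model; with the listing above this would be FNN(), CNN(), and so on.
model = nn.Linear(28 * 28, 10)

# Save to an in-memory buffer instead of a file under ./model.
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
buffer.seek(0)

# Rebuild the same architecture, then load the saved weights into it.
restored = nn.Linear(28 * 28, 10)
restored.load_state_dict(torch.load(buffer))
restored.eval()  # evaluation mode, which matters for models with dropout

print(torch.equal(model.weight, restored.weight))
```

When weights trained on a GPU are loaded on a CPU-only machine, `torch.load` accepts a `map_location='cpu'` argument.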

Likes, bookmarks, and follows are welcome.

I am still a beginner in deep learning. If you spot a mistake, please leave a comment.