08 Linear Regression: Dive into Deep Learning (PyTorch Edition), Notes on Li Mu's Video Course

I. Linear Regression Implemented from Scratch

1. Construct a synthetic dataset from a linear model with noise.

1.1 Generate the dataset and its labels using the linear model parameters $w=[2,-3.4]^T$ and $b=4.2$, plus a noise term $\epsilon$

$$y = Xw + b + \epsilon$$

Code:

    %matplotlib inline
    import random
    import torch
    from d2l import torch as d2l

    def synthetic_data(w, b, num_examples):
        """Generate y = Xw + b + noise."""
        X = torch.normal(0, 1, (num_examples, len(w)))  # standard-normal features
        y = torch.matmul(X, w) + b
        y += torch.normal(0, 0.01, y.shape)  # Gaussian noise with std 0.01
        return X, y.reshape((-1, 1))  # labels as a column vector

    true_w = torch.tensor([2, -3.4])
    true_b = 4.2
    features, labels = synthetic_data(true_w, true_b, 1000)
    print('features:', features[0], '\nlabel:', labels[0])

Result:

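1.2 Each row of features contains a two-dimensional data sample, and each row of labels contains a one-dimensional label value (a scalar)

Code:

    d2l.set_figsize()
    # Scatter plot of the last (second) feature against the labels
    d2l.plt.scatter(features[:, -1].detach().numpy(),
                    labels.detach().numpy(), 1)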

Result:

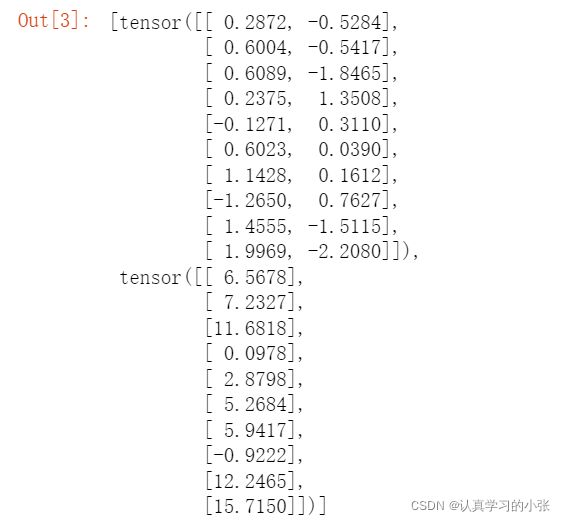
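The shapes described in 1.2 can be checked directly. The sketch below is only an illustration: it copies synthetic_data from the notes so that it runs on its own, fixes a random seed (an assumption added here for repeatability), and measures how far the labels deviate from the noise-free line.

```python
import torch

def synthetic_data(w, b, num_examples):
    """Generate y = Xw + b + noise (copied from the notes above)."""
    X = torch.normal(0, 1, (num_examples, len(w)))
    y = torch.matmul(X, w) + b
    y += torch.normal(0, 0.01, y.shape)
    return X, y.reshape((-1, 1))

torch.manual_seed(0)  # assumption: seed fixed only so the sketch is repeatable
true_w = torch.tensor([2, -3.4])
true_b = 4.2
features, labels = synthetic_data(true_w, true_b, 1000)

# Difference between the labels and the noise-free prediction Xw + b
residual = labels - (torch.matmul(features, true_w) + true_b).reshape((-1, 1))

print(features.shape, labels.shape)  # one row per sample in both tensors
print(float(residual.std()))         # should be close to the noise std of 0.01
```

If the residual spread were far from 0.01, the labels would not match the generating model, which would make the later comparison with true_w and true_b meaningless.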

2. Define a data_iter function that takes the batch size, the feature matrix, and the label vector as input and yields minibatches of size batch_size

Code:

    def data_iter(batch_size, features, labels):
        num_examples = len(features)
        indices = list(range(num_examples))
        # Samples are read in random order
        random.shuffle(indices)
        for i in range(0, num_examples, batch_size):
            # The last batch may be smaller than batch_size
            batch_indices = torch.tensor(
                indices[i:min(i + batch_size, num_examples)])
            yield features[batch_indices], labels[batch_indices]

    batch_size = 10
    for X, y in data_iter(batch_size, features, labels):
        print(X, '\n', y)
        break

Result:

3. Initialize the model parameters

Code:

    w = torch.normal(0, 0.01, size=(2, 1), requires_grad=True)
    b = torch.zeros(1, requires_grad=True)

4. Define the model

Code:

    def linreg(X, w, b):
        """Linear regression model."""
        return torch.matmul(X, w) + b
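To make the shapes in the model concrete, here is a minimal sketch with hand-picked toy inputs. The values of X are hypothetical and chosen only so the arithmetic is easy to follow; w and b reuse the true parameters from step 1.

```python
import torch

# Hypothetical toy inputs: 3 samples with 2 features each
X = torch.tensor([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
w = torch.tensor([[2.0], [-3.4]])  # shape (2, 1)
b = torch.tensor([4.2])            # shape (1,)

# matmul gives shape (3, 1); b is broadcast across all 3 rows
y_hat = torch.matmul(X, w) + b
print(y_hat.shape)
print(y_hat)
```

The first row works out to 1*2 + 2*(-3.4) + 4.2 = -0.6, so the single bias value is added to every sample's prediction.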

5. Define the loss function

Code:

    def squared_loss(y_hat, y):
        """Squared loss (per sample, with a factor of 1/2)."""
        return (y_hat - y.reshape(y_hat.shape))**2 / 2
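The reshape inside squared_loss guards against a broadcasting pitfall. The sketch below, with hypothetical toy values, shows what can happen when the predictions have shape (n, 1) but the labels arrive as a flat vector of shape (n,).

```python
import torch

y_hat = torch.tensor([[1.0], [2.0], [3.0]])  # shape (3, 1), like the model output
y = torch.tensor([1.0, 2.0, 3.0])            # shape (3,), a flat label vector

# Without the reshape, broadcasting silently produces a (3, 3) matrix
wrong = (y_hat - y) ** 2 / 2
# With the reshape, each prediction is compared only with its own label
right = (y_hat - y.reshape(y_hat.shape)) ** 2 / 2

print(wrong.shape, float(wrong.sum()))
print(right.shape, float(right.sum()))
```

Here the predictions equal the labels, so the correct loss is zero, while the broadcast version reports a nonzero loss without raising any error.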

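6. Define the optimization algorithm

Code:

    def sgd(params, lr, batch_size):
        """Minibatch stochastic gradient descent."""
        with torch.no_grad():
            for param in params:
                # Gradients come from a summed loss, so divide by the batch size
                param -= lr * param.grad / batch_size
                param.grad.zero_()  # reset the gradient for the next step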
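The division by batch_size in sgd pairs with the l.sum().backward() call in the training loop: summing the per-sample losses and then scaling the gradient should give the same result as averaging the losses first. A minimal sketch of that check, using random toy data that is an assumption of this example:

```python
import torch

torch.manual_seed(0)  # assumption: seed fixed only for repeatability
X = torch.normal(0, 1, (10, 2))
y = torch.normal(0, 1, (10, 1))
w = torch.zeros((2, 1), requires_grad=True)

# Route 1: sum the per-sample losses, then divide the gradient by the batch size
((torch.matmul(X, w) - y) ** 2 / 2).sum().backward()
grad_sum_scaled = w.grad.clone() / len(X)

# Route 2: average the per-sample losses before calling backward
w.grad.zero_()
((torch.matmul(X, w) - y) ** 2 / 2).mean().backward()
grad_mean = w.grad.clone()

print(torch.allclose(grad_sum_scaled, grad_mean, atol=1e-6))
```

Either route keeps the effective step size independent of the batch size, which is why the learning rate does not need to be retuned when batch_size changes.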

7. Training

Code:

    lr = 0.03
    num_epochs = 3
    net = linreg
    loss = squared_loss

    for epoch in range(num_epochs):
        for X, y in data_iter(batch_size, features, labels):
            l = loss(net(X, w, b), y)  # minibatch loss, shape (batch_size, 1)
            # Sum the per-sample losses to a scalar before backpropagating
            l.sum().backward()
            sgd([w, b], lr, batch_size)  # update the parameters using their gradients
        with torch.no_grad():
            train_l = loss(net(features, w, b), labels)
            print(f'epoch {epoch + 1}, loss {float(train_l.mean()):f}')

Result:

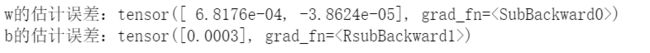

8. Evaluate how well training worked by comparing the true parameters with the learned ones

Code:

    print(f'Estimation error of w: {true_w - w.reshape(true_w.shape)}')
    print(f'Estimation error of b: {true_b - b}')

Result:

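II. Concise Implementation of Linear Regression

1. Implement the linear regression model concisely with a deep learning framework, starting by generating the dataset

Code:

    import numpy as np
    import torch
    from torch.utils import data
    from d2l import torch as d2l

    true_w = torch.tensor([2, -3.4])
    true_b = 4.2
    features, labels = d2l.synthetic_data(true_w, true_b, 1000)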

Result:

2. Read the data with the framework's existing API

Code:

    def load_array(data_arrays, batch_size, is_train=True):
        """Construct a PyTorch data iterator."""
        dataset = data.TensorDataset(*data_arrays)
        # Shuffle only when training
        return data.DataLoader(dataset, batch_size, shuffle=is_train)

    batch_size = 10
    data_iter = load_array((features, labels), batch_size)
    next(iter(data_iter))

Result:

3. Use the framework's predefined layers

Code:

    from torch import nn

    # A single fully connected layer: 2 input features, 1 output
    net = nn.Sequential(nn.Linear(2, 1))
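It can help to inspect what nn.Linear(2, 1) actually holds. The following sketch prints the parameter shapes and runs a dummy batch; the all-zero input is a hypothetical placeholder used only to show the output shape.

```python
import torch
from torch import nn

net = nn.Sequential(nn.Linear(2, 1))

# nn.Linear stores its weight as (out_features, in_features)
print(net[0].weight.shape)
print(net[0].bias.shape)

# Hypothetical dummy batch of 4 samples with 2 features each
out = net(torch.zeros(4, 2))
print(out.shape)
```

Note that the weight has shape (1, 2), the transpose of the (2, 1) tensor w used in the from-scratch version, because the layer computes the product of X with the transposed weight.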

4. Initialize the model parameters

Code:

    net[0].weight.data.normal_(0, 0.01)  # weights drawn from N(0, 0.01^2)
    net[0].bias.data.fill_(0)            # bias set to zero
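As an alternative to writing through .data, the same initialization can be sketched with the helpers in torch.nn.init. This is a swapped-in variant for illustration, not the method used in the course notes.

```python
import torch
from torch import nn

net = nn.Sequential(nn.Linear(2, 1))

# In-place initializers from torch.nn.init (alternative to the .data approach)
nn.init.normal_(net[0].weight, mean=0.0, std=0.01)
nn.init.zeros_(net[0].bias)

print(net[0].weight)
print(net[0].bias)
```

Both approaches overwrite the parameters in place without recording the operation in the autograd graph.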

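5. The mean squared error is computed with the MSELoss class, also known as the squared $L_2$ norm

Code:

    loss = nn.MSELoss()  # by default, returns the mean over all samples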
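One difference from Part I is worth checking: squared_loss carries a factor of 1/2, while nn.MSELoss with its default reduction averages the plain squared errors. A small sketch with hypothetical toy values:

```python
import torch
from torch import nn

# Hypothetical toy predictions and labels
y_hat = torch.tensor([[1.0], [2.0], [4.0]])
y = torch.tensor([[1.0], [0.0], [1.0]])

mse = nn.MSELoss()(y_hat, y)             # mean of squared errors: (0 + 4 + 9) / 3
half = ((y_hat - y) ** 2 / 2).mean()     # mean of the from-scratch squared_loss

print(float(mse), float(half))
```

The MSELoss value is twice the averaged squared_loss value, so the loss numbers printed by the two implementations are not directly comparable.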

6. Instantiate an SGD optimizer

Code:

    trainer = torch.optim.SGD(net.parameters(), lr=0.03)
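With no momentum or weight decay configured, a single trainer.step() should match the manual rule of subtracting lr times the gradient. The sketch below checks this on random toy data, which is an assumption of this example.

```python
import torch
from torch import nn

torch.manual_seed(0)  # assumption: seed fixed only for repeatability
net = nn.Sequential(nn.Linear(2, 1))
trainer = torch.optim.SGD(net.parameters(), lr=0.03)
loss = nn.MSELoss()

X = torch.normal(0, 1, (10, 2))
y = torch.normal(0, 1, (10, 1))

l = loss(net(X), y)
trainer.zero_grad()
l.backward()

# Manual update computed before the optimizer touches the weights
w_before = net[0].weight.detach().clone()
expected = w_before - 0.03 * net[0].weight.grad

trainer.step()
print(torch.allclose(net[0].weight.detach(), expected))
```

Because MSELoss already averages over the batch, no extra division by batch_size is needed here, unlike in the from-scratch sgd function.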

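7. Training

Code:

    num_epochs = 3
    for epoch in range(num_epochs):
        for X, y in data_iter:
            l = loss(net(X), y)
            trainer.zero_grad()  # clear gradients from the previous step
            l.backward()
            trainer.step()       # update the parameters
        l = loss(net(features), labels)  # loss over the full dataset after each epoch
        print(f'epoch {epoch + 1}, loss {l:f}')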

Result: