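Time Series Analysis with Python 3: A Complete Guide

Contents

- 1. Introduction
  - 1.1 Basic definitions
  - 1.2 Forecast quality metrics
- 2. Move, smooth, evaluate
  - 2.1 Rolling window estimates
    - 2.1.1 Moving average
    - 2.1.2 Weighted average
  - 2.2 Exponential smoothing
    - 2.2.1 Exponential smoothing
    - 2.2.2 Double exponential smoothing
    - 2.2.3 Triple exponential smoothing
  - 2.3 Time series cross-validation
- 3. Econometric approach
  - 3.1 Stationarity
  - 3.2 Getting rid of non-stationarity
  - 3.3 Building a SARIMA model
  - 3.4 ARIMA crash course
- 4. (Non-)linear models for time series
  - 4.1 Feature extraction
    - 4.1.1 Lags of the time series
    - 4.1.2 Date and time features
    - 4.1.3 Mean encoding
  - 4.2 Regularization and feature selection
  - 4.3 Boosting
- 5. Complete code for the article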

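1. Introduction

1.1 Basic definitions

Following the Wikipedia definition, we can summarize a time series as:

Time series: a collection of data points indexed in time order.

The points of a time series are therefore organized around fairly well-defined timestamps and, compared with random samples, they carry additional information that we want to extract.

Let's first look at the libraries we need for time series analysis: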

import numpy as np                               # vectors and matrices
import pandas as pd                              # tables and data manipulation
import matplotlib.pyplot as plt                  # plots
import seaborn as sns                            # more plots
sns.set()

from dateutil.relativedelta import relativedelta # working with dates
from scipy.optimize import minimize              # function minimization

import statsmodels.formula.api as smf            # statistics and econometrics
import statsmodels.tsa.api as smt
import statsmodels.api as sm
import scipy.stats as scs

from itertools import product                    # some useful functions
from tqdm import tqdm_notebook

import warnings                                  # do-not-disturb mode
warnings.filterwarnings('ignore')

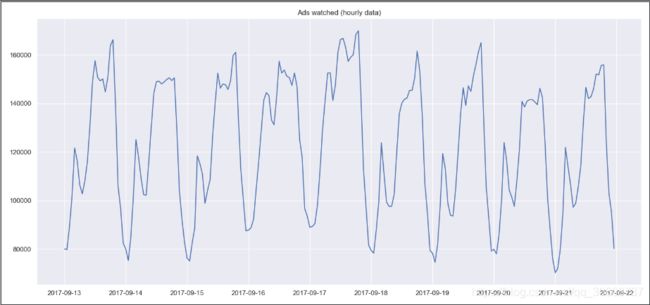

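As an example, we use real mobile game data and look at two time series: the number of ads watched per hour and the in-game currency spent per day.

Data download: https://pan.baidu.com/s/15Ca_0U1etdJyNDdnZWgU0A (extraction code: twg3)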

ads = pd.read_csv('../../data/ads.csv', index_col=['Time'], parse_dates=['Time'])

currency = pd.read_csv('../../data/currency.csv', index_col=['Time'], parse_dates=['Time'])

plt.figure(figsize=(15, 7))

plt.plot(ads.Ads)

plt.title('Ads watched (hourly data)')

plt.grid(True)

plt.show()

plt.figure(figsize=(15, 7))

plt.plot(currency.GEMS_GEMS_SPENT)

plt.title('In-game currency spent (daily data)')

plt.grid(True)

plt.show()

Line plot of ads watched per hour over the ten days from 2017-09-13 to 2017-09-22:

Line plot of in-game currency spent per day over the eleven months from 2017-05 to 2018-03:

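1.2 Forecast quality metrics

Before we start forecasting, let's review some popular model quality metrics.

- R squared (sklearn.metrics.r2_score): the coefficient of determination (in econometrics it can be read as the share of variance explained by the model), often used to summarize how well the model fits; range (-inf, 1], where 1 is the best possible score;

- Mean Absolute Error (sklearn.metrics.mean_absolute_error): the average absolute difference between predicted and true values, also called the l1-norm loss; range [0, +inf);

- Median Absolute Error (sklearn.metrics.median_absolute_error): the median of the absolute errors, robust to outliers in the data; range [0, +inf);

- Mean Squared Error (sklearn.metrics.mean_squared_error): one of the most common loss functions; it penalizes large errors more heavily (quadratically) and small errors less; range [0, +inf);

- Mean Squared Logarithmic Error (sklearn.metrics.mean_squared_log_error): defined much like MSE, but computed on the difference between the natural logarithms of the true and predicted values; usually used when the target has an exponential trend; range [0, +inf);

- Mean Absolute Percentage Error: the same idea as MAE but expressed as a percentage, which makes model quality easy to communicate; range [0, +inf). scikit-learn did not provide this function when the article was written (versions 0.24 and later ship sklearn.metrics.mean_absolute_percentage_error, which returns a fraction rather than a percentage), so we define it ourselves.

Import the metrics mentioned above: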

from sklearn.metrics import r2_score, median_absolute_error, mean_absolute_error
from sklearn.metrics import mean_squared_error, mean_squared_log_error

def mean_absolute_percentage_error(y_true, y_pred):
    # result is a percentage; undefined when y_true contains zeros
    return np.mean(np.abs((y_true - y_pred) / y_true)) * 100
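To make the metric definitions concrete, here is a minimal sketch that computes a few of them by hand on three made-up points. The helper names (`mae`, `mape`, `r2`) and the numbers are illustrative assumptions, not part of the article's code; in practice the sklearn functions listed above would be used.

```python
# Hand-rolled versions of three metrics, for illustration only.
def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def mape(y_true, y_pred):
    # percentage error; breaks if any true value is zero
    return 100 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

y_true = [100.0, 200.0, 400.0]   # made-up observations
y_pred = [110.0, 180.0, 400.0]   # made-up forecasts

print("MAE :", mae(y_true, y_pred))
print("MAPE:", mape(y_true, y_pred))
print("R2  :", r2(y_true, y_pred))
```

MAE stays in the units of the target, MAPE rescales each error by the true value, and R² compares the squared errors with those of a constant mean forecast.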

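2. Move, smooth, evaluate

2.1 Rolling window estimates

Before we study forecasting proper, let's start with a naive assumption: "tomorrow will be the same as today". We will not, however, use a model of the form $\hat{y}_t = y_{t-1}$ (even though it makes a useful baseline for any time series forecasting problem).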

2.1.1 moving average

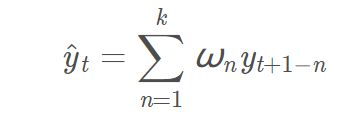

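We assume that a future value depends on the average of its n previous values, so we use the moving average as the forecast for the target:

$$\hat{y}_t = \frac{1}{n} \sum_{i=1}^{n} y_{t-i}$$

This can be implemented with numpy.average():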

def moving_average(series, n):
    """
        Calculate average of last n observations
    """
    return np.average(series[-n:])

mvn_avg = moving_average(ads, 24)  # predict the next value from the ad views of the last 24 hours
print(mvn_avg)
# 116805.0

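Unfortunately, this approach is not suitable for long-term forecasts: to predict the next value we need the previous value to have actually been observed. The moving average has another use, though: smoothing the original series to reveal its trend.

pandas provides an implementation, DataFrame.rolling(window).mean(). The wider the window, the smoother the trend. For very noisy data, which is common in finance, this procedure helps to detect common patterns.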
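The effect of the window size can be checked on a toy series. The sketch below is a plain-Python stand-in for a trailing rolling mean (the function name `rolling_mean`, the `spread` helper and the data are assumptions made for illustration); like the pandas default, it leaves the first window-1 positions empty.

```python
def rolling_mean(values, window):
    """Mean of each trailing window; the first window-1 positions have no value."""
    out = [None] * (window - 1)
    for end in range(window, len(values) + 1):
        chunk = values[end - window:end]
        out.append(sum(chunk) / window)
    return out

def spread(xs):
    """Range of the defined values, used here as a crude roughness measure."""
    defined = [x for x in xs if x is not None]
    return max(defined) - min(defined)

noisy = [10, 14, 9, 15, 10, 16, 11, 15]   # made-up noisy observations
short = rolling_mean(noisy, 2)
long = rolling_mean(noisy, 4)

print(short)
print(long)
print(spread(short), spread(long))
```

On this data the 4-point window produces a visibly flatter curve than the 2-point window, at the cost of losing more points at the start of the series.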

def plotMovingAverage(series, window, plot_intervals=False, scale=1.96, plot_anomalies=False):
    """
        series - dataframe with timeseries
        window - rolling window size
        plot_intervals - show confidence intervals
        plot_anomalies - show anomalies
    """
    rolling_mean = series.rolling(window=window).mean()

    plt.figure(figsize=(15, 5))
    plt.title("Moving average\n window size = {}".format(window))
    plt.plot(rolling_mean, "g", label="Rolling mean trend")

    # Plot confidence intervals for smoothed values
    if plot_intervals:
        mae = mean_absolute_error(series[window:], rolling_mean[window:])
        deviation = np.std(series[window:] - rolling_mean[window:])
        lower_bond = rolling_mean - (mae + scale * deviation)
        upper_bond = rolling_mean + (mae + scale * deviation)
        plt.plot(upper_bond, "r--", label="Upper Bond / Lower Bond")
        plt.plot(lower_bond, "r--")

        # Having the intervals, find abnormal values on both sides of the band
        if plot_anomalies:
            anomalies = pd.DataFrame(index=series.index, columns=series.columns)
            anomalies[series < lower_bond] = series[series < lower_bond]
            anomalies[series > upper_bond] = series[series > upper_bond]
            plt.plot(anomalies, "ro", markersize=10)

    plt.plot(series[window:], label="Actual values")
    plt.legend(loc="upper left")
    plt.grid(True)
    plt.show()

#设置滑动窗口值为4(小时)

plotMovingAverage(ads, 4)

#设置滑动窗口值为12(小时)

plotMovingAverage(ads, 12)

#设置滑动窗口值为24(小时)

plotMovingAverage(ads, 24)

#我们还可以给我们的 平滑值 添上置信区间

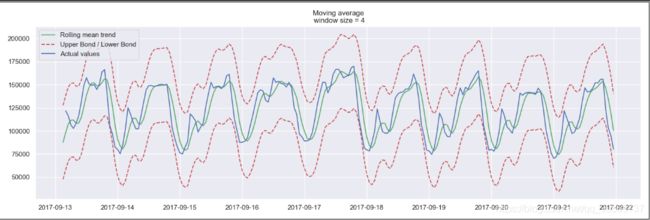

plotMovingAverage(ads, 4, plot_intervals=True)

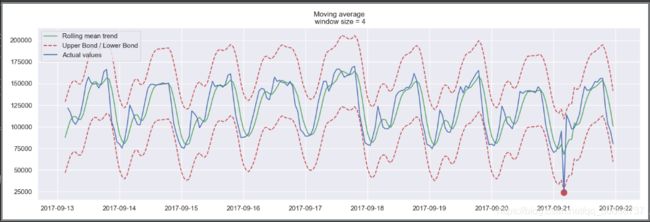

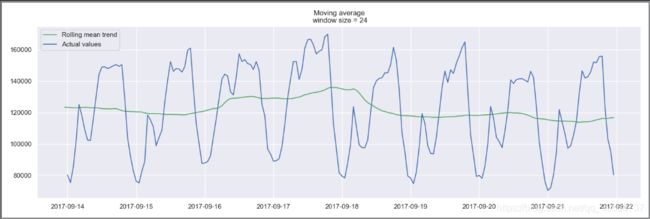

设置滑动窗口值为4(小时),plotMovingAverage(ads, 4),绘制预测结果:

设置滑动窗口值为12(小时),plotMovingAverage(ads, 12),绘制预测结果:

设置滑动窗口值为24(小时),plotMovingAverage(ads, 24),绘制预测结果:

以24小时作为滑动窗口的大小,来分析玩家每小时阅读广告量的信息时,可以清晰地发现广告浏览量的变化趋势,周末时广告浏览量较高,工作日广告浏览量较低。

我们还可以给我们的 平滑值 添上置信区间plotMovingAverage(ads, 4, plot_intervals=True)

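Now let's use the moving average to build a simple anomaly detection system: a data point outside the confidence interval is treated as an anomaly. In the series above every point lies inside the interval, so we deliberately turn one value into an anomaly.

ads_anomaly = ads.copy()
ads_anomaly.iloc[-20] = ads_anomaly.iloc[-20] * 0.2  # say we have 80% drop of ads

Let's see whether this simple method can find the anomaly.

plotMovingAverage(ads_anomaly, 4, plot_intervals=True, plot_anomalies=True)

Our method found the anomaly (2017-09-21). Now let's try the second series (in-game currency spent per day) with a window size of 7 and see what happens.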

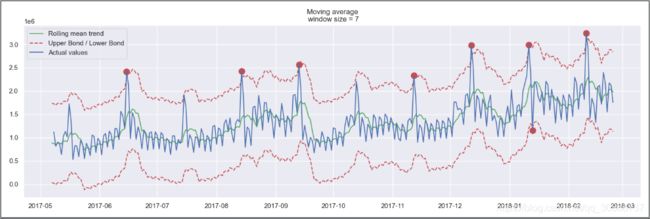

plotMovingAverage(currency, 7, plot_intervals=True, plot_anomalies=True)

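Here the weakness of our simple approach shows. It did not capture the monthly seasonality in the data and instead marked almost every peak that recurs every 30 days as an anomaly.

If you do not want that many false alarms, it is better to consider more complex models.

2.1.2 weighted average

Above we smoothed the raw data with a moving average. The weighted average is a simple modification of it.

Instead of summing the previous k observations and dividing by k, we compute their weighted sum, with weights that sum to 1. Usually, the more recent an observation, the larger its weight:

$$\hat{y}_t = \sum_{n=1}^{k} \omega_n \, y_{t-n}$$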

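def weighted_average(series, weights):
    """
        Calculate weighted average on series.
        weights are read in chronological order: the last weight
        is applied to the most recent observation.
    """
    result = 0.0
    # walk from the newest observation backwards without mutating the caller's list
    for n, weight in enumerate(reversed(weights)):
        result += series.iloc[-n-1] * weight
    return float(result)

# three weights: the next value is predicted from the last three observations
# note: with this ordering the weight 0.6 falls on the oldest of the three values;
# pass [0.1, 0.3, 0.6] to give the most recent observation the largest weight
weighted_avg = weighted_average(ads, [0.6, 0.3, 0.1])
print(weighted_avg)
# 98423.0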
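Because the order of the weights is easy to get wrong, the following sketch makes it explicit by taking the weights newest-first. The function name `weighted_recent` and the three sample values are hypothetical; it is a plain-list illustration of the weighted-average idea, not the article's function.

```python
def weighted_recent(values, weights_newest_first):
    """Weighted average of the last len(weights) values; weights[0] applies to the newest value."""
    if abs(sum(weights_newest_first) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    total = 0.0
    for offset, weight in enumerate(weights_newest_first, start=1):
        total += weight * values[-offset]
    return total

history = [10.0, 20.0, 30.0]                         # oldest ... newest (made-up)
forecast = weighted_recent(history, [0.6, 0.3, 0.1])  # 0.6 on 30, 0.3 on 20, 0.1 on 10
print(forecast)
```

With the largest weight on the newest value, the forecast sits closer to the end of the series than a plain mean of the same three points would.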

2.2 指数平滑

2.2.1 exponential smoothing

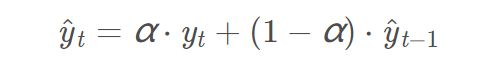

如果不用上面提到的,每次对当前序列中的前k个数的加权平均值作为模型的预测值,而是直接对目前所有的已观测数据进行加权处理,并且每一个数据点的权重,呈指数形式递减。

这个就是指数平滑的策略,具体怎么做的呢?一个简单的数学式为:

式子中的 αα 表示平滑因子,它定义我们“遗忘”当前真实观测值的速度有多快。αα 越小,表示当前真实观测值的影响力越小,而前一个模型预测值的影响力越大,最终得到的时间序列将会越平滑。(这个结论要记住哦,有助于理解接下来的绘图)

那么指数体现在哪呢?指数就隐藏在递归函数之中,我们上面的函数,每次都要用(1−α)(1−α)乘以模型的上一个预测值。

# weight all observations seen so far, with exponentially decreasing weights
def exponential_smoothing(series, alpha):
    """
        series - dataset with timestamps
        alpha - float [0.0, 1.0], smoothing parameter
    """
    values = np.asarray(series)  # positional access regardless of the index type
    result = [values[0]]  # first value is same as series
    for n in range(1, len(values)):
        result.append(alpha * values[n] + (1 - alpha) * result[n - 1])
    return result
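To see the exponentially decaying weights that the recursion implies, the sketch below unrolls it. It assumes the same initialization as above (the first smoothed value equals the first observation); the names `exponential_smoothing_list` and `implied_weights` and the data are illustrative.

```python
def exponential_smoothing_list(values, alpha):
    """Plain-list version of the recursion: s_t = alpha * y_t + (1 - alpha) * s_{t-1}."""
    result = [values[0]]
    for value in values[1:]:
        result.append(alpha * value + (1 - alpha) * result[-1])
    return result

def implied_weights(n, alpha):
    """Weight of each of n observations (oldest first) in the last smoothed value."""
    newest_first = [alpha * (1 - alpha) ** k for k in range(n - 1)]
    newest_first.append((1 - alpha) ** (n - 1))  # the first observation keeps the remainder
    return list(reversed(newest_first))

values = [3.0, 5.0, 4.0, 6.0]   # made-up observations
alpha = 0.5

smoothed_last = exponential_smoothing_list(values, alpha)[-1]
weights = implied_weights(len(values), alpha)
weighted_sum = sum(w * v for w, v in zip(weights, values))

print(weights)
print(smoothed_last, weighted_sum)
```

The weights halve with every step back in time for alpha = 0.5, sum to 1, and reproduce the recursive result exactly.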

def plotExponentialSmoothing(series, alphas):
    """
        Plots exponential smoothing with different alphas

        series - dataset with timestamps
        alphas - list of floats, smoothing parameters
    """
    # on matplotlib >= 3.6 this style is named 'seaborn-v0_8-white'
    with plt.style.context('seaborn-white'):
        plt.figure(figsize=(15, 7))
        for alpha in alphas:
            plt.plot(exponential_smoothing(series, alpha), label="Alpha {}".format(alpha))
        plt.plot(series.values, "c", label="Actual")
        plt.legend(loc="best")
        plt.axis('tight')
        plt.title("Exponential Smoothing")
        plt.grid(True)
        plt.show()

plotExponentialSmoothing(ads.Ads, [0.7, 0.3, 0.05])
plotExponentialSmoothing(currency.GEMS_GEMS_SPENT, [0.7, 0.3, 0.05])

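Ads watched per hour, smoothed with different smoothing factors:

In-game currency spent per day, smoothed with different smoothing factors:

Summary of single exponential smoothing

- Strengths: it tracks changes in the data. As new observations are added during forecasting, they gradually take over from the old ones, which are eventually phased out.

- Limitations: first, the forecast cannot reflect regular movements such as trend or seasonal fluctuations; second, the method is suited to short-term rather than medium- or long-term forecasting; third, because the forecast is a weighted average of historical data, it lags behind the actual series.

- Choosing the coefficient: EViews offers two ways to set the smoothing coefficient, automatic and manual. As a rule of thumb, if the series changes slowly the coefficient should be small, for example below 0.1; if it changes sharply the coefficient can be larger, for example 0.3 to 0.5. If only a coefficient above 0.5 can keep up with the series, the series has a strong trend and single exponential smoothing should not be used for forecasting.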

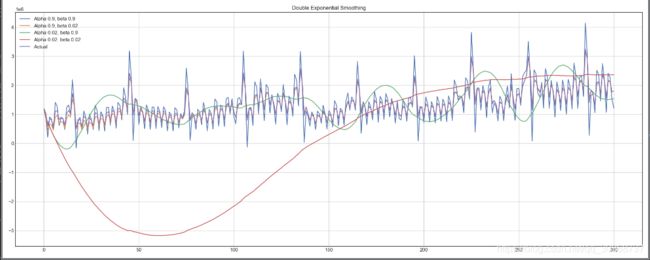

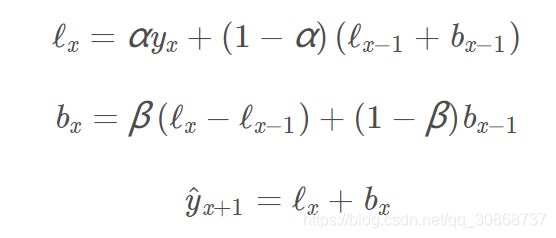

2.2.2 double exponential smoothing

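The summary of single exponential smoothing above mentioned some of its limitations.

Single exponential smoothing builds the new series from past observations, but it only uses their level and ignores the current trend of the series.

If the series is currently rising, a forecast for tomorrow should not only "average" the history but also account for that upward trend. Combining the historical average with the trend is what double exponential smoothing does.

Here is how it works.

Series decomposition gives us two components: the intercept (also called level) $\ell$ and the trend (also called slope) $b$. We already know how to predict the intercept (the expected value of the series) with the methods above, and we can apply the same exponential smoothing to the trend, assuming that the future direction of the series depends on the previous weighted changes:

$$\ell_x = \alpha y_x + (1 - \alpha)(\ell_{x-1} + b_{x-1})$$

$$b_x = \beta(\ell_x - \ell_{x-1}) + (1 - \beta) b_{x-1}$$

$$\hat{y}_{x+1} = \ell_x + b_x$$

The first equation describes the intercept $\ell_x$: its first term depends on the current value of the series $y_x$, and its second term is now split into the previous values of the level and of the trend.

The second equation describes the slope (trend) $b_x$: its first term is the change between the current and the previous intercept, and its second term is the previous value of the trend. The coefficient $\beta$ is the weight of the exponential smoothing.

The third equation gives the final prediction $\hat{y}_{x+1}$, the sum of the model values of the intercept and the trend.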
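A single update step of these equations can be sketched as follows. The function name `holt_step`, the parameter values and the perfectly linear toy series are assumptions chosen so the result is easy to check by hand: on a straight line, level plus trend should reproduce the next point.

```python
def holt_step(level, trend, y, alpha, beta):
    """One update of the level and trend equations for a new observation y."""
    new_level = alpha * y + (1 - alpha) * (level + trend)
    new_trend = beta * (new_level - level) + (1 - beta) * trend
    return new_level, new_trend

series = [1.0, 3.0, 5.0, 7.0, 9.0]            # made-up series with a constant slope of 2
level, trend = series[0], series[1] - series[0]  # simple initialization from the first two points

forecasts = []
for y in series[1:]:
    level, trend = holt_step(level, trend, y, alpha=0.4, beta=0.3)
    forecasts.append(level + trend)          # one-step-ahead forecast

print(forecasts)
```

Single exponential smoothing would lag behind this series at every step; carrying the trend term removes that lag when the slope is stable.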

def double_exponential_smoothing(series, alpha, beta):
    """
        series - dataset with timeseries
        alpha - float [0.0, 1.0], smoothing parameter for level
        beta - float [0.0, 1.0], smoothing parameter for trend
    """
    values = np.asarray(series)  # positional access regardless of the index type
    # first value is same as series
    result = [values[0]]
    for n in range(1, len(values)+1):
        if n == 1:
            level, trend = values[0], values[1] - values[0]
        if n >= len(values): # forecasting
            value = result[-1]
        else:
            value = values[n]
        last_level, level = level, alpha*value + (1-alpha)*(level+trend)
        trend = beta*(level-last_level) + (1-beta)*trend
        result.append(level+trend)
    return result

def plotDoubleExponentialSmoothing(series, alphas, betas):
    """
        Plots double exponential smoothing with different alphas and betas

        series - dataset with timestamps
        alphas - list of floats, smoothing parameters for level
        betas - list of floats, smoothing parameters for trend
    """
    # on matplotlib >= 3.6 this style is named 'seaborn-v0_8-white'
    with plt.style.context('seaborn-white'):
        plt.figure(figsize=(20, 8))
        for alpha in alphas:
            for beta in betas:
                plt.plot(double_exponential_smoothing(series, alpha, beta), label="Alpha {}, beta {}".format(alpha, beta))
        plt.plot(series.values, label = "Actual")
        plt.legend(loc="best")
        plt.axis('tight')
        plt.title("Double Exponential Smoothing")
        plt.grid(True)

plotDoubleExponentialSmoothing(ads.Ads, alphas=[0.9, 0.02], betas=[0.9, 0.02])
plotDoubleExponentialSmoothing(currency.GEMS_GEMS_SPENT, alphas=[0.9, 0.02], betas=[0.9, 0.02])

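Now we have two parameters to tune, $\alpha$ and $\beta$. The former controls how strongly the series itself is smoothed around the trend, the latter how strongly the trend is smoothed (this is easier to grasp from the plots than from the wording).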

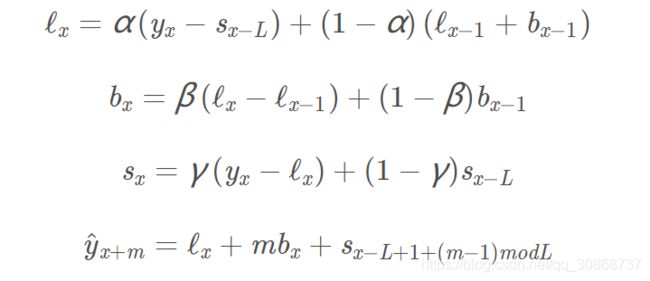

2.2.3 Triple exponential smoothing

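Triple exponential smoothing, also known as Holt-Winters, adds one more component compared with the two previous methods: seasonality. This also means that the method should not be used if the series has no seasonal pattern.

The seasonal component of the model explains repeated variations around the intercept and the trend. It is described by the season length, that is, the period after which the variations repeat.

Each observation within a season has its own component. For example, if the season length is 7 (weekly seasonality), there are 7 seasonal components, one for each day of the week.

$$\ell_x = \alpha(y_x - s_{x-L}) + (1 - \alpha)(\ell_{x-1} + b_{x-1})$$

$$b_x = \beta(\ell_x - \ell_{x-1}) + (1 - \beta) b_{x-1}$$

$$s_x = \gamma(y_x - \ell_x) + (1 - \gamma) s_{x-L}$$

$$\hat{y}_{x+m} = \ell_x + m b_x + s_{x-L+1+(m-1) \bmod L}$$

The first equation describes the intercept $\ell_x$: its first term is the difference between the current value $y_x$ and the corresponding seasonal component $s_{x-L}$, and its second term is split into the previous values of the level and of the trend.

The second equation describes the slope (trend) $b_x$: its first term is the change between the current and the previous intercept, and its second term is the previous value of the trend. The coefficient $\beta$ is the weight of the exponential smoothing.

The third equation describes the seasonal component $s_x$: its first term is the difference between the current value $y_x$ and the current intercept $\ell_x$, and its second term is the previous value of the same seasonal component, $s_{x-L}$. The coefficient $\gamma$ is the weight of the exponential smoothing.

The fourth equation gives the forecast m steps ahead, $\hat{y}_{x+m}$: the current intercept, plus m times the trend $b_x$, plus the seasonal component that corresponds to step x+m.

Below is the code for the triple exponential smoothing model, also known as the Holt-Winters model after its inventors, Charles Holt and his student Peter Winters. The model also includes the Brutlag method for building confidence intervals:

$$\hat{y}_{\max_x} = \ell_{x-1} + b_{x-1} + s_{x-T} + m \cdot d_{x-T}$$

$$\hat{y}_{\min_x} = \ell_{x-1} + b_{x-1} + s_{x-T} - m \cdot d_{x-T}$$

$$d_x = \gamma \, |y_x - \hat{y}_x| + (1 - \gamma) \, d_{x-T}$$

where T is the season length (L above), d is the predicted deviation, and m in these three formulas is the scaling factor of the interval (scaling_factor in the code).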

class HoltWinters:
    """
    Holt-Winters model with the anomalies detection using Brutlag method

    # series - initial time series
    # slen - length of a season
    # alpha, beta, gamma - Holt-Winters model coefficients
    # n_preds - predictions horizon
    # scaling_factor - sets the width of the confidence interval by Brutlag (usually takes values from 2 to 3)
    """

    def __init__(self, series, slen, alpha, beta, gamma, n_preds, scaling_factor=1.96):
        self.series = np.asarray(series)  # positional access regardless of the index type
        self.slen = slen
        self.alpha = alpha
        self.beta = beta
        self.gamma = gamma
        self.n_preds = n_preds
        self.scaling_factor = scaling_factor

    def initial_trend(self):
        total = 0.0
        for i in range(self.slen):
            total += float(self.series[i+self.slen] - self.series[i]) / self.slen
        return total / self.slen

    def initial_seasonal_components(self):
        seasonals = {}
        season_averages = []
        n_seasons = int(len(self.series)/self.slen)
        # let's calculate season averages
        for j in range(n_seasons):
            season_averages.append(sum(self.series[self.slen*j:self.slen*j+self.slen])/float(self.slen))
        # let's calculate initial values
        for i in range(self.slen):
            sum_of_vals_over_avg = 0.0
            for j in range(n_seasons):
                sum_of_vals_over_avg += self.series[self.slen*j+i]-season_averages[j]
            seasonals[i] = sum_of_vals_over_avg/n_seasons
        return seasonals

    def triple_exponential_smoothing(self):
        self.result = []
        self.Smooth = []
        self.Season = []
        self.Trend = []
        self.PredictedDeviation = []
        self.UpperBond = []
        self.LowerBond = []

        seasonals = self.initial_seasonal_components()

        for i in range(len(self.series)+self.n_preds):
            if i == 0: # components initialization
                smooth = self.series[0]
                trend = self.initial_trend()
                self.result.append(self.series[0])
                self.Smooth.append(smooth)
                self.Trend.append(trend)
                self.Season.append(seasonals[i%self.slen])

                self.PredictedDeviation.append(0)

                self.UpperBond.append(self.result[0] +
                                      self.scaling_factor *
                                      self.PredictedDeviation[0])

                self.LowerBond.append(self.result[0] -
                                      self.scaling_factor *
                                      self.PredictedDeviation[0])
                continue

            if i >= len(self.series): # predicting
                m = i - len(self.series) + 1
                self.result.append((smooth + m*trend) + seasonals[i%self.slen])

                # when predicting we increase uncertainty on each step
                self.PredictedDeviation.append(self.PredictedDeviation[-1]*1.01)

            else:
                val = self.series[i]
                last_smooth, smooth = smooth, self.alpha*(val-seasonals[i%self.slen]) + (1-self.alpha)*(smooth+trend)
                trend = self.beta * (smooth-last_smooth) + (1-self.beta)*trend
                seasonals[i%self.slen] = self.gamma*(val-smooth) + (1-self.gamma)*seasonals[i%self.slen]
                self.result.append(smooth+trend+seasonals[i%self.slen])

                # Deviation is calculated according to Brutlag algorithm.
                self.PredictedDeviation.append(self.gamma * np.abs(self.series[i] - self.result[i])
                                               + (1-self.gamma)*self.PredictedDeviation[-1])

            self.UpperBond.append(self.result[-1] +
                                  self.scaling_factor *
                                  self.PredictedDeviation[-1])

            self.LowerBond.append(self.result[-1] -
                                  self.scaling_factor *
                                  self.PredictedDeviation[-1])

            self.Smooth.append(smooth)
            self.Trend.append(trend)
            self.Season.append(seasonals[i%self.slen])

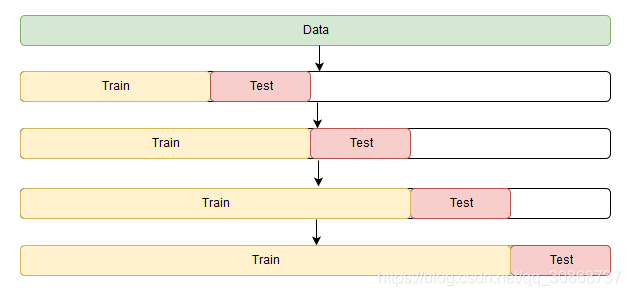

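2.3 Time series cross-validation

On ordinary datasets we routinely use cross-validation to find the best model parameters. Time series, however, have a time dependence between observations, so we cannot split the data at random: that would destroy the temporal structure.

We therefore need a slightly different scheme, called cross-validation on a rolling basis. The listing below shows the idea.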

fold 1 : training [1], test [2]

fold 2 : training [1 2], test [3]

fold 3 : training [1 2 3], test [4]

fold 4 : training [1 2 3 4], test [5]

fold 5 : training [1 2 3 4 5], test [6]
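The listing above can be generated with a few lines of plain Python. This is only a sketch of the splitting scheme with a hypothetical helper `expanding_window_splits` that works on block numbers; the article itself uses sklearn's TimeSeriesSplit for the actual cross-validation.

```python
def expanding_window_splits(n_blocks):
    """Yield (train, test) block numbers, 1-based; the test block always follows the training blocks."""
    for test_block in range(2, n_blocks + 1):
        yield list(range(1, test_block)), [test_block]

splits = list(expanding_window_splits(6))
for fold, (train, test) in enumerate(splits, start=1):
    print("fold", fold, ": training", train, ", test", test)
```

Every fold trains only on data that precedes its test block, so the model is never evaluated on observations older than those it has seen.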

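Graphical representation of rolling cross-validation:

Now we know how to split time series data for cross-validation. Next, let's find the best Holt-Winters parameters for the hourly ads data. Common sense tells us that this dataset has a pronounced seasonality with a period of 24 hours, so we set slen = 24: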

from sklearn.model_selection import TimeSeriesSplit # you have everything done for you

def timeseriesCVscore(params, series, loss_function=mean_squared_error, slen=24):
    """
        Returns error on CV

        params - vector of parameters for optimization
        series - dataset with timeseries
        slen - season length for Holt-Winters model
    """
    # errors array
    errors = []

    values = series.values
    alpha, beta, gamma = params

    # set the number of folds for cross-validation
    tscv = TimeSeriesSplit(n_splits=3)

    # iterating over folds, train model on each, forecast and calculate error
    for train, test in tscv.split(values):
        model = HoltWinters(series=values[train], slen=slen,
                            alpha=alpha, beta=beta, gamma=gamma, n_preds=len(test))
        model.triple_exponential_smoothing()

        predictions = model.result[-len(test):]
        actual = values[test]
        # loss functions expect (y_true, y_pred)
        error = loss_function(actual, predictions)
        errors.append(error)

    return np.mean(np.array(errors))

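In the Holt-Winters model, as in the other exponential smoothing models, the smoothing parameters are constrained: each of them must lie between 0 and 1. We therefore need an optimization algorithm that supports bounds on the parameters. Here we use the truncated Newton method (TNC).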

%%time
data = ads.Ads[:-20] # leave some data for testing

# initializing model parameters alpha, beta and gamma
x = [0, 0, 0]

# Minimizing the loss function
# scipy.optimize.minimize(fun, x0, args=(), method=None, jac=None, hess=None, hessp=None,
#                         bounds=None, constraints=(), tol=None, callback=None, options=None)
#   fun: the objective function to minimize
#   x0: initial guess; with several variables, one value per variable (minimize is a local optimizer)
#   args: extra fixed arguments passed to fun after the parameter vector
#   method: the solver, e.g. BFGS, Nelder-Mead (simplex), TNC (truncated Newton), COBYLA or SLSQP;
#           the methods differ in how they search for the minimum
#   bounds: (min, max) pairs, one per parameter
#   constraints: constraints on the parameters of fun
opt = minimize(timeseriesCVscore, x0=x,
               args=(data, mean_squared_log_error),
               method="TNC", bounds = ((0, 1), (0, 1), (0, 1))
              )

# Take optimal values...
alpha_final, beta_final, gamma_final = opt.x
print(alpha_final, beta_final, gamma_final)

# ...and train the model with them, forecasting for the next 50 hours
model = HoltWinters(data, slen = 24,
                    alpha = alpha_final,
                    beta = beta_final,
                    gamma = gamma_final,
                    n_preds = 50, scaling_factor = 3)
model.triple_exponential_smoothing()

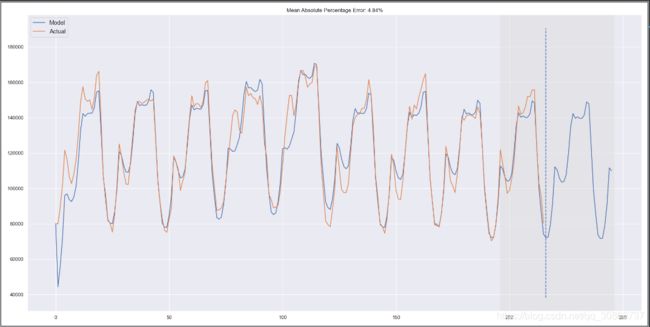

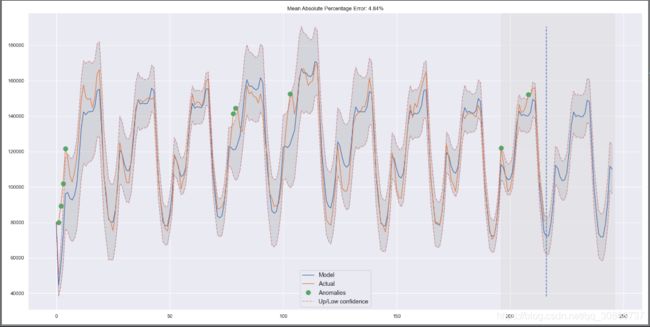

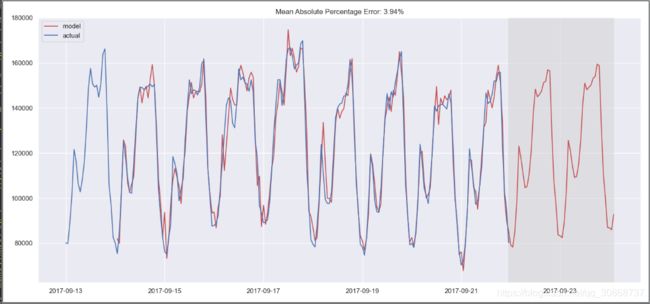

Let's plot the model fitted with the optimal parameters found above (the three smoothing coefficients):

def plotHoltWinters(series, plot_intervals=False, plot_anomalies=False):
    """
        series - dataset with timeseries
        plot_intervals - show confidence intervals
        plot_anomalies - show anomalies
    """
    plt.figure(figsize=(20, 10))
    plt.plot(model.result, label = "Model")
    plt.plot(series.values, label = "Actual")
    error = mean_absolute_percentage_error(series.values, model.result[:len(series)])
    plt.title("Mean Absolute Percentage Error: {0:.2f}%".format(error))

    if plot_anomalies:
        anomalies = np.array([np.nan]*len(series))
        # mark points below the lower bound and above the upper bound
        anomalies[series.values<model.LowerBond[:len(series)]] = \
            series.values[series.values<model.LowerBond[:len(series)]]
        anomalies[series.values>model.UpperBond[:len(series)]] = \
            series.values[series.values>model.UpperBond[:len(series)]]
        plt.plot(anomalies, "o", markersize=10, label = "Anomalies")

    if plot_intervals:
        plt.plot(model.UpperBond, "r--", alpha=0.5, label = "Up/Low confidence")
        plt.plot(model.LowerBond, "r--", alpha=0.5)
        plt.fill_between(x=range(0,len(model.result)), y1=model.UpperBond,
                         y2=model.LowerBond, alpha=0.2, color = "grey")

    plt.vlines(len(series), ymin=min(model.LowerBond), ymax=max(model.UpperBond), linestyles='dashed')
    plt.axvspan(len(series)-20, len(model.result), alpha=0.3, color='lightgrey')
    plt.grid(True)
    plt.axis('tight')
    plt.legend(loc="best", fontsize=13);

plotHoltWinters(ads.Ads)
plotHoltWinters(ads.Ads, plot_intervals=True, plot_anomalies=True)

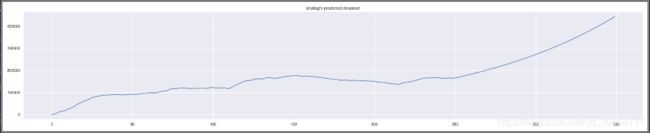

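Judging by the plots, our model approximates the initial series well and captures the daily seasonality, the overall downward trend and even some anomalies. If you look at the model deviation, you can clearly see that the model reacts sharply to changes in the structure of the series but then quickly returns the deviation to normal values, "forgetting" the past (see the line plot produced by the code below). This property lets us build anomaly detection systems quickly, even for very noisy series, without spending much time and money on data preparation and model training.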

plt.figure(figsize=(25, 5))

plt.plot(model.PredictedDeviation)

plt.grid(True)

plt.axis('tight')

plt.title("Brutlag's predicted deviation")

Let's apply the same approach to the second series (in-game currency spent per day), this time with a seasonal period of 30, that is, slen = 30:

data = currency.GEMS_GEMS_SPENT[:-50]

slen = 30 # 30-day seasonality

x = [0, 0, 0]

opt = minimize(timeseriesCVscore, x0=x,args=(data, mean_absolute_percentage_error, slen),method="TNC", bounds = ((0, 1), (0, 1), (0, 1)))

alpha_final, beta_final, gamma_final = opt.x

print(alpha_final, beta_final, gamma_final)

model = HoltWinters(data, slen = slen,alpha = alpha_final,beta = beta_final, gamma = gamma_final, n_preds = 100, scaling_factor = 3)

model.triple_exponential_smoothing()

plotHoltWinters(currency.GEMS_GEMS_SPENT)

plotHoltWinters(currency.GEMS_GEMS_SPENT, plot_intervals=True, plot_anomalies=True)

plt.figure(figsize=(25, 5))

plt.plot(model.PredictedDeviation)

plt.grid(True)

plt.axis('tight')

plt.title("Brutlag's predicted deviation")

plt.show()

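Forecast of daily in-game currency spent with the triple exponential smoothing model:

Anomaly detection for daily in-game currency spent with the triple exponential smoothing model:

Model deviation for daily in-game currency spent: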

3. Econometric approach

3.1 Stationarity

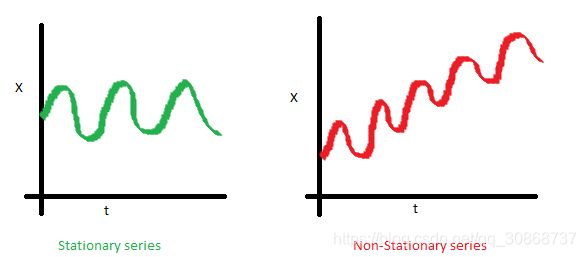

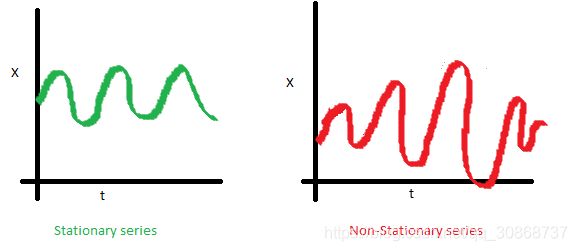

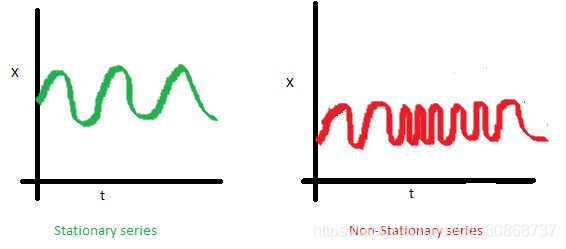

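Before we start modeling, we need to mention an important property of time series: stationarity.

A time series is stationary if its statistical properties do not change over time, that is, its mean and variance stay constant (and its covariance does not depend on time).

In the first of the three plots, the red series is not stationary because its mean increases over time;

In the second plot, the red series is not stationary because its variance changes over time;

In the third plot, the red series is not stationary because the points get closer together as time increases, so its covariance is not constant either;

So why is stationarity so important?

Because most time series models assume, to a greater or lesser degree, that the future values have the same statistical properties (mean, variance and so on) as the values observed so far. If the original (observed) series is not stationary, the predictions of such models may be wrong.

Unfortunately, most of the time series we meet outside textbooks are non-stationary. Fortunately, there are ways to make them stationary.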

3.2 Getting rid of non-stationarity

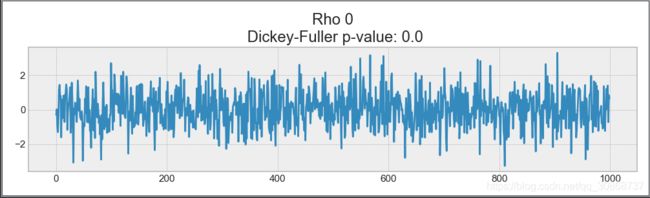

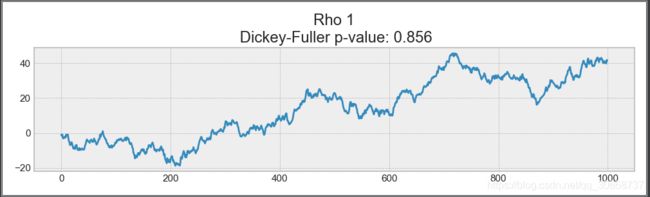

First we need to understand where non-stationarity comes from. To do that, let's look at white noise and a random walk.

Plotting white noise:

# plot white noise
white_noise = np.random.normal(size=1000)
with plt.style.context('bmh'):
    plt.figure(figsize=(15, 5))
    plt.plot(white_noise)
    plt.show()

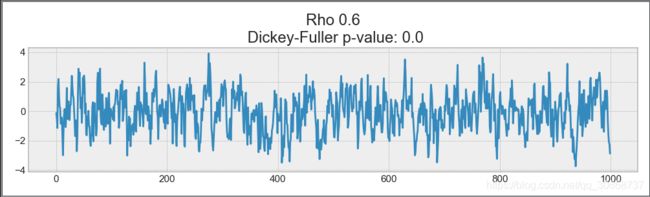

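The points above were generated from a standard normal distribution, so the process is clearly stationary, with mean 0 and variance 1. Based on it, we now generate a new process in which each value is $x_t = \rho x_{t-1} + e_t$, where $x_{t-1}$ is the previous value of the new series and $e_t$ is the current value of the original noise.

Plotting the new process: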

# plot the new process
def plotProcess(n_samples=1000, rho=0):
    w = np.random.normal(size=n_samples)
    x = w.copy()  # keep the noise separate from the generated series
    for t in range(1, n_samples):
        x[t] = rho * x[t-1] + w[t]

    with plt.style.context('bmh'):
        plt.figure(figsize=(10, 3))
        plt.plot(x)
        plt.title("Rho {}\n Dickey-Fuller p-value: {}".format(rho, round(sm.tsa.stattools.adfuller(x)[1], 3)))
        plt.show()

for rho in [0, 0.6, 0.9, 1]:
    plotProcess(rho=rho)

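With ρ = 0.6 wider cycles appear, but the series is still stationary overall:

With ρ = 0.9 the series deviates further from its zero mean but still oscillates around it:

With ρ = 1 we obtain a random walk, which is not stationary. This happens because, once the critical value is reached, the series $x_t = \rho x_{t-1} + e_t$ no longer returns to its mean. To see why, subtract $x_{t-1}$ from both sides:

$$x_t - x_{t-1} = (\rho - 1) x_{t-1} + e_t$$

The left-hand side is called the first difference. If ρ = 1, the first difference is the stationary white noise $e_t$. This is the main idea behind the Dickey-Fuller test for stationarity (the presence of a unit root).

If a stationary series can be obtained from a non-stationary one by taking the first difference, the non-stationary series is called integrated of order 1. Note that the first difference is not always enough to get a stationary series, since the process may be integrated of order d with d > 1 (several unit roots); in such cases the augmented Dickey-Fuller test is used.

We can fight non-stationarity in several ways: differencing of order d, removing trend and seasonality, smoothing, or transformations such as Box-Cox or the logarithm.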
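The link between a random walk and white noise can be verified numerically. The sketch below is a stdlib-only illustration under stated assumptions: the helper names `ar1` and `first_difference`, the seed and the sample size are arbitrary choices, and the series starts at the first noise value.

```python
import random

def ar1(noise, rho):
    """Build x_t = rho * x_{t-1} + e_t from a given noise sequence, starting at x_0 = e_0."""
    x = [noise[0]]
    for e in noise[1:]:
        x.append(rho * x[-1] + e)
    return x

def first_difference(x):
    """Return x_t - x_{t-1} for t = 1 .. len(x) - 1."""
    return [b - a for a, b in zip(x, x[1:])]

random.seed(0)                                   # arbitrary seed, for reproducibility
noise = [random.gauss(0, 1) for _ in range(500)]

white = ar1(noise, rho=0.0)    # rho = 0: the series is the noise itself
walk = ar1(noise, rho=1.0)     # rho = 1: random walk (cumulative sum of the noise)
recovered = first_difference(walk)

max_gap = max(abs(r - e) for r, e in zip(recovered, noise[1:]))
print("largest gap between differenced walk and noise:", max_gap)
```

Differencing the random walk gives back the noise up to floating-point rounding, which is why one round of differencing is enough for a series that is integrated of order 1.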

3.3 SARIMA模型构建

现在呢,我们可以通过经历让原始序列平稳的每一个阶段,来构建一个 SARIMA 模型。

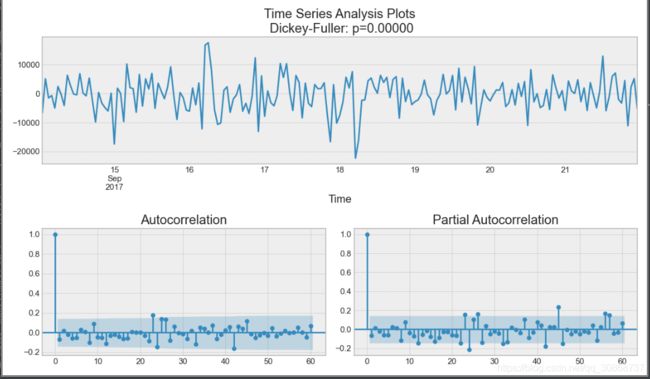

绘制时间序列图、ACF 图和 PACF 图代码:

def tsplot(y, lags=None, figsize=(12, 7), style='bmh'):
    """
        Plot time series, its ACF and PACF, calculate Dickey-Fuller test

        y - timeseries
        lags - how many lags to include in ACF, PACF calculation
    """
    if not isinstance(y, pd.Series):
        y = pd.Series(y)

    with plt.style.context(style):
        fig = plt.figure(figsize=figsize)
        layout = (2, 2)
        ts_ax = plt.subplot2grid(layout, (0, 0), colspan=2)
        acf_ax = plt.subplot2grid(layout, (1, 0))
        pacf_ax = plt.subplot2grid(layout, (1, 1))

        y.plot(ax=ts_ax)
        p_value = sm.tsa.stattools.adfuller(y)[1]
        ts_ax.set_title('Time Series Analysis Plots\n Dickey-Fuller: p={0:.5f}'.format(p_value))
        smt.graphics.plot_acf(y, lags=lags, ax=acf_ax)
        smt.graphics.plot_pacf(y, lags=lags, ax=pacf_ax)
        plt.tight_layout()
        plt.show()

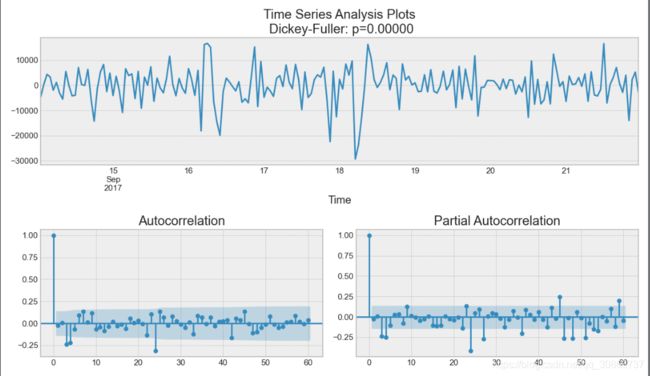

tsplot(ads.Ads, lags=60)

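(-7.089633890638512, 4.4448036886224977e-10, 9, 206, {'1%': -3.4624988216864776, '5%': -2.8756749365852587, '10%': -2.5743041549627677}, 4210.8045211241315)

How do we decide whether the series is stationary? Mainly by two things:

The ADF test statistic compared with the critical values at the 1%, 5% and 10% levels: if the statistic is below all three, the null hypothesis is rejected very convincingly. Here the statistic is about -7.09, below the critical values at all three levels.

Whether the p-value is very close to 0. Here the p-value is about 4e-10, which is close to 0.

The null hypothesis of the ADF test is that a unit root exists. If the statistic is below the 1% critical value, we can reject the null hypothesis at a highly significant level and treat the data as stationary. Note that the ADF statistic is usually negative, though it can be positive; only a value below the 1% critical value counts as a highly significant rejection.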
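The two checks described above can be written as a small helper. The sketch assumes the tuple layout returned by statsmodels' adfuller with default settings (statistic, p-value, lags used, number of observations, critical values, information criterion); the function name `interpret_adf` and the 0.05 threshold are illustrative choices, and the numbers are the ones printed above.

```python
def interpret_adf(result, alpha=0.05):
    """Summarize an ADF result tuple: p-value check and comparison with critical values."""
    statistic, p_value, used_lags, n_obs, critical_values, _ = result
    return {
        "rejects_unit_root": p_value < alpha,
        "below_critical": {level: statistic < value
                           for level, value in critical_values.items()},
    }

adf_result = (-7.089633890638512, 4.4448036886224977e-10, 9, 206,
              {'1%': -3.4624988216864776, '5%': -2.8756749365852587, '10%': -2.5743041549627677},
              4210.8045211241315)

summary = interpret_adf(adf_result)
print(summary)
```

Both checks agree here: the p-value is far below the threshold and the statistic lies below every critical value, so the unit-root hypothesis is rejected.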

出乎意料,初始序列是平稳的,迪基-福勒检验拒绝了单位根存在的零假设。 实际上,从上面的图形本身就可以看出这一点——没有明显的趋势,所以均值是恒定的,整个序列的方差也相对比较稳定。在建模之前我们只需处理季节性。为此让我们采用“季节差分”,也就是对序列进行简单的减法操作,时差等于季节周期。

ads_diff = ads.Ads - ads.Ads.shift(24)

tsplot(ads_diff[24:], lags=60)

观察上图,图表中可见的季节性消失,但是自相关 (autocorrelation) 函数仍然有太多的明显滞后的情况(图中浅色阴影之外的一些点为滞后点)。为了移除它们,我们将取一阶差分:从序列中减去自身(时差为1)

ads_diff = ads_diff - ads_diff.shift(1)

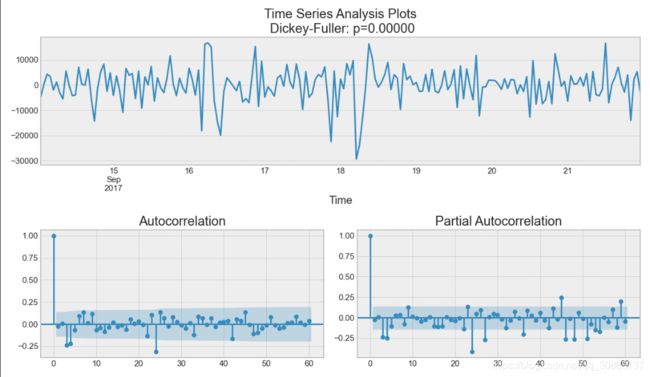

tsplot(ads_diff[24+1:], lags=60)

可以看到,我们的序列可以看到是在零周围振荡,迪基-福勒检验表明它是平稳的,ACF中显著的尖峰不见了。我们终于可以开始建模了!
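What the two differencing steps do can be seen on a synthetic series. In the sketch below the helper `difference`, the period of 3 and the linear trend are assumptions made for illustration (the ads series itself has a period of 24 and no visible trend): the seasonal difference cancels the repeating pattern, and the first difference then removes what is left of the trend.

```python
def difference(values, lag):
    """Return values[i] - values[i - lag] for every i where both exist."""
    return [values[i] - values[i - lag] for i in range(lag, len(values))]

season = [5.0, -2.0, -3.0]                                   # repeating pattern with period 3
series = [2.0 * t + season[t % 3] for t in range(12)]        # linear trend plus seasonality

seasonal_diff = difference(series, lag=3)     # the pattern cancels, only the trend step remains
both = difference(seasonal_diff, lag=1)       # a first difference on top removes the constant step

print(seasonal_diff)
print(both)
```

Each differencing step shortens the series by its lag, which is why the article skips the first 24 and then 24+1 points before plotting.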

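3.4 ARIMA crash course

We will explain the model letter by letter: SARIMA(p, d, q)(P, D, Q, s), the Seasonal Autoregressive Integrated Moving Average model.

AR(p): autoregression model, that is, regression of the time series onto itself. The basic assumption is that the current value depends on its previous values with some lag. The maximum lag in the model is p. To determine an initial p, look at the PACF plot and find the largest significant lag;

MA(q): moving average model. Without going into detail, it models the error of the time series, assuming that the current error depends on previous errors with some lag, denoted q. As above, an initial value of q can be found on the ACF plot;

Together, AR(p) + MA(q) = ARMA(p, q), the autoregressive moving average model. If the series is stationary, it can be approximated with this model. Let's continue.

I(d): order of integration, the number of non-seasonal differences needed to make the series stationary. In our case it is 1, because we used first differences.

Adding the letter I gives the ARIMA model, which can handle non-stationary data through non-seasonal differencing.

S(s): the letter S means the series is seasonal, and s is the length of the season.

Now only the three parameters (P, D, Q) of SARIMA(p, d, q)(P, D, Q, s) remain to be explained.

P: order of the seasonal autoregressive component. It can also be derived from the PACF, but this time you count the significant lags that are multiples of the season length. For example, if the period is 24 and the 24th and 48th lags are significant in the PACF, the initial P should be 2.

Q: order of the seasonal moving average component. Its initial value is found in the same way as P, but on the ACF plot.

D: order of seasonal integration, equal to 1 or 0 depending on whether seasonal differencing was applied.

Now that we know how to set the initial parameters, let's look at the final plot once more and read the parameters off it.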

tsplot(ads_diff[24+1:], lags=60)

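From the plot:

p is most probably 4, the last significant lag on the PACF;

d equals 1, because we took first differences;

q is probably around 4 as well, as seen on the ACF;

P might be 2, since the 24th and 48th lags are fairly significant on the PACF;

D equals 1, because we performed seasonal differencing;

Q is probably 1: the 24th lag on the ACF is significant while the 48th is not.

Now let's search over the SARIMA parameters to see which combination works best:

- Set the parameter search ranges: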

# setting initial values and some bounds for them

ps = range(2, 5)

d=1

qs = range(2, 5)

Ps = range(0, 3)

D=1

Qs = range(0, 2)

s = 24 # season length is still 24

# creating list with all the possible combinations of parameters

parameters = product(ps, qs, Ps, Qs)

parameters_list = list(parameters)

len(parameters_list)

# the output is 54

- Find the best parameter combination for the SARIMA model:

def optimizeSARIMA(parameters_list, d, D, s):
    """
        Return dataframe with parameters and corresponding AIC

        parameters_list - list with (p, q, P, Q) tuples
        d - integration order in ARIMA model
        D - seasonal integration order
        s - length of season
    """
    results = []
    best_aic = float("inf")

    for param in tqdm_notebook(parameters_list):
        # we need try-except because on some combinations model fails to converge
        try:
            model=sm.tsa.statespace.SARIMAX(ads.Ads, order=(param[0], d, param[1]),
                                            seasonal_order=(param[2], D, param[3], s)).fit(disp=-1)
        except Exception:
            continue
        aic = model.aic
        # saving best model, AIC and parameters
        if aic < best_aic:
            best_model = model
            best_aic = aic
            best_param = param
        results.append([param, model.aic])

    result_table = pd.DataFrame(results)
    result_table.columns = ['parameters', 'aic']
    # sorting in ascending order, the lower AIC is - the better
    result_table = result_table.sort_values(by='aic', ascending=True).reset_index(drop=True)

    return result_table

%%time
result_table = optimizeSARIMA(parameters_list, d, D, s)
print(result_table)

- Set the best parameters and look at the model summary:

p, q, P, Q = result_table.parameters[0]

best_model=sm.tsa.statespace.SARIMAX(ads.Ads, order=(p, d, q),
                                        seasonal_order=(P, D, Q, s)).fit(disp=-1)
print(best_model.summary())

- Let's inspect the residuals of the model:

tsplot(best_model.resid[24+1:], lags=60)

- The residuals are clearly stationary with no apparent autocorrelation, so let's make predictions with this model.

def plotSARIMA(series, model, n_steps):
    """
        Plots model vs predicted values

        series - dataset with timeseries
        model - fitted SARIMA model
        n_steps - number of steps to predict in the future
    """
    # adding model values
    data = series.copy()
    data.columns = ['actual']
    data['arima_model'] = model.fittedvalues
    # making a shift on s+d steps, because these values were unobserved by the model
    # due to the differentiating
    data.loc[data.index[:s+d], 'arima_model'] = np.nan

    # forecasting on n_steps forward
    forecast = model.predict(start = data.shape[0], end = data.shape[0]+n_steps)
    # Series.append was removed in pandas 2.0, so concatenate instead
    forecast = pd.concat([data.arima_model, forecast])
    # calculate error, again having shifted on s+d steps from the beginning
    error = mean_absolute_percentage_error(data['actual'][s+d:], data['arima_model'][s+d:])

    plt.figure(figsize=(15, 7))
    plt.title("Mean Absolute Percentage Error: {0:.2f}%".format(error))
    plt.plot(forecast, color='r', label="model")
    plt.axvspan(data.index[-1], forecast.index[-1], alpha=0.5, color='lightgrey')
    plt.plot(data.actual, label="actual")
    plt.legend()
    plt.grid(True);

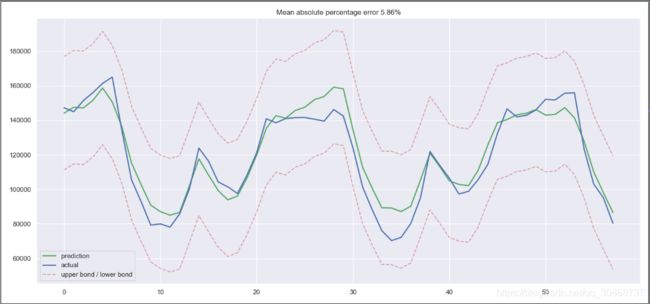

plotSARIMA(ads, best_model, 50)

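In the end we got quite adequate predictions. Our model was wrong by 4.01% on average, which is very good. However, the total cost of preparing the data, making the series stationary and brute-forcing the parameters may not be worth this accuracy.

4. (Non-)linear models for time series

At work, the guiding principle for building models is fast, good, cheap. That means some models are never going to be suitable for production.

They require too much time for data preparation, or frequent retraining on new data, or they are hard to tune (the SARIMA model above has all three drawbacks).

So we usually turn to much easier approaches, for example selecting a few features from the existing time series and building a simple linear regression or random forest model.

4.1 Feature extraction

Let's think about it: all we have is a one-dimensional time series. What features can be extracted from it?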

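- Lags of the time series

- Window statistics
  - max/min value of the series in a window;
  - mean and median value in a window;
  - window variance;

- Date and time features
  - minute of the hour, hour of the day, day of the week;
  - is this day a holiday? did something special happen? This can be a boolean feature;

- Target encoding

- Predictions of other models

Let's see which of these features we can extract from the ads series.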

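4.1.1 Lags of the time series

Shifting the series n steps back, we get a feature in which the current value of the series is aligned with its value at time t-n. If we shift by 1 lag and train a model on that feature, the model can forecast 1 step ahead from the current state of the series. Increasing the lag, say, to 6, allows the model to forecast 6 steps ahead, but it will use data observed 6 steps back.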
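The alignment that shifting produces can be shown on a short list. The sketch below is a plain-Python stand-in for shifting and then dropping incomplete rows; the helper name `make_lag_rows`, the lags 2 and 3 and the six sample values are illustrative assumptions.

```python
def make_lag_rows(values, lags):
    """Build one row per time step that has all requested lags available."""
    max_lag = max(lags)
    rows = []
    for t in range(max_lag, len(values)):
        row = {"y": values[t]}
        for lag in lags:
            row["lag_{}".format(lag)] = values[t - lag]   # value observed `lag` steps earlier
        rows.append(row)
    return rows

values = [10, 11, 12, 13, 14, 15]        # made-up series
rows = make_lag_rows(values, lags=[2, 3])

for row in rows:
    print(row)
```

Because the smallest lag is 2, every feature in a row was already known two steps before the target, so a model trained on these rows can forecast two steps ahead.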

# Creating a copy of the initial dataframe to make various transformations
data = pd.DataFrame(ads.Ads.copy())
data.columns = ["y"]

# Adding the lag of the target variable from 6 steps back up to 24
for i in range(6, 25):
    data["lag_{}".format(i)] = data.y.shift(i)

Now that we have a dataset, let's train a linear regression model on it first.

from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

# for time-series cross-validation set 5 folds
tscv = TimeSeriesSplit(n_splits=5)

def timeseries_train_test_split(X, y, test_size):
    """
        Perform train-test split with respect to time series structure
    """
    # get the index after which test set starts
    test_index = int(len(X) * (1 - test_size))

    X_train = X.iloc[:test_index]
    y_train = y.iloc[:test_index]
    X_test = X.iloc[test_index:]
    y_test = y.iloc[test_index:]

    return X_train, X_test, y_train, y_test

y = data.dropna().y
X = data.dropna().drop(['y'], axis=1)
print(y)
print(X)

# reserve 30% of data for testing
X_train, X_test, y_train, y_test = timeseries_train_test_split(X, y, test_size=0.3)

def plotModelResults(model, X_train=X_train, X_test=X_test, plot_intervals=False, plot_anomalies=False, scale=1.96):
    """
        Plots modelled vs fact values, prediction intervals and anomalies
    """
    prediction = model.predict(X_test)

    plt.figure(figsize=(15, 7))
    plt.plot(prediction, "g", label="prediction", linewidth=2.0)
    plt.plot(y_test.values, label="actual", linewidth=2.0)

    if plot_intervals:
        cv = cross_val_score(model, X_train, y_train,
                             cv=tscv,
                             scoring="neg_mean_squared_error")
        # mae = cv.mean() * (-1)
        deviation = np.sqrt(cv.std())

        lower = prediction - (scale * deviation)
        upper = prediction + (scale * deviation)

        plt.plot(lower, "r--", label="upper bond / lower bond", alpha=0.5)
        plt.plot(upper, "r--", alpha=0.5)

        if plot_anomalies:
            anomalies = np.array([np.nan] * len(y_test))
            anomalies[y_test < lower] = y_test[y_test < lower]
            anomalies[y_test > upper] = y_test[y_test > upper]
            plt.plot(anomalies, "o", markersize=10, label="Anomalies")

    # the metric expects (y_true, y_pred)
    error = mean_absolute_percentage_error(y_test, prediction)
    plt.title("Mean absolute percentage error {0:.2f}%".format(error))
    plt.legend(loc="best")
    plt.tight_layout()
    plt.grid(True)
    plt.show()

def plotCoefficients(model):
    """
        Plots sorted coefficient values of the model
    """
    coefs = pd.DataFrame(model.coef_, X_train.columns)
    coefs.columns = ["coef"]
    coefs["abs"] = coefs.coef.apply(np.abs)
    coefs = coefs.sort_values(by="abs", ascending=False).drop(["abs"], axis=1)

    plt.figure(figsize=(15, 7))
    coefs.coef.plot(kind='bar')
    plt.grid(True, axis='y')
    plt.hlines(y=0, xmin=0, xmax=len(coefs), linestyles='dashed');
    plt.show()

# machine learning in two lines
lr = LinearRegression()
lr.fit(X_train, y_train)

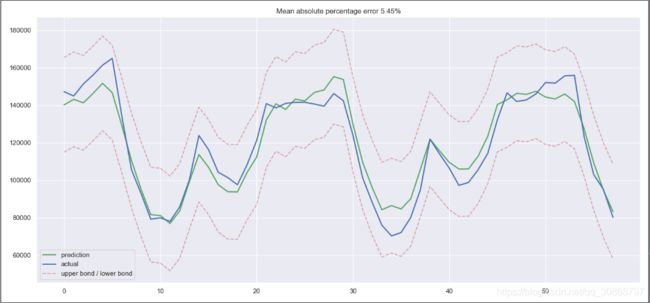

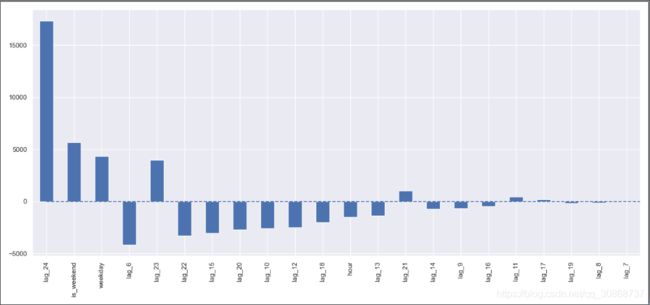

plotModelResults(lr, plot_intervals=True)

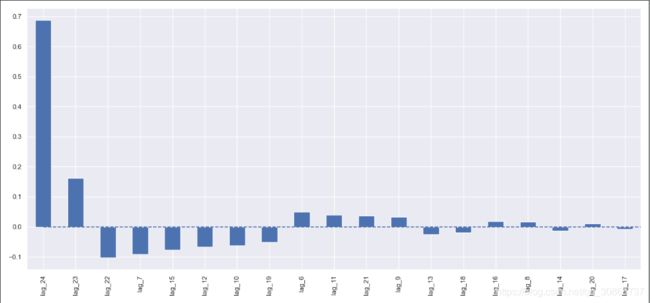

plotCoefficients(lr)

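Even this simple processing gives a result that is not bad at all, but there are many unnecessary features, so let's continue with feature engineering.

4.1.2 Date and time features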

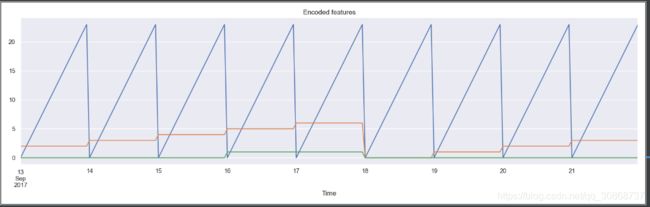

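We will add three features to the dataset: hour, day of the week and a weekend flag. For that we convert the index of the current dataframe to datetime and extract hour and weekday from it.

# add hour, weekday and is_weekend to the dataset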

data.index = pd.to_datetime(data.index)

data["hour"] = data.index.hour

data["weekday"] = data.index.weekday

data['is_weekend'] = data.weekday.isin([5,6])*1

We can visualize the resulting features:

plt.figure(figsize=(16, 5))

plt.title("Encoded features")

data.hour.plot()

data.weekday.plot()

data.is_weekend.plot()

plt.grid(True)

plt.show()

The features are now on different scales, so we standardize them with StandardScaler:

# scale the features

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

y = data.dropna().y

X = data.dropna().drop(['y'], axis=1)

X_train, X_test, y_train, y_test = timeseries_train_test_split(X, y, test_size=0.3)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

lr = LinearRegression()

lr.fit(X_train_scaled, y_train)

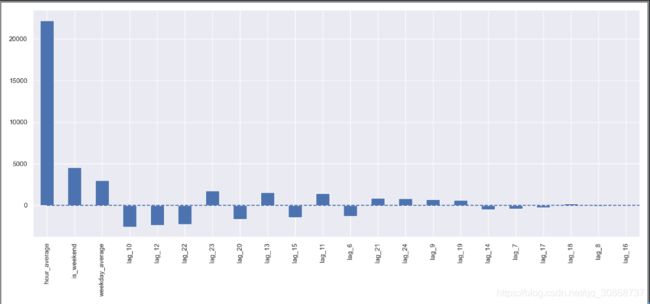

plotModelResults(lr, X_train=X_train_scaled, X_test=X_test_scaled, plot_intervals=True)

plotCoefficients(lr)

The test error went down a little. Judging by the coefficient plot, weekday and is_weekend are quite useful features.
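A note on the scaling step above: the scaler is fitted on the training part only and then reused on the test part, so no information from the future leaks into the features. The sketch below reproduces that idea by hand with NumPy on a made-up feature matrix (the numbers are hypothetical, not taken from the ads data); it uses the population standard deviation, which is also what StandardScaler uses.

```python
import numpy as np

# Hypothetical toy matrix: 6 time-ordered rows, 2 features on very different scales
X = np.array([[0, 100.0], [1, 120.0], [2, 90.0],
              [3, 110.0], [4, 300.0], [5, 310.0]])
X_train, X_test = X[:4], X[4:]  # time-ordered split, no shuffling

# Statistics come from the training rows only
mean = X_train.mean(axis=0)
std = X_train.std(axis=0)  # population std (ddof=0)

X_train_scaled = (X_train - mean) / std
X_test_scaled = (X_test - mean) / std  # reuse the training statistics

print(X_train_scaled.mean(axis=0))  # approximately zero for each column
print(X_test_scaled.mean(axis=0))   # generally not zero, and that is expected
```

The test columns do not end up with zero mean and unit variance, and they should not: rescaling them with their own statistics would hide exactly the kind of shift a forecast has to cope with.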

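4.1.3 Mean encoding

We can add one more variant of categorical encoding to the existing feature space: mean encoding, that is, encoding a category by the mean of the target.

Expanding the dataset with a large number of dummy variables loses the information about distance between categories. The raw category values cannot be treated as real numbers either, because of conflicts such as "0 h < 23 h", when in fact 0:00 of the next day comes after 23:00 of the previous day.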

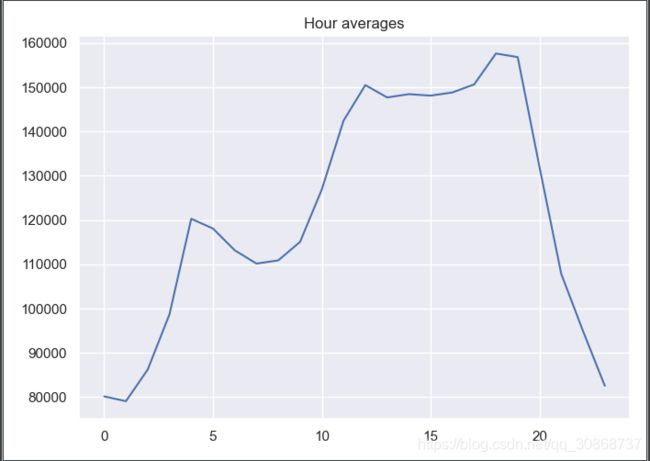

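We should therefore encode the variable with values that are easier to interpret, and the natural idea is mean encoding. In our case this means replacing the two categorical variables, day of the week and hour of the day, with the mean number of ads watched for the corresponding category.

For example, we take the ads watched in all rows whose weekday is Wednesday, average them, and use that mean in place of the original feature value. (In practice we build a dictionary whose keys are the weekdays and whose values are the target means, use it to create a new feature column, and then drop the original categorical feature.)

Computing the group-wise means: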

def code_mean(data, cat_feature, real_feature):

    """

    cat_feature: categorical feature, e.g. day of the week;

    real_feature: the target column

    """

    return dict(data.groupby(cat_feature)[real_feature].mean())
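To make the dictionary-then-map procedure described above concrete, here is a minimal sketch on a made-up six-row dataframe. The frame `toy` and the column name `weekday_average` are illustrative assumptions; the grouping call is the same one used in code_mean.

```python
import pandas as pd

# Hypothetical toy data: a categorical feature and a numeric target
toy = pd.DataFrame({
    "weekday": [0, 0, 1, 1, 1, 2],
    "y": [10.0, 20.0, 30.0, 40.0, 50.0, 60.0],
})

# Step 1: dictionary {category -> mean of the target}
weekday_means = dict(toy.groupby("weekday")["y"].mean())

# Step 2: new feature column built by looking each row's category up in the dictionary
toy["weekday_average"] = toy["weekday"].map(weekday_means)

# Step 3: drop the original categorical feature
toy = toy.drop(columns=["weekday"])

print(weekday_means)
print(toy)
```

Every row that shared a category now carries the same real-valued number, so the encoded feature can be fed to a linear model directly.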

Taking the hour field as an example:

average_hour = code_mean(data, 'hour', "y")

plt.figure(figsize=(7, 5))

plt.title("Hour averages")

pd.DataFrame.from_dict(average_hour, orient='index')[0].plot()

plt.grid(True)

plt.show()

def prepareData(series, lag_start, lag_end, test_size, target_encoding=False):

"""

series: pd.DataFrame

dataframe with timeseries

lag_start: int

initial step back in time to slice target variable

example - lag_start = 1 means that the model

will see yesterday's values to predict today

lag_end: int

final step back in time to slice target variable

example - lag_end = 4 means that the model

will see up to 4 days back in time to predict today

test_size: float

size of the test dataset after train/test split as percentage of dataset

target_encoding: boolean

if True - add target averages to the dataset

"""

# copy of the initial dataset

data = pd.DataFrame(series.copy())

data.columns = ["y"]

# lags of series

for i in range(lag_start, lag_end):

data["lag_{}".format(i)] = data.y.shift(i)

# datetime features

data.index = pd.to_datetime(data.index)

data["hour"] = data.index.hour

data["weekday"] = data.index.weekday

data['is_weekend'] = data.weekday.isin([5,6])*1

if target_encoding:

# calculate averages on train set only

test_index = int(len(data.dropna())*(1-test_size))

data['weekday_average'] = list(map(

code_mean(data[:test_index], 'weekday', "y").get, data.weekday))

data["hour_average"] = list(map(

code_mean(data[:test_index], 'hour', "y").get, data.hour))

# drop encoded variables

data.drop(["hour", "weekday"], axis=1, inplace=True)

# train-test split

y = data.dropna().y

X = data.dropna().drop(['y'], axis=1)

X_train, X_test, y_train, y_test =\

timeseries_train_test_split(X, y, test_size=test_size)

return X_train, X_test, y_train, y_test

X_train, X_test, y_train, y_test =\

prepareData(ads.Ads, lag_start=6, lag_end=25, test_size=0.3, target_encoding=True)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

lr = LinearRegression()

lr.fit(X_train_scaled, y_train)

plotModelResults(lr, X_train=X_train_scaled, X_test=X_test_scaled,

plot_intervals=True, plot_anomalies=True)

plotCoefficients(lr)

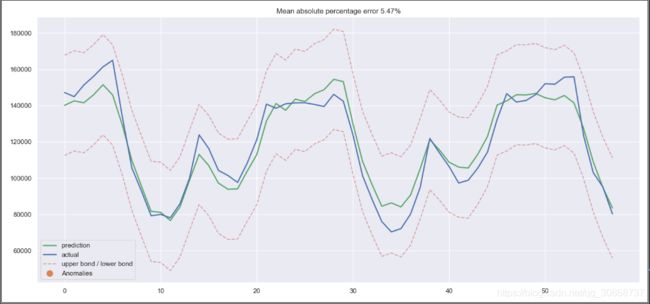

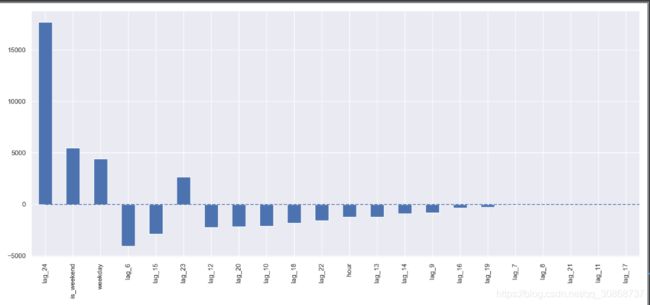

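Predictions of the linear regression model:

Coefficients of the linear regression model:

The model is overfitting! The coefficient of hour_average is so large compared with the other features that they contribute very little to the model's output. There are several ways to deal with this. For example, we can compute the target mean over a corresponding sliding window instead of over the whole training set. Or we can simply drop the feature by hand, since we are confident it does more harm than good here.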

X_train, X_test, y_train, y_test =\

prepareData(ads.Ads, lag_start=6, lag_end=25, test_size=0.3, target_encoding=False)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)
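The code above takes the second route and drops the encoded features. For the first route, computing the target mean over a window instead of over the whole training set, one possible variant is an expanding window: each row is encoded with the mean of the target over earlier rows of the same category only. This is a sketch under that assumption, not the article's implementation; the helper name `expanding_mean_encode` and the toy frame are hypothetical.

```python
import pandas as pd

def expanding_mean_encode(frame, cat_feature, target):
    """Encode each row with the mean target of earlier rows in the same category."""
    grouped = frame.groupby(cat_feature)[target]
    # Running sum per category, minus the current row, so the row never sees itself
    prior_sum = grouped.cumsum() - frame[target]
    # Number of earlier rows in the same category (0 for the first occurrence)
    prior_count = grouped.cumcount()
    # First occurrence of a category yields 0/0, i.e. NaN
    return prior_sum / prior_count

# Hypothetical time-ordered toy data
toy = pd.DataFrame({"hour": [0, 1, 0, 1, 0],
                    "y": [10.0, 20.0, 30.0, 40.0, 50.0]})
toy["hour_average"] = expanding_mean_encode(toy, "hour", "y")
print(toy)
```

The first occurrence of each category has no history and comes out as NaN, so those rows need to be dropped or filled (for instance with a global mean computed on earlier data) before fitting a model.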

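4.2 Regularization and feature selection

Not every feature is equally useful: some even lead to overfitting and should be removed. Besides feature selection we should also try regularization. Two common regularized linear regression models are Lasso (L1 regularization) and Ridge (L2 regularization).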
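For reference, the two penalties can be written out as follows. The notation is an assumption introduced here: $x_i$ is the feature vector of observation $i$, $y_i$ its target, $\beta$ the coefficient vector, $n$ the number of observations, and $\alpha \ge 0$ the regularization strength (the scaling of the squared-error term follows the convention scikit-learn documents for its Ridge and Lasso estimators).

```latex
\hat{\beta}^{\text{ridge}} = \arg\min_{\beta}\; \sum_{i=1}^{n} \left(y_i - x_i^{\top}\beta\right)^2 + \alpha \sum_{j} \beta_j^{2}

\hat{\beta}^{\text{lasso}} = \arg\min_{\beta}\; \frac{1}{2n} \sum_{i=1}^{n} \left(y_i - x_i^{\top}\beta\right)^2 + \alpha \sum_{j} \left|\beta_j\right|
```

The squared penalty shrinks all coefficients smoothly toward zero, while the absolute-value penalty can set some of them exactly to zero, which is why Lasso doubles as a feature selector. Both penalties depend on the scale of the features, which is one more reason to standardize them first.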

Plot a correlation heatmap of the features to spot highly correlated ones, which are candidates for removal:

plt.figure(figsize=(10, 8))

sns.heatmap(X_train.corr())

Training Ridge (L2 regularization) and plotting its predictions and coefficients:

from sklearn.linear_model import LassoCV, RidgeCV

ridge = RidgeCV(cv=tscv)

ridge.fit(X_train_scaled, y_train)

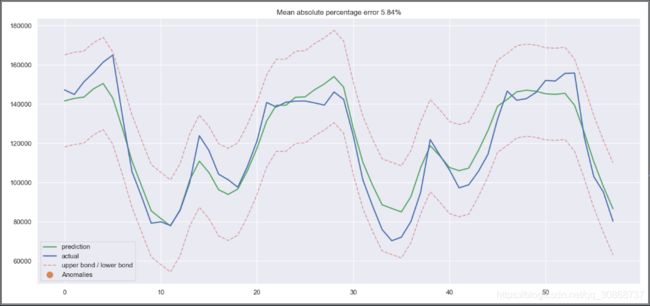

plotModelResults(ridge,

X_train=X_train_scaled,

X_test=X_test_scaled,

plot_intervals=True, plot_anomalies=True)

plotCoefficients(ridge)

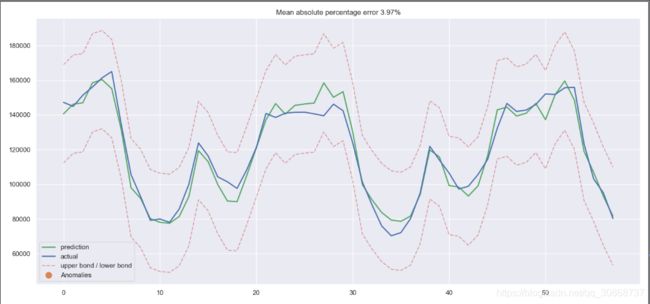

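Ridge predictions:

Ridge coefficients:

The Ridge coefficients are spread relatively evenly, and the coefficients of the less important features shrink toward zero.

Training Lasso (L1 regularization) and plotting its predictions and coefficients: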

lasso = LassoCV(cv=tscv)

lasso.fit(X_train_scaled, y_train)

plotModelResults(lasso,

X_train=X_train_scaled,

X_test=X_test_scaled,

plot_intervals=True, plot_anomalies=True)

plotCoefficients(lasso)

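Lasso predictions:

Lasso coefficients:

The Lasso coefficients are comparatively sparse: the less important features get a coefficient of exactly 0, which effectively removes them from the model.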

4.3 Boosting

Finally, let's try our "heavy weapon", XGBoost.

Implementation:

from xgboost import XGBRegressor

xgb = XGBRegressor()

xgb.fit(X_train_scaled, y_train)

plotModelResults(xgb,

X_train=X_train_scaled,

X_test=X_test_scaled,

plot_intervals=True, plot_anomalies=True)

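Prediction plot:

True to its reputation, it achieves the lowest error of all the models we have tried so far.

However, this victory is somewhat deceptive, and reaching for XGBoost as soon as you get time series data may not be the wisest choice. Compared with linear models, tree-based models generally handle trends in the data poorly, so you first need to detrend the series or use some special tricks. Ideally, make the series stationary and then apply XGBoost. For example, you can forecast the trend separately with a linear model and then add it to the XGBoost prediction to obtain the final forecast.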
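The "linear trend plus residual model" idea can be sketched in a few lines. To keep the example self-contained, the residual model below is a plain per-hour mean used as a stand-in for XGBoost (like a tree, it can only return values it has seen in training); the synthetic series and all variable names are assumptions made for illustration.

```python
import numpy as np

# Hypothetical hourly series: linear upward trend plus a 24-hour seasonal pattern
t_train = np.arange(100)
y_train = 0.5 * t_train + 10 * np.sin(2 * np.pi * t_train / 24)

# Step 1: model the trend with a straight line (least squares)
slope, intercept = np.polyfit(t_train, y_train, deg=1)
residual = y_train - (slope * t_train + intercept)

# Step 2: model the detrended residual (stand-in for XGBoost: mean per hour of day)
hour_mean = np.array([residual[t_train % 24 == h].mean() for h in range(24)])

# Step 3: final forecast = extrapolated trend + residual model
t_future = np.arange(100, 124)
forecast = slope * t_future + intercept + hour_mean[t_future % 24]

# For comparison: the same stand-in fitted on the raw series, without detrending
raw_hour_mean = np.array([y_train[t_train % 24 == h].mean() for h in range(24)])
naive_forecast = raw_hour_mean[t_future % 24]

print(y_train.max(), naive_forecast.max(), forecast.max())
```

The forecast without detrending stays inside the range of the training values, whereas the combined forecast keeps following the trend. In a real pipeline the per-hour mean would be replaced by the fitted XGBRegressor, trained on the residual with the lag and calendar features.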

5. Complete code for the article

Read it in the same order as the article.

# -*- coding:utf-8 -*-

import numpy as np # vector and matrix operations

import pandas as pd # tables and data manipulation

import matplotlib.pyplot as plt # plotting

import seaborn as sns # more plotting options

sns.set()

from dateutil.relativedelta import relativedelta # date arithmetic

from scipy.optimize import minimize # function minimization

import statsmodels.formula.api as smf # statistics and econometrics

import statsmodels.tsa.api as smt

import statsmodels.api as sm

import scipy.stats as scs

from itertools import product # some useful functions

from tqdm import tqdm_notebook

import warnings # "do not disturb" mode

warnings.filterwarnings('ignore')

ads = pd.read_csv('../testdata/ads.csv', index_col=['Time'], parse_dates=['Time'])

currency = pd.read_csv('../testdata/currency.csv', index_col=['Time'], parse_dates=['Time'])

# Line plot of ads watched per hour over the ten days from 2017-09-13 to 2017-09-22

plt.figure(figsize=(15, 7))

plt.plot(ads.Ads)

plt.title('Ads watched (hourly data)')

plt.grid(True)

plt.show()

# Line plot of in-game currency spent per day over the eleven months from 2017-05 to 2018-03

plt.figure(figsize=(15, 7))

plt.plot(currency.GEMS_GEMS_SPENT)

plt.title('In-game currency spent (daily data)')

plt.grid(True)

plt.show()

from sklearn.metrics import r2_score, median_absolute_error, mean_absolute_error

from sklearn.metrics import mean_squared_error, mean_squared_log_error

def mean_absolute_percentage_error(y_true, y_pred):

return np.mean(np.abs((y_true - y_pred) / y_true)) * 100

def moving_average(series, n):

"""

Calculate average of last n observations

"""

return np.average(series[-n:])

mvn_avg = moving_average(ads, 24) # predict the next value from the ads watched in the last 24 hours

print(mvn_avg)

#116805.0

def plotMovingAverage(series, window, plot_intervals=False, scale=1.96, plot_anomalies=False):

"""

series - dataframe with timeseries

window - rolling window size

plot_intervals - show confidence intervals

plot_anomalies - show anomalies

"""

rolling_mean = series.rolling(window=window).mean()

plt.figure(figsize=(15, 5))

plt.title("Moving average\n window size = {}".format(window))

plt.plot(rolling_mean, "g", label="Rolling mean trend")

# Plot confidence intervals for smoothed values

if plot_intervals:

mae = mean_absolute_error(series[window:], rolling_mean[window:])

deviation = np.std(series[window:] - rolling_mean[window:])

lower_bond = rolling_mean - (mae + scale * deviation)

upper_bond = rolling_mean + (mae + scale * deviation)

plt.plot(upper_bond, "r--", label="Upper Bond / Lower Bond")

plt.plot(lower_bond, "r--")

# Having the intervals, find abnormal values

if plot_anomalies:

anomalies = pd.DataFrame(index=series.index, columns=series.columns)

anomalies[series < lower_bond] = series[series < lower_bond]

anomalies[series > upper_bond] = series[series > upper_bond]

plt.plot(anomalies, "ro", markersize=10)

plt.plot(series[window:], label="Actual values")

plt.legend(loc="upper left")

plt.grid(True)

plt.show()

# moving-average window of 4 (hours)

plotMovingAverage(ads, 4)

# moving-average window of 12 (hours)

plotMovingAverage(ads, 12)

# moving-average window of 24 (hours)

plotMovingAverage(ads, 24)

# we can also add confidence intervals to the smoothed values

plotMovingAverage(ads, 4, plot_intervals=True)

# turn one value in the data into an anomaly

ads_anomaly = ads.copy()

ads_anomaly.iloc[-20] = ads_anomaly.iloc[-20] * 0.2 # say we have 80% drop of ads

# detect the anomaly

plotMovingAverage(ads_anomaly, 4, plot_intervals=True, plot_anomalies=True)

# second series (daily in-game currency spent) with a window of 7

plotMovingAverage(currency, 7, plot_intervals=True, plot_anomalies=True)

def weighted_average(series, weights):

"""

Calculate weighted average on series

"""

result = 0.0

weights.reverse()

for n in range(len(weights)):

result += series.iloc[-n - 1] * weights[n]

return float(result)

# three weights, i.e. predict the next value from the last three observations

weighted_avg = weighted_average(ads, [0.6, 0.3, 0.1])

print(weighted_avg)

#98423.0

# weight all observations seen so far, with weights that decay exponentially into the past

def exponential_smoothing(series, alpha):

"""

series - dataset with timestamps

alpha - float [0.0, 1.0], smoothing parameter

"""

result = [series[0]] # first value is same as series

for n in range(1, len(series)):

result.append(alpha * series[n] + (1 - alpha) * result[n - 1])

return result

def plotExponentialSmoothing(series, alphas):

"""

Plots exponential smoothing with different alphas

series - dataset with timestamps

alphas - list of floats, smoothing parameters

"""

with plt.style.context('seaborn-white'): # on matplotlib >= 3.6 this style is named 'seaborn-v0_8-white'

plt.figure(figsize=(15, 7))

for alpha in alphas:

plt.plot(exponential_smoothing(series, alpha), label="Alpha {}".format(alpha))

plt.plot(series.values, "c", label="Actual")

plt.legend(loc="best")

plt.axis('tight')

plt.title("Exponential Smoothing")

plt.grid(True)

plt.show()

plotExponentialSmoothing(ads.Ads, [0.7, 0.3, 0.05])

plotExponentialSmoothing(currency.GEMS_GEMS_SPENT, [0.7, 0.3, 0.05])

def double_exponential_smoothing(series, alpha, beta):

"""

series - dataset with timeseries

alpha - float [0.0, 1.0], smoothing parameter for level

beta - float [0.0, 1.0], smoothing parameter for trend

"""

# first value is same as series

result = [series[0]]

for n in range(1, len(series) + 1):

if n == 1:

level, trend = series[0], series[1] - series[0]

if n >= len(series): # forecasting

value = result[-1]

else:

value = series[n]

last_level, level = level, alpha * value + (1 - alpha) * (level + trend)

trend = beta * (level - last_level) + (1 - beta) * trend

result.append(level + trend)

return result

def plotDoubleExponentialSmoothing(series, alphas, betas):

"""

Plots double exponential smoothing with different alphas and betas

series - dataset with timestamps

alphas - list of floats, smoothing parameters for level

betas - list of floats, smoothing parameters for trend

"""

with plt.style.context('seaborn-white'): # on matplotlib >= 3.6 this style is named 'seaborn-v0_8-white'

plt.figure(figsize=(20, 8))

for alpha in alphas:

for beta in betas:

plt.plot(double_exponential_smoothing(series, alpha, beta),

label="Alpha {}, beta {}".format(alpha, beta))

plt.plot(series.values, label="Actual")

plt.legend(loc="best")

plt.axis('tight')

plt.title("Double Exponential Smoothing")

plt.grid(True)

plt.show()

plotDoubleExponentialSmoothing(ads.Ads, alphas=[0.9, 0.02], betas=[0.9, 0.02])

plotDoubleExponentialSmoothing(currency.GEMS_GEMS_SPENT, alphas=[0.9, 0.02], betas=[0.9, 0.02])

from sklearn.model_selection import TimeSeriesSplit # you have everything done for you

class HoltWinters:

"""

Holt-Winters model with the anomalies detection using Brutlag method

# series - initial time series

# slen - length of a season

# alpha, beta, gamma - Holt-Winters model coefficients

# n_preds - predictions horizon

# scaling_factor - sets the width of the confidence interval by Brutlag (usually takes values from 2 to 3)

"""

def __init__(self, series, slen, alpha, beta, gamma, n_preds, scaling_factor=1.96):

self.series = series

self.slen = slen

self.alpha = alpha

self.beta = beta

self.gamma = gamma

self.n_preds = n_preds

self.scaling_factor = scaling_factor

def initial_trend(self):

sum = 0.0

for i in range(self.slen):

sum += float(self.series[i + self.slen] - self.series[i]) / self.slen

return sum / self.slen

def initial_seasonal_components(self):

seasonals = {}

season_averages = []

n_seasons = int(len(self.series) / self.slen)

# let's calculate season averages

for j in range(n_seasons):

season_averages.append(sum(self.series[self.slen * j:self.slen * j + self.slen]) / float(self.slen))

# let's calculate initial values

for i in range(self.slen):

sum_of_vals_over_avg = 0.0

for j in range(n_seasons):

sum_of_vals_over_avg += self.series[self.slen * j + i] - season_averages[j]

seasonals[i] = sum_of_vals_over_avg / n_seasons

return seasonals

def triple_exponential_smoothing(self):

self.result = []

self.Smooth = []

self.Season = []

self.Trend = []

self.PredictedDeviation = []

self.UpperBond = []

self.LowerBond = []

seasonals = self.initial_seasonal_components()

for i in range(len(self.series) + self.n_preds):

if i == 0: # components initialization

smooth = self.series[0]

trend = self.initial_trend()

self.result.append(self.series[0])

self.Smooth.append(smooth)

self.Trend.append(trend)

self.Season.append(seasonals[i % self.slen])

self.PredictedDeviation.append(0)

self.UpperBond.append(self.result[0] +

self.scaling_factor *

self.PredictedDeviation[0])

self.LowerBond.append(self.result[0] -

self.scaling_factor *

self.PredictedDeviation[0])

continue

if i >= len(self.series): # predicting

m = i - len(self.series) + 1

self.result.append((smooth + m * trend) + seasonals[i % self.slen])

# when predicting we increase uncertainty on each step

self.PredictedDeviation.append(self.PredictedDeviation[-1] * 1.01)

else:

val = self.series[i]

last_smooth, smooth = smooth, self.alpha * (val - seasonals[i % self.slen]) + (1 - self.alpha) * (

smooth + trend)

trend = self.beta * (smooth - last_smooth) + (1 - self.beta) * trend

seasonals[i % self.slen] = self.gamma * (val - smooth) + (1 - self.gamma) * seasonals[i % self.slen]

self.result.append(smooth + trend + seasonals[i % self.slen])

# Deviation is calculated according to Brutlag algorithm.

self.PredictedDeviation.append(self.gamma * np.abs(self.series[i] - self.result[i])

+ (1 - self.gamma) * self.PredictedDeviation[-1])

self.UpperBond.append(self.result[-1] +

self.scaling_factor *

self.PredictedDeviation[-1])

self.LowerBond.append(self.result[-1] -

self.scaling_factor *

self.PredictedDeviation[-1])

self.Smooth.append(smooth)

self.Trend.append(trend)

self.Season.append(seasonals[i % self.slen])

def timeseriesCVscore(params, series, loss_function=mean_squared_error, slen=24):

"""

Returns error on CV

params - vector of parameters for optimization

series - dataset with timeseries

slen - season length for Holt-Winters model

"""

# errors array

errors = []

values = series.values

alpha, beta, gamma = params

# set the number of folds for cross-validation

tscv = TimeSeriesSplit(n_splits=3)

# iterating over folds, train model on each, forecast and calculate error

for train, test in tscv.split(values):

model = HoltWinters(series=values[train], slen=slen,alpha=alpha, beta=beta, gamma=gamma, n_preds=len(test))

model.triple_exponential_smoothing()

predictions = model.result[-len(test):]

actual = values[test]

error = loss_function(actual, predictions) # (y_true, y_pred): the order matters for asymmetric losses such as MAPE

errors.append(error)

return np.mean(np.array(errors))

#%%time

data = ads.Ads[:-20] # leave some data for testing

# initializing model parameters alpha, beta and gamma

x = [0, 0, 0]

# Minimizing the loss function

'''

scipy.optimize.minimize(fun, x0, args=(), method=None, jac=None, hess=None, hessp=None, bounds=None, constraints=(), tol=None, callback=None, options=None)

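Notes:

fun: the objective function to minimize

x0: initial guess for the variables; with several variables, give one initial value per variable. minimize finds a local optimum, so the result can depend on this starting point

args: extra fixed arguments passed to fun after the parameter vector (here the series, the loss function and, optionally, the season length)

method: the optimization algorithm, e.g. BFGS, Nelder-Mead (simplex), TNC (truncated Newton), COBYLA or SLSQP; the methods differ in how they search for the minimum and in whether they support bounds and constraints

constraints: constraints imposed on the parameters of fun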

'''

opt = minimize(timeseriesCVscore, x0=x,args=(data, mean_squared_log_error),method="TNC", bounds = ((0, 1), (0, 1), (0, 1)))

# Take optimal values...

alpha_final, beta_final, gamma_final = opt.x

print(alpha_final, beta_final, gamma_final)

# ...and train the model with them, forecasting for the next 50 hours

model = HoltWinters(data, slen = 24,alpha = alpha_final,beta = beta_final,gamma = gamma_final,n_preds = 50, scaling_factor = 3)

model.triple_exponential_smoothing()

print(model.result)

print(len(model.result))

def plotHoltWinters(series, plot_intervals=False, plot_anomalies=False):

"""

series - dataset with timeseries

plot_intervals - show confidence intervals

plot_anomalies - show anomalies

"""

plt.figure(figsize=(20, 10))

plt.plot(model.result, label="Model")

plt.plot(series.values, label="Actual")

error = mean_absolute_percentage_error(series.values, model.result[:len(series)])

plt.title("Mean Absolute Percentage Error: {0:.2f}%".format(error))

if plot_anomalies:

anomalies = np.array([np.nan] * len(series))

anomalies[series.values < model.LowerBond[:len(series)]] = \

series.values[series.values < model.LowerBond[:len(series)]]

anomalies[series.values > model.UpperBond[:len(series)]] = \

series.values[series.values > model.UpperBond[:len(series)]]

plt.plot(anomalies, "o", markersize=10, label="Anomalies")

if plot_intervals:

plt.plot(model.UpperBond, "r--", alpha=0.5, label="Up/Low confidence")

plt.plot(model.LowerBond, "r--", alpha=0.5)

plt.fill_between(x=range(0, len(model.result)), y1=model.UpperBond,

y2=model.LowerBond, alpha=0.2, color="grey")

plt.vlines(len(series), ymin=min(model.LowerBond), ymax=max(model.UpperBond), linestyles='dashed')

plt.axvspan(len(series) - 20, len(model.result), alpha=0.3, color='lightgrey')

plt.grid(True)

plt.axis('tight')

plt.legend(loc="best", fontsize=13)

plt.show()

plotHoltWinters(ads.Ads)

plotHoltWinters(ads.Ads, plot_intervals=True, plot_anomalies=True)

plt.figure(figsize=(25, 5))

plt.plot(model.PredictedDeviation)

plt.grid(True)

plt.axis('tight')

plt.title("Brutlag's predicted deviation")

plt.show()

data = currency.GEMS_GEMS_SPENT[:-50]

slen = 30 # 30-day seasonality

x = [0, 0, 0]

opt = minimize(timeseriesCVscore, x0=x,args=(data, mean_absolute_percentage_error, slen),method="TNC", bounds = ((0, 1), (0, 1), (0, 1)))

alpha_final, beta_final, gamma_final = opt.x

print(alpha_final, beta_final, gamma_final)

model = HoltWinters(data, slen = slen,alpha = alpha_final,beta = beta_final, gamma = gamma_final, n_preds = 100, scaling_factor = 3)

model.triple_exponential_smoothing()

plotHoltWinters(currency.GEMS_GEMS_SPENT)

plotHoltWinters(currency.GEMS_GEMS_SPENT, plot_intervals=True, plot_anomalies=True)

plt.figure(figsize=(25, 5))

plt.plot(model.PredictedDeviation)

plt.grid(True)

plt.axis('tight')

plt.title("Brutlag's predicted deviation")

plt.show()

# Plot white noise

white_noise = np.random.normal(size=1000)

with plt.style.context('bmh'):

plt.figure(figsize=(15, 5))

plt.plot(white_noise)

plt.show()

# Plot the generated process for a given rho

def plotProcess(n_samples=1000, rho=0):

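w = np.random.normal(size=n_samples)

x = w.copy() # keep the noise and the process in separate arrays

for t in range(1, n_samples): # x[0] = w[0]; starting at 1 keeps x[t-1] from wrapping around to the last element

x[t] = rho * x[t-1] + w[t]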

with plt.style.context('bmh'):

plt.figure(figsize=(10, 3))

plt.plot(x)

plt.title("Rho {}\n Dickey-Fuller p-value: {}".format(rho, round(sm.tsa.stattools.adfuller(x)[1], 3)))

plt.show()

for rho in [0, 0.6, 0.9, 1]:

plotProcess(rho=rho)

# Plot the time series together with its ACF and PACF

def tsplot(y, lags=None, figsize=(12, 7), style='bmh'):

"""

Plot time series, its ACF and PACF, calculate Dickey–Fuller test

y - timeseries

lags - how many lags to include in ACF, PACF calculation

"""

if not isinstance(y, pd.Series):

y = pd.Series(y)

with plt.style.context(style):

fig = plt.figure(figsize=figsize)

layout = (2, 2)

ts_ax = plt.subplot2grid(layout, (0, 0), colspan=2)

acf_ax = plt.subplot2grid(layout, (1, 0))

pacf_ax = plt.subplot2grid(layout, (1, 1))

y.plot(ax=ts_ax)

p_value = sm.tsa.stattools.adfuller(y)[1]

print(sm.tsa.stattools.adfuller(y))

ts_ax.set_title('Time Series Analysis Plots\n Dickey-Fuller: p={0:.5f}'.format(p_value))

smt.graphics.plot_acf(y, lags=lags, ax=acf_ax)

smt.graphics.plot_pacf(y, lags=lags, ax=pacf_ax)

plt.tight_layout()

plt.show()

tsplot(ads.Ads, lags=60)

# Seasonal differencing: subtract the series from itself with a lag equal to the seasonal period

ads_diff = ads.Ads - ads.Ads.shift(24)

tsplot(ads_diff[24:], lags=60)

# First difference: subtract the series from itself with a lag of 1

ads_diff = ads_diff - ads_diff.shift(1)

tsplot(ads_diff[24+1:], lags=60)

# Set the parameter search ranges:

# setting initial values and some bounds for them

ps = range(2, 5)

d=1

qs = range(2, 5)

Ps = range(0, 3)

D=1

Qs = range(0, 2)

s = 24 # season length is still 24

# creating list with all the possible combinations of parameters

# product yields every combination (the Cartesian product) of the parameter values

parameters = product(ps, qs, Ps, Qs)

parameters_list = list(parameters)

len(parameters_list) # evaluates to 54

def optimizeSARIMA(parameters_list, d, D, s):

"""

Return dataframe with parameters and corresponding AIC

parameters_list - list with (p, q, P, Q) tuples

d - integration order in ARIMA model

D - seasonal integration order

s - length of season

"""

results = []

best_aic = float("inf")

for param in tqdm_notebook(parameters_list):

# we need try-except because on some combinations model fails to converge

try:

model = sm.tsa.statespace.SARIMAX(ads.Ads, order=(param[0], d, param[1]),seasonal_order=(param[2], D, param[3], s)).fit(disp=-1)

except Exception:

continue

aic = model.aic

# saving best model, AIC and parameters

if aic < best_aic:

best_model = model

best_aic = aic

best_param = param

results.append([param, model.aic])

result_table = pd.DataFrame(results)

result_table.columns = ['parameters', 'aic']

# sorting in ascending order, the lower AIC is - the better

result_table = result_table.sort_values(by='aic', ascending=True).reset_index(drop=True)

return result_table

#%%time

result_table = optimizeSARIMA(parameters_list, d, D, s)

print(result_table)

# Fit SARIMA with the best parameters and inspect the model summary:

p, q, P, Q = result_table.parameters[0]

best_model=sm.tsa.statespace.SARIMAX(ads.Ads, order=(p, d, q),seasonal_order=(P, D, Q, s)).fit(disp=-1)

print(best_model.summary())

# Plot the residuals of the model:

tsplot(best_model.resid[24+1:], lags=60)

# Forecasting

def plotSARIMA(series, model, n_steps):

"""

Plots model vs predicted values

series - dataset with timeseries

model - fitted SARIMA model

n_steps - number of steps to predict in the future

"""

# adding model values

data = series.copy()

data.columns = ['actual']

data['arima_model'] = model.fittedvalues

# making a shift on s+d steps, because these values were unobserved by the model

# due to the differentiating

data.loc[data.index[:s+d], 'arima_model'] = np.nan

# forecasting on n_steps forward

forecast = model.predict(start = data.shape[0], end = data.shape[0]+n_steps)

forecast = pd.concat([data.arima_model, forecast]) # Series.append was removed in pandas 2.0

# calculate error, again having shifted on s+d steps from the beginning

error = mean_absolute_percentage_error(data['actual'][s+d:], data['arima_model'][s+d:])

plt.figure(figsize=(15, 7))

plt.title("Mean Absolute Percentage Error: {0:.2f}%".format(error))

plt.plot(forecast, color='r', label="model")

plt.axvspan(data.index[-1], forecast.index[-1], alpha=0.5, color='lightgrey')

plt.plot(data.actual, label="actual")

plt.legend()

plt.grid(True)

plt.show()

plotSARIMA(ads, best_model, 50)

# (Non-)linear models for time series

# Creating a copy of the initial dataframe to make various transformations

data = pd.DataFrame(ads.Ads.copy())

data.columns = ["y"]

# Adding the lag of the target variable from 6 steps back up to 24

for i in range(6, 25):

data["lag_{}".format(i)] = data.y.shift(i)

print(data)

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import cross_val_score

# for time-series cross-validation set 5 folds

tscv = TimeSeriesSplit(n_splits=5)

def timeseries_train_test_split(X, y, test_size):

"""

Perform train-test split with respect to time series structure

"""

# get the index after which test set starts

test_index = int(len(X) * (1 - test_size))

X_train = X.iloc[:test_index]

y_train = y.iloc[:test_index]

X_test = X.iloc[test_index:]

y_test = y.iloc[test_index:]

return X_train, X_test, y_train, y_test

y = data.dropna().y

X = data.dropna().drop(['y'], axis=1)

print(y)

print(X)

# reserve 30% of data for testing

X_train, X_test, y_train, y_test = timeseries_train_test_split(X, y, test_size=0.3)

def plotModelResults(model, X_train=X_train, X_test=X_test, plot_intervals=False, plot_anomalies=False, scale=1.96):

"""

Plots modelled vs fact values, prediction intervals and anomalies

"""

prediction = model.predict(X_test)

plt.figure(figsize=(15, 7))

plt.plot(prediction, "g", label="prediction", linewidth=2.0)

plt.plot(y_test.values, label="actual", linewidth=2.0)

if plot_intervals:

cv = cross_val_score(model, X_train, y_train,

cv=tscv,

scoring="neg_mean_squared_error")

# mae = cv.mean() * (-1)

deviation = np.sqrt(cv.std())

lower = prediction - (scale * deviation)

upper = prediction + (scale * deviation)

plt.plot(lower, "r--", label="upper bond / lower bond", alpha=0.5)

plt.plot(upper, "r--", alpha=0.5)

if plot_anomalies:

anomalies = np.array([np.nan] * len(y_test))

anomalies[y_test < lower] = y_test[y_test < lower]

anomalies[y_test > upper] = y_test[y_test > upper]

plt.plot(anomalies, "o", markersize=10, label="Anomalies")

error = mean_absolute_percentage_error(y_test, prediction)

plt.title("Mean absolute percentage error {0:.2f}%".format(error))

plt.legend(loc="best")

plt.tight_layout()

plt.grid(True)

plt.show()

def plotCoefficients(model):

"""

Plots sorted coefficient values of the model

"""

coefs = pd.DataFrame(model.coef_, X_train.columns)

coefs.columns = ["coef"]

coefs["abs"] = coefs.coef.apply(np.abs)

coefs = coefs.sort_values(by="abs", ascending=False).drop(["abs"], axis=1)

plt.figure(figsize=(15, 7))

coefs.coef.plot(kind='bar')

plt.grid(True, axis='y')

plt.hlines(y=0, xmin=0, xmax=len(coefs), linestyles='dashed');

plt.show()

# machine learning in two lines

lr = LinearRegression()

lr.fit(X_train, y_train)

plotModelResults(lr, plot_intervals=True)

plotCoefficients(lr)

# Add hour, weekday, and is_weekend features to the dataset

data.index = pd.to_datetime(data.index)

data["hour"] = data.index.hour

data["weekday"] = data.index.weekday

data['is_weekend'] = data.weekday.isin([5,6])*1

# Visualize the resulting features

plt.figure(figsize=(16, 5))

plt.title("Encoded features")

data.hour.plot()

data.weekday.plot()

data.is_weekend.plot()

plt.grid(True)

plt.show()

# Standardize the features

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

y = data.dropna().y

X = data.dropna().drop(['y'], axis=1)

X_train, X_test, y_train, y_test = timeseries_train_test_split(X, y, test_size=0.3)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

lr = LinearRegression()

lr.fit(X_train_scaled, y_train)

plotModelResults(lr, X_train=X_train_scaled, X_test=X_test_scaled, plot_intervals=True)

plotCoefficients(lr)

def code_mean(data, cat_feature, real_feature):

    """

    cat_feature: categorical feature, e.g. day of the week;

    real_feature: the target column

    """

    return dict(data.groupby(cat_feature)[real_feature].mean())

average_hour = code_mean(data, 'hour', "y")

plt.figure(figsize=(7, 5))

plt.title("Hour averages")

pd.DataFrame.from_dict(average_hour, orient='index')[0].plot()

plt.grid(True)

plt.show()

def prepareData(series, lag_start, lag_end, test_size, target_encoding=False):

"""

series: pd.DataFrame

dataframe with timeseries

lag_start: int

initial step back in time to slice target variable

example - lag_start = 1 means that the model

will see yesterday's values to predict today

lag_end: int

final step back in time to slice target variable

example - lag_end = 4 means that the model

will see up to 4 days back in time to predict today

test_size: float

size of the test dataset after train/test split as percentage of dataset

target_encoding: boolean

if True - add target averages to the dataset

"""

# copy of the initial dataset

data = pd.DataFrame(series.copy())

data.columns = ["y"]

# lags of series

for i in range(lag_start, lag_end):

data["lag_{}".format(i)] = data.y.shift(i)

# datetime features

data.index = pd.to_datetime(data.index)

data["hour"] = data.index.hour

data["weekday"] = data.index.weekday

data['is_weekend'] = data.weekday.isin([5, 6]) * 1

if target_encoding:

# calculate averages on train set only

test_index = int(len(data.dropna()) * (1 - test_size))

data['weekday_average'] = list(map(

code_mean(data[:test_index], 'weekday', "y").get, data.weekday))

data["hour_average"] = list(map(

code_mean(data[:test_index], 'hour', "y").get, data.hour))

# drop encoded variables

data.drop(["hour", "weekday"], axis=1, inplace=True)

# train-test split

y = data.dropna().y

X = data.dropna().drop(['y'], axis=1)

X_train, X_test, y_train, y_test = \

timeseries_train_test_split(X, y, test_size=test_size)

return X_train, X_test, y_train, y_test

X_train, X_test, y_train, y_test = prepareData(ads.Ads, lag_start=6, lag_end=25, test_size=0.3, target_encoding=True)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

lr = LinearRegression()

lr.fit(X_train_scaled, y_train)

plotModelResults(lr, X_train=X_train_scaled, X_test=X_test_scaled,plot_intervals=True, plot_anomalies=True)

plotCoefficients(lr)

X_train, X_test, y_train, y_test =prepareData(ads.Ads, lag_start=6, lag_end=25, test_size=0.3, target_encoding=False)

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

# Plot a correlation heatmap of the features to spot highly correlated ones to remove

plt.figure(figsize=(10, 8))

sns.heatmap(X_train.corr())

print(X_train.corr())

# Train Ridge and plot its predictions and coefficients

from sklearn.linear_model import LassoCV, RidgeCV

ridge = RidgeCV(cv=tscv)

ridge.fit(X_train_scaled, y_train)

plotModelResults(ridge,X_train=X_train_scaled,X_test=X_test_scaled,plot_intervals=True, plot_anomalies=True)

plotCoefficients(ridge)

# Train Lasso and plot its predictions and coefficients

lasso = LassoCV(cv=tscv)

lasso.fit(X_train_scaled, y_train)

plotModelResults(lasso,X_train=X_train_scaled,X_test=X_test_scaled,plot_intervals=True, plot_anomalies=True)

plotCoefficients(lasso)

#XGBoost

from xgboost import XGBRegressor

xgb = XGBRegressor()

xgb.fit(X_train_scaled, y_train)

plotModelResults(xgb,X_train=X_train_scaled,X_test=X_test_scaled,plot_intervals=True, plot_anomalies=True)

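Reference

- Original article: Open Machine Learning Course. Topic 9. Part 1. Time series analysis in Python

- The online textbook for Duke University's advanced statistical forecasting course, which covers various smoothing techniques, linear models, and the details of ARIMA models

- Time series analysis in Python, from linear models to graphical models, which introduces the ARIMA model family and its application to modeling financial indices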